The Monotonicity of Information in the Central Limit Theorem and ...

The Monotonicity of Information in the Central Limit Theorem and ...

The Monotonicity of Information in the Central Limit Theorem and ...

Create successful ePaper yourself

Turn your PDF publications into a flip-book with our unique Google optimized e-Paper software.

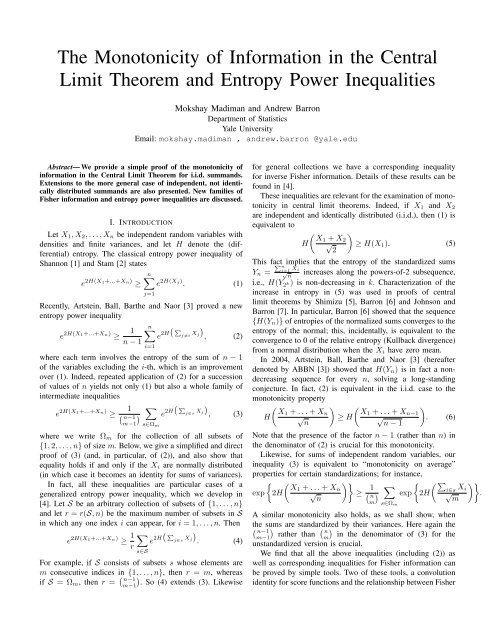

The Monotonicity of Information in the Central Limit Theorem and Entropy Power Inequalities

Mokshay Madiman and Andrew Barron
Department of Statistics
Yale University
Email: {mokshay.madiman, andrew.barron}@yale.edu

Abstract— We provide a simple proof of the monotonicity of information in the Central Limit Theorem for i.i.d. summands. Extensions to the more general case of independent, not identically distributed summands are also presented. New families of Fisher information and entropy power inequalities are discussed.

I. INTRODUCTION

Let $X_1, X_2, \ldots, X_n$ be independent random variables with densities and finite variances, and let $H$ denote the (differential) entropy. The classical entropy power inequality of Shannon [1] and Stam [2] states

$$e^{2H(X_1+\ldots+X_n)} \geq \sum_{j=1}^{n} e^{2H(X_j)}. \tag{1}$$

Recently, Artstein, Ball, Barthe and Naor [3] proved a new entropy power inequality

$$e^{2H(X_1+\ldots+X_n)} \geq \frac{1}{n-1} \sum_{i=1}^{n} e^{2H\left(\sum_{j\neq i} X_j\right)}, \tag{2}$$

where each term involves the entropy of the sum of $n-1$ of the variables excluding the $i$-th, which is an improvement over (1). Indeed, repeated application of (2) for a succession of values of $n$ yields not only (1) but also a whole family of intermediate inequalities

$$e^{2H(X_1+\ldots+X_n)} \geq \frac{1}{\binom{n-1}{m-1}} \sum_{s\in\Omega_m} e^{2H\left(\sum_{j\in s} X_j\right)}, \tag{3}$$

where we write $\Omega_m$ for the collection of all subsets of $\{1, 2, \ldots, n\}$ of size $m$. Below, we give a simplified and direct proof of (3) (and, in particular, of (2)), and also show that equality holds if and only if the $X_i$ are normally distributed (in which case it becomes an identity for sums of variances).

In fact, all these inequalities are particular cases of a generalized entropy power inequality, which we develop in [4]. Let $S$ be an arbitrary collection of subsets of $\{1, \ldots, n\}$ and let $r = r(S, n)$ be the maximum number of subsets in $S$ in which any one index $i$ can appear, for $i = 1, \ldots, n$. Then

$$e^{2H(X_1+\ldots+X_n)} \geq \frac{1}{r} \sum_{s\in S} e^{2H\left(\sum_{j\in s} X_j\right)}. \tag{4}$$

For example, if $S$ consists of subsets $s$ whose elements are $m$ consecutive indices in $\{1, \ldots, n\}$, then $r = m$, whereas if $S = \Omega_m$, then $r = \binom{n-1}{m-1}$. So (4) extends (3). Likewise for general collections we have a corresponding inequality for inverse Fisher information. Details of these results can be found in [4].

These inequalities are relevant for the examination of monotonicity in central limit theorems. Indeed, if $X_1$ and $X_2$ are independent and identically distributed (i.i.d.), then (1) is equivalent to

$$H\left(\frac{X_1+X_2}{\sqrt{2}}\right) \geq H(X_1). \tag{5}$$

This fact implies that the entropy of the standardized sums $Y_n = \frac{1}{\sqrt{n}}\sum_{i=1}^{n} X_i$ increases along the powers-of-2 subsequence, i.e., $H(Y_{2^k})$ is non-decreasing in $k$. Characterization of the increase in entropy in (5) was used in proofs of central limit theorems by Shimizu [5], Barron [6] and Johnson and Barron [7]. In particular, Barron [6] showed that the sequence $\{H(Y_n)\}$ of entropies of the normalized sums converges to the entropy of the normal; this, incidentally, is equivalent to the convergence to 0 of the relative entropy (Kullback divergence) from a normal distribution when the $X_i$ have zero mean.

In 2004, Artstein, Ball, Barthe and Naor [3] (hereafter denoted by ABBN [3]) showed that $H(Y_n)$ is in fact a non-decreasing sequence for every $n$, solving a long-standing conjecture. In fact, (2) is equivalent in the i.i.d. case to the monotonicity property

$$H\left(\frac{X_1+\ldots+X_n}{\sqrt{n}}\right) \geq H\left(\frac{X_1+\ldots+X_{n-1}}{\sqrt{n-1}}\right). \tag{6}$$

Note that the presence of the factor $n-1$ (rather than $n$) in the denominator of (2) is crucial for this monotonicity.

Likewise, for sums of independent random variables, our inequality (3) is equivalent to “monotonicity on average” properties for certain standardizations; for instance,

$$\exp\left\{2H\left(\frac{X_1+\ldots+X_n}{\sqrt{n}}\right)\right\} \geq \frac{1}{\binom{n}{m}} \sum_{s\in\Omega_m} \exp\left\{2H\left(\frac{\sum_{i\in s} X_i}{\sqrt{m}}\right)\right\}.$$

A similar monotonicity also holds, as we shall show, when the sums are standardized by their variances. Here again the $\binom{n-1}{m-1}$ rather than $\binom{n}{m}$ in the denominator of (3) for the unstandardized version is crucial.

We find that all the above inequalities (including (2)) as well as corresponding inequalities for Fisher information can be proved by simple tools. Two of these tools, a convolution identity for score functions and the relationship between Fisher information and entropy (discussed in Section II), are familiar in past work on entropy power inequalities. An additional trick is needed to obtain the denominators of $n-1$ and $\binom{n-1}{m-1}$ in (2) and (3) respectively. This is a simple variance drop inequality for statistics expressible via sums of functions of $m$ out of $n$ variables, which is familiar in other statistical contexts (as we shall discuss). It was first used for information inequality development in ABBN [3]. The variational characterization of Fisher information that is an essential ingredient of ABBN [3] is not needed in our proofs.
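As a small illustration of the multiplicity $r(S, n)$ appearing in (4), the following Python sketch counts how often each index occurs in a collection of subsets. The helper names (`max_multiplicity`, `consecutive_windows`, `all_subsets`) are ours and purely illustrative, and the values $n = 6$, $m = 3$ are arbitrary. For consecutive windows, the value $r = m$ is attained once $n \geq 2m - 1$. The final check is the binomial identity $n\binom{n-1}{m-1} = m\binom{n}{m}$, which is what turns the $\binom{n-1}{m-1}$ of (3) into the $\binom{n}{m}$ of the standardized "monotonicity on average" form.

```python
from collections import Counter
from itertools import combinations
from math import comb


def max_multiplicity(collection):
    """r(S, n): the largest number of subsets in S that share a single index."""
    counts = Counter(i for s in collection for i in s)
    return max(counts.values())


def consecutive_windows(n, m):
    """All subsets of {1, ..., n} made of m consecutive indices."""
    return [tuple(range(i, i + m)) for i in range(1, n - m + 2)]


def all_subsets(n, m):
    """Omega_m: all subsets of {1, ..., n} of size m."""
    return list(combinations(range(1, n + 1), m))


n, m = 6, 3  # arbitrary illustrative sizes with n >= 2m - 1
print(max_multiplicity(consecutive_windows(n, m)))  # multiplicity for windows
print(max_multiplicity(all_subsets(n, m)), comb(n - 1, m - 1))
print(n * comb(n - 1, m - 1) == m * comb(n, m))
```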

For clarity of presentation, we find it convenient to first outline the proof of (6) for i.i.d. random variables. Thus, in Section III, we establish the monotonicity result (6) in a simple and revealing manner that boils down to the geometry of projections (conditional expectations). Whereas ABBN [3] requires that $X_1$ has a $C^2$ density for monotonicity of Fisher divergence, absolute continuity of the density suffices in our approach. Furthermore, whereas the recent preprint of Shlyakhtenko [8] proves the analogue of the monotonicity fact for non-commutative or “free” probability theory, his method implies a proof for the classical case only assuming finiteness of all moments, while our direct proof requires only finite variance assumptions. Our proof also reveals in a simple manner the cases of equality in (6) (cf. Schultz [9]). Although we do not write it out for brevity, the monotonicity of entropy for standardized sums of $d$-dimensional random vectors has an identical proof.

We recall that for a random variable $X$ with density $f$, the entropy is $H(X) = -E[\log f(X)]$. For a differentiable density, the score function is $\rho_X(x) = \frac{\partial}{\partial x} \log f(x)$, and the Fisher information is $I(X) = E[\rho_X^2(X)]$. They are linked by an integral form of the de Bruijn identity due to Barron [6], which permits certain convolution inequalities for $I$ to translate into corresponding inequalities for $H$.

Underlying our inequalities is the demonstration for independent, not necessarily identically distributed (i.n.i.d.) random variables with absolutely continuous densities that

$$I(X_1+\ldots+X_n) \leq \binom{n-1}{m-1} \sum_{s\in\Omega_m} w_s^2\, I\Big(\sum_{i\in s} X_i\Big) \tag{7}$$

for any non-negative weights $w_s$ that add to 1 over all subsets $s \subset \{1, \ldots, n\}$ of size $m$. Optimizing over $w$ yields an inequality for inverse Fisher information that extends the original inequality of Stam:

$$\frac{1}{I(X_1+\ldots+X_n)} \geq \frac{1}{\binom{n-1}{m-1}} \sum_{s\in\Omega_m} \frac{1}{I\big(\sum_{i\in s} X_i\big)}. \tag{8}$$
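The optimization over $w$ that leads from (7) to (8) can be written out in a few lines. The following is only a sketch: the shorthand $I_s = I(\sum_{i\in s} X_i)$ and $c = \binom{n-1}{m-1}$ is ours, and we assume every $I_s$ is finite and positive.

```latex
% Minimize the right side of (7) over weights w_s >= 0 with sum_s w_s = 1.
% By the Cauchy-Schwarz inequality,
\[
1 = \Big(\sum_{s\in\Omega_m} w_s\Big)^2
  = \Big(\sum_{s\in\Omega_m} w_s\sqrt{I_s}\cdot\frac{1}{\sqrt{I_s}}\Big)^2
  \le \Big(\sum_{s\in\Omega_m} w_s^2 I_s\Big)\Big(\sum_{s\in\Omega_m}\frac{1}{I_s}\Big),
\]
% with equality when w_s is proportional to 1/I_s. Hence
\[
\min_{w}\sum_{s\in\Omega_m} w_s^2 I_s
  = \Big(\sum_{s\in\Omega_m}\frac{1}{I_s}\Big)^{-1},
\qquad
w_s^{\ast} = \frac{1/I_s}{\sum_{t\in\Omega_m} 1/I_t}.
\]
% Substituting w^* into (7) gives
\[
I(X_1+\ldots+X_n) \le \frac{c}{\sum_{s\in\Omega_m} 1/I_s},
\]
% which is (8) after taking reciprocals of both sides.
```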

Alternatively, using a scaling property of Fisher information to re-express our core inequality (7), we see that the Fisher information of the sum is bounded by a convex combination of Fisher informations of scaled partial sums:

$$I(X_1+\ldots+X_n) \leq \sum_{s\in\Omega_m} w_s\, I\left(\frac{\sum_{i\in s} X_i}{\sqrt{w_s \binom{n-1}{m-1}}}\right). \tag{9}$$

This integrates to give an inequality for entropy that is an extension of the “linear form of the entropy power inequality” developed by Dembo et al. [10]. Since smaller Fisher information along the Gaussian perturbation path corresponds to larger entropy, the inequality reverses direction, and we obtain

$$H(X_1+\ldots+X_n) \geq \sum_{s\in\Omega_m} w_s\, H\left(\frac{\sum_{i\in s} X_i}{\sqrt{w_s \binom{n-1}{m-1}}}\right). \tag{10}$$

Likewise, using the scaling property of entropy on (10) and optimizing over $w$ yields our extension of the entropy power inequality

$$\exp\{2H(X_1+\ldots+X_n)\} \geq \frac{1}{\binom{n-1}{m-1}} \sum_{s\in\Omega_m} \exp\Big\{2H\Big(\sum_{j\in s} X_j\Big)\Big\}.$$

Thus both inverse Fisher information and entropy power satisfy an inequality of the form

$$\binom{n-1}{m-1}\, \psi(X_1+\ldots+X_n) \geq \sum_{s\in\Omega_m} \psi\Big(\sum_{i\in s} X_i\Big). \tag{11}$$

We motivate the form (11) using the following almost trivial fact, which is proved in the Appendix for the reader’s convenience. Let $[n] = \{1, 2, \ldots, n\}$.

Fact I: For arbitrary non-negative numbers $\{a_i^2 : i \in [n]\}$,

$$\sum_{s\in\Omega_m} \sum_{i\in s} a_i^2 = \binom{n-1}{m-1} \sum_{i\in[n]} a_i^2, \tag{12}$$

where the first sum on the left is taken over the collection $\Omega_m = \{s \subset [n] : |s| = m\}$ of sets containing $m$ indices.

If Fact I is thought of as $(m, n)$-additivity, then (8) and (3) represent the $(m, n)$-superadditivity of inverse Fisher information and entropy power respectively. In the case of normal random variables, the inverse Fisher information and the entropy power equal the variance. Thus in that case (8) and (3) become Fact I with $a_i^2$ equal to the variance of $X_i$.
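Fact I and its Gaussian interpretation are easy to check numerically. The sketch below enumerates $\Omega_m$ directly and compares both sides of (12), then evaluates both sides of (3) for independent normals. The function names and the sample variances are illustrative choices of ours; note that `gaussian_entropy_power` returns the unnormalized quantity $e^{2H} = 2\pi e \sigma^2$, so the common factor $2\pi e$ appears on both sides.

```python
from itertools import combinations
from math import comb, e, isclose, pi


def fact_one_sides(a_sq, m):
    """Return both sides of (12) for non-negative numbers a_sq and subset size m."""
    n = len(a_sq)
    lhs = sum(sum(a_sq[i] for i in s) for s in combinations(range(n), m))
    rhs = comb(n - 1, m - 1) * sum(a_sq)
    return lhs, rhs


def gaussian_entropy_power(variance):
    """e^{2H(X)} for X normal with this variance, since H = 0.5*log(2*pi*e*variance)."""
    return 2 * pi * e * variance


def gaussian_epi_sides(variances, m):
    """Both sides of (3) when the X_i are independent normals with these variances."""
    n = len(variances)
    lhs = gaussian_entropy_power(sum(variances))
    rhs = sum(
        gaussian_entropy_power(sum(variances[i] for i in s))
        for s in combinations(range(n), m)
    ) / comb(n - 1, m - 1)
    return lhs, rhs


variances = [1, 2, 5, 7]  # arbitrary illustrative values
print(fact_one_sides(variances, 2))
print(gaussian_epi_sides(variances, 2))
```

For normal summands the two sides of (3) agree up to floating-point rounding, which is the equality case described above.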

II. SCORE FUNCTIONS AND PROJECTIONS

The first tool we need is a projection property of score functions of sums of independent random variables, which is well-known for smooth densities (cf. Blachman [11]). For completeness, we give the proof. As shown by Johnson and Barron [7], it is sufficient that the densities are absolutely continuous; see [7, Appendix 1] for an explanation of why this is so.

Lemma I: [CONVOLUTION IDENTITY FOR SCORE FUNCTIONS] If $V_1$ and $V_2$ are independent random variables, and $V_1$ has an absolutely continuous density with score $\rho_{V_1}$, then $V_1 + V_2$ has the score

$$\rho_{V_1+V_2}(v) = E[\rho_{V_1}(V_1) \mid V_1 + V_2 = v]. \tag{13}$$

Proof: Let $f_{V_1}$ and $f_V$ be the densities of $V_1$ and $V = V_1 + V_2$ respectively. Then, either bringing the derivative inside the integral for the smooth case, or via the more general formalism in [7],

$$\begin{aligned} f_V'(v) &= \frac{\partial}{\partial v} E[f_{V_1}(v - V_2)] \\ &= E[f_{V_1}'(v - V_2)] \\ &= E[f_{V_1}(v - V_2)\,\rho_{V_1}(v - V_2)], \end{aligned} \tag{14}$$

so that

$$\rho_V(v) = \frac{f_V'(v)}{f_V(v)} = E\left[\frac{f_{V_1}(v - V_2)}{f_V(v)}\,\rho_{V_1}(v - V_2)\right] = E[\rho_{V_1}(V_1) \mid V_1 + V_2 = v]. \tag{15}$$
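The identity of Lemma I can be checked numerically for a concrete pair of distributions. In the sketch below, which is an illustration of ours and not part of the proof, $V_1$ is taken to be standard logistic (density $1/(4\cosh^2(x/2))$, score $-\tanh(x/2)$) and $V_2$ standard normal; both choices, the integration grid and the function names are assumptions made for the example. The score of $V = V_1 + V_2$ is computed once by differentiating the convolution density and once through the conditional expectation in (15).

```python
import math


def logistic_pdf(x):
    """Density of the standard logistic distribution (our choice for V1)."""
    return 1.0 / (4.0 * math.cosh(x / 2.0) ** 2)


def logistic_score(x):
    """Score of the standard logistic: d/dx log f(x) = -tanh(x / 2)."""
    return -math.tanh(x / 2.0)


def normal_pdf(x):
    """Density of the standard normal distribution (our choice for V2)."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


STEP = 0.01
GRID = [-12.0 + STEP * k for k in range(2401)]  # integration grid for V2


def density_of_sum(v):
    """f_V(v) = E[f_{V1}(v - V2)], approximated by a Riemann sum."""
    return STEP * sum(logistic_pdf(v - u) * normal_pdf(u) for u in GRID)


def score_by_differentiation(v, h=1e-3):
    """rho_V(v) = f_V'(v) / f_V(v), with a central difference for f_V'."""
    derivative = (density_of_sum(v + h) - density_of_sum(v - h)) / (2.0 * h)
    return derivative / density_of_sum(v)


def score_by_conditioning(v):
    """rho_V(v) = E[rho_{V1}(V1) | V1 + V2 = v], the middle expression in (15)."""
    numerator = STEP * sum(
        logistic_pdf(v - u) * logistic_score(v - u) * normal_pdf(u) for u in GRID
    )
    return numerator / density_of_sum(v)


for v in (-2.0, 0.5, 3.0):
    print(v, score_by_differentiation(v), score_by_conditioning(v))
```

The two columns printed for each $v$ should agree up to discretization error.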

The second tool we need is a “variance drop lemma”, which goes back at least to Hoeffding’s seminal work [12] on U-statistics (see his Theorem 5.2). An equivalent statement of the variance drop lemma was formulated in ABBN [3]. In [4], we prove and use a more general result to study the i.n.i.d. case.

First we need to recall a decomposition of functions in $L^2(\mathbb{R}^n)$, which is nothing but the Analysis of Variance (ANOVA) decomposition of a statistic. The following conventions are useful. $[n]$ is the index set $\{1, 2, \ldots, n\}$. For any $s \subset [n]$, $X_s$ stands for the collection of random variables $\{X_i : i \in s\}$. For any $j \in [n]$, $E_j\psi$ denotes the conditional expectation of $\psi$, given all random variables other than $X_j$, i.e.,

$$E_j\psi(x_1, \ldots, x_n) = E[\psi(X_1, \ldots, X_n) \mid X_i = x_i \ \forall i \neq j] \tag{16}$$

averages out the dependence on the $j$-th coordinate.

Fact II: [ANOVA DECOMPOSITION] Suppose $\psi : \mathbb{R}^n \to \mathbb{R}$ satisfies $E\psi^2(X_1, \ldots, X_n) < \infty$, i.e., $\psi \in L^2$, for independent random variables $X_1, X_2, \ldots, X_n$. For $s \subset [n]$, define the orthogonal linear subspaces

$$\mathcal{H}_s = \{\psi \in L^2 : E_j\psi = \psi\, 1_{\{j \notin s\}} \ \forall j \in [n]\} \tag{17}$$

of functions depending only on the variables indexed by $s$. Then $L^2$ is the orthogonal direct sum of this family of subspaces, i.e., any $\psi \in L^2$ can be written in the form

$$\psi = \sum_{s\subset[n]} \psi_s, \tag{18}$$

where $\psi_s \in \mathcal{H}_s$.

Remark: In the language of ANOVA familiar to statisticians, when $\phi$ is the empty set, $\psi_\phi$ is the mean; $\psi_{\{1\}}, \psi_{\{2\}}, \ldots, \psi_{\{n\}}$ are the main effects; $\{\psi_s : |s| = 2\}$ are the pairwise interactions, and so on. Fact II implies that for any subset $s \subset [n]$, the function $\sum_{\{R : R \subset s\}} \psi_R$ is the best approximation (in mean square) to $\psi$ that depends only on the collection $X_s$ of random variables.

Remark: The historical roots of this decomposition lie in the work of von Mises [13] and Hoeffding [12]. For various refinements and interpretations, see Kurkjian and Zelen [14], Jacobsen [15], Rubin and Vitale [16], Efron and Stein [17], and Takemura [18]; these works include applications of such decompositions to experimental design, linear models, U-statistics, and jackknife theory. The Appendix contains a brief proof of Fact II for the convenience of the reader.

We say that a function $f : \mathbb{R}^d \to \mathbb{R}$ is an additive function if there exist functions $f_i : \mathbb{R} \to \mathbb{R}$ such that $f(x_1, \ldots, x_d) = \sum_{i\in[d]} f_i(x_i)$.

Lemma II: [VARIANCE DROP] Let $\psi : \mathbb{R}^{n-1} \to \mathbb{R}$. Suppose, for each $j \in [n]$, $\psi_j = \psi(X_1, \ldots, X_{j-1}, X_{j+1}, \ldots, X_n)$ has mean 0. Then

$$E\Big[\Big(\sum_{j=1}^{n} \psi_j\Big)^2\Big] \leq (n-1) \sum_{j\in[n]} E\big[\psi_j^2\big]. \tag{19}$$

Equality can hold only if $\psi$ is an additive function.

Proof: By the Cauchy-Schwarz inequality, for any $V_j$,

$$\Big[\frac{1}{n} \sum_{j\in[n]} V_j\Big]^2 \leq \frac{1}{n} \sum_{j\in[n]} V_j^2, \tag{20}$$

so that

$$E\Big[\Big(\sum_{j\in[n]} V_j\Big)^2\Big] \leq n \sum_{j\in[n]} E\big[V_j^2\big]. \tag{21}$$

Let $\bar{E}_s$ be the operation that produces the component $\bar{E}_s\psi = \psi_s$ (see the Appendix for a further characterization of it); then

$$\begin{aligned} E\Big[\Big(\sum_{j\in[n]} \psi_j\Big)^2\Big] &= E\Big[\Big(\sum_{s\subset[n]} \sum_{j\in[n]} \bar{E}_s\psi_j\Big)^2\Big] \\ &\overset{(a)}{=} \sum_{s\subset[n]} E\Big[\Big(\sum_{j\notin s} \bar{E}_s\psi_j\Big)^2\Big] \\ &\overset{(b)}{\leq} \sum_{s\subset[n]} (n-1) \sum_{j\notin s} E\big[(\bar{E}_s\psi_j)^2\big] \\ &\overset{(c)}{=} (n-1) \sum_{j\in[n]} E\big[\psi_j^2\big]. \end{aligned} \tag{22}$$

Here, (a) and (c) employ the orthogonal decomposition of Fact II and Parseval’s theorem. The inequality (b) is based on two facts: firstly, $E_j\psi_j = \psi_j$ since $\psi_j$ is independent of $X_j$, and hence $\bar{E}_s\psi_j = 0$ if $j \in s$; secondly, we can ignore the null set $\phi$ in the outer sum since the mean of a score function is 0, and therefore $\{j : j \notin s\}$ in the inner sum has at most $n-1$ elements, so that (21) applies with $n-1$ in place of $n$. For equality to hold, $\bar{E}_s\psi_j$ can only be non-zero when $s$ has exactly 1 element, i.e., each $\psi_j$ must consist only of main effects and no interactions, so that it must be additive.
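Lemma II can be verified exactly on a small discrete example. The sketch below is an illustration under assumptions of ours: the $X_i$ are i.i.d. uniform on $\{-1, 0, 1\}$, $n = 3$, and two kernels are compared, a pure interaction $\psi(x, y) = xy$ and an additive kernel $\psi(x, y) = x + y$. Exact rational arithmetic avoids rounding, so the equality case for the additive kernel shows up exactly.

```python
from fractions import Fraction
from itertools import product


def variance_drop_sides(psi, support, n):
    """Exact values of both sides of (19) for X_i i.i.d. uniform on `support`.

    psi takes n - 1 arguments; psi_j is psi applied to all coordinates except the j-th.
    """
    prob = Fraction(1, len(support) ** n)  # probability of each outcome
    lhs = Fraction(0)
    sum_of_squares = Fraction(0)
    for x in product(support, repeat=n):
        leave_one_out = [psi(*(x[:j] + x[j + 1:])) for j in range(n)]
        lhs += prob * sum(leave_one_out) ** 2
        sum_of_squares += prob * sum(v * v for v in leave_one_out)
    return lhs, (n - 1) * sum_of_squares


support = (-1, 0, 1)  # mean-zero support, so both kernels below have mean 0


def interaction(x, y):
    return x * y


def additive(x, y):
    return x + y


print(variance_drop_sides(interaction, support, 3))  # strict inequality
print(variance_drop_sides(additive, support, 3))     # equality for an additive kernel
```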

III. MONOTONICITY IN THE IID CASE

For i.i.d. random variables, inequalities (2) and (3) reduce to the monotonicity $H(Y_n) \geq H(Y_m)$ for $n > m$, where

$$Y_n = \frac{1}{\sqrt{n}} \sum_{i=1}^{n} X_i. \tag{23}$$

For clarity of presentation of ideas, we focus first on the i.i.d. case, beginning with Fisher information.

Proposition I: [MONOTONICITY OF FISHER INFORMATION] For i.i.d. random variables with absolutely continuous densities,

$$I(Y_n) \leq I(Y_{n-1}), \tag{24}$$

with equality iff $X_1$ is normal or $I(Y_n) = \infty$.

Proof: We use the following notational conventions: the (unnormalized) sum is $V_n = \sum_{i\in[n]} X_i$, the leave-one-out sum leaving out $X_j$ is $V^{(j)} = \sum_{i\neq j} X_i$, and the normalized leave-one-out sum is $Y^{(j)} = \frac{1}{\sqrt{n-1}} \sum_{i\neq j} X_i$.

If $X' = aX$, then $\rho_{X'}(X') = \frac{1}{a}\rho_X(X)$; hence

$$\begin{aligned} \rho_{Y_n}(Y_n) &= \sqrt{n}\,\rho_{V_n}(V_n) \\ &\overset{(a)}{=} \sqrt{n}\,E[\rho_{V^{(j)}}(V^{(j)}) \mid V_n] \\ &= \sqrt{\frac{n}{n-1}}\,E[\rho_{Y^{(j)}}(Y^{(j)}) \mid V_n] \\ &\overset{(b)}{=} \frac{1}{\sqrt{n(n-1)}} \sum_{j=1}^{n} E[\rho_{Y^{(j)}}(Y^{(j)}) \mid Y_n]. \end{aligned} \tag{25}$$

Here, (a) follows from application of Lemma I to $V_n = V^{(j)} + X_j$, keeping in mind that $Y_{n-1}$ (hence $V^{(j)}$) has an absolutely continuous density, while (b) follows from symmetry. Set $\rho_j = \rho_{Y^{(j)}}(Y^{(j)})$; then we have

$$\rho_{Y_n}(Y_n) = \frac{1}{\sqrt{n(n-1)}}\,E\Big[\sum_{j=1}^{n} \rho_j \,\Big|\, Y_n\Big]. \tag{26}$$

Since the length of a vector is not less than the length of its projection (i.e., by the Cauchy-Schwarz inequality),

$$I(Y_n) = E\big[\rho_{Y_n}^2(Y_n)\big] \leq \frac{1}{n(n-1)}\,E\Big[\Big(\sum_{j=1}^{n} \rho_j\Big)^2\Big]. \tag{27}$$

Lemma II yields

$$E\Big[\Big(\sum_{j=1}^{n} \rho_j\Big)^2\Big] \leq (n-1) \sum_{j\in[n]} E\big[\rho_j^2\big] = (n-1)\,n\,I(Y_{n-1}), \tag{28}$$

which gives the inequality of Proposition I on substitution into (27). The inequality implied by Lemma II can be tight only if each $\rho_j$ is an additive function, but we already know that $\rho_j$ is a function of the sum. The only functions that are both additive and functions of the sum are linear functions of the sum; hence the two sides of (24) can be finite and equal only if the score $\rho_j$ is linear, i.e., if all the $X_i$ are normal. It is trivial to check that $X_1$ normal or $I(Y_n) = \infty$ imply equality.

We can now prove the monotonicity result for entropy in the i.i.d. case.

Theorem I: Suppose $X_i$ are i.i.d. random variables with densities. Suppose $X_1$ has mean 0 and finite variance, and

$$Y_n = \frac{1}{\sqrt{n}} \sum_{i=1}^{n} X_i. \tag{29}$$

Then

$$H(Y_n) \geq H(Y_{n-1}). \tag{30}$$

The two sides are finite and equal iff $X_1$ is normal.

Proof: Recall the integral form of the de Bruijn identity, which is now a standard method to “lift” results from Fisher divergence to relative entropy. This identity was first stated in its differential form by Stam [2] (and attributed by him to de Bruijn), and proved in its integral form by Barron [6]: if $X_t$ is equal in distribution to $X + \sqrt{t}Z$, where $Z$ is normally distributed independent of $X$, then

$$H(X) = \frac{1}{2}\log(2\pi e v) - \frac{1}{2}\int_0^\infty \Big[I(X_t) - \frac{1}{v+t}\Big]\,dt \tag{31}$$

is valid in the case that the variances of $Z$ and $X$ are both $v$. This has the advantage of positivity of the integrand but the disadvantage that it seems to depend on $v$. One can use

$$\log v = \int_0^\infty \Big[\frac{1}{1+t} - \frac{1}{v+t}\Big]\,dt \tag{32}$$

to re-express it in the form

$$H(X) = \frac{1}{2}\log(2\pi e) - \frac{1}{2}\int_0^\infty \Big[I(X_t) - \frac{1}{1+t}\Big]\,dt. \tag{33}$$

Combining this with Proposition I, the proof is finished.
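To see Theorem I at work on a concrete case, the following sketch estimates $H(Y_n)$ for $n = 1, 2, 3$ when the $X_i$ are uniform on $[-\sqrt{3}, \sqrt{3}]$ (mean 0, variance 1). This is a numerical illustration of ours, not part of the proof: the density of the sum is approximated on a grid by repeated discrete convolution, the entropy by a Riemann sum, and the function names and the grid size `cells` are arbitrary choices. For $n = 2$ the sum has a triangular density and the exact value $\frac{1}{2} + \frac{1}{2}\log 6$ is available for comparison.

```python
import math


def convolve(p, q):
    """Discrete convolution of two probability vectors."""
    out = [0.0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            out[i + j] += a * b
    return out


def standardized_sum_entropies(max_n, cells=400):
    """Approximate H(Y_1), ..., H(Y_max_n) for X_i uniform on [-sqrt(3), sqrt(3)]."""
    half_width = math.sqrt(3.0)          # gives variance 1
    h = 2.0 * half_width / cells          # grid spacing
    base = [1.0 / cells] * cells          # cell probabilities of one summand
    pmf = base[:]
    entropies = []
    for n in range(1, max_n + 1):
        if n > 1:
            pmf = convolve(pmf, base)
        # Riemann-sum estimate of the entropy of X_1 + ... + X_n (density ~ pmf / h).
        h_sum = -sum(p * math.log(p / h) for p in pmf if p > 0.0)
        # Scaling property H(aX) = H(X) + log(a) with a = 1 / sqrt(n).
        entropies.append(h_sum - 0.5 * math.log(n))
    return entropies


entropies = standardized_sum_entropies(3)
gaussian_entropy = 0.5 * math.log(2.0 * math.pi * math.e)
print(entropies, gaussian_entropy)
```

The estimates increase with $n$ and stay below the entropy $\frac{1}{2}\log(2\pi e)$ of the standard normal, in line with (30).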

IV. EXTENSIONS

For the case of independent, non-identically distributed (i.n.i.d.) summands, we need a general version of the “variance drop” lemma.

Lemma III: [VARIANCE DROP: GENERAL VERSION] Suppose we are given a class of functions $\psi^{(s)} : \mathbb{R}^{|s|} \to \mathbb{R}$ for any $s \in \Omega_m$, and $E\psi^{(s)}(X_1, \ldots, X_m) = 0$ for each $s$. Let $w$ be any probability distribution on $\Omega_m$. Define

$$U(X_1, \ldots, X_n) = \sum_{s\in\Omega_m} w_s\, \psi^{(s)}(X_s), \tag{34}$$

where we write $\psi^{(s)}(X_s)$ for a function of $X_s$. Then

$$EU^2 \leq \binom{n-1}{m-1} \sum_{s\in\Omega_m} w_s^2\, E\big[(\psi^{(s)}(X_s))^2\big], \tag{35}$$

and equality can hold only if each $\psi^{(s)}$ is an additive function (in the sense defined earlier).

Remark: When $\psi^{(s)} = \psi$ (i.e., all the $\psi^{(s)}$ are the same), ψ is symmetric in its arguments, and w is uniform, the U defined above is a U-statistic of degree m with symmetric, mean zero kernel ψ. Lemma III then becomes the well-known bound for the variance of a U-statistic shown by Hoeffding [12], namely $EU^2 \le \frac{m}{n} E\psi^2$.<br />

This gives our core inequality (7).<br />
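The Hoeffding special case in the Remark can be checked exactly on a toy example. The sketch below assumes i.i.d. X_i uniform on {−1, +1} and uniform weights on Ω_m, and computes EU² by full enumeration. The two kernels and all function names are illustrative assumptions: the product kernel is mean zero but not additive, while the sum kernel is additive and should attain the bound.<br />

```python
from itertools import combinations, product


def u_statistic_second_moment(n, m, kernel):
    """Exact E[U^2] for X_i i.i.d. uniform on {-1, +1}, uniform weights on Omega_m."""
    subsets = list(combinations(range(n), m))
    total = 0.0
    for x in product((-1, 1), repeat=n):
        # U is the average of the kernel over all m-subsets of indices.
        u = sum(kernel(*(x[i] for i in s)) for s in subsets) / len(subsets)
        total += u * u
    return total / 2 ** n


def kernel_second_moment(m, kernel):
    """Exact E[psi^2] under the same distribution."""
    return sum(kernel(*x) ** 2 for x in product((-1, 1), repeat=m)) / 2 ** m


def product_kernel(a, b):
    return a * b  # mean zero, symmetric, not additive


def additive_kernel(a, b):
    return a + b  # mean zero, symmetric, additive


if __name__ == "__main__":
    n, m = 5, 2
    for kernel in (product_kernel, additive_kernel):
        lhs = u_statistic_second_moment(n, m, kernel)
        rhs = (m / n) * kernel_second_moment(m, kernel)
        print(kernel.__name__, lhs, rhs)
```

With n = 5 and m = 2, the product kernel gives EU² = 1/10 against a bound of 2/5, while the additive kernel gives 4/5 on both sides, consistent with the equality condition of Lemma III.<br />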

Proposition II: Let $\{X_i\}$ be independent random variables with densities and finite variances. Define<br />

$$T_n = \sum_{i \in [n]} X_i \quad \text{and} \quad T_m^{(s)} = T^{(s)} = \sum_{i \in s} X_i, \tag{36}$$<br />

where $s \in \Omega_m = \{s \subset [n] : |s| = m\}$. Let w be any probability distribution on $\Omega_m$. If each $T_m^{(s)}$ has an absolutely continuous density, then<br />

$$I(T_n) \le \binom{n-1}{m-1} \sum_{s \in \Omega_m} w_s^2 \, I\big(T_m^{(s)}\big), \tag{37}$$<br />

where $w_s = w(\{s\})$. Both sides can be finite and equal only if each $X_i$ is normal.<br />

Proof: In the sequel, for convenience, we abuse notation by using ρ to denote several different score functions; $\rho(Y)$ always means $\rho_Y(Y)$. For each $s \in \Omega_m$, Lemma I and the fact that $T_m^{(s)}$ has an absolutely continuous density imply<br />

$$\rho(T_n) = E\left[ \rho\Big( \sum_{i \in s} X_i \Big) \,\Big|\, T_n \right]. \tag{38}$$<br />

Taking a convex combination of these identities gives, for any $\{w_s\}$ such that $\sum_{s \in \Omega_m} w_s = 1$,<br />

$$\begin{aligned}
\rho(T_n) &= \sum_{s \in \Omega_m} w_s \, E\left[ \rho\Big( \sum_{i \in s} X_i \Big) \,\Big|\, T_n \right] \\
&= E\left[ \sum_{s \in \Omega_m} w_s \, \rho\big(T^{(s)}\big) \,\Big|\, T_n \right].
\end{aligned} \tag{39}$$<br />

By applying the Cauchy-Schwarz inequality and Lemma III in succession, we get<br />

$$\begin{aligned}
I(T_n) &\le E\left[ \sum_{s \in \Omega_m} w_s \, \rho\big(T^{(s)}\big) \right]^2 \\
&\le \binom{n-1}{m-1} \sum_{s \in \Omega_m} E\big[ w_s \, \rho\big(T^{(s)}\big) \big]^2 \\
&= \binom{n-1}{m-1} \sum_{s \in \Omega_m} w_s^2 \, I\big(T^{(s)}\big).
\end{aligned} \tag{40}$$<br />

The application of Lemma III can yield equality only if each $\rho(T^{(s)})$ is additive; since the score $\rho(T^{(s)})$ is already a function of the sum $T^{(s)}$, it must in fact be a linear function, so that each $X_i$ must be normal.<br />
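Inequality (37) can be illustrated in the Gaussian case, where Fisher information is the reciprocal of the variance. The sketch below assumes independent normal X_i with given variances, so that I(T_n) = 1/Σσ_i² and I(T^{(s)}) = 1/Σ_{i∈s}σ_i². The helper names are hypothetical, and the choice of weights proportional to the variance of T^{(s)} is an illustrative assumption under which both sides coincide.<br />

```python
from itertools import combinations
from math import comb


def gaussian_fisher_sides(variances, m, weights=None):
    """Return (I(T_n), right side of (37)) for independent normal summands."""
    n = len(variances)
    subsets = list(combinations(range(n), m))
    subset_vars = [sum(variances[i] for i in s) for s in subsets]
    if weights is None:
        # Default: uniform probability distribution on Omega_m.
        weights = [1.0 / len(subsets)] * len(subsets)
    lhs = 1.0 / sum(variances)
    rhs = comb(n - 1, m - 1) * sum(w * w / sv for w, sv in zip(weights, subset_vars))
    return lhs, rhs


def variance_proportional_weights(variances, m):
    """Weights w_s proportional to Var(T^(s)); they sum to 1 by Fact I."""
    n = len(variances)
    norm = comb(n - 1, m - 1) * sum(variances)
    return [sum(variances[i] for i in s) / norm
            for s in combinations(range(n), m)]


if __name__ == "__main__":
    variances = [1.0, 2.0, 0.5, 4.0]
    print(gaussian_fisher_sides(variances, 2))
    w = variance_proportional_weights(variances, 2)
    print(gaussian_fisher_sides(variances, 2, w))
```

With uniform weights the inequality is strict for these unequal variances; with the variance-proportional weights the two sides agree up to rounding.<br />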

APPENDIX<br />

A. Proof of Fact I<br />

$$\sum_{s \in \Omega_m} \bar{a}_s^2 = \sum_{s \in \Omega_m} \sum_{i \in s} a_i^2 = \sum_{i \in [n]} \sum_{s \ni i,\, |s| = m} a_i^2 = \sum_{i \in [n]} \binom{n-1}{m-1} a_i^2 = \binom{n-1}{m-1} \sum_{i \in [n]} a_i^2. \tag{41}$$<br />
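The counting argument in (41) is easy to confirm by brute force: each index i belongs to exactly C(n−1, m−1) subsets of size m. The sketch below uses integer inputs so the comparison is exact; the function name and the sample vector are illustrative only.<br />

```python
from itertools import combinations
from math import comb


def fact_one_sides(a, m):
    """Both sides of (41): sum over m-subsets of sum_{i in s} a_i^2, and C(n-1, m-1) * sum a_i^2."""
    n = len(a)
    lhs = sum(sum(a[i] ** 2 for i in s) for s in combinations(range(n), m))
    rhs = comb(n - 1, m - 1) * sum(x ** 2 for x in a)
    return lhs, rhs


if __name__ == "__main__":
    print(fact_one_sides([3, -1, 4, 1, -5], 3))
```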

B. Proof of Fact II<br />

Let $E_s$ denote the integrating out of the variables in s, so that $E_j = E_{\{j\}}$. Keeping in mind that the order of integrating out independent variables does not matter (i.e., the $E_j$ are commuting projection operators in $L^2$), we can write<br />

$$\begin{aligned}
\phi &= \prod_{j=1}^{n} \big[ E_j + (I - E_j) \big] \phi \\
&= \sum_{s \subset [n]} \prod_{j \notin s} E_j \prod_{j \in s} (I - E_j) \phi \\
&= \sum_{s \subset [n]} \phi_s,
\end{aligned} \tag{42}$$<br />

where<br />

$$\phi_s = \bar{E}_s \phi \equiv E_{s^c} \prod_{j \in s} (I - E_j) \phi. \tag{43}$$<br />

In order to show that the subspaces $H_s$ are orthogonal, observe that for any distinct $s_1$ and $s_2$, there is at least one j such that (interchanging $s_1$ and $s_2$ if necessary) $H_{s_1}$ is contained in the image of $E_j$ and $H_{s_2}$ is contained in the image of $(I - E_j)$; hence every vector in $H_{s_1}$ is orthogonal to every vector in $H_{s_2}$.<br />
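The decomposition (42) and the orthogonality claim can be checked numerically on a small discrete example. The sketch below assumes three independent coordinates, each uniform on a finite set, so that E_j is an average over coordinate j. The test function `phi`, the level sizes, and all helper names are hypothetical choices made only for illustration.<br />

```python
from itertools import combinations, product


def integrate_out(f, j, levels):
    """E_j f: average f over coordinate j, taken uniform on range(levels[j])."""
    out = {}
    for x in f:
        values = (f[x[:j] + (v,) + x[j + 1:]] for v in range(levels[j]))
        out[x] = sum(values) / levels[j]
    return out


def anova_component(phi, s, levels):
    """phi_s: apply E_j for every j not in s and (I - E_j) for every j in s."""
    g = dict(phi)
    for j in range(len(levels)):
        e = integrate_out(g, j, levels)
        g = {x: g[x] - e[x] for x in g} if j in s else e
    return g


def inner(f, g):
    """L2 inner product under the uniform product measure."""
    return sum(f[x] * g[x] for x in f) / len(f)


def all_subsets(n):
    return [frozenset(c) for r in range(n + 1) for c in combinations(range(n), r)]


levels = (2, 3, 2)
phi = {x: (x[0] + 1) * (x[1] - 1) ** 2 + x[2] * x[0] - 0.5 * x[1]
       for x in product(*(range(k) for k in levels))}
components = {s: anova_component(phi, s, levels) for s in all_subsets(len(levels))}

if __name__ == "__main__":
    # Largest reconstruction error of phi from its 2^n components.
    print(max(abs(sum(c[x] for c in components.values()) - phi[x]) for x in phi))
```

Because the E_j commute, the order in which the loop applies them does not affect the result.<br />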

REFERENCES<br />

[1] C. Shannon, “A mathematical theory of communication,” Bell System Tech. J., vol. 27, pp. 379–423, 623–656, 1948.<br />

[2] A. Stam, “Some inequalities satisfied by the quantities of information of Fisher and Shannon,” Information and Control, vol. 2, pp. 101–112, 1959.<br />

[3] S. Artstein, K. M. Ball, F. Barthe, and A. Naor, “Solution of Shannon’s problem on the monotonicity of entropy,” J. Amer. Math. Soc., vol. 17, no. 4, pp. 975–982 (electronic), 2004.<br />

[4] M. Madiman and A. Barron, “Generalized entropy power inequalities and monotonicity properties of information,” Submitted, 2006.<br />

[5] R. Shimizu, “On Fisher’s amount of information for location family,” in Statistical Distributions in Scientific Work, G. et al, Ed. Reidel, 1975, vol. 3, pp. 305–312.<br />

[6] A. Barron, “Entropy and the central limit theorem,” Ann. Probab., vol. 14, pp. 336–342, 1986.<br />

[7] O. Johnson and A. Barron, “Fisher information inequalities and the central limit theorem,” Probab. Theory Related Fields, vol. 129, no. 3, pp. 391–409, 2004.<br />

[8] D. Shlyakhtenko, “A free analogue of Shannon’s problem on monotonicity of entropy,” Preprint, 2005. [Online]. Available: arxiv:math.OA/0510103<br />

[9] H. Schultz, “Semicircularity, gaussianity and monotonicity of entropy,” Preprint, 2005. [Online]. Available: arxiv:math.OA/0512492<br />

[10] A. Dembo, T. Cover, and J. Thomas, “Information-theoretic inequalities,” IEEE Trans. Inform. Theory, vol. 37, no. 6, pp. 1501–1518, 1991.<br />

[11] N. Blachman, “The convolution inequality for entropy powers,” IEEE Trans. Inform. Theory, vol. IT-11, pp. 267–271, 1965.<br />

[12] W. Hoeffding, “A class of statistics with asymptotically normal distribution,” Ann. Math. Statist., vol. 19, no. 3, pp. 293–325, 1948.<br />

[13] R. von Mises, “On the asymptotic distribution of differentiable statistical functions,” Ann. Math. Statist., vol. 18, no. 3, pp. 309–348, 1947.<br />

[14] B. Kurkjian and M. Zelen, “A calculus for factorial arrangements,” Ann. Math. Statist., vol. 33, pp. 600–619, 1962.<br />

[15] R. L. Jacobsen, “Linear algebra and ANOVA,” Ph.D. dissertation, Cornell University, 1968.<br />

[16] H. Rubin and R. A. Vitale, “Asymptotic distribution of symmetric statistics,” Ann. Statist., vol. 8, no. 1, pp. 165–170, 1980.<br />

[17] B. Efron and C. Stein, “The jackknife estimate of variance,” Ann. Statist., vol. 9, no. 3, pp. 586–596, 1981.<br />

[18] A. Takemura, “Tensor analysis of ANOVA decomposition,” J. Amer. Statist. Assoc., vol. 78, no. 384, pp. 894–900, 1983.