2007 Issue 2 - Raytheon


Feature

Continued from page 15

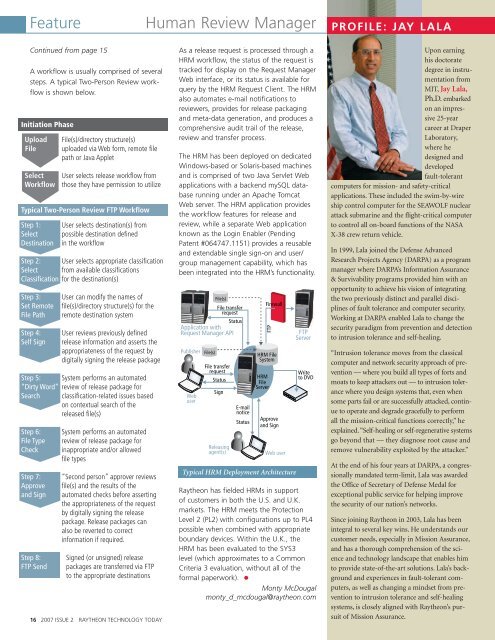

A workflow usually consists of several steps. A typical Two-Person Review workflow is shown below.

Initiation Phase

Upload File: File(s)/directory structure(s) uploaded via Web form, remote file path or Java Applet.

Select Workflow: User selects release workflow from those they have permission to utilize.

Typical Two-Person Review FTP Workflow

Step 1: Select Destination. User selects destination(s) from the possible destinations defined in the workflow.

Step 2: Select Classification. User selects appropriate classification from available classifications for the destination(s).

Step 3: Set Remote File Path. User can modify the names of file(s)/directory structure(s) for the remote destination system.

Step 4: Self Sign. User reviews previously defined release information and asserts the appropriateness of the request by digitally signing the release package.

Step 5: “Dirty Word” Search. System performs an automated review of release package for classification-related issues based on contextual search of the released file(s).

Step 6: File Type Check. System performs an automated review of release package for inappropriate and/or allowed file types.

Step 7: Approve and Sign. “Second person” approver reviews file(s) and the results of the automated checks before asserting the appropriateness of the request by digitally signing the release package. Release packages can also be reverted to correct information if required.

Step 8: FTP Send. Signed (or unsigned) release packages are transferred via FTP to the appropriate destinations.
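The automated checks in Steps 5 and 6 and the two-signature gate in Steps 4 and 7 can be sketched as follows. This is a minimal illustration, not the HRM's implementation: the names `ReleasePackage`, `dirty_word_hits`, `disallowed_files`, `review_summary` and `ready_to_send`, the sample word list, and the allowed extensions are all hypothetical. A plain substring match stands in for the contextual search described above, and the sketch assumes a workflow configured to require both signatures before sending.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath

# Hypothetical configuration; a real deployment would define these per destination.
DIRTY_WORDS = {"secret", "noforn"}
ALLOWED_EXTENSIONS = {".txt", ".pdf"}


@dataclass
class ReleasePackage:
    files: dict                                      # remote file name -> text content
    signatures: list = field(default_factory=list)   # user names of signers, in order


def dirty_word_hits(pkg):
    """Step 5 (simplified): return (file name, word) pairs found by substring match."""
    hits = []
    for name, text in sorted(pkg.files.items()):
        lowered = text.lower()
        for word in sorted(DIRTY_WORDS):
            if word in lowered:
                hits.append((name, word))
    return hits


def disallowed_files(pkg):
    """Step 6: return file names whose extension is not on the allowed list."""
    return [name for name in sorted(pkg.files)
            if PurePosixPath(name).suffix.lower() not in ALLOWED_EXTENSIONS]


def review_summary(pkg):
    """Step 7 input: the automated results shown to the second-person approver."""
    return {"dirty_words": dirty_word_hits(pkg), "bad_types": disallowed_files(pkg)}


def ready_to_send(pkg):
    """Assumed gate before Step 8: at least two distinct people have signed."""
    return len(set(pkg.signatures)) >= 2
```

In this sketch the automated checks only inform the approver; the decision to sign, or to revert the package for correction, remains a human one, as in Step 7.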

16 2007 ISSUE 2 RAYTHEON TECHNOLOGY TODAY

Human Review Manager<br />

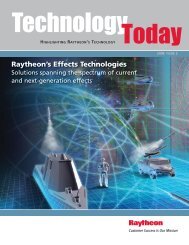

As a release request is processed through an HRM workflow, its status is tracked for display on the Request Manager Web interface and is available for query by the HRM Request Client. The HRM also automates e-mail notifications to reviewers, provides for release packaging and meta-data generation, and produces a comprehensive audit trail of the release, review and transfer process.
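Status tracking with an audit trail can be sketched as a record that logs every status change. This is an illustrative assumption only: the `ReleaseRequest` class, the status names, and the `query_status` helper are hypothetical and do not reflect the actual Request Manager API or HRM Request Client.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical status names, loosely following the workflow steps in the article.
STATUSES = ("SUBMITTED", "SELF_SIGNED", "CHECKED", "APPROVED", "SENT", "REVERTED")


@dataclass
class ReleaseRequest:
    request_id: str
    status: str = "SUBMITTED"
    audit_trail: list = field(default_factory=list)

    def set_status(self, new_status, actor):
        """Change status and append an audit entry recording who, what and when."""
        if new_status not in STATUSES:
            raise ValueError(f"unknown status: {new_status}")
        self.audit_trail.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "from": self.status,
            "to": new_status,
        })
        self.status = new_status


def query_status(requests, request_id):
    """What a status query might return: the current status, or None if unknown."""
    req = requests.get(request_id)
    return req.status if req else None
```

Because each transition is appended rather than overwritten, the trail preserves the full history of a request, including any reverts.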

The HRM has been deployed on dedicated Windows-based or Solaris-based machines and consists of two Java Servlet Web applications, backed by a MySQL database, running under an Apache Tomcat Web server. The HRM application provides the workflow features for release and review, while a separate Web application known as the Login Enabler (Pending Patent #064747.1151) provides a reusable and extendable single sign-on and user/group management capability, which has been integrated into the HRM’s functionality.

Figure: Typical HRM Deployment Architecture (diagram labels: Publisher Web user; Application with Request Manager API; File transfer request; Status; File(s); Sign; E-mail notice; HRM File Server; HRM File System; Firewall; Releasing agent(s) Web user; Approve and Sign; FTP; FTP Server; Write to DVD)

Raytheon has fielded HRMs in support of customers in both the U.S. and U.K. markets. The HRM meets Protection Level 2 (PL2) requirements, with configurations up to PL4 possible when combined with appropriate boundary devices. Within the U.K., the HRM has been evaluated to the SYS3 level (which approximates to a Common Criteria 3 evaluation, without all of the formal paperwork).

• Monty McDougal
monty_d_mcdougal@raytheon.com

PROFILE: JAY LALA<br />

Upon earning his doctoral degree in instrumentation from MIT, Jay Lala, Ph.D., embarked on an impressive 25-year career at Draper Laboratory, where he designed and developed fault-tolerant computers for mission- and safety-critical applications. These included the swim-by-wire ship control computer for the SEAWOLF nuclear attack submarine and the flight-critical computer to control all on-board functions of the NASA X-38 crew return vehicle.

In 1999, Lala joined the Defense Advanced Research Projects Agency (DARPA) as a program manager, where DARPA’s Information Assurance & Survivability programs gave him an opportunity to achieve his vision of integrating the two previously distinct and parallel disciplines of fault tolerance and computer security. Working at DARPA enabled Lala to change the security paradigm from prevention and detection to intrusion tolerance and self-healing.

“Intrusion tolerance moves from the classical computer and network security approach of prevention, where you build all types of forts and moats to keep attackers out, to intrusion tolerance, where you design systems that, even when some parts fail or are successfully attacked, continue to operate and degrade gracefully to perform all the mission-critical functions correctly,” he explained. “Self-healing or self-regenerative systems go beyond that: they diagnose root cause and remove vulnerability exploited by the attacker.”

At the end of his four years at DARPA, a congressionally mandated term limit, Lala was awarded the Office of Secretary of Defense Medal for exceptional public service for helping improve the security of our nation’s networks.

Since joining Raytheon in 2003, Lala has been integral to several key wins. He understands our customer needs, especially in Mission Assurance, and has a thorough comprehension of the science and technology landscape that enables him to provide state-of-the-art solutions. Lala’s background and experience in fault-tolerant computers, as well as in changing a mindset from prevention to intrusion tolerance and self-healing systems, are closely aligned with Raytheon’s pursuit of Mission Assurance.