- Page 1 and 2: An Introduction to Parallel Program

- Page 3 and 4: Day 2 Course Outline (cont) Morning

- Page 5 and 6: Day 1 Morning - Lecture Course Outl

- Page 7 and 8: Parallel Processing What is paralle

- Page 9 and 10: Parallel Processing David Cronk 9

- Page 11 and 12: Parallel Processing Why parallel pr

- Page 13 and 14: Parallel Programming Outline › Pr

- Page 15 and 16: Parallel Programming Message Passin

- Page 17 and 18: Data Distribution David Cronk 17

- Page 19 and 20: Day 1 Morning - Lecture Course Outl

- Page 21 and 22: What is MPI Introduction to MPI ›

- Page 23 and 24: What is MPI A standard library spec

- Page 25 and 26: Continuing to grow! What is MPI ›

- Page 27 and 28: Day 1 Morning - Lecture Course Outl

- Page 29 and 30: Getting Started with MPI MPI contai

- Page 31 and 32: MPI_INIT (ierr) ierr: Integer error

- Page 33 and 34: Basic Program Structure program mai

- Page 35 and 36: MPI_COMM_RANK (comm, rank, ierr) co

- Page 37 and 38: program main include 'mpif.h' i

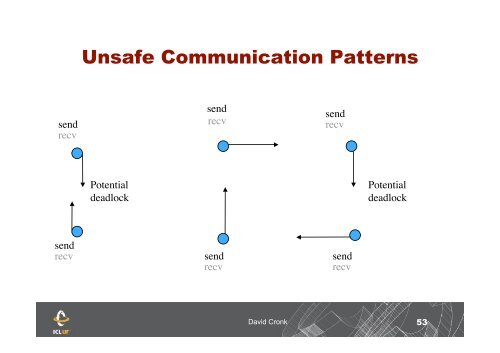

- Page 39 and 40: Message Passing Message passing is

- Page 41 and 42: Message Passing Processes are speci

- Page 43 and 44: MPI_STATUS Since a receive call onl

- Page 45 and 46: Types of data parallel MPI Programs

- Page 47 and 48: Peer/SPMD Example Compute the sum o

- Page 49 and 50: Master/Slave Example Master code: c

- Page 51: Master/Slave Example Master code: c

- Page 55 and 56: Jacobi Iteration Initializations .

- Page 57 and 58: Day 2 Course Outline (cont) Morning

- Page 59 and 60: Point-to-Point Communication Modes

- Page 61 and 62: MPI_ISEND (buf, cnt, dtype, dest, t

- Page 63 and 64: MPI_WAIT (request, status, ierr) Re

- Page 65 and 66: MPI_WAITALL (count, requests, statu

- Page 67 and 68: MPI_TESTANY (count, requests, index

- Page 69 and 70: Non-blocking Communication 100 cont

- Page 71 and 72: Non-blocking communication J = 0 do

- Page 73 and 74: Jacobi Iteration IF (myrank .EQ. 0)

- Page 75 and 76: Point-to-Point Communication Modes:

- Page 77 and 78: Point-to-Point Communication Modes:

- Page 79 and 80: Ready Mode SLAVE MASTER while (!don

- Page 81 and 82: TAKE A BREAK David Cronk 81

- Page 83 and 84: Collective Communication OUTLINE

- Page 85 and 86: Collective Communication Synchroniz

- Page 87 and 88: Collective Communication: Bcast MPI

- Page 89 and 90: Collective Communication: Gather A

- Page 91 and 92: MPI_Gather for (i = 0; i < 20; i++)

- Page 93 and 94: Collective Communication: Gatherv (

- Page 95 and 96: Collective Communication: Gatherv (

- Page 97 and 98: MPI_SCATTER IF (MYPE .EQ. ROOT) THE

- Page 99 and 100: Collective Communication: Scatterv

- Page 101 and 102: Collective Communication: Allgather

- Page 103 and 104: Allgatherv int mycount; /* inited t

- Page 105 and 106: Allgatherv p1 p2 p3 p4 MPI_Comm_ran

- Page 107 and 108: Collective Communication: Alltoallv

- Page 109 and 110: Example subroutine System_Change_in

- Page 111 and 112: Example (cont) if (.not. rand_festk

- Page 113 and 114: Collective Communication: Reduction

- Page 115 and 116: MPI_REDUCE REAL a(n), b(n,m), c(m)

- Page 117 and 118: MPI_ALLREDUCE REAL a(n), b(n,m), c(

- Page 119 and 120: Collective Communication: Prefix Re

- Page 121 and 122: Collective Communication: Reduction

- Page 123 and 124: Collective Communication: Performan

- Page 125 and 126: Derived Datatypes › Introduction

- Page 127 and 128: Derived Datatypes (cont) Typemap =

- Page 129 and 130: Datatype Constructors MPI_TYPE_DUP

- Page 131 and 132: Datatype Constructors (cont) MPI_TY

- Page 133 and 134: EXAMPLE REAL a(100,100), B(100,100)

- Page 135 and 136: Datatype Constructors (cont) MPI_TY

- Page 137 and 138: Datatype Constructors (cont) MPI_TY

- Page 139 and 140: MPI_TYPE_CREATE_STRUCT int i; char

- Page 141 and 142: MPI_TYPE_CREATE_RESIZED Struct s1 {

- Page 143 and 144: Datatype Constructors (cont) MPI_TY

- Page 145 and 146: Datatype Constructors: Subarrays (1

- Page 147 and 148: Datatype Constructors (cont) Derive

- Page 149 and 150: int i; char c[100]; MPI_Aint disp[2

- Page 151 and 152: TAKE A BREAK David Cronk 151

- Page 153 and 154: Communicators and Groups Many MPI u

- Page 155 and 156: Communicators and Groups(cont) P 2

- Page 157 and 158: Group Management You can access inf

- Page 159 and 160: Course Outline (cont) Day 3 Morning

- Page 161 and 162: One Sided Communication By requirin

- Page 163 and 164: One Sided Communication Get Put Add

- Page 165 and 166: One Sided Communication Data moveme

- Page 167 and 168: Dynamic Process Management Spawning

- Page 169 and 170: MPI-I/O Introduction › What is pa

- Page 171 and 172: What is parallel I/O INTRODUCTION

- Page 173 and 174: What is parallel I/O INTRODUCTION

- Page 175 and 176: INTRODUCTION (cont) Integer dim par

- Page 177 and 178: INTRODUCTION (cont) Why use paralle

- Page 179 and 180: INTRODUCTION (cont) What is MPI-I/O

- Page 181 and 182: Recommended references MPI - The Co