. K. Kulshreshtha, A. K<strong>on</strong>iaeva 4 / 13 <str<strong>on</strong>g>Vectorizing</str<strong>on</strong>g> <str<strong>on</strong>g>ADOL</str<strong>on</strong>g>-C using CUDA Euro AD 10.06.2013<strong>GPU</strong> Computing.NVIDIA’s CUDA architectureNVIDIA’s CUDA architectureHostDeviceGrid 1• E.g: NVIDIA adro 4000 with CUDARuntime versi<strong>on</strong> 4.2 hasKernel 1Block(0, 0)Block(0, 1)Block(1, 0)Block(1, 1)Block(2, 0)Block(2, 1)Maximum number <str<strong>on</strong>g>of</str<strong>on</strong>g> threads per block:1024Maximum sizes <str<strong>on</strong>g>of</str<strong>on</strong>g> each dimensi<strong>on</strong> <str<strong>on</strong>g>of</str<strong>on</strong>g> a block:1024 x 1024 x 64Maximum sizes <str<strong>on</strong>g>of</str<strong>on</strong>g> each dimensi<strong>on</strong> <str<strong>on</strong>g>of</str<strong>on</strong>g> a grid:65535 x 65535 x 65535Kernel 2Grid 2Block (1, 1)• Several kernels may be started parallely bydistributing <str<strong>on</strong>g>the</str<strong>on</strong>g>m <strong>on</strong> <str<strong>on</strong>g>the</str<strong>on</strong>g> gridThread(0, 0)Thread(0, 1)Thread(1, 0)Thread(1, 1)Thread(2, 0)Thread(2, 1)Thread(3, 0)Thread(3, 1)Thread(4, 0)Thread(4, 1)Thread(0, 2)Thread(1, 2)Thread(2, 2)Thread(3, 2)Thread(4, 2)The host issues a successi<strong>on</strong> <str<strong>on</strong>g>of</str<strong>on</strong>g> kernel invocati<strong>on</strong>s to <str<strong>on</strong>g>the</str<strong>on</strong>g> device. Each kernel is executed as a batch<str<strong>on</strong>g>of</str<strong>on</strong>g> threads <strong>org</strong>anized as a grid <str<strong>on</strong>g>of</str<strong>on</strong>g> thread blocks

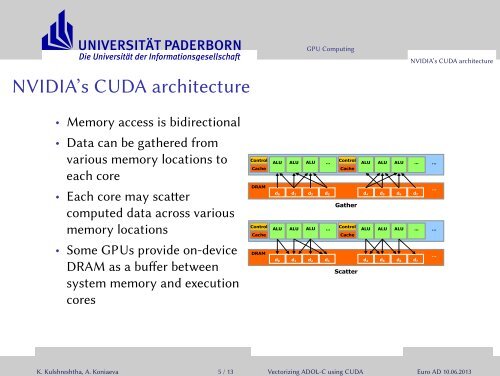

. K. Kulshreshtha, A. K<strong>on</strong>iaeva 5 / 13 <str<strong>on</strong>g>Vectorizing</str<strong>on</strong>g> <str<strong>on</strong>g>ADOL</str<strong>on</strong>g>-C using CUDA Euro AD 10.06.2013.NVIDIA’s CUDA architecture<strong>GPU</strong> ComputingNVIDIA’s CUDA architecture• Memory access is bidirecti<strong>on</strong>al• Data can be ga<str<strong>on</strong>g>the</str<strong>on</strong>g>red fromvarious memory locati<strong>on</strong>s toeach core• Each core may scaercomputed data across variousmemory locati<strong>on</strong>s• Some <strong>GPU</strong>s provide <strong>on</strong>-deviceDRAM as a buffer betweensystem memory and executi<strong>on</strong>coresC<strong>on</strong>trolALU ALU ALUC<strong>on</strong>trol...ALU ALU ALU ...CacheCacheDRAMd0 d1 d2 d3d4 d5 d6 d7Ga<str<strong>on</strong>g>the</str<strong>on</strong>g>rC<strong>on</strong>trolALU ALU ALUC<strong>on</strong>trol...ALU ALU ALU ...CacheCacheDRAMd0 d1 d2 d3d4 d5 d6 d7Scatter…………

- Page 1 and 2: .. K. Kulshreshtha, A. Koniaeva 1 /

- Page 3 and 4: . K. Kulshreshtha, A. Koniaeva 3 /

- Page 5: . K. Kulshreshtha, A. Koniaeva 4 /

- Page 9 and 10: . K. Kulshreshtha, A. Koniaeva 6 /

- Page 11 and 12: . K. Kulshreshtha, A. Koniaeva 7 /

- Page 13 and 14: . K. Kulshreshtha, A. Koniaeva 7 /

- Page 15 and 16: . K. Kulshreshtha, A. Koniaeva 9 /

- Page 17 and 18: . K. Kulshreshtha, A. Koniaeva 9 /

- Page 19 and 20: . K. Kulshreshtha, A. Koniaeva 9 /

- Page 21 and 22: . K. Kulshreshtha, A. Koniaeva 10 /

- Page 23 and 24: . K. Kulshreshtha, A. Koniaeva 12 /

- Page 25 and 26: Summary, Issues & Outlook.Summary,