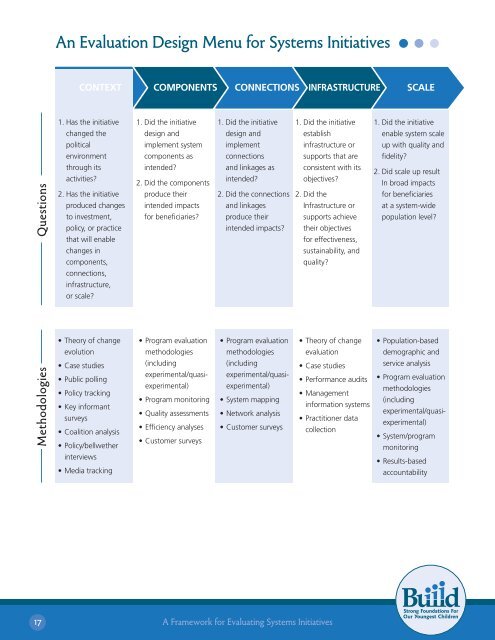

An Evaluation Design Menu for Systems Initiatives

| | Context | Components | Connections | Infrastructure | Scale |
|---|---|---|---|---|---|
| **Questions** | 1. Has the initiative changed the political environment through its activities?<br>2. Has the initiative produced changes to investment, policy, or practice that will enable changes in components, connections, infrastructure, or scale? | 1. Did the initiative design and implement system components as intended?<br>2. Did the components produce their intended impacts for beneficiaries? | 1. Did the initiative design and implement connections and linkages as intended?<br>2. Did the connections and linkages produce their intended impacts? | 1. Did the initiative establish infrastructure or supports that are consistent with its objectives?<br>2. Did the infrastructure or supports achieve their objectives for effectiveness, sustainability, and quality? | 1. Did the initiative enable system scale-up with quality and fidelity?<br>2. Did scale-up result in broad impacts for beneficiaries at a system-wide population level? |
| **Methodologies** | • Theory of change evaluation<br>• Case studies<br>• Public polling<br>• Policy tracking<br>• Key informant surveys<br>• Coalition analysis<br>• Policy/bellwether interviews<br>• Media tracking | • Program evaluation methodologies (including experimental/quasi-experimental)<br>• Program monitoring<br>• Quality assessments<br>• Efficiency analyses<br>• Customer surveys | • Program evaluation methodologies (including experimental/quasi-experimental)<br>• System mapping<br>• Network analysis<br>• Customer surveys | • Theory of change evaluation<br>• Case studies<br>• Performance audits<br>• Management information systems<br>• Practitioner data collection | • Population-based demographic and service analysis<br>• Program evaluation methodologies (including experimental/quasi-experimental)<br>• System/program monitoring<br>• Results-based accountability |

A Framework for Evaluating Systems Initiatives

Evaluation questions, designs, and methods can be “mixed and matched” as appropriate. For example, a quasi-experimental design may coexist with a theory of change approach, or case studies may be used alongside a results-based accountability approach. Initiative evaluations can incorporate multiple designs and a wide range of data collection methods.

The remainder of this section describes evaluation options for each focus area in more detail, discussing relevant evaluation questions and methodologies. It also offers information about the evaluations of the real-life initiatives described earlier.

Evaluating Context

Systems initiatives focused on context attempt to affect the political environment so that it better supports systems’ development and success.

Evaluation Questions

The key evaluation questions for these initiatives are:

1) Has the initiative changed the political environment through its activities?
2) Has the initiative produced changes to investment, policy, or practice that will enable changes in components, connections, infrastructure, or scale?

Evaluation Methodologies

Initiatives with a context focus generally use a theory of change approach to evaluation. Once a theory has been developed for how outcomes in the political context will be achieved, evaluators seek empirical evidence that the theory’s components are in place and that the theorized links between them exist. In other words, they compare the theory with actual experience.<sup>27</sup> Evaluators “construct methods for data collection and analysis to…examine the extent to which program [or initiative] theories hold.
The evaluation should show which of the assumptions underlying the program break down, where they break down, and which of the several theories underlying the program are best supported by the evidence.”<sup>28</sup>

In most cases, evaluations that use this approach do not attempt to determine whether the theory’s components are causally linked. Rather, they assess the components separately, using multiple and triangulated methods where possible, and then use both data and evaluative judgment to determine whether a plausible and defensible case can be made that the theory of change worked as anticipated and that the initiative had its intended effects. As the Urban Health Initiative evaluation example below demonstrates, however, it is possible to incorporate design elements such as comparison groups or other counterfactuals into a theory of change approach to strengthen confidence in interpretations about the links between theory of change components.

27. Connell, J., Kubisch, A., Schorr, L., & Weiss, C. (Eds.). (1995). New approaches to evaluating community initiatives: Concepts, methods, and contexts. Washington, DC: The Aspen Institute.
28. Weiss, C. (1995). Nothing as practical as good theory: Exploring theory-based evaluation for comprehensive community initiatives for children and families (p. 67). In Connell, J., Kubisch, A., Schorr, L., & Weiss, C. (Eds.), New approaches to evaluating community initiatives: Concepts, methods, and contexts. Washington, DC: The Aspen Institute.