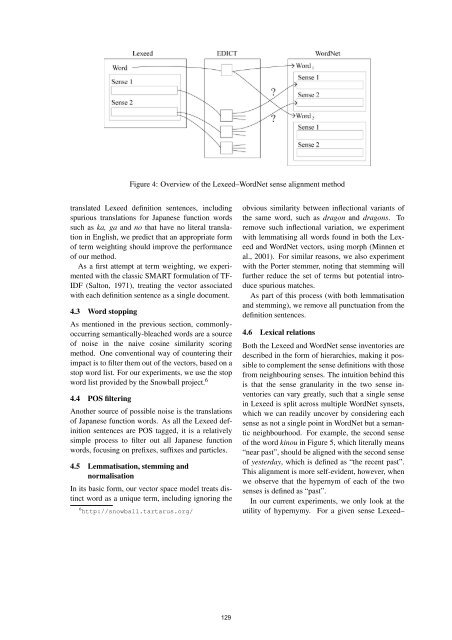

Figure 3: Example of normalisation of the translationstring; we stop at “rook” as <strong>Word</strong>Net has amatching entry <strong>for</strong> itWhen building the baseline <strong>for</strong> our evaluation, weused the SemCor corpus—a subset of the Browncorpus annotated with <strong>Word</strong>Net senses—to derivethe frequency counts of each <strong>Word</strong>Net synset (Landeset al., 1998). Section 5 discusses this process inmore detail.4 Proposed MethodsOur basic alignment method, along with various extensions,is outlined below.4.1 Basic alignment method using cosinesimilarityIn this paper, we align a semantic database ofJapanese (Lexeed) with a semantic network of English(<strong>Word</strong>Net) at the sense level. First, we useLexeed to find all possible senses of a given word,and retrieve the definition sentences <strong>for</strong> each.Since all the definition sentences are in Japanese,we use EDICT as a pivot to convert Lexeed definitionsentences into English. In this process, allpossible translations of all Japanese words foundin the definition sentences are returned, along withtheir POS classes. For every translation returned,we find entries in <strong>Word</strong>Net that match the translationand POS category. If there is no match <strong>for</strong> thegiven POS, we relax this constraint and search <strong>for</strong>entries in <strong>Word</strong>Net that match the translation but notthe POS.Problems arise when <strong>Word</strong>Net does not have amatching entry <strong>for</strong> the translation. This situationdoesn’t distinguish between hyponyms and troponyms, however,we treat the two identically.usually happens when the translation returned byEDICT is comprised of more than one English word.For a Japanese verb, e.g., the English translation inEDICT almost always begins with the auxiliary to(e.g. nomu is translated as to drink). <strong>Word</strong>Net doesnot contain a verbal entry <strong>for</strong> to drink, but does containan entry <strong>for</strong> drink. To handle this case of partialmatch, we locate the longest right word substring ofthe EDICT translation which is indexed in <strong>Word</strong>Net.A related problem is when the translation containsdomain or collocational in<strong>for</strong>mation in parentheses.For example, ryuu is translated as both dragon andpromoted rook (shogi). The first translation has amatching entry in <strong>Word</strong>Net but the second translationdoes not. In this second case, there is no rightword substring which matches in <strong>Word</strong>Net, as weend up with rook (shogi) and then (shogi), neitherof which is contained in <strong>Word</strong>Net. In order to dealwith this situation, we first normalise the translationstrings by removing all the brackets and query<strong>Word</strong>Net with the normalised string. Should therebe a matching entry, we stop here. If not, we thenremove all strings between brackets, and apply thelongest right word substring heuristic as above. Anillustration of this process is given in Figure 3.In the worst case of <strong>Word</strong>Net not having a matchingentry <strong>for</strong> any right word substring, we discardthe translation.At this point, we have aligned a given Japaneseword with (hopefully) one or more English words,but are still no closer to inducing sense alignmentpairs. In order to produce the sense alignments, wegenerate all pairings of Lexeed senses with <strong>Word</strong>Netsynsets <strong>for</strong> each <strong>Word</strong>Net-matched word translation.For each such pair, we compile out the Lexeed definitionsentence(s) word-translated into English, andthe <strong>Word</strong>Net glosses, and convert each into a simplevector of term frequencies. We then measure thesimilarity of each vector pair using cosine similarity.An overview of this alignment process is presentedin Figure 4.4.2 Weighting terms using TF-IDF mechanismThe basic alignment method does not use any <strong>for</strong>mof term weighting, and thus overemphasises commonfunction words such as the, which and and,and downplays the impact of rare words. As we expectto have a large amount of noise in the word-128

Figure 4: Overview of the Lexeed–<strong>Word</strong>Net sense alignment methodtranslated Lexeed definition sentences, includingspurious translations <strong>for</strong> Japanese function wordssuch as ka, ga and no that have no literal translationin English, we predict that an appropriate <strong>for</strong>mof term weighting should improve the per<strong>for</strong>manceof our method.As a first attempt at term weighting, we experimentedwith the classic SMART <strong>for</strong>mulation of TF-IDF (Salton, 1971), treating the vector associatedwith each definition sentence as a single document.4.3 <strong>Word</strong> stoppingAs mentioned in the previous section, commonlyoccurringsemantically-bleached words are a sourceof noise in the naive cosine similarity scoringmethod. One conventional way of countering theirimpact is to filter them out of the vectors, based on astop word list. For our experiments, we use the stopword list provided by the Snowball project. 64.4 POS filteringAnother source of possible noise is the translationsof Japanese function words. As all the Lexeed definitionsentences are POS tagged, it is a relativelysimple process to filter out all Japanese functionwords, focusing on prefixes, suffixes and particles.4.5 Lemmatisation, stemming andnormalisationIn its basic <strong>for</strong>m, our vector space model treats distinctword as a unique term, including ignoring the6 http://snowball.tartarus.org/obvious similarity between inflectional variants ofthe same word, such as dragon and dragons. Toremove such inflectional variation, we experimentwith lemmatising all words found in both the Lexeedand <strong>Word</strong>Net vectors, using morph (Minnen etal., 2001). For similar reasons, we also experimentwith the Porter stemmer, noting that stemming willfurther reduce the set of terms but potential introducespurious matches.As part of this process (with both lemmatisationand stemming), we remove all punctuation from thedefinition sentences.4.6 Lexical relationsBoth the Lexeed and <strong>Word</strong>Net sense inventories aredescribed in the <strong>for</strong>m of hierarchies, making it possibleto complement the sense definitions with thosefrom neighbouring senses. The intuition behind thisis that the sense granularity in the two sense inventoriescan vary greatly, such that a single sensein Lexeed is split across multiple <strong>Word</strong>Net synsets,which we can readily uncover by considering eachsense as not a single point in <strong>Word</strong>Net but a semanticneighbourhood. For example, the second senseof the word kinou in Figure 5, which literally means“near past”, should be aligned with the second senseof yesterday, which is defined as “the recent past”.This alignment is more self-evident, however, whenwe observe that the hypernym of each of the twosenses is defined as “past”.In our current experiments, we only look at theutility of hypernymy. For a given sense Lexeed–129