GIM2D: An IRAF package for the Quantitative ... - ADASS.Org

GIM2D: An IRAF package for the Quantitative ... - ADASS.Org

GIM2D: An IRAF package for the Quantitative ... - ADASS.Org

Create successful ePaper yourself

Turn your PDF publications into a flip-book with our unique Google optimized e-Paper software.

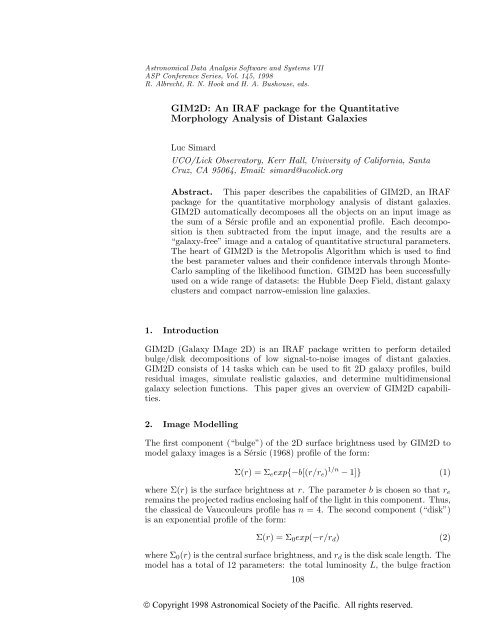

Astronomical Data <strong>An</strong>alysis Software and Systems VII<br />

ASP Conference Series, Vol. 145, 1998<br />

R. Albrecht, R. N. Hook and H. A. Bushouse, eds.<br />

<strong>GIM2D</strong>: <strong>An</strong> <strong>IRAF</strong> <strong>package</strong> <strong>for</strong> <strong>the</strong> <strong>Quantitative</strong><br />

Morphology <strong>An</strong>alysis of Distant Galaxies<br />

Luc Simard<br />

UCO/Lick Observatory, Kerr Hall, University of Cali<strong>for</strong>nia, Santa<br />

Cruz, CA 95064, Email: simard@ucolick.org<br />

Abstract. This paper describes <strong>the</strong> capabilities of <strong>GIM2D</strong>, an <strong>IRAF</strong><br />

<strong>package</strong> <strong>for</strong> <strong>the</strong> quantitative morphology analysis of distant galaxies.<br />

<strong>GIM2D</strong> automatically decomposes all <strong>the</strong> objects on an input image as<br />

<strong>the</strong> sum of a Sérsic profile and an exponential profile. Each decomposition<br />

is <strong>the</strong>n subtracted from <strong>the</strong> input image, and <strong>the</strong> results are a<br />

“galaxy-free” image and a catalog of quantitative structural parameters.<br />

The heart of <strong>GIM2D</strong> is <strong>the</strong> Metropolis Algorithm which is used to find<br />

<strong>the</strong> best parameter values and <strong>the</strong>ir confidence intervals through Monte-<br />

Carlo sampling of <strong>the</strong> likelihood function. <strong>GIM2D</strong> has been successfully<br />

used on a wide range of datasets: <strong>the</strong> Hubble Deep Field, distant galaxy<br />

clusters and compact narrow-emission line galaxies.<br />

1. Introduction<br />

<strong>GIM2D</strong> (Galaxy IMage 2D) is an <strong>IRAF</strong> <strong>package</strong> written to per<strong>for</strong>m detailed<br />

bulge/disk decompositions of low signal-to-noise images of distant galaxies.<br />

<strong>GIM2D</strong> consists of 14 tasks which can be used to fit 2D galaxy profiles, build<br />

residual images, simulate realistic galaxies, and determine multidimensional<br />

galaxy selection functions. This paper gives an overview of <strong>GIM2D</strong> capabilities.<br />

2. Image Modelling<br />

The first component (“bulge”) of <strong>the</strong> 2D surface brightness used by <strong>GIM2D</strong> to<br />

model galaxy images is a Sérsic (1968) profile of <strong>the</strong> <strong>for</strong>m:<br />

Σ(r) =Σeexp{−b[(r/re) 1/n − 1]} (1)<br />

where Σ(r) is <strong>the</strong> surface brightness at r. The parameter b is chosen so that re<br />

remains <strong>the</strong> projected radius enclosing half of <strong>the</strong> light in this component. Thus,<br />

<strong>the</strong> classical de Vaucouleurs profile has n = 4. The second component (“disk”)<br />

is an exponential profile of <strong>the</strong> <strong>for</strong>m:<br />

Σ(r) =Σ0exp(−r/rd) (2)<br />

where Σ0(r) is <strong>the</strong> central surface brightness, and rd is <strong>the</strong> disk scale length. The<br />

model has a total of 12 parameters: <strong>the</strong> total luminosity L, <strong>the</strong> bulge fraction<br />

108<br />

© Copyright 1998 Astronomical Society of <strong>the</strong> Pacific. All rights reserved.

<strong>GIM2D</strong>: <strong>Quantitative</strong> Morphology <strong>An</strong>alysis of Distant Galaxies 109<br />

B/T, <strong>the</strong> bulge effective radius re, <strong>the</strong> bulge ellipticity e, <strong>the</strong> bulge position<br />

angle φe, <strong>the</strong>Sérsic index n, <strong>the</strong> disk scale length rd, <strong>the</strong> disk inclination i, <strong>the</strong><br />

disk position angle φd, <strong>the</strong> centroiding offsets dx and dy, and <strong>the</strong> residual sky<br />

background level db.<br />

Detector undersampling (as in <strong>the</strong> HST/WFPC2 camera) is taken into account<br />

by generating <strong>the</strong> surface brightness model on an oversampled grid, convolving<br />

it with <strong>the</strong> appropriate Point-Spread-Function (PSF) and rebinning <strong>the</strong><br />

result to <strong>the</strong> detector resolution.<br />

3. Parameter Search with <strong>the</strong> Metropolis Algorithm<br />

The 12-dimensional parameter space can have a very complicated topology with<br />

local minima at low S/N ratios. It is <strong>the</strong>re<strong>for</strong>e important to choose an algorithm<br />

which does not easily get fooled by those local minima. The Metropolis Algorithm<br />

(Metropolis et al. 1953) was designed to search <strong>for</strong> parameter values in a<br />

complicated topology. Compared to gradient search methods, <strong>the</strong> Metropolis is<br />

not efficient i.e., it is CPU intensive. On <strong>the</strong> o<strong>the</strong>r hand, gradient searches are<br />

greedy. They will start from initial parameter values, dive in <strong>the</strong> first minimum<br />

<strong>the</strong>y encounter and claim it is <strong>the</strong> global one. The Metropolis does its best to<br />

avoid this trap.<br />

The Metropolis in <strong>GIM2D</strong> starts from an initial set of parameters given<br />

by <strong>the</strong> image moments of <strong>the</strong> object and computes <strong>the</strong> likelihood P(w|D, M)<br />

that <strong>the</strong> parameter set w is <strong>the</strong> true one given <strong>the</strong> data D and <strong>the</strong> model M.<br />

It <strong>the</strong>n generates random perturbations ∆x about that initial location with a<br />

given “temperature”. When <strong>the</strong> search is “hot”, large perturbations are tried.<br />

After each trial perturbation, <strong>the</strong> Metropolis computes <strong>the</strong> likelihood value P1 at<br />

<strong>the</strong> new location, and immediately accepts <strong>the</strong> trial perturbation if P1 is greater<br />

than <strong>the</strong> old value P0. However, if P1

110 Simard<br />

4. Image Reduction Pipeline<br />

<strong>GIM2D</strong> has a simple image reduction pipeline <strong>for</strong> HST (or ground-based) images<br />

which makes <strong>GIM2D</strong> particularly suitable <strong>for</strong> <strong>the</strong> analysis of archival data:<br />

• Obtain archival data, combine image stacks and remove cosmic rays. Cosmic<br />

rays can be removed using tasks such as STSDAS/CRREJ.<br />

• Run SExtractor (Bertin & Arnouts 1996) on <strong>the</strong> science image to produce<br />

an object catalog and a “segmentation” image. Pixel values in this<br />

segmentation image indicate to which object a given pixel belongs. The<br />

segmentation image is used by <strong>GIM2D</strong> to deblend galaxy images.<br />

• For HST images, Data Quality Files obtained with a science image stack<br />

can be combined and apply to <strong>the</strong> SExtractor segmentation image. Bad<br />

pixels identified by <strong>the</strong> DQF images are excluded from <strong>the</strong> bulge/disk<br />

decompositions.<br />

• Create a Point-Spread-Function (PSF) <strong>for</strong> each object. Tiny Tim can be<br />

used <strong>for</strong> HST images. <strong>GIM2D</strong> accepts three types of PSFs: Gaussian,<br />

Tiny Tim and user-specified.<br />

• Run bulge/disk decompositions. These decompositions are run under an<br />

<strong>IRAF</strong> script which can be sent to multiple computers so that <strong>the</strong>y can<br />

simultaneously work on <strong>the</strong> same science image.<br />

• Create a residual image. The <strong>GIM2D</strong> task GRESIDUAL creates a galaxysubtracted<br />

version of <strong>the</strong> input image àlaDAOPHOT. GRESIDUAL sums<br />

up all <strong>the</strong> output galaxy model images and subtracts this sum from <strong>the</strong><br />

original image. The resulting residual image is extremely useful to visually<br />

inspect <strong>the</strong> residual images of hundreds of objects at a glance. See Figure 1.<br />

• Determine galaxy selection function. The observed distribution of a parameter<br />

(e.g., bulge fraction) derived from a large number of objects in<br />

an image is <strong>the</strong> intrinsic distribution multiplied by <strong>the</strong> galaxy selection<br />

function which gives <strong>the</strong> probability that an object with a given set of<br />

structural parameters will be detected by SExtractor. <strong>GIM2D</strong> determines<br />

<strong>the</strong> galaxy selection function by taking an empty background section of<br />

<strong>the</strong> science image, adding simulated galaxies to that image section and<br />

running SExtractor to determine whe<strong>the</strong>r it would have been detected or<br />

not. The resulting multidimensional selection function can <strong>the</strong>n be used<br />

to “flat-field” <strong>the</strong> observed structural parameter distributions.<br />

5. Scientific Results<br />

5.1. Hubble Deep Field<br />

Hubble Deep Field F814W images were analyzed with <strong>GIM2D</strong> (Marleau and<br />

Simard 1998). The number of bulge-dominated galaxies is much lower (≤ 10%)<br />

than indicated by qualitative morphological studies. Some of <strong>the</strong>se studies<br />

quoted bulge-dominated galaxy fraction as high as 30% (van den Bergh et al.

simard@shamash.ucolick.org Fri Sep 12 00:03:52 1997<br />

NOAO/<strong>IRAF</strong> simard@shamash.ucolick.org Fri Sep 12 00:01:44 1997<br />

NOAO/<strong>IRAF</strong><br />

<strong>GIM2D</strong>: <strong>Quantitative</strong> Morphology <strong>An</strong>alysis of Distant Galaxies 111<br />

600<br />

400<br />

200<br />

cl24w4t - GAL-CLUS-002635+17<br />

200 400 600 800<br />

z1=-14.23 z2=25.83 ztrans=linear Con=-0.70 Brt=0.67 cmap=Grayscale ncolors=201<br />

-14 -6 1 9 17 25<br />

600<br />

400<br />

200<br />

cl24w4t_res - GAL-CLUS-002635+17<br />

200 400 600 800<br />

z1=-14.23 z2=25.83 ztrans=linear Con=-0.70 Brt=0.67 cmap=Grayscale ncolors=201<br />

-14 -6 1 9 17 25<br />

Figure 1. Left: HST/WFPC2/WF4 4400 seconds image of a region<br />

in <strong>the</strong> z = 0.4 cluster CL0024+16. Right: <strong>GIM2D</strong> residual image. 157<br />

objects were fitted, and galaxies which were too close to <strong>the</strong> edge of<br />

<strong>the</strong> image were not analyzed.<br />

1996). It was also found that a number of HDF galaxies have surface brightness<br />

profiles with Sérsic index n ≤ 1. Some of <strong>the</strong>m exhibit double exponential<br />

profiles which can be easily mistaken <strong>for</strong> pure bulge systems. However, <strong>the</strong>se<br />

systems have spectra resembling those of late-type star-<strong>for</strong>ming galaxies. O<strong>the</strong>r<br />

HDF objects are best-fitted with a single n

Astronomical Data <strong>An</strong>alysis Software and Systems VII<br />

ASP Conference Series, Vol. 145, 1998<br />

R. Albrecht, R. N. Hook and H. A. Bushouse, eds.<br />

Grid OCL : A Graphical Object Connecting Language<br />

I. J. Taylor 1<br />

Department of Physics and Astronomy, University of Wales, College of<br />

Cardiff, PO BOX 913, Cardiff, Wales, UK, Email:<br />

Ian.Taylor@astro.cf.ac.uk<br />

B. F. Schutz 2<br />

Albert Einstein Institute, Max Planck Institute <strong>for</strong> Gravitational<br />

Physics, Schlaatzweg 1, Potsdam, Germany. Email:<br />

schutz@aei-potsdam.mpg.de<br />

Abstract. In this paper, we present an overview of <strong>the</strong> Grid OCL<br />

graphical object connecting language. Grid OCL is an extension of Grid,<br />

introduced last year, that allows users to interactively build complex data<br />

processing systems by selecting a set of desired tools and connecting <strong>the</strong>m<br />

toge<strong>the</strong>r graphically. Algorithms written in this way can now also be run<br />

outside <strong>the</strong> graphical environment.<br />

1. Introduction<br />

Signal-processing systems are becoming an essential tool within <strong>the</strong> scientific<br />

community. This is primarily due to <strong>the</strong> need <strong>for</strong> constructing large complex<br />

algorithms which would take many hours of work to code using conventional<br />

programming languages. Grid OCL (Object Connecting Language) is a graphical<br />

interactive multi-threaded environment allowing users to construct complex<br />

algorithms by creating an object-oriented block diagram of <strong>the</strong> analysis required.<br />

2. <strong>An</strong> Overview<br />

When Grid OCL is run three windows are displayed. A ToolBox window, a Main-<br />

Grid window and a Dustbin window (to discard unwanted units). Figure 1 shows<br />

<strong>the</strong> ToolBox window which is divided into two sections. The top section shows<br />

<strong>the</strong> available toolboxes (found by scanning <strong>the</strong> toolbox paths specified in <strong>the</strong><br />

Setup menu) and <strong>the</strong> bottom shows <strong>the</strong> selected toolbox’s contents. Toolboxes<br />

(and associated tools) can be stored on a local server or distributed throughout<br />

several network servers. Simply adding <strong>the</strong> local or http address in <strong>the</strong> toolbox<br />

and tool path setup allows on-<strong>the</strong>-fly access to o<strong>the</strong>r people’s tools.<br />

1 A post doctoral programmer at Cardiff who has been developing Grid OCL since January 1996.<br />

2 Professor Schutz is a Director of <strong>the</strong> Albert Einstein Institute, Potsdam<br />

112<br />

© Copyright 1998 Astronomical Society of <strong>the</strong> Pacific. All rights reserved.

Grid OCL : A Graphical Object Connecting Language 113<br />

Figure 1. Grid OCL’s ToolBox window. Toolboxes can be organised<br />

in a similar way to files in a standard file manager.<br />

Units are created by dragging <strong>the</strong>m from <strong>the</strong> ToolBox window to <strong>the</strong> desired<br />

position in <strong>the</strong> MainGrid window and <strong>the</strong>n connected toge<strong>the</strong>r by dragging from<br />

an output socket on a sending unit to an input socket of <strong>the</strong> receiving unit. The<br />

algorithm is run by clicking on <strong>the</strong> start button (see Figure 2), in a single step<br />

fashion (i.e., one step at a time) or continuously.<br />

3. New Features and Extensions<br />

Groups of units can now be saved along with <strong>the</strong>ir respective parameters. Such<br />

groups can also contain groups, which can contain o<strong>the</strong>r groups and so on. This<br />

is a very powerful feature which allows <strong>the</strong> programmer to hide <strong>the</strong> complexity<br />

of programs and use groups as if <strong>the</strong>y were simply units <strong>the</strong>mselves. Many<br />

improvements have been made to <strong>the</strong> graphical interface, including compacting<br />

<strong>the</strong> look and style of <strong>the</strong> toolbox, adding a snap-to cable layout and many more<br />

in<strong>for</strong>mative windows. The major change however, is that now Grid consists of<br />

an object connecting language (OCL) and a separate user interface. This means<br />

that <strong>the</strong> units can be run from within <strong>the</strong> user interface or as a stand-alone<br />

program.<br />

Collaborators are working on tools <strong>for</strong> various signal and image problems,<br />

multimedia teaching aids and even to construct a musical composition system.<br />

Currently, in our new release we have toolboxes <strong>for</strong> various signal processing<br />

procedures, animation and a number of image processing/manipulation routines,<br />

text processing tools e.g., find and replace, grep and line counting recursively<br />

through subdirectories, ma<strong>the</strong>matical and statistical units, a general purpose<br />

ma<strong>the</strong>matical calculator (see next section) and a number of flexible importing<br />

and exporting units.

114 Taylor and Schutz<br />

4. MathCalc<br />

Figure 2. A snapshot of Grid OCL’s programming window.<br />

The MathCalc unit interprets, optimises and evaluates arithmetic expressions<br />

using stream-oriented arithmetic. It recognises a large number of functions and<br />

constants. It can be used to evaluate scalar expressions, to process input data<br />

sets, or to generate output data sets. All calculations are per<strong>for</strong>med in doubleprecision<br />

real arithmetic.<br />

Stream-oriented arithmetic can be defined as <strong>the</strong> application of an arithmetic<br />

expression to each element of a stream independently. Thus, if B is<br />

<strong>the</strong> sequence b1,b2, .., bn, <strong>the</strong>n <strong>the</strong> function sin(B) evaluates to <strong>the</strong> sequence<br />

sin(b1),sin(b2), ..., sin(bn). MathCalc distinguishes between constants and sequences<br />

or sets. Sets (data sets) are sequences of numbers and constants are<br />

single numbers, essentially sequences of length 1. In a MathCalc expression <strong>the</strong><br />

two can be mixed very freely, with <strong>the</strong> restriction that all sequences must have<br />

<strong>the</strong> same length. Sequences or constants can be obtained from <strong>the</strong> input nodes of<br />

<strong>the</strong> MathCalc unit. The example given in <strong>the</strong> MainGrid window (see Figure 2)<br />

demonstrates <strong>the</strong> flexibility of <strong>the</strong> MathCalc unit.<br />

The first MathCalc unit creates a 125 Hz sine wave by using <strong>the</strong> equation<br />

sin(((sequence(512) ∗ 2) ∗ PI) ∗0.125) where <strong>the</strong> sample rate is 1kHz (Math-<br />

Calc will optimise this to (2*PI*0.125) * sequence(512)). This is <strong>the</strong>n trans<strong>for</strong>med<br />

into a SampleSet type by adding its sampling frequency (i.e., 1 kHz).<br />

The second MathCalc unit adds Gaussian noise to its input (i.e., by typing<br />

gaussian(512)+#0s). The #0 means node 0 and <strong>the</strong> s meansthatitisasequence<br />

as opposed to a c which would a constant. The resultant amplitude<br />

spectrum (FFTASpect) is shown from Grapher1 (see Figure 3).<br />

Once <strong>the</strong> signal is displayed, it can be investigated fur<strong>the</strong>r by using one of<br />

<strong>the</strong> Grapher’s various zooming facilities. Zooming can be controlled via a zoom<br />

window which allows specific ranges to be set or by simply using <strong>the</strong> mouse to<br />

drag throughout <strong>the</strong> image. For example, by holding <strong>the</strong> control key down and<br />

dragging down <strong>the</strong> image is zoomed in vertically and by dragging across from<br />

left to right zoomed in horizontally. The reverse operations allow zooming out.<br />

Also once zoomed in, by holding <strong>the</strong> shift key and <strong>the</strong> control key down <strong>the</strong><br />

mouse can be used to move around <strong>the</strong> particular area you are interested in. We<br />

also have ano<strong>the</strong>r powerful zooming function which literally allows <strong>the</strong> user to<br />

drag to <strong>the</strong> position of interest and <strong>the</strong> image will zoom in accordingly.

Grid OCL : A Graphical Object Connecting Language 115<br />

Figure 3. Grapher1’s output: any grapher can simultaneously display<br />

many signals each with its own colour and line style.<br />

5. Grid OCL : Past and Present<br />

Grid originated from an implementation of <strong>the</strong> system using C++ and Inter-<br />

Views (Taylor & Schutz 1995) but was abandoned in early 1996. Version two<br />

(Taylor & Schutz 1996) was written using <strong>the</strong> Java Development Kit, JDK 1.0.2<br />

but this was updated in order to be compatible with <strong>the</strong> new JDK 1.1.x kit.<br />

We also re-implemented <strong>the</strong> base classes and to create OCL. The most recent<br />

version of Grid OCL (in November 1997) is a late alpha and goes by a different<br />

name (Triana OCL 4 ). Triana OCL will be entering its beta testing stage early<br />

next year followed by a final version shortly after. We are in <strong>the</strong> process of being<br />

able to provide a commercial version of <strong>the</strong> software <strong>for</strong> which support can be<br />

given. None-<strong>the</strong>-less we will always provide it in a free downloadable from <strong>the</strong><br />

WWW with a certain time limit (3 or 4 months). Our main goal is to create a<br />

very wide user base.<br />

References<br />

Taylor, I. J. & Schutz, B. F. 1995, The Grid Musical-Signal Processing System,<br />

International Computer Music Conference, 371<br />

Taylor, I. J. & Schutz, B. F. 1996, The Grid Signal Processing System. in ASP<br />

Conf. Ser., Vol. 125, Astronomical Data <strong>An</strong>alysis Software and Systems<br />

VI,ed.GarethHunt&H.E.Payne(SanFrancisco:ASP),18<br />

4 http://www.astro.cf.ac.uk/Triana/

Astronomical Data <strong>An</strong>alysis Software and Systems VII<br />

ASP Conference Series, Vol. 145, 1998<br />

R. Albrecht, R. N. Hook and H. A. Bushouse, eds.<br />

GUI-fying and Documenting your Shell Script<br />

Peter. J. Teuben 1<br />

Astronomy Department, University of Maryland, College Park, MD<br />

20742, Email: teuben@astro.umd.edu<br />

Abstract.<br />

We describe a simple method to annotate shell scripts and have a<br />

preprocessor extract a set of variables, present <strong>the</strong>m to <strong>the</strong> user in a<br />

GUI (using Tcl/Tk) with context sensitive help, and run <strong>the</strong> script. It<br />

<strong>the</strong>n becomes also very easy to rerun <strong>the</strong> script with different values of<br />

<strong>the</strong> parameters and accumulate output of different runs in a set of user<br />

defined areas on <strong>the</strong> screen, <strong>the</strong>reby generating a very powerful survey<br />

and analysis tool.<br />

1. Introduction<br />

Scripting languages have often been considered <strong>the</strong> glue between individual applications,<br />

and are meant to achieve a higher level of programming.<br />

When individual applications are (tightly) integrated into <strong>the</strong> scripting language,<br />

this offers very powerful scripts, fully graphical user interfaces and a<br />

result sometimes indistinguishable from applications. A recent example of this<br />

is <strong>the</strong> glish shell in AIPS++ (Shannon 1996). But of course <strong>the</strong> drawback of this<br />

tight integration is that applications are not always easily accessible to scripts<br />

that do not (or cannot) make use of <strong>the</strong> environment <strong>the</strong> scripting language was<br />

meant <strong>for</strong>.<br />

Apart from <strong>the</strong> black art of handcoding, one of <strong>the</strong> traditional methods<br />

to add a GUI to an application is using automatic GUI builders. This has<br />

<strong>the</strong> advantage that application code and user interaction code are more cleanly<br />

separated, but this sometimes also limits <strong>the</strong> flexibility with which <strong>the</strong> code can<br />

be written.<br />

This paper presents a simple implementation where <strong>the</strong> style of <strong>the</strong> underlying<br />

application is batch oriented, and in fact can be written in any language.<br />

The user interface must be cleanly defined in a set of parameters with optional<br />

values (e.g., named “keyword=value” pairs). Once <strong>the</strong> input values have been<br />

set, <strong>the</strong> application can be launched, results can be captured and presented in<br />

any way <strong>the</strong> script or application decides.<br />

2. Tcl/Tk: TkRun<br />

The GUI that is created will provide a simple interface to a program that is<br />

spawned by <strong>the</strong> GUI. This program must have a well defined Command Line<br />

116<br />

© Copyright 1998 Astronomical Society of <strong>the</strong> Pacific. All rights reserved.

GUI-fying and Documenting your Shell Script 117<br />

Interface (CLI), in <strong>the</strong> current implementation a “keyword=value” interface.<br />

Equally well, a Unix-style “-option value” could have been used (cf. Appleton’s<br />

parseargs <strong>package</strong>). The GUI builder, a small 600 line C program called tkrun,<br />

scans <strong>the</strong> script <strong>for</strong> special tags (easily added as comments, which automatically<br />

make <strong>the</strong> script self-documenting), and creates a Tcl/Tk script from which <strong>the</strong><br />

shell script itself (or any application of choice with a specified CLI) can be<br />

launched (see Figure 2 <strong>for</strong> a schematic).<br />

The added power one gets with this interface builder is <strong>the</strong> simplified reexecution<br />

of <strong>the</strong> script, which gives <strong>the</strong> user a powerful tool to quickly examine<br />

a complex parameter space of a particular problem.<br />

The input script must define a set of parameters, each with a keyword, an<br />

optional initial value, a widget style, usage requirements and one line help. The<br />

keyword widget style can be selected from a small set of input styles that standard<br />

Tcl/Tk provides (such as generic text entry, file browsers, radio buttons,<br />

sliders etc.)<br />

The current implementation has been tested under Tcl/Tk 7.6 as well as<br />

8.0, but is expected to move along as Tcl/Tk is developed fur<strong>the</strong>r. For example<br />

a more modern widget layout technique (grid instead of pack) should be used.<br />

Also keywords cannot have dependencies on each o<strong>the</strong>r, <strong>for</strong> example it would be<br />

nice to “grey out” certain options under certain circumstances, or allow ranges<br />

of some keywords to depend on <strong>the</strong> settings of o<strong>the</strong>rs.<br />

3. Sample Script: testscript<br />

Here is an example header from a C-shell script with which Figure 1 was made.<br />

Note that <strong>the</strong> script must supply a proper “keyword=value” parsing interface,<br />

as was done with a simple <strong>for</strong>each construct here. The latest version of tkrun<br />

is available through <strong>the</strong> NEMO 1 <strong>package</strong>.<br />

#! /bin/csh -f<br />

# :: define basic GUI elements <strong>for</strong> tkrun to extract<br />

#> IFILE in=<br />

#> OFILE out=<br />

#> ENTRY eps=0.01<br />

#> RADIO mode=gauss gauss,newton,leibniz<br />

#> CHECK options=mean,sigma sum,mean,sigma,skewness,kurtosis<br />

#> SCALE n=1 0:10:0.01<br />

# :: some one liners<br />

#> HELP in Input filename<br />

#> HELP out Output filename (should not exist yet)<br />

#> HELP eps Initial (small) step<br />

#> HELP mode Integration Method<br />

#> HELP options Statistics of residuals to show<br />

#> HELP n Order of polynomial<br />

# :: parse named arguments<br />

<strong>for</strong>each a ($*)<br />

set $a<br />

end<br />

1 http://www.astro.umd.edu/nemo/

118 Teuben<br />

Figure 1. With <strong>the</strong> command “tkrun testscript” <strong>the</strong> upper panel<br />

is created, providing a simple interface to <strong>the</strong> “key=val” command line<br />

interface of <strong>the</strong> script testscript (see below). The lower panel is a<br />

standard Tcl/Tk filebrowser that can be connected to keywords that<br />

are meant to be files. See Figure 2 <strong>for</strong> a schematic diagram explaining<br />

<strong>the</strong> interaction between <strong>the</strong> different programs and scripts.<br />

# :: actual start of code<br />

echo TESTSCRIPT in=$in out=$out eps=$eps mode=$mode options=$options n=$n<br />

# :: legacy script can be inserted here or keyword<br />

# :: values can be passed on to ano<strong>the</strong>r program<br />

Acknowledgments. I would like to thank Frank Valdes and Mark Pound<br />

<strong>for</strong> discussing some ideas surrounding this paper, and Jerry Hudson <strong>for</strong> his<br />

Graphics Command Manager FLO.<br />

References<br />

Appleton, Brad (parseargs, based on Eric Allman’s version)

tkrun testscript<br />

GUI-fying and Documenting your Shell Script 119<br />

testscript<br />

#! /bin/csh -f<br />

#> IFILE<br />

# tags<br />

in=<br />

#> ENTRY eps=<br />

...<br />

...<br />

# code:<br />

#! /bin/wish -f<br />

...<br />

lappend args ....<br />

exec testscript $args<br />

...<br />

testscript.tk<br />

testscript in=... out=... eps=... ....<br />

in:<br />

wish -f testscript.tk<br />

RUN<br />

Figure 2. Flow diagram: The command tkrun scans <strong>the</strong> C-shell<br />

script testscript (top left) <strong>for</strong> keywords and <strong>the</strong> Tcl/Tk script<br />

testscript.tk (bottom left) is automatically written and run. It<br />

presents <strong>the</strong> keywords to <strong>the</strong> user in a GUI (on <strong>the</strong> right, see Figure<br />

1 <strong>for</strong> a detailed view) ), of which <strong>the</strong> “Run” button will execute<br />

<strong>the</strong> C-shell code in <strong>the</strong> script testscript.<br />

Judson, Jerry (FLO: a Graphical Command Manager)<br />

Ousterhout, John, 1994, Tcl and <strong>the</strong> Tk Toolkit, Addison-Wesley<br />

Shannon, P., 1996, in ASP Conf. Ser., Vol. 101, Astronomical Data <strong>An</strong>alysis<br />

Software and Systems V, ed. George H. Jacoby & Jeannette Barnes (San<br />

Francisco: ASP), 319<br />

Teuben, P.J., 1995, in ASP Conf. Ser., Vol. 77, Astronomical Data <strong>An</strong>alysis<br />

Software and Systems IV, ed. R. A. Shaw, H. E. Payne & J. J. E. Hayes<br />

(San Francisco: ASP), 398<br />

eps:

Astronomical Data <strong>An</strong>alysis Software and Systems VII<br />

ASP Conference Series, Vol. 145, 1998<br />

R. Albrecht, R. N. Hook and H. A. Bushouse, eds.<br />

The Mosaic Data Capture Agent<br />

Doug Tody and Francisco G. Valdes<br />

<strong>IRAF</strong> Group, NOAO 1 , PO Box 26732, Tucson, AZ 85726<br />

Abstract. The Mosaic Data Capture Agent (DCA) plays a central role<br />

in connecting <strong>the</strong> raw data stream from <strong>the</strong> NOAO CCD Mosaic controller<br />

system to <strong>the</strong> rest of <strong>the</strong> data handling system (DHS). It is responsible <strong>for</strong><br />

assembling CCD data from multiple readouts into a multiextension FITS<br />

disk file, <strong>for</strong> supplying a distributed shared image to real-time elements<br />

of <strong>the</strong> DHS such as <strong>the</strong> Real-Time Display (RTD), and <strong>for</strong> feeding data<br />

to <strong>the</strong> <strong>IRAF</strong>-based data reduction agent (DRA).<br />

1. Overview<br />

A brief design of <strong>the</strong> data handling system (DHS) <strong>for</strong> <strong>the</strong> NOAO CCD Mosaic<br />

Camera 2 was presented earlier (Tody, 1997). It is based on a message bus<br />

architecture (Tody, 1998) that connects <strong>the</strong> various components of <strong>the</strong> DHS.<br />

One such component is <strong>the</strong> data capture agent (DCA). The DCA receives streams<br />

of readout data from <strong>the</strong> acquisition system and builds a data object which is<br />

shared by o<strong>the</strong>r components of <strong>the</strong> DHS. The image data is stored on disk as a<br />

multiextension FITS file (MEF) (see Valdes 1997b).<br />

The DCA is a general purpose network-based data service, using <strong>the</strong> message<br />

bus as <strong>the</strong> network interface. It receives request messages of various types<br />

and, through an event loop, dispatches each request to <strong>the</strong> appropriate event<br />

handler. The request message types currently implemented in <strong>the</strong> DCA are <strong>for</strong><br />

control, setting or querying server parameters, readout status, data <strong>for</strong>mat configuration,<br />

and writing header and pixel data. In addition to servicing client<br />

requests, <strong>the</strong> DCA can broadcast messages to in<strong>for</strong>m clients of <strong>the</strong> status of <strong>the</strong><br />

DCA during readout.<br />

The major subsystems of <strong>the</strong> DCA at present are <strong>the</strong> message bus interface,<br />

an event loop and event handlers <strong>for</strong> processing message requests, a distributed<br />

shared object implementation, a keyword database system, <strong>the</strong> TCL interpreter,<br />

and a TCL-based keyword translation module.<br />

1 National Optical Astronomy Observatories, operated by <strong>the</strong> Association of Universities <strong>for</strong><br />

Research in Astronomy, Inc. (AURA) under cooperative agreement with <strong>the</strong> National Science<br />

Foundation.<br />

2 http://www.noao.edu/kpno/mosaic/<br />

120<br />

© Copyright 1998 Astronomical Society of <strong>the</strong> Pacific. All rights reserved.

2. DCA User Interface<br />

The Mosaic Data Capture Agent 121<br />

The DCA may be started by issuing a command at <strong>the</strong> host level, or invoked as a<br />

service via <strong>the</strong> message bus. This can occur during login to an observing account,<br />

using a window manager menu, or by interactively issuing a user command. The<br />

command invocation can include DCA server parameters which can also be set<br />

or reset after invocation via messages.<br />

The DCA automatically connects to <strong>the</strong> message bus when it is started.<br />

The runtime operation of <strong>the</strong> DCA is monitored and controlled by clients via<br />

<strong>the</strong> message bus. In <strong>the</strong> Mosaic system <strong>the</strong>re are two clients; <strong>the</strong> data feed<br />

client (DFC) and <strong>the</strong> DCA console client (DCAGUI). In general any number<br />

of clients can access <strong>the</strong> DCA. Multiple data feed clients or GUIs can be active<br />

simultaneously.<br />

The DCA console client has a graphical user interface based on <strong>the</strong> <strong>IRAF</strong><br />

Widget Server (Tody, 1996). It can send control and parameter messages to <strong>the</strong><br />

DCA and act on messages broadcast by <strong>the</strong> DCA, e.g., to display <strong>the</strong> readout<br />

status. Currently <strong>the</strong> DCAGUI executes an autodisplay command <strong>for</strong> real time<br />

display during readout (see Valdes, 1998) and a post-processing command <strong>for</strong><br />

logging, archiving or o<strong>the</strong>r operations once <strong>the</strong> readout ends.<br />

3. The Readout Sequence<br />

<strong>An</strong> observation readout is driven by a sequence of DCA message requests. A<br />

readout sequence is initiated by <strong>the</strong> DFC with a message that includes a unique<br />

sequence number. Once a readout has started, each subsequent message associated<br />

with that readout must be tagged with <strong>the</strong> sequence number <strong>for</strong> that<br />

readout. Multiple readouts can be simultaneously in progress. Each readout<br />

sequence has a separate context identified by its sequence number.<br />

When a readout sequence is initiated <strong>the</strong> DCA creates a new image-class<br />

distributed shared object (DSO). The DFC passes in<strong>for</strong>mation about <strong>the</strong> size and<br />

structure of <strong>the</strong> object (such as <strong>the</strong> number of image extensions), allowing <strong>the</strong><br />

DCA to configure <strong>the</strong> DSO. At this point <strong>the</strong> DFC can begin sending pixel and<br />

header messages. At image creation time <strong>the</strong> DCA broadcasts a message so that<br />

clients like <strong>the</strong> DCAGUI can access it if desired. Currently <strong>the</strong> DCAGUI uses<br />

this to initiate an interim real-time display.<br />

When <strong>the</strong> readout is completed <strong>the</strong> DCA executes a keyword translation<br />

module (KTM), an externally supplied, interpreted TCL script which converts<br />

detector specific in<strong>for</strong>mation into standard header keywords. After <strong>the</strong> KTM<br />

finishes <strong>the</strong> DCA and DSO <strong>for</strong>mat <strong>the</strong> output keywords, write <strong>the</strong>m to <strong>the</strong><br />

image headers, and generation of <strong>the</strong> new image DSO is complete. The DCA<br />

broadcasts a message when <strong>the</strong> DSO is complete which can be used to trigger<br />

post-processing of <strong>the</strong> data.<br />

3.1. Pixel Messages<br />

The pixel data messages contain blocks of raw, unprocessed detector pixel data<br />

organized into one or more streams, one <strong>for</strong> each CCD amplifier. Each stream<br />

directs pixels to a region of an output image extension. This structure allows<br />

<strong>the</strong> data block to simultaneously contain data <strong>for</strong> several different regions and

122 Tody and Valdes<br />

<strong>the</strong> data can be arbitrarily interleaved, encoded, flipped, or aliased. Each block<br />

of data is processed asynchronously but <strong>the</strong> client can send a synchronization<br />

request periodically to check <strong>the</strong> output status.<br />

3.2. Keyword Messages<br />

The DCA receives header in<strong>for</strong>mation via <strong>the</strong> message bus. This in<strong>for</strong>mation<br />

consists of blocks of keywords organized into named, detector specific keyword<br />

groups. The keywords are stored in keyword databases (an internal random access<br />

data structure), one per keyword group. The set of keyword group names is<br />

arbitrary. Some examples <strong>for</strong> <strong>the</strong> NOAO Mosaic are ICS <strong>for</strong> instrument control<br />

system, TELESCOPE <strong>for</strong> telescope, and ACEBn <strong>for</strong> Arcon controller in<strong>for</strong>mation<br />

<strong>for</strong> controller n.<br />

The Arcon controller system <strong>for</strong> <strong>the</strong> NOAO Mosaic consists of a set of<br />

Arcon controllers each of which can readout one or more amplifiers. The current<br />

system has four controllers each reading out two CCDs using one amplifier per<br />

CCD. Thus <strong>the</strong>re can be controller in<strong>for</strong>mation <strong>for</strong> <strong>the</strong> whole system, <strong>for</strong> each<br />

controller, and <strong>for</strong> each amplifier readout.<br />

A keyword database library handles creation and maintenance of <strong>the</strong> keyword<br />

database. Note that keyword in<strong>for</strong>mation does not necessarily have to<br />

come only from <strong>the</strong> DFC, <strong>the</strong> current mode of operation <strong>for</strong> <strong>the</strong> NOAO Mosaic.<br />

O<strong>the</strong>r schemes are possible.<br />

4. Keyword Translation Module<br />

The keyword translation module (KTM) is a TCL script called by <strong>the</strong> DCA at<br />

<strong>the</strong> end of a readout, once all raw header in<strong>for</strong>mation has been received. The<br />

purpose of <strong>the</strong> KTM is to create <strong>the</strong> keywords <strong>for</strong> <strong>the</strong> global and extension<br />

headers. The KTM is passed a list of input keyword database descriptors and<br />

it returns a list of output keyword database descriptors, one <strong>for</strong> each header.<br />

The DCA TCL interpreter provides special TCL commands <strong>for</strong> manipulating<br />

(creating, accessing, searching, etc.) <strong>the</strong> keyword databases. When <strong>the</strong> KTM<br />

finishes <strong>the</strong> DCA, via <strong>the</strong> DSO, writes <strong>the</strong> keywords in <strong>the</strong> returned output<br />

keyword databases to <strong>the</strong> output MEF FITS file.<br />

The KTM per<strong>for</strong>ms a variety of trans<strong>for</strong>mations on <strong>the</strong> input keywords. A<br />

keyword can be copied verbatim if no change is desired. The keyword name or<br />

comment may be changed without changing <strong>the</strong> value. New keywords can be<br />

added with static in<strong>for</strong>mation and default values may be supplied <strong>for</strong> missing<br />

keywords. The KTM can compute new keywords and values from input keywords.<br />

Identical keywords in each extension may be merged into a single keyword<br />

in <strong>the</strong> global header. The KTM can detect incorrect or missing keywords and<br />

print warnings or errors.<br />

Two examples from <strong>the</strong> keyword translation module <strong>for</strong> <strong>the</strong> NOAO CCD<br />

Mosaic follow.<br />

1. The data acquisition system provides <strong>the</strong> keywords DATE-OBS and UT-<br />

SHUT giving <strong>the</strong> UT observation date in <strong>the</strong> old FITS date <strong>for</strong>mat<br />

(dd/mm/yy) and <strong>the</strong> UT of <strong>the</strong> shutter opening. The KTM converts <strong>the</strong>se

The Mosaic Data Capture Agent 123<br />

to TIME-OBS, MJD-OBS, OBSID, and <strong>the</strong> new Y2K-compliant FITS date<br />

<strong>for</strong>mat.<br />

2. The KTM determines on which telescope <strong>the</strong> Mosaic Camera is being<br />

used and writes <strong>the</strong> WCS scale, orientation, and distortion in<strong>for</strong>mation<br />

previously derived from astrometry calibrations <strong>for</strong> those telescopes. The<br />

coordinate reference point keywords, CRVAL1 and CRVAL2, are computed<br />

from <strong>the</strong> telescope right ascension and declination keywords.<br />

5. Distributed Shared Objects and Distributed Shared Images<br />

<strong>An</strong> important class of message bus component is <strong>the</strong> distributed shared object<br />

(DSO). DSOs allow data objects to be concurrently accessed by multiple clients.<br />

The DSO provides methods <strong>for</strong> accessing and manipulating <strong>the</strong> data object and<br />

locking facilities to ensure data consistency. DSOs are distributed, meaning<br />

that clients can be on any host or processor connected to <strong>the</strong> message bus. In<br />

<strong>the</strong> case of <strong>the</strong> Mosaic DHS, <strong>the</strong> principal DSO is <strong>the</strong> distributed shared image<br />

which is used <strong>for</strong> data capture, to drive <strong>the</strong> real-time display, and <strong>for</strong> quick<br />

look interaction from within <strong>IRAF</strong>. The distributed shared image uses shared<br />

memory <strong>for</strong> efficient concurrent access to <strong>the</strong> pixel data, and messaging to in<strong>for</strong>m<br />

clients of changes to <strong>the</strong> image.<br />

The current version of <strong>the</strong> DCA does not implement <strong>the</strong> full distributed<br />

shared image object. Instead it implements a mapped image file. The image<br />

being created appears as a valid FITS multiextension file immediately after <strong>the</strong><br />

configuration in<strong>for</strong>mation is received from <strong>the</strong> DFC. This allows applications<br />

to examine <strong>the</strong> file while <strong>the</strong> readout is in progress. <strong>An</strong> example of this is <strong>the</strong><br />

interim display routine that loads a display server frame buffer as <strong>the</strong> pixel data<br />

is recorded, giving a simple real-time display capability.<br />

6. Current Status and Future Developments<br />

A version of <strong>the</strong> Data Capture Agent is in production use at two telescopes on<br />

Kitt Peak with <strong>the</strong> NOAO CCD Mosaic Camera. The flexibility of <strong>the</strong> message<br />

bus architecture is used to provide two modes of operation. The standard mode<br />

uses two machines connected by fast E<strong>the</strong>rnet. One machine supports <strong>the</strong> observing<br />

user interface and <strong>the</strong> interface to <strong>the</strong> controller system. A DFC running<br />

on this host writes to <strong>the</strong> message bus and <strong>the</strong> DCA runs on ano<strong>the</strong>r machine<br />

(with a different OS) where <strong>the</strong> exposures are written to disk and <strong>the</strong> quick look<br />

interaction and any post-processing are done. The second mode is a fallback<br />

in case <strong>the</strong> second computer fails. The DCA can simply be run on <strong>the</strong> same<br />

machine as <strong>the</strong> UI and controller interface.<br />

Future developments will complete <strong>the</strong> distributed shared object and console<br />

client and add o<strong>the</strong>r clients such as <strong>the</strong> real-time display (Tody, 1997) and<br />

data reduction agent (Valdes 1997a).<br />

The design of <strong>the</strong> DCA (and <strong>the</strong> whole DHS based on <strong>the</strong> message bus<br />

architecture) is open, flexible, and efficient. It can be used with many data<br />

acquisition systems at a variety of observatories. All that is needed is to write a<br />

data feed client to connect to <strong>the</strong> message bus and a keyword translation module

124 Tody and Valdes<br />

appropriate <strong>for</strong> <strong>the</strong> data. Currently <strong>the</strong> lower level messaging system is based<br />

on <strong>the</strong> Parallel Virtual Machine (PVM) library but this can be replaced in <strong>the</strong><br />

future with o<strong>the</strong>r messaging systems such as CORBA.<br />

References<br />

Tody, D. 1996, in ASP Conf. Ser., Vol. 101, Astronomical Data <strong>An</strong>alysis Software<br />

and Systems V, ed. George H. Jacoby & Jeannette Barnes (San Francisco:<br />

ASP), 89<br />

Tody, D. 1997, in ASP Conf. Ser., Vol. 125, Astronomical Data <strong>An</strong>alysis Software<br />

and Systems VI, ed. Gareth Hunt & H. E. Payne (San Francisco: ASP),<br />

451<br />

Tody, D. 1998, this volume<br />

Valdes, F. 1997a, in ASP Conf. Ser., Vol. 125, Astronomical Data <strong>An</strong>alysis<br />

Software and Systems VI, ed. Gareth Hunt & H. E. Payne (San Francisco:<br />

ASP), 455<br />

Valdes, F. 1997b, in ASP Conf. Ser., Vol. 125, Astronomical Data <strong>An</strong>alysis<br />

Software and Systems VI, ed. Gareth Hunt & H. E. Payne (San Francisco:<br />

ASP), 459<br />

Valdes, F. 1998, this volume

Astronomical Data <strong>An</strong>alysis Software and Systems VII<br />

ASP Conference Series, Vol. 145, 1998<br />

R. Albrecht, R. N. Hook and H. A. Bushouse, eds.<br />

Packaging Radio/Sub-millimeter Spectral Data in FITS<br />

Zhong Wang<br />

Smithsonian Astrophysical Observatory, Cambridge, MA 02138 U.S.A.<br />

Email: zwang@cfa.harvard.edu<br />

Abstract. We report on an experiment to incorporate <strong>the</strong> multiple<br />

extension FITS file <strong>for</strong>mat in building data processing software <strong>for</strong> spectroscopic<br />

observations in radio and millimeter-submillimeter wavelengths.<br />

By packaging spectral header in<strong>for</strong>mation into table extensions and <strong>the</strong><br />

actual spectral data into ei<strong>the</strong>r table or image extensions, this approach<br />

provides a convenient and effective way to organize large amount of spectroscopic<br />

data, especially <strong>for</strong> pipeline-like processing tasks. Tests conducted<br />

based on a software <strong>package</strong> developed <strong>for</strong> <strong>the</strong> SWAS mission have<br />

demonstrated this to be a viable alternative to <strong>the</strong> conventional ways of<br />

organizing spectral data at <strong>the</strong>se wavelengths.<br />

1. Introduction: Characteristics of Submillimeter Spectral Data<br />

Historically, spectral data were taken roughly one spectrum at a time, and most<br />

existing data <strong>for</strong>mats reflect such a methodology. The traditional ways in which<br />

FITS can be used <strong>for</strong> spectral data are less than adequate in meeting <strong>the</strong> needs<br />

of a new generation of instruments that produce large amount of relatively homogeneous<br />

data, which never<strong>the</strong>less need to be reduced individually. A set of<br />

more efficient, better streamlined reduction procedures are necessary, which in<br />

turn require more careful considerations in data packaging. This is particularly<br />

important <strong>for</strong> projects that adopt non-interactive, pipeline-style data processing<br />

as <strong>the</strong>ir primary mode of operation.<br />

The SWAS 1 (Submillimeter Wave Astronomy Satellite) mission is essentially<br />

a small orbiting radio telescope working at relatively high (about 500<br />

GHz) frequencies (Wang 1995). It is designed to make simultaneous spectroscopic<br />

measurements at several sub-mm line wavelengths that are inaccessible<br />

from <strong>the</strong> ground. The spectral data have some special characteristics:<br />

• Integration time <strong>for</strong> each individual “scan” is set to be short (on <strong>the</strong> order<br />

of a few seconds). High signal-to-noise ratios are achieved by co-adding a<br />

large number of such scans at <strong>the</strong> data processing stage;<br />

1 Details of <strong>the</strong> SWAS mission can be found on <strong>the</strong> internet in SAO’s SWAS homepage at<br />

http://cfa-www.harvard.edu/cfa/oir/Research/swas.html<br />

125<br />

© Copyright 1998 Astronomical Society of <strong>the</strong> Pacific. All rights reserved.

126 Wang<br />

• Because of <strong>the</strong> noise properties and frequency shifts with time (due to <strong>the</strong><br />

motion of <strong>the</strong> spacecraft as well as instrumental drifts), individual scans<br />

need to be calibrated separately be<strong>for</strong>e co-adding;<br />

• More than one spectral band is recorded at any given time, thus a complete<br />

dataset consists of spectra of several different wavelength ranges from independent<br />

detectors. Yet <strong>the</strong>y are of <strong>the</strong> same source and are taken under<br />

very similar observing conditions;<br />

• Observations are broken up into individual “segments” each lasting typically<br />

30–40 minutes. During each segment, many spectroscopic scans<br />

(aside from calibration data) are simple repeats under nearly identical<br />

conditions, and <strong>the</strong>re<strong>for</strong>e share values of many header parameters with<br />

only slight variations (drifts).<br />

The data reduction plan <strong>for</strong> <strong>the</strong> mission calls <strong>for</strong> a highly efficient pipeline<br />

processing scheme in which a large amount of spectroscopic data can be sorted,<br />

selected, calibrated and co-added based on some user-changeable criteria. All<br />

processing operations have to be recorded and traceable, and are preferably done<br />

with minimal human intervention.<br />

2. Software Approach: Packaging Data in FITS<br />

We have selected <strong>IRAF</strong> as our primary programming plat<strong>for</strong>m <strong>for</strong> <strong>the</strong> SWAS<br />

pipeline software, and use FITS as <strong>the</strong> main <strong>for</strong>mat <strong>for</strong> our data product. However,<br />

to store individual scan data in <strong>the</strong> conventional one- (single spectrum) or<br />

two-dimensional (“multispec” or echelle) <strong>for</strong>mat would be very inefficient and<br />

even confusing.<br />

Our approach is to make use of <strong>the</strong> BINTABLE and IMAGE extensions of<br />

FITS and store spectral header and pixel data in <strong>the</strong>se two types of extensions<br />

in a single FITS data file. The basic rules we adopted are:<br />

• Each FITS file corresponds to a specific time interval of data taking (an<br />

observing segment). It contains a single BINTABLE extension and (optionally)<br />

several IMAGE extensions. It has a conventional header section<br />

containing some none-time-variable, shared parameters of <strong>the</strong> spectra;<br />

• The table extension has one row per spectral scan taken, and stores all of<br />

<strong>the</strong> time-variable spectral header in<strong>for</strong>mation corresponding to that scan<br />

(i.e., time, coordinates, frequency, noise statistics, etc);<br />

• The pixel data of <strong>the</strong> spectrograph output (<strong>the</strong> spectrum itself) can be<br />

stored in one of two ways: <strong>the</strong>y can ei<strong>the</strong>r be individual “array elements”<br />

of a table row, or an image row similar to <strong>the</strong> two-dimensional arrays used<br />

to save Echelle and multi-fiber spectra in <strong>the</strong> optical.<br />

In case of using variable-length, vector (array) columns to store pixel data,<br />

a table row can have more than one array element, each representing data<br />

of a separate spectral band. The length of an array element is <strong>the</strong> number<br />

of pixels in a spectrum of that band.

Packaging Radio/Sub-millimeter Spectral Data in FITS 127<br />

In case of images, each image row corresponds to a row in <strong>the</strong> header<br />

parameter table. The number of columns of <strong>the</strong> image is <strong>the</strong> same as <strong>the</strong><br />

number of pixels in a spectrum. There can be multiple image extensions<br />

in each data file, with different extensions representing data of different<br />

spectral bands.<br />

The choice between using <strong>the</strong> table extension of FITS alone versus using<br />

table plus image extensions depends on <strong>the</strong> actual application. In principle, saving<br />

everything in a single table is structurally more appealing and efficient, but<br />

not many existing software tools can accept variable-length vector column FITS<br />

tables — which means more development work <strong>for</strong> basic tools. On <strong>the</strong> o<strong>the</strong>r<br />

hand, making use of 2-D images can facilitate data inspection procedures with<br />

various tools that examine FITS images. Its shortcoming is that in<strong>for</strong>mation<br />

about a single spectrum is saved in two or more extensions, requiring care in<br />

managing <strong>the</strong>m.<br />

3. Advantages and Efficiency Gains<br />

Given <strong>the</strong> lack of a widely-accepted standard in <strong>for</strong>matting a largely homogeneous<br />

spectroscopic dataset, we find <strong>the</strong> packaging using FITS extensions to be<br />

a viable alternative to <strong>the</strong> conventional ways in which spectral data are saved:<br />

• It provides a convenient way to organize, sort, edit, monitor and visualize<br />

<strong>the</strong> large spectral dataset (much of it is done with existing tools within<br />

<strong>IRAF</strong> or those directly working with FITS), while preserving all <strong>the</strong> essential<br />

in<strong>for</strong>mation of each individual spectrum;<br />

• The programming of calibration tasks to process a relatively large, nearlyhomogeneous<br />

dataset is very much simplified. In particular, <strong>the</strong> spectral<br />

header parameters can be processed ei<strong>the</strong>r independently or as table<br />

columns. It also means that, <strong>for</strong> example, a subset of <strong>the</strong> header parameters<br />

of a large number of spectra can be easily manipulated without even<br />

accessing <strong>the</strong> actual pixel data;<br />

• The efficiency of data processing is clearly enhanced. Since spectral pixel<br />

data are always processed as arrays, <strong>the</strong> fractional time taken by file I/Os<br />

and data buffering is substantially reduced when, <strong>for</strong> example, those data<br />

are accessed as part of an image.<br />

Many of <strong>the</strong> existing software tools that deal with FITS table and image<br />

data are readily available <strong>for</strong> use with <strong>the</strong>se new data files, sometimes with minor<br />

modifications. This can significantly save <strong>the</strong> programming ef<strong>for</strong>t and shorten<br />

<strong>the</strong> software development cycle.<br />

4. Comments and Future Work<br />

Despite <strong>the</strong> considerable merits of this approach, some problems remain to be<br />

addressed, and we are working on future enhancement of our software.

128 Wang<br />

One of <strong>the</strong> main problems is <strong>the</strong> interface with existing software tools (such<br />

as visualization tools from certain existing tools <strong>package</strong>s). In practice, we were<br />

able to partly circumvent this shortcoming by writing customized interfaces,<br />

but <strong>the</strong> solutions are not always satisfactory. It appears that if a tabular style<br />

file <strong>for</strong>mat is deemed sufficiently important, a more fundamental (kernel-level)<br />

interface <strong>for</strong> spectral data in FITS should be developed.<br />

The approach described in this paper is an explorative step in enhancing <strong>the</strong><br />

use of FITS, especially in taking advantage of <strong>the</strong> multiple extension development<br />

and <strong>the</strong> use of vector columns in FITS tables. With <strong>the</strong> rapid advancement<br />

in database and data warehousing technology, one would ideally like to have a<br />

more rigorous database-like framework to deal with <strong>the</strong> management of <strong>the</strong> observational<br />

data. This, however, needs to be balanced with <strong>the</strong> requirement<br />

of cost-effective and quick prototyping of many projects’ practical applications.<br />

The latter often means maximizing <strong>the</strong> use of existing software tools and adopting<br />

conventional approaches. The multiple extension FITS adopted by <strong>the</strong> community<br />

and being incorporated by NOAO and STScI’s <strong>IRAF</strong> teams (e.g., Zarate<br />

& Greenfield 1996, Zarate 1998) and o<strong>the</strong>r software groups is an important and<br />

innovative development, which preserves <strong>the</strong> usefulness of many old tools while<br />

allowing new methods to be tested <strong>for</strong> astronomical data applications. We believe<br />

that more ef<strong>for</strong>ts are needed in exploring ways to take full advantage of<br />

this very useful data <strong>for</strong>mat.<br />

References<br />

Wang, Z. 1995, in ASP Conf. Ser., Vol. 77, Astronomical Data <strong>An</strong>alysis Software<br />

and Systems IV, ed. R. A. Shaw, H. E. Payne & J. J. E. Hayes (San<br />

Francisco: ASP), 402<br />

Zarate, N. & Greenfield, P. 1996, in ASP Conf. Ser., Vol. 101, Astronomical<br />

Data <strong>An</strong>alysis Software and Systems V, ed. George H. Jacoby & Jeannette<br />

Barnes (San Francisco: ASP), 331<br />

Zarate, N. 1998, this volume<br />

Acknowledgments. We are grateful to Phil Hodge and Nelson Zarate <strong>for</strong><br />

<strong>the</strong>ir help in <strong>the</strong> software development discussed in this paper. Dr. Mat<strong>the</strong>w<br />

Ashby has participated in implementation and testing of <strong>the</strong> SWAS software.

Astronomical Data <strong>An</strong>alysis Software and Systems VII<br />

ASP Conference Series, Vol. 145, 1998<br />

R. Albrecht, R. N. Hook and H. A. Bushouse, eds.<br />

The IDL Wavelet Workbench<br />

M. Werger<br />

Astrophysics Division, Space Science Department of ESA, ESTEC, 2200<br />

AG Noordwijk, The Ne<strong>the</strong>rlands, EMail: mwerger@astro.estec.esa.nl<br />

A. Graps<br />

Stan<strong>for</strong>d University, Center <strong>for</strong> Space Science and Astrophysics, HEPL<br />

<strong>An</strong>nex A210, Stan<strong>for</strong>d, Cali<strong>for</strong>nia, 94305-4085 EMail:<br />

amara@quake.stan<strong>for</strong>d.edu<br />

Abstract. Progress in <strong>the</strong> development of <strong>the</strong> 1996 release of <strong>the</strong> IDL<br />

Wavelet Workbench (WWB) is shown. The WWB is now improved in<br />

several ways, among <strong>the</strong>m are: (1) a smarter GUI which easily directs <strong>the</strong><br />

user to <strong>the</strong> possibilities of <strong>the</strong> WWB, (2) <strong>the</strong> inclusion of more wavelets,<br />

(3) <strong>the</strong> enhancement of <strong>the</strong> input and output modules to provide a better<br />

interface to <strong>the</strong> input and output data and (4) <strong>the</strong> addition of more<br />

analysis methods based on <strong>the</strong> wavelet trans<strong>for</strong>m.<br />

1. Introduction<br />

One of <strong>the</strong> most advanced <strong>package</strong>s <strong>for</strong> wavelet analysis is probably Wavelab 1<br />

written <strong>for</strong> MATLAB. New insights have been gained in many o<strong>the</strong>r fields by<br />

applying wavelet data analysis, thus it was a reasonable task <strong>for</strong> us in astronomical<br />

research to translate most of <strong>the</strong> code from <strong>the</strong> Wavelab <strong>package</strong> into<br />

IDL (Interactive Data Language, by Research Systems, Inc.). IDL was chosen<br />

because of its wide-spread availability in <strong>the</strong> astronomical community and because<br />

of its development environment. The last official version of <strong>the</strong> so-called<br />

IDL Wavelet Workbench (WWB) was in <strong>the</strong> Spring of 1996. It has been made<br />

publicly available at <strong>the</strong> ftp site of Research Systems, Inc. 2 .<br />

2. The 1996 version of IDL<br />

The 1996 version of <strong>the</strong> WWB consists of 111 different modules with approximately<br />

10,000 lines of code in total. Approximately all modules have been<br />

written or translated from MATLAB code into IDL by AG. The 1996 version<br />

can be run ei<strong>the</strong>r from <strong>the</strong> IDL command line or from a graphical user interface<br />

(GUI).<br />

1 http://stat.stan<strong>for</strong>d.edu/ ∼ wavelab/<br />

2 ftp://ftp.rsinc.com/<br />

129<br />

© Copyright 1998 Astronomical Society of <strong>the</strong> Pacific. All rights reserved.

130 Werger and Graps<br />

The WWB is written in a highly modularized way to be easily maintained<br />

and improved. In <strong>the</strong> 1996 version, COMMON blocks are used to store important<br />

variables <strong>for</strong> <strong>the</strong> different routines. These COMMON blocks can be set<br />

also from <strong>the</strong> command line. There<strong>for</strong>e, it is possible to use <strong>the</strong> WWB as a<br />

stand-alone <strong>package</strong> and also as a library to supplement ones own IDL routines.<br />

The 1996 WWB provides simple input and output routines. Its analysis<br />

and plotting libraries are sophisticated and employ most of <strong>the</strong> typical methods<br />

used in wavelet analysis like <strong>the</strong> Discrete Wavelet Trans<strong>for</strong>m, Multiresolution<br />

<strong>An</strong>alysis, Wavelet Packet <strong>An</strong>alysis, Scalegram, andScalogram. In addition, <strong>the</strong><br />

1996 WWB offers typical routines <strong>for</strong> de-noising and compression of one- and<br />

two-dimensional data. The available set of wavelets is restricted up to four important<br />

families: <strong>the</strong> Haar-wavelet and <strong>the</strong> families of <strong>the</strong> Daubechies-wavelets,<br />

Coiflets, and Symmlets.<br />

3. Current Developments<br />

The 1996 release <strong>the</strong> IDL WWB has been widely used <strong>for</strong> different tasks such<br />

as pattern detection, time-series analysis and de-noising of data. A lot of useful<br />

routines have been added to <strong>the</strong> WWB since 1996, or <strong>the</strong>y are <strong>for</strong>eseen to be<br />

included.<br />

• The current version makes use of <strong>the</strong> most recent changes to IDL (version<br />

5.0.2); now WWB uses pointers to handle arbitrary data arrays. Also, <strong>the</strong><br />

WWB command line interface and <strong>the</strong> GUI may be used at <strong>the</strong> same time.<br />

• The GUI has been simplified; now it includes more possibilities, but with<br />

an easier interface and a less complicated dialog structure.<br />

• All necessary variables are now kept in two IDL data structures, those<br />

variables also may be set from <strong>the</strong> command line.<br />

• The data input portion of <strong>the</strong> WWB has been upgraded to handle FITSfiles;<br />

<strong>the</strong> output portion of WWB has been upgraded so that one can use<br />

<strong>the</strong> GUI to set PostScript output.<br />

• More analysis routines are now available. In additional to <strong>the</strong> <strong>for</strong>ward<br />

DWT, now <strong>the</strong> backward DWT (IWT ) has been included to show possible<br />

differences between <strong>the</strong> original and trans<strong>for</strong>med data. A continuous<br />

wavelet trans<strong>for</strong>m using <strong>the</strong> Gauss, Sombrero, and Morlet wavelets has<br />

been added also.<br />

• The capabilities <strong>for</strong> time-series analysis has been greatly enhanced by<br />

adding wavelets and routines which improve period detection. For example,<br />

a routine has been added <strong>for</strong> detecting periods in unevenly-sampled<br />

time-series, and eleven new wavelet filters are provided.<br />

• The computations can now allow datasets more than 32767 points long.<br />

• Plotting capabilities of <strong>the</strong> Scalogram have been improved.

The IDL Wavelet Workbench 131<br />

• For a better understanding of <strong>the</strong> wavelet trans<strong>for</strong>m, a GUI <strong>for</strong> manipulating<br />

specific wavelet coefficients has been included. This greatly improves<br />

<strong>the</strong> learning and analyzing process.<br />

4. Future Plans<br />

There are some future plans <strong>for</strong> integrating capabilities to analyze multidimensional<br />

data and adding additional routines. Suggestions and contributions from<br />

<strong>the</strong> user community are greatly welcome.<br />

Acknowledgments. The 1996 WWB has been partly funded by RSI, Inc.

Astronomical Data <strong>An</strong>alysis Software and Systems VII<br />

ASP Conference Series, Vol. 145, 1998<br />

R. Albrecht, R. N. Hook and H. A. Bushouse, eds.<br />

<strong>IRAF</strong> Multiple Extensions FITS (MEF) Files Interface<br />

Nelson Zarate<br />

National Optical Astronomy Observatories<br />

Tucson, AZ 85719 (zarate@noao.edu)<br />

Abstract. The Multiple Extension FITS (MEF) file interface is an<br />

<strong>IRAF</strong> library providing facilities <strong>for</strong> general file operations upon FITS<br />

multi-extension files. The MEF library has been used as <strong>the</strong> basis <strong>for</strong> a<br />

set of new <strong>IRAF</strong> tasks providing file level operations <strong>for</strong> multi-extension<br />

files. These operations include functions like listing extensions, extracting,<br />

inserting, or appending extensions, deleting extensions, and manipulating<br />

extension headers. MEF supports extensions of any type since it<br />

is a file level interface and does not attempt to interpret <strong>the</strong> contents of<br />

a particular extension type. O<strong>the</strong>r <strong>IRAF</strong> interfaces such as IMIO (<strong>the</strong><br />

FITS image kernel) and STSDAS TABLES are available <strong>for</strong> dealing with<br />

specific types of extensions such as <strong>the</strong> IMAGE extension or binary tables.<br />

1. Introduction<br />

The Multiple Extensions FITS (MEF) interface consists of a number of routines<br />

to mainly read a FITS Primary Data Unit or an Extension Unit and manipulate<br />

<strong>the</strong> data at a file level. It is up to <strong>the</strong> application to take care of any details<br />

regarding data structuring and manipulation. For example, <strong>the</strong> MEF interface<br />

will read a BINTABLE extension and give to <strong>the</strong> calling program a set of parameters<br />

like dimensionality, datatype, header buffer pointer and data portion<br />

offset from <strong>the</strong> beginning of <strong>the</strong> file.<br />

Currently <strong>the</strong> routines available to an SPP program are:<br />

• mef = mef_open (fitsfile, acmode, oldp)<br />

• mef_rdhdr (mef, group, extname, extver)<br />

• mef_rdhdr_exnv (mef, extname, extver)<br />

• mef_wrhdr (mefi, mefo, in_phdu)<br />

• [irdb]val = mefget[irdb] (mef, keyword)<br />

• mefgstr (mef, keyword, outstr, maxch)<br />

• mef_app_file (mefi, mefo)<br />

• mef_copy_extn (mefi, mefo, group)<br />

132<br />

© Copyright 1998 Astronomical Society of <strong>the</strong> Pacific. All rights reserved.

<strong>IRAF</strong> Multiple Extensions FITS (MEF) Files Interface 133<br />

• mef_dummyhdr (fd, hdrfname)<br />

[irdb]: int, real, double, boolean.<br />

2. Initializing Routine<br />

2.1. mef = mef open (fitsfile, acmode, oldp)<br />

Initializes <strong>the</strong> MEF interface. Should be <strong>the</strong> first routine to be called when<br />

per<strong>for</strong>ming operations on FITS files using this set of routines. Returns a pointer<br />

to <strong>the</strong> MEF structure.<br />

fitsfile Pathname to <strong>the</strong> FITS file to be open. The general syntax is:<br />

dir$root.extn[group]<br />

• dir: Directory name where <strong>the</strong> file resides<br />

• root: Rootname<br />

• extn: (optional) Extension name — can be any extension string<br />

• group: Extension number to be opened<br />

The ‘[group]’ string is optional and is not part of <strong>the</strong> disk filename. It is<br />

used to specified which extension number to open. The extension number<br />

is zero based — zero <strong>for</strong> <strong>the</strong> primary extension, 1 <strong>for</strong> <strong>the</strong> first extension,<br />

and so on.<br />

acmode The access mode of <strong>the</strong> file. The possible values are:<br />

READ ONLY, READ WRITE, APPEND, NEW FILE<br />

oldp Not used. Reserve <strong>for</strong> future use.<br />

3. Header Routines<br />

3.1. mef rdhdr (mef, group, extname, extver)<br />

Read <strong>the</strong> FITS header of a MEF file that matches <strong>the</strong> EXTNAME or EXTVER<br />

keyword values or if not specified, read <strong>the</strong> extension number ‘group’. If no<br />

extension is found an error is posted. After reading <strong>the</strong> header <strong>the</strong> file pointer<br />

is positioned at <strong>the</strong> end of <strong>the</strong> last data FITS block (2880 bytes).<br />

mef The MEF pointer returned by mef open. When <strong>the</strong> routine returns, all<br />

of <strong>the</strong> elements of <strong>the</strong> MEF structure will have values belonging to <strong>the</strong><br />

header just read.<br />

group The extension number to be read — zero <strong>for</strong> <strong>the</strong> Primary Data Unit, 1<br />

<strong>for</strong> <strong>the</strong> first extension, and so on. If you want to find out an extension by<br />

<strong>the</strong> value of extname and/or extver <strong>the</strong>n ‘group’ should be -1.<br />

extname The string that will match <strong>the</strong> EXTNAME value of any extension.<br />

The first match is <strong>the</strong> extension header returned.

134 Zarate<br />

extver The integer value that will match <strong>the</strong> EXTVER value of any extension.<br />

If ‘extname’ is not null <strong>the</strong>n both values need to match be<strong>for</strong>e <strong>the</strong> routine<br />

returns. If <strong>the</strong>re are no values to match <strong>the</strong>n ‘extver’ should be INDEFL.<br />

3.2. mef rdhdr gn (mef,group)<br />

Read extension number ‘group’. If <strong>the</strong> extension number does not exist, an error<br />

is posted.<br />

mef The MEF pointer returned by mef open. When <strong>the</strong> routine returns, all<br />

of <strong>the</strong> elements of <strong>the</strong> MEF structure will have values belonging to <strong>the</strong><br />

header just read.<br />

group The extension number to be read — zero <strong>for</strong> <strong>the</strong> Primary Data Unit, 1<br />

<strong>for</strong> <strong>the</strong> first extension, and so on.<br />

3.3. mef rdhdr exnv (mef,extname, extver)<br />

Read group based on <strong>the</strong> Extname and Extver values.<br />

encountered, an error is posted.<br />

If <strong>the</strong> group is not<br />

mef The MEF pointer returned by mef open. When <strong>the</strong> routine returns, all<br />

of <strong>the</strong> elements of <strong>the</strong> MEF structure will have values belonging to <strong>the</strong><br />

header just read.<br />

extname The string that will match <strong>the</strong> EXTNAME value of any extension.<br />

The first match is <strong>the</strong> extension header returned.<br />

extver The integer value that will match <strong>the</strong> EXTVER value of any extension.<br />

If ‘extname’ is not null <strong>the</strong>n both values need to match be<strong>for</strong>e <strong>the</strong> routine<br />