Subgradient slides

Subgradient slides

Subgradient slides

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

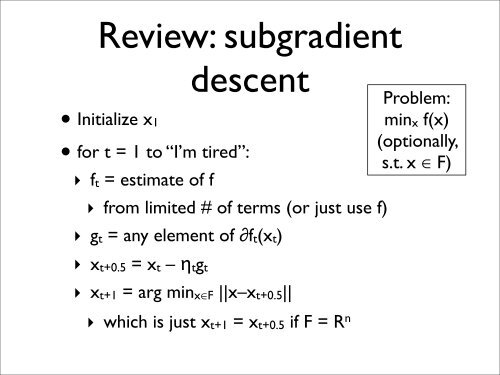

Review: subgradient<br />

descent<br />

• Initialize x1<br />

• for t = 1 to “I’m tired”:<br />

‣ ft = estimate of f<br />

Problem:<br />

minx f(x)<br />

(optionally,<br />

s.t. x ∈ F)<br />

‣ from limited # of terms (or just use f)<br />

‣ gt = any element of ∂ft(xt)<br />

‣ xt+0.5 = xt – ηtgt<br />

‣ xt+1 = arg minx∈F ||x–xt+0.5||<br />

‣ which is just xt+1 = xt+0.5 if F = R n

<strong>Subgradient</strong> in action<br />

!<br />

"<br />

#<br />

$<br />

!#<br />

!"<br />

!!<br />

!! !" !# $ # " !

Convergence summary<br />

• For strictly convex f(x) (i.e., λ>0):<br />

‣ set ƞt = 1/λt<br />

~<br />

‣ f(xt) – f(x*) = O(1/t)<br />

• For non-strictly convex f(x) (i.e., λ=0):<br />

‣ set ƞt = 1/√t<br />

‣ f(xt) – f(x*) = O(1/√t)<br />

• To get accuracy ϵ:<br />

~<br />

‣ λ>0: T = O(1/ϵ)<br />

‣ λ=0: T = O(1/ϵ 2 )<br />

Interior point: T =<br />

O(ln(1/ϵ)), but each<br />

iteration much slower

Convergence intuition<br />

• Q(x) = ||x – x * || 2 /2<br />

• Proof works by guaranteeing that Q(x) decreases<br />

‣ subtlety: only if f(xt) ≫ f(x * )<br />

‣ has to be like this: e.g., multiple minimizers of f<br />

• We showed (for λ=0):<br />

‣ f(x * ) ≥ f(xt) + Q(xt+1)/ƞt – Q(xt)/ƞt + ƞt||gt|| 2 /2<br />

• Suppose f(xt) ≥ f(x * ) + ϵ:

Typical SVM bound<br />

• Given n training examples, for any δ

Example: SVM<br />

• Suppose no b<br />

‣ L = ||w|| 2 /2 + (C/m) ∑ h(yixi T w))<br />

‣ ∂L = w + (C/m) ∑ yi xi ∂h(yixi T w))<br />

• If ||xi|| ≤ X:

What if we want b<br />

• Problem: λ=0<br />

‣<br />

• Solutions:<br />

‣ ignore the problem:<br />

‣ penalize b too:<br />

‣ change the algorithm slightly: