Dense Matrix Algorithms -- Chapter 8 Introduction



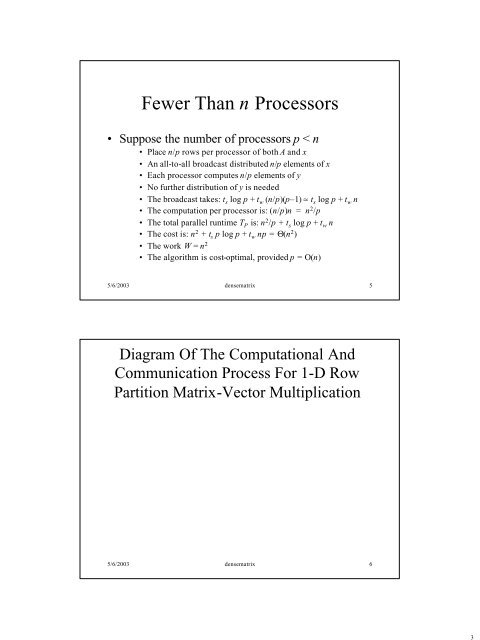

Fewer Than n Processors

• Suppose the number of processors is $p < n$

• Assign each processor $n/p$ rows of $A$ and the matching $n/p$ elements of $x$

• An all-to-all broadcast distributes every processor's $n/p$ elements of $x$ to all the others, so each processor holds the full vector

• Each processor computes $n/p$ elements of $y$

• No further distribution of $y$ is needed

• The broadcast takes: $t_s \log p + t_w (n/p)(p-1) \approx t_s \log p + t_w n$

• The computation per processor is: $(n/p) \cdot n = n^2/p$

• The total parallel runtime $T_P$ is: $n^2/p + t_s \log p + t_w n$

• The cost is: $p T_P = n^2 + t_s p \log p + t_w n p$, which is $\Theta(n^2)$ when $p = O(n)$

• The work is $W = n^2$

• The algorithm is cost-optimal, provided $p = O(n)$
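The runtime, cost, and efficiency expressions above can be evaluated numerically to see how the overhead terms behave as p grows. The following is a minimal sketch, not part of the original slides. It assumes one multiply-add takes one time unit, that the logarithm is base 2, and that the function names and the sample values of `t_s` and `t_w` are hypothetical choices for illustration.

```python
import math


def parallel_runtime(n, p, t_s, t_w):
    """Approximate T_P = n^2/p + t_s*log p + t_w*n from the slide.

    Assumes log base 2 and unit cost per multiply-add.
    """
    return n * n / p + t_s * math.log2(p) + t_w * n


def cost(n, p, t_s, t_w):
    """Cost = p * T_P = n^2 + t_s*p*log p + t_w*n*p."""
    return p * parallel_runtime(n, p, t_s, t_w)


def efficiency(n, p, t_s, t_w):
    """Efficiency = W / cost, with work W = n^2."""
    return (n * n) / cost(n, p, t_s, t_w)


if __name__ == "__main__":
    # Hypothetical machine parameters, chosen only for illustration.
    n, t_s, t_w = 1024, 25.0, 4.0
    for p in (4, 16, 64, 256):
        print(p, parallel_runtime(n, p, t_s, t_w), efficiency(n, p, t_s, t_w))
```

Under these assumptions the efficiency stays bounded away from zero as long as p grows no faster than n, which is what the condition p = O(n) expresses.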

5/6/2003 densematrix 5<br />

Diagram Of The Computational And Communication Process For 1-D Row Partition Matrix-Vector Multiplication
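The process named in this slide title can also be traced in a small sequential simulation. The sketch below is an illustration and does not reproduce the original diagram. It models the p processors as list entries, assumes that p divides n evenly, and uses a hypothetical function name. A real implementation would replace the broadcast step with a message-passing collective.

```python
def row_partitioned_matvec(A, x, p):
    """Simulate 1-D row-partitioned y = A x on p logical processors.

    Returns a list of p blocks; block i holds the n/p elements of y
    computed by processor i. Assumes p divides n evenly.
    """
    n = len(A)
    if n % p != 0:
        raise ValueError("this sketch assumes p divides n")
    rows = n // p

    # Initial distribution: processor i owns n/p rows of A
    # and the matching n/p elements of x.
    local_A = [A[i * rows:(i + 1) * rows] for i in range(p)]
    local_x = [x[i * rows:(i + 1) * rows] for i in range(p)]

    # All-to-all broadcast: every processor collects all blocks of x
    # and ends up with the full vector.
    full_x = [[v for block in local_x for v in block] for _ in range(p)]

    # Local computation: each processor forms n/p dot products of length n.
    local_y = [
        [sum(a * b for a, b in zip(row, full_x[i])) for row in local_A[i]]
        for i in range(p)
    ]
    return local_y


if __name__ == "__main__":
    A = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
    x = [1, 1, 1, 1]
    print(row_partitioned_matvec(A, x, p=2))
```

Each processor's block of y stays where it was computed, matching the observation that no further distribution of y is needed.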
