Dense Matrix Algorithms -- Chapter 8 Introduction

Dense Matrix Algorithms -- Chapter 8 Introduction

Dense Matrix Algorithms -- Chapter 8 Introduction

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

9<br />

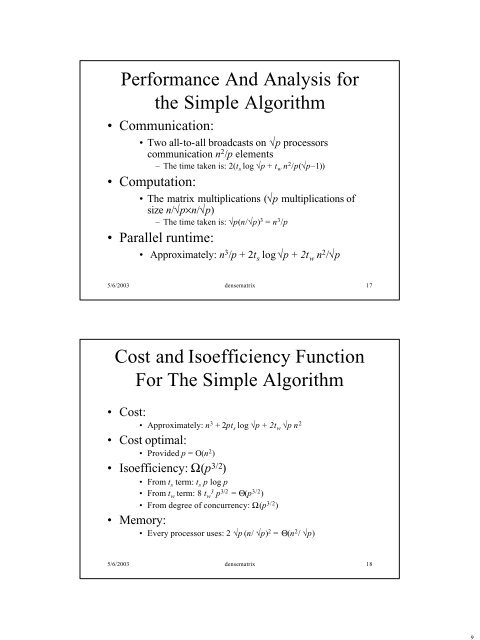

Performance And Analysis for<br />

the Simple Algorithm<br />

• Communication:<br />

• Two all-to-all broadcasts on √p processors<br />

communication n 2 /p elements<br />

– The time taken is: 2(t s log √p + t w n 2 /p(√p–1))<br />

• Computation:<br />

• The matrix multiplications (√p multiplications of<br />

size n/√p×n/√p)<br />

– The time taken is: √p(n/√p) 3 = n 3 /p<br />

• Parallel runtime:<br />

• Approximately: n 3 /p + 2t s log √p + 2t w n 2 /√p<br />

5/6/2003 densematrix 17<br />

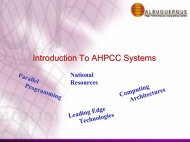

Cost and Isoefficiency Function<br />

For The Simple Algorithm<br />

• Cost:<br />

• Approximately: n 3 + 2pt s log √p + 2t w √p n 2<br />

• Cost optimal:<br />

• Provided p = O(n 2 )<br />

• Isoefficiency: Ω(p 3/2 )<br />

• From t s term: t s p log p<br />

• From t w term: 8 t w 3 p 3/2 = Θ(p 3/2 )<br />

• From degree of concurrency: Ω(p 3/2 )<br />

• Memory:<br />

• Every processor uses: 2 √p (n/ √p) 2 = Θ(n 2 / √p)<br />

5/6/2003 densematrix 18