A Network Interface Card Architecture for I/O Virtualization in ... - TUM


A Network Interface Card Architecture for I/O Virtualization in Embedded Systems

Holm Rauchfuss
Technische Universität München, Institute for Integrated Systems
D-80290 Munich, Germany
holm.rauchfuss@tum.de

Thomas Wild
Technische Universität München, Institute for Integrated Systems
D-80290 Munich, Germany
thomas.wild@tum.de

Andreas Herkersdorf
Technische Universität München, Institute for Integrated Systems
D-80290 Munich, Germany
herkersdorf@tum.de

ABSTRACT

In this paper we present an architectural concept for network interface cards (NICs) targeting embedded systems and supporting I/O virtualization. Current solutions for high-performance computing do not sufficiently address embedded system requirements, i.e., guaranteeing real-time constraints and differentiated service levels while utilizing only limited HW resources. The central ideas of our work-in-progress concept are as follows. A scalable and streamlined NIC architecture stores the rule sets (contexts) for virtual network interfaces and associated information such as DMA descriptors and producer/consumer lists primarily in the system memory. Only for currently active interfaces or interfaces with special requirements, e.g., hard real-time, is the required information cached on the NIC. By switching between the contexts, the NIC can flexibly adapt to service a scalable number of interfaces. With the contexts, the proposed architecture also supports differentiated service levels. On the NIC, (re-)configurable finite state machines (FSMs) handle the data path for I/O virtualization. This allows a more resource-limited NIC implementation. With a preliminary analysis we estimate the benefits of the proposed architecture, and we outline its key components.

Categories and Subject Descriptors

C.4 [Performance of Systems]: Design Studies, Performance Attributes; B.4.2 [Input/Output and Data Communications]: Input/Output Devices - Channels and Controllers

General Terms

Design, Performance

Keywords

I/O Virtualization, Embedded Systems, Network Interface Card

1. INTRODUCTION

Over the last decade(s), virtualization has become a mainstream technique in data centers for better resource utilization by server consolidation. By abstraction, the physical resources are shared between several virtual machines (VMs), so-called domains. The improvement of the underlying virtual machine monitors (VMMs) ([1], [2]) and HW ([4]) for data centers has been targeted extensively by research. However, virtualization is still an emerging topic for embedded systems, in particular multiprocessor systems-on-chip. Their increasing performance and the combination of applications with different requirements on a single shared platform make them particularly well-suited for virtualization. First steps have been taken to analyze and adopt virtualization here ([6], [7]).

This paper appeared at the Second Workshop on I/O Virtualization (WIOV '10), March 13, 2010, Pittsburgh, PA, USA.

A critical aspect is the virtualization of I/O, since there the computational overhead and the performance degradation are high, in both data centers and embedded systems. Research for high-performance computing (HPC) shows that near-native throughput, i.e., throughput equal to a set-up without virtualization, can be achieved by improvements in SW packet handling and by offloading virtualization onto the NIC ([9], [10]). Since the focus of this research is on maximizing overall system throughput rather than on resource-limited NIC architectures, the proposed architectures are not optimal for use in embedded systems with their specific requirements.

The paper is structured as follows: Section 2 provides an overview of the state of the art of I/O virtualization. Section 3 describes the specific requirements for embedded systems and the fundamental concepts of the proposed NIC architecture. A preliminary performance estimation is given in Section 4. An exploration of key components is described in Section 5. Section 6 outlines future work and summarizes the paper.

2. STATE OF THE ART

Sharing physical network access between domains can be implemented in HW, in SW or in a mixed mode [12]. The generic solution, i.e., VMM only, dedicates one virtualization domain as the driver domain and exclusively assigns the network card to it. In such a system, other domains gain network access by transferring packets via a SW-based bridge and front- and back-end device drivers [1]. Several protocol improvements reduce the overhead of the actual transmission of the packets between the domains; a comprehensive overview is given by [11]. I/O virtualization can also be performed within the VMM itself, i.e., the hypervisor provides drivers for network cards and switches packets between the domains ([3]).

NIC<br />

Rx MAC Tx<br />

DMA<br />

NIC-CPU<br />

Management<br />

DMA-Mgmt.<br />

Signal<strong>in</strong>g<br />

Header-Pars<strong>in</strong>g<br />

Queue<strong>in</strong>g<br />

Schedul<strong>in</strong>g<br />

NIC Internal / Instruction Memory<br />

DMA<br />

System Bus<br />

CPU CPU<br />

System Memory<br />

P/C Lists<br />

Rx/Tx R<strong>in</strong>gs<br />

Packets<br />

communication path. This results <strong>in</strong> an <strong>in</strong>creased latency<br />

and (complex) schedul<strong>in</strong>g dependencies. Process<strong>in</strong>g time of<br />

the host cpu and system memory are utilized by this driver<br />

doma<strong>in</strong>. If the hypervisor is directly per<strong>for</strong>m<strong>in</strong>g I/O virtualization,<br />

the trusted comput<strong>in</strong>g base of the hypervisor is<br />

broadened with side-effects on security, footpr<strong>in</strong>t and verification.<br />

Multiple-queue network cards are limited <strong>in</strong> their number<br />

of available queue pairs. For support<strong>in</strong>g a scalable number<br />

of doma<strong>in</strong>s, such a NIC has either to keep unused pairs <strong>in</strong><br />

reserve or fallback to SW-based bridg<strong>in</strong>g <strong>for</strong> excess doma<strong>in</strong>s.<br />

Rx queues are served <strong>in</strong> the order given by packet arrival,<br />

result<strong>in</strong>g <strong>in</strong> possible head-of-l<strong>in</strong>e block<strong>in</strong>g <strong>for</strong> high-priority<br />

packets.<br />

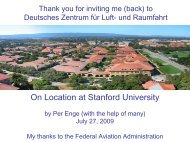

Figure 1: RiceNIC with central process<strong>in</strong>g on PowerPC<br />

CPU<br />

A further improvement to the upper scenario is the usage<br />

of multi-queue network cards [9] such as Intel’s VMDq [13].<br />

Those network cards offer multiple pairs of Tx/Rx queues.<br />

This allows HW offload<strong>in</strong>g of packet (de-)multiplex<strong>in</strong>g and<br />

queu<strong>in</strong>g <strong>for</strong> doma<strong>in</strong>s based on their MAC address (and VLAN<br />

tag). A Tx/Rx pair is assigned to a VM and the driver doma<strong>in</strong><br />

is granted access to the memory region with the respective<br />

Tx/Rx buffers. Tx queues are served round-rob<strong>in</strong>.<br />

Doma<strong>in</strong>s can also directly access a NIC via virtual network<br />

<strong>in</strong>terfaces. Apparently, such approaches require extensions<br />

of the NIC i.e., dedicated queues, buffers, <strong>in</strong>terfaces and<br />

additional management logic. Be<strong>for</strong>e a doma<strong>in</strong> can use its<br />

virtual network <strong>in</strong>terface the VMM has to configure the NIC<br />

accord<strong>in</strong>gly.<br />

This concept is presented based on an IXP2400 network processor<br />

as a self-virtualiz<strong>in</strong>g network card [8]. Here, one microeng<strong>in</strong>e<br />

is used <strong>for</strong> demultiplex<strong>in</strong>g Rx traffic and another<br />

one <strong>for</strong> multiplex<strong>in</strong>g Tx traffic. Management of the network<br />

card is per<strong>for</strong>med <strong>in</strong> SW on the NIC XScale CPU.<br />

The set-up is restricted to 8 doma<strong>in</strong>s, s<strong>in</strong>ce the microeng<strong>in</strong>e<br />

is limited to 8 threads. To avoid coord<strong>in</strong>ation by the SW on<br />

the XScale, none of the other free microeng<strong>in</strong>es can be used<br />

<strong>for</strong> process<strong>in</strong>g Rx or Tx traffic <strong>in</strong> parallel.<br />

Direct I/O is also addressed by RiceNIC [10]. Here concurrent<br />

network access is provided by a network card based on<br />

an FPGA. It conta<strong>in</strong>s a PowerPC CPU and several dedicated<br />

HW components (see Fig. 1 <strong>for</strong> an abstract representation).<br />

The SW on the PowerPC per<strong>for</strong>ms data and control<br />

path functions <strong>for</strong> packet process<strong>in</strong>g. Each virtual network<br />

<strong>in</strong>terface requires 388 KB of NIC memory: 4 KB <strong>for</strong> context<br />

and 128 KB each <strong>for</strong> metadata, Tx buffer and Rx buffer.<br />

Although the a<strong>for</strong>ementioned solutions provide near native<br />

throughput, they have several shortcom<strong>in</strong>gs <strong>in</strong> respect to<br />

their applicability <strong>in</strong> embedded environments.<br />

Similarly, the concept <strong>for</strong> direct I/O is also restricted by the<br />

number of <strong>in</strong> HW supported virtual network <strong>in</strong>terfaces.<br />

The utilized IXP2400 network processor is targeted as l<strong>in</strong>e<br />

card <strong>for</strong> packet <strong>for</strong>ward<strong>in</strong>g and process<strong>in</strong>g i.e., it does not<br />

represent an optimal reference architecture <strong>for</strong> network cards<br />

support<strong>in</strong>g virtualization due to its limited <strong>in</strong>terface to the<br />

host.<br />

The primary goal of RiceNIC is to have a configurable and<br />

flexible NIC architecture. There<strong>for</strong>e most functionality is<br />

per<strong>for</strong>med by the firmware on the PowerPC. As negative<br />

side effect of this, the firmware is <strong>in</strong> the critical path <strong>for</strong> all<br />

packet process<strong>in</strong>g e.g., header pars<strong>in</strong>g, DMA descriptor generation<br />

and packet (de-)multiplex<strong>in</strong>g. Furthermore, extend<strong>in</strong>g<br />

RiceNIC with extra virtual network <strong>in</strong>terfaces requires<br />

additional NIC memory <strong>for</strong> each of them.<br />

F<strong>in</strong>ally, as the overall throughput per<strong>for</strong>mance is focus of<br />

the I/O virtualization research, m<strong>in</strong>or ef<strong>for</strong>ts have been put<br />

<strong>in</strong>to resource-limited concepts <strong>for</strong> network cards themselves.<br />

This motivates our proposed concept, that is presented subsequently.<br />

3. CONCEPT FOR AN ES-VNIC ARCHITEC-<br />

TURE<br />

To better understand the need <strong>for</strong> efficient I/O virtualization<br />

<strong>in</strong> embedded systems, we give an <strong>in</strong>troductory example<br />

here: An automotive head unit <strong>for</strong> premium cars represents<br />

a flexible and high-per<strong>for</strong>mance, but still embedded<br />

system. It consolidates <strong>in</strong>fota<strong>in</strong>ment (video, audio, Internet<br />

access, etc.) and numerous car-related, safety-critical<br />

functions (park distance control, user <strong>in</strong>terface <strong>for</strong> driver<br />

assistance systems, warn<strong>in</strong>g signals, etc.) on one HW plat<strong>for</strong>m<br />

and is connected via network to other electronic control<br />

units. Based on the actual driv<strong>in</strong>g situation, different sets of<br />

functions – which can be partitioned <strong>in</strong> doma<strong>in</strong>s to achieve<br />

robustness via isolation – and their communication are active.<br />

Those situations can change quickly e.g., jump<strong>in</strong>g from<br />

normal radio listen<strong>in</strong>g to display<strong>in</strong>g an urgent traffic warn<strong>in</strong>g.<br />

Most functions have to be runn<strong>in</strong>g concurrently to prevent<br />

disruptive delays by start<strong>in</strong>g them first. To be usable<br />

<strong>in</strong> an automotive environment, the head unit has also to be<br />

implemented <strong>in</strong> a very cost- and power-efficient way.<br />

In case of SW-based bridg<strong>in</strong>g and multi-queue network cards<br />

rely on a driver doma<strong>in</strong> which is <strong>in</strong>terleaved <strong>in</strong> the network

3.1 Requirement Analysis

To fit both embedded systems and I/O virtualization, NIC architecture concepts need to address special requirements:

• The goal of overall maximum throughput has to be complemented with low latency and real-time processing of packets for specific domains. For an embedded system, a mix of hard real-time, soft real-time and best-effort domains has to be supported. As an example, a hard real-time domain with networked closed-loop control needs to transmit traffic without jitter, as opposed to a best-effort domain with bursty video streams. Overall, the network card should provide calculable and predictable response times for traffic transfers. Given this requirement, SW should not be placed in the critical transmission path, either on the NIC itself or via a driver domain.

• Different service levels require enriched methods to process packets and to signal specific events to the VMM and domains. This includes prioritization of packets and interfaces, and also observation of bandwidth guarantees and packet dropping probabilities.

• The general design of the network card has to include only a limited number of HW components for enabling virtualization. In relation to the power consumption and performance of the complete embedded system, the NIC should contribute only a small fraction, but still provide high throughput, i.e., several 100 Mb/s or higher. Furthermore, the usage of NIC memory should be kept to a minimum. Instead, the system memory should be used as much as possible.

• Performing I/O virtualization in the VMM or in domains should be avoided to keep the cores free for actual processing, as CPU power is usually scarcer in embedded systems than in HPC systems.

In general, I/O virtualization requires a NIC to per<strong>for</strong>m the<br />

follow<strong>in</strong>g tasks efficiently:<br />

• Header-Pars<strong>in</strong>g: The header of <strong>in</strong>com<strong>in</strong>g packets<br />

has to be parsed to determ<strong>in</strong>e the dest<strong>in</strong>ation doma<strong>in</strong>.<br />

The MAC dest<strong>in</strong>ation address and VLAN tag of the<br />

Ethernet header are only required <strong>for</strong> layer 2 switch<strong>in</strong>g.<br />

• Buffer<strong>in</strong>g: It must be possible to efficiently buffer a<br />

packet, because prior packets blocks further process<strong>in</strong>g<br />

or packets with higher priority have to be processed<br />

first.<br />

• Schedul<strong>in</strong>g: The NIC should be able to switch process<strong>in</strong>g<br />

between packets either due to temporarily block<strong>in</strong>gs<br />

or to handle packets of doma<strong>in</strong>s with higher priority<br />

first. There<strong>for</strong>e, the NIC can multiplex outgo<strong>in</strong>g<br />

packets from the doma<strong>in</strong>s and demultiplex <strong>in</strong>com<strong>in</strong>g<br />

traffic more sophisticated than by simple round-rob<strong>in</strong>.<br />

• DMA: The NIC should have the ability to transfer a<br />

packet to or from the (system) memory on its own.<br />

NIC<br />

Rx MAC Tx<br />

Local Cache <strong>for</strong> Contexts,<br />

P/C Lists, Rx/Tx Queues<br />

Header-Pars<strong>in</strong>g<br />

FSMs<br />

Management<br />

Schedul<strong>in</strong>g<br />

Queue-Alloc<br />

NIC Buffer<br />

Signal<strong>in</strong>g<br />

DMA<br />

System Bus<br />

System Memory<br />

CPU<br />

CPU<br />

Contexts<br />

P/C Lists<br />

Rx/Tx R<strong>in</strong>gs<br />

Packets<br />

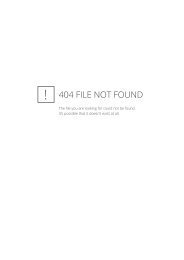

Figure 2: Concept of ES-VNIC architecture<br />

• Signal<strong>in</strong>g: Based on pre-def<strong>in</strong>ed service levels the<br />

NIC should be able to <strong>in</strong>dividually signal certa<strong>in</strong> events<br />

to the VMM or directly to doma<strong>in</strong>s. Events can be <strong>in</strong>terrupts<br />

<strong>for</strong> new packet arrival or request<strong>in</strong>g new DMA<br />

descriptors.<br />

• Management: The basic management <strong>for</strong> packet process<strong>in</strong>g<br />

i.e., (re-)configuration of HW blocks or coord<strong>in</strong>ation<br />

of the <strong>in</strong>dividual tasks should be per<strong>for</strong>med<br />

with<strong>in</strong> the NIC.<br />
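The header-parsing task can be illustrated in software. The following is a minimal sketch, not the FSM proposed in this paper: it assumes standard Ethernet framing with an optional IEEE 802.1Q tag, and the function names and the (MAC, VLAN) lookup table are hypothetical.

```python
import struct

ETHERTYPE_VLAN = 0x8100  # IEEE 802.1Q tag protocol identifier


def parse_l2_header(frame: bytes):
    """Return (dst_mac, vlan_id); vlan_id is None for untagged frames."""
    if len(frame) < 14:
        raise ValueError("frame shorter than an Ethernet header")
    dst_mac = frame[0:6]                      # destination MAC comes first
    (ethertype,) = struct.unpack("!H", frame[12:14])
    vlan_id = None
    if ethertype == ETHERTYPE_VLAN:
        if len(frame) < 18:
            raise ValueError("truncated 802.1Q tag")
        (tci,) = struct.unpack("!H", frame[14:16])
        vlan_id = tci & 0x0FFF                # lower 12 bits hold the VLAN ID
    return dst_mac, vlan_id


def lookup_domain(frame: bytes, table: dict):
    """Map a frame to a domain via a hypothetical (MAC, VLAN) -> domain table."""
    return table.get(parse_l2_header(frame))
```

Only the first 14 to 18 bytes of a frame are inspected, which is consistent with the observation that parsing can finish well before the full packet has been buffered.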

3.2 Proposed Architecture and Exemplary Packet Processing

The above requirements and considerations drive our proposal for a new embedded-system-specific VNIC (ES-VNIC) architecture (see Fig. 2). It should provide the right trade-off: high throughput combined with QoS and real-time support, rather than the ultimate throughput targeted in server or HPC environments (10s of Gb/s). It relies on a tailored set of finite state machines specifically crafted for handling the tasks described above. This reduces the HW footprint of I/O virtualization and provides better support for real-time constraints and service levels of domains. By decoupling those FSMs, parallel and pipelined processing is possible.

To improve scalability, the resources (queues, caches, buffer) on the NIC are not constantly occupied by domains or interfaces, but are instead assigned dynamically. Different levels of service may be provided. For interfaces with real-time constraints, configuration and queues always reside within the ES-VNIC. Best-effort interfaces, in contrast, share the available resources, i.e., their rule sets are loaded on demand from system memory, replacing the information of inactive interfaces.

The NIC contains a standard MAC which is wrapped by flexible HW extensions to enable direct I/O. Those extensions are best described by explaining their interaction when processing an incoming Ethernet packet (see Fig. 3). This figure is a message sequence chart representation of the incoming packet processing: The communication between the different extensions, i.e., handing over data or triggering those extensions, is visualized by directed lines. A block stands for a delay in this extension, either for processing or for storing data. Time progresses down the Y axis, i.e., the figure has to be read from top to bottom.

Figure 3: Processing packet with ES-VNIC (Rx path) (lifelines: MAC, NIC Buffer, Header-Parsing, Scheduling, Queue-Alloc, Management, DMA, System Memory)

A packet that arrives at the MAC is temporarily stored in the NIC buffer and, in parallel, the header is sent to the header-parsing unit, which extracts the information about the domain to which this packet should be routed. These actions are performed at line speed. As only the header is parsed, the header-parsing unit completes before the complete packet has been stored in the buffer.

The NIC buffer can store a maximum-sized Ethernet packet as a whole. Any packet in the buffer can be accessed arbitrarily. Therefore, packets do not have to be processed in their arrival order, e.g., high-priority packets for real-time tasks can be preferred. The address of the packet is handed to the header-parsing unit, which combines it with the extracted header information for identifying the packet.

With the extracted header information, the management FSM can then start to select the context for processing this packet. The context stores all relevant information regarding the handling, for example which priority such a packet should have, under which conditions the domain is signaled about the arrival of the packet, etc. The main store for those contexts is in the system memory in order to limit the resources in the ES-VNIC. Only a small cache for contexts with packets under processing is present on the ES-VNIC. Contexts for critical domains can be pinned to the cache permanently. Contexts for best-effort or low-priority packets instead have to be loaded from system memory, which involves writing back contexts that need to be replaced due to the cache size limitation. A context can contain the rule set for a complete domain, but also for individual Rx or Tx network interfaces. A context can comprise several kilobytes of data, as it contains advanced rules, priority settings and configurations.
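The caching behaviour described above (pinned contexts for critical domains, on-demand loading and write-back for the rest) can be sketched as follows. This is an illustrative software model only: the class name, the dictionary standing in for system memory and the least-recently-used replacement policy are assumptions, since the text does not specify a replacement strategy.

```python
from collections import OrderedDict


class ContextCache:
    """Small on-NIC context cache backed by system memory (a dict here)."""

    def __init__(self, capacity, system_memory):
        self.capacity = capacity
        self.system_memory = system_memory   # context id -> context (dict)
        self.entries = OrderedDict()         # least recently used first
        self.pinned = set()

    def pin(self, ctx_id):
        """Load a context and keep it resident, e.g. for a hard real-time domain."""
        self.get(ctx_id)
        self.pinned.add(ctx_id)

    def get(self, ctx_id):
        if ctx_id in self.entries:
            self.entries.move_to_end(ctx_id)  # mark as recently used
            return self.entries[ctx_id]
        if len(self.entries) >= self.capacity:
            self._evict()
        # Load on demand: the cache works on its own copy of the context.
        self.entries[ctx_id] = dict(self.system_memory[ctx_id])
        return self.entries[ctx_id]

    def _evict(self):
        # Assumed policy: replace the least recently used unpinned context.
        victim = next((c for c in self.entries if c not in self.pinned), None)
        if victim is None:
            raise RuntimeError("all cached contexts are pinned")
        self.system_memory[victim] = self.entries.pop(victim)  # write back
```

In this model a pinned context never pays the load or write-back cost, which is the property the text relies on for real-time interfaces.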

As loading and writing back may take a non-negligible amount of time, the management FSM is designed to handle several such processes and contexts in parallel, switching between them to reduce stalling. At any time, several packets are to be processed by the ES-VNIC in parallel.

Similar to the context, the DMA descriptors and the respective producer/consumer lists (P/C lists) have to be available in the local cache or be fetched from the system memory if required. The DMA descriptors are stored in generic queues where they can be read by the scheduler. The queue-alloc unit is responsible for assigning and filling those queues.

Based on the contexts of the current packets, the scheduling unit decides which packet should be processed next and fetches a DMA descriptor from the respective queue. Along with the address of the packet in the NIC buffer, this information is handed over to the DMA unit. The DMA unit then writes the packet over the system bus to the system memory. Afterwards, it informs the management unit about the completion of the action. The respective producer/consumer list is updated and written back to the system memory, where it can be read by the domain. Then the management unit configures the signaling unit according to the context, i.e., immediate interrupt for the packet or wait until a threshold of packets is reached. The respective signaling concludes the packet processing.
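The scheduling and signaling decisions in this Rx sequence can be sketched in a few lines. The model below is a hedged illustration, not the proposed hardware: it assumes strict priority scheduling with arrival order as tie-breaker (the text only requires something more sophisticated than round-robin), and the class and method names are hypothetical.

```python
import heapq
import itertools


class RxScheduler:
    """Pick the next buffered packet by priority (lower value = more urgent)."""

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker keeps arrival order

    def enqueue(self, buffer_addr, priority):
        heapq.heappush(self._heap, (priority, next(self._arrival), buffer_addr))

    def next_packet(self):
        _priority, _order, buffer_addr = heapq.heappop(self._heap)
        return buffer_addr


class Signaling:
    """Signal immediately (threshold=1) or after `threshold` completed packets."""

    def __init__(self, threshold=1):
        self.threshold = threshold
        self.pending = 0

    def packet_done(self):
        self.pending += 1
        if self.pending >= self.threshold:
            self.pending = 0
            return True    # raise the interrupt for the domain now
        return False       # keep accumulating packets
```

Because any packet in the NIC buffer can be addressed directly, a later high-priority packet can overtake earlier best-effort packets, which avoids the head-of-line blocking noted for multi-queue cards.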

The same units are utilized for sending a packet. Only the header-parsing unit is not used, as a packet is already associated with a Tx interface and therefore with the respective context. The ES-VNIC management is triggered by the driver to send a packet. The respective context is loaded and the DMA descriptor is read into an allocated queue. When the scheduling unit decides to send this packet, the descriptor is handed over to the DMA unit, which writes the packet to the NIC buffer. Once the packet has been completely written, it is sent out via the MAC.

Domains can modify the data structures for contexts and DMA descriptors in the system memory only after the modifications have been validated by the hypervisor, to prevent erroneous or malicious input. This is abstracted via calls to the hypervisor in the driver for the domain. The hypervisor notifies the ES-VNIC, which invalidates cached information and fetches the new input from system memory.

4. PRELIMINARY PERFORMANCE ESTIMATION

Based on the presented ES-VNIC architecture concept, we give a preliminary performance estimation. The focus is on the incoming packet processing sequence as introduced and described for the ES-VNIC in Section 3.

The processing sequence for a network card performing I/O virtualization via CPU firmware, like RiceNIC, is depicted in Fig. 4. Incoming packets are transferred from the MAC via DMA to the NIC internal memory. Afterwards the NIC CPU is notified. The SW then processes the packet, including header parsing, scheduling and queuing plus managing and configuring the other HW blocks. During processing the SW has to access the NIC internal memory for packet data and instruction code. The number of accesses depends on the cache size and associativity of the NIC CPU. After being queued, the packet is transferred via DMA to the system memory.

Figure 4: Processing packet with a CPU-centric NIC (Rx path) (lifelines: MAC, DMA, NIC Internal / Instruction Memory, NIC-CPU, DMA, System Memory)

A simple qualitative comparison of the sequences reveals the following points:

• The firmware on the single CPU performing the tasks for I/O virtualization constitutes a sequential trail of tasks which, due to the processing latency, may evolve into a bottleneck. Adding further CPUs is not a favorable solution, as it would contradict the goal of a resource-limited implementation.

• On a CPU with data cache (re-)loading and instruction fetching, it is not optimal to perform tasks like header parsing, queuing or managing DMA descriptors due to the lack of temporal locality (for example, header parsing is performed only once per packet). These tasks can be performed in fewer clock cycles with finite state machines.

• Having a pipelined architecture whose stages are FSMs allows the same throughput at a lower frequency than performing the respective tasks in sequential SW on a CPU.

These points lead to the working hypothesis that the ES-VNIC architecture needs low and deterministic processing time. A prerequisite is that the FSMs are flexible enough to service a mix of hard real-time, soft real-time and best-effort domains.

In a formal approach, the processing time of the ES-VNIC can be formulated as follows:

$$T_{\mathrm{DelayRx}} = \max(T_{\mathrm{NIC\,Buffer}},\, T_{\mathrm{Header\text{-}Parsing}}) + T_{\mathrm{Management}} + \max(T_{\mathrm{Scheduling}},\, T_{\mathrm{Queue\text{-}Alloc}}) + T_{\mathrm{DMA}} \qquad (1)$$

\(T_{\text{NIC Buffer}}\) is the time needed to transfer the incoming packet to the NIC Buffer,<br />
\(T_{\text{Header-Parsing}}\) the time needed to parse the respective header.<br />
Both actions are performed in parallel and at line speed.<br />
Evidently, \(T_{\text{NIC Buffer}}\) is dominant here and depends on the packet size.<br />

\(T_{\text{Management}}\) subsumes setting the configuration for the following FSMs according to the context of this packet.<br />
This includes the conditional fetch of this context from the system memory first.<br />
If the context is cached, this operation should need only a few clock cycles.<br />
Otherwise, the time for fetching the context is dominated by the performance of the system bus and memory.<br />
Contexts for (hard) real-time interfaces need to be pinned to the cache.<br />
On the one hand, this constraint results in an easily calculable upper bound for \(T_{\text{Management}}\);<br />
on the other hand, it reduces the slots available for contexts of best-effort or low-priority packets.<br />
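The two cases described above can be written as a bound. This is only a sketch: the symbols \(T_{\text{config}}\) (the few cycles needed to configure the FSMs from a cached context) and \(T_{\text{fetch}}\) (the bus- and memory-dominated time to load a context from system memory) are names assumed here for illustration and do not appear in the original text.<br />

```latex
\[
T_{\text{Management}} \le
\begin{cases}
T_{\text{config}} & \text{context pinned or already cached} \\
T_{\text{config}} + T_{\text{fetch}} & \text{context must be fetched from system memory}
\end{cases}
\]
```

For a pinned (hard) real-time context only the first case can occur, which is why its upper bound is easy to calculate.<br />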

The queue-alloc unit and the scheduler unit are both triggered by the management unit and run concurrently.<br />
The queue-alloc unit needs \(T_{\text{Queue-Alloc}}\) to allocate the needed DMA descriptor,<br />
and the scheduler unit requires \(T_{\text{Scheduling}}\) to schedule the next packet to be transferred via DMA.<br />
Because DMA descriptors must be fetched from system memory if they are not already on the NIC,<br />
the queue-alloc unit needs more time to finish in that case.<br />
For (hard) real-time packets the DMA queues should therefore already be pre-allocated<br />
and the descriptors pre-fetched to guarantee an upper bound.<br />

Finally, \(T_{\text{DMA}}\) is the time needed to transmit a packet from the NIC Buffer to the system memory;<br />
it depends on the packet size and on the performance of the system bus and memory.<br />
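The composition of equation (1) can be checked with a few lines of Python. This is a minimal sketch: the class name `RxStageTimes`, the function `rx_delay` and all cycle counts are assumptions made for illustration, not measured values from the paper.<br />

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RxStageTimes:
    """Per-packet stage times in clock cycles (illustrative assumptions)."""
    nic_buffer: int
    header_parsing: int
    management: int
    scheduling: int
    queue_alloc: int
    dma: int


def rx_delay(t: RxStageTimes) -> int:
    """Equation (1): concurrent stages contribute only their maximum."""
    return (max(t.nic_buffer, t.header_parsing)
            + t.management
            + max(t.scheduling, t.queue_alloc)
            + t.dma)


# Hypothetical numbers: buffering dominates parsing, queue-alloc dominates scheduling.
example = RxStageTimes(nic_buffer=64, header_parsing=20, management=4,
                       scheduling=6, queue_alloc=10, dma=70)
print(rx_delay(example))  # 64 + 4 + 10 + 70 = 148
```

The two `max` terms reflect the units that run in parallel; all other terms add up because a single packet passes through them one after another.<br />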

[Figure 5: Key component: Queue-Allocation. Recoverable labels: System Memory with Rx Rings (A, B; [m]) and Tx Rings (C, D; [n]); NIC with Assignable Queues ([o], one inscribed with A); From P/C Lists; To Scheduling.]<br />

[Figure 6: Key component: Management (with Contexts). Recoverable labels: System Memory with Contexts (A, B, …, Z; [m+n]); NIC with Local Cache ([v]) and Multithreaded FSMs ([w]); From Header Parsing; To P/C Lists; To Queue-Alloc; To Scheduling.]<br />

The following term describes the delta time of ES-VNIC, i.e.,<br />
the time which can be spent in each stage of the pipelined architecture for processing a packet:<br />

\[
T_{\text{DeltaRx}} = \max\bigl(\max(T_{\text{NIC Buffer}}, T_{\text{Header-Parsing}}),\; T_{\text{Management}},\; \max(T_{\text{Scheduling}}, T_{\text{Queue-Alloc}}),\; T_{\text{DMA}}\bigr) \tag{2}
\]<br />

If this time does not exceed the inter-arrival time of consecutive incoming packets,<br />
ES-VNIC can cope with the speed of this traffic so that no packet drops will occur.<br />
This is crucial to support network interfaces for hard real-time and critical domains.<br />
This time is strongly dominated by the system bus and memory,<br />
i.e., the performance of the ES-VNIC is systematically linked to the performance of the (embedded) system itself.<br />
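The pipeline condition can be sketched in Python as follows. The function names `rx_delta` and `keeps_up`, the cycle counts and the per-packet budget passed in the example are illustrative assumptions, not values taken from a measurement.<br />

```python
def rx_delta(nic_buffer: int, header_parsing: int, management: int,
             scheduling: int, queue_alloc: int, dma: int) -> int:
    """Equation (2): the slowest pipeline stage sets the per-packet time."""
    return max(max(nic_buffer, header_parsing),
               management,
               max(scheduling, queue_alloc),
               dma)


def keeps_up(delta_cycles: int, interarrival_cycles: int) -> bool:
    """No drops are expected if the slowest stage fits into the packet spacing."""
    return delta_cycles <= interarrival_cycles


# Hypothetical stage times in clock cycles; DMA is the slowest stage here.
delta = rx_delta(nic_buffer=64, header_parsing=20, management=4,
                 scheduling=6, queue_alloc=10, dma=70)
print(delta)                                    # 70
print(keeps_up(delta, interarrival_cycles=84))  # True
```

Unlike the end-to-end delay, the delta time takes the maximum over all stages, because in a pipeline each stage already works on the next packet while later stages finish the previous one.<br />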

As a worst-case scenario for \(T_{\text{DeltaRx}}\), the requirement to handle a constant flow of packets<br />
with minimum frame size and minimum interval for a 1 Gbit/s MAC can be used.<br />
A packet size of 64 bytes and 20 bytes of overhead for preamble, start-of-frame delimiter and interframe gap results in:<br />

\[
\frac{(64 + 20) \cdot 8\,\text{bit}}{1\,\text{Gbit/s}} = 672\,\text{ns} \tag{3}
\]<br />

This means that every 672 nanoseconds a new packet arrives and has to be processed.<br />
With a clock of 125 MHz for Gigabit Ethernet, every pipeline stage would have only 84 cycles (672 ns × 125 MHz) to complete its task.<br />
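The worst-case arithmetic can be reproduced with integer arithmetic. The helper names `interarrival_ns` and `stage_budget_cycles` are hypothetical; the constants are the ones stated in the text.<br />

```python
OVERHEAD_BYTES = 20            # preamble, start-of-frame delimiter, interframe gap
LINE_RATE_BPS = 1_000_000_000  # 1 Gbit/s MAC
CLOCK_HZ = 125_000_000         # 125 MHz clock


def interarrival_ns(frame_bytes: int) -> int:
    """Time between back-to-back frames of the given size, in nanoseconds."""
    bits_on_wire = (frame_bytes + OVERHEAD_BYTES) * 8
    return bits_on_wire * 1_000_000_000 // LINE_RATE_BPS


def stage_budget_cycles(frame_bytes: int) -> int:
    """Clock cycles available to each pipeline stage per packet."""
    bits_on_wire = (frame_bytes + OVERHEAD_BYTES) * 8
    return bits_on_wire * CLOCK_HZ // LINE_RATE_BPS


print(interarrival_ns(64))      # 672
print(stage_budget_cycles(64))  # 84
```

At 1 Gbit/s one bit takes one nanosecond, and a 125 MHz clock advances once every 8 ns, so the budget in cycles equals the number of bytes on the wire (64 + 20 = 84).<br />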

5. EXPLORATION OF KEY ARCHITECTURE COMPONENTS<br />

We started to model the key components of the proposed ES-VNIC architecture for simulation in SystemC [14].<br />
As described in section 3, the architecture should only utilize flexible HW resources.<br />
The focus is therefore on the related FSMs, structures and data elements in queue-allocation (see Fig. 5) and management (see Fig. 6),<br />
in particular on their design, on exploring the size of local buffers and the underlying data paths of the components,<br />
and on the efficient loading of contexts.<br />

5.1 Queue-Allocation<br />

The Rx and Tx rings that contain the DMA descriptors are stored in the system memory;<br />
in this example these are the rings of Rx interfaces A, B and Tx interfaces C, D.<br />
Their content is defined by the network drivers.<br />

On the NIC, a limited set of assignable queues is available.<br />
For interfaces with real-time constraints, such a queue is blocked (permanently reserved) and filled with the maximum number of available descriptors.<br />
Otherwise, if triggered by a context for either sending or receiving a packet, a queue-allocation is done,<br />
i.e., if no queue already contains the respective descriptor(s) for this context, a queue is reserved and the descriptors are fetched from the system memory.<br />
This fetching is done by a dedicated HW engine.<br />
In Fig. 5 one queue is blocked for A (depicted by an inscribed A in this queue); the others have to share the second queue.<br />
This may result in flushing of descriptors for an inactive context or a context with lower priority.<br />
A further fetch is issued if a threshold for the P/C list is reached. That threshold is defined by the context.<br />

There can be more or fewer network interfaces for receiving packets than for sending, since Rx and Tx rings do not have to be paired.<br />
With this feature it is possible to have a Tx interface for broadcasting status information<br />
and no corresponding Rx interface (if no acknowledgements are needed); this is a quite common scenario for embedded systems.<br />
Furthermore, to prevent head-of-line blocking for one domain,<br />
several Rx interfaces for receiving packets with different service levels can be established.<br />

In general, the number of assignable queues (o) is limited and smaller than<br />
the combined number of Rx rings (m) and Tx rings (n) in the system memory, i.e., m + n > o.<br />
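The allocation behaviour described in this subsection can be modelled in a few lines of Python. This is a sketch under stated assumptions, not the ES-VNIC implementation: the class names, the numeric priorities, the victim policy (flush the unpinned queue whose owner has the lowest priority) and the `fetch` callback standing in for the dedicated HW engine are all hypothetical.<br />

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class AssignableQueue:
    owner: Optional[str] = None   # ring whose descriptors are currently held
    pinned: bool = False          # True if blocked for a real-time interface
    descriptors: List[str] = field(default_factory=list)


class QueueAlloc:
    def __init__(self, num_queues: int, priority: Dict[str, int],
                 fetch: Callable[[str], List[str]]):
        self.queues = [AssignableQueue() for _ in range(num_queues)]  # o queues
        self.priority = priority  # assumed: a higher value is more important
        self.fetch = fetch        # stands in for the descriptor fetch engine

    def _free(self) -> Optional[AssignableQueue]:
        return next((q for q in self.queues if q.owner is None), None)

    def pin(self, ring: str) -> None:
        """Block a queue for a real-time ring and pre-fetch its descriptors."""
        q = self._free()
        if q is None:
            raise RuntimeError("no free queue left to pin")
        q.owner, q.pinned, q.descriptors = ring, True, self.fetch(ring)

    def allocate(self, ring: str) -> Optional[AssignableQueue]:
        """Return a queue holding the ring's descriptors, fetching if needed."""
        for q in self.queues:
            if q.owner == ring:
                return q  # descriptors are already on the NIC
        q = self._free()
        if q is None:
            # Flush the unpinned queue with the lowest-priority owner.
            unpinned = [c for c in self.queues if not c.pinned]
            if not unpinned:
                return None
            q = min(unpinned, key=lambda c: self.priority[c.owner])
        q.owner, q.pinned, q.descriptors = ring, False, self.fetch(ring)
        return q


# m + n = 4 rings (A, B for Rx; C, D for Tx) share o = 2 queues.
alloc = QueueAlloc(2, {"A": 3, "B": 1, "C": 2, "D": 1},
                   fetch=lambda ring: [f"{ring}-desc{i}" for i in range(4)])
alloc.pin("A")       # hard real-time interface keeps its queue
alloc.allocate("B")  # takes the remaining free queue
alloc.allocate("C")  # flushes B's descriptors from the shared queue
print([q.owner for q in alloc.queues])  # ['A', 'C']
```

The pinned queue for A never becomes a victim, which is what gives real-time interfaces a bounded allocation time; all other rings pay a fetch from system memory whenever they have been flushed.<br />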

5.2 Management (with Contexts)<br />

The management unit is assembled from the contexts in system memory, a cache for them on the NIC,<br />
multithreaded FSMs and connections to the other units.<br />
In our example the interfaces A to Z exist and their contexts are kept in system memory<br />
(m for Rx interfaces plus n for Tx interfaces).<br />

If a packet is sent or received and the respective context is not in the ES-VNIC,<br />
the context is fetched from the system memory and stored in a cache slot (v).<br />
The data of the context is loaded into one of the multithreaded FSMs (w) by a dedicated HW engine.<br />
Using fixed entry points, the packet processing management is then started.<br />

Loading the context results in two things:<br />

• First, the FSM is (re-)configured, i.e., the respective state diagram is modified.<br />
By default the state diagram is preset to the most common case for an interface.<br />
The context can then add or remove states and transitions, adapting the ES-VNIC for processing packets for this specific interface.<br />
For example, FSMs for interfaces being polled can be stripped of states and transitions for signaling incoming messages.<br />
Another option is additional (security) steps for a critical packet and its interface<br />
that keep the packet in the NIC buffer until it has been copied to the system memory and validated there.<br />

• Second, data from the context is used as input for registers that define and trigger the other FSMs (queue-alloc, scheduling, P/C lists).<br />
For multithreading there are multiple sets of the input and output registers for an FSM.<br />
By mapping a thread to a packet, the ES-VNIC can switch quickly between the processing of several packets<br />
(similar to processing in a multithreaded CPU).<br />
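The first point, a context adding and removing transitions on a preset state diagram, can be illustrated with a small Python model. The state names, event names and the `Context` fields are invented for this sketch; the paper does not define the actual state diagram.<br />

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Transition = Tuple[str, str]  # (current state, event)

# Assumed default diagram for the most common case: signal every packet.
DEFAULT_TRANSITIONS: Dict[Transition, str] = {
    ("idle", "packet"): "configure",
    ("configure", "done"): "notify",  # signal the incoming message
    ("notify", "done"): "idle",
}


@dataclass(frozen=True)
class Context:
    interface: str
    remove: Tuple[Transition, ...] = ()           # transitions to strip
    add: Tuple[Tuple[Transition, str], ...] = ()  # transitions to add


def configure_fsm(context: Context) -> Dict[Transition, str]:
    """Start from the preset diagram and apply the context's changes."""
    transitions = dict(DEFAULT_TRANSITIONS)
    for key in context.remove:
        transitions.pop(key, None)
    transitions.update(dict(context.add))
    return transitions


def run(transitions: Dict[Transition, str], events: List[str]) -> List[str]:
    """Return the sequence of states visited, starting in 'idle'."""
    state, trace = "idle", ["idle"]
    for event in events:
        state = transitions[(state, event)]
        trace.append(state)
    return trace


# A polled interface needs no signaling, so its context strips the notify state.
polled = Context(
    interface="B",
    remove=(("configure", "done"), ("notify", "done")),
    add=((("configure", "done"), "idle"),),
)
print(run(configure_fsm(Context("A")), ["packet", "done", "done"]))
# ['idle', 'configure', 'notify', 'idle']
print(run(configure_fsm(polled), ["packet", "done"]))
# ['idle', 'configure', 'idle']
```

The polled interface finishes one state earlier, which shows how stripping unused states shortens the processing path for that interface.<br />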

Similar to queues in queue-alloc, contexts can be pinned to cache slots and FSMs.<br />
In our example this would be done for A, which represents a hard real-time interface.<br />
The other interfaces have to share the remaining resources.<br />
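Pinning can be modelled as a cache in which some slots are exempt from displacement. The sketch below assumes least-recently-used replacement for the shared slots; the paper does not name a replacement policy, and the class `ContextCache` and the `fetch` callback are hypothetical.<br />

```python
from collections import OrderedDict
from typing import Callable, Dict, Tuple


class ContextCache:
    def __init__(self, slots: int, fetch: Callable[[str], dict]):
        self.slots = slots  # v cache slots on the NIC
        self.fetch = fetch  # stands in for the fetch from system memory
        self.pinned: Dict[str, dict] = {}
        self.shared: "OrderedDict[str, dict]" = OrderedDict()  # LRU entry first

    def pin(self, interface: str) -> None:
        """Reserve a slot permanently, e.g. for a hard real-time interface."""
        if len(self.pinned) + len(self.shared) >= self.slots:
            raise RuntimeError("no free slot left to pin")
        self.pinned[interface] = self.fetch(interface)

    def lookup(self, interface: str) -> Tuple[dict, bool]:
        """Return (context, hit); a miss fetches and may displace an entry."""
        if interface in self.pinned:
            return self.pinned[interface], True
        if interface in self.shared:
            self.shared.move_to_end(interface)  # mark as most recently used
            return self.shared[interface], True
        shared_slots = self.slots - len(self.pinned)
        if shared_slots <= 0:
            raise RuntimeError("all slots are pinned")
        if len(self.shared) >= shared_slots:
            self.shared.popitem(last=False)  # displace the LRU context
        context = self.fetch(interface)
        self.shared[interface] = context
        return context, False


cache = ContextCache(slots=3, fetch=lambda name: {"interface": name})
cache.pin("A")
hits = [cache.lookup(name)[1] for name in ["B", "C", "A", "D", "B", "A"]]
print(hits)  # [False, False, True, False, False, True]
```

A always hits, so its management time stays bounded, while B, C and D compete for the two remaining slots and miss whenever they have been displaced. This is the trade-off between pinned and shared slots that the text describes.<br />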

6. FUTURE WORK AND SUMMARY<br />

Future work comprises the simulation of the key components to validate the proposed architecture and the preliminary performance estimations.<br />
Here, set-ups which require displacement of contexts, DMA descriptors and P/C lists on the ES-VNIC during run-time are of particular interest.<br />
This will involve dimensioning of cache size, packet buffers, queues and the number of multithreaded FSMs<br />
as well as functional verification of those FSMs.<br />
Afterwards, the network card architecture should be physically implemented as part of an MPSoC demonstrator in an FPGA<br />
to prove the applicability to real-world scenarios.<br />

In this work-in-progress paper we introduced a new virtualizing NIC architecture concept<br />
particularly addressing the requirements of I/O virtualization in embedded systems.<br />
We showed that current concepts that address HPC do not match those requirements.<br />
Thus, the needs for this application area have been discussed and a favorable design has been deduced.<br />
A preliminary performance estimation and a short presentation of key elements have also been given.<br />
With this paper, it is our objective to raise awareness for the research of I/O virtualization<br />
in embedded system network cards and the new challenges here.<br />

7. REFERENCES<br />

[1] P. Barham, B. Dragovic, K. Fraser, S. Hand, T. Harris, A. Ho, R. Neugebauer, I. Pratt, and A. Warfield. Xen and the art of virtualization. In Proceedings of the nineteenth ACM Symposium on Operating Systems Principles (SOSP19), ACM Press, 2003.<br />
[2] A. Kivity, Y. Kamay, and D. Laor. kvm: the Linux Virtual Machine Monitor. In Linux Symposium, 2007.<br />
[3] M. Mahalingam and R. Brunner. I/O Virtualization (IOV) For Dummies. In VMWorld, 2007.<br />
[4] L. van Doorn. Hardware virtualization trends. In Proceedings of the 2nd International Conference on Virtual Execution Environments, June 2006.<br />
[5] A. Menon, A. L. Cox, and W. Zwaenepoel. Optimizing network virtualization in Xen. In Proceedings of the USENIX Annual Technical Conference, June 2006.<br />
[6] G. Heiser. The role of virtualization in embedded systems. In Proceedings of the 1st Workshop on Isolation and Integration in Embedded Systems, April 2008.<br />
[7] H. Inoue, A. Ikeno, M. Kondo, J. Sakai, and M. Edahiro. VIRTUS: A new processor virtualization architecture for security-oriented next-generation mobile terminals. In Proceedings of the 43rd Annual Conference on Design Automation, 2006.<br />
[8] H. Raj and K. Schwan. Implementing a scalable self-virtualizing network interface on a multicore platform. In Workshop on the Interaction between Operating Systems and Computer Architecture, October 2005.<br />
[9] K. K. Ram, J. R. Santos, Y. Turner, A. L. Cox, and S. Rixner. Achieving 10 Gb/s using safe and transparent network interface virtualization. In Proceedings of the 2009 ACM SIGPLAN/SIGOPS International Conference on Virtual Execution Environments.<br />
[10] P. Willmann, J. Shafer, D. Carr, A. Menon, S. Rixner, A. L. Cox, and W. Zwaenepoel. Concurrent direct network access for virtual machine monitors. In Proceedings of the International Symposium on High-Performance Computer Architecture, February 2007.<br />
[11] J. Wang. Survey of State-of-the-art in Inter-VM Communication Mechanisms. Research Proficiency Report, September 2009.<br />
[12] J. R. Santos, Y. Turner, and J. Mudigonda. Taming Heterogeneous NIC Capabilities for I/O Virtualization. In Proceedings of Workshop on I/O Virtualization, 2008.<br />
[13] S. Chinni and R. Hiremane. Virtual Machine Device Queues. Whitepaper, Intel, 2007.<br />
[14] T. Grötker, S. Liao, G. Martin, and S. Swan. System Design with SystemC. Kluwer Academic Publishers, 2002.<br />