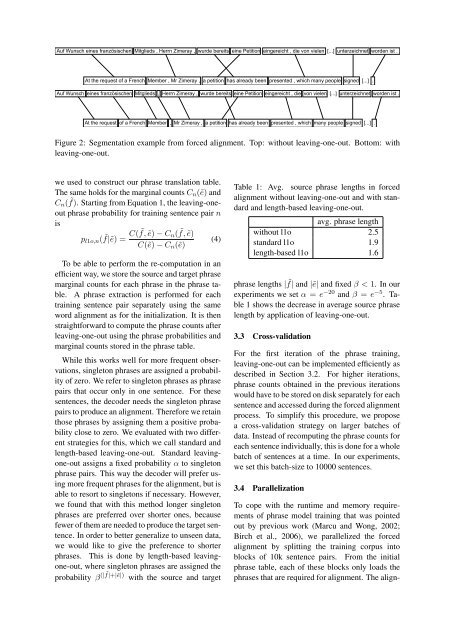

changed. In this section we describe our forced alignment procedure, which is the basic training procedure for the models proposed here.

3.1 Forced Alignment

The idea of forced alignment is to perform a phrase segmentation and alignment of each sentence pair of the training data using the full translation system as in decoding. What we call segmentation and alignment here corresponds to the “concepts” used by Marcu and Wong (2002). We apply our normal phrase-based decoder on the source side of the training data and constrain the translations to the corresponding target sentences from the training data.

Given a source sentence $f_1^J$ and target sentence $e_1^I$, we search for the best phrase segmentation and alignment that covers both sentences. A segmentation of a sentence into $K$ phrases is defined by

$$k \rightarrow s_k := (i_k, b_k, j_k), \quad \text{for } k = 1, \ldots, K$$

where for each segment $i_k$ is the last position of the $k$th target phrase, and $(b_k, j_k)$ are the start and end positions of the source phrase aligned to the $k$th target phrase. Consequently, we can modify Equation 2 to define the best segmentation of a sentence pair as:

$$\hat{s}_1^{\hat{K}} = \operatorname*{argmax}_{K,\, s_1^K} \left\{ \sum_{m=1}^{M} \lambda_m h_m(e_1^I, s_1^K, f_1^J) \right\} \qquad (3)$$

The same models as in search are used: conditional phrase probabilities $p(\tilde{f}_k \mid \tilde{e}_k)$ and $p(\tilde{e}_k \mid \tilde{f}_k)$, within-phrase lexical probabilities, a distance-based reordering model, as well as word and phrase penalty. A language model is not used in this case, as the system is constrained to the given target sentence and thus the language model score has no effect on the alignment.

In addition to the phrase matching on the source sentence, we also discard all phrase translation candidates that do not match any sequence in the given target sentence.

Sentences for which the decoder cannot find an alignment are discarded for the phrase model training.
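
The constrained search described above can be illustrated with a small sketch. It is not the decoder used in the paper: it assumes a toy phrase table mapping (source phrase, target phrase) pairs to a single log-score, which stands in for the weighted sum of feature functions in Equation 3, and it searches exhaustively instead of using beam search. The names `forced_align`, `phrase_table` and `max_len` are hypothetical. The function returns the best segmentation as a list of $(i_k, b_k, j_k)$ triples with 1-based positions, or `None` when no alignment exists, in which case the sentence pair would be discarded.

```python
from functools import lru_cache


def forced_align(src, tgt, phrase_table, max_len=4):
    """Toy forced alignment: cover src and tgt completely with known phrase pairs.

    phrase_table maps (source_phrase_tuple, target_phrase_tuple) -> log-score
    (an assumed stand-in for the log-linear model score).
    Returns (score, [(i_k, b_k, j_k), ...]) with 1-based positions, or None.
    """
    src, tgt = tuple(src), tuple(tgt)
    J, I = len(src), len(tgt)

    @lru_cache(maxsize=None)
    def search(i, covered):
        # i: number of target words produced so far (target is built left to right)
        # covered: frozenset of 0-based source positions already used
        if i == I:
            return (0.0, ()) if len(covered) == J else None
        best = None
        for i_end in range(i + 1, min(I, i + max_len) + 1):
            e_phrase = tgt[i:i_end]  # candidate must match the given target
            for b in range(J):
                for j in range(b + 1, min(J, b + max_len) + 1):
                    span = frozenset(range(b, j))
                    if span & covered:
                        continue  # source words may be used only once
                    score = phrase_table.get((src[b:j], e_phrase))
                    if score is None:
                        continue  # phrase pair not in the table
                    rest = search(i_end, covered | span)
                    if rest is None:
                        continue
                    total = score + rest[0]
                    if best is None or total > best[0]:
                        best = (total, ((i_end, b + 1, j),) + rest[1])
        return best

    result = search(0, frozenset())
    return None if result is None else (result[0], list(result[1]))


# Hypothetical toy data, for illustration only.
table = {
    (("das",), ("this",)): -0.1,
    (("ist",), ("is",)): -0.1,
    (("das", "ist"), ("this", "is")): -0.5,
    (("ein", "haus"), ("a", "house")): -0.2,
}
score, segmentation = forced_align(
    "das ist ein haus".split(), "this is a house".split(), table
)
print(segmentation)  # [(1, 1, 1), (2, 2, 2), (4, 3, 4)]
```

Exhaustive search is only feasible for very short sentences; it is used here to keep the example compact and to make the role of the two constraints (full coverage of both sentences, target phrases restricted to the reference) easy to see.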
In our experiments, the decoder fails to find an alignment for roughly 5% of the training sentences.

3.2 Leaving-one-out

As was mentioned in Section 2, previous approaches found over-fitting to be a problem in phrase model training. In this section, we describe a leaving-one-out method that can improve the phrase alignment in situations where the probability of rare phrases and alignments might be overestimated. The training data, which consists of $N$ parallel sentence pairs $f_n$ and $e_n$ for $n = 1, \ldots, N$, is used for both the initialization of the translation model $p(\tilde{f} \mid \tilde{e})$ and the phrase model training. While this way we can make full use of the available data and avoid unknown words during training, it has the drawback that it can lead to over-fitting. All phrases extracted from a specific sentence pair $f_n, e_n$ can be used for the alignment of this sentence pair. This includes longer phrases, which only match in very few sentences in the data. Therefore those long phrases are trained to fit only a few sentence pairs, strongly overestimating their translation probabilities and failing to generalize. In the extreme case, whole sentences will be learned as phrasal translations. The average length of the used phrases is an indicator of this kind of over-fitting, as the number of matching training sentences decreases with increasing phrase length. We can see an example in Figure 2. Without leaving-one-out the sentence is segmented into a few long phrases, which are unlikely to occur in data to be translated. Phrase boundaries seem to be unintuitive and based on some hidden structures. With leaving-one-out the phrases are shorter and therefore better suited for generalization to unseen data.

Previous attempts have dealt with the over-fitting problem by limiting the maximum phrase length (DeNero et al., 2006; Marcu and Wong, 2002) and by smoothing the phrase probabilities by lexical models on the phrase level (Ferrer and Juan, 2009).
However, DeNero et al. (2006) experienced similar over-fitting with short phrases due to the fact that the same word sequence can be segmented in different ways, leading to specific segmentations being learned for specific training sentence pairs. Our results confirm these findings. To deal with this problem, instead of simple phrase length restriction, we propose to apply the leaving-one-out method, which is also used for language modeling techniques (Kneser and Ney, 1995).

When using leaving-one-out, we modify the phrase translation probabilities for each sentence pair. For a training example $f_n, e_n$, we have to remove all phrases $C_n(\tilde{f}, \tilde{e})$ that were extracted from this sentence pair from the phrase counts that