YSM Issue 88.4

Issue 88.4 of the Yale Scientific Magazine

Issue 88.4 of the Yale Scientific Magazine

- No tags were found...

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

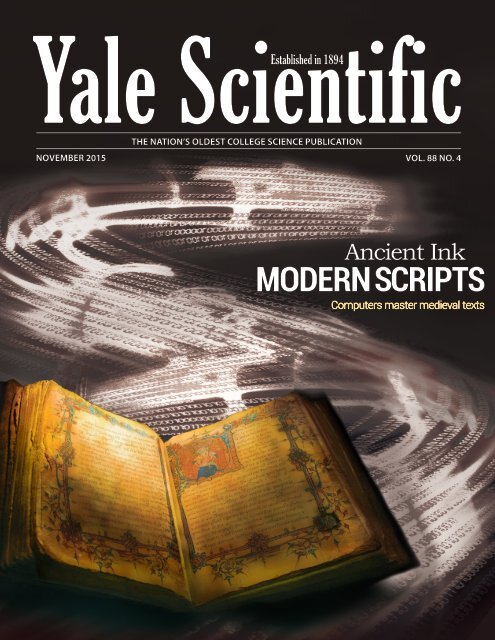

Yale Scientific

Established in 1894

THE NATION’S OLDEST COLLEGE SCIENCE PUBLICATION

NOVEMBER 2015 VOL. 88 NO. 4

Ancient Ink, MODERN SCRIPTS

Computers master medieval texts


NEWS

5 Letter From the Editor

6 Banned Drug Repurposed for Diabetes

6 ArcLight Illuminates Neurons

7 New Organ Preservation Unit

7 Surprising Soft Spot in the Lithosphere

8 An Unexpected Defense Against Cancer

9 New Device for the Visually Impaired

10 Energy Lessons from Hunter-Gatherers

12 Mosquitoes Resistant to Malaria

FEATURES

25 Environment: Climate Change Spikes Volcanic Activity

26 Cell Biology: How Radioactive Elements Enter a Cell

CONTENTS

13 Black Hole with a Growth Problem

A supermassive black hole challenges the foundations of astrophysics, forcing astronomers to update the rule book of galaxy formation.

IMAGE COURTESY OF MEG URRY

16 Tiny Proteins with Big Functions

Contrary to common scientific belief, proteins need not be large to have powerful biological functions.

18 East Meets West in Cancer Treatment

A Yale professor brings an ancient remedy to the forefront, showing that traditional herbs can combat cancer.

20 Nature’s Blueprint

Scientists learn lessons from nature’s greenery, modeling the next generation of solar technology on plant cells.

ART BY CHANTHIA MA

22 ON THE COVER: Ancient Ink, Modern Scripts

A new algorithm allows computer scientists to unlock the secrets of medieval manuscripts. From pen to pixel, researchers are using science to better understand historical texts.

24

Ancient Ink, Modern Scripts

Through months of a squirrel’s cold slumber, neurons generate their own heat to keep functioning. Our cover story explains this feat of the nervous system and explores what it might mean for humans.

ART BY CHRISTINA ZHANG

28 Robotics: Robots with Electronic Skin

30 Computer Science: Predicting Psychosis

31 Debunking Science: San Andreas

32 Technology: The Future of Electronics

34 Engineering: Who Lives on a Dry Surface Under the Sea?

35 Science or Science Fiction? Telepathy and Mind Control

36 Grey Meyer MC ’16

37 Michele Swanson YC ’82

38 Q&As: Do You Eat with Your Ears? How Do Organisms Glow in the Dark?

SCIENCE IN THE SPOTLIGHT

Book Reviews

HOW TO CLONE A MAMMOTH Captivates Scientists and Non-scientists Alike

BY ALEC RODRIGUEZ

Science fiction novels, TV shows, and movies have time and time again toyed with the cloning of ancient animals. But just how close are we to bringing these species, and our childhood fantasies, back to life?

While animals were first cloned about 20 years ago, modern technology has only recently made repopulating some areas of the world with extinct species seem feasible. In her book How to Clone a Mammoth, evolutionary biologist Beth Shapiro attempts to separate fact from fiction on the future of these creatures. Her research includes work with ancient DNA, which holds the key to recreating lost species. The book, a sort of how-to guide to cloning these animals, takes readers step by step through the process of de-extinction. It is written to engage scientific and non-scientific audiences alike, complete with fascinating stories and clear explanations.

To Shapiro, de-extinction is marked not only by the birth of a cloned or genetically modified animal but also by the animal’s successful integration into a suitable habitat. She envisions that researchers could clone an extinct animal by inserting its genes into the genome of a related species. Along these lines, Shapiro provides thought-provoking insights and anecdotes about genetically engineering mammoth characteristics into Asian elephants. She argues that the genetic engineering and reintroduction of such hybrid animals into suitable habitats constitute effective “clonings” of extinct species.

While some sections of the book are a bit heavy on anecdotes, most are engaging, amusing, and relevant enough to the overall chapter themes to keep the book going. Shapiro includes personal tales ranging from asking her students which species they would de-extinct to her struggle to extract DNA from amber. The discussion of each core topic feels sufficient, with a wealth of examples. Shapiro tosses in some comments on current ecological issues here and there, and for good measure, she busts myths like the idea that species can be cloned from DNA “preserved” in amber. Sorry, Jurassic Park.

The book is a quick, easy read (only about 200 pages) that would interest any biology-inclined reader and remain accessible even to the biology neophyte. Shapiro summarizes technological processes simply, with graphics for visual learners. Most of all, Shapiro’s book leaves the reader optimistic about the future of Pleistocene Park, a habitat suitable for the reintroduction of mammoths. Our childhood fantasies, when backed with genetic engineering, could be just around the corner.

IMAGE COURTESY OF PRINCETON UNIV. PRESS

A DISGRACE TO THE PROFESSION Attacks the Man Instead of the Science

BY AMY HO

Mark Steyn’s recent A Disgrace to the Profession attacks Michael E. Mann’s hockey stick graph of global warming, a reconstruction of Earth’s temperatures over the past millennium that depicts a sharp uptick over the past 150 years. It is less a book than a collection of quotes from respected and accredited researchers, all disparaging Mann as a scientist and, often, as a person.

Steyn’s main argument is that Mann did a great disservice to science when he used flawed data to create a graph that “proved” his argument about Earth’s rising temperatures. Steyn does not deny climate change, nor does he deny its anthropogenic causes. His issue, as he puts it, is with the shaft of the hockey stick, not the blade. His outrage lies not only in the use of poor data but also in Mann’s deletion of data, which ignores major historical climate shifts such as the Little Ice Age and the Medieval Warm Period.

IMAGE COURTESY OF AMAZON

To Steyn, the Intergovernmental Panel on Climate Change (IPCC) and all those who supported the hockey stick graph also did a disservice to science by politicizing climate change to the extent that it gives validity to deniers. However, Steyn may be giving these doubters yet more ammunition, because he has done nothing to de-politicize the issue. Steyn claims that Mann has drawn his battle lines wrong, but so has Steyn, by attacking Mann instead of focusing on the false science.

Steyn’s writing style is broadly appealing, but his humor underestimates his audience. His colloquial tone could be seen as a satirical take on what Steyn refers to as Mann’s “cartoon climatology,” but it eventually subverts his argument by driving the same points over and over while never fully delving into scientific details. Although Steyn champions a nuanced view of climate science, his own nuance goes only so far as to tell his readers that they should be less certain, because meteorology and climate science are uncertain.

“The only constant about climate is change,” Steyn points out, advocating that we better understand climate and adapt to changes as they come. It is an important point that deserves more attention than it gets in the book. A Disgrace to the Profession is an entertaining read that sounds like a blogger’s rant. Steyn makes few especially compelling points, and then insists on hammering them in.

FROM THE EDITOR

Here at the Yale Scientific Magazine, we write about science because it inspires us. Some of the biggest responsibilities in science fall to our smallest molecules. Minuscule proteins called ubiquitin ligases are tasked with identifying and attacking deviant cancer cells (pg. 11). Such power can be dangerous: the simplest proteins known to exist are capable of spinning cell growth out of control to cause tumors (pg. 16). Dangerous, yes, but still impressive.

The researchers we interview are inspiring, too, both in their creative approaches to answering questions and in their dedication to making a real-world impact. Want to know how human metabolism has changed with the modernization of society? Find people who continue to live as hunter-gatherers for comparison (pg. 10). Intrigued by the level of detail in medieval manuscripts? In our cover story, scientists take on the vast medieval corpus with an innovative and efficient computer algorithm (pg. 22). Others are extending the reach of their research far beyond laboratory walls. A project for a Yale engineering class grew into a new device that better preserves human organs for transplant, and then into the company Revai (pg. 7). A collaboration between a mechanical engineer in New Haven and a theater company in London has culminated in exciting technology that allows the visually impaired to experience their surroundings (pg. 9).

For this issue, we also asked: What inspires these scientists? Their research questions can stem from a single curiosity in the realm of biology, chemistry, or physics. Often, they are motivated to improve some aspect of the world, whether human health or the environment. Scientists design solutions to achieve these improvements. For ideas, they turn to history: an ancient Chinese herbal remedy has resurfaced as a powerful 21st-century drug (pg. 18). Or they look to nature: solar panels might be more effective if they were modeled after plant cells. After all, the basic operation of both solar cells and plant cells is to convert sunlight into usable energy (pg. 20). Even everyday electronics can be inspired by nature, particularly by the inherent ability of certain materials to self-assemble (pg. 32).

Between these covers, we’ve written about a diversity of topics in science, bringing you stories from the lab, from the field, and from the far corners of the universe. Whether you’re fascinated by the cosmos, natural disasters, or advanced robots, we hope you’ll find inspiration in this issue of the Yale Scientific.

Payal Marathe, Editor-in-Chief

ABOUT THE ART

The cover of this issue, designed by arts editor Christina Zhang, features the algorithm that identifies the number of colors on digitized medieval manuscripts. The art depicts the process of categorizing pixels using a binary code. Developed by Yale computer science and graphics professor Holly Rushmeier, this technology could help researchers decipher medieval texts.

Editor-in-Chief

Managing Editors

News Editor

Features Editor

Articles Editor

Online Editors

Copy Editors

Special Editions Editor

Production Managers

Layout Editors

Arts Editor

Photography Editors

Webmaster

Publisher

Operations Manager

Advertising Manager

Subscriptions Manager

Alumni Outreach Coordinator

Synapse Director

Coordinator of Contest Outreach

Science on Saturdays Coordinator

Volunteer Coordinator

Payal Marathe

Adam Pissaris

Nicole Tsai

Christina de Fontnouvelle

Theresa Steinmeyer

Kevin Wang

Grace Cao

Jacob Marks

Zachary Gardner

Genevieve Sertic

Julia Rothchild

Allison Cheung

Jenna DiRito

Aviva Abusch

Sofia Braunstein

Amanda Mei

Suryabrata Dutta

Christina Zhang

Katherine Lin

Stephen Le Breton

Peter Wang

Jason Young

Lionel Jin

Sonia Wang

Amanda Buckingham

Patrick Demkowicz

Kevin Hwang

Ruiyi Gao

Sarah Ludwin-Peery

Milana Bochkur Dratver

Staff

Aydin Akyol, Alex Allen, Caroline Ayinon, Kevin Biju, Rosario Castañeda, Jonathan Galka, Ellie Handler, Emma Healy, Amy Ho, Newlyn Joseph, Hannah Kazis-Taylor, Danya Levy, Chanthia Ma, Cheryl Mai, Raul Monraz, Ashlyn Oakes, Archie Rajagopalan, Alec Rodriguez, Jessica Schmerler, Zach Smithline, Aaron Tannenbaum, Kendrick Umstattd, Anson Wang, Julia Wei, Isabel Wolfe, Suzanne Xu, Holly Zhou

Advisory Board

Kurt Zilm, Chair, Chemistry

Priyamvada Natarajan, Astronomy

Fred Volkmar, Child Study Center

Stanley Eisenstat, Computer Science

James Duncan, Diagnostic Radiology

Stephen Stearns, Ecology & Evolutionary Biology

Jakub Szefer, Electrical Engineering

Werner Wolf, Emeritus

John Wettlaufer, Geology & Geophysics

William Summers, History of Science, Medicine & Public Health

Scott Strobel, Molecular Biophysics & Biochemistry

Robert Bazell, Molecular, Cellular & Developmental Biology

Ayaska Fernando, Undergraduate Admissions

Ivan Galea, Yale Science & Engineering Association

The Yale Scientific Magazine (YSM) is published four times a year by Yale Scientific Publications, Inc. Third class postage paid in New Haven, CT 06520. Non-profit postage permit number 01106 paid for May 19, 1927 under the act of August 1912. ISN:0091-287. We reserve the right to edit any submissions, solicited or unsolicited, for publication. This magazine is published by Yale College students, and Yale University is not responsible for its contents. Perspectives expressed by authors do not necessarily reflect the opinions of YSM. We retain the right to reprint contributions, both text and graphics, in future issues as well as a non-exclusive right to reproduce these in electronic form. The YSM welcomes comments and feedback. Letters to the editor should be under 200 words and should include the author’s name and contact information. We reserve the right to edit letters before publication. Please send questions and comments to ysm@yale.edu.

NEWS

in brief

Banned Drug Repurposed for Diabetes

By Cheryl Mai

PHOTO BY CHERYL MAI

Rachel Perry, lead author of this study, is a postdoctoral fellow in the Shulman lab.

The molecule behind a weight-loss pill banned in 1938 is making a comeback. Professor Gerald Shulman and his research team have made strides to reintroduce 2,4-dinitrophenol (DNP), once a toxic weight-loss molecule, as a potential new treatment for type 2 diabetes.

Patients with type 2 diabetes are insulin resistant, which means they continue to produce insulin naturally, but their cells cannot respond to it. Previous research by the Shulman group revealed that fat accumulation in liver cells can induce insulin resistance, nonalcoholic fatty liver disease, and ultimately diabetes. Shulman’s team identified DNP, which reduces liver fat content, as a possible fix.

DNP was banned because it caused deadly spikes in body temperature through mitochondrial uncoupling, in which the energy in glucose, usually harnessed to produce ATP, is released as heat instead. Shulman’s recent study offers a new solution to this old problem: CRMP, a controlled-release system that limits the backlash of DNP on the body.

CRMP is an orally administered bead of DNP coated with polymers that promote the slow release of DNP. When the pace of DNP release is well regulated, overheating is much less likely to occur. Thus, patients could benefit from the active ingredient in the drug without suffering potentially fatal side effects.

So far, findings have been promising: no toxic effects have been observed in rats given doses up to 100 times greater than the lethal dose of DNP.

“When giving CRMP, you can’t even pick up a change in temperature,” Shulman said.

Results also include a 65 percent decrease in liver fat content in rats and a reversal of insulin resistance. These factors could be the key to treating diabetes.

“Given that a third of Americans are projected to be diabetic by 2050, we are greatly in need of agents such as this to reverse diabetes and its downstream sequelae,” said Rachel Perry, lead author of the study.

ArcLight Illuminates Neuronal Networks

By Archie Rajagopalan

IMAGE COURTESY OF PIXABAY

With ArcLight, real-time imaging of neuronal networks could lead to a major breakthrough in understanding the brain’s many components.

Scientists have engineered a protein that more accurately monitors neuron firing. The protein, called ArcLight, is a fluorescent sensor that measures voltage changes in real time, offering new insight into how nerve cells operate and communicate.

Neuron firing is accompanied by a rapid influx of calcium ions from outside the neuron’s membrane. Proteins that light up in the presence of increased calcium levels can therefore track the completion of an action potential. For this reason, calcium sensors are commonly used as a proxy for action potential measurements. However, because calcium changes occur more slowly than voltage changes, calcium sensors do not provide precise measurements of neuron signaling.

In a recent study by Yale postdoctoral fellow Douglas Storace, ArcLight proved to be a better candidate for this job. In the experiment, either ArcLight or a traditional calcium-based probe was injected into the olfactory bulb of a mouse. Using an epifluorescence microscope, Storace then observed changes in fluorescence triggered by the mouse sniffing an odorant. Because ArcLight reports rapid changes in the electrical activity of neurons, Storace and his colleagues obtained more direct measurements of neuron firing with ArcLight than with ordinary calcium sensors.

In addition to monitoring voltage changes directly, ArcLight is genetically encoded and can be targeted to specific populations of cells. This allows scientists to monitor the electrical activity of different cell types and may reveal more about how different neuronal pathways interact.

“A more accurate way of monitoring the voltage in neurons gives us a lot more information about their activity,” Storace said. “Potentially, this discovery will give us enough information about neurons to lead to a major breakthrough.”


New Startup Develops Organ Preservation Unit

By Newlyn Joseph

An organ transplant comes with a slew of complications. Perhaps the most commonly overlooked problem is the preservation of donor tissue prior to transplant. Current means of storing intestines before transplantation involve a simple container filled with ice. Until now, there has been little progress in developing more effective, efficient preservation strategies.

The nascent company Revai, the result of a collaboration among the Yale Schools of Engineering, Medicine, and Management, addresses the challenge of preserving intestines for transplant. Company leaders John Geibel, Joseph Zinter, and Jesse Rich have developed the Intestinal Preservation Unit, a device that perfuses the intestine’s lumen and blood supply simultaneously and independently, at a rate determined by the surgeon. This “smart” device collects real-time data on temperature, perfusion time, and pump flow rates, allowing doctors to monitor all vital storage parameters. The technology has the potential to extend how long intestines remain viable between the organ donor and the transplant recipient.

“It’s the first time we have something new for this particular organ,” Geibel said.

Revai has demonstrated that the preservation unit decreases the rate of necrosis, or massive cell death, in pig intestinal tissue. This exciting result held up when the unit was tested on human samples through partnerships with New England organ banks.

“We’re the only team currently presenting peer-reviewed data on testing with human tissue,” said CEO Jesse Rich, proud that Revai is a frontrunner in this area of exploration.

Students in a Yale class called Medical Device Design and Innovation built the first functional prototype of the Intestinal Preservation Unit for testing. The device went on to win the 2014 BMEStart competition sponsored by the National Collegiate Inventors and Innovators Alliance. Revai plans to continue product development and testing for the unit and will seek FDA approval to commercialize the device.

PHOTO BY HOLLY ZHOU

Joseph Zinter and Jesse Rich look at a model of their Intestinal Preservation Unit.

Geologists Find Surprising Softness in Lithosphere

By Danya Levy

As a student 40 years ago, Shun-ichiro Karato learned of the physical principles governing grain boundaries in rocks, the defects that occur within mineral structures. Now, as a Yale professor, he has applied these same concepts to a baffling geophysical puzzle, developing a new model to explain an unexpected decrease in the stiffness of the lithosphere.

Earth’s outer layers of rock include the hard lithosphere, which scientists previously assumed to be stiff, and the softer asthenosphere. Seismological measurements performed across North America over the past several years have yielded a surprising result.

“You should expect that the velocities [of seismological waves] would be high in the lithosphere and low in the asthenosphere,” Karato said. Instead, a drop in velocity was observed in the middle of the lithosphere, indicating softness. With the help of colleagues Tolulope Olugboji and Jeffrey Park, Karato came up with a new explanation for these findings.

Recalling from his studies that grain boundaries can slide to cause elastic deformation, Karato made observations at a microscopic level and showed that mineral weakening occurs at lower temperatures than previously thought.

Even if mineral grains themselves are strong, the grain boundaries can weaken at temperatures slightly below the melting point. As a result, seismic wave observations show increased softness even while the rock retains large-scale strength.

Karato noted that there is still work to be done in this area, but his research is a significant step forward in understanding Earth’s complex layers.

“This is what I love,” he said. “Looking at the beauty of the earth and then introducing some physics [sometimes] solves enigmatic problems.”

PHOTO BY DANYA LEVY

Professor Karato, who works in the Kline Geology Laboratory building, makes use of some of the most advanced high-pressure equipment.


medicine

AN UNEXPECTED DEFENSE

Lupus-causing agent shows potential for cancer therapy

BY ANSON WANG

Some of the world’s deadliest diseases occur when the body begins to betray itself. In cancer, mutated cells proliferate and overrun normal ones. Lupus, an autoimmune disease, occurs when the body’s immune system begins to attack its own cells. But what if the mechanisms of one disease could be used to counteract another?

This thought inspired recent work by James Hansen, a Yale professor of therapeutic radiology. Hansen transformed lupus autoantibodies, immune system proteins that target the body’s own proteins to cause lupus, into selective vehicles for drug delivery and cancer therapy.

His focus was 3E10, an autoantibody associated with lupus. Hansen and his team knew 3E10 could penetrate a cell’s nucleus, inhibiting DNA repair and sparking symptoms of disease. What remained a mystery was the exact mechanism by which 3E10 accomplishes nuclear penetration, and why the autoantibody is apparently selective for tumor cells. Unlocking these scientific secrets opened up new possibilities for counteracting disease, namely, by protecting against cancer.

Hansen’s team found that 3E10’s ability to penetrate efficiently into a cell nucleus depends on the presence of DNA outside the cell. When solutions lacking DNA were added to cells incubated with 3E10, no nuclear penetration occurred. With the addition of purified DNA to the cell solution, nuclear penetration by 3E10 was induced immediately. In fact, the addition of solutions that included DNA increased nuclear penetration by 100 percent. The researchers went on to show that the actions of 3E10 also rely on ENT2, a nucleoside transporter. Once bound to DNA outside of a cell, the autoantibody can be transported into the nucleus of any cell via the ENT2 nucleoside transporter.

“Now that we understand how [3E10] penetrates into the nucleus of live cells in a DNA dependent manner, we believe we have an explanation for the specific targeting of the antibody to damaged or malignant tissues where DNA is released by dying cells,” Hansen said.

Because dying cells release more extracellular DNA in the vicinity of a tumor, antibody penetration occurs at a higher rate in cancerous tissue. This insight holds tremendous meaning for cancer therapies. If a lupus autoantibody were coupled with an anticancer drug, scientists would have a way of targeting that drug to tissue in need. In this way, what causes one disease could be harnessed to treat another.

The primary biological challenge for cancer therapy is to selectively target cancer cells while leaving healthy ones alone. The 3E10 autoantibody is a promising solution because it offers this specificity: a direct path to tumor cells that bypasses normally functioning cells. The molecule could carry therapeutic cargo, delivering anticancer drugs to unhealthy cells in live tissue.

The Yale researchers were pleased with their next step as well: they showed that these engineered molecules were in fact tumor-specific. After staining, tissue taken from mice injected with fluorescently tagged autoantibodies showed the antibody in tumor cells but not in normal ones.

Now, Hansen and his colleagues are looking into using 3E10 and their engineered molecules to kill cancer cells. Since some cancer cells are already sensitive to DNA damage, inhibition of DNA repair by 3E10 alone may be enough to kill them. Normal cells with intact DNA repair mechanisms would likely resist these effects, making 3E10 nontoxic to normal tissue. The researchers are working to optimize the binding affinity of 3E10 so that it can penetrate cells more efficiently and exert a greater influence on DNA repair. The goal is to conduct a clinical trial within the next few years.

In the search for more effective drugs against cancer, answers can emerge from the most extraordinary places. “Our discovery that a lupus autoantibody can potentially be used as a weapon against cancer was completely unexpected. 3E10 and other lupus antibodies continue to surprise and impress us, and we are very optimistic about the future of this technology,” Hansen said.

The recent study was published in the journal Scientific Reports.

IMAGE COURTESY OF JAMES HANSEN

James Hansen (left) pictured with postdoctoral research associate Philip Noble.


technology<br />

NEWS<br />

NEW DEVICE FOR THE VISUALLY IMPAIRED<br />

Collaboration yields innovative navigation technology<br />

BY AARON TANNENBAUM<br />

Despite its small size and simple appearance, the Animotus<br />

is simultaneously a feat of engineering, a work of art, and a<br />

potentially transformative community service project.<br />

Adam Spiers, a postdoctoral researcher in Yale University’s<br />

department of mechanical engineering, has developed a<br />

groundbreaking navigational device for both visually impaired<br />

and sighted pedestrians. Dubbed Animotus, the device can<br />

wirelessly locate indoor targets and change shape to point<br />

its user toward them. Earlier devices for visually impaired<br />

navigation communicated largely through vibrations and sound.<br />

Spiers’ device instead communicates with its users by way<br />

of gradual rotations and extensions of its body.<br />

This subtle form of feedback allows users to remain focused<br />

on their surroundings.<br />

Spiers created Animotus in collaboration with Extant, a<br />

British theater company of visually impaired artists that<br />

specializes in inclusive performances. The device has already<br />

proven successful in Extant’s interactive production of the novel<br />

“Flatland,” and with further research and development, the<br />

Animotus may be able to transcend the realm of theater and<br />

dramatically change the way the visually impaired<br />

experience the world.<br />

Haptic technology, systems that make use of our sense of<br />

touch, is most widely recognized in the vibrations of cell phones.<br />

The potential applications of haptics, however, are far more<br />

complex and important than mere notifications. Spiers was<br />

drawn to haptics for its implications for medical and<br />

assistive technology. In 2010, he first collaborated with Extant to<br />

apply his research in haptics to theater production.<br />

IMAGE COURTESY OF ADAM SPIERS<br />

A triangle imprinted on top of the Animotus ensures that<br />

the user is holding it in the proper direction.<br />

To facilitate a production of “The Question,” an immersive<br />

theater experience set in total darkness, Spiers created a<br />

device called the Haptic Lotus, which grew and shrank in the<br />

user’s hands to signal that an intended destination was near.<br />

The device worked well, but it could only alert users<br />

when they were nearing their targets, instead of actively<br />

directing them to specific sites. This limited the<br />

complexity of the show.<br />

Thanks to Spiers’ newly designed Animotus, Extant’s 2015<br />

production of “Flatland” was far more complex. Spiers and<br />

the production team at Extant sent four audience members at<br />

a time into the pitch-black interactive set, which was built in<br />

an old church in London. Each of the four theatergoers was<br />

equipped with an Animotus device to guide her through the set<br />

and a pair of bone-conduction headphones to narrate the plot.<br />

The Animotus successfully guided each audience member on a<br />

unique route through the production with remarkable accuracy.<br />

More impressively, Spiers reported that the average<br />

walking speed was 1.125 meters per second, which is only 0.275<br />

meters per second slower than typical human walking speed.<br />

Furthermore, walking efficiency between areas of the set was<br />

47.5 percent, which indicates that users were generally able to<br />

reach their destinations without excessive detours.<br />
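The two reported figures support a quick back-of-the-envelope check. The sketch below is illustrative only: it derives the typical walking speed implied by the article’s numbers (1.125 + 0.275 = 1.4 meters per second) and assumes, because the article does not define the term, that walking efficiency means the straight-line distance between two points divided by the distance actually walked. The function names are hypothetical.<br />

```python
"""Back-of-the-envelope checks on the Animotus walking figures.

Assumption (not from the study): "walking efficiency" is taken to be the
straight-line distance divided by the distance actually walked.
"""

AVG_SPEED_M_S = 1.125   # average walking speed reported for "Flatland"
SPEED_GAP_M_S = 0.275   # reported shortfall versus typical walking speed
EFFICIENCY_PCT = 47.5   # reported walking efficiency between areas of the set


def typical_speed(avg: float, gap: float) -> float:
    """Typical walking speed implied by the average speed plus the reported gap."""
    return avg + gap


def fraction_of_typical(avg: float, gap: float) -> float:
    """Share of typical walking speed that Animotus users achieved."""
    return avg / typical_speed(avg, gap)


def path_efficiency(straight_line_m: float, walked_m: float) -> float:
    """Assumed definition: straight-line distance / walked distance, in percent."""
    if walked_m <= 0:
        raise ValueError("walked distance must be positive")
    return 100.0 * straight_line_m / walked_m


def detour_factor(efficiency_pct: float) -> float:
    """Meters walked per meter of straight-line distance (assumed definition)."""
    if efficiency_pct <= 0:
        raise ValueError("efficiency must be positive")
    return 100.0 / efficiency_pct


if __name__ == "__main__":
    print(f"typical speed: {typical_speed(AVG_SPEED_M_S, SPEED_GAP_M_S):.2f} m/s")
    print(f"share of typical speed: {fraction_of_typical(AVG_SPEED_M_S, SPEED_GAP_M_S):.0%}")
    print(f"meters walked per straight-line meter: {detour_factor(EFFICIENCY_PCT):.2f}")
```

Run as written, the script reports a typical speed of 1.40 m/s and shows that users moved at about 80 percent of it. Under the assumed definition of efficiency, 47.5 percent would mean walking about 2.1 meters for every meter of straight-line distance; a different definition in the original study would change that last figure.<br />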

The success of Animotus with untrained users in “Flatland” left<br />

Spiers optimistic about future developments and applications for<br />

his device. If connected to GPS rather than indoor navigational<br />

targets, perhaps the device will be able to guide users outdoors<br />

wherever they choose to go. Of course, this introduces a host of<br />

safety hazards that did not exist in the controlled atmosphere of<br />

“Flatland,” but Spiers believes that with some training, visually<br />

impaired users may one day be able to confidently navigate<br />

outdoor streets with the help of an Animotus.<br />

Spiers is particularly encouraged by emails he has received<br />

from members of the visually impaired community, thanking<br />

him for his research on this subject and urging him to continue<br />

work on this project. “It’s very rewarding to know that you’re<br />

giving back to society, and that people care about what you’re<br />

doing,” Spiers said.<br />

Though the majority of Spiers’ work has been in the realm<br />

of assistive technologies for the visually impaired, he has also<br />

worked to develop surgical robots to allow doctors to remotely<br />

feel tissues and organs without actually touching them.<br />

Spiers cautions students against focusing exclusively on one area<br />

of study, noting that he would not have accomplished what he has with<br />

the Animotus without an awareness of what was going on in a<br />

variety of fields. Luckily, for budding professionals in all fields,<br />

opportunities for collaborations akin to Spiers’ with Extant have<br />

never been more abundant.<br />


NEWS<br />

health<br />

LESSONS FROM THE HADZA<br />

Modern hunter-gatherers reveal energy use strategies<br />

BY JONATHAN GALKA<br />

The World Health Organization attributes obesity in<br />

developed countries to a decrease in exercise and energy<br />

expenditure compared to our hunter-gatherer ancestors, who<br />

led active lifestyles. In recent research, Yale professor Brian<br />

Wood examined total energy expenditure and metabolism in<br />

the Hadza population of northern Tanzania — a society of<br />

modern hunter-gatherers.<br />

The Hadza people continue traditional tactics of hunting<br />

and gathering. Every day, they walk long distances to<br />

forage, collect water and wood, and visit neighboring<br />

groups. Individuals remain active well into middle age. Few<br />

populations today continue to live an authentic hunter-gatherer<br />

lifestyle. This made the Hadza the perfect group<br />

for Wood and his team to research total energy expenditure,<br />

or the number of calories the body burns per day. Standard<br />

estimates of this figure are adjusted according to whether a<br />

person leads a sedentary, moderately active, or strenuous life,<br />

and total energy expenditure is a vital metric<br />

used to determine how much energy intake a person needs.<br />

The researchers examined the effects that body mass, fat-free<br />

mass, sex, and age have on total energy expenditure.<br />

They then investigated the effects of physical activity and<br />

daily workload. Finally, they looked at urinary biomarkers<br />

of metabolic stress, which reflect the amount of energy the<br />

body needs to maintain normal function.<br />

Wood was shocked by the results he saw. Conventional<br />

public health wisdom associates total energy expenditure<br />

with physical activity, and thus blames lower exercise rates<br />

for the western obesity epidemic. But his study found that<br />

fat-free mass was the strongest predictor of total energy<br />

expenditure. Yes, the Hadza people engage in more physical<br />

activity per day than their western counterparts, but when<br />

the team controlled for body size, there was no difference<br />

in the average daily energy expenditure between the two<br />

groups. “Neither sex nor any measure of physical activity or<br />

workload was correlated with total energy expenditure in<br />

analyses [controlling] for fat-free mass,” Wood said.<br />
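What “controlling for fat-free mass” means can be pictured with a simple linear model. This is an illustrative sketch, not the exact statistical model Wood’s team fit; the symbols are introduced here for the example.<br />

```latex
\mathrm{TEE}_i = \beta_0 + \beta_1\,\mathrm{FFM}_i + \beta_2\,\mathrm{PA}_i + \varepsilon_i
```

Here TEE is a person’s total energy expenditure, FFM their fat-free mass, PA a measure of physical activity or workload, and ε the variation left unexplained. Asking whether activity matters after controlling for fat-free mass amounts to asking whether β₂ differs from zero once the β₁·FFM term is already in the model. The result Wood describes corresponds roughly to β₂ being indistinguishable from zero, with fat-free mass carrying the predictive weight.<br />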

Moreover, despite their similar total energy expenditure,<br />

Hadza people showed higher levels of metabolic stress<br />

compared to people in western societies today. Taken together,<br />

the findings suggest that there is more to the obesity story than<br />

a decline in physical exercise. Wood and his colleagues have<br />

come up with an alternative explanation.<br />

“Adults with high levels of physical activity may adapt by<br />

reducing energy allocation to other physical activity,” Wood<br />

said.<br />

It would make sense, then, that total energy expenditure<br />

is similar across wildly different lifestyles: people who<br />

participate in strenuous activity every day reorganize their<br />

energy expenditure so that the total number of calories they burn<br />

stays in check.<br />

To account for the higher levels of metabolic stress<br />

in Hadza people, Wood and his research team pointed to<br />

heavy sun exposure, frequent tobacco use, exposure to<br />

smoke from cooking fires, and vigorous physical activity, all<br />

characteristic of the average Hadza adult.<br />

Daily energy requirements and measurements of physical<br />

activity in Hadza adults are inconsistent with<br />

currently accepted models of total energy expenditure: despite<br />

their high levels of daily activity, Hadza people show no<br />

evidence of a greater total energy expenditure relative to<br />

western populations.<br />

Wood said that further work is needed in order to<br />

determine if this phenomenon is common, particularly<br />

among other traditional hunter-gatherers.<br />

“Individuals may adapt to increased workloads to keep<br />

energy requirements in check,” he said, adding that these<br />

adaptations would have consequences for accepted models<br />

of energy expenditure. “Particularly, estimating total energy<br />

expenditure should be based more heavily on body size and<br />

composition and less heavily on activity level.”<br />

Collaborators on this research project included Herman<br />

Pontzer of Hunter College and David Raichlen of the<br />

University of Arizona.<br />

IMAGE COURTESY OF BRIAN WOOD<br />

Three hunter-gatherers who were subjects of Wood’s study<br />

stand overlooking the plains of Tanzania, home to the Hadza<br />

population.<br />


cell biology<br />

NEWS<br />

THE PROTEIN EXTERMINATORS<br />

PROTACs offer alternative to current drug treatments<br />

BY KEVIN BIJU<br />

IMAGE COURTESY OF YALE UNIVERSITY<br />

Craig Crews, Yale professor of chemistry, has developed a<br />

variation on a class of molecules called PROTACs, which direct<br />

the destruction of rogue proteins within cancerous cells. Crews has also founded<br />

a company to bring his treatment idea closer to industry.<br />

Your house is infested with flies. The exterminators try<br />

their best to eliminate the problem, but they have terrible<br />

eyesight. If you had the chance to give eyeglasses to the<br />

exterminators, wouldn’t you?<br />

In some ways, cancer is similar to this insect quandary.<br />

A cancerous cell often becomes infested with a host of<br />

aberrant proteins. The cell’s exterminators, proteins called<br />

E3 ubiquitin ligases, then attempt to mark these harmful<br />

variants for destruction, but they cannot properly identify the malevolent<br />

proteins. The unfortunate result: both beneficial and<br />

harmful proteins are destroyed.<br />

How can we give eyeglasses to the E3 ubiquitin ligases?<br />

Craig Crews, professor of chemistry at Yale University, has<br />

found a promising solution.<br />

According to the National Cancer Institute, some 14<br />

percent of men develop prostate cancer during their lifetime.<br />

This common cancer has been linked to overexpression and<br />

mutation of a protein called the androgen receptor (AR).<br />

Consequently, prostate cancer research focuses on reducing<br />

AR levels. However, current inhibitory drugs are not specific<br />

enough and may end up blocking the wrong protein.<br />

Crews and his team have discovered an alternative. By<br />

using PROTACs (proteolysis targeting chimeras), they have<br />

been able to reduce AR expression levels by more than 90<br />

percent.<br />

“We’re hijacking E3 ubiquitin ligases to do our work,”<br />

Crews said.<br />

PROTACs are heterobifunctional molecules: one end<br />

binds to AR, the bad protein, and the other end binds to<br />

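the E3 ligase, the exterminator. By bringing the two together,<br />

PROTACs let the cell’s own quality-control machinery do the work:<br />

the ligase tags AR with ubiquitin, marking it for destruction<br />

by the proteasome.<br />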

Crews added that PROTACs are especially promising<br />

because they are unlikely to be needed in large doses. “The<br />

exciting implication is we only need a small amount of the<br />

drug to clear out the entire rogue protein population,” he<br />

said. A lower required dose could lessen the risk of negative<br />

side effects that accompany any medication. It could also<br />

make the drug affordable for more people.<br />
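Crews’ point about dose follows from how PROTACs are usually described: they act catalytically. After a target protein has been tagged, the PROTAC molecule can be released to recruit another copy, whereas a conventional occupancy-based inhibitor stays bound to a single target. The toy model below contrasts the two. The molecule counts and the one-target-per-cycle rule are hypothetical simplifications, not measurements from the Crews lab.<br />

```python
def remaining_with_inhibitor(n_target: int, n_drug: int) -> int:
    """Occupancy-based inhibitor: each drug molecule blocks at most one target."""
    return max(n_target - n_drug, 0)


def remaining_with_degrader(n_target: int, n_drug: int, cycles: int) -> int:
    """Toy catalytic degrader (hypothetical simplification).

    In each cycle, every drug molecule brings one target to an E3 ligase for
    ubiquitin tagging, the tagged target is destroyed, and the drug molecule
    is released to act again.
    """
    remaining = n_target
    for _ in range(cycles):
        if remaining == 0:
            break
        remaining = max(remaining - n_drug, 0)
    return remaining


if __name__ == "__main__":
    targets, drug = 1000, 100  # hypothetical molecule counts
    print("inhibitor leaves:", remaining_with_inhibitor(targets, drug))
    print("degrader leaves after 5 cycles:", remaining_with_degrader(targets, drug, 5))
    print("degrader leaves after 10 cycles:", remaining_with_degrader(targets, drug, 10))
```

With 100 drug molecules and 1,000 targets, the inhibitor leaves 900 targets untouched, while the recycled degrader clears them all within ten cycles. Real degradation depends on how efficiently the drug, target, and ligase come together, so this only illustrates why a small dose can, in principle, clear a much larger protein population.<br />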

To put his innovative research into action, Crews founded<br />

the pharmaceutical company Arvinas. Arvinas and the<br />

Crews lab are collaborating to explore the potential<br />

of PROTACs in treating cancer. PROTACs have been<br />

designed to target proteins associated with both colon and<br />

breast cancer.<br />

In addition to researching PROTACs, Crews has<br />

unearthed other techniques to exterminate proteins.<br />

“What I wanted to do is take a protein [AR] and add a<br />

little ‘grease’ to the outside and engage the cell’s degradation<br />

mechanism,” Crews said. This grease technique, called<br />

hydrophobic tagging, resembles PROTACs<br />

in that it engages the cell’s own degradation machinery to<br />

remove the harmful protein.<br />

Having given eyeglasses to the E3 ligases, Crews is now<br />

looking for new ways to optimize his technique.<br />

“My lab is still trying to fully explore what is possible with<br />

this technology,” he said. “It’s a fun place to be.”<br />

IMAGE COURTESY OF WIKIPEDIA<br />

Enzymes work with ubiquitin ligases to degrade aberrant<br />

proteins in cells.<br />


NEWS<br />

immunology<br />

MOSQUITOES RESISTANT TO MALARIA<br />

Scientists investigate immune response in A. gambiae<br />

BY JULIA WEI<br />

Anopheles gambiae is professor Richard Baxter’s insect of<br />

interest, and it is easy to see why: The mosquito species found<br />

in sub-Saharan Africa excels at transmitting malaria, one of<br />

the deadliest infectious diseases. “[Malaria] is a scourge of the<br />

developing world,” said Baxter, a professor in Yale’s chemistry<br />

department. Discovering a cure for malaria starts with<br />

understanding its most potent carrier.<br />

This is one research focus of the Baxter lab, where scientists<br />

are probing the immune system of A. gambiae mosquitoes<br />

for answers. Despite being highly efficient transmitters of<br />

malaria to human populations, these insects show a remarkable<br />

ability to fight off the malaria parasite themselves. With ongoing research<br />

and inquiry, scientists could one day harness the immune power<br />

of these mosquitoes to help solve a global health crisis: rampant<br />

malaria in developing countries.<br />

The story of Baxter’s work actually starts with a 2006 study, a<br />

pioneering collaboration led by professor Kenneth Vernick at<br />

the University of Minnesota. Vernick and his team collected and<br />

analyzed samples of this killer bug in Mali. The researchers were<br />

surprised by what they found. Not only did mosquito offspring fed<br />

malaria-positive blood carry varying numbers of Plasmodium,<br />

the parasite that causes malaria, but a striking<br />

22 percent of the mosquitoes sampled carried no parasite at all.<br />

Since then, scientists have turned their attention to the complex<br />

interplay between malaria parasites and A. gambiae’s immune<br />

system. Vernick’s group correlated the mosquitoes’ genomes with<br />

their degree of parasite infection, and identified the gene cluster<br />

APL1 as a significant factor in the insect’s ability to muster an<br />

immune response.<br />

Now, nearly a decade following Vernick’s research in Mali,<br />

A. gambiae’s immune mechanism is better understood. Three<br />

proteins are key players in the hypothesized immune chain of<br />

response: APL1, TEP1, and LRIM1. TEP1 binds directly to<br />

malaria parasites, which are then destroyed by the mosquito’s<br />

immune system. Of course, TEP1 cannot complete the job<br />

alone. It works in combating infection only when a complex<br />

of LRIM1 and APL1 is present in the mosquito’s hemolymph, or blood, and is<br />

available as another line of defense.<br />

To complicate matters, this chain of response is only an outline<br />

of the full mechanism. Gene cluster APL1 codes for<br />

three homologous proteins named APL1A, APL1B, and APL1C.<br />

According to Baxter, this family of proteins may serve as “a<br />

molecular scaffold” in the immune response. Though they all<br />

belong to the APL1 family, each individual protein may serve a<br />

distinct purpose within A. gambiae’s immune system. One of<br />

Baxter’s goals is to uncover the functions and mechanisms of<br />

the individual proteins.<br />

Prior research has elucidated the role of APL1C in this<br />

family. Scientists have observed that LRIM1 forms a complex<br />

with APL1C, and this complex then factors into the immune<br />

response of the mosquito. How APL1A and APL1B contribute to<br />

the immune response was poorly understood.<br />

In Baxter’s lab, confirming whether LRIM1 forms similar<br />

complexes with APL1A and APL1B posed a challenge, largely<br />

because both proteins are unstable. Through trial and error,<br />

Baxter’s team found that LRIM1 does indeed form complexes<br />

with APL1A and APL1B. The scientists also observed modest<br />

binding to TEP1, the protein that attaches itself directly to the<br />

malaria parasite. This finding could further explain how the<br />

mosquito’s immune system is able to put up such a strong shield<br />

against malaria.<br />

Within the APL1 family, the APL1B protein still presents the<br />

most unanswered questions. Previous studies have shown that<br />

APL1A helps protect the mosquito against human malaria parasites,<br />

and APL1C against rodent malaria parasites. The role of<br />

APL1B remains cloudy. “Being contrarian people, we decided<br />

to look at the structure of APL1B because it’s the odd one out,”<br />

Baxter said.<br />

His lab discovered that purified APL1A and APL1C proteins<br />

remain monomers in solution, while APL1B becomes a<br />

homodimer, two identical molecules linked together. Brady<br />

Summers, a Yale graduate student, went on to determine the<br />

crystal structure of APL1B.<br />

This focus on tiny molecules is motivated by the overarching,<br />

large-scale issue of malaria around the globe. The more<br />

information that Baxter and other scientists can learn in the lab,<br />

the closer doctors will be to reducing the worldwide burden of<br />

malaria.<br />

“Vast amounts of money are spent on malaria control, but<br />

our methods and approaches have not changed a lot and are<br />

susceptible to resistance by both the parasite and the mosquito<br />

vector,” Baxter said. A better understanding of A. gambiae in<br />

the lab is the first step towards developing innovative, effective<br />

measures against malaria in medical practice.<br />

IMAGE COURTESY OF RICHARD BAXTER<br />

The APL1B protein, shown here as a homodimer, remains elusive.<br />


BLACK HOLE<br />

WITH A GROWTH<br />

PROBLEM<br />

a supermassive black hole<br />

challenges foundations<br />

of modern astrophysics<br />

by Jessica Schmerler<br />

art by Ashlyn Oakes

A long, long time<br />

ago in a galaxy far,<br />

far away…<br />

one of the largest black holes discovered to<br />

date was formed. While working on a<br />

project to map out ancient moderate-<br />

<br />

of international researchers stumbled<br />

across an unusual supermassive black<br />

hole (SMBH). This group included<br />

<br />

Munson professor of astrophysics.<br />

<br />

was surprised to learn that certain<br />

qualities of the black hole seem to<br />

challenge widely accepted theories<br />

about the formation of galaxies.<br />

The astrophysical theory of co-evolution<br />

suggests that galaxies pre-date their<br />

black holes. But certain characteristics<br />

of the supermassive black hole located in<br />

CID-947 do not fit this timeline. As Urry<br />

put it: “If this object is representative [of the<br />

evolution of galaxies], it shows that black<br />

holes grow before their galaxies — backwards<br />

from what the standard picture says.”<br />

The researchers published their remarkable<br />

findings in July in the journal Science. Not only<br />

was this an important paper for astrophysicists<br />

everywhere, but it also reinforced the mysterious<br />

nature of black holes. Much remains unknown<br />

about galaxies, black holes, and their place<br />

in the history of the universe — current theories<br />

may not be sufficient to explain new observations.<br />

The ordinary and the supermassive<br />

Contrary to what their name might suggest,<br />

black holes are not giant expanses of empty space.<br />

They are physical objects that create a gravitational<br />

field so strong that nothing, not even light, can escape.<br />

As explained by Einstein’s theory of relativity,<br />

black holes can bend the fabric of space and time.<br />

An ordinary black hole forms when a star reaches<br />

the end of its life cycle and collapses inward — this<br />

sparks a burst of growth for the black hole as it absorbs<br />

surrounding masses. Supermassive black holes,<br />

on the other hand, are too large to be formed by a single<br />

star alone. There are two prevailing theories regarding<br />

their formation: They form when two black<br />

holes combine during a merging of galaxies, or they are<br />

generated from a cluster of stars in the early universe.<br />

If black holes trap light, the logical question that follows is<br />

how astrophysicists can even find them. The answer: they find<br />

them indirectly. Black holes are so massive that matter around<br />

them behaves in characteristic, detectable ways. When orbiting<br />

gas and dust are caught in the black hole’s gravitational field,<br />

they accelerate and heat up so dramatically that they emit large amounts of radiation,<br />

much of it as X-rays, that can be detected by special<br />

telescopes. This radiation comes from a luminous disc of swirling<br />

material around the black hole, known as the accretion disc.<br />

Active galactic nuclei are galactic centers whose black holes are actively growing,<br />

and they show a high concentration of circulating mass in their<br />

accretion discs, which in turn emits large amounts of light.<br />

The faster a black hole is growing, the brighter the accretion disc.<br />

With this principle in mind, astrophysicists can collect relevant information<br />

about black holes, such as their size and how quickly they are growing.<br />
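The link between brightness and growth rate is often summarized by a standard relation between an accretion disc’s luminosity and the rate at which mass falls in. The efficiency value and the example luminosity below are illustrative assumptions, not figures from the CID-947 study.<br />

```latex
L = \eta\,\dot{M}\,c^{2}
\qquad\Longrightarrow\qquad
\dot{M} = \frac{L}{\eta\,c^{2}}
```

Here L is the luminosity, Ṁ the rate at which mass falls in, c the speed of light, and η the radiative efficiency, the fraction of the infalling mass-energy turned into radiation, often taken to be about 0.1. For example, a hypothetical luminosity of 10^39 watts with η = 0.1 implies Ṁ ≈ 1.1 × 10^23 kilograms per second, or roughly 1.8 solar masses per year. Strictly, the black hole itself gains only (1 − η)Ṁ, since the radiated energy escapes. At fixed η, a brighter disc means a faster-growing black hole.<br />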

astronomy<br />

FOCUS<br />

The theory of co-evolution<br />

Nearly all known galaxies have at their center a moderate to supermassive<br />

black hole. In the mid-1990s, researchers began to notice<br />

that these central black holes tended to relate to the size and shape of<br />

their host galaxies. Astrophysicists proposed<br />

that galaxies and supermassive<br />

black holes evolve together, each one<br />

determining the size of the other. This<br />

idea, known as the theory of co-evolution,<br />

became widely accepted in 2003.<br />

In an attempt to explain the underlying<br />

process, theoretical physicists have proposed<br />

that there are three distinct phases of<br />

co-evolution: the “Starburst Period,” when<br />

the galaxy expands, the “SMBH Prime<br />

Period,” when the black hole expands,<br />

and the “Quiescent Elliptical Galaxy,”<br />

when the masses of both the galaxy and<br />

the black hole stabilize and stop growing.<br />

The supermassive black hole at the center<br />

of the galaxy CID-947 weighs in at seven<br />

billion solar masses, or seven billion times<br />

the mass of our sun (which, for reference, is about<br />

333,000 times as massive as Earth). Apart<br />

from its enormous size, what makes this<br />

black hole so remarkable is the size and<br />

character of the galaxy that surrounds it.<br />

Current data from surveys observing<br />

galaxies across the universe indicates that<br />

the total mass is distributed between the<br />

black hole and the galaxy in an approximate<br />

ratio of 2:1,000, called the Magorrian<br />

relation. A typical supermassive black<br />

hole is about 0.2 percent of the mass of its<br />

host galaxy. Based on this mathematical<br />

relationship, the theory of co-evolution<br />

predicts that the CID-947 galaxy would<br />

be one of the largest galaxies ever discovered,<br />

but in reality CID-947 is quite ordinary<br />

in size. This system does not come<br />

close to conforming to the Magorrian relation,<br />

as the central black hole is a startling<br />

10 percent of the mass of the galaxy.<br />
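The mismatch can be checked with simple arithmetic. The sketch below takes the article’s numbers at face value (a seven-billion-solar-mass black hole, a typical ratio of 2:1,000, and an observed ratio of about 10 percent) and treats “galaxy mass” the same way the article does. It is an illustration, not the researchers’ analysis.<br />

```python
BH_MASS_MSUN = 7e9          # CID-947 black hole mass, in solar masses
MAGORRIAN_RATIO = 2 / 1000  # typical black hole : galaxy mass ratio (0.2 percent)
OBSERVED_RATIO = 0.10       # CID-947: black hole is ~10 percent of galaxy mass


def galaxy_mass(bh_mass: float, ratio: float) -> float:
    """Galaxy mass implied by a black hole mass and a black-hole-to-galaxy ratio."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return bh_mass / ratio


if __name__ == "__main__":
    predicted = galaxy_mass(BH_MASS_MSUN, MAGORRIAN_RATIO)
    implied = galaxy_mass(BH_MASS_MSUN, OBSERVED_RATIO)
    print(f"predicted by the Magorrian relation: {predicted:.1e} solar masses")
    print(f"implied by the observed 10% ratio:   {implied:.1e} solar masses")
    print(f"shortfall factor: {predicted / implied:.0f}")
```

The Magorrian relation would call for a galaxy of about 3.5 × 10^12 solar masses, while a 10 percent ratio implies only about 7 × 10^10, a roughly 50-fold gap.<br />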

An additional piece of the theory of<br />

co-evolution is that the growth of the<br />

supermassive black hole prevents star<br />

formation. Stars form when extremely<br />

cold gas clumps together and collapses under its own gravity<br />

until its core becomes hot and dense enough to ignite nuclear fusion.<br />

But when a supermassive black hole is growing,<br />

the radiation it emits heats the surrounding gas to extreme<br />

temperatures, which should interrupt star formation.<br />

Here, CID-947 once again defies expectations.<br />

Despite its extraordinary size, the<br />

supermassive black hole did not curtail the<br />

creation of new stars. Astrophysicists clearly<br />

observed radiation signatures consistent<br />

with star formation in the spectra captured<br />

at the W.M. Keck Observatory in Hawaii.<br />

IMAGE COURTESY OF WIKIMEDIA COMMONS<br />

The W.M. Keck Observatory rests at the<br />

summit of Mauna Kea in Hawaii.<br />

The discovery of the CID-947 supermassive<br />

black hole calls into question<br />

the foundations of the theory of co-evolution.<br />

That stars are still forming indicates<br />

that the galaxy is still growing,<br />

which means CID-947 could eventually<br />

reach a size in accordance with the Magorrian<br />

relation. Even so, the evolution of<br />

this galaxy still contradicts the theory of<br />

co-evolution, which states that the growth<br />

of the galaxy precedes and therefore dictates<br />

the growth of its central black hole.<br />

New frontiers in astrophysics<br />

Astrophysicists are used to looking<br />

back in time. The images in a telescope<br />

are formed by light emitted long before<br />

the moment of observation, which means<br />

the observer views events that occurred<br />

millions or even billions of years in the<br />

past. To see galaxies as they were at early<br />

epochs, you would have to observe them<br />

at great distances, since the light we see<br />

today has traveled for billions of years, and<br />

was thus emitted billions of years ago.<br />

The team of astrophysicists that discovered<br />

the CID-947 black hole was observing<br />

for two nights at the Keck telescope in order<br />

to measure supermassive black holes in active<br />

galaxies as they existed some 12 billion<br />

years ago. The researchers did not expect<br />

to find large black holes, which are rare<br />

for this distance in space and time. Where<br />

they do exist, they usually belong to active<br />

galactic nuclei that are extremely bright.<br />

Yet the observers did find the<br />

CID-947 supermassive black hole, which<br />

is comparable in size to the largest black<br />

holes in the local universe. Its low luminosity<br />

indicates that it is growing quite slowly.<br />

“Most black holes grow with low accretion<br />

rates such that they gain mass slowly.<br />

To have this big a black hole this early<br />

in the universe means it had to grow very<br />

rapidly at some earlier point,” Urry said.<br />

In fact, if it had been growing at the observed<br />

rate, a black hole this size would<br />

have to be older than the universe itself.<br />
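Urry’s point can be illustrated with a standard back-of-the-envelope growth model; this is not the calculation in the Science paper. If a black hole shines at a fixed fraction λ of its Eddington limit (the brightness at which radiation pressure balances gravity) and turns a fraction η of infalling mass into light, its mass grows exponentially with an e-folding time of η/((1 − η)λ) times about 0.45 billion years. The seed mass of 100 suns and the Eddington fractions below are hypothetical illustrative values, not measurements of CID-947.<br />

```python
import math

# Physical constants (SI units)
SIGMA_T = 6.6524587e-29      # Thomson cross-section, m^2
C = 2.99792458e8             # speed of light, m/s
G = 6.67430e-11              # gravitational constant, m^3 kg^-1 s^-2
M_P = 1.67262192e-27         # proton mass, kg
SECONDS_PER_GYR = 3.15576e16

# Eddington timescale sigma_T * c / (4 pi G m_p), about 0.45 billion years
T_EDD_GYR = SIGMA_T * C / (4 * math.pi * G * M_P) / SECONDS_PER_GYR


def efold_time_gyr(eddington_ratio: float, efficiency: float = 0.1) -> float:
    """Time for the mass to grow by a factor of e at a fixed Eddington ratio."""
    return efficiency / ((1 - efficiency) * eddington_ratio) * T_EDD_GYR


def growth_time_gyr(final_mass: float, seed_mass: float,
                    eddington_ratio: float, efficiency: float = 0.1) -> float:
    """Time to grow from seed_mass to final_mass (same units), exponential growth."""
    return math.log(final_mass / seed_mass) * efold_time_gyr(eddington_ratio, efficiency)


if __name__ == "__main__":
    for ratio in (1.0, 0.01):  # hypothetical: at the limit, and at 1 percent of it
        t = growth_time_gyr(7e9, 100, ratio)
        print(f"Eddington ratio {ratio}: about {t:.1f} billion years")
```

Growing from 100 suns to seven billion takes about 18 e-foldings: under a billion years at the Eddington limit, but roughly 90 billion years at 1 percent of it, far longer than the universe’s age of about 13.8 billion years. That is the sense in which a slowly growing black hole of this size must have grown much faster at some earlier point.<br />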

What do these contradictions mean for<br />

the field of astrophysics? Urry and her<br />

colleagues suggest that if CID-947 does in<br />

fact grow to meet the Magorrian relation<br />

relative to its supermassive black hole, this<br />

ancient galaxy could be a model for the<br />

precursors of some of the most massive<br />

galaxies in the universe, such as NGC 1277<br />

in the constellation Perseus. Moreover, this<br />

research opens doors to better understanding<br />

black holes, galaxies, and the universe.<br />

ABOUT THE AUTHOR<br />

JESSICA SCHMERLER<br />

JESSICA SCHMERLER is a junior in Jonathan Edwards College majoring<br />

in molecular, cellular, and developmental biology. She is a member of the<br />

<br />

magazine, and contributes to several on-campus publications.<br />

<br />

enthusiasm about this fascinating discovery.<br />


FOCUS<br />

biotechnology<br />

By Emma Healy • Art by Christina Zhang<br />

What constitutes a protein? At<br />

first, the answer seems simple<br />

to anyone with a background<br />

in basic biology. Amino acids join together<br />

into chains that fold into the unique<br />

three-dimensional structures we call proteins.<br />

Size matters in proteomics, the scientific<br />

study of proteins. These molecules<br />

are typically complex, composed of hundreds,<br />

if not thousands, of amino acids.<br />

A protein with demonstrated biological<br />

function usually contains no fewer than<br />

300 amino acids. But findings from a recent<br />

study conducted at the Yale School of<br />

Medicine are challenging the notion that<br />

proteins need to be long chains in order<br />

to serve biological roles. Small size, it seems, need<br />

not limit what a protein can do.<br />

The recent research was led by the<br />

laboratory of Yale genetics professor Daniel<br />

DiMaio. First author Erin Heim, a PhD<br />

student in the lab, and her colleagues conducted<br />

a genetic screen to isolate a set of<br />

functional proteins built from the most limited<br />

set of amino acids ever described.<br />

The chains are short and simple, yet they<br />

exert power over cell growth and tumor<br />

formation. Few scientists would have predicted<br />

that such simple molecules could<br />

have such huge implications for oncology,<br />

and for our basic understanding of proteins<br />

and amino acids.<br />

Engineering the world’s simplest proteins<br />

There are 20 standard amino acids,<br />

and their order in a chain determines<br />

the structure and function of the resulting<br />

protein. Most proteins consist of many different<br />

amino acids. In contrast, the proteins<br />

identified in this study, aptly named<br />

LIL proteins, were made up entirely of two<br />

amino acids: leucine and isoleucine.<br />
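Restricting a protein to two amino acids shrinks the space of possible sequences dramatically: a chain of n residues drawn from two choices has 2^n possible orders, versus 20^n with the full set. The sketch below counts both and draws random leucine/isoleucine chains using their one-letter codes, L and I. The 26-residue length is a hypothetical example, since the article does not give the length of the LIL proteins.<br />

```python
import random

LIL_ALPHABET = "LI"  # one-letter codes: L = leucine, I = isoleucine


def sequence_space(length: int, alphabet_size: int) -> int:
    """Number of distinct sequences of a given length over an alphabet."""
    return alphabet_size ** length


def random_lil_sequence(length: int, rng: random.Random) -> str:
    """A random chain built only from leucine (L) and isoleucine (I)."""
    return "".join(rng.choice(LIL_ALPHABET) for _ in range(length))


if __name__ == "__main__":
    n = 26  # hypothetical chain length, chosen only for illustration
    print(f"two-letter alphabet: {sequence_space(n, 2):,} sequences")
    print(f"twenty-letter alphabet: {sequence_space(n, 20):.2e} sequences")
    rng = random.Random(42)  # fixed seed so the example is reproducible
    print(random_lil_sequence(n, rng))
```

For a 26-residue chain, that is 67,108,864 two-letter sequences versus roughly 6.7 × 10^33 with all 20 amino acids, so a library of a few million random sequences would cover at most a few percent of the two-letter space at that length.<br />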

Both of these amino acids are hydrophobic, or “water-fearing,” meaning they avoid contact with water. The scientists in DiMaio’s lab were deliberately searching for hydrophobic qualities in proteins. An entirely hydrophobic protein is limited in where it can be located within the cell and what shapes it can assume. To stay away from water, a hydrophobic protein would situate itself in the interior of a cell membrane, shielded on both sides by the equally water-fearing tails of the membrane’s lipid molecules. Moreover, an all-hydrophobic sequence reduces protein complexity because it lacks hydrophilic, or water-loving, amino acids, whose polar side chains can interact with one another in many ways. These polar side chains also readily take part in chemical reactions and other modifications, adding considerable complexity to a protein’s function.<br />

Heim and her group wanted to keep things simple: a completely hydrophobic protein is more predictable and thus easier to study.<br />

“It’s rare that a protein is composed<br />

entirely of hydrophobic amino acids,” said<br />

Ross Federman, another PhD student in the DiMaio lab and a co-author of the paper.<br />

This rarity made the LIL proteins especially valuable. “[Using these proteins] takes away most of the complication by knowing where they are and what they look like,” Heim said. In terms of both chemical reactivity and amino acid composition, she said, the LIL proteins are the simplest proteins ever engineered to have a biological function.<br />

Small proteins, big functions<br />

So what biological function did these tiny proteins have? The scientists linked their LIL proteins to cell growth, proliferation, and cancer.<br />

The team started with a library of more than three million random LIL sequences and incorporated them into retroviruses, viruses that infect cells by inserting a DNA copy of their genetic material into the host cell’s DNA. “We<br />

manipulate viruses to do our dirty work,<br />

essentially,” Heim said. “One or two viruses<br />

will get into every single cell, integrate<br />

into the cell’s DNA, and the cell will make<br />

that protein.”<br />
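To get a feel for what a library built from only two amino acids looks like, here is a minimal Python sketch. It is an illustration, not the lab’s actual procedure: it generates random sequences using only leucine (L) and isoleucine (I) and counts how many distinct sequences a two-letter alphabet allows. The function names, the fixed random seed, and the chain lengths are hypothetical choices, not details reported in the study.

```python
import random


def random_lil_library(n_sequences: int, length: int, seed: int = 0) -> list[str]:
    """Return random sequences built only from leucine (L) and isoleucine (I).

    Hypothetical helper for illustration: library size and chain length are
    arbitrary parameters, not values reported in the study.
    """
    rng = random.Random(seed)  # seeded so the example is reproducible
    return ["".join(rng.choice("LI") for _ in range(length)) for _ in range(n_sequences)]


def sequence_space(length: int, alphabet_size: int = 2) -> int:
    """Number of distinct sequences of a given length over an alphabet."""
    return alphabet_size ** length


if __name__ == "__main__":
    print(random_lil_library(3, 10))
    # A fully randomized two-letter segment must be at least 22 positions long
    # before more than three million distinct sequences are even possible.
    print(sequence_space(21), sequence_space(22))  # 2097152 4194304
```

Even with only two building blocks, a 22-position segment allows 2^22 = 4,194,304 distinct sequences, so a screen of three million random sequences can still sample a large and varied pool, although every position is either L or I.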


As cells with embedded viral DNA started<br />

to produce different proteins, the researchers<br />

watched for biological functions.<br />

In the end, they found a total of 11<br />

functional LIL proteins, all able to activate<br />

cell growth.<br />

Of course, this may sound like a good thing, but uncontrolled cell growth can lead to the proliferation of cancerous cells and tumors.<br />

The LIL proteins in this study affected cell growth by interacting with the platelet-derived growth factor beta (PDGFβ) receptor. This receptor is involved in cell proliferation, maturation, and movement. When the PDGFβ receptor gene is mutated, the receptor’s role in cell growth can be derailed, resulting in uncontrolled cell division and tumor formation. By activating the PDGFβ receptor, the LIL proteins in this study free cells from their dependence on growth factors, meaning the cells can multiply freely and may transform into cancerous cells.<br />

While this particular study engineered proteins that activated the PDGFβ receptor, Heim said that other work in the lab has turned similar proteins into inhibitors of this cancer-causing receptor. Proteins that block activation of the PDGFβ receptor could offer a new way to target one origin of cancer. Even though the biological function described in their most recent paper promotes cancer, Heim and her group are hopeful that LIL proteins can also be applied to solve problems in genetics.<br />

Reevaluating perceptions of a protein<br />

No other known protein has a sequence as simple as those of the LIL molecules. Other mini-proteins have been discovered, but none on record has been shown to display biological activity.<br />

Trp-cage, for example, was previously identified as the smallest mini-protein in existence and is recognized for its ability to fold spontaneously into a globular structure. Experiments on this molecule have been designed to improve understanding of protein folding dynamics. While Trp-cage and similar mini-proteins serve an important purpose in research, they do not match LIL proteins when it comes to biological function.<br />

The recent study in the DiMaio lab pursued a question beyond basic, conceptual science: the team looked at the biological function of small proteins, not just their physical characteristics.<br />

The discovery of LIL molecules and the role they can play have significant implications for the way scientists think about proteins. In proteomics, researchers do not usually expect to find proteins with extraordinarily short or simple sequences. For this reason, such sequences tend to be overlooked during genome scans. “This paper shows that both<br />

scans. “This paper shows that both<br />

[short and simple proteins] might actually<br />

be really important, so when somebody is<br />

scanning the genome and cutting out all<br />

of those possibilities, they’re losing a lot,”<br />

Heim said.<br />

Additionally, by limiting the amino acid diversity of these proteins, researchers were able to better understand the mechanisms by which amino acid variation affects protein function.<br />

“If you want to gain insight into the heart<br />

of some mechanism, the more you can isolate<br />

variables, the better your results will<br />

be,” Federman said.<br />

This is especially true for proteins. These<br />

molecules are highly complex, possessing<br />

different energetic stabilities, varying conformations,<br />

and the potential for substantial<br />

differences in amino acid sequence. By<br />

studying LIL proteins, researchers at the<br />

DiMaio lab were able to isolate the effects<br />

of specific amino acid changes at the molecular<br />

level. This information is critical for protein engineers, who tend to treat most hydrophobic amino acids as similar.<br />

This study contradicted that notion: “Leucine<br />

and isoleucine have very distinct activities,”<br />

Heim said. “Even when two amino<br />

acids look alike, they can actually have<br />

very dissimilar biology.”<br />

Daniel DiMaio is a professor of genetics at<br />

the Yale School of Medicine.<br />

Another ongoing project at the lab involves<br />

screening preexisting cancer databases<br />

in search of short-sequence proteins.<br />

According to Heim, scientists may eventually find naturally occurring cancers that contain proteins structurally similar to the LIL proteins isolated in this study. Such findings would further elucidate the cancer-causing potential of tiny LIL molecules.<br />

To take their recent work a step further, researchers in the group are looking to create proteins with functions that did not arise through evolution. The ability to build proteins with entirely new functions is an exciting prospect and offers a new way of approaching protein research: insight into proteins is no longer bound by the trajectory of molecular evolution. Instead, scientific knowledge of proteins is expanding daily in the hands of researchers like Heim and Federman.<br />


IMAGE COURTESY OF YALE SCHOOL OF MEDICINE<br />

ABOUT THE AUTHOR<br />

EMMA HEALY<br />

EMMA HEALY is a sophomore in Ezra Stiles College and a prospective