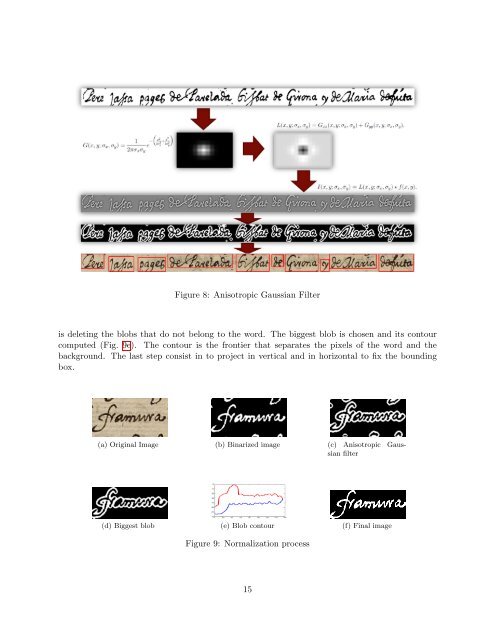

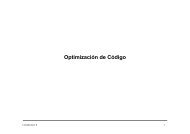

partial Gaussian derivatives along the two orientations at different scales, to merge the components of a word. An anisotropic Gaussian filter (Fig. 8) is def<strong>in</strong>ed as: 1 G(x, y; σx, σy) = e 2πσxσy x2 −( σ2 + x y2 σ2 ) y From the filter (1) the Laplacian of Gaussian operator is based on the addition of the second derivatives <strong>in</strong> x and y as follows: L(x, y; σx, σy) = Gxx(x, y; σx, σy) + Gyy(x, y; σx, σy) (2) A scale space representation of the l<strong>in</strong>e images is constructed by convolv<strong>in</strong>g the image with L from (2). Consider a two-dimensional image f(x,y); then, the correspond<strong>in</strong>g output image is I(x, y; σx, σy) = L(x, y; σx, σy) ∗ f(x, y) (3) As we can see <strong>in</strong> figure 8 the output is a grey-scale image, where the background has a middle grey-level and the words are lightly grey. It is very difficult to determ<strong>in</strong>e a threshold for select<strong>in</strong>g the pixels that corresponds to words. We have observed that most words have black contour. Our improvement allows, us<strong>in</strong>g this mask, to split each word <strong>in</strong> three areas: background, word and contours of the word. The mask converts the black th<strong>in</strong> contours <strong>in</strong> thick contours. The rest of the image is considered background. This ga<strong>in</strong>ed of the contour cause the jo<strong>in</strong><strong>in</strong>g of the letters that are together. This improvement allows to merge the characters of the word and is easier to split different words. The words, which are extracted from a scale space representation, are blob-like, but, to make sure that the blob merges all the parts of the words, we apply a clos<strong>in</strong>g operator to each word. 6.2 Fast rejection The previous process produces one blob for each word <strong>in</strong> the document, but sometimes these components do not represent words, because they are sta<strong>in</strong>s, l<strong>in</strong>es or small parts of a word that has not been merged with the orig<strong>in</strong>al word. The selection of the suitable words are done <strong>in</strong> two steps. First, the blobs which are very small, regard<strong>in</strong>g to the height and the width of the segmented l<strong>in</strong>e, are rejected. For the rema<strong>in</strong><strong>in</strong>g blobs, we choose those blobs with more pixels than a threshold, experimentally set. 6.3 Noise removal The images rema<strong>in</strong><strong>in</strong>g after the fast rejection step are subject to a normalization process to reduce their variability. Our proposal allows to clean the image and to fit the bound<strong>in</strong>g box to the word (Fig. 9). The first step consists <strong>in</strong> b<strong>in</strong>ariz<strong>in</strong>g the word image (Fig. 9b). Then, we apply the anisotropic Gaussian filter expla<strong>in</strong>ed before to merge the different parts of the same word (Fig. 9c). Once applied, the image is composed by several blobs, as we can see <strong>in</strong> figure 9d, then, the next step 14 (1)

Figure 8: Anisotropic Gaussian Filter is delet<strong>in</strong>g the blobs that do not belong to the word. The biggest blob is chosen and its contour computed (Fig. 9e). The contour is the frontier that separates the pixels of the word and the background. The last step consist <strong>in</strong> to project <strong>in</strong> vertical and <strong>in</strong> horizontal to fix the bound<strong>in</strong>g box. (a) Orig<strong>in</strong>al Image (b) B<strong>in</strong>arized image (c) Anisotropic Gaussian filter (d) Biggest blob (e) Blob contour (f) F<strong>in</strong>al image Figure 9: Normalization process 15