A Tree to String Transducer

A Tree to String Transducer

A Tree to String Transducer

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

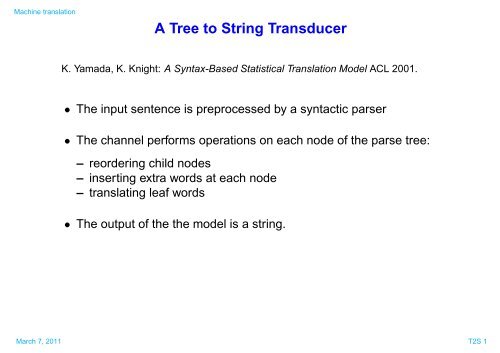

Machine translation

K. Yamada, K. Knight: A Syntax-Based Statistical Translation Model. ACL 2001.

• The input sentence is preprocessed by a syntactic parser

• The channel performs operations on each node of the parse tree:

– reordering child nodes

– inserting extra words at each node

– translating leaf words

• The output of the model is a string.

March 7, 2011 T2S 1


An Example ∗

[Figure: the English parse tree for "he adores listening to music", Parse Tree(E), is transformed by the Reorder, Insert and Translate operations; Take Leaves then reads off the output, Sentence(J).]

Sentence(J): Kare ha ongaku wo kiku no ga daisuki desu

∗ Source: http://www.isi.edu/natural-language/people/cs562-8-22-06.pdf
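The four steps of the example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Node` class and the helper functions are hypothetical names, the reordering, insertion and translation choices are hard-coded rather than sampled from the model's tables, and the nodes that receive an inserted word are read off the worked example on the following slides.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

@dataclass
class Node:
    label: str
    word: Optional[str] = None                    # set on leaves only
    children: List["Node"] = field(default_factory=list)
    inserted: Optional[Tuple[str, str]] = None    # (side, word), side is "left" or "right"

def reorder(node: Node, order: List[int]) -> None:
    """Permute the children; `order` lists the old child indices in their new order."""
    node.children = [node.children[i] for i in order]

def insert(node: Node, side: str, word: str) -> None:
    """Record an extra word to the left or right of this node."""
    node.inserted = (side, word)

def translate(node: Node, lexicon: dict) -> None:
    """Replace every leaf word by its (hard-coded) translation."""
    if node.word is not None:
        node.word = lexicon[node.word]
    for child in node.children:
        translate(child, lexicon)

def take_leaves(node: Node) -> List[str]:
    """Read the output string off the transformed tree."""
    if node.word is not None:
        words = [node.word]
    else:
        words = [w for c in node.children for w in take_leaves(c)]
    if node.inserted is not None:
        side, extra = node.inserted
        words = [extra] + words if side == "left" else words + [extra]
    return words

# Parse tree for "he adores listening to music"
prp, vb1 = Node("PRP", "he"), Node("VB1", "adores")
vb, to_leaf, nn = Node("VB", "listening"), Node("TO", "to"), Node("NN", "music")
to = Node("TO", children=[to_leaf, nn])
vb2 = Node("VB2", children=[vb, to])
root = Node("VB", children=[prp, vb1, vb2])

reorder(root, [0, 2, 1])   # PRP VB1 VB2 -> PRP VB2 VB1
reorder(vb2, [1, 0])       # VB TO -> TO VB
reorder(to, [1, 0])        # TO NN -> NN TO

insert(prp, "right", "ha")
insert(vb, "right", "no")
insert(vb2, "right", "ga")
insert(vb1, "right", "desu")

translate(root, {"he": "kare", "adores": "daisuki", "listening": "kiku",
                 "to": "wo", "music": "ongaku"})

sentence = " ".join(take_leaves(root))
print(sentence)
```

Storing the inserted word on the node, instead of adding a new child, keeps the reordering step independent of insertion; other encodings would work equally well.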


⇒ The reordering is decided according to the r-table

[Figure: original tree. VB → PRP VB1 VB2, with PRP = He and VB1 = adores; VB2 → VB TO, with VB = listening; TO → TO NN, with TO = to and NN = music.]

| original order | reordering | P(reorder) |
|---|---|---|
| PRP VB1 VB2 | PRP VB1 VB2 | 0.074 |
| | PRP VB2 VB1 | 0.723 |
| | VB1 PRP VB2 | 0.061 |
| | ··· | ··· |
| VB TO | VB TO | 0.252 |
| | TO VB | 0.749 |
| TO NN | TO NN | 0.107 |
| | NN TO | 0.893 |
| ··· | ··· | ··· |

[Figure: reordered tree. VB → PRP VB2 VB1; VB2 → TO VB; TO → NN TO.]

Reordering probability: 0.723 · 0.749 · 0.893 = 0.484
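The reordering probability is a product of one r-table entry per non-terminal node. A minimal sketch, assuming the r-table is stored as a nested dictionary (the name `R_TABLE` and the layout are illustrative, and only the rows shown on the slide are included):

```python
import math

# (original child order) -> {reordered child order: probability}
R_TABLE = {
    ("PRP", "VB1", "VB2"): {
        ("PRP", "VB1", "VB2"): 0.074,
        ("PRP", "VB2", "VB1"): 0.723,
        ("VB1", "PRP", "VB2"): 0.061,
    },
    ("VB", "TO"): {("VB", "TO"): 0.252, ("TO", "VB"): 0.749},
    ("TO", "NN"): {("TO", "NN"): 0.107, ("NN", "TO"): 0.893},
}

# One (original, chosen reordering) pair per non-terminal node of the example tree.
choices = [
    (("PRP", "VB1", "VB2"), ("PRP", "VB2", "VB1")),
    (("VB", "TO"), ("TO", "VB")),
    (("TO", "NN"), ("NN", "TO")),
]

p_reorder = math.prod(R_TABLE[orig][new] for orig, new in choices)
print(round(p_reorder, 3))  # 0.484
```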


⇒ The insertion of a new node is decided according to the n-table

| parent | TOP | VB | VB | VB | TO | TO | ··· |
|---|---|---|---|---|---|---|---|
| node | VB | VB | PRP | TO | TO | NN | ··· |
| P(None) | 0.735 | 0.687 | 0.344 | 0.709 | 0.900 | 0.800 | ··· |
| P(Left) | 0.004 | 0.061 | 0.004 | 0.030 | 0.003 | 0.096 | ··· |
| P(Right) | 0.260 | 0.252 | 0.652 | 0.261 | 0.007 | 0.104 | ··· |

| w | P(ins-w) |
|---|---|
| ha | 0.219 |
| ta | 0.131 |
| wo | 0.099 |
| no | 0.094 |
| ni | 0.080 |
| te | 0.078 |
| ga | 0.062 |
| ··· | ··· |
| desu | 0.0007 |

[Figure: the reordered tree before and after insertion; the words ha, no, ga and desu are inserted, giving the leaves "He ha music to listening no ga adores desu".]

Insertion probability: (0.652 · 0.219) · (0.252 · 0.094) · (0.252 · 0.062) · (0.252 · 0.0007) · 0.735 · 0.709 · 0.900 · 0.800 = 3.498e-9
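The insertion probability multiplies, for every node, the probability of the chosen position (none, left or right) and, when a word is inserted, the probability of that word. The sketch below recomputes the product from the two tables; the dictionary names are illustrative, and the assignment of inserted words to (parent, node) pairs is inferred from the factors in the product above.

```python
# (parent label, node label) -> probability of each insertion position
N_TABLE = {
    ("TOP", "VB"): {"none": 0.735, "left": 0.004, "right": 0.260},
    ("VB", "VB"):  {"none": 0.687, "left": 0.061, "right": 0.252},
    ("VB", "PRP"): {"none": 0.344, "left": 0.004, "right": 0.652},
    ("VB", "TO"):  {"none": 0.709, "left": 0.030, "right": 0.261},
    ("TO", "TO"):  {"none": 0.900, "left": 0.003, "right": 0.007},
    ("TO", "NN"):  {"none": 0.800, "left": 0.096, "right": 0.104},
}
# probability of the inserted word itself
INS_WORD = {"ha": 0.219, "ta": 0.131, "wo": 0.099, "no": 0.094,
            "ni": 0.080, "te": 0.078, "ga": 0.062, "desu": 0.0007}

# One decision per node: ((parent, node), position, inserted word or None)
decisions = [
    (("VB", "PRP"), "right", "ha"),
    (("VB", "VB"), "right", "no"),
    (("VB", "VB"), "right", "ga"),
    (("VB", "VB"), "right", "desu"),
    (("TOP", "VB"), "none", None),
    (("VB", "TO"), "none", None),
    (("TO", "TO"), "none", None),
    (("TO", "NN"), "none", None),
]

p_insert = 1.0
for key, position, word in decisions:
    p_insert *= N_TABLE[key][position]
    if word is not None:
        p_insert *= INS_WORD[word]

print(f"{p_insert:.3e}")  # 3.498e-09
```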


⇒ The translation is decided according to the t-table

| adores | he | listening | music | to | ··· |
|---|---|---|---|---|---|
| daisuki 1.000 | kare 0.952 | kiku 0.333 | ongaku 0.900 | ni 0.216 | ··· |
| | NULL 0.016 | kii 0.333 | naru 0.100 | NULL 0.204 | |
| | nani 0.005 | mi 0.333 | | to 0.133 | |
| | ··· | | | ··· | |

[Figure: the tree before and after translation; the leaves "He ha music to listening no ga adores desu" become "kare ha ongaku wo kiku no ga daisuki desu".]

Translation probability: 0.952 · 0.900 · 0.038 · 0.333 · 1.000 = 0.0108 (he → kare, music → ongaku, to → wo, listening → kiku, adores → daisuki)
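The translation probability takes one t-table entry per leaf word. In the sketch below the dictionary name `T_TABLE` is illustrative, and the entry for to → wo (0.038) is taken from the product on the slide because it lies in the part of the table that is not shown. Multiplying the five leaf entries reproduces 0.0108.

```python
import math

# English word -> {translation: probability}
T_TABLE = {
    "adores": {"daisuki": 1.000},
    "he": {"kare": 0.952, "NULL": 0.016, "nani": 0.005},
    "listening": {"kiku": 0.333, "kii": 0.333, "mi": 0.333},
    "music": {"ongaku": 0.900, "naru": 0.100},
    "to": {"ni": 0.216, "NULL": 0.204, "to": 0.133, "wo": 0.038},  # "wo" from the product line
}

# Chosen translation for each leaf of the example tree.
chosen = {"he": "kare", "music": "ongaku", "to": "wo",
          "listening": "kiku", "adores": "daisuki"}

p_translate = math.prod(T_TABLE[e][j] for e, j in chosen.items())
print(round(p_translate, 4))  # 0.0108
```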


Formal description

• Goal: Transform an English parse tree $E$ into a French sentence $f$

• Definitions

- $E$ consists of nodes $\varepsilon_1, \varepsilon_2, \ldots, \varepsilon_n$

- $f$ consists of words $f_1, f_2, \ldots, f_m$

- $\theta_i = (\nu_i, \rho_i, \tau_i)$ is the set of values of the random variables associated with $\varepsilon_i$ (insertion $\nu$, reordering $\rho$, translation $\tau$)

- $\theta = \theta_1, \theta_2, \ldots, \theta_n$ is the set of all random variables associated with a parse tree $E = \varepsilon_1, \varepsilon_2, \ldots, \varepsilon_n$

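$$P(f \mid E) = \sum_{\theta:\, \mathrm{Str}(\theta(E)) = f} P(\theta \mid E)$$

$$
\begin{aligned}
P(\theta \mid E) &= P(\theta_1, \theta_2, \ldots, \theta_n \mid \varepsilon_1, \varepsilon_2, \ldots, \varepsilon_n) \\
&= \prod_{i=1}^{n} P(\theta_i \mid \theta_1, \theta_2, \ldots, \theta_{i-1}, \varepsilon_1, \varepsilon_2, \ldots, \varepsilon_n) \\
&\approx \prod_{i=1}^{n} P(\theta_i \mid \varepsilon_i)
\end{aligned}
$$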


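where

$$
\begin{aligned}
P(\theta_i \mid \varepsilon_i) &= P(\nu_i, \rho_i, \tau_i \mid \varepsilon_i) \approx P(\nu_i \mid \varepsilon_i)\, P(\rho_i \mid \varepsilon_i)\, P(\tau_i \mid \varepsilon_i) \\
&= P(\nu_i \mid N(\varepsilon_i))\, P(\rho_i \mid R(\varepsilon_i))\, P(\tau_i \mid T(\varepsilon_i)) \\
&= n(\nu_i \mid N(\varepsilon_i))\, r(\rho_i \mid R(\varepsilon_i))\, t(\tau_i \mid T(\varepsilon_i))
\end{aligned}
$$

$n(\nu \mid N(\varepsilon)) \equiv n(\nu \mid N)$, $r(\rho \mid R(\varepsilon)) \equiv r(\rho \mid R)$ and $t(\tau \mid T(\varepsilon)) \equiv t(\tau \mid T)$ are the parameters of the model

For example:

• $n(\nu \mid N) = P(\text{right}, \text{ha} \mid \text{VB-PRP})$

• $r(\rho \mid R) = P(\text{PRP-VB2-VB1} \mid \text{PRP-VB1-VB2})$

$$P(f \mid E) = \sum_{\theta:\, \mathrm{Str}(\theta(E)) = f} \; \prod_{i=1}^{n} n(\nu_i \mid N(\varepsilon_i))\, r(\rho_i \mid R(\varepsilon_i))\, t(\tau_i \mid T(\varepsilon_i))$$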
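For the single derivation $\theta$ of the worked example, the inner product is the product of the three per-operation probabilities computed on the earlier slides. The lines below only multiply the rounded values quoted there; $P(f \mid E)$ itself would additionally sum this quantity over every other $\theta$ that yields the same string, which is not attempted here.

```python
# Rounded values quoted on the slides for the derivation shown in the example.
p_reorder = 0.484       # product of r-table entries
p_insert = 3.498e-9     # product of n-table and word-insertion entries
p_translate = 0.0108    # product of t-table entries

# P(theta | E) for this one derivation
p_theta = p_reorder * p_insert * p_translate
print(f"{p_theta:.3e}")
```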


Estimation of the parameters

1. Initialize all probability tables: $n(\nu \mid N)$, $r(\rho \mid R)$ and $t(\tau \mid T)$

2. Reset all counters: $c(\nu, N)$, $c(\rho, R)$ and $c(\tau, T)$

3. For each pair $\langle E, f \rangle$ in the training corpus

   For all $\theta$ such that $f = \mathrm{Str}(\theta(E))$

   - Let $cnt = P(\theta \mid E) \,/\, \sum_{\theta':\, \mathrm{Str}(\theta'(E)) = f} P(\theta' \mid E)$

   - For $i = 1 \ldots n$:

     $c(\nu_i, N(\varepsilon_i)) \mathrel{+}= cnt$

     $c(\rho_i, R(\varepsilon_i)) \mathrel{+}= cnt$

     $c(\tau_i, T(\varepsilon_i)) \mathrel{+}= cnt$

4. For each $\langle \nu, N \rangle$, $\langle \rho, R \rangle$ and $\langle \tau, T \rangle$

   $n(\nu \mid N) = c(\nu, N) \,/\, \sum_{\nu} c(\nu, N)$

   $r(\rho \mid R) = c(\rho, R) \,/\, \sum_{\rho} c(\rho, R)$

   $t(\tau \mid T) = c(\tau, T) \,/\, \sum_{\tau} c(\tau, T)$

5. Repeat steps 2-4 for several iterations
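Steps 2 to 4 can be sketched as one function. This is a naive illustration, not the efficient algorithm: it assumes that the derivations $\theta$ with $\mathrm{Str}(\theta(E)) = f$ have already been enumerated for every training pair, and it uses a hypothetical encoding in which a derivation is a list of events `(table, value, condition)` and each table maps `(value, condition)` to a probability.

```python
from collections import defaultdict

def em_iteration(corpus, tables):
    """One pass of steps 2-4.

    corpus: one entry per training pair, each a list of derivations;
            a derivation is a list of (table_name, value, condition) events.
    tables: {table_name: {(value, condition): probability}}.
    """
    counts = {name: defaultdict(float) for name in tables}      # step 2
    for derivations in corpus:                                   # step 3
        probs = []
        for theta in derivations:
            p = 1.0
            for name, value, cond in theta:
                p *= tables[name][(value, cond)]
            probs.append(p)
        total = sum(probs)
        if total == 0.0:
            continue                                             # pair not explained by the model
        for theta, p in zip(derivations, probs):
            cnt = p / total
            for name, value, cond in theta:
                counts[name][(value, cond)] += cnt
    new_tables = {}
    for name, table_counts in counts.items():                    # step 4
        norm = defaultdict(float)
        for (value, cond), c in table_counts.items():
            norm[cond] += c
        new_tables[name] = {key: (c / norm[key[1]] if norm[key[1]] > 0 else 0.0)
                            for key, c in table_counts.items()}
    return new_tables

# Toy data (made up): one ambiguous pair and one pair that forces the reordering.
tables = {"r": {("TO VB", "VB TO"): 0.5, ("VB TO", "VB TO"): 0.5}}
corpus = [
    [[("r", "TO VB", "VB TO")], [("r", "VB TO", "VB TO")]],
    [[("r", "TO VB", "VB TO")]],
]
tables = em_iteration(corpus, tables)
print(tables["r"])
```

Calling `em_iteration` repeatedly corresponds to step 5. Enumerating every $\theta$ explicitly is exponential in general, which is why a more efficient formulation is needed in practice.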


Efficient EM training

The EM algorithm uses a graph structure for a pair $\langle E, f \rangle$

• A major-node $v(\varepsilon_i, f_k^l)$ shows a pairing of a subtree of $E$ and a substring of $f$

• Each major-node connects to several $\nu$-subnodes $v(\nu; \varepsilon_i, f_k^l)$, showing which value of $\nu$ is selected. The arc has weight $P(\nu \mid \varepsilon_i)$

• A $\nu$-subnode $v(\nu; \varepsilon_i, f_k^l)$ connects to a final-node with weight $P(\tau \mid \varepsilon_i)$ if $\varepsilon_i$ is a terminal node

• A $\nu$-subnode connects to several $\rho$-subnodes $v(\rho; \nu, \varepsilon_i, f_k^l)$ with weight $P(\rho \mid \varepsilon_i)$

• A $\rho$-subnode is connected to $\pi$-subnodes $v(\pi; \rho, \nu, \varepsilon_i, f_k^l)$ with weight 1.0. The variable $\pi$ shows a particular way of partitioning $f_k^l$

• A $\pi$-subnode is connected to major-nodes corresponding to the children of $\varepsilon_i$ with weight 1.0. A major-node can be connected from different $\pi$-subnodes.

[Figure: layers of the graph from top to bottom: major-node, $\nu$-subnode (arc weight $P(\nu \mid \varepsilon)$), $\rho$-subnode (arc weight $P(\rho \mid \varepsilon)$), $\pi$-subnode, major-node.]


• A trace that starts from the graph root, selects one of the arcs from each major-node, $\nu$-subnode and $\rho$-subnode, and follows all the arcs from each $\pi$-subnode corresponds to a particular $\theta$

• The product of the weights on the trace corresponds to $P(\theta \mid E)$

• An estimation algorithm similar to the inside-outside algorithm can be defined.

• The time complexity is $O(n^3 |\nu| |\rho| |\pi|)$


Decoder description

K. Yamada, K. Knight: A Decoder for Syntax-based Statistical MT. ACL 2002.

Modifications to the original model for phrasal translations:

• Fertility $\mu$ is used to allow 1-to-N mapping:

$$t(\tau \mid T) = t(f_1 f_2 \ldots f_l \mid e) = \mu(l \mid e) \prod_{i=1}^{l} t(f_i \mid e)$$

• Direct translation $\phi$ of an English phrase $e_1 e_2 \ldots e_m$ to a foreign phrase $f_1 f_2 \ldots f_l$ at non-terminal tree nodes:

$$\mathrm{ph}(\phi \mid \Phi) = t(f_1 f_2 \ldots f_l \mid e_1 e_2 \ldots e_m) = \mu(l \mid e_1 e_2 \ldots e_m) \prod_{i=1}^{l} t(f_i \mid e_1 e_2 \ldots e_m)$$

• Linear mix (if $\varepsilon_i$ is non-terminal):

$$P(\theta_i \mid \varepsilon_i) = \lambda_{\Phi_i}\, \mathrm{ph}(\phi_i \mid \Phi_i) + (1 - \lambda_{\Phi_i})\, r(\rho_i \mid R_i)\, n(\nu_i \mid N_i)$$
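The fertility-based phrase probability and the linear mix are simple enough to write out directly. In the sketch below the function names are illustrative and every number in the `mu` and `t` tables, as well as the mixing weight, is made up for the example; only the two formulas come from the slide.

```python
import math

def phrase_prob(f_words, e_key, mu, t):
    """mu(l | e) * prod_i t(f_i | e); e_key is an English word or a whole English phrase."""
    return mu[(len(f_words), e_key)] * math.prod(t[(f, e_key)] for f in f_words)

def node_prob(lam, ph, r, n):
    """Linear mix at a non-terminal node: lam * ph + (1 - lam) * r * n."""
    return lam * ph + (1.0 - lam) * r * n

# Hypothetical tables for the English phrase "listening to" (values are invented).
mu = {(2, "listening to"): 0.5}
t = {("wo", "listening to"): 0.4, ("kiku", "listening to"): 0.5}

ph = phrase_prob(["wo", "kiku"], "listening to", mu, t)
p_node = node_prob(lam=0.3, ph=ph, r=0.749, n=0.252)
print(ph, p_node)
```

With a weight of 0 the node probability reduces to the product of the reordering and insertion terms, and with a weight of 1 only the direct phrase translation is used.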


• Given a French sentence, the decoder finds the most plausible English parse tree

• Idea: a mechanism similar to normal parsing is used

• Steps:

1. Start from an English context-free grammar and incorporate the channel operations into it

2. For each non-lexical rule (such as “VP → VB NP PP”), supplement the grammar with reordered rules; their probabilities are taken from the r-table

3. Rules such as “VP → VP X” and “X → word” are added; their probabilities are taken from the n-table

4. For each lexical rule in the English grammar, add rules such as “englishWord → foreignWord”

5. Parse a string of foreign words

6. Undo the reordering operations and remove the leaf nodes with foreign words

7. Among all possible trees, pick the one for which the product of the LM and TM probabilities is highest
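Steps 2 and 4 amount to expanding the grammar with one extra rule per table entry. The sketch below is a rough illustration with hypothetical function names and rule tuples. It reuses the r-table row for "VB TO" and the t-table column for "listening" from the earlier slides, and it takes the t-table as the source of the lexical-rule probabilities, which is an assumption. Each reordered rule keeps its original right-hand side so that the reordering can be undone in step 6.

```python
R_TABLE = {("VB", "TO"): {("VB", "TO"): 0.252, ("TO", "VB"): 0.749}}
T_TABLE = {"listening": {"kiku": 0.333, "kii": 0.333, "mi": 0.333}}

def reordered_rules(lhs, rhs):
    """Step 2: one rule per reordering, as (lhs, new_rhs, probability, original_rhs)."""
    return [(lhs, new_rhs, p, rhs) for new_rhs, p in R_TABLE[rhs].items()]

def lexical_rules(english_word):
    """Step 4: rules 'englishWord -> foreignWord' with their probabilities."""
    return [(english_word, (foreign,), p)
            for foreign, p in T_TABLE[english_word].items()]

rules = reordered_rules("VB2", ("VB", "TO"))
lex = lexical_rules("listening")
for rule in rules + lex:
    print(rule)
```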
