A4 - Faculty.jacobs-university.de


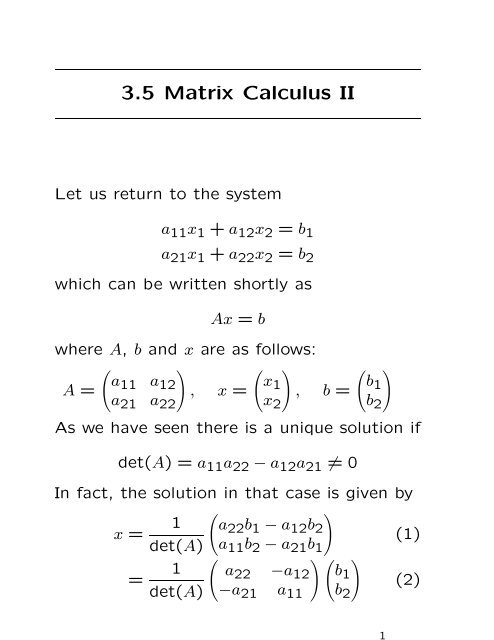

3.5 Matrix Calculus II

Let us return to the system

$$
\begin{aligned}
a_{11}x_1 + a_{12}x_2 &= b_1 \\
a_{21}x_1 + a_{22}x_2 &= b_2
\end{aligned}
$$

which can be written compactly as

$$Ax = b$$

where $A$, $x$ and $b$ are as follows:

$$
A = \begin{pmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \end{pmatrix}, \quad
x = \begin{pmatrix} x_1 \\ x_2 \end{pmatrix}, \quad
b = \begin{pmatrix} b_1 \\ b_2 \end{pmatrix}.
$$

As we have seen, there is a unique solution if

$$\det(A) = a_{11}a_{22} - a_{12}a_{21} \neq 0.$$

In fact, the solution in that case is given by

$$
x = \frac{1}{\det(A)} \begin{pmatrix} a_{22}b_1 - a_{12}b_2 \\ a_{11}b_2 - a_{21}b_1 \end{pmatrix} \tag{1}
$$

$$
\phantom{x} = \frac{1}{\det(A)} \begin{pmatrix} a_{22} & -a_{12} \\ -a_{21} & a_{11} \end{pmatrix} \begin{pmatrix} b_1 \\ b_2 \end{pmatrix} \tag{2}
$$
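Formula (1) translates directly into a few lines of code. The following is a minimal sketch, not part of the original notes; the function name `solve_2x2` and the sample coefficients are assumptions chosen for illustration, and exact rational arithmetic is used so that no rounding occurs.

```python
from fractions import Fraction

def solve_2x2(a11, a12, a21, a22, b1, b2):
    """Solve the 2x2 system Ax = b via formula (1); returns (x1, x2)."""
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ValueError("det(A) = 0: no unique solution")
    # Fraction keeps the division exact for integer inputs.
    x1 = Fraction(a22 * b1 - a12 * b2, det)
    x2 = Fraction(a11 * b2 - a21 * b1, det)
    return x1, x2

# Hypothetical example: x1 + 2*x2 = 5 and 3*x1 + 4*x2 = 11
print(solve_2x2(1, 2, 3, 4, 5, 11))
```

For the sample system the result is $x_1 = 1$, $x_2 = 2$, which can be confirmed by substitution.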


Now it is tempting to multiply the matrix equation $Ax = b$ by a matrix $A^{-1}$ such that

$$x = A^{-1}Ax = A^{-1}b.$$

If we compare this with (1) and (2), it follows that

$$
A^{-1} = \frac{1}{\det(A)} \begin{pmatrix} a_{22} & -a_{12} \\ -a_{21} & a_{11} \end{pmatrix}.
$$

We have $A^{-1}A = AA^{-1} = E$, that is, $A$ has an inverse matrix. For $\det(A) = 0$ there does not exist an inverse matrix for $A$. The definition of an invertible matrix is as follows:

Definition (Non-singular matrix). A square matrix $A \in M_n$ is called non-singular or invertible if there exists a matrix $B \in M_n$ such that

$$AB = E = BA.$$

Any matrix $B$ with the above property is called an inverse of $A$. If $A$ does not have an inverse, $A$ is called singular.
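The closed form for the $2 \times 2$ inverse can be checked against this definition. The sketch below is illustrative only; `inverse_2x2`, `matmul` and the sample matrix are assumed names and values, not taken from the notes.

```python
from fractions import Fraction

def inverse_2x2(A):
    """Inverse of a 2x2 matrix via (1/det(A)) * [[a22, -a12], [-a21, a11]]."""
    (a11, a12), (a21, a22) = A
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ValueError("singular matrix: det(A) = 0")
    d = Fraction(det)
    return [[a22 / d, -a12 / d], [-a21 / d, a11 / d]]

def matmul(A, B):
    """Plain matrix product of two lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]

A = [[1, 2], [3, 4]]      # hypothetical example matrix
B = inverse_2x2(A)
E = [[1, 0], [0, 1]]
# Both products must equal E for B to be an inverse of A.
print(matmul(A, B) == E, matmul(B, A) == E)
```

Both comparisons hold for the sample matrix, so `B` satisfies $AB = E = BA$.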


Theorem (Inverses are unique). If $A$ has inverses $B$ and $C$, then $B = C$.

Proof. If $B$ and $C$ are inverses of $A$, then $AB = E = BA$ and $AC = E = CA$. It follows that

$$B = BE = B(AC) = (BA)C = EC = C.$$

Notation: If $A$ has an inverse, it is denoted by $A^{-1}$. So $AA^{-1} = A^{-1}A = E$.

Example: $A = \begin{pmatrix} 1 & 2 \\ 4 & 8 \end{pmatrix}$ is singular. For suppose $B = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$ is an inverse of $A$. Then $AB = E$ implies the inconsistent system

$$
\begin{aligned}
1 &= a + 2c \\
0 &= 4a + 8c
\end{aligned}
$$

The second equation gives $a + 2c = 0$, which contradicts the first.


Theorem (Unique solution of systems of linear equations). If the coefficient matrix $A$ of a system of $n$ equations in $n$ unknowns is non-singular, then the system $Ax = b$ has the unique solution $x = A^{-1}b$.

Theorem. Let $A$ be a square matrix. If $A$ is non-singular, then the homogeneous system $Ax = 0$ has only the trivial solution $x = 0$. Equivalently, if the homogeneous system $Ax = 0$ has a non-trivial solution, then $A$ is singular.

Example: Consider the system given by

$$
\begin{pmatrix} 1 & 2 & 3 \\ 4 & 5 & 6 \\ 7 & 8 & 9 \end{pmatrix}
\begin{pmatrix} x_1 \\ x_2 \\ x_3 \end{pmatrix} =
\begin{pmatrix} 0 \\ 0 \\ 0 \end{pmatrix}
$$

If we apply the Gauß-Jordan algorithm we obtain the equivalent equation

$$
\begin{pmatrix} 1 & 0 & -1 \\ 0 & 1 & 2 \\ 0 & 0 & 0 \end{pmatrix}
\begin{pmatrix} x_1 \\ x_2 \\ x_3 \end{pmatrix} =
\begin{pmatrix} 0 \\ 0 \\ 0 \end{pmatrix}
$$


We easily see a non-trivial solution

$$x^t = (1, -2, 1)$$

and hence the matrix is singular.
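The non-trivial solution can be confirmed by a direct matrix-vector product. This is a small illustrative check; the helper name `matvec` is an assumption.

```python
def matvec(A, x):
    """Product of a matrix (list of rows) with a vector."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

A = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
x = [1, -2, 1]

# A non-zero x with Ax = 0 shows that A is singular.
print(matvec(A, x))
```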

Theorem. A square matrix $A$ is non-singular if and only if its reduced row-echelon form is non-singular.

As soon as $A$ or its reduced row-echelon form has a zero row, the matrix is singular.

Corollary. Suppose that $A, B \in M_n$ such that $AB = E$. Then also $BA = E$.

Proof. Let $AB = E$ and assume $Bx = 0$. Then $A(Bx) = A0 = 0$, so that $x = Ex = (AB)x = 0$. Hence $Bx = 0$ has only the trivial solution, and $B$ is non-singular by the theorem. Then from $AB = E$ we deduce

$$A = (AB)B^{-1} = EB^{-1} = B^{-1}$$

and $BA = BB^{-1} = E$.

Theorem. If $A$ and $B$ are non-singular matrices in $M_n$, then

$$(AB)^{-1} = B^{-1}A^{-1}.$$
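The notes state this result without proof. One way to see it, sketched here using only associativity and the definition of the inverse, is to multiply $AB$ by the proposed inverse from both sides:

$$
\begin{aligned}
(AB)(B^{-1}A^{-1}) &= A(BB^{-1})A^{-1} = AEA^{-1} = AA^{-1} = E, \\
(B^{-1}A^{-1})(AB) &= B^{-1}(A^{-1}A)B = B^{-1}EB = B^{-1}B = E.
\end{aligned}
$$

Since inverses are unique, $B^{-1}A^{-1}$ is the inverse of $AB$. Note the reversed order of the factors.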


Recall that also $(AB)^t = B^t A^t$ for matrices $A$, $B$. There are three important classes of matrices that can be defined concisely in terms of the transpose operation.

Definition (Symmetric matrix). A square matrix $A$ is called symmetric if $A^t = A$. In other words, $A = (a_{ij})$ such that $a_{ij} = a_{ji}$ for all $i, j$. Hence

$$\begin{pmatrix} a & b \\ b & d \end{pmatrix}$$

is a general $2 \times 2$ symmetric matrix.

Definition (Skew-symmetric matrix). A square matrix $A$ is called skew-symmetric if $A^t = -A$. In other words, $A = (a_{ij})$ such that $a_{ij} = -a_{ji}$ for all $i, j$. Hence

$$\begin{pmatrix} 0 & b \\ -b & 0 \end{pmatrix}$$

is a general $2 \times 2$ skew-symmetric matrix.
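Both definitions reduce to a comparison with the transpose, which is easy to test. The following sketch uses assumed helper names (`transpose`, `is_symmetric`, `is_skew_symmetric`) and sample entries chosen for illustration.

```python
def transpose(A):
    """Transpose of a matrix given as a list of rows."""
    return [list(col) for col in zip(*A)]

def is_symmetric(A):
    # A^t = A
    return transpose(A) == A

def is_skew_symmetric(A):
    # A^t = -A
    return transpose(A) == [[-a for a in row] for row in A]

print(is_symmetric([[1, 2], [2, 5]]))         # general form with a=1, b=2, d=5
print(is_skew_symmetric([[0, 3], [-3, 0]]))   # general form with b=3
```

A skew-symmetric matrix necessarily has zeros on its diagonal, since $a_{ii} = -a_{ii}$.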



Definition (Orthogonal matrix). A square matrix $A$ is called orthogonal if $A^{-1} = A^t$. Thus $A$ is necessarily non-singular.

Example: Verify that the following matrix is orthogonal:

$$
A = \begin{pmatrix}
\frac{1}{9} & \frac{8}{9} & -\frac{4}{9} \\
\frac{4}{9} & -\frac{4}{9} & -\frac{7}{9} \\
\frac{8}{9} & \frac{1}{9} & \frac{4}{9}
\end{pmatrix}
$$

We say that vectors $v_1, v_2, \ldots, v_n$ form an orthonormal set if the vectors are perpendicular to each other and have length one, that is,

$$
v_i \cdot v_j =
\begin{cases}
0 & \text{if } i \neq j, \\
1 & \text{if } i = j.
\end{cases}
$$

Theorem. Let $A$ be a square matrix. Then the following are equivalent:

(a) $A$ is orthogonal.

(b) The rows of $A$ form an orthonormal set.

(c) The columns of $A$ form an orthonormal set.
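Conditions (b) and (c) give a convenient test for orthogonality. The sketch below applies it to the $3 \times 3$ example matrix with ninths as entries, as read from the example above; the helper names `dot` and `is_orthonormal` are assumptions, and exact fractions avoid rounding issues.

```python
from fractions import Fraction

def dot(u, v):
    """Dot product of two vectors."""
    return sum(a * b for a, b in zip(u, v))

def is_orthonormal(vectors):
    """True if v_i . v_j is 1 for i == j and 0 otherwise."""
    return all(dot(u, v) == (1 if i == j else 0)
               for i, u in enumerate(vectors)
               for j, v in enumerate(vectors))

# Example matrix: every entry is an integer divided by 9.
A = [[Fraction(n, 9) for n in row]
     for row in ([1, 8, -4], [4, -4, -7], [8, 1, 4])]

rows = A
cols = [list(c) for c in zip(*A)]
print(is_orthonormal(rows), is_orthonormal(cols))
```

Both checks succeed, in line with the equivalence of (b) and (c).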


When is a matrix singular? We want to know this for solving systems of linear equations. The answer is given by determinants: each square matrix $A$ is assigned a special scalar called the determinant of $A$, denoted by $\det(A)$ or $|A|$, i.e.,

$$
\det(A) = |A| =
\begin{vmatrix}
a_{11} & a_{12} & \cdots & a_{1n} \\
a_{21} & a_{22} & \cdots & a_{2n} \\
\vdots & \vdots & \ddots & \vdots \\
a_{n1} & a_{n2} & \cdots & a_{nn}
\end{vmatrix}
$$

$A$ will be singular if and only if $\det(A) = 0$. For $A \in M_n$ we know formulas for $n = 1, 2$:

$$
|a_{11}| = a_{11}, \qquad
\begin{vmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \end{vmatrix} = a_{11}a_{22} - a_{12}a_{21}
$$

The formulas will be much more complicated for bigger $n$. For $n = 3$ we have

$$
\begin{vmatrix}
a_{11} & a_{12} & a_{13} \\
a_{21} & a_{22} & a_{23} \\
a_{31} & a_{32} & a_{33}
\end{vmatrix}
= a_{11} \begin{vmatrix} a_{22} & a_{23} \\ a_{32} & a_{33} \end{vmatrix}
- a_{12} \begin{vmatrix} a_{21} & a_{23} \\ a_{31} & a_{33} \end{vmatrix}
+ a_{13} \begin{vmatrix} a_{21} & a_{22} \\ a_{31} & a_{32} \end{vmatrix}
$$


or equivalently,

$$\det(A) = a_{11}|A_{11}| - a_{12}|A_{12}| + a_{13}|A_{13}|$$

where $A_{1j}$ denotes the $2 \times 2$ matrix obtained from $A$ by omission of the first row and the $j$-th column. A more convenient method to compute $\det(A)$ for $A \in M_3$ is the rule of Sarrus.

Definition (Determinant of a square matrix). The determinant $\det(A)$ of a matrix $A \in M_n$ is recursively defined by

$$\det(A) = \sum_{j=1}^{n} (-1)^{1+j} a_{1j} \det(A_{1j})$$

where $A_{1j}$ is of size $n-1$ and is obtained from $A$ by omission of the first row and the $j$-th column. The recursion starts from $\det(A) = a_{11}$ for $n = 1$.
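The recursive definition maps almost literally onto a recursive function. The following is a minimal sketch; the function name `det` and the list-of-rows representation are assumptions. It is meant to mirror the definition and is far too slow for large $n$.

```python
def det(A):
    """Determinant by expansion along the first row (recursive definition)."""
    n = len(A)
    if n == 1:
        return A[0][0]
    total = 0
    for j in range(n):
        # A_1j: omit the first row and the j-th column (j is 0-based here,
        # so the sign (-1)^(1+j) of the 1-based formula becomes (-1)^j).
        minor = [row[:j] + row[j + 1:] for row in A[1:]]
        total += (-1) ** j * A[0][j] * det(minor)
    return total

print(det([[1, 2], [4, 8]]))                     # singular 2x2 example
print(det([[1, 2, 3], [4, 5, 6], [7, 8, 9]]))    # singular 3x3 example
```

Both matrices are the singular examples from earlier in the section, and the function returns 0 for each.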

As $n$ increases, the number of terms in the determinant becomes astronomical. We have $n!$ terms, and Stirling's formula says

$$n! \sim \sqrt{2\pi n} \left( \frac{n}{e} \right)^n$$
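To get a feeling for this growth, the exact value of $n!$ can be compared with Stirling's approximation. This is an illustrative sketch; the function name `stirling` and the chosen values of $n$ are assumptions.

```python
import math

def stirling(n):
    """Stirling's approximation sqrt(2*pi*n) * (n/e)^n."""
    return math.sqrt(2 * math.pi * n) * (n / math.e) ** n

for n in (5, 10, 20):
    exact = math.factorial(n)
    # Print n, the exact number of terms n!, and the ratio approximation/exact.
    print(n, exact, stirling(n) / exact)
```

The ratio is slightly below 1 and moves closer to 1 as $n$ grows, while $n!$ itself already exceeds $10^{18}$ for $n = 20$.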


For $n = 4$ the formula for the determinant is given by

$$
\begin{aligned}
\det(A) ={} & a_{11}a_{22}a_{33}a_{44} - a_{11}a_{22}a_{34}a_{43} \\
& - a_{11}a_{23}a_{32}a_{44} + a_{11}a_{23}a_{34}a_{42} \\
& + a_{11}a_{24}a_{32}a_{43} - a_{11}a_{24}a_{33}a_{42} \\
& - a_{12}a_{21}a_{33}a_{44} + a_{12}a_{21}a_{34}a_{43} \\
& + a_{12}a_{23}a_{31}a_{44} - a_{12}a_{23}a_{34}a_{41} \\
& - a_{12}a_{24}a_{31}a_{43} + a_{12}a_{24}a_{33}a_{41} \\
& + a_{13}a_{21}a_{32}a_{44} - a_{13}a_{21}a_{34}a_{42} \\
& - a_{13}a_{22}a_{31}a_{44} + a_{13}a_{22}a_{34}a_{41} \\
& + a_{13}a_{24}a_{31}a_{42} - a_{13}a_{24}a_{32}a_{41} \\
& - a_{14}a_{21}a_{32}a_{43} + a_{14}a_{21}a_{33}a_{42} \\
& + a_{14}a_{22}a_{31}a_{43} - a_{14}a_{22}a_{33}a_{41} \\
& - a_{14}a_{23}a_{31}a_{42} + a_{14}a_{23}a_{32}a_{41}
\end{aligned}
$$

Theorem. For $A, B \in M_n$ the following hold:

$$
\begin{aligned}
\det(E) &= 1 \\
\det(A^t) &= \det(A) \\
\det(AB) &= \det(A)\det(B)
\end{aligned}
$$


Example: Consider the system of linear equations $Ax = b$ with

$$
A = \begin{pmatrix} 1 & 2 & 3 \\ 4 & 5 & 6 \\ 7 & 8 & 10 \end{pmatrix}, \quad
x = \begin{pmatrix} x_1 \\ x_2 \\ x_3 \end{pmatrix}, \quad
b = \begin{pmatrix} 2 \\ 8 \\ 13 \end{pmatrix}
$$

We compute $\det(A) = -3$, hence we obtain the unique solution by $x = A^{-1}b$. How do we compute $A^{-1}$?

We can again use the Gauß-Jordan algorithm, this time simultaneously on the partitioned matrix $[A \mid E]$:

$$
\left(\begin{array}{ccc|ccc}
1 & 2 & 3 & 1 & 0 & 0 \\
4 & 5 & 6 & 0 & 1 & 0 \\
7 & 8 & 10 & 0 & 0 & 1
\end{array}\right)
$$

$$
\left(\begin{array}{ccc|ccc}
1 & 2 & 3 & 1 & 0 & 0 \\
0 & 1 & 2 & \frac{4}{3} & -\frac{1}{3} & 0 \\
0 & 0 & 1 & 1 & -2 & 1
\end{array}\right)
$$

$$
\left(\begin{array}{ccc|ccc}
1 & 0 & 0 & -\frac{2}{3} & -\frac{4}{3} & 1 \\
0 & 1 & 0 & -\frac{2}{3} & \frac{11}{3} & -2 \\
0 & 0 & 1 & 1 & -2 & 1
\end{array}\right)
$$


Hence we obtain

$$
x = A^{-1}b =
\begin{pmatrix}
-\frac{2}{3} & -\frac{4}{3} & 1 \\
-\frac{2}{3} & \frac{11}{3} & -2 \\
1 & -2 & 1
\end{pmatrix}
\begin{pmatrix} 2 \\ 8 \\ 13 \end{pmatrix} =
\begin{pmatrix} 1 \\ 2 \\ -1 \end{pmatrix}
$$
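The elimination on the partitioned matrix $[A \mid E]$ can be sketched in code as follows. This is one possible implementation under stated assumptions, not the procedure from the lecture: the function name `inverse` is hypothetical, pivots are simply the first non-zero entry in each column, and exact fractions are used so that the result can be compared with the hand computation.

```python
from fractions import Fraction

def inverse(A):
    """Invert A by Gauss-Jordan elimination on [A | E] with exact fractions."""
    n = len(A)
    # Build the partitioned matrix [A | E].
    M = [[Fraction(v) for v in row] + [Fraction(int(i == j)) for j in range(n)]
         for i, row in enumerate(A)]
    for c in range(n):
        # Find a row at or below position c with a non-zero entry in column c.
        p = next((r for r in range(c, n) if M[r][c] != 0), None)
        if p is None:
            raise ValueError("matrix is singular")
        M[c], M[p] = M[p], M[c]
        pivot = M[c][c]
        M[c] = [v / pivot for v in M[c]]          # scale pivot row to leading 1
        for r in range(n):
            if r != c:
                f = M[r][c]
                M[r] = [vr - f * vc for vr, vc in zip(M[r], M[c])]
    # The right-hand block now holds the inverse.
    return [row[n:] for row in M]

A = [[1, 2, 3], [4, 5, 6], [7, 8, 10]]
b = [2, 8, 13]
A_inv = inverse(A)
x = [sum(a * bi for a, bi in zip(row, b)) for row in A_inv]
print(x)
```

For the example the computed inverse agrees with the right-hand block of the final partitioned matrix, and the product with $b$ gives $x^t = (1, 2, -1)$.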

Least squares solutions of linear equations: Suppose $Ax = b$ represents a system of linear equations with real coefficients which may be inconsistent, because of the possibility of experimental errors in determining $A$ or $b$. As an example, the following system is inconsistent:

$$
\begin{aligned}
x_1 &= 1 \\
x_2 &= 2 \\
x_1 + x_2 &= 3.001
\end{aligned}
$$

Theorem. The associated normal equation

$$A^t A x = A^t b$$

is always consistent and any solution of this system minimizes the sum

$$r_1^2 + \cdots + r_m^2$$

where the residuals $r_i$ are defined by $r_i = a_{i1}x_1 + \cdots + a_{in}x_n - b_i$ for $i = 1, \ldots, m$.


Example: Consider the above inconsistent system $Ax = b$ with

$$
A = \begin{pmatrix} 1 & 0 \\ 0 & 1 \\ 1 & 1 \end{pmatrix}, \quad
x = \begin{pmatrix} x_1 \\ x_2 \end{pmatrix}, \quad
b = \begin{pmatrix} 1 \\ 2 \\ 3.001 \end{pmatrix}
$$

Then we obtain

$$
A^t A = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix}, \quad
A^t b = \begin{pmatrix} 4.001 \\ 5.001 \end{pmatrix}
$$

So the normal equations are

$$
\begin{aligned}
2x_1 + x_2 &= 4.001 \\
x_1 + 2x_2 &= 5.001
\end{aligned}
$$

which have the unique solution

$$x_1 = \frac{3.001}{3}, \quad x_2 = \frac{6.001}{3}$$

The solution minimizes

$$r_1^2 + r_2^2 + r_3^2 = (x_1 - 1)^2 + (x_2 - 2)^2 + (x_1 + x_2 - 3.001)^2$$
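The steps of this example (form $A^tA$ and $A^tb$, then solve the $2 \times 2$ normal equations) can be reproduced as a short script. This is an illustrative sketch with assumed variable names; the $2 \times 2$ system is solved with the determinant formula from the beginning of the section, and exact fractions are used so that the result matches the values above exactly.

```python
from fractions import Fraction

A = [[1, 0], [0, 1], [1, 1]]
b = [Fraction("1"), Fraction("2"), Fraction("3.001")]

At = [list(col) for col in zip(*A)]                       # transpose of A
AtA = [[sum(p * q for p, q in zip(r, c)) for c in At] for r in At]
Atb = [sum(p * q for p, q in zip(r, b)) for r in At]

# Solve the 2x2 normal equations A^t A x = A^t b via the determinant formula.
det = AtA[0][0] * AtA[1][1] - AtA[0][1] * AtA[1][0]
x1 = (AtA[1][1] * Atb[0] - AtA[0][1] * Atb[1]) / det
x2 = (AtA[0][0] * Atb[1] - AtA[1][0] * Atb[0]) / det

# Residuals r_i = a_i1*x1 + a_i2*x2 - b_i and their sum of squares.
residuals = [row[0] * x1 + row[1] * x2 - bi for row, bi in zip(A, b)]
print(x1, x2, sum(r * r for r in residuals))
```

The script yields $x_1 = 3001/3000$ and $x_2 = 6001/3000$, the same values as $3.001/3$ and $6.001/3$, with all three residuals equal to $1/3000$ in absolute value.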

