Markov Chains


1 Discrete or Continuous Markov Chain

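What is the difference between a continuous-time Markov chain and a discrete-time Markov chain?

In a discrete-time Markov chain the state can change only at the integer time steps $n = 0, 1, 2, \dots$, and the dynamics are given by a matrix of one-step transition probabilities $p_{ij}$. In a continuous-time Markov chain the process stays in each state for an exponentially distributed holding time and can jump at any instant, and the dynamics are given by transition rates rather than probabilities.

See e.g. Moshe Zukerman or Google for more detail.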
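The following minimal Python sketch illustrates the distinction. It is not part of the original exercise, and it simulates a two-state chain both ways. The function names, the matrix `P` and the exit rates `rates` are hypothetical choices for illustration. The discrete-time path changes state only at integer steps. The continuous-time path jumps at random instants, after exponential holding times.

```python
import random


def simulate_dtmc(P, start, n_steps, rng):
    """Discrete time: exactly one (possibly self-) transition per integer step."""
    state = start
    path = [(0, state)]
    for t in range(1, n_steps + 1):
        # Two-state chain: go to state 1 with probability P[state][1], else to state 0.
        state = 1 if rng.random() < P[state][1] else 0
        path.append((t, state))
    return path


def simulate_ctmc(rates, start, t_end, rng):
    """Continuous time, two states: hold for an Exp(rates[state]) time, then switch."""
    state, t = start, 0.0
    path = [(t, state)]
    while True:
        t += rng.expovariate(rates[state])  # exponential holding time in the current state
        if t > t_end:
            return path
        state = 1 - state  # with two states, every jump goes to the other state
        path.append((t, state))


rng = random.Random(1)
print(simulate_dtmc([[0.9, 0.1], [0.1, 0.9]], 0, 5, rng))  # times 0, 1, ..., 5
print(simulate_ctmc([1.0, 2.0], 0, 3.0, rng))              # jump times are real numbers
```

In the discrete-time path a step may leave the state unchanged (a self-loop). In this continuous-time sketch, by contrast, every recorded event is a jump to the other state.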

2 Two-state Markov Chain

Consider a communications system which transmits the digits 0 and 1. Each transmitted digit must pass through several stages. At each stage there is a probability $p$ that the digit entered will be unchanged when it leaves. Letting $X_n$ denote the digit entering the $n$th stage, $\{X_n, n = 0, 1, \dots\}$ is a two-state Markov chain.

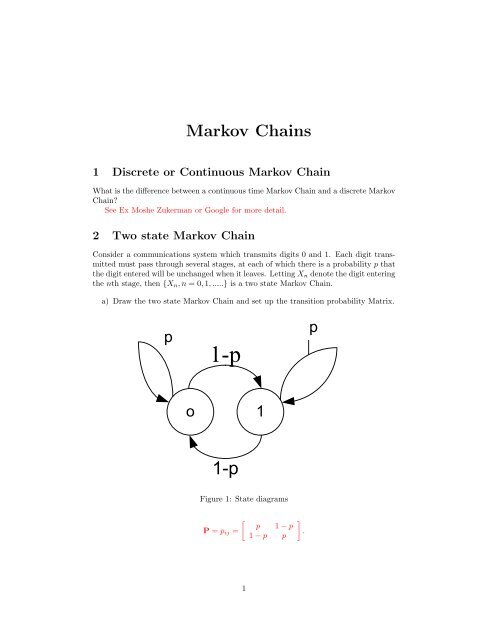

a) Draw the two-state Markov chain and set up the transition probability matrix.

Figure 1: State diagram of the two-state chain (self-loops with probability $p$ at states 0 and 1; transitions $0 \to 1$ and $1 \to 0$ with probability $1-p$).

$$P = [p_{ij}] = \begin{bmatrix} p & 1-p \\ 1-p & p \end{bmatrix}.$$
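Here is a minimal sketch in plain Python. It assumes an illustrative value $p = 9/10$ and uses exact `Fraction` arithmetic. It builds the matrix above and checks that each row sums to 1. It then computes the probability that a digit is unchanged after $n$ stages, $(P^n)_{00}$, and compares it with $1/2 + (2p-1)^n/2$. That closed form holds because $P$ has eigenvalues $1$ and $2p-1$. The helper names are hypothetical.

```python
from fractions import Fraction


def two_state_matrix(p):
    """Relay chain: the digit is kept with probability p, flipped with probability 1 - p."""
    return [[p, 1 - p], [1 - p, p]]


def mat_mul(A, B):
    """Plain matrix product of two lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def mat_pow(P, n):
    """P^n by repeated multiplication (fine for small n)."""
    R = [[1 if i == j else 0 for j in range(len(P))] for i in range(len(P))]
    for _ in range(n):
        R = mat_mul(R, P)
    return R


p = Fraction(9, 10)  # illustrative value
P = two_state_matrix(p)
assert all(sum(row) == 1 for row in P)  # each row is a probability distribution

# Probability that a digit is unchanged after n stages, (P^n)[0][0],
# next to the closed form 1/2 + (2p - 1)^n / 2.
for n in range(1, 6):
    print(n, mat_pow(P, n)[0][0], Fraction(1, 2) + (2 * p - 1) ** n / 2)
```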


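b) Solve the state equations to find the limiting probabilities.

$$\pi_0 = \pi_0 p + \pi_1 (1-p), \qquad \pi_0 + \pi_1 = 1.$$

The first equation gives $\pi_0 (1-p) = \pi_1 (1-p)$, so $\pi_0 = \pi_1$ when $p \neq 1$. Together with the second equation this implies that

$$\pi_0 = \pi_1 = 0.5.$$

For $0 < p < 1$ the chain is irreducible and aperiodic, so these are also the limiting probabilities.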
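The limiting behaviour can also be checked numerically. This sketch uses a hypothetical helper `iterate_distribution` and an illustrative value $p = 0.9$. It starts from a chain that is in state 0 with certainty and repeatedly applies $\pi \leftarrow \pi P$. The distribution approaches $(0.5, 0.5)$.

```python
def iterate_distribution(P, pi0, n):
    """Return pi_n = pi_0 P^n by applying pi <- pi P n times."""
    pi = list(pi0)
    for _ in range(n):
        pi = [sum(pi[i] * P[i][j] for i in range(len(pi))) for j in range(len(P[0]))]
    return pi


p = 0.9  # illustrative value with 0 < p < 1
P = [[p, 1 - p], [1 - p, p]]
for n in (1, 5, 20, 100):
    print(n, iterate_distribution(P, [1.0, 0.0], n))
```

With $p = 0$ the same iteration alternates between $(1, 0)$ and $(0, 1)$ and never converges. This is why $0 < p < 1$ is needed for limiting probabilities.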

3 One server with blocking<br />

Figure 2: One server with blocking (diagram label: Rejected Customers).

Fig. 2 shows a system that consists of one server that can only handle one customer at a time. This means that if a customer arrives while the server is busy, the customer is rejected. Let $I \in \{0, 1\}$ be the number of customers in the system. Assume that in every time unit a new customer arrives with probability $\alpha$, and that a customer in service completes service with probability $\beta$. If both a service completion and an arrival occur in the same time unit, the system remains unchanged.

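a) The probability $r$ is the probability that when the system is in state 1, it will remain in this state. Determine $r$.

The system stays in state 1 in three cases:

- an arrival and a completion (the new customer takes over the server);
- an arrival but no completion (the arrival is rejected);
- neither an arrival nor a completion.

$$r = \alpha\beta + \alpha(1-\beta) + (1-\beta)(1-\alpha) = 1 - \beta(1-\alpha).$$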
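The transition probabilities of this system can be written as a small function. In this sketch the name `blocking_matrix` is hypothetical, and $\alpha = 1/4$, $\beta = 1/2$ are illustrative values. With them, $r = 5/8 = 0.625$ and $\beta(1-\alpha) = 3/8 = 0.375$. The value `r` is built from the three cases above, so it can be checked against $1 - \beta(1-\alpha)$ and against the requirement that each row sums to 1.

```python
from fractions import Fraction


def blocking_matrix(alpha, beta):
    """One-time-unit transition matrix of the single-server system with blocking.

    State 0: an arrival (probability alpha) moves the system to state 1.
    State 1: the system empties only if the customer completes service
    and no new customer arrives in the same time unit.
    """
    # The three ways of staying in state 1: arrival and completion,
    # arrival without completion (arrival rejected), neither event.
    r = alpha * beta + alpha * (1 - beta) + (1 - beta) * (1 - alpha)
    return [[1 - alpha, alpha], [beta * (1 - alpha), r]]


alpha, beta = Fraction(1, 4), Fraction(1, 2)  # illustrative values
P = blocking_matrix(alpha, beta)
print(P)  # [[Fraction(3, 4), Fraction(1, 4)], [Fraction(3, 8), Fraction(5, 8)]]
```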

b) Draw the state transition diagram with all the transition probabilities.

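c) Set up the state transition matrix and use this to find the stationary probabilities $P(I = 0)$ and $P(I = 1)$.

The numerical matrix corresponds to $\alpha = 0.25$ and $\beta = 0.5$. These give $1-\alpha = 0.75$, $\beta(1-\alpha) = 0.375$ and $r = 0.625$:

$$P = [p_{ij}] = \begin{bmatrix} 0.75 & 0.25 \\ 0.375 & 0.625 \end{bmatrix}.$$

The first column of $\pi = \pi P$ gives $\pi_0 = 0.75\,\pi_0 + 0.375\,\pi_1$, i.e. $0.25\,\pi_0 = 0.375\,\pi_1$. Combining this with $\pi_0 + \pi_1 = 1$:

$$\pi_0 = 0.6 \quad\text{and}\quad \pi_1 = 0.4.$$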
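For chains with more states, the same calculation becomes a linear system. This minimal sketch assumes exact `Fraction` entries and a chain with a unique stationary distribution. The hypothetical function `stationary` writes out the balance equations of $\pi = \pi P$. Because these equations are linearly dependent, it replaces one of them with $\sum_i \pi_i = 1$ and solves the system by Gauss-Jordan elimination. Applied to the matrix with rows $(0.75, 0.25)$ and $(0.375, 0.625)$, it returns $\pi_0 = 3/5$ and $\pi_1 = 2/5$.

```python
from fractions import Fraction


def stationary(P):
    """Solve pi = pi P with sum(pi) = 1 by Gauss-Jordan elimination.

    Assumes a unique stationary distribution (otherwise no pivot is found).
    """
    n = len(P)
    # Row j of A is the balance equation for state j: sum_i pi_i (P[i][j] - [i == j]) = 0.
    A = [[P[i][j] - (1 if i == j else 0) for i in range(n)] for j in range(n)]
    rhs = [0] * n
    # The balance equations are dependent: replace the last one by sum_i pi_i = 1.
    A[-1] = [1] * n
    rhs[-1] = 1
    for col in range(n):
        # Find a row with a non-zero entry in this column and swap it into place.
        piv = next(r for r in range(col, n) if A[r][col] != 0)
        A[col], A[piv] = A[piv], A[col]
        rhs[col], rhs[piv] = rhs[piv], rhs[col]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col and A[r][col] != 0:
                f = Fraction(A[r][col]) / A[col][col]
                A[r] = [a - f * b for a, b in zip(A[r], A[col])]
                rhs[r] -= f * rhs[col]
    # A is now diagonal.
    return [Fraction(rhs[i]) / A[i][i] for i in range(n)]


P = [[Fraction(3, 4), Fraction(1, 4)], [Fraction(3, 8), Fraction(5, 8)]]
print(stationary(P))  # [Fraction(3, 5), Fraction(2, 5)]
```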


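Figure 3: State diagram (state 0: self-loop $1-\alpha$, transition $0 \to 1$ with probability $\alpha$; state 1: self-loop $r$, transition $1 \to 0$ with probability $\beta(1-\alpha)$).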

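d) Show that you will get the same answer by using cut equations.

Cutting between states 0 and 1, the probability flow in each direction must balance:

$$\pi_0 \alpha = \pi_1 \beta(1-\alpha) \quad\Rightarrow\quad 0.25\,\pi_0 = 0.375\,\pi_1.$$

Together with $\pi_0 + \pi_1 = 1$, this again gives $\pi_0 = 0.6$ and $\pi_1 = 0.4$.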
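The cut between states 0 and 1 balances the flow in each direction, $\pi_0 \alpha = \pi_1 \beta(1-\alpha)$. Together with $\pi_0 + \pi_1 = 1$, this has a closed form. The following sketch evaluates it exactly. It uses a hypothetical name `cut_solution` and the illustrative values $\alpha = 1/4$, $\beta = 1/2$, and it gives the same $3/5$ and $2/5$ as the matrix solution.

```python
from fractions import Fraction


def cut_solution(alpha, beta):
    """Stationary probabilities from the single cut between states 0 and 1.

    Flow 0 -> 1 equals flow 1 -> 0:  pi0 * alpha = pi1 * beta * (1 - alpha),
    and pi0 + pi1 = 1.
    """
    down = beta * (1 - alpha)  # one-step probability of going from 1 to 0
    pi0 = down / (alpha + down)
    pi1 = alpha / (alpha + down)
    return pi0, pi1


pi0, pi1 = cut_solution(Fraction(1, 4), Fraction(1, 2))
print(pi0, pi1)  # 3/5 2/5
```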

e) What is the expected number of customers in service (the carried traffic)?

$$A' = 0 \cdot \pi_0 + 1 \cdot \pi_1 = \pi_1 = 0.4.$$
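Since $A' = E[I] = \pi_1$ is the long-run fraction of time the server is busy, it can also be estimated by simulating the system one time unit at a time. This sketch uses a hypothetical function `simulate_busy_fraction` and the illustrative values $\alpha = 0.25$, $\beta = 0.5$, and it follows the rules stated in the problem. The estimate is random, so it should only be close to $0.4$, not equal to it.

```python
import random


def simulate_busy_fraction(alpha, beta, n_slots, seed=0):
    """Estimate pi_1 (the carried traffic) as the fraction of time units with I = 1."""
    rng = random.Random(seed)
    state = 0  # I: number of customers in the system (0 or 1)
    busy_units = 0
    for _ in range(n_slots):
        arrival = rng.random() < alpha
        if state == 1:
            completion = rng.random() < beta
            # Completion without a new arrival empties the system; in every other
            # case the system stays busy (an arrival with no completion is rejected).
            if completion and not arrival:
                state = 0
        elif arrival:
            state = 1
        busy_units += state
    return busy_units / n_slots


print(simulate_busy_fraction(0.25, 0.5, 200_000))  # close to pi_1 = 0.4, not exact
```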

4 Negative exponential distribution<br />

Let $X_1, \dots, X_n$ be independent exponential random variables with rates $\mu_i$, i.e.

$$E(X_i) = \frac{1}{\mu_i}, \quad i = 1, \dots, n.$$

Define

$$Y_n = \min(X_1, \dots, X_n) \quad\text{and}\quad Z_n = \max(X_1, \dots, X_n).$$

a) Determine the distributions of $Y_n$ and $Z_n$.


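See Moshe Zukerman page 19 for some hints. Since the variables are independent, use that

$$P(Y_n > x) = \prod_{i=1}^{n} P(X_i > x) \quad\text{and}\quad P(Z_n \le x) = \prod_{i=1}^{n} P(X_i \le x).$$

Then plug in $P(X_i > x) = e^{-\mu_i x}$ and $P(X_i \le x) = 1 - e^{-\mu_i x}$ for $x \ge 0$:

$$P(Y_n > x) = e^{-(\mu_1 + \dots + \mu_n)x}, \qquad P(Z_n \le x) = \prod_{i=1}^{n} \left(1 - e^{-\mu_i x}\right).$$

So $Y_n$ is exponentially distributed with rate $\mu_1 + \dots + \mu_n$.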
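The two formulas of part a) can be checked by Monte Carlo. In this sketch the rates `[1.0, 2.0, 0.5]` and the point $x = 0.4$ are arbitrary illustrative choices, and the helper names are hypothetical. Note that Python's `random.expovariate` takes the rate $\mu_i$ as its argument, not the mean.

```python
import math
import random


def empirical_min_max(rates, x, n_samples=100_000, seed=0):
    """Estimate P(Y_n > x) and P(Z_n <= x) for independent X_i ~ Exp(rates[i])."""
    rng = random.Random(seed)
    min_gt = max_le = 0
    for _ in range(n_samples):
        xs = [rng.expovariate(mu) for mu in rates]  # expovariate expects the rate mu_i
        min_gt += min(xs) > x
        max_le += max(xs) <= x
    return min_gt / n_samples, max_le / n_samples


def exact_min_max(rates, x):
    """P(Y_n > x) = exp(-(mu_1 + ... + mu_n) x),  P(Z_n <= x) = prod_i (1 - exp(-mu_i x))."""
    return math.exp(-sum(rates) * x), math.prod(1 - math.exp(-mu * x) for mu in rates)


rates = [1.0, 2.0, 0.5]  # illustrative rates mu_i
print(empirical_min_max(rates, 0.4), exact_min_max(rates, 0.4))
```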

b) Show that the probability that $X_i$ is the smallest one among $X_1, \dots, X_n$ is equal to

$$\frac{\mu_i}{\mu_1 + \dots + \mu_n}, \quad i = 1, \dots, n.$$

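Using the continuous version of the law of total probability (conditioning on $X_i = x$):

$$\begin{aligned}
P\big(X_i = \min(X_1, \dots, X_n)\big) &= \int_0^\infty \prod_{j \neq i} P(X_j > x)\, f_{X_i}(x)\,dx \\
&= \int_0^\infty e^{-\left(\sum_{j \neq i} \mu_j\right) x}\, \mu_i e^{-\mu_i x}\,dx \\
&= \mu_i \int_0^\infty e^{-(\mu_1 + \dots + \mu_n) x}\,dx = \frac{\mu_i}{\mu_1 + \dots + \mu_n}.
\end{aligned}$$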
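A similar simulation checks part b). It draws the $X_i$ repeatedly and counts how often each one is the smallest. The rates are again illustrative, and `prob_each_is_min` is a hypothetical helper. The estimates should be close to $\mu_i/(\mu_1+\dots+\mu_n)$, here $(1/3.5,\ 2/3.5,\ 0.5/3.5)$.

```python
import random


def prob_each_is_min(rates, n_samples=100_000, seed=0):
    """Estimate P(X_i = min(X_1, ..., X_n)) for each i by simulation."""
    rng = random.Random(seed)
    wins = [0] * len(rates)
    for _ in range(n_samples):
        xs = [rng.expovariate(mu) for mu in rates]
        wins[xs.index(min(xs))] += 1  # ties have probability zero for continuous X_i
    return [w / n_samples for w in wins]


rates = [1.0, 2.0, 0.5]  # illustrative rates mu_i
total = sum(rates)
print(prob_each_is_min(rates))
print([mu / total for mu in rates])  # mu_i / (mu_1 + ... + mu_n)
```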

5 Continuous Time Markov Chain

Do problem 2.21 in Moshe Zukerman.<br />

Birth rate in state $i$: $b_i$ (the time until the next birth is negative exponentially distributed).

Death rate in state $i$: $d_i$ (the time until the next death is negative exponentially distributed).

P(increase by 1): this means that a birth happens before a death. The times until the next birth and the next death are independent negative exponential random variables with rates $b_i$ and $d_i$, so

$$P(\text{increase by } 1) = \frac{b_i}{b_i + d_i}.$$

Similarly:

$$P(\text{decrease by } 1) = \frac{d_i}{b_i + d_i}.$$

See Problem 4 b) above for the derivation of the probability that one negative exponential random variable is smaller than another, and also Zukerman p. 19.
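The competing-exponentials argument can be turned into a simulation of one jump of a birth-death chain. This minimal sketch assumes hypothetical rate lists `b` and `d` indexed by state, with $d_0 = 0$ so that state 0 cannot decrease. The chain jumps up if the exponential birth time comes first and down otherwise. The observed fraction of up-jumps should be close to $b_i/(b_i + d_i)$. The time until the jump is the minimum of the two exponentials, so by Problem 4 a) its mean is $1/(b_i + d_i)$.

```python
import random


def birth_death_step(i, b, d, rng):
    """One jump of a birth-death chain from state i: returns (holding time, new state).

    The time to the next birth is Exp(b[i]) and to the next death Exp(d[i]);
    whichever happens first determines the jump (competing exponentials).
    """
    if b[i] + d[i] == 0:
        raise ValueError("state %d is absorbing" % i)
    t_birth = rng.expovariate(b[i]) if b[i] > 0 else float("inf")
    t_death = rng.expovariate(d[i]) if d[i] > 0 else float("inf")
    if t_birth < t_death:
        return t_birth, i + 1
    return t_death, i - 1


rng = random.Random(0)
b, d = [2.0, 2.0], [0.0, 1.0]  # hypothetical rates for states 0 and 1
steps = [birth_death_step(1, b, d, rng) for _ in range(100_000)]
frac_up = sum(new == 2 for _, new in steps) / len(steps)
mean_hold = sum(t for t, _ in steps) / len(steps)
print(frac_up, b[1] / (b[1] + d[1]))  # estimate vs b_1 / (b_1 + d_1)
print(mean_hold, 1 / (b[1] + d[1]))   # estimate vs 1 / (b_1 + d_1)
```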
