CONFERENCE PROCEEDINGS - IPSC - Europa

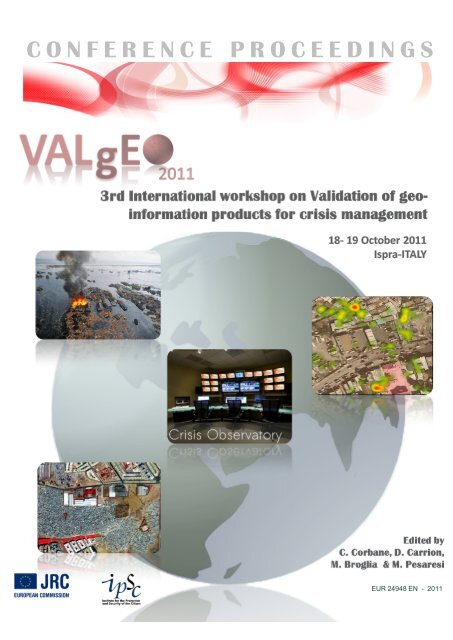

CONFERENCE PROCEEDINGS<br />

EUR 24948 EN - 2011

VALgEO 2011<br />

Workshop Proceedings<br />

JRC, Ispra, 18-19 October 2011<br />

Edited by<br />

C. Corbane, D. Carrion,<br />

M. Broglia and M. Pesaresi

The mission of the JRC-IPSC is to provide research results and to support EU policy-makers in<br />

their effort towards global security and towards protection of European citizens from accidents,<br />

deliberate attacks, fraud and illegal actions against EU policies.<br />

European Commission<br />

Joint Research Centre<br />

Institute for the Protection and Security of the Citizen<br />

Contact information<br />

Address: JRC - TP 267 - Via E. Fermi, 2749 - 21027 Ispra (VA), Italy<br />

E-mail: marco.broglia@jrc.ec.europa.eu<br />

Tel.: +39 0332 785435<br />

Fax: +39 0332 785154<br />

http://ipsc.jrc.ec.europa.eu/<br />

http://www.jrc.ec.europa.eu/<br />

Legal Notice<br />

Neither the European Commission nor any person acting on behalf of the Commission<br />

is responsible for the use which might be made of this publication.<br />

Europe Direct is a service to help you find answers<br />

to your questions about the European Union<br />

Freephone number (*):<br />

00 800 6 7 8 9 10 11<br />

(*) Certain mobile telephone operators do not allow access to 00 800 numbers or these calls may be billed.<br />

A great deal of additional information on the European Union is available on the Internet.<br />

It can be accessed through the Europa server http://europa.eu/<br />

JRC66899<br />

EUR 24948 EN<br />

ISBN 978-92-79-21379-3 (print)<br />

ISSN 1018-5593 (print)<br />

ISBN 978-92-79-21380-9 (PDF)<br />

ISSN 1831-9424 (online)<br />

doi:10.2788/73045<br />

Luxembourg: Publications Office of the European Union<br />

© European Union, 2011<br />

Reproduction is authorised provided the source is acknowledged<br />

Printed in Italy

CONTENTS<br />

RATIONALE<br />

AGENDA<br />

FOREWORD<br />

SESSION I<br />

THE ROLE OF VALIDATION IN INFORMATION AND COMMUNICATION TECHNOLOGIES FOR CRISIS MANAGEMENT<br />

Potential applications of tracking macro-trends within Crisis Management<br />

The OpenStreetMap way to data creation and validation in emergency preparedness and response<br />

Impact Opportunities and Methodology Challenges: Crisis Mapping and Geoanalytics in Human Rights Research<br />

Web and mobile emergencies network to real-time information and geodata management<br />

An Integrated Quality Score for Volunteered Geographic Information on Forest Fires<br />

SESSION II<br />

VALIDATION OF REMOTE SENSING DERIVED EMERGENCY SUPPORT PRODUCTS<br />

Definition of a reference data set to assess the quality of building information extracted automatically from VHR satellite images<br />

On the Validation of An Automatic Roofless Building Counting Process<br />

Evacuation plans : interest and limits<br />

Outside the Matrix, a review of the interpretation of Error Matrix results<br />

On the complexity of validation in the security domain – experiences from the G-MOSAIC project and beyond<br />

SESSION III<br />

USABILITY OF WEB BASED DISASTER MANAGEMENT PLATFORMS AND READABILITY OF CRISIS INFORMATION<br />

Emergency Support System: Spatial Event Processing on Sensor Networks<br />

Near-real-time monitoring of volcanic emissions using a new web-based, satellite-data-driven, reporting system: HotVolc Observing System (HVOS)<br />

Image interpreters and interpreted images: an eye tracking study applied to damage assessment<br />

Crisis maps readability: first results of an experiment using the eye-tracker<br />

SESSION IV<br />

TOWARDS ROUTINE VALIDATION AND QUALITY CONTROL OF CRISIS MAPS<br />

A methodological framework for qualifying new thematic services for an implementation into SAFER emergency response and support services<br />

A methodology for a user oriented validation of satellite based crisis maps<br />

Quality policy implementation: ISO certification of a Rapid Mapping production chain<br />

AUTHORS INDEX

RATIONALE<br />

Over the past decade, the international community has responded to an increasing number of major<br />

natural and man-made disasters. In parallel, emergency management has become increasingly complex<br />

and specialized, owing to the need for various authorities and organizations to cooperate during emergencies<br />

and to the emergence of disasters of an unexpected or unknown nature. With these growing challenges, the<br />

need for more sophisticated tools for the production, sharing and integration of geospatial information,<br />

without prejudice to the usability of end-user products, has given rise to a rapid development of geo-information<br />

technologies to assist in crisis management operations. Recent events such as the earthquake in<br />

Japan, the flooding in Australia and the crisis in the Middle East and North African region showed that Earth<br />

observation, ICT and Web-mapping technologies are now playing a vital role in crisis management efforts,<br />

especially during the preparedness and response phases.<br />

No matter what the origin of crises, their geographical context and the dimension of their impacts, there is a<br />

common need by all actors involved in crisis management for timely, relevant, usable and most of all reliable<br />

information. For the community concerned with validation of geo-information, this poses new challenges in<br />

terms of having access to methodologies that can address the increasing variety and amount of data, and that<br />

help to render validation closer to a routine process.<br />

Following the two successful VALgEO workshops held in 2009 and 2010, we are pleased to announce the<br />

organization of the 3rd edition of the international workshop on validation of geo-information for crisis<br />

management. The annual VALgEO workshop sets out to act as an integrative agent between practitioners in<br />

situation centers and in the field and the Research and Development community, so that practitioners' needs guide research, with a<br />

special focus on the quality of information.<br />

The following topics will be addressed in four main sessions:<br />

• The role of validation in Information and Communication Technologies (ICT) for crisis<br />

management<br />

• Validation of Remote Sensing derived emergency support products<br />

• Usability of Web based disaster management platforms and readability of crisis<br />

information<br />

• Towards routine validation and quality control of crisis maps<br />


Workshop chair<br />

Martino Pesaresi, Joint Research Centre, Italy<br />

martino.pesaresi@jrc.ec.europa.eu<br />

Organizing committee<br />

Christina Corbane, Joint Research Centre, Italy<br />

Daniela Carrion, Joint Research Centre, Italy<br />

Marco Broglia, Joint Research Centre, Italy<br />

Barbara Secreto, Joint Research Centre, Italy<br />

christina.corban@jrc.ec.europa.eu<br />

daniela.carrion@jrc.ec.europa.eu<br />

marco.broglia@jrc.ec.europa.eu<br />

barbara.secreto@ec.europa.eu<br />

Scientific committee<br />

Michael Judex, German Federal Office of Civil Protection, Germany<br />

Daniel Stauffacher, ICT4Peace Foundation, Switzerland<br />

Peter Zeil, University of Salzburg, Austria<br />

Tom De Groeve, Joint Research Centre, Italy<br />

Marco Broglia, Joint Research Centre, Italy<br />

Daniela Carrion, Joint Research Centre, Italy<br />

Christina Corbane, Joint Research Centre, Italy<br />

michael.judex@bbk.bund.de<br />

daniel_stauffacher@ict4peace.org<br />

peter.zeil@sbg.ac.at<br />

tom.de-groeve@jrc.ec.europa.eu<br />

marco.broglia@jrc.ec.europa.eu<br />

daniela.carrion@jrc.ec.europa.eu<br />

christina.corban@jrc.ec.europa.eu<br />


AGENDA<br />

TUESDAY, October 18th 2011<br />

9:00 Workshop Opening and Welcome Address<br />

Delilah Al Khudhairy – Joint Research Centre (Head, Global Security and Crisis Management Unit)<br />

Martino Pesaresi – Joint Research Centre (ISFEREA Action Leader)<br />

9:40 Torsten Redlinger – European Commission (DG ENTR, GMES Bureau)<br />

SESSION I– THE ROLE OF VALIDATION IN INFORMATION AND COMMUNICATION TECHNOLOGIES (ICT) FOR<br />

CRISIS MANAGEMENT<br />

Chair: Daniel Stauffacher. ICT4Peace Foundation, Geneva, Switzerland<br />

10:00 Invited Speaker: Daniel Stauffacher. ICT4Peace Foundation<br />

10:20 Potential applications of tracking macro-trends within Crisis Management<br />

Invited Speaker: Douglas Hubbard. Hubbard Decision Research, United States<br />

10:40 The OpenStreetMap way to data creation and validation in emergency preparedness and response<br />

Invited Speaker: Nicolas Chavent, Open Street Map, France<br />

11:00 Coffee Break<br />

11:10 Impact Opportunities and Methodology Challenges: Crisis Mapping and Geoanalytics in Human<br />

Rights Research<br />

Scott Edwards & Koettl C. – George Washington University/Amnesty International<br />

11:30 Web and mobile emergencies network to real-time information and geodata management<br />

Elena Rapisardi 1-3 , Lanfranco M. 2-3 , Dilolli A. 4 & Lombardo D. 4<br />

1 Openresilience<br />

2 Doctoral School in Strategic Sciences, SUISS, University of Turin<br />

3 NatRisk, Interdepartmental Centre for Natural Risks, University of Turin<br />

4 Vigili del Fuoco, Comando Provinciale di Torino<br />

11:50 An Integrated Quality Score for Volunteered Geographic Information on Forest Fires<br />

Ostermann Frank & Spinsanti L.<br />

European Commission, Joint Research Centre, SDI Unit, IES<br />

12:10 Lunch<br />


SESSION II – VALIDATION OF REMOTE SENSING DERIVED EMERGENCY SUPPORT PRODUCTS<br />

Chair : Dirk Tiede , University of Salzburg, Austria<br />

13:40 Recent experiences on the use of remote sensing for damage assessment and its validation: 2010<br />

Pakistan flood, 2011 Tohoku Tsunami and 2011 (February) Christchurch earthquake<br />

Keiko Saito 1,6, R. Eguchi 2, G. Lemoine 3, L. Barrington 4, J. Bevington 2, S. Gill 5, A. King 6, A. Lin 4, M. Green 7,<br />

R. Spence 1 & P. Wood 8<br />

1 Cambridge Architectural Research Ltd, UK - 2 ImageCat Inc, USA; ImageCat Ltd, UK - 3 Joint Research<br />

Centre, EU - 4 Tomnod, USA - 5 Global Facility for Disaster Risk Reduction, The World Bank - 6 GNS, New<br />

Zealand - 7 EERI, USA - 8 New Zealand Society for Earthquake Engineering<br />

14:00 Definition of a reference dataset to assess the quality of building information extracted<br />

automatically from VHR satellite images<br />

Annett Wania, Kemper T., Ehrlich D., Soille P. & Gallego J.<br />

Joint Research Centre<br />

14:20 On the Validation of An Automatic Roofless Building Counting Process<br />

Lionel Gueguen, Pesaresi M. & Soille P.<br />

Joint Research Centre<br />

14:40 Evacuation plans : interest and limits<br />

Alix Roumagnac & Moreau K.<br />

PREDICT Services<br />

15:00 Outside the Matrix, a review of the interpretation of Error Matrix results<br />

Pablo Vega Ezquieta 1, Tiede D. 2, Joyanes G. 1, Gorzynska M. 1 & Ussorio A. 1<br />

1 European Union Satellite Centre - 2 Z-GIS Research, University of Salzburg<br />

15:20 On the complexity of validation in the security domain – experiences from the G-MOSAIC project<br />

and beyond<br />

Thomas Kemper, Wania A. & Blaes X.<br />

Joint Research Centre<br />

15:40 Coffee Break<br />

SESSION III– USABILITY OF WEB BASED DISASTER MANAGEMENT PLATFORMS AND READABILITY OF CRISIS<br />

INFORMATION<br />

Chair : Tom De Groeve, Joint Research Centre<br />

15:50 Emergency Support System: Spatial Event Processing on Sensor Networks<br />

Roman Szturc, Horáková B., Janiurek D. & Stankovič J.<br />

Intergraph CS<br />

16:10 Near-real-time monitoring of volcanic emissions using a new web-based, satellite-data-driven,<br />

reporting system: HotVolc Observing System (HVOS)<br />

Mathieu Gouhier 1, Labazuy P. 1, Harris A. 1, Guéhenneux Y. 1, Cacault P. 2, Rivet S. 2 & Bergès J-C. 3<br />

1 Laboratoire Magmas et Volcans, CNRS, IRD, Observatoire de Physique du Globe de Clermont-Ferrand,<br />

Université Blaise Pascal - 2 Observatoire de Physique du Globe de Clermont-Ferrand, CNRS, Université<br />

Blaise Pascal - 3 PRODIG, UMR 8586, CNRS, Université Paris 1<br />

16:30 1- Image interpreters/interpreted images, study of recognition mechanisms<br />

2- Crisis maps readability: first results of an experiment using the eye-tracker<br />

Roberta Castoldi, Carrion D., Corbane C., Broglia M. & Pesaresi, M.<br />

Joint Research Centre<br />

16:50 Closure of DAY 1<br />

20:00 Social dinner at Hotel Belvedere<br />


WEDNESDAY, October 19th 2011<br />

SESSION IV–TOWARDS ROUTINE VALIDATION AND QUALITY CONTROL OF CRISIS MAPS<br />

Chair: Michael Judex, German Federal Office of Civil Protection, Germany<br />

9:00 Validation, standardisation, innovation and ability to respond to user requirements<br />

Joshua Lyons - UNITAR/UNOSAT<br />

9:20 A methodological framework for qualifying new thematic services for an implementation into<br />

SAFER emergency response and support services<br />

Hannes Römer, Zwenzner H., Gähler M. & Voigt S. - German Aerospace Center (DLR)<br />

9:40 A methodology for a user oriented validation of satellite based crisis maps<br />

Michael Judex 1, Sartori G. 2, Santini M. 3, Guzmann R. 3, Senegas O. 4 & Schmitt T. 5<br />

1 Federal Office of Civil Protection and Disaster Assistance, Germany - 2 World Food Programme, Italy -<br />

3 Dipartimento della Protezione Civile - 4 United Nations Institute for Training and Research (UNOSAT) -<br />

5 Ministère de l’intérieur, Direction de la Sécurité Civile<br />

10:00 Quality policy implementation: ISO certification of a Rapid Mapping production chain<br />

Bernard Allenbach 1, Rapp JF. 1, Fontannaz D. 2 & Chaubet JY. 3<br />

1 SERTIT - 2 CNES - 3 APAVE<br />

10:20 Introduction to the LIVE EXERCISE AT THE CRISIS ROOM<br />

10:30 Coffee break & transfer to the crisis room<br />

SESSION V- LIVE EXERCISE AT THE CRISIS ROOM<br />

Coordinator: Alessandro Annunziato, Joint Research Centre, Italy<br />

11:00 Presentation of crisis room and related tools<br />

Tom de Groeve & Galliano D.<br />

European Commission, Joint Research Centre<br />

11:20 Simulation of a case study<br />

All<br />

12:30 Lunch<br />

SESSION VI- WRAP UP SESSION<br />

14:00 Panel discussion<br />

Scientific committee of VALgEO 2011<br />

15:00 Recommendations and conclusion<br />

All<br />


SESSION V- LIVE EXERCISE AT THE CRISIS ROOM<br />

Coordinator: Alessandro Annunziato, Joint Research Centre, Italy<br />

11:00 Presentation of the crisis room and the related tools<br />

Tom De Groeve & Alessandro Annunziato<br />

European Commission, Joint Research Centre (JRC)<br />

11:10 Presentation of collected data & discussion of interoperable mobile applications for data collection<br />

iPhone application: Beate Stollberg (JRC)<br />

Field Reporting tool: Daniele Galliano (JRC)<br />

11:30 Presentation and demonstration of the PDNA suite<br />

Daniele Galliano (JRC)<br />

11:45 End user tailored interface application for collaboration in GIS environment - solution example<br />

Michał Krupiński (Space Research Centre Polish Academy of Sciences)<br />

Piotr Koza (Astri Polska)<br />

12:00 IQ demonstration for automatic image information extraction<br />

Lionel Gueguen & Vasileios Syrris (JRC)<br />

12:20 Questions and Answers<br />

12:30 Lunch<br />


FOREWORD<br />

This third edition of the international workshop on validation of geo-information for crisis<br />

management confirms that the topics we are addressing are important and that we need this sort of platform<br />

to regularly discuss the new challenges we face as a result of continuous evolution in technology, especially ICT<br />

(including space), which is impacting both the quality and quantity of information relevant to crisis<br />

management.<br />

2010 marks the start of a new era in the way ICT is being used in crisis management. I would like to begin with<br />

the Haiti earthquake in 2010 which, even though it was not a typical disaster, marked a new epoch in the way<br />

various novel and traditional ICT solutions were used in an integrated manner by the emergency response<br />

communities as well as professional organizations and voluntary initiatives. But it also confirmed that we have<br />

yet to apply lessons learned from past major disasters. The rapid advances in ICT, including space, are not<br />

necessarily facilitating the work of the international humanitarian relief, emergency rescue and post-disaster<br />

recovery/reconstruction communities. On the contrary, today we are facing an increasing deluge of<br />

information, with the risk that only a small fraction of it is relevant to, or reliable enough for, effective crisis<br />

management.<br />

Sanjana Hattotuwa (2010) captures this helplessness very well in the quotation “Where is the knowledge we<br />

have lost in information?”<br />

In Haiti alone, there were hundreds of email messages exchanged amongst the disaster response community<br />

and hundreds of information products in the form of maps being produced by various entities on a daily basis.<br />

How much rich and relevant knowledge was present in the deluge of information, which cost a significant<br />

amount of resources to produce and disseminate? Can we measure this?<br />

Furthermore, Haiti showed that in addition to the contributions of traditional communities engaged in crisis<br />

response, the citizens of impacted countries were also making potentially important contributions, thereby<br />

shifting the balance we have become familiar with from impacted communities that are at the receiving end of<br />

assistance and information, to one in which the impacted communities are empowered and are becoming<br />

increasingly responsible for themselves through actively engaging in the crisis management process. It is only a<br />

matter of time before the type of community engagement we saw in Haiti becomes familiar as opposed to<br />

exceptional.<br />

The crowd sourcing/social media and collaborative analysis and mapping technologies we saw being used in<br />

Haiti at different levels mark the beginning of a new era in crisis management: the era of “citizen or<br />

community crisis management”.<br />

Time will tell if this new marriage between technology and the community will have a sustainable future. Some<br />

experts reckon it will take a decade. By then, we could expect to live in a world in which citizens will<br />

become important sources of local and regional human observations, supplying the disaster response<br />

communities with information that is not readily provided by increasingly improved remotely sensed data,<br />

including spaceborne and airborne data. Equally important, we expect that citizens will likewise become increasingly<br />

responsible for guiding their recovery and reconstruction. I agree with the predictions of these experts.<br />

Moreover, I think this marriage between technology and communities has a future in crisis management. In a<br />

decade, children born between the 1990s and 2000s will be between their late teens and late 20s. These<br />

young adults will have grown up in a world where the digital camera and the internet are things that have<br />


always been there. So they will be equipped to participate in a future where they will be able<br />

to contribute directly to helping themselves in the event of a crisis. This is something we, as an older generation,<br />

can only begin to imagine and contemplate.<br />

Today the crisis management community is living in an increasingly complex information and technological<br />

world. Interactive and real-time platforms are edging in on static maps, but many challenges and<br />

problems will have to be overcome before the static maps with which we have become familiar in crisis<br />

management become relics of the past. We cannot afford a less effective response to disasters<br />

because the complications of advancing technologies, and of the way they are used, outweigh the benefits they can bring.<br />

In other words, today, we face important questions such as: Are rapid advances in ICT and new ways of using<br />

ICT helping us make improvements with regard to producing relevant and trusted information and making it<br />

available in a reliable and timely manner to the stakeholders engaged in crisis management? Not necessarily.<br />

For effective crisis management we do not need a fast growing and enormous amount of information available<br />

through a variety of media. With the increasing number of information sources and contributing actors<br />

(specialists, citizens, mapping and ICT volunteers) in crisis management, we risk having even less knowledge and value<br />

in exchange for more information. Validation and trusted analysis have become more critical than before to<br />

creating value and trust in knowledge in crisis management.<br />

This is why we need a platform like VALgEO to bring us together to discuss the elements of validation that will<br />

result in value and trust in knowledge for crisis management. But in our discussions during this workshop and<br />

subsequent ones we already have to think 10 years ahead. We have to discuss and identify the ‘validation and<br />

trust’ elements that accommodate not only the traditional geo-information products and information sources<br />

that are being used by today’s crisis management communities, but also the eventual regular use<br />

of new information sources such as citizens as well as community / participatory<br />

ICT and mapping volunteers. Without agreed principles and standards related to validation and trusted<br />

analysis, we risk having even less content and trusted knowledge in the future in exchange for ever more<br />

tools, services and technologies, to the detriment of the disaster-affected communities and the disaster<br />

response and post-response communities.<br />

The VALgEO community, which you have helped to establish at our first and second workshops and through<br />

your participation this year, can take important steps in developing recommendations for agreed principles<br />

and standards as well as other important components of validation in a new crisis management information<br />

landscape in which the information sources are extending beyond traditional remote and in-situ sources, and<br />

in which the community or the citizen will become increasingly engaged.<br />

We look forward to a vibrant and exciting workshop, and we are optimistic that we, the VALgEO Community,<br />

can make progress both at this year’s workshop and in future workshops towards achieving these goals. We<br />

are entering a very exciting period in which we have never had it so good in terms of the variety of sources and<br />

technologies which can be used to produce and disseminate crisis relevant information and knowledge. Let us<br />

now take the time to reflect and understand this landscape in order to come up with recommendations and<br />

principles that will benefit the crisis management process in the longer-term.<br />

AL-KHUDHAIRY D.<br />

Head, Global Security and Crisis Management Unit<br />

European Commission - Joint Research Centre, Institute for the Protection and Security of the Citizen (IPSC)<br />


SESSION I<br />

THE ROLE OF VALIDATION IN INFORMATION AND<br />

COMMUNICATION TECHNOLOGIES FOR CRISIS<br />

MANAGEMENT<br />

Chair: Daniel Stauffacher<br />

The contemporary global crisis management environment is increasingly relying on ICT<br />

as a source for critical, timely decision-making information. Effective crisis management requires<br />

not only quick decisions for an immediate response but most of all a co-ordinated reaction. In<br />

order to reach a coherent action at all levels, crisis management organizations need to rely on<br />

accurate information that must be produced, transmitted and shared with speed and precision.<br />

This places the challenges of ICT less in technical capacities than in the effective<br />

management and integration of an optimum amount of quality information.<br />

The challenge of ICT for crisis management today is in building trust in both the systems used to<br />

process the information and the people handling it. Validation and multiple checking of<br />

information flows are therefore essential to avoid the risk of having less knowledge in<br />

exchange for more information. The workshop aims to i) assess the needs for a formal validation<br />

within ICT for crisis management, ii) help in understanding the attitudes of the end-users towards<br />

these technologies and finally iii) define an agenda for research on valid methods and measures<br />

to assess the quality and accuracy of the information.<br />


ABSTRACT<br />

Potential applications of tracking macro-trends within Crisis<br />

Management<br />

HUBBARD D.<br />

Hubbard Decision Research<br />

dwhubbard@hubbardresearch.com<br />

Abstract:<br />

The objective of this workshop is to have a discussion exploring how crisis management might<br />

benefit from adding other macro-trend tracking to geolocation data. Douglas Hubbard, the author<br />

of Pulse: The New Science of Harnessing Internet Buzz to Track Threats and Opportunities will<br />

facilitate a discussion about how tools like Google Trends, Facebook, and Twitter might be used to<br />

track trends relevant to crisis management, including when the information does not include specific geolocation<br />

data. Methods of analyzing social networks have been developed that would not only track but<br />

forecast the transmission of disease throughout a population. Just as Twitter and Facebook helped<br />

to mobilize social unrest in the Middle East, they can also be used to forecast social upheavals<br />

before they become a humanitarian crisis. The possibility exists for more elaborate models that<br />

use multiple data sources that could actively track a macroscopic picture of certain kinds of risks.<br />


ABSTRACT<br />

The OpenStreetMap way to data creation and validation in<br />

emergency preparedness and response<br />

CHAVENT N.<br />

Open Street Map, France<br />

nicolas.chavent@gmail.com<br />

Abstract:<br />

This presentation will look back at past activations of the Humanitarian OpenStreetMap Team<br />

(HOT) since the Haiti earthquake of January 2010, featuring remote and on-the-ground work of the<br />

OpenStreetMap (OSM) project in the context of emergency preparedness and emergency response,<br />

to discuss how this wiki approach to geodata management has been and is currently addressing<br />

“the challenge of ICT for crisis management today [which] is in building trust in both the systems<br />

used to process the information and the people handling it”.<br />

This discussion will feature the following elements:<br />

• The HOT/OSM approach to geodata creation in emergency preparedness and response<br />

to be a source for “critical, timely decision-making information”.<br />

• The way that HOT/OSM ensures coordination with the humanitarian system throughout the<br />

emergency preparedness and response cycle to help contribute to crisis management as a co-ordinated<br />

reaction.<br />

• The typology of validation flows emerging from past operational contexts depending on<br />

the intensity of the remote mapping work, the strength of the local OSM groups, the level of<br />

coordination and interaction between OSM (remote and on the ground) and the humanitarian<br />

response system.<br />

We feel that the analysis of those use cases of the OSM work in emergency preparedness and<br />

response is likely to contribute significantly to the goals of the workshop:<br />

i) assess the needs for a formal validation within ICT for crisis management,<br />

ii) help in understanding the attitudes of the end-users towards these technologies and finally<br />

iii) define an agenda for research on valid methods and measures to assess the quality and<br />

accuracy of the information.<br />


ABSTRACT<br />

Impact Opportunities and Methodology Challenges:<br />

Crisis Mapping and Geoanalytics in Human Rights Research<br />

EDWARDS S. 1 and KOETTL C. 2<br />

1 George Washington University/Amnesty International, USA<br />

2 Amnesty International, USA<br />

sedwards@aiusa.org<br />

Abstract<br />

Crises are inherently complex—with the intertwining of multiple interdependent causal processes<br />

and emergence of properties at differing levels of societal aggregation. This complexity is especially<br />

challenging when crises are approached from a rights-based perspective. As in disaster relief, the<br />

ability to source timely, geo-referenced information in human rights emergencies provides—at<br />

minimum—critical situational awareness for researchers in the midst of great need, and<br />

overwhelming complexity.<br />

Further, and based on a cursory evaluation of instances of web-based crowd maps, it is likely that<br />

these tools offer the ability to capture representative human rights data above current legal<br />

research methodologies, in many contexts. By layering crowd-derived events data into GIS analytic<br />

products, human rights researchers and advocates may demonstrate the constituent elements of<br />

grave crimes, such as qualities of “widespread” or “systematic” in the case of Crimes Against<br />

Humanity. Additionally, the layering of events data into analytic tools can allow human rights<br />

researchers to offer policy recommendations with greater technical specificity, and thus with<br />

greater effect.<br />

In the context of human rights research, this paper will evaluate current opportunities and<br />

challenges as they relate to the integration of mapping and GIS research and analytic tools<br />

increasingly used in disaster response. Challenges related to the verification of events data entail—<br />

for most human rights organizations—serious risk to the credibility of reporting, and thus to policy<br />

impact. These and related challenges will be explored, as well as analytic measures that can be<br />

employed to minimize them, particularly in the context of crisis.<br />


SHORT PAPER<br />

Web and mobile emergencies network to real-time information<br />

and geodata management.<br />

RAPISARDI E. 1-3 , LANFRANCO M. 2-3 , DILOLLI A. 4 and LOMBARDO D. 4<br />

1 Openresilience, http://openresilience.16012005.com/<br />

2 Doctoral School in Strategic Sciences, SUISS, University of Turin, Italy.<br />

3 NatRisk, Interdepartmental Centre for Natural Risks, University of Turin, Italy; www.natrisk.org.<br />

4 Vigili del Fuoco, Comando Provinciale di Torino, Italy.<br />

e.rapisardi@gmail.com<br />

Abstract:<br />

Major and minor disasters are part of our environment. The challenge we all have to face is to<br />

switch from relief to preparedness. Recent events from Haiti to Japan revealed a new scenario:<br />

web and mobile technologies can play a crucial role in managing disasters, increasing and<br />

improving the information flow between the different actors and players - citizens, civil protection<br />

bodies, local and central governments, volunteers, media. In this perspective, “the post-Gutenberg<br />

revolution” is changing our communication framework and practices. Mobile devices and advanced<br />

web data management may improve preparedness and boost crisis response in the shadow of<br />

natural and man-made disasters, and are defining new approaches and operational models. Key<br />

words are: crowdsourcing, geolocation, geomapping. A full integration of web and mobile solutions<br />

allows geopositioning and geolocalization, video and photo sharing, voice and data<br />

communications, and guarantees accessibility anytime and anywhere. This can also give the direct<br />

push to set up an effective operational dual side system to “inform, monitor and control”. Starting<br />

from the international experiences, Open Resilience Network and Italian Firefighters have carried<br />

out tabletop and full-scale exercises to test tools and procedures and to experiment with the use of new<br />

technologies to better manage information flow from/to different actors. The paper will focus on the<br />

ongoing experimental work on missing person emergencies, led by the Italian Firefighters TAS team -<br />

Andrea Di Lolli and Davide Lombardo - and supported by a multidisciplinary team from Open<br />

Resilience Network and University of Turin - Elena Rapisardi and Massimo Lanfranco. The aim of<br />

the paper is to share methods and technologies used, and to show the operational results of the<br />

exercise carried out during PROTEC2011, in order to stimulate comments that will be taken into<br />

account in the further research steps.<br />

Keywords: missing person, disaster relief, crowdsourcing, geolocation, geomapping<br />


1. Introduction<br />

Disaster preparedness and relief operations have been widely studied and debated in the last 20 years.<br />

“At risk”, edited by Ben Wisner (Wisner et al., 1994), extends disaster consequence management to the<br />

preemptive measures linked to social vulnerability, switching from a “war against nature” (hazard reduction)<br />

to a “fight against poverty” (risk reduction), which in the same year led UNDP to the human security concept<br />

introduced in the Human Development Report (UNDP, 1994).<br />

Quarantelli (1998) drafted a comprehensive review of previous works, complementing the technical point of<br />

view with a sociological approach that led to a full-spectrum approach to Disaster Risk Management.<br />

The 9/11 Twin Towers attack boosted and refreshed studies on disasters: the “war against terror” is a new paradigm<br />

that recalls the “fight” against natural disasters (tackling the effects rather than the root causes), but some<br />

authors (Alexander, 2001) pointed out that managing the effects of natural and anthropogenic disasters involves<br />

the same operational needs and procedures.<br />

On the other hand, the well-defined “disaster cycle” (fig. 1) has also been investigated through a sociological<br />

approach, which led to community-based risk reduction and the resilience concept. These concepts fit well<br />

with UN efforts to move beyond simple humanitarian relief, which has become more and more costly in the last 10<br />

years.<br />

Figure 1. The disaster cycle: a life long work to web/mobile technologies<br />

Web access and mobile devices seem to be the key for achieving all the goals that scholars and practitioners<br />

have been debating over the last 20 years at global and local levels:<br />

- Citizen engagement in preparedness, planning, relief, rebuilding;<br />

- Faster relief with improved situational awareness;<br />

- Communication strategy with a Bottom/Up and Top/Down merge (two way data exchange);<br />

- Resilience enhancement through local storytelling.<br />

The UN Foundation report (HHI, 2011) highlights the involvement of mobile technologies during the Haiti earthquake and outlines the<br />

state of the art.<br />

Since early 2000, the “GeoSITLab” (GIS and Geomatics Laboratory) at the University of Turin has worked to<br />

enhance the application of Geomatics technologies for geothematic mapping and for geological and<br />

geomorphological field activities (Giardino et al., 2004). These activities were implemented at the NatRisk<br />

Interdepartmental Centre (natural risks) and at the Strategic Sciences School (man-made risks) with different<br />

approaches, related to the “natural sciences” and the “social” sciences.<br />


In the aftermath of the Haiti earthquake, GeoSITLab developed a mobile GIS application based on ArcPad software<br />

for direct mapping and damage assessment with smartphones and deployed it on the ground with the NGO AGIRE<br />

(Giardino et al., 2010). Data collected by NGO operators in Haiti were immediately transmitted to the Italian<br />

Operational Centre for retrofitting / rebuilding cost evaluation and donor search.<br />

OpenResilience, whose members started working in VGI with Ushahidi and Crisis Mappers Net, offers<br />

professionals and practitioners of forest fire fighting the next step, meshing mobile technologies and Web mapping<br />

2.0 (http://openforesteitaliane.crowdmap.com/).<br />

The aim of our research is to come up with ideas that should link and connect governmental emergency<br />

operators and citizens (Civil Protection 2.0), both on the side of collaborative mapping (data exchange) and<br />

information dissemination (http://www.slideshare.net/elenis/protec-informing-the-public).<br />

2. The Talent of the Crowd in face of emergency and disasters<br />

In 1455 Gutenberg’s revolutionary printing system changed the institutionalized information model,<br />

lowered production costs, increased book production, favored democratic access to knowledge,<br />

stimulated literacy and contributed to critical thinking.<br />

“For more than 150 years, modern complex democracies have depended in large measure on an industrial<br />

information economy for these basic functions. In the past decade and a half, we have begun to see a radical<br />

change in the organization of information production. Enabled by technological change, we are beginning to<br />

see a series of economic, social, and cultural adaptations that make possible a radical transformation of how<br />

we make the information environment we occupy as autonomous individuals, citizens, and members of<br />

cultural and social groups.” (Benkler, 2006).<br />

In this scenario, we are individuals with multiple and crossing socio-cultural-economic memberships, where<br />

information could be seen as the channel of Simmel’s “Intersection of Social Circles”, a sociological<br />

concept that in some ways Google+ recently turned into a social medium, with a distinctive approach with<br />

respect to Facebook and Twitter.<br />

The first Web 2.0 Conference, in October 2004, could be taken as the turning point to a new approach to<br />

information: Web 2.0 (O’Reilly, 2007) introduced a set of principles and practices that tie together a veritable<br />

solar system of sites, where the first principle was: “The web as platform” [Tim O’Reilly].<br />

This stream of thoughts and actions proposes a new approach that considers collective<br />

knowledge/intelligence as superior to individual knowledge/intelligence. Web 2.0 radically changed<br />

the basis and the ways in which information is created, spread and consumed. In the post-Gutenberg<br />

revolution “with advances in technology, the gap between professionals and amateurs has narrowed, paving<br />

the way for companies to take advantage of the talent of the public.” [Darren Gilbert].<br />

Apart from the lights and shadows of the “social media” success, we can state that the post-Gutenberg<br />

revolution marks “The end of institutionalised mediation models” [Richard Stacy] and the rise of crowdsourcing as a<br />

participatory approach.<br />

#share, #collaborate, #communicate, #cooperate, #support, #include - e.g. #diversity.<br />

These are key words that would have been appreciated by the social models of utopian socialism in the first quarter of the<br />

19th century. In 2011 Web 2.0 has become an everyday reality, and it also has an impact on emergency and<br />

disaster response.<br />

When a disaster or an emergency occurs, it is crucial to collect and analyze volumes of data and to distil from<br />

the chaos the critical information needed to target the rescue mission most efficiently.<br />


Since the Haiti earthquake, a completely new “engagement” took place: “For the first time, members of the<br />

community affected by the disaster issued pleas for help using social media and widely available mobile<br />

technologies. Around the world, thousands of ordinary citizens mobilized to aggregate, translate, and plot<br />

these pleas on maps and to organize technical efforts to support the disaster response. In one case, hundreds<br />

of geospatial information systems experts used fresh satellite imagery to rebuild missing maps of Haiti and plot<br />

a picture of the changed reality on the ground. This work—done through OpenStreetMap—became an<br />

essential element of the response, providing much of the street-level mapping data that was used for logistics<br />

and camp management.” (HHI, 2011).<br />

“Without information sharing there can be no coordination. If we are not talking to each other and sharing<br />

information then we go back 30 years.” [Ramiro Galvez, UNDAC].<br />

This is a clear and undeniable effect of the post-Gutenberg revolution on emergency and crisis response,<br />

which is leading to the creation of Volunteer and Technical Communities (VTCs) working on disaster and conflict<br />

management. This worldwide 2.0 community is enabling the setting up of technical development communities<br />

and operational processes/procedures that are changing risk and crisis management by focusing on “citizens as<br />

sensors” and on “preparedness”. On the other hand, the VTCs are now facing the issue of trust<br />

and reliability of a wide information flow involving the “crowd” and the emergency bodies.<br />

3. Italian Civil Protection system<br />

Italian Civil Protection National Service is based on horizontal and vertical coordination of central and local<br />

bodies (Regions, Provinces, municipalities, national and local public agencies, and any other public and private<br />

institution and organisation). One of the backbones of the Italian Civil Protection System is the civil protection<br />

volunteer organizations, whose duties and roles differ on a regional basis. The Civil Protection Volunteers are<br />

called to action during small emergencies and major disasters. The Abruzzo earthquake, in 2009, highlighted<br />

the need for a more efficient communication flow between the volunteer organizations and professionals, and<br />

for common shared protocols and tools to manage information. As a matter of fact, the “diversity” in managing<br />

information causes a sort of “friction” and a weak collaboration in terms of data and information sharing.<br />

Despite the adoption of software and devices (radio), there is a low level of awareness of web 2.0<br />

usage, in line with the web 2.0 literacy of the internet population. Mobile phone and web penetration<br />

(Italy has the European record for mobiles per capita with 122 phones per 100 inhabitants, 70% of population<br />

with internet access, 13% of population with mobile internet access) and the social network “fever” can be<br />

considered driving factors to raise awareness and develop skills so as to allow a wider adoption of web 2.0<br />

solutions and tools. Moreover, the volunteer organisations have to cope with small budgets that must<br />

cover equipment first. In this perspective the free and open tools (e.g. Android Market, content sharing<br />

platforms) are a concrete opportunity to increase the web 2.0 penetration and develop acknowledged<br />

practices to implement web 2.0 information sharing in C3 activities (Command, Control, Communications).<br />

Fire and rescue services are provided by Vigili del Fuoco (VVF - Fire Fighters), a national government<br />

department under the Ministry of Interior. Territorial divisions are based on provincial Fire Departments with<br />

operational teams in the largest municipalities. Fire Fighters are also the primary emergency response agency for<br />

HAZMAT and CBRN accidents.<br />

According to the national legal framework, fire and rescue departments have the duty to operate as first<br />

responders under a well-defined command structure providing 24-hour emergency response. Unlike law<br />

enforcement, which operates individually for most duties, fire departments operate under a highly organized<br />


team structure with the close supervision of a commanding officer. Fire departments also act at the direction<br />

of the Prefect (Ministry of Interior local coordinator) during major disasters.<br />

Full-time professional personnel staff fire and rescue departments, but volunteers provide reinforcement at<br />

stations in smaller municipalities.<br />

Recently, after a major failure in the search procedures for a kidnapped girl, Fire Fighters were assigned<br />

the overall coordination of searches for missing persons.<br />

TAS Teams (Topografia Applicata al Soccorso - Topography Applied to Rescue) were set up during the L’Aquila<br />

Earthquake (April 2009) to support relief operations and damage assessment, through the use of GIS<br />

technology. The TAS teams work to coordinate Fire and Rescue teams from the Operational Room (SO115) and to<br />

guide tactical activities from a mobile Incident Command Post (UCL - Unità di Comando Locale – Local<br />

Command Unit) placed on special vans.<br />

4. The Real Time Data Management<br />

The use of digital base maps in relief operations can be considered as the first step towards an innovation of<br />

practices and procedures of the TAS teams, and in a broader sense of the relief activities as a whole. As stated<br />

in the previous paragraph, any emergency requires an information flow between different actors, physically<br />

located in different places.<br />

Starting from other experiences in the field, specifically the one of Centro Intercomunale di Protezione Civile<br />

Colline Marittime e Bassa Val di Cecina [COI] 1 , a joint research group [the authors of this article] has been set<br />

up to test and experiment open and free web solutions in order to guarantee sharing and collaboration on<br />

geographical data. Despite the lack of budget, the choice to use easy and common tools and web solutions<br />

available for free on the internet, although used in other scenarios and with diverse purposes, made it<br />

possible to start trials. The concrete experiences of the wider VTC community played a fundamental role,<br />

sparing us from starting from scratch.<br />

After some testing, the team focused the testing phase on two different tools: Ushahidi (to ensure the<br />

participation of the citizens - crowdsourcing) and Google Maps (see also Google Crisis response).<br />

On the 27th of June in the town of Carignano (TO), for the first time during a real rescue mission for a missing<br />

person emergency, the TAS used geodata software to acquire and record the geolocated information related<br />

to the occurrence. The geographic data processed with GIS software by staff of the TAS<br />

Turin Provincial Fire Department were published on the web using Google My Maps, so as to be shared by a<br />

restricted number of users, such as the Operational Rooms (SO115) in Turin and Aosta, the Municipal Police Station<br />

of Carignano and the local media.<br />

This process allowed a real-time information flow from the incident area: data and physical condition of the<br />

missing person, the zoning of the area of operation, the point of last sighting, the geolocation of search units, the<br />

geolocation of discovered personal effects.<br />

This was basic information, but very useful for the immediate reconstruction of the incident scenario, including<br />

for Judicial Police activities.<br />

1 During the exercise, the team used the tools and solutions tested and adopted by the Centro Intercomunale di Protezione<br />

Civile Colline Marittime e Bassa Val di Cecina (COI), to manage and share geolocated information between volunteer<br />

teams, COI Operational room, and COC (Centro Operativo Comunale - municipal operational centre). These solutions,<br />

including a blog website to inform in real time the population and media representatives, have been successfully tested<br />

during a missing person intervention in Cecina.<br />


The missing person search procedure provides for setting up an ICP, based on the UCL van when possible, where TAS<br />

personnel must:<br />

1. zone the search area,<br />

2. load GPS devices with appropriate maps and search routes or areas,<br />

3. assign Search And Rescue (SAR) teams their area of operation (AO) and tune radio devices (TETRA system for<br />

VVF teams),<br />

4. monitor communication, facilitate cooperation and direct operations,<br />

5. download GPS tracks (once SAR teams come back) to check for areas not covered,<br />

6. inform the Operational Room (SO115) on activities.<br />
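
Step 5 of the list above can be illustrated with a minimal sketch. It assumes, purely for illustration, that the search zone is a rectangular bounding box split into a regular grid and that each downloaded track is a list of (latitude, longitude) points; the function name `uncovered_cells` and the grid approach are hypothetical and are not the TAS procedure itself.<br />

```python
from typing import Iterable, List, Set, Tuple

Point = Tuple[float, float]  # (latitude, longitude) in decimal degrees


def uncovered_cells(
    bbox: Tuple[float, float, float, float],
    tracks: Iterable[Iterable[Point]],
    rows: int,
    cols: int,
) -> List[Tuple[int, int]]:
    """Return the (row, col) grid cells of the search zone that no track point falls in.

    bbox is (min_lat, min_lon, max_lat, max_lon); rows and cols set the grid resolution.
    """
    min_lat, min_lon, max_lat, max_lon = bbox
    cell_h = (max_lat - min_lat) / rows
    cell_w = (max_lon - min_lon) / cols
    visited: Set[Tuple[int, int]] = set()
    for track in tracks:
        for lat, lon in track:
            if not (min_lat <= lat <= max_lat and min_lon <= lon <= max_lon):
                continue  # point recorded outside the search zone
            # Points on the upper edges are clamped into the last row/column.
            r = min(int((lat - min_lat) / cell_h), rows - 1)
            c = min(int((lon - min_lon) / cell_w), cols - 1)
            visited.add((r, c))
    return [(r, c) for r in range(rows) for c in range(cols) if (r, c) not in visited]
```

A cell counts as covered as soon as one track point falls in it, so the grid resolution should roughly match the width a search team can actually sweep.<br />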

A common platform to share information uploaded by professionals from different organizations (Fire Fighters,<br />

municipal and national police forces, Civil Protection volunteers, specialized SAR teams) should dramatically<br />

improve operational efficiency.<br />

Information sharing on a web 2.0 platform could be used for missing person searches as for every emergency<br />

operation.<br />

This is a goal not only for the Italian Fire Fighters’ internal procedures, which link the ICP to field teams<br />

and SO115, but also for all public bodies involved in emergency and disaster management.<br />

The platform is suitable for coordinating different emergency operations and major disaster relief.<br />

Real-time information sharing is well suited to providing, for example, technical support by geologists during severe<br />

storms that lead to floods and landslides or by air analysts during CBRN terrorist attacks.<br />

At the same time the platform would facilitate information dissemination to media and directly to citizens.<br />

5. Protec2011 Exercise<br />

The Protec2011 Exercise was based on a missing person search scenario and it was carried out during the Protec<br />

2011 Exhibition (http://www.protec-italia.it/indexk.php). This made it possible to involve the conference attendees<br />

as VGI sensors and to get independent feedback on procedures and activities.<br />

The TAS team was interested in testing interaction among GPS devices and data formats, radios, mobile phones<br />

and geo mapping software and also in verifying the IT infrastructure capacities.<br />

OpenResilience aimed to test VGI platforms like Ushahidi, Google Maps and Twitter to see if they satisfy the<br />

requirements related to the rescue operations. We were also interested in seeing the results of real-time translation<br />

among different GIS data formats (shp, KML, wpt, GPX, PLT) and different software platforms using GIS or<br />

web-GIS (OziExplorer, ArcPad, Google-maps, Ushahidi, Global Mapper).<br />
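
As an illustration of what translating between two of these formats involves, the following minimal sketch converts GPX waypoints into KML placemarks using only the Python standard library. It is not the tool chain used in the exercise: the function name `gpx_waypoints_to_kml` is hypothetical, GPX 1.1 input is assumed, and only waypoint names and positions are carried over.<br />

```python
import xml.etree.ElementTree as ET

GPX_NS = "http://www.topografix.com/GPX/1/1"   # assumes GPX 1.1 input
KML_NS = "http://www.opengis.net/kml/2.2"


def gpx_waypoints_to_kml(gpx_text: str) -> str:
    """Convert the <wpt> elements of a GPX 1.1 document into KML Placemarks."""
    gpx = ET.fromstring(gpx_text)
    ET.register_namespace("", KML_NS)  # serialise KML with a default namespace
    kml = ET.Element(f"{{{KML_NS}}}kml")
    doc = ET.SubElement(kml, f"{{{KML_NS}}}Document")
    for wpt in gpx.findall(f"{{{GPX_NS}}}wpt"):
        name = wpt.findtext(f"{{{GPX_NS}}}name", default="")
        placemark = ET.SubElement(doc, f"{{{KML_NS}}}Placemark")
        ET.SubElement(placemark, f"{{{KML_NS}}}name").text = name
        point = ET.SubElement(placemark, f"{{{KML_NS}}}Point")
        # KML stores coordinates as longitude,latitude; GPX uses lat/lon attributes.
        coords = f"{wpt.get('lon')},{wpt.get('lat')}"
        ET.SubElement(point, f"{{{KML_NS}}}coordinates").text = coords
    return ET.tostring(kml, encoding="unicode")
```

The coordinate order is the usual pitfall in this kind of conversion: swapping latitude and longitude silently moves every point to the wrong place on the map.<br />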

Usually each format or platform is used for a specific purpose; this creates many difficulties in emergency<br />

management (U.S. House of Representatives, 2006). The winning idea is to develop a “black box” able to<br />

contain and share different information from different actors and make it available to everyone.<br />

An extra test is the opportunity offered by open source software for smartphones, with automatic delivery of<br />

georeferenced information (SMS, MMS, photos, videos) to an emergency service number (like US 911 or EU<br />

112), that would allow a more effective rescue response.<br />

As the exercise location was ideal (full Wi-Fi, WiMAX, cellular phone, TETRA coverage), the interaction among<br />

different infrastructures and the device switch among them was to be tested too.<br />

This will allow better exercise tuning before field tests in difficult terrain. Moreover, the urban search<br />

gives interesting data for future improvement of fire operations, earthquake USAR and damage assessment,<br />

HAZMAT pollution and CBRN contamination.<br />


The exercise focuses on the test of web technologies and mapping instruments for the emergency<br />

management of information flows among different actors and aims to open a two-way communication<br />

channel with citizens.<br />

5.1. The scenario<br />

Mrs. Paola Bianchi, 75 years old, affected by Alzheimer’s disease, goes missing from her house during the morning.<br />

Her family raises the alarm at 2:00 pm. The Police department calls up, as per protocol, the Fire Department, the<br />

Prefect and the head of the Civil Protection volunteers.<br />

At the Operational Room (COC, located inside the Protec2011 Green Room) a Command Post is activated.<br />

The TAS Team reaches the area of the last sighting with the UCL van (Photo 2), which will be used as ICP and technical rescue<br />

management centre (as decreed by Italian Law). A TAS professional will receive the search area zoning defined by the OR<br />

and load the GPS devices, while a second professional will facilitate information exchange between SAR teams<br />

and OR.<br />

5.2. The crew<br />

OpenResilience and TAS Team planned the exercise and participated as described in Table 1. Turin and Aosta<br />

Fire Departments provided SAR personnel and K9 teams, while students from the University of Turin played<br />

civil protection volunteers, media reporters and citizens. A UNITO technician was a perfect Paola Bianchi,<br />

whose photo was published on the exercise blog (http://esercitazioneprotec.wordpress.com/). Some Protec2011<br />

conference attendees participated as witnesses.<br />

[1] UCL: DiLolli A. & Lombardo D. (search coordinators) + 1 VVF + 2 Prisma Engineering (LSUnet)<br />

[2] Operational Room: Rapisardi E. (exercise coordinator) + 2 web 2.0 specialists + 2 VVF<br />

[3] Search team 1: 2 VVF + K9 unit<br />

[4] Search team 2: 2 VVF + K9 unit<br />

[5] Search team 3: Lanfranco M. (devices tester) + 2 GIS specialists (UNITO students)<br />

[6] Civil Protection Volunteers: UNITO students<br />

[7] Citizens: UNITO students + Protec 2011 attendees<br />

[8] Audio / Video Operators: 2 VVF + 2 UNITO students<br />

[9] Media Observer: http://www.ilgiornaledellaprotezionecivile.it/<br />

Table 1. Crew composition<br />

5.3. Communication Infrastructure<br />

Commercial GSM/UMTS cellular network<br />

Lingotto Fiere internal Wi-Fi (plus an outdoor ad-hoc exercise network)<br />

Fire Department WiMAX<br />


The whole Turin Province is covered by a WiMAX 5GB network, used by the Provincial Command Centre for data<br />

exchange among Detachments and SO115. This network can also be used to connect terminals within<br />

urban and sub-urban zones, through a fixed antenna network and “on demand” mobile repeaters. Access<br />

is secured by password.<br />

Fire Department radio network<br />

The Italian Fire Department owns a nationwide radio network. The radio network links rescue teams to Provincial<br />

Operational Rooms while backbones link Regional Commands with the National Crisis Room. The VVF radio<br />

network has never failed during disasters since the Friuli Earthquake, back in 1976. TAS teams are able to geolocate<br />

VHF vehicle devices and some UHF personnel radios.<br />

GSM/UMTS cellular network: Prisma Engineering (http://www.prisma-eng.com/lsu_net.html)<br />

LSUnet can bring a GSM (or UMTS) network, carried in a backpack or on a trolley, wherever necessary. Disasters often<br />

undermine mobile networks directly (e.g. by interrupting the power supply) or indirectly (network congestion due<br />

to an excess of information exchange among the people involved).<br />

An LSUnet emergency network allows first responders to restore cellular coverage in a short time (10 minutes)<br />

to use standard phones or smartphones to coordinate relief efforts and/or to get two-way contact with<br />

affected citizens.<br />

Photo 1. COC (Operational Room)<br />

Photo 2. UCL (Incident Command Centre)<br />

6. Discussion<br />

The Protec2011 Exercise was an important test to highlight how the VVF procedures could be transferred<br />

to a web 2.0 environment and what the strengths and weaknesses of the adopted solutions are.<br />

From a wider perspective, the exercise underlined that geolocated information sharing is perceived as a need<br />

in any rescue or relief operation, as real-time communication, e.g. between the UCL and the COC, allows at<br />

least situational awareness and remote tactical control.<br />

Citizen involvement [crowdsourcing] was undoubtedly considered a plus, never tried<br />

before. The new emerging geolocation tools and platforms, although considered “poor” and unreliable by the<br />

academic community, represent a new challenge in a world where stakeholders’ needs for information and geolocated<br />

information are rapidly increasing and are the expression of a democratization of geodata access, that<br />


reflects a collaborative and proactive approach to cope with risks and disasters [“Towards a more resilient<br />

society” - Third Civil Protection Forum, 2009].<br />

However, to obtain more reliable data, the post-Gutenberg map makers should acquire some<br />

competence in mobile applications or be prepared through specific information campaigns (web and<br />

mobile literacy). The challenge is to “design a more robust collaborative mapping strategy” (Kerle, 2011)<br />

defining common guidelines.<br />

From the technological point of view, crowdmapping should take into account that geolocation accuracy<br />

depends heavily on the device’s GPS quality [in the commercial mobile phones tested - iPhone, BlackBerry, HTC,<br />

Samsung - GPS chips showed different levels of accuracy].<br />
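
One simple way to quantify such differences is to compare the positions reported by each device against a point of known coordinates. The sketch below is an assumption about how this could be done, not the method used in the exercise: it uses the haversine great-circle formula with a mean Earth radius, and the function names are hypothetical.<br />

```python
import math
from typing import Iterable, Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres (spherical approximation)


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def mean_error_m(reference: Tuple[float, float], fixes: Iterable[Tuple[float, float]]) -> float:
    """Mean distance in metres between a known reference point and a device's reported fixes."""
    ref_lat, ref_lon = reference
    distances = [haversine_m(ref_lat, ref_lon, lat, lon) for lat, lon in fixes]
    return sum(distances) / len(distances)
```

Running `mean_error_m` once per device on fixes collected at the same surveyed point gives a comparable figure for each handset.<br />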

One more feature to be introduced is an SMS channel to allow citizens to send reports, even though an SMS<br />

carries no geolocated information.<br />

On the back-end side, we are aware that we should focus more on the capability of the VVF and COC operators<br />

to interpret and validate citizens’ reports. The Ushahidi experience teaches that a validation process must be set<br />

up and should follow specific rules: this means that the personnel in charge of the validation should be trained<br />

on this specific issue and should develop some experience in the field.<br />
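
A validation rule of this kind can be sketched as a small workflow in which every citizen report starts as pending and only a trained operator can mark it verified or rejected. The data model below is hypothetical and is not taken from Ushahidi or from the exercise; the class, field and function names are assumptions made for illustration.<br />

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Set


class Status(Enum):
    PENDING = "pending"
    VERIFIED = "verified"
    REJECTED = "rejected"


@dataclass
class Report:
    text: str
    lat: Optional[float] = None  # None for reports without a position, e.g. plain SMS
    lon: Optional[float] = None
    status: Status = Status.PENDING
    reviewed_by: Optional[str] = None


def review(report: Report, operator: str, trained_operators: Set[str], accept: bool) -> Report:
    """Record a validation decision; only trained operators may validate."""
    if operator not in trained_operators:
        raise PermissionError(f"{operator} is not trained to validate reports")
    report.status = Status.VERIFIED if accept else Status.REJECTED
    report.reviewed_by = operator
    return report


def publishable(reports: List[Report]) -> List[Report]:
    """Only verified reports are shown on the shared map."""
    return [r for r in reports if r.status is Status.VERIFIED]
```

Keeping the reviewer's name on each report leaves a trace of who validated what, which is useful when the reliability of the map is questioned afterwards.<br />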

During debriefing, the participants underlined that the whole system should use a single platform in order to<br />

have all data on the same map: tracks, citizens’ reports, VVF operations.<br />

On the connectivity side, the internal Wi-Fi infrastructure (used by COC and UCL) was not appropriate for the<br />

purpose and the WiMAX didn’t work indoors, but the test of the LSUnet by Prisma Engineering for cellular voice<br />

communication was extremely positive; however, this communication network would not support any public<br />

web platforms as, in this exercise, it set up only a local voice channel.<br />

7. Step forward<br />

Collaborative mapping is a crucial need in any rescue and relief operation. Our recent experience leads us to<br />

focus the research on the development of a single platform [web and mobile] that allows different levels of<br />

geolocated information sharing, on a “user permissions” basis [anonymous user, registered user level 1, ….]. Our<br />

approach is to use solutions that are free and open [such as Google Maps, Google Earth, Google 3d,<br />

Ushahidi, OpenStreetMap, or Android apps for route tracking] and to develop a stable tool through the<br />

integration of diverse solutions ensuring a high level of sharing and collaboration among different players.<br />
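
The “user permissions” idea can be sketched as a mapping from each map layer to the minimum user level allowed to see it. Only the anonymous and registered levels come from the text; the `OPERATOR` level, the layer names and the function name below are hypothetical placeholders, since the full list of levels is not given here.<br />

```python
from enum import IntEnum
from typing import Dict, List


class Level(IntEnum):
    ANONYMOUS = 0
    REGISTERED = 1
    OPERATOR = 2  # hypothetical higher level, not named in the text


# Hypothetical layers and the minimum level required to view each of them.
LAYER_MIN_LEVEL: Dict[str, Level] = {
    "citizen_reports": Level.ANONYMOUS,
    "search_zones": Level.REGISTERED,
    "team_tracks": Level.OPERATOR,
}


def visible_layers(level: Level) -> List[str]:
    """Return, in alphabetical order, the layers a user of the given level may see."""
    return sorted(name for name, minimum in LAYER_MIN_LEVEL.items() if level >= minimum)
```

With ordered levels, each higher level automatically sees everything the lower ones see, which keeps the permission table short.<br />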

Step by step<br />

The next steps of our research team, apart from the crucial fundraising task, should start with a stricter<br />

evaluation of the information formats and standards used by the different civil protection players and bodies,<br />

and with an analysis of the information flow in some sample operations (e.g., missing person searches, critical<br />

infrastructure crippling, USAR).<br />

Further on we will carry out a platform/projects/solutions review to draw a bigger picture, so as to acquire the<br />

necessary information to design and implement the whole web/mobile system, which will be tested in TAS<br />

Team exercises and operations during the next winter season.<br />

A vademecum and a training package targeting different users will complete the basic<br />

research and support the spread of the adopted platform to all other Fire Departments and the further involvement<br />

of local government.<br />

Acknowledgements<br />

The project was started on behalf of the Turin Provincial Chief of Fire Fighters (Ing. Silvio Saffiotti) and<br />

with the authorization of the Ministry of Interior. The Lingotto Fiere crew strongly supported our activities during<br />

PROTEC2011, also with an ad-hoc Wi-Fi network. Prisma Engineering provided the LSUnet station. Students from the<br />

University of Turin staffed the volunteer team. Barbara Bersanti and Antonio Campus from Centro Intercomunale<br />

Protezione Civile Colline Marittime e Bassa Val di Cecina ran the Operational Room.<br />

References<br />

ALEXANDER D.E., 2001, Nature's Impartiality, Man's Inhumanity. Disasters 26(1), pp. 1-9.<br />

BENKLER Y., 2006. The wealth of networks. http://cyber.law.harvard.edu/wealth_of_networks/<br />

BURNINGHAM K., FIELDING J., THRUSH D., 2008, "It'll never happen to me": understanding public awareness of local<br />

flood risk. doi:10.1111/j.0361-3666.2007.01036.x, pp. 216-238.<br />

DRABEK T.E., 1999, Understanding Disaster Warning Responses. The Social Science Journal 36(3), pp. 515-523.<br />

GIARDINO, M., GIORDAN, D., AND AMBROGIO, S., 2004. GIS technologies for data collection, management and<br />

visualization of large slope instabilities: two applications in the Western Italian Alps. Natural Hazards and Earth<br />

System Sciences 4, pp. 197–211.<br />

GIARDINO M., PEROTTI L., LANFRANCO M., PERRONE G., 2010. GIS and Geomatics for disaster management and<br />

Emergency relief: a proactive response to natural hazards. Proceeding of Gi4DM 2010 Conference – Geomatics<br />

for Crisis Management. Turin (I).<br />

HARVARD HUMANITARIAN INITIATIVE, 2011, Disaster Relief 2.0: The Future of Information Sharing in Humanitarian<br />

Emergencies. Washington, D.C. and Berkshire, UK: UN Foundation & Vodafone Foundation Technology<br />

Partnership.<br />

KERLE N., 2011, Remote Sensing Based Post-Disaster Damage Mapping - Ready for a collaborative approach?,<br />

www.earthmagazine.org.<br />

MECHLER R., KUNDZEWICZ Z.W., 2010, Assessing Adaptation to Extreme Weather Events in Europe - Editorial. Mitig<br />

Adapt Strateg Glob Change 15(7), pp. 611-620.<br />

MCCLEARY P., 2011, Battlefield 411. Defense Technology International 6, vol. 5, p. 48.<br />

MCCLEARY P., 2011, Small-Unit Comms. Defense Technology International 7, vol. 5, p. 47.<br />

PEEK L.A., SUTTON J.N., 2003, An Exploratory Comparison of Disasters, Riots and Terrorist Acts. Disasters 27(4),<br />

pp. 319-335.<br />

PERRY R.W., LINDELL M.K., 2003, Preparedness for Emergency Response: Guidelines for the Emergency Planning<br />

Process. Disasters 27(4), pp. 336-350.<br />

PLOTNICK L., WHITE C., PLUMMER M., 2009, The Design of an Online Social Network for Emergency Management:<br />

A One-Stop Shop. In: Proceeding of 15 ACIS, San Francisco (USA).<br />

QUARANTELLI, E.L. (ed.), 1998. What is a Disaster? Perspectives on the Question. Routledge, Oxon (UK).<br />

O'REILLY T., 2007. What Is Web 2.0: Design Patterns and Business Models for the Next Generation of Software.<br />

http://oreilly.com/web2/archive/what-is-web-20.html<br />

UN/ISDR, 2005, Hyogo Framework for Action 2005-2015: Building the resilience of nations and communities to<br />

disasters (HFA). United Nations International Strategy for Disaster Reduction, Kobe, Hyogo (J).<br />

UNDP, 1994. Human Development Report. http://hdr.undp.org/en/reports/global/hdr1994/<br />

U.S. HOUSE OF REPRESENTATIVES, 2006. A Failure of Initiative. Final Report of the Select Bipartisan Committee to<br />

Investigate the Preparation for and Response to Hurricane Katrina.<br />

http://www.gpoaccess.gov/katrinareport/mainreport.pdf<br />

WISNER B., BLAIKIE P., CANNON T., DAVIS I., 2005, At Risk. 2nd edition, Routledge, Oxon (UK).<br />

WHITE C., PLOTNICK L., KUSHMA J., HILTZ S.R., TUROFF M., 2009, An Online Social Network for Emergency<br />

Management. In: Proceeding of 6 ISCRAM Conference, Gothenburg (S).<br />

SHEN X., 2010, Flood Risk Perception and Communication within Risk Management in Different Cultural<br />

Context. Graduate Research Series 1, UNU-EHS, Bonn (D).<br />

ZLATANOVA S., DILO A., 2010, A data Model for Operational and Situational information in Emergency Response:<br />

the Dutch Case. in: Proceeding of Gi4DM2010, Turin (I).<br />

SHORT PAPER<br />

An Integrated Quality Score for Volunteered Geographic<br />

Information on Forest Fires<br />

OSTERMANN F. and SPINSANTI L.<br />

Joint Research Centre of the European Commission, Italy<br />

frank.ostermann@jrc.ec.europa.eu<br />

Abstract: The paper presents the most recent developments in an exploratory research project<br />

that investigates the potential utility of volunteered geographic information (VGI) for fighting forest<br />

fires. As social media platforms change the way people communicate and share information in<br />

crisis situations, we focus on the value of, and the options for, integrating VGI with existing spatial data<br />

infrastructures (SDI) and crisis response procedures. Two major obstacles to using VGI in crisis<br />

situations are (1) a lack of quality control and (2) an increasing amount of information.<br />

Consequently, the overall quality and fitness-for-use of VGI must be assessed first. One year ago,<br />

we proposed a workflow for automatically processing and assessing the quality and the accuracy of<br />

VGI in the context of forest fires. This contribution presents the advancements since then, focusing<br />

on the approach to define and implement a measure of the overall quality/fitness-for-use of the<br />

content analyzed. A proposed integrated quality score (IQS) consists of two main criteria, i.e.<br />

relevance and credibility. For both criteria, we have identified several contributing components.<br />

The geographic context of a message is crucial because, as we argue, it allows<br />

both relevance and credibility to be assessed. However, the geographic context is difficult to establish,<br />

since a single piece of VGI can contain multiple types of geographic references, each of varying<br />

quality itself.<br />

Keywords: VGI, Forest fire, quality measure, crisis management, social networks.<br />

1. Introduction<br />

A substantial amount of information is already provided by the general public on and during natural and<br />

man-made disasters (Palen & Liu, 2007a). Moreover, the expected development and adoption of<br />

integrated mobile devices such as smartphones makes it likely that the amount of near-real-time,<br />

geographically referenced volunteered information will increase manifold during the coming years. In our case<br />

study, as Table 1 in the next section shows, the data retrieved in 2011 more than doubled with<br />

respect to 2010.<br />

This development is going to change the way information is collected, distributed and used. The uni-directional<br />

vertical flow of information from officials to the public via traditional broadcast media such as radio or television<br />

is being superseded by horizontal peer-to-peer(s) communication. The lines between public and official<br />

already blur when official administrative agencies (e.g. in British Columbia 2) use regular accounts on services run by private<br />

companies, such as Facebook or Twitter, to communicate. However, these newly created<br />

back-channels do not yet integrate well with established emergency response protocols. Clearly,<br />

many open questions remain to be investigated; recent examples of research on the role of<br />

volunteered information during actual disasters include work on wildfires (De Longueville et al., 2010; De<br />

Longueville, Smith, & Luraschi, 2009; Hudson-Smith, Crooks, Gibin, Milton, & Batty, 2009; Liu & Palen, 2010).<br />

In the case of volunteered information on crisis events, its potential utility depends on the possibility to<br />

georeference the information - if we cannot locate the user-generated content, it is impossible to act on it.<br />

While some volunteered information is explicitly geographic by itself (e.g. OpenStreetMap), other information is only<br />

implicitly geographic, since it mentions a place or has geographic coordinates as metadata. We group both<br />

types under the label of Volunteered Geographic Information (VGI). Another notable aspect is that this VGI is<br />

intrinsically heterogeneous as it is provided by different people, using different media such as photographs,<br />

text, or video, and authors often overcome device and software limitations in imaginative and unpredictable<br />

ways.<br />
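
To make the role of the geographic reference concrete, the following minimal Python sketch models a single piece of user-generated content and checks whether it can be located at all. The class `VGIItem`, its fields, the helper `is_locatable` and the sample values are hypothetical illustrations introduced here, not part of the project's implementation.<br />

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class VGIItem:
    """Hypothetical record for one piece of user-generated content."""
    text: str
    medium: str = "text"  # e.g. "text", "photo" or "video"
    # (latitude, longitude) attached as metadata, if any
    coordinates: Optional[Tuple[float, float]] = None
    # Places mentioned in the content itself, if any
    place_names: List[str] = field(default_factory=list)


def is_locatable(item: VGIItem) -> bool:
    """Content can only be acted on if it carries some geographic reference."""
    return item.coordinates is not None or bool(item.place_names)


# Illustrative sample items (invented for this sketch)
tweet = VGIItem(text="Smoke over the hills near Ispra", place_names=["Ispra"])
photo = VGIItem(text="forest fire", medium="photo", coordinates=(45.81, 8.61))
vague = VGIItem(text="Huge fire, stay safe!")

for item in (tweet, photo, vague):
    print(item.medium, is_locatable(item))
```

In this sketch, content without coordinates or place names is simply flagged as not locatable; how such content is treated in practice is outside the scope of the example.<br />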

The work presented here is part of an exploratory research project with the objectives (i) to develop, test,<br />

and deploy workflows able to quality-control volunteered geographic information and (ii) to assess the value of<br />

volunteered geographic information in supporting both early warning and local impact assessments of forest<br />

fires. For more details see (Spinsanti and Ostermann, 2010).<br />

As test cases for a proof-of-concept implementation, we decided to analyze two different social networks:<br />

Twitter and Flickr. The first is a micro-blogging network while the second is a photo sharing network. The<br />

research aims to study the two separately to identify their specific characteristics, but also to investigate<br />

how the two can complement each other.<br />

2 British Columbia Forest Fire Information - http://www.bcforestfireinfo.gov.bc.ca/<br />

The Twitter profile http://twitter.com/#!/BCGovFireInfo<br />

The Facebook profile http://www.facebook.com/group.php?gid=2290613964<br />

We started harvesting VGI at the beginning of the forest fire season in July 2010, and continued until the end of<br />

September 2010. At the time of writing, we are collecting data for the 2011 season. Using the public Twitter<br />

streaming API with a filter of 12-17 wildfire-related keywords (e.g. fire, forest, evacuation) in 8 different<br />

languages, we collected a total of 24.5 GB of data, equaling around 8 million Tweets for 2010. Using a similar<br />

set of keywords for searching Flickr, we retrieved metadata for around 700 thousand images for 2010. For<br />

2011, Table 1 shows that the amount of retrieved information has increased by more than 200% for both Twitter<br />

and Flickr. All of this VGI is potentially related to a forest fire. The large amount of information makes an<br />

automatic methodology for assessing the quality and the accuracy of the VGI essential. First, however,<br />

we have to geocode any location information.<br />
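
The keyword-based harvesting described above can be illustrated with a simple multilingual filter. This is only a sketch under stated assumptions: of the keywords below, only "fire", "forest" and "evacuation" are named in the text, while the Italian and Spanish terms are translations added here for illustration; `build_pattern` and `filter_messages` are hypothetical helpers and do not reproduce the matching rules of the Twitter streaming API.<br />

```python
import re
from typing import Dict, Iterable, List, Pattern

# Illustrative keyword lists per language (assumed, except the English terms)
KEYWORDS: Dict[str, List[str]] = {
    "en": ["fire", "forest", "evacuation"],
    "it": ["incendio", "foresta", "evacuazione"],
    "es": ["incendio", "bosque", "evacuación"],
}


def build_pattern(keywords: Dict[str, List[str]]) -> Pattern[str]:
    """Compile one case-insensitive, whole-word pattern for all languages."""
    terms = sorted({kw.lower() for kws in keywords.values() for kw in kws})
    alternatives = "|".join(re.escape(term) for term in terms)
    return re.compile(r"\b(?:" + alternatives + r")\b", re.IGNORECASE)


def filter_messages(messages: Iterable[str], pattern: Pattern[str]) -> List[str]:
    """Keep only the messages that contain at least one keyword."""
    return [msg for msg in messages if pattern.search(msg)]


PATTERN = build_pattern(KEYWORDS)

sample = [
    "Forest fire near the village",
    "Evacuazione in corso",
    "Nice weather today",
    "Campfire songs",
]
print(filter_messages(sample, PATTERN))
```

Matching whole words only is a design choice of this sketch: it discards "Campfire", but it would also miss inflected forms such as "fires". A keyword filter of this kind retains any message that merely contains a term, which is one reason the subsequent quality assessment is needed.<br />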

2. Creating VGI - geocoding user-generated content<br />

As defined in the previous section, VGI is information (text, image, video, etc.) with one or more<br />

geographic references, which can be explicit (coordinates) or implicit in the form of place names (toponyms).<br />

Explicit georeferences can be generated in two ways: either automatically by the device, if it has Global<br />

Positioning System (GPS) hardware, and then added by the software used to transmit the information; or<br />