Parallel Programming in Fortran 95 using OpenMP - People

Parallel Programming in Fortran 95 using OpenMP - People

Parallel Programming in Fortran 95 using OpenMP - People

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

3.2. Other clauses 49<br />

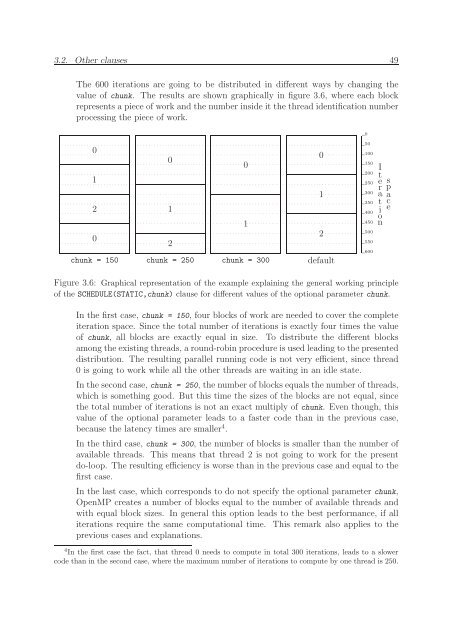

The 600 iterations are go<strong>in</strong>g to be distributed <strong>in</strong> different ways by chang<strong>in</strong>g the<br />

value of chunk. The results are shown graphically <strong>in</strong> figure 3.6, where each block<br />

represents a piece of work and the number <strong>in</strong>side it the thread identification number<br />

process<strong>in</strong>g the piece of work.<br />

0<br />

0<br />

0<br />

0<br />

0<br />

1<br />

1<br />

2<br />

1<br />

1<br />

0<br />

2<br />

2<br />

chunk = 150 chunk = 250 chunk = 300 default<br />

50<br />

100<br />

150<br />

200<br />

250<br />

300<br />

350<br />

400<br />

450<br />

500<br />

550<br />

600<br />

I<br />

t<br />

e r<br />

a<br />

ti<br />

o<br />

n<br />

s<br />

p<br />

ace<br />

Figure 3.6: Graphical representation of the example expla<strong>in</strong><strong>in</strong>g the general work<strong>in</strong>g pr<strong>in</strong>ciple<br />

of the SCHEDULE(STATIC,chunk) clause for different values of the optional parameter chunk.<br />

In the first case, chunk = 150, four blocks of work are needed to cover the complete<br />

iteration space. S<strong>in</strong>ce the total number of iterations is exactly four times the value<br />

of chunk, all blocks are exactly equal <strong>in</strong> size. To distribute the different blocks<br />

among the exist<strong>in</strong>g threads, a round-rob<strong>in</strong> procedure is used lead<strong>in</strong>g to the presented<br />

distribution. The result<strong>in</strong>g parallel runn<strong>in</strong>g code is not very efficient, s<strong>in</strong>ce thread<br />

0 is go<strong>in</strong>g to work while all the other threads are wait<strong>in</strong>g <strong>in</strong> an idle state.<br />

In the second case, chunk = 250, the number of blocks equals the number of threads,<br />

which is someth<strong>in</strong>g good. But this time the sizes of the blocks are not equal, s<strong>in</strong>ce<br />

the total number of iterations is not an exact multiply of chunk. Even though, this<br />

value of the optional parameter leads to a faster code than <strong>in</strong> the previous case,<br />

because the latency times are smaller 4 .<br />

In the third case, chunk = 300, the number of blocks is smaller than the number of<br />

available threads. This means that thread 2 is not go<strong>in</strong>g to work for the present<br />

do-loop. The result<strong>in</strong>g efficiency is worse than <strong>in</strong> the previous case and equal to the<br />

first case.<br />

In the last case, which corresponds to do not specify the optional parameter chunk,<br />

<strong>OpenMP</strong> creates a number of blocks equal to the number of available threads and<br />

with equal block sizes. In general this option leads to the best performance, if all<br />

iterations require the same computational time. This remark also applies to the<br />

previous cases and explanations.<br />

4 In the first case the fact, that thread 0 needs to compute <strong>in</strong> total 300 iterations, leads to a slower<br />

code than <strong>in</strong> the second case, where the maximum number of iterations to compute by one thread is 250.