Entropy and Mutual Information

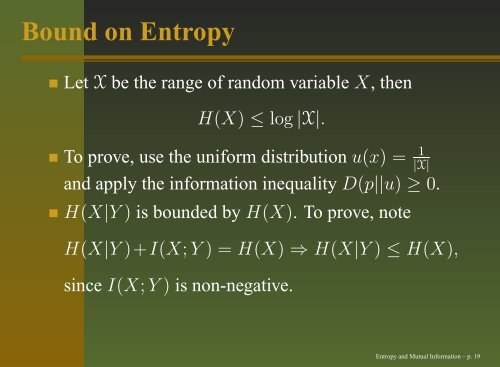

Bound on Entropy

Let $\mathcal{X}$ be the range of the random variable $X$. Then $H(X) \le \log |\mathcal{X}|$.

To prove this, use the uniform distribution $u(x) = 1/|\mathcal{X}|$ and apply the information inequality $D(p \| u) \ge 0$: since $D(p \| u) = \sum_x p(x) \log \frac{p(x)}{u(x)} = \log |\mathcal{X}| - H(X)$, the bound follows.

$H(X \mid Y)$ is bounded by $H(X)$. To prove this, note that $H(X \mid Y) + I(X; Y) = H(X) \Rightarrow H(X \mid Y) \le H(X)$, since $I(X; Y)$ is non-negative.
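The two bounds above can be checked numerically. The following is a minimal sketch, not part of the original slides: the joint distribution `joint` is a made-up example, the helper names `entropy` and `kl_divergence` are hypothetical, logarithms are taken base 2, and $H(X \mid Y)$ is computed through the chain rule $H(X \mid Y) = H(X, Y) - H(Y)$, which is assumed here rather than stated on the slide.

```python
import math

def entropy(p):
    """Shannon entropy in bits of a probability vector p (zero terms skipped)."""
    return -sum(q * math.log2(q) for q in p if q > 0)

def kl_divergence(p, u):
    """Relative entropy D(p||u) in bits; assumes u(x) > 0 wherever p(x) > 0."""
    return sum(q * math.log2(q / r) for q, r in zip(p, u) if q > 0)

# Hypothetical joint distribution p(x, y): rows index x, columns index y.
joint = [
    [0.30, 0.10],
    [0.05, 0.25],
    [0.10, 0.20],
]

p_x = [sum(row) for row in joint]          # marginal of X
p_y = [sum(col) for col in zip(*joint)]    # marginal of Y

h_x = entropy(p_x)
h_y = entropy(p_y)
h_xy = entropy([q for row in joint for q in row])

# Chain rule (assumed): H(X|Y) = H(X,Y) - H(Y)
h_x_given_y = h_xy - h_y
# Identity from the slide: H(X|Y) + I(X;Y) = H(X)
mutual_info = h_x - h_x_given_y

# Uniform distribution u(x) = 1/|X| on the range of X
n = len(p_x)
uniform = [1.0 / n] * n
d_pu = kl_divergence(p_x, uniform)

print(f"H(X)      = {h_x:.4f} bits, log|X| = {math.log2(n):.4f} bits")
print(f"D(p||u)   = {d_pu:.4f} bits")
print(f"H(X|Y)    = {h_x_given_y:.4f} bits")
print(f"I(X;Y)    = {mutual_info:.4f} bits")
```

For this example, $D(p \| u)$ should equal the gap $\log |\mathcal{X}| - H(X)$ up to floating-point error, and the gap $H(X) - H(X \mid Y)$ is the mutual information.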