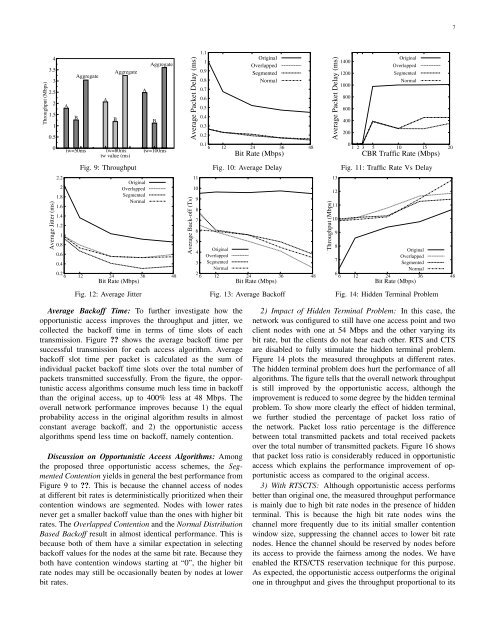

mode with two clients: A at 130 Mbps and B at 39 Mbps. t_w took the values of 50, 80, and 100 ms in the evaluation.

Figure 7 shows the impact on throughput. When t_w was doubled from 50 to 100 ms, the throughput of A improved by about 40% while that of B dropped by only about 15%, and the aggregate network throughput improved by about 20%. This is because, as the opportunism increases with t_w, A gets more chances and B gets fewer chances to use the channel.

Figure 8 shows the impact on jitter, which is opposite to the impact on throughput. When t_w was doubled, the jitter of A fell from 5 ms to about 3.5 ms while the jitter of B rose from 7.7 ms to 10 ms, again because A got more chances and B got fewer chances to use the channel. However, both jitters remain well below the bound that real-time applications require.

B. Evaluation with Simulation

We implemented all three proposed algorithms in the NS3 simulator [25] and evaluated their performance with extensive simulations. In each case, our algorithms were compared with the default CSMA/CA without opportunism. The evaluation began with a simple infrastructure topology having one access point and two clients, then studied the impact of hidden terminals on opportunistic access, compared with opportunistic transmission in the case of mobility, and finally evaluated the performance on a multi-hop mesh network. All experiments used constant bit rate (CBR) UDP traffic at a rate of 10 Mbps for 5 minutes with a packet size of 1K bytes. The results are averaged over 50 runs for each case.
In simulations, α in Formula 1 was set to 1.7 and the weighted window t_w was set to 50 ms. In the following performance figures, “Original” represents the default algorithm in CSMA/CA, “Overlapped” the Overlapped Contention, “Segmented” the Segmented Contention, and “Normal” the Normal Distribution Based Backoff Selection.

1) Triple-node Infrastructure Topology: We first evaluated the throughput and jitter performance of opportunistic access against the original algorithm in a simple topology: one access point and two client nodes. All nodes were within radio range of each other. The client nodes transmitted packets to the access point at different bit rates. One client node was fixed at 54 Mbps, while the other varied its bit rate over the IEEE 802.11 rate set consisting of 6, 12, 24, 36, and 48 Mbps.

Throughput Performance: Figure 9 plots the throughput when one client changes its bit rate to imitate changes in channel conditions. The X-axis represents the bit rates and the Y-axis represents the throughput of the opportunistic access algorithms and of the original CSMA/CA algorithm. The figure shows that opportunistic access improves the throughput by approximately 30-100%, depending on channel conditions, compared to the original algorithm.

Delay Performance: Figure 10 plots the measured average delay at different bit rates. Opportunistic access shows a significant improvement in delay over the original access. This is because the high bit rate nodes in opportunistic access spend very little time in contention and transmit packets rapidly. The lower bit rate nodes experience a much larger delay by comparison, because collisions occur when back-offs terminate at the same time, and the contention window size doubles each time a node encounters a collision.
Yet the overall network delay is improved significantly over the default CSMA/CA method. To show this more clearly, we further studied the delay performance as the traffic rate increases (Figure 11). As expected, the delay increases with the CBR traffic rate because packets are transmitted more frequently. Although the variation in individual packet delay between high bit rate nodes and low bit rate nodes is high, the overall network performance of opportunistic access is improved.

Jitter Performance: Figure 12 shows the measured jitter at different bit rates. Jitter is the delay variation between two consecutive successful packet receptions, and the plotted result is the average delay variation over all received packets. Surprisingly, opportunistic access outperforms the original algorithm in jitter as well. This is because, with opportunistic access, the high bit rate nodes obtain much smaller initial contention windows than those in the default CSMA/CA, so the backoff selected in contention is significantly reduced. As a result, although lower bit rate nodes experience larger jitter than higher bit rate nodes, the overall jitter across the network is improved because of the shortened time spent in contention with opportunistic access.

Fig. 6: Ad-hoc
Fig. 7: Impact of t_w on Throughput (X-axis: t_w value (ms); Y-axis: Throughput (Mbps))
Fig. 8: Impact of t_w on Jitter (X-axis: t_w value (ms); Y-axis: Jitter (ms))
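The jitter metric described under Jitter Performance can be illustrated with a minimal sketch. The text does not say whether the delay variation is signed or absolute, nor what the exact averaging denominator is, so this sketch assumes the absolute difference between consecutive delays, averaged over the number of consecutive pairs. The function name and the sample delays are hypothetical.

```python
from typing import Sequence


def average_jitter(delays_ms: Sequence[float]) -> float:
    """Mean delay variation between consecutive received packets.

    Assumption (not stated in the text): the variation is the absolute
    difference of consecutive one-way delays, averaged over all pairs.
    """
    if len(delays_ms) < 2:
        # With fewer than two receptions there is no variation to measure.
        return 0.0
    variations = [abs(later - earlier)
                  for earlier, later in zip(delays_ms, delays_ms[1:])]
    return sum(variations) / len(variations)


# Hypothetical per-packet delays in ms, in order of reception.
sample_delays = [4.0, 6.0, 5.0, 5.0]
print(average_jitter(sample_delays))  # variations are 2, 1, 0 -> mean 1.0
```

Under this definition a node with a constant delay has zero jitter regardless of how large that delay is, which is why delay and jitter are reported separately.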

Fig. 9: Throughput (X-axis: Bit Rate (Mbps); Y-axis: Throughput (Mbps))
Fig. 10: Average Delay (X-axis: Bit Rate (Mbps); Y-axis: Average Packet Delay (ms))
Fig. 11: Traffic Rate Vs Delay (X-axis: CBR Traffic Rate (Mbps); Y-axis: Average Packet Delay (ms))
Fig. 12: Average Jitter (X-axis: Bit Rate (Mbps); Y-axis: Average Jitter (ms))
Fig. 13: Average Backoff (X-axis: Bit Rate (Mbps); Y-axis: Average Back-off (Ts))
Fig. 14: Hidden Terminal Problem (X-axis: Bit Rate (Mbps); Y-axis: Throughput (Mbps))
Legend for Figs. 9-14: Original, Overlapped, Segmented, Normal

Average Backoff Time: To further investigate how opportunistic access improves throughput and jitter, we collected the backoff time, in time slots, of each transmission. Figure 13 shows the average backoff time per successful transmission for each access algorithm. The average backoff per packet is calculated as the sum of the backoff time slots of the individual packets divided by the total number of packets transmitted successfully. The figure shows that the opportunistic access algorithms spend much less time in backoff than the original access, up to 400% less at 48 Mbps.
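The average backoff calculation described above can be sketched as follows. This is only an illustration under stated assumptions: each list entry is taken to be the total number of backoff slots one successfully transmitted packet waited (including any retries), and the function name and sample values are hypothetical rather than taken from the simulation.

```python
from typing import Sequence


def average_backoff_slots(slots_per_delivered_packet: Sequence[int]) -> float:
    """Sum of per-packet backoff slots over the number of delivered packets.

    Assumption: every entry corresponds to one successfully transmitted
    packet and already includes the slots spent on its retries.
    """
    delivered = len(slots_per_delivered_packet)
    if delivered == 0:
        # No successful transmissions, so the average is undefined; report 0.
        return 0.0
    return sum(slots_per_delivered_packet) / delivered


# Hypothetical backoff slot counts for four delivered packets.
sample_slots = [3, 7, 0, 10]
print(average_backoff_slots(sample_slots))  # 20 slots / 4 packets = 5.0
```

Because the denominator counts only successful transmissions, slots wasted before a collision raise the average, so the metric reflects contention cost per delivered packet.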
The overall network performance improves because 1) the equal-probability access in the original algorithm results in an almost constant average backoff, and 2) the opportunistic access algorithms spend less time on backoff, that is, on contention.

Discussion on Opportunistic Access Algorithms: Among the three proposed opportunistic access schemes, the Segmented Contention yields in general the best performance from Figure 9 to ??. This is because the channel access of nodes at different bit rates is deterministically prioritized when their contention windows are segmented: nodes with lower bit rates never get a smaller backoff value than nodes with higher bit rates. The Overlapped Contention and the Normal Distribution Based Backoff yield almost identical performance, because both have a similar expected backoff value for nodes at the same bit rate. Because both have contention windows starting at “0”, the higher bit rate nodes may still occasionally be beaten by nodes at lower bit rates.

2) Impact of Hidden Terminal Problem: In this case, the network was again configured with one access point and two client nodes, one at 54 Mbps and the other varying its bit rate, but the clients could not hear each other. RTS and CTS were disabled to fully simulate the hidden terminal problem. Figure 14 plots the measured throughput at different rates. The hidden terminal problem hurts the performance of all algorithms. The figure shows that opportunistic access still improves the overall network throughput, although the improvement is reduced to some degree by the hidden terminal problem. To show the effect of hidden terminals more clearly, we further studied the packet loss ratio of the network.
The packet loss ratio, expressed as a percentage, is the difference between the total number of transmitted packets and the total number of received packets, divided by the total number of transmitted packets. Figure 16 shows that the packet loss ratio is considerably reduced with opportunistic access, which explains its performance improvement over the original access.

3) With RTS/CTS: Although opportunistic access performs better than the original one, the measured throughput in the presence of hidden terminals is mainly due to the high bit rate nodes. This is because the high bit rate nodes win the channel more frequently owing to their smaller initial contention window, suppressing channel access for the lower bit rate nodes. Hence nodes should reserve the channel before accessing it, to provide fairness among the nodes. We enabled the RTS/CTS reservation technique for this purpose. As expected, the opportunistic access outperforms the original one in throughput and gives the throughput proportional to its