4) The tile loops are reordered and optimized. Again,this may involve reshaping the tile iteration spacevia loop skewing and interchanging. The optimizationat this level will depend on the model of parallelismused by the system, and the dependence constraintsbetween tiles. The method described in(IrTr88] has one outermost serial loop surroundingseveral inner parallel tile loops, using loop skewing(wavefronting) in the tile iteration space to satisfyany dependence relations. We also wish to takeadvantage of locality between tiles by giving eachprocessor either a rule for which tile to execute nextor at least a preference for which direction in the tileiteration space to process tiles to best take advantageof locality. The sizes of the tiles are also set atthis time.Let us show some simple examples to illustratezation process.the optimi-Example 1: Given a simple nested sequential loop,such as Program 19a, let us see how tiling would optimizethe loop for multiple vector processors with private caches.For a simple vector computer, we would be tempted tointerchange and vectorize the I loop, because it gives achained multiply-add vector operation and all the memoryreferences are stride-l (with Fortran column-major storage;otherwise the J loop would be used); this is shown in Program19b. However, if the column size (N) was larger thanthe cache size, each pass through the K loop would have toreload the whole column of A into the cache.Program 19a:do I = 1, Ndo J = 1, MA(I,J) = 0.0doK=l, LA(I,J) = A(I,J) + B(I,K)*C(K,J)enddoenddoenddoProgramlSb:do J = 1, MA(1:N.J) = 0.0doK=l, LA(l:N,J) = A(1enddoenddo:N,J)+ B(l:N,K)*C(K,J)For a simple multiprocessor, we might be tempted tointerchange the J loop outwards and parallelize it, as inProgram 19c, so that each processor would operate on distinctcolumns of A and C. Each pass through the K loopwould again have to reload the cache with a row of B andcolumn of C if L is too large.Program19c:doall J = 1, Mdo I = 1, NA(1.J) = 0.0doK=l, LA(I,J) = A(I,J) + B(I,K)*C(K,J)enddoenddoenddoInstead, let us attempt to tile the entire iterationspace. We will use symbolic names for the tile size in eachdimension, since determining the tile sizes will be donelater. <strong>Tiling</strong> the iteration space can proceed even thoughthe loops are not perfectly nested. Essentially, each loop isstrip-mined, then the strip loops are interchanged outwardsto become the tile loops. The tiled program isshown in Program 19d.Program 19d:do IT = 1, N, ITSdo JT = 1, M, JTSdo I = IT, MIN(N,IT+ITS-1)do J = 1, MIN(M,JT+JTS-1)A(I,J) = 0.0enddoenddodo KT = 1, L, KTSdo I = IT, MIN(N,IT+ITS-1)do J = 1, MIN(M,JT+JTS-1)do K = 1, MIN(L,KT+KTS-1)A(1.J) = A(I,J) + B(I,X)*C(K,J)enddoenddoenddoenddoenddoenddoEach set of element loops is ordered to provide the kind oflocal performance the machine needs. The first set of elementloops has no locality (the footprint of A is ITSXJTS,the same size as the iteration space), so we need onlyoptimize for vector operations and perhaps memory stride;we do this by vectorizing the I loop.IVL = MIN(N,IT+ITS-1)do J = 1, MIN(M,.JT+JTS-1)A(IT:IT+IVL,J) = 0.0enddoThe second set of element loops can be reordered 6 ways;the JKI ordering gives stride-l vector operations in theinner loop, and one level of locality for A in the secondinner loop (the footprint of A in the K loop is ITS whilethe iteration space is ITSXKTS. Furthermore, if ITS isthe size of a vector register, the footprint of A fits into avector register during that loop, meaning that the vectorregister load and store of A cart be floated out of the K loopentirely.662

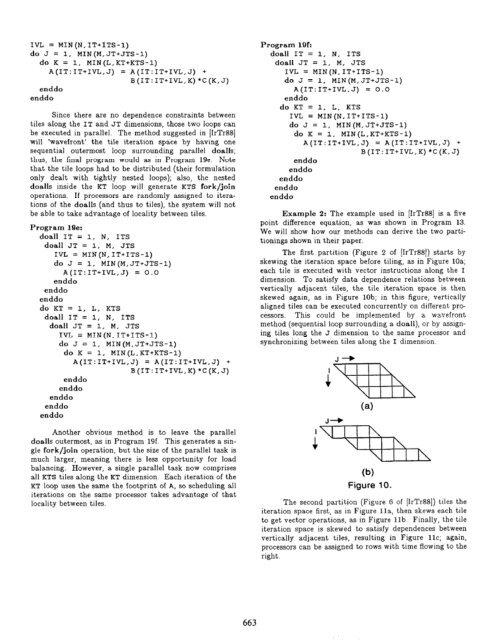

IVL = MTN(N,IT+ITS-1)do J = 1, MIN(M,JT+JTS-1)do K = 1, MIN(L,KT+KTS-1)A(IT:IT+IVL,J) = A(IT:IT+IVL,J) +B(IT:IT+IVL,K)*C(K,J)enddoenddoSince there are no dependence constraints betweentiles along the IT and JT dimensions, those two loops canbe executed in parallel. The method suggested in [IrTr88]will ‘wavefront’ the tile iteration space by having onesequential outermost loop surrounding parallel doalls;thus, the final program would as in Program lge. Notethat the tile loops had to be distributed (their formulationonly dealt with tightly nested loops); also, the nesteddoalls inside the KT loop will generate KTS fork/joinoperations. If processors are randomly assigned to iterationsof the doalls (and thus to tiles), the system will notbe able to take advantage of locality between tiles.Program 19,:doall IT = 1, N, ITSdoall JT = 1, M, JTSIVL = MIN(N,IT+ITS-1)do J = 1, MIN(M,JT+JTS-1)A(IT:IT+IVL,J) = 0.0enddoenddoenddodo KT = 1, L, KTSdoall IT = 1, N, ITSdoall JT = 1, M, JTSIVL = MIN(N,IT+ITS-1)do J = 1, MIN(M,JT+JTS-1)do K = 1. MIN(L,KT+KTS-1)A(IT:IT+IVL,J) = A(IT:IT+IVL,J) +B(IT:IT+IVL,K)*C(K,J)enddoenddoenddoenddoenddoAnother obvious method is to leave the paralleldoalls outermost, as in Program 19f. This generates a singlefork/join operation, but the size of the parallel task ismuch larger, meaning there is less opportunity for loadbalancing. However, a single parallel task now comprisesall KTS tiles along the KT dimension. Each iteration of theKT loop uses the same the footprint of A, so scheduling alliterations on the same processor takes advantage of thatlocality between tiles.Program 19f:doall IT = 1, N, ITSdoall JT = 1, M, JTSIVL = MIN(N,IT+ITS-1)da J = 1, MIN(M,JT+JTS-1)A(IT:IT+IVL,J) = 0.0enddodo KT = 1, L, KTSIVL = MIN(N,IT+ITS-1)do J = 1, MIN(M,JT+JTS-1)do K = 1, MIN(L,KT+KTS-1)A(IT:IT+IVL,J) = A(IT:IT+IVL,J) +B(IT:IT+IVL,K)*C(K,J)enddoenddoenddoenddoenddoExample 2: The example used in [IrTr88] is a fivepoint difference equation, as was shown in Program 13.We will show how our methods can derive the two partitioningsshown in their paper.The first partition (Figure 2 of [IrTr88]) starts byskewing the iteration space before tiling, as in Figure 10a;each tile is executed with vector instructions along the Idimension. To satisfy data dependence relations betweenvertically adjacent tiles, the tile iteration space is thenskewed again, as in Figure lob; in this figure, verticallyaligned tiles can be executed concurrently on different processors.This could be implemented by a wavefrontmethod (sequential loop surrounding a doall), or by assigningtiles long the J dimension to the same processor andsynchronizing between tiles along the I dimension.(a)(b)Figure 10.The second partition (Figure G of [IrTr88]) tiles theiteration space first, as in Figure lla, then skews each tileto get vector operations, as in Figure lib. Finally, the tileiteration space is skewed to satisfy dependences betweenvertically adjacent tiles, resulting in Figure llc; again,processors can be assigned to rows with time flowing to theright.663

![Problem 1: Loop Unrolling [18 points] In this problem, we will use the ...](https://img.yumpu.com/36629594/1/184x260/problem-1-loop-unrolling-18-points-in-this-problem-we-will-use-the-.jpg?quality=85)