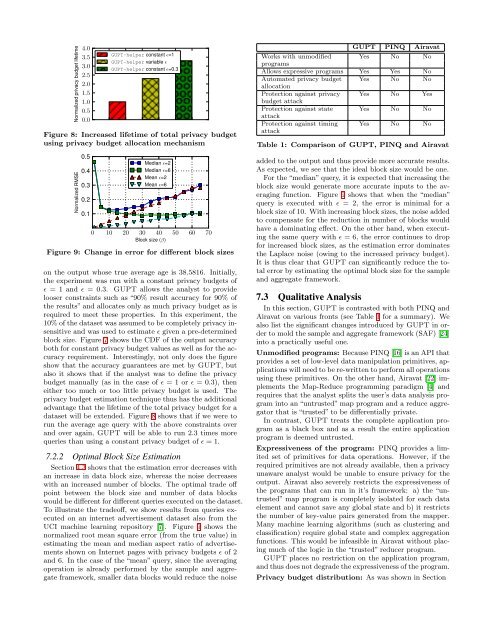

on the output whose true average age is 38.5816. Initially, the experiment was run with constant privacy budgets of ɛ = 1 and ɛ = 0.3. GUPT allows the analyst to provide looser constraints such as “90% result accuracy for 90% of the results” and allocates only as much privacy budget as is required to meet these properties. In this experiment, 10% of the dataset was assumed to be completely privacy insensitive and was used to estimate ɛ given a pre-determined block size. Figure 7 shows the CDF of the output accuracy both for constant privacy budget values and for the accuracy requirement. Interestingly, the figure shows not only that the accuracy guarantees are met by GUPT, but also that if the analyst were to define the privacy budget manually (as in the case of ɛ = 1 or ɛ = 0.3), then either too much or too little privacy budget is used. The privacy budget estimation technique thus has the additional advantage that the lifetime of the total privacy budget for a dataset will be extended. Figure 8 shows that if we were to run the average age query with the above constraints over and over again, GUPT would be able to run 2.3 times more queries than with a constant privacy budget of ɛ = 1.

Figure 8: Increased lifetime of total privacy budget using privacy budget allocation mechanism. (Y-axis: normalized privacy budget lifetime; series: GUPT-helper constant ɛ=1, GUPT-helper variable ɛ, GUPT-helper constant ɛ=0.3.)

Figure 9: Change in error for different block sizes. (X-axis: block size (β); y-axis: normalized RMSE; series: Median ɛ=2, Median ɛ=6, Mean ɛ=2, Mean ɛ=6.)

7.2.2 Optimal Block Size Estimation

Section 4.3 shows that the estimation error decreases with an increase in data block size, whereas the noise decreases with an increased number of blocks.
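
As a rough illustration of the noise side of this trade-off, the sketch below computes the scale of the Laplace noise for a given block size. It assumes the common sample-and-aggregate setup in which the records are partitioned into disjoint blocks of size β, each block output is clamped to a known range, and the noise is calibrated to the sensitivity of the average of the block outputs. The function name, the dataset size and the output range are hypothetical and are not taken from the GUPT implementation.

```python
def laplace_noise_scale(n_records, block_size, epsilon, out_min, out_max):
    """Scale of the Laplace noise added to the average of clamped block outputs.

    Assumption: each record falls in exactly one block, so changing one record
    changes one block output by at most (out_max - out_min), and the average
    over all blocks by at most (out_max - out_min) / num_blocks.
    """
    if block_size < 1 or epsilon <= 0 or out_max < out_min:
        raise ValueError("invalid block size, epsilon, or output range")
    num_blocks = n_records // block_size
    if num_blocks < 1:
        raise ValueError("block size exceeds the number of records")
    sensitivity = (out_max - out_min) / num_blocks
    return sensitivity / epsilon


# Hypothetical setting: 3000 records, block outputs clamped to [0, 10].
for beta in (1, 10, 50):
    for eps in (2.0, 6.0):
        scale = laplace_noise_scale(3000, beta, eps, 0.0, 10.0)
        print(f"block size {beta:>2}, epsilon {eps}: noise scale {scale:.5f}")
```

Under these assumptions the noise scale grows linearly with the block size and shrinks as ɛ grows, so larger blocks only pay off when the reduction in estimation error outweighs the extra noise.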
The optimal trade-off point between the block size and the number of data blocks would be different for different queries executed on the dataset. To illustrate the trade-off, we show results from queries executed on an internet advertisement dataset, also from the UCI machine learning repository [7]. Figure 9 shows the normalized root mean square error (from the true value) in estimating the mean and median aspect ratio of advertisements shown on Internet pages with privacy budgets ɛ of 2 and 6. In the case of the “mean” query, since the averaging operation is already performed by the sample and aggregate framework, smaller data blocks would reduce the noise added to the output and thus provide more accurate results. As expected, we see that the ideal block size would be one.

For the “median” query, it is expected that increasing the block size would generate more accurate inputs to the averaging function. Figure 9 shows that when the “median” query is executed with ɛ = 2, the error is minimal for a block size of 10. With increasing block sizes, the noise added to compensate for the reduction in the number of blocks would have a dominating effect. On the other hand, when executing the same query with ɛ = 6, the error continues to drop for increased block sizes, as the estimation error dominates the Laplace noise (owing to the increased privacy budget). It is thus clear that GUPT can significantly reduce the total error by estimating the optimal block size for the sample and aggregate framework.

7.3 Qualitative Analysis

In this section, GUPT is contrasted with both PINQ and Airavat on various fronts (see Table 1 for a summary). We also list the significant changes introduced by GUPT in order to mold the sample and aggregate framework (SAF) [24] into a practically useful one.

| | GUPT | PINQ | Airavat |
|---|---|---|---|
| Works with unmodified programs | Yes | No | No |
| Allows expressive programs | Yes | Yes | No |
| Automated privacy budget allocation | Yes | No | No |
| Protection against privacy budget attack | Yes | No | Yes |
| Protection against state attack | Yes | No | No |
| Protection against timing attack | Yes | No | No |

Table 1: Comparison of GUPT, PINQ and Airavat

Unmodified programs: Because PINQ [16] is an API that provides a set of low-level data manipulation primitives, applications will need to be re-written to perform all operations using these primitives. On the other hand, Airavat [22] implements the Map-Reduce programming paradigm [4] and requires that the analyst split the user’s data analysis program into an “untrusted” map program and a reduce aggregator that is “trusted” to be differentially private.

In contrast, GUPT treats the complete application program as a black box and as a result the entire application program is deemed untrusted.

Expressiveness of the program: PINQ provides a limited set of primitives for data operations. However, if the required primitives are not already available, then a privacy unaware analyst would be unable to ensure privacy for the output. Airavat also severely restricts the expressiveness of the programs that can run in its framework: a) the “untrusted” map program is completely isolated for each data element and cannot save any global state and b) it restricts the number of key-value pairs generated from the mapper. Many machine learning algorithms (such as clustering and classification) require global state and complex aggregation functions.
This would be infeasible in Airavat without placing much of the logic in the “trusted” reducer program.

GUPT places no restriction on the application program, and thus does not degrade the expressiveness of the program.

Privacy budget distribution: As was shown in Section 7.1.2, PINQ requires the analyst to allocate a privacy budget for each operation on the data. An inefficient distribution of the budget either adds too much noise or uses up too much of the budget. Like GUPT, Airavat spends a constant privacy budget on the entire program. However, neither Airavat nor PINQ provides any support for distributing an aggregate privacy budget across multiple data analysis programs. Using the aging of sensitivity model, GUPT provides a mechanism for efficiently distributing an aggregate privacy budget across multiple data analysis programs.

Side channel attacks: The current implementation of PINQ places the onus of protecting against side channel attacks on the program developer. As was noted in [10], although Airavat protects against privacy budget attacks, it remains vulnerable to state attacks. GUPT defends against both state attacks and privacy budget attacks (see Section 6.2).

Other differences: GUPT extends SAF to use a novel data resampling mechanism to reduce the variance in the output induced via data sub-sampling. Using the aging of sensitivity model, GUPT overcomes a fundamental issue in differential privacy not considered previously (to the best of our knowledge): for an arbitrary data analysis application, how do we describe an abstract privacy budget in terms of utility? The model also allows us to further reduce the error in SAF by estimating a reasonable block size.

8. DISCUSSION

Approximately normal computation: GUPT does not provide any theoretical guarantees on the accuracy of the outputs if the applied computation is not approximately normal, or if the data entries are not independently and identically distributed (i.i.d.). However, GUPT still guarantees that the differential privacy of individual data records is always maintained.
In practice, GUPT provides reasonably accurate results for many queries that do not satisfy approximate normality.

For some real world datasets, such as sensing and streaming data that have temporal correlation and do not satisfy the i.i.d. property, the current implementation of GUPT fails to provide reasonable output. In future work we intend to extend GUPT to cater to these kinds of datasets.

Ordering of multiple outputs: When a data analysis program yields multi-dimensional outputs, the program may not be able to guarantee the same ordering of outputs for different data blocks. In this case, we have to sort the outputs according to some canonical form before applying the averaging operation. For example, in the case of k-means clustering, each block can yield a different ordering of the k cluster centers. In our experiments, the cluster centers were sorted according to their first coordinate.

8.1 Limitations

Foreknowledge of output dimension: GUPT assumes that the output dimensions are known in advance. However, this may not always be true in practice. For example, Support Vector Machines (SVM) output an indefinite number of support vectors. Unless the dimension is fixed in advance, private information may leak through the dimensionality itself. Another problem is that if the output dimensions for different blocks are different, then averaging the outputs will not be meaningful. A partial solution is to clamp or pad the output dimension to a predefined number. We note that this limitation is not unique to GUPT. For example, Airavat [22] also suffers from the same problem in the sense that its mappers have to output a fixed number of (key, value) pairs.

Inherits limitations of differential privacy: GUPT inherits some of the issues that are common to many differential privacy mechanisms.
For high dimensional data outputs, the privacy budget needs to be split across the multiple outputs. The privacy budget also needs to be split across multiple data mining tasks and users. Given a fixed total privacy budget, the more tasks and queries we divide the budget over, the more noise there is, and at some point the magnitude of the noise may render the data analysis unusable. In Section 5.2, we outlined a potential method for managing privacy budgets. Alternative approaches such as auctioning of privacy budget [9] can also be used.

While differential privacy guarantees the privacy of individual records in the dataset, many real world applications will want to enforce higher level privacy concepts such as user-level privacy [6]. In cases where multiple records may contain information about the same user, user-level privacy needs to be accounted for accordingly.

9. CONCLUSION AND FUTURE WORK

GUPT makes privacy-preserving data analytics easy for privacy non-experts. The analyst can upload arbitrary data mining programs and GUPT guarantees the privacy of the outputs. We propose novel improvements to the sample and aggregate framework to enhance the usability and accuracy of the data analysis. Through a new model that reduces the sensitivity of the data over time, GUPT is able to represent privacy budgets as accuracy guarantees on the final output. Although this model is not strictly required for the default functioning of GUPT, it improves both usability and accuracy. Through the efficient distribution of the limited privacy budget between data queries, GUPT is able to ensure that more queries that meet both their accuracy and privacy goals can be executed on the dataset.
Through experiments on real-world datasets, we demonstrate that GUPT can achieve reasonable accuracy in private data analysis.

Acknowledgements

We would like to thank Adam Smith, Daniel Kifer, Frank McSherry, Ganesh Ananthanarayanan, David Zats, Piyush Srivastava and the anonymous reviewers of SIGMOD for their insightful comments that have helped improve this paper. This project was funded by Nokia, Siemens, Intel through the ISTC for Secure Computing, NSF awards (CPS-0932209, CPS-0931843, BCS-0941553 and CCF-0747294) and the Air Force Office of Scientific Research under MURI Grants (22178970-4170 and FA9550-08-1-0352).

10. REFERENCES

[1] N. Anciaux, L. Bouganim, H. H. van, P. Pucheral, and P. M. Apers. Data degradation: Making private data less sensitive over time. In CIKM, 2008.
[2] F. Bancilhon and R. Ramakrishnan. An amateur’s introduction to recursive query processing strategies. In SIGMOD, 1986.