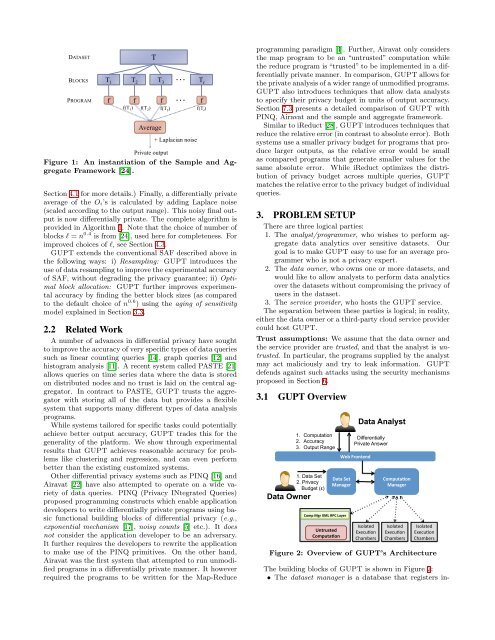

While differential privacy has strong theoretical properties,the shortcomings of existing differentially private dataanalysis systems have limited its adoption. For instance, existingprograms cannot be leveraged for private data analysiswithout modification. The magnitude of the perturbationintroduced in the final output is another cause ofconcern for data analysts. Differential privacy systems operateusing an abstract notion of privacy, called the ‘privacybudget’. Intuitively a lower privacy budget implies betterprivacy. However, this unit of privacy does not easily translateinto the utility of the program and is thus difficult fordata analysts who are not experts in privacy to interpret.Further, analysts would also be required to efficiently distributethis limited privacy budget between multiple queriesoperating on a dataset. An inefficient distribution of theprivacy budget would result in inaccurate data analysis andreduce the number of queries that can be safely performedon the dataset.We introduce <strong>GUPT</strong> 1 , a platform that allows organizationsto allow external aggregate analysis on their datasetswhile ensuring that data analysis is performed in a differentiallyprivate manner. It allows the execution of existingprograms with no modifications, eliminating the expensiveand demanding task of rewriting programs to be differentiallyprivate. <strong>GUPT</strong> enables data analysts to specify adesired output accuracy rather than work with an abstractprivacy budget. Finally, <strong>GUPT</strong> automatically parallelizesthe task across a cluster ensuring scalability for concurrentanalytics. We show through experiments on real datasetsthat <strong>GUPT</strong> overcomes many shortcomings of existing differentialprivacy systems without sacrificing accuracy.1.1 ContributionsWe design and develop <strong>GUPT</strong>, a platform for privacypreservingdata analytics. We introduce a new model fordata sensitivity which applies to a large class of datasetswhere the privacy requirement of data decreases over time.As we will explain in Section 3.3, using this model is appropriateand allows us to overcome significant challengesthat are fundamental to differential privacy. This approachenables us to analyze less sensitive data to get reasonableapproximations of privacy parameters that can be used fordata queries running on the newer data.<strong>GUPT</strong> makes the following technical contributions thatmake differential privacy usable in practice:1. Describing privacy budget in terms of accuracy:<strong>Data</strong> analysts are accustomed to the idea of workingwith inaccurate output (as is the case with data samplingin large datasets and many machine learning algorithmshave probabilistic output). <strong>GUPT</strong> uses theaging model of data sensitivity, to allow analysts todescribe the abstract ‘privacy budget’ in terms of expectedaccuracy of the final output.2. <strong>Privacy</strong> budget distribution: <strong>GUPT</strong> automaticallyallocates a privacy budget to each query in orderto match the data analysts’ accuracy requirements.Further, the analyst also does not have to distributethe privacy budget between the individual data operationsin the program.3. Accuracy of output: <strong>GUPT</strong> extends a theoreticaldifferential privacy framework called “sample and aggregate”(described in Section 2.1) for practical ap-1 <strong>GUPT</strong> is a Sanskrit word meaning ‘Secret’.plicability. This includes using a novel data resamplingtechnique that reduces the error introduced bythe framework’s data partitioning scheme. Further,the aging model of data sensitivity allows <strong>GUPT</strong> toselect an optimal partition size that reduces the perturbationadded for differential privacy.4. Prevent side channel attacks: <strong>GUPT</strong> defends againstside channel attacks such as the privacy budget attacks,state attacks and timing attacks described in [10].2. BACKGROUNDDifferential privacy places privacy research on a firm theoreticalfoundation. It guarantees that the presence or absenceof a particular record in a dataset will not significantlychange the output of any computation on a statisticaldataset. An adversary thus learns approximately the sameinformation about any individual record, irrespective of itspresence or absence in the original dataset.Definition 1 (ɛ-differential privacy [5]). Arandomized algorithm A is ɛ-differentially private if for alldatasets T, T ′ ∈ D n differing in at most one data record andfor any set of possible outputs O ⊆ Range(A), Pr[A(T ) ∈O] ≤ e ɛ Pr[A(T ′ ) ∈ O] . Here D is the domain from whichthe data records are drawn.The privacy parameter ɛ, also called the privacy budget [16],is fundamental to differential privacy. Intuitively, a lowervalue of ɛ implies stronger privacy guarantee and a highervalue implies a weaker privacy guarantee while possibly achievinghigher accuracy.2.1 Sample and AggregateAlgorithm 1 Sample and Aggregate Algorithm [24]Input: <strong>Data</strong>set T ∈ R n , length of the dataset n, privacyparameters ɛ, output range (min, max).1: Let l = n 0.42: Randomly partition T into l disjoint blocks T 1, · · · , T l .3: for i ∈ {1, · · · , l} do4: O i ← Output of user application on dataset T i.5: If O i > max, then O i ← max.6: If O i < min, then O i ← min.7: end for∑8: A ← 1 l | max − min |l i=1Oi + Lap( )l·ɛ<strong>GUPT</strong> leverages and extends the “sample and aggregate”framework [24, 19] (SAF) to design a practical and usablesystem which will guarantee differential privacy for arbitraryapplications. Given a statistical estimator P(T ) , where Tis the input dataset , SAF constructs a differentially privatestatistical estimator ˆP(T ) using P as a black box. Moreover,theoretical analysis guarantees that the output of ˆP(T ) convergesto that of P(T ) as the size of the dataset T increases.As the name “sample and aggregate” suggests, the algorithmfirst partitions the dataset into smaller subsets; i.e.,l = n 0.4 blocks (call them T 1, · · · , T l ) (see Figure 1). Theanalytics program P is applied on each of these datasets T iand the outputs O i are recorded. The O i’s are now clampedto within an output range that is either provided by the analystor inferred using a range estimator function. (Refer to

DATASETBLOCKSPROGRAMTT 1 T 2 T 3… T lfAverage…f f ff(T 1 ) f(T 2 ) f(T 3 ) f(T l )+ Laplacian noisePrivate outputFigure 1: An instantiation of the Sample and AggregateFramework [24].Section 4.1 for more details.) Finally, a differentially privateaverage of the O i’s is calculated by adding Laplace noise(scaled according to the output range). This noisy final outputis now differentially private. The complete algorithm isprovided in Algorithm 1. Note that the choice of number ofblocks l = n 0.4 is from [24], used here for completeness. Forimproved choices of l, see Section 4.3.<strong>GUPT</strong> extends the conventional SAF described above inthe following ways: i) Resampling: <strong>GUPT</strong> introduces theuse of data resampling to improve the experimental accuracyof SAF, without degrading the privacy guarantee; ii) Optimalblock allocation: <strong>GUPT</strong> further improves experimentalaccuracy by finding the better block sizes (as comparedto the default choice of n 0.6 ) using the aging of sensitivitymodel explained in Section 3.3.2.2 Related WorkA number of advances in differential privacy have soughtto improve the accuracy of very specific types of data queriessuch as linear counting queries [14], graph queries [12] andhistogram analysis [11]. A recent system called PASTE [21]allows queries on time series data where the data is storedon distributed nodes and no trust is laid on the central aggregator.In contract to PASTE, <strong>GUPT</strong> trusts the aggregatorwith storing all of the data but provides a flexiblesystem that supports many different types of data analysisprograms.While systems tailored for specific tasks could potentiallyachieve better output accuracy, <strong>GUPT</strong> trades this for thegenerality of the platform. We show through experimentalresults that <strong>GUPT</strong> achieves reasonable accuracy for problemslike clustering and regression, and can even performbetter than the existing customized systems.Other differential privacy systems such as PINQ [16] andAiravat [22] have also attempted to operate on a wide varietyof data queries. PINQ (<strong>Privacy</strong> INtegrated Queries)proposed programming constructs which enable applicationdevelopers to write differentially private programs using basicfunctional building blocks of differential privacy (e.g.,exponential mechanism [17], noisy counts [5] etc.). It doesnot consider the application developer to be an adversary.It further requires the developers to rewrite the applicationto make use of the PINQ primitives. On the other hand,Airavat was the first system that attempted to run unmodifiedprograms in a differentially private manner. It howeverrequired the programs to be written for the Map-Reduceprogramming paradigm [4]. Further, Airavat only considersthe map program to be an “untrusted” computation whilethe reduce program is “trusted” to be implemented in a differentiallyprivate manner. In comparison, <strong>GUPT</strong> allows forthe private analysis of a wider range of unmodified programs.<strong>GUPT</strong> also introduces techniques that allow data analyststo specify their privacy budget in units of output accuracy.Section 7.3 presents a detailed comparison of <strong>GUPT</strong> withPINQ, Airavat and the sample and aggregate framework.Similar to iReduct [28], <strong>GUPT</strong> introduces techniques thatreduce the relative error (in contrast to absolute error). Bothsystems use a smaller privacy budget for programs that producelarger outputs, as the relative error would be smallas compared programs that generate smaller values for thesame absolute error. While iReduct optimizes the distributionof privacy budget across multiple queries, <strong>GUPT</strong>matches the relative error to the privacy budget of individualqueries.3. PROBLEM SETUPThere are three logical parties:1. The analyst/programmer, who wishes to perform aggregatedata analytics over sensitive datasets. Ourgoal is to make <strong>GUPT</strong> easy to use for an average programmerwho is not a privacy expert.2. The data owner, who owns one or more datasets, andwould like to allow analysts to perform data analyticsover the datasets without compromising the privacy ofusers in the dataset.3. The service provider, who hosts the <strong>GUPT</strong> service.The separation between these parties is logical; in reality,either the data owner or a third-party cloud service providercould host <strong>GUPT</strong>.Trust assumptions: We assume that the data owner andthe service provider are trusted, and that the analyst is untrusted.In particular, the programs supplied by the analystmay act maliciously and try to leak information. <strong>GUPT</strong>defends against such attacks using the security mechanismsproposed in Section 6.3.1 <strong>GUPT</strong> Overview1. <strong>Data</strong> Set2. <strong>Privacy</strong>↵Budget (ε)<strong>Data</strong> Owner1. Computation2. Accuracy3. Output RangeWeb Frontend <strong>Data</strong> Set Manager Comp Mgr XML RPC Layer Untrusted Computa4on <strong>Data</strong> AnalystDifferentiallyPrivate AnswerIsolated Execu.on Chambers Computa4on Manager Isolated Execu.on Chambers Isolated Execu.on Chambers Figure 2: Overview of <strong>GUPT</strong>’s ArchitectureThe building blocks of <strong>GUPT</strong> is shown in Figure 2:• The dataset manager is a database that registers in-