additional slides - MIT


nikhil-96

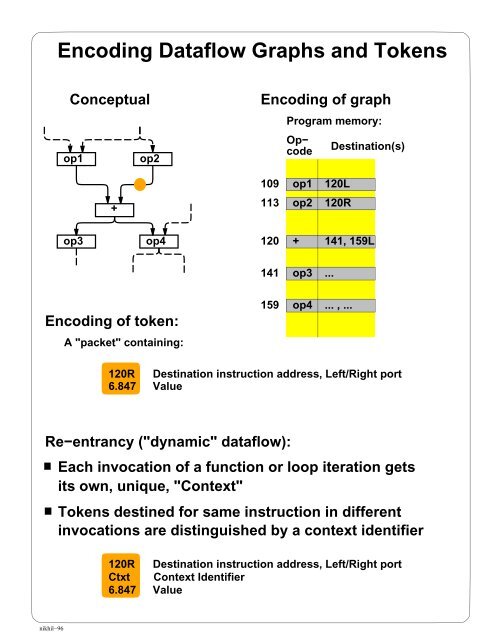

Encoding Dataflow Graphs and Tokens

Conceptual encoding of graph: op1 and op2 feed +, whose result feeds op3 and op4.

Program memory:

| Address | Opcode | Destination(s) |
| --- | --- | --- |
| 109 | op1 | 120L |
| 113 | op2 | 120R |
| 120 | + | 141, 159L |
| 141 | op3 | ... |
| 159 | op4 | ... |

Encoding of token: a "packet" containing:

| Example | Field |
| --- | --- |
| 120R | Destination instruction address, Left/Right port |
| 6.847 | Value |

Re-entrancy ("dynamic" dataflow):

Each invocation of a function or loop iteration gets its own, unique "Context".

Tokens destined for the same instruction in different invocations are distinguished by a context identifier:

| Example | Field |
| --- | --- |
| 120R | Destination instruction address, Left/Right port |
| Ctxt | Context identifier |
| 6.847 | Value |

...
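The token and program-memory encodings above can be sketched in Python. This is only an illustration: the names `Token`, `tag`, and `PROGRAM_MEMORY` are hypothetical, the context identifiers `"c1"` and `"c2"` are made up, and the port `"L"` for destination 141 is an assumption, since the slide lists that destination without a port.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    addr: int     # destination instruction address, e.g. 120
    port: str     # "L" or "R" input port of that instruction
    ctx: str      # context identifier of the invocation or iteration
    value: float  # the data carried by the token

    def tag(self):
        # Tokens match only if instruction address AND context agree.
        return (self.addr, self.ctx)


# Program memory: address -> (opcode, list of (destination address, port)).
# Entries for op3 (141) and op4 (159) are elided on the slide and omitted here.
PROGRAM_MEMORY = {
    109: ("op1", [(120, "L")]),
    113: ("op2", [(120, "R")]),
    120: ("+", [(141, "L"), (159, "L")]),  # port of 141 is assumed
}

# Same instruction and port, but two different invocations.
a = Token(120, "R", "c1", 6.847)
b = Token(120, "R", "c2", 6.847)
```

Because the tag includes the context, `a` and `b` never match each other even though they target the same instruction.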

MIT Tagged Token Dataflow Architecture

nikhil-97

Designed by Arvind et al., MIT, early 1980s

Simulated; never built

Global view:

- Processor Nodes (including local program and "stack" memory)
- Interconnection Network
- "I-Structure" Memory Nodes (global heap data memory)
- Resource Manager Nodes, responsible for function allocation (allocation of context identifiers), heap allocation, etc.

Stack memory and heap memory: globally addressed

MIT Tagged Token Dataflow Architecture: Processor

nikhil-98

Pipeline: From network → Token Queue → Wait-Match Unit (with associative waiting token memory) → Instruction Fetch (from program memory) → Execute Op → Form Tokens → Output → To network

Wait-Match Unit:

- Tokens for unary ops go straight through
- Tokens for binary ops: try to match the incoming token with a waiting token that has the same instruction address and context id
- Success: both tokens forwarded
- Fail: incoming token → Waiting Token Mem, bubble (no-op) forwarded
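A minimal sketch of the Wait-Match rules above, assuming a Python dictionary stands in for the associative waiting token memory. The class name `WaitMatchUnit`, the `BINARY_OPS` set, and the use of `None` to represent a bubble are all illustrative choices, not part of the original design.

```python
BINARY_OPS = {"+"}  # assumption: opcodes that need two operands


class WaitMatchUnit:
    def __init__(self, opcode_at):
        self.opcode_at = opcode_at  # instruction address -> opcode
        self.waiting = {}           # (address, context) -> (port, value)

    def accept(self, addr, port, ctx, value):
        """Return (addr, ctx, operands) when an instruction is enabled,
        or None (a bubble) when the token has to wait for its partner."""
        if self.opcode_at[addr] not in BINARY_OPS:
            # Unary op: the token goes straight through.
            return (addr, ctx, (value,))
        key = (addr, ctx)
        if key in self.waiting:
            # Success: forward both operands in left/right order.
            _, other = self.waiting.pop(key)
            operands = (value, other) if port == "L" else (other, value)
            return (addr, ctx, operands)
        # Fail: park the incoming token and forward a bubble.
        self.waiting[key] = (port, value)
        return None


wm = WaitMatchUnit({120: "+", 141: "op3"})
first = wm.accept(120, "L", "c", 6.001)     # waits
other = wm.accept(120, "R", "c2", 1.0)      # different context: also waits
matched = wm.accept(120, "R", "c", 6.847)   # matches the first token
unary = wm.accept(141, "L", "c", 1.5)       # unary: passes through
```

Keying the store on the (address, context) pair is what keeps tokens from different invocations apart.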

MIT Tagged Token Dataflow Architecture: Processor Operation

nikhil-99

Example contents at each point in the pipeline:

| Location | Contents |
| --- | --- |
| Token Queue → Wait-Match Unit | 120R,c, 6.847 |
| Waiting token memory (associative) | 120L,c, 6.001 |
| Wait-Match Unit → Instruction Fetch | 120,c, 6.001,6.847 |
| Program memory | 120 + 141, 159L |
| Instruction Fetch → Execute Op | 141,159L,c, +,6.001,6.847 |
| Execute Op → Form Tokens | 141,159L,c, 12.848 |
| Form Tokens → Output | 141,c, 12.848 and 159L,c, 12.848 |

Tokens from network are placed in Token Queue.

Output Unit routes tokens, based on the addresses on the token:

- Back to local Token Queue
- To another Processor
- To heap memory
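The Instruction Fetch, Execute Op, and Form Tokens stages for the `+` instruction at address 120 might be sketched as follows. The function name `fire`, the `OPS` table, and the tuple layout of tokens are hypothetical, and the `"L"` port for destination 141 is an assumption because the slide gives that destination without a port.

```python
import operator

# Program memory entry from the slide: 120 + 141, 159L
PROGRAM_MEMORY = {120: ("+", [(141, "L"), (159, "L")])}  # port of 141 assumed
OPS = {"+": operator.add}


def fire(addr, ctx, operands):
    """Run one enabled instruction and return its output tokens."""
    opcode, dests = PROGRAM_MEMORY[addr]   # Instruction Fetch
    result = OPS[opcode](*operands)        # Execute Op
    # Form Tokens: one token per destination, all in the same context.
    return [(d_addr, port, ctx, result) for d_addr, port in dests]


tokens = fire(120, "c", (6.001, 6.847))
```

Each output token carries the same context `c` as its inputs, so the results stay within the same invocation.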

MIT Tagged Token Dataflow Architecture: Support for "Remote Loads"

nikhil-100

Conceptual: address A enters Fetch, which returns the value v to op1.

Encoding of graph, program memory:

| Address | Opcode | Destination(s) |
| --- | --- | --- |
| 200 | Fetch | 207 |
| 207 | op1 | ... |

Heap memory:

| Address | Contents |
| --- | --- |
| A | v |
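A sketch of how the Fetch encoding above could behave as a split-phase load, under stated assumptions: the names `execute_fetch` and `HeapModule`, the tuple layouts, and the sample addresses and values are all hypothetical. The point is that the request carries its own return tag (destination instruction and context), so the processor does not block while the load is outstanding.

```python
class HeapModule:
    """Stand-in for the heap memory module that holds some addresses."""

    def __init__(self, cells):
        self.cells = dict(cells)

    def handle(self, request):
        kind, heap_addr, reply_addr, ctx = request
        if kind != "Fetch":
            raise ValueError("this sketch only handles Fetch")
        # The reply is an ordinary token addressed to the waiting instruction.
        return (reply_addr, ctx, self.cells[heap_addr])


def execute_fetch(dest_addr, ctx, heap_addr):
    # Executing Fetch produces a request message rather than a value.
    return ("Fetch", heap_addr, dest_addr, ctx)


heap = HeapModule({"A": 42.0, "B": 7.0})  # sample contents, assumed

# Instruction 200 is "Fetch" with destination 207; token 200,c,A arrives.
req_a = execute_fetch(207, "c", "A")
# A second, independent load (hypothetical destination 208) is issued
# before the first reply has been consumed.
req_b = execute_fetch(208, "c", "B")

replies = [heap.handle(req_b), heap.handle(req_a)]  # any order works
```

Since every reply is tagged with its destination and context, replies can return in any order and still reach the right instruction.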

MIT Tagged Token Dataflow Architecture: Support for "Remote Loads"

nikhil-101

Example contents at each point in the pipeline:

| Location | Contents |
| --- | --- |
| Token Queue → Wait-Match Unit | 200,c, A |
| Wait-Match Unit → Instruction Fetch | 200,c, A |
| Program memory | 200 Fetch 207 |
| Instruction Fetch → Execute Op | 207,c, Fetch,A |
| Execute Op → Form Tokens | Fetch,A, 207,c |
| Form Tokens → Output | Fetch, A, 207,c |
| Output → Network → Heap Memory Module containing address A | Fetch, A, 207,c |
| Heap memory location A | v |
| Heap Memory Module → Network → Token Queue | 207,c, v |

Multiple remote loads are no problem:

- Can be issued in parallel
- "Join" of responses is implicit in Wait-Match

MIT Tagged Token Dataflow Architecture: Support for "Synchronizing Loads"

nikhil-102

Heap memory locations have FULL/EMPTY bits.

When an "I-Fetch" request arrives (instead of "Fetch"), if the heap location is EMPTY, the heap memory module queues the request at that location.

Later, when "I-Store" arrives, pending requests are discharged.

"I-structure semantics"

Note: no busy waiting, no extra messages

Example, at the heap memory module containing address A:

| Event | State of location A | Responses sent |
| --- | --- | --- |
| Initially | A E Nil | |
| I-Fetch,A, 207,c and I-Fetch,A, 900,c1 arrive | A E, pending: 207,c and 900,c1 | |
| I-Store,A,v, 191,c2 arrives | A F v | 207,c, v; 900,c1, v; 191,c2, ack |
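The FULL/EMPTY behaviour above can be sketched as a small Python class. The name `IStructureModule`, the method names, and the representation of a location as a `("E", pending_list)` or `("F", value)` pair are illustrative assumptions. Rejecting a second store to a FULL location is also an assumption of this sketch; the slide does not say what happens in that case.

```python
class IStructureModule:
    def __init__(self):
        # addr -> ("E", [pending (reply_addr, ctx) tags]) or ("F", value)
        self.cells = {}

    def i_fetch(self, addr, reply_addr, ctx):
        """Return the list of reply tokens produced by this request."""
        state, payload = self.cells.setdefault(addr, ("E", []))
        if state == "F":
            return [(reply_addr, ctx, payload)]
        # EMPTY: queue the request at the location; no reply yet.
        payload.append((reply_addr, ctx))
        return []

    def i_store(self, addr, value, reply_addr, ctx):
        state, payload = self.cells.get(addr, ("E", []))
        if state == "F":
            raise ValueError("location already FULL")  # assumed policy
        self.cells[addr] = ("F", value)
        # Discharge every queued reader, then acknowledge the writer.
        replies = [(r, c, value) for r, c in payload]
        return replies + [(reply_addr, ctx, "ack")]


mem = IStructureModule()
early1 = mem.i_fetch("A", 207, "c")         # queued
early2 = mem.i_fetch("A", 900, "c1")        # queued
on_store = mem.i_store("A", 3.5, 191, "c2")
late = mem.i_fetch("A", 5, "c3")            # location is FULL now
```

Readers that arrive early are parked inside the memory module, so no processor polls and no retry messages are needed.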

nikhil-180

Unification of Dataflow & von Neumann Designs

(adapted from a slide by Greg Papadopoulos)

Diagram of designs arranged across four regions: pure Dataflow, Hybrid, multithreaded von Neumann, and Message Passing. Designs shown:

- Static (Dennis & Misunas '74)
- Argument Fetching (Gao et al. 88)
- "Static" (Dennis 91)
- Manchester Dataflow (Gurd & Watson 82)
- Sigma-1 (Shimada & Hiraki 87)
- Epsilon (Grafe et al. 88)
- Epsilon 2 (Grafe & Hoch 89)
- EM 5 (Kodama & Sakai 91)
- TTDA (Arvind et al. 80-83)
- Monsoon (Papadopoulos & Culler 88)
- EM 4 (Sakai 89)
- Monsoon Threads (Traub & Papadopoulos 91)
- Unified Hybrid (Buehrer & Ekanadham 87)
- VNDF Hybrid (Iannucci 88)
- P-RISC (Nikhil & Arvind 88)
- *T (Nikhil, Papadopoulos, Arvind & Greiner 91)
- TAM (Culler et al. 90)
- CDC 6600 (1964)
- MASA (Halstead & Fujita 88)
- EMPIRE (Iannucci et al. 89-91)
- HEP (B. Smith 78)
- iPSCs, CM-5
- Horizon, Tera (B. Smith et al. 88-93)
- Alewife (Agarwal 89)
- Cosmic Cube (Seitz 85)
- J-Machine (Dally 87)