FAST Forth Native-Language Embedded Computers

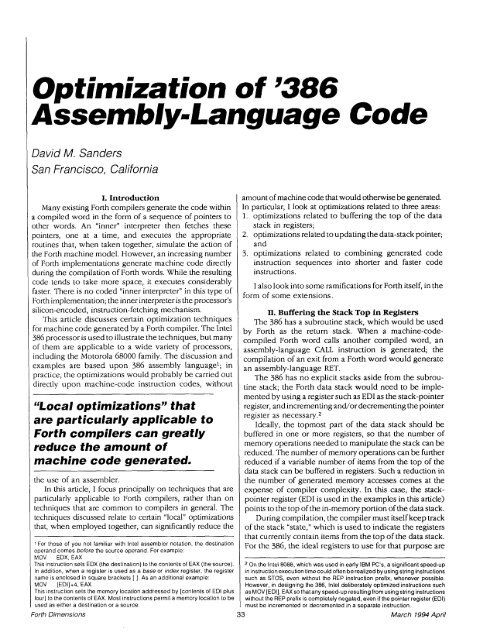

David M. Sanders<br />

San Francisco, California<br />

I. Introduction<br />

Many existing <strong>Forth</strong> compilers generate the code within<br />

a compiled word in the form of a sequence of pointers to<br />

other words. An "inner" interpreter then fetches these<br />

pointers, one at a time, and executes the appropriate<br />

routines that, when taken together, simulate the action of<br />

the <strong>Forth</strong> machine model. However, an increasing number<br />

of <strong>Forth</strong> implementations generate machine code directly<br />

during the compilation of <strong>Forth</strong> words. While the resulting<br />

code tends to take more space, it executes considerably<br />

faster. There is no coded "inner interpreter" in this type of<br />

<strong>Forth</strong> implementation; the inner interpreter is the processor's<br />

silicon-encoded, instruction-fetching mechanism.<br />
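As a rough illustration of the first approach, the following Python sketch models a compiled word as a sequence of pointers walked by an "inner" interpreter. It is not taken from any particular Forth system; the names `lit`, `inner_interpreter`, `DOUBLE`, `QUADRUPLE`, and `run` are assumptions made for this example only.

```python
# Hypothetical miniature of a threaded-code Forth; all names are illustrative.
stack = []

def lit(n):
    """Return a primitive that pushes the constant n onto the data stack."""
    def push():
        stack.append(n)
    return push

def add():
    b = stack.pop()
    a = stack.pop()
    stack.append(a + b)

def dup():
    stack.append(stack[-1])

def inner_interpreter(thread):
    """Fetch each pointer in turn and execute the routine it refers to."""
    for word in thread:
        if isinstance(word, list):   # a compiled word: a nested sequence of pointers
            inner_interpreter(word)
        else:                        # a primitive routine
            word()

# : DOUBLE  DUP + ;            compiled as pointers to other words
DOUBLE = [dup, add]
# : QUADRUPLE  DOUBLE DOUBLE ;
QUADRUPLE = [DOUBLE, DOUBLE]

def run(thread):
    """Execute a thread on an empty stack and return the resulting stack."""
    stack.clear()
    inner_interpreter(thread)
    return list(stack)
```

Every step of `QUADRUPLE` passes through the fetch-and-dispatch loop. A machine-code-generating compiler removes that loop, leaving the fetching to the processor itself.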

This article discusses certain optimization techniques<br />

for machine code generated by a <strong>Forth</strong> compiler. The Intel<br />

386 processor is used to illustrate the techniques, but many<br />

of them are applicable to a wide variety of processors,<br />

including the Motorola 68000 family. The discussion and<br />

examples are based upon 386 assembly language<sup>1</sup>; in<br />

practice, the optimizations would probably be carried out<br />

directly upon machine-code instruction codes, without<br />

the use of an assembler.<br />

In this article, I focus principally on techniques that are<br />

particularly applicable to <strong>Forth</strong> compilers, rather than on<br />

techniques that are common to compilers in general. The<br />

techniques discussed relate to certain "local" optimizations<br />

that, when employed together, can significantly reduce the<br />

amount of machine code that would otherwise be generated.<br />

In particular, I look at optimizations related to three areas:<br />

1. optimizations related to buffering the top of the data<br />

stack in registers;<br />

2. optimizations related to updating the data-stack pointer;<br />

and<br />

3. optimizations related to combining generated code<br />

instruction sequences into shorter and faster code<br />

instructions.<br />

I also look into some ramifications for <strong>Forth</strong> itself, in the<br />

form of some extensions.<br />

II. Buffering the Stack Top in Registers<br />

The 386 has a subroutine stack, which would be used<br />

by <strong>Forth</strong> as the return stack. When a machine-code-compiled<br />

<strong>Forth</strong> word calls another compiled word, an<br />

assembly-language CALL instruction is generated; the<br />

compilation of an exit from a <strong>Forth</strong> word would generate<br />

an assembly-language RET.<br />
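The CALL/RET scheme just described can be sketched as a tiny code generator. This is a minimal illustration only: the function name `compile_word` is hypothetical, and the sketch assumes that every word in the body is itself a compiled word whose Forth name can serve as an assembler label.

```python
def compile_word(name, body):
    """Return assembly text for a colon definition.

    `body` is a list of names of previously compiled words.
    Assumption: each callee is reached by a plain CALL, and the word
    ends with a single RET (no inlining or tail-call optimization).
    """
    lines = [f"{name}:"]
    for callee in body:
        lines.append(f"    CALL {callee}")   # one CALL per word referenced
    lines.append("    RET")                  # exit from the Forth word
    return lines

# : QUADRUPLE  DOUBLE DOUBLE ;
listing = compile_word("QUADRUPLE", ["DOUBLE", "DOUBLE"])
```

Here the processor's subroutine stack holds the return addresses, so it plays the role of the Forth return stack without any extra generated code.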

The 386 has no explicit stacks aside from the subroutine<br />

stack; the <strong>Forth</strong> data stack would need to be implemented<br />

by using a register such as EDI as the stack-pointer<br />

register, and incrementing and/or decrementing the pointer<br />

register as necessary.<sup>2</sup><br />

Ideally, the topmost part of the data stack should be<br />

buffered in one or more registers, so that the number of<br />

memory operations needed to manipulate the stack can be<br />

reduced. The number of memory operations can be further<br />

reduced if a variable number of items from the top of the<br />

data stack can be buffered in registers. Such a reduction in<br />

the number of generated memory accesses comes at the<br />

expense of compiler complexity. In this case, the stack-pointer<br />

register (EDI is used in the examples in this article)<br />

points to the top of the in-memory portion of the data stack.<br />
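To make the trade-off concrete, here is a minimal Python sketch of a code generator that buffers a variable number of stack items in registers and touches memory only when it must. It is not the scheme used in this article. The register pool (EAX, EBX, ECX), the downward-growing stack of four-byte cells, and all class and method names are assumptions chosen for illustration.

```python
class StackCacheCodegen:
    REGS = ["EAX", "EBX", "ECX"]   # assumed register pool, for illustration only

    def __init__(self):
        self.cached = []   # registers holding stack items; last entry is the top
        self.code = []     # generated assembly text

    def emit(self, text):
        self.code.append(text)

    def _spill_deepest(self):
        """Move the deepest buffered item to the in-memory stack."""
        reg = self.cached.pop(0)
        self.emit("SUB EDI, 4")          # assumption: stack grows downward
        self.emit(f"MOV [EDI], {reg}")
        return reg

    def _free_reg(self):
        for reg in self.REGS:
            if reg not in self.cached:
                return reg
        return self._spill_deepest()     # pool exhausted: spill to make room

    def _ensure_cached(self, count):
        """Load items from memory until `count` items are in registers."""
        while len(self.cached) < count:
            reg = next(r for r in self.REGS if r not in self.cached)
            self.emit(f"MOV {reg}, [EDI]")
            self.emit("ADD EDI, 4")
            self.cached.insert(0, reg)   # loaded item lies beneath buffered ones

    def literal(self, n):
        reg = self._free_reg()
        self.emit(f"MOV {reg}, {n}")
        self.cached.append(reg)

    def plus(self):
        self._ensure_cached(2)
        top = self.cached.pop()
        second = self.cached[-1]
        self.emit(f"ADD {second}, {top}")   # result stays buffered in `second`

    def flush(self):
        """Write all buffered items to memory, e.g. before a CALL."""
        while self.cached:
            self._spill_deepest()


def compile_sequence(tokens):
    gen = StackCacheCodegen()
    for tok in tokens:
        if tok == "+":
            gen.plus()
        else:
            gen.literal(int(tok))
    gen.flush()
    return gen.code
```

Under these assumptions, `compile_sequence(["1", "2", "+"])` produces three register-only instructions followed by a single two-instruction flush to memory. The `cached` list is the extra bookkeeping the compiler takes on in exchange.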

During compilation, the compiler must itself keep track<br />

of the stack "state," which is used to indicate the registers<br />

that currently contain items from the top of the data stack.<br />

For the 386, the ideal registers to use for that purpose are<br />

1 For those of you not familiar with Intel assembler notation, the destination<br />

operand comes before the source operand. For example:<br />

MOV EDX, EAX<br />

This instruction sets EDX (the destination) to the contents of EAX (the source).<br />

In addition, when a register is used as a base or index register, the register<br />

name is enclosed in square brackets [ ]. As an additional example:<br />

MOV [EDI]+4, EAX<br />

This instruction sets the memory location addressed by [contents of EDI plus<br />

four] to the contents of EAX. Most instructions permit a memory location to be<br />

used as either a destination or a source.<br />

2 On the Intel 8088, which was used in early IBM PC's, a significant speed-up<br />

in instruction execution time could often be realized by using string instructions<br />

such as STOS, even without the REP instruction prefix, whenever possible.<br />

However, in designing the 386, Intel deliberately optimized instructions such<br />

as MOV [EDI], EAX so that any speed-up resulting from using string instructions<br />

without the REP prefix is completely negated, even if the pointer register (EDI)<br />

must be incremented or decremented in a separate instruction.<br />

<strong>Forth</strong> Dimensions 33 March/April 1994