Solution - Ugrad.cs.ubc.ca

Solution - Ugrad.cs.ubc.ca

Solution - Ugrad.cs.ubc.ca

Create successful ePaper yourself

Turn your PDF publications into a flip-book with our unique Google optimized e-Paper software.

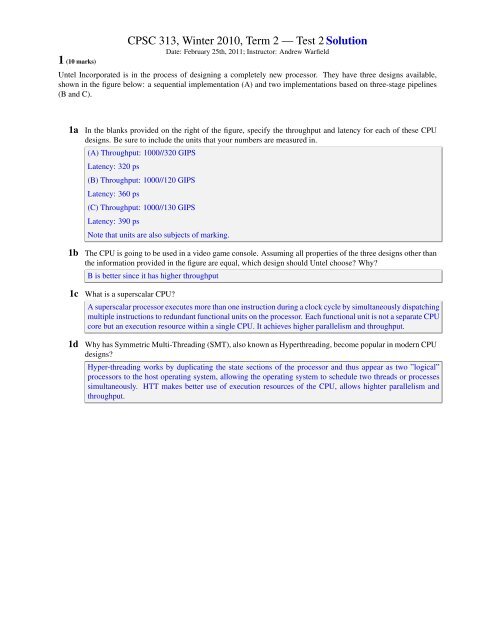

1 (10 marks)<br />

CPSC 313, Winter 2010, Term 2 — Test 2 <strong>Solution</strong><br />

Date: February 25th, 2011; Instructor: Andrew Warfield<br />

Untel Incorporated is in the process of designing a completely new processor. They have three designs available,<br />

shown in the figure below: a sequential implementation (A) and two implementations based on three-stage pipelines<br />

(B and C).<br />

1a In the blanks provided on the right of the figure, specify the throughput and latency for each of these CPU<br />

designs. Be sure to include the units that your numbers are measured in.<br />

(A) Throughput: 1000//320 GIPS<br />

Latency: 320 ps<br />

(B) Throughput: 1000//120 GIPS<br />

Latency: 360 ps<br />

(C) Throughput: 1000//130 GIPS<br />

Latency: 390 ps<br />

Note that units are also subjects of marking.<br />

1b The CPU is going to be used in a video game console. Assuming all properties of the three designs other than<br />

the information provided in the figure are equal, which design should Untel choose Why<br />

B is better since it has higher throughput<br />

1c What is a supers<strong>ca</strong>lar CPU<br />

A supers<strong>ca</strong>lar processor executes more than one instruction during a clock cycle by simultaneously dispatching<br />

multiple instructions to redundant functional units on the processor. Each functional unit is not a separate CPU<br />

core but an execution resource within a single CPU. It achieves higher parallelism and throughput.<br />

1d Why has Symmetric Multi-Threading (SMT), also known as Hyperthreading, become popular in modern CPU<br />

designs<br />

Hyper-threading works by dupli<strong>ca</strong>ting the state sections of the processor and thus appear as two ”logi<strong>ca</strong>l”<br />

processors to the host operating system, allowing the operating system to schedule two threads or processes<br />

simultaneously. HTT makes better use of execution resources of the CPU, allows highter parallelism and<br />

throughput.

2 (10 marks) The Y86-Pipe-Minus CPU implementation is a pipelined version of the Y86 that does not detect or<br />

eliminate hazards automati<strong>ca</strong>lly.<br />

Consider the following Y86 assembly code:<br />

0x000: irmovl $10, %edx<br />

0x006: irmovl $13, %eax<br />

0x00c: subl %edx, %eax<br />

0x00e: halt<br />

2a What unexpected thing will happen if this code is run and why it will happen<br />

Data harzard @ line 3.<br />

values in register %eax and %edx haven’t been written back when ”subl” instuction is executed.<br />

2b How <strong>ca</strong>n the developer fix it Write a modified version of the code that will behave as expected<br />

irmovl $10, %edx<br />

irmovl $13, %eax<br />

nop<br />

nop<br />

nop<br />

subl %edx, %eax<br />

halt<br />

2c Describe the two ways that the CPU designer could change the architecture to fix the problem that we discussed<br />

in class, and explain why one is better than the other.<br />

Stalling and Data forwarding (You need to simply explain what they are). Data forwarding is a better way<br />

when taking throughput, consistency into consideration.<br />

2d In the full, final, pipelined Y86 implementation (Y86-PIPE), there is a single type of data dependency that is<br />

not completely eliminated using data forwarding. What is it, and why <strong>ca</strong>n’t it be fixed<br />

use/load dependency <strong>ca</strong>nnot be fixed by using data forwarding since data is available only after memory stage<br />

and it is needed at decode stage of the load instruction. One bubble is needed.<br />

2

3 (4 marks) The following program has two instructions that stall the Y86-Pipe pipeline. Identify these instructions,<br />

list the number of cycles they stall, and explain the reason for the stall.<br />

[A] irmovl $1, %eax # r[eax]

y86 Cheat Sheet!<br />

Byte 0 1 2 3 4 5<br />

halt 0 0<br />

nop 1 0<br />

rrmovl rA, rB<br />

2 0 rA rB<br />

irmovl V, rB 3 0 F rB V<br />

rmmovl rA, D(rB) 4 0 rA rB<br />

mrmovl D(rB), rA 5 0 rA rB<br />

D<br />

D<br />

OPl rA, rB<br />

6 fn rA rB<br />

jXX Dest 7 fn Dest<br />

cmovXX rA, rB<br />

2 fn rA rB<br />

<strong>ca</strong>ll Dest 8 0 Dest<br />

ret 9 0<br />

pushl rA<br />

popl rA<br />

A 0 rA F<br />

B 0 rA F<br />

Operations<br />

Branches<br />

Moves<br />

addl 6 0<br />

jmp 7 0<br />

jne 7 4<br />

rrmovl 2 0<br />

cmovne 2 4<br />

subl 6 1<br />

jle 7 1<br />

jge 7 5<br />

cmovle 2 1<br />

cmovge 2 5<br />

andl 6 2<br />

jl 7 2<br />

jg 7 6<br />

cmovl 2 2<br />

cmovg 2 6<br />

xorl 6 3<br />

je 7 3<br />

cmove 2 3<br />

4