Richard S. Sutton - Webdocs Cs Ualberta - University of Alberta

Richard S. Sutton - Webdocs Cs Ualberta - University of Alberta

Richard S. Sutton - Webdocs Cs Ualberta - University of Alberta

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

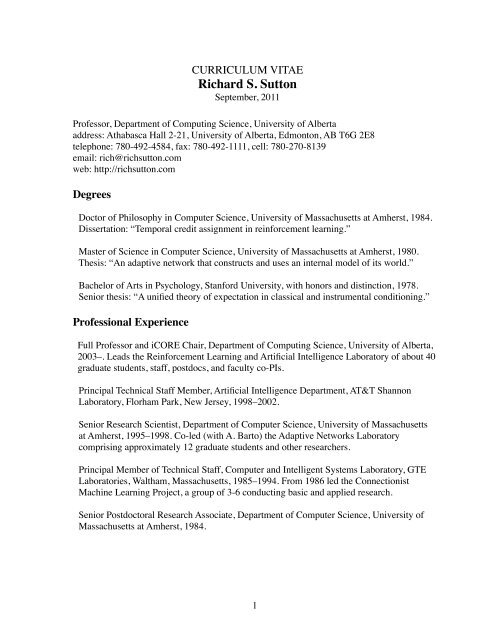

CURRICULUM VITAE<br />

<strong>Richard</strong> S. <strong>Sutton</strong><br />

September, 2011<br />

Pr<strong>of</strong>essor, Department <strong>of</strong> Computing Science, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong><br />

address: Athabasca Hall 2-21, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong>, Edmonton, AB T6G 2E8<br />

telephone: 780-492-4584, fax: 780-492-1111, cell: 780-270-8139<br />

email: rich@richsutton.com<br />

web: http://richsutton.com<br />

Degrees<br />

Doctor <strong>of</strong> Philosophy in Computer Science, <strong>University</strong> <strong>of</strong> Massachusetts at Amherst, 1984.<br />

Dissertation: “Temporal credit assignment in reinforcement learning.”<br />

Master <strong>of</strong> Science in Computer Science, <strong>University</strong> <strong>of</strong> Massachusetts at Amherst, 1980.<br />

Thesis: “An adaptive network that constructs and uses an internal model <strong>of</strong> its world.”<br />

Bachelor <strong>of</strong> Arts in Psychology, Stanford <strong>University</strong>, with honors and distinction, 1978.<br />

Senior thesis: “A unified theory <strong>of</strong> expectation in classical and instrumental conditioning.”<br />

Pr<strong>of</strong>essional Experience<br />

Full Pr<strong>of</strong>essor and iCORE Chair, Department <strong>of</strong> Computing Science, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong>,<br />

2003–. Leads the Reinforcement Learning and Artificial Intelligence Laboratory <strong>of</strong> about 40<br />

graduate students, staff, postdocs, and faculty co-PIs.<br />

Principal Technical Staff Member, Artificial Intelligence Department, AT&T Shannon<br />

Laboratory, Florham Park, New Jersey, 1998–2002.<br />

Senior Research Scientist, Department <strong>of</strong> Computer Science, <strong>University</strong> <strong>of</strong> Massachusetts<br />

at Amherst, 1995–1998. Co-led (with A. Barto) the Adaptive Networks Laboratory<br />

comprising approximately 12 graduate students and other researchers.<br />

Principal Member <strong>of</strong> Technical Staff, Computer and Intelligent Systems Laboratory, GTE<br />

Laboratories, Waltham, Massachusetts, 1985–1994. From 1986 led the Connectionist<br />

Machine Learning Project, a group <strong>of</strong> 3-6 conducting basic and applied research.<br />

Senior Postdoctoral Research Associate, Department <strong>of</strong> Computer Science, <strong>University</strong> <strong>of</strong><br />

Massachusetts at Amherst, 1984.<br />

1

Research<br />

Pioneered and made repeated contributions to reinforcement learning, an approach to<br />

artificial and natural intelligence that emphasizes learning and planning from sample<br />

experience. Currently seeking to extend reinforcement learning ideas to an empirically<br />

grounded approach to knowledge representation.<br />

Most significant contributions:<br />

• The theory <strong>of</strong> temporal-difference learning and the TD(λ) algorithm (1988).<br />

• The standard textbook for reinforcement learning (with Barto, 1998)<br />

• The actor-critic (policy gradient) class <strong>of</strong> algorithms (1984, 2000).<br />

• The Dyna architecture integrating learning, planning and reacting (1990)<br />

• Temporal-difference models <strong>of</strong> animal learning (with Barto, 1981, 1990)<br />

• The “options” framework for temporal abstraction (with Precup, Singh, 1999)<br />

• Predictive state representations (with Littman, Singh, 2002)<br />

• Algorithms for online step-size adaptation (1981, 1992)<br />

• Temporal-difference networks for grounded knowledge representation (2005, 2006)<br />

• Gradient temporal-difference algorithms (with Maei, Szepesvari, 2008–)<br />

Selected Grants<br />

NSERC Collaborative Research and Development Grant, with Nortel Networks and Bell<br />

Canada, “Learning and Prediction in High-dimensional Stochastic Domains,” September<br />

2006 – August 2009, funded at CAD$186,523. One <strong>of</strong> five principal investigators.<br />

iCORE Chair and Pr<strong>of</strong>essorship Establishment Grant, “Reinforcement Learning and<br />

Artificial Intelligence,” September 1, 2003 – August 31, 2008, funded at CAD$3,000,000.<br />

Principal investigator. Renewed until August 2013 at an additional CAD$2,750,000.<br />

<strong>Alberta</strong> Ingenuity Centre Grant, “<strong>Alberta</strong> Ingenuity Centre for Machine Learning,” April<br />

2003 – March 2008, funded at CAD$9,887,600. One <strong>of</strong> eight principal investigators.<br />

Renewed until March 2009 at CAD$2,000,000. Renewed again in 2009 for another five<br />

years at CAD$10,000,000.<br />

NSERC Discovery Grant, “Reinforcement Learning and Artificial Intelligence,” April, 2004<br />

– March 2009, funded at CAD$250,000. Principal investigator. Renewed in 2009 for a<br />

second five years at an additional CAD$190,000.<br />

Air Force Office <strong>of</strong> Scientific Research to the <strong>University</strong> <strong>of</strong> Massachusetts, “Stochastic<br />

Scheduling and Planning Using Reinforcement Learning,” AFOSR Grant Number<br />

F49620-96-1-0254, June 1, 1996 – May 31, 2000, funded at USD$446,570. Coprincipal<br />

investigator with A. Barto.<br />

2

National Science Foundation to the <strong>University</strong> <strong>of</strong> Massachusetts, “Multiple Time Scale<br />

Reinforcement Learning,” Communications & Computational Systems/Neuroengineering<br />

Grant ECS-9511805, September 15, 1995 – August 31, 1998, funded at USD$157,261.<br />

Primary senior personnel (A. Barto, PI).<br />

Teaching Experience<br />

Courses<br />

Intelligent Systems, CMPUT 366, an introduction to artificial intelligence for<br />

undergraduates at the <strong>University</strong> <strong>of</strong> <strong>Alberta</strong>, 2008–2010<br />

Reinforcement Learning for Artificial Intelligence, CMPUT 499/609, cross-listed<br />

undergraduate/graduate course at the <strong>University</strong> <strong>of</strong> <strong>Alberta</strong>, 2003–2007, 2009-10<br />

Non-procedural Programming Languages, CMPUT 325, undergraduate course at the<br />

<strong>University</strong> <strong>of</strong> <strong>Alberta</strong>, Fall 2006<br />

Reinforcement Learning in Practice, CMPUT 607, graduate course at the <strong>University</strong> <strong>of</strong><br />

<strong>Alberta</strong>, Spring 2005<br />

Reinforcement Learning, CMPSCI 791O, graduate course at the <strong>University</strong> <strong>of</strong><br />

Massachusetts, with A. Barto, Fall 1996 and 1997<br />

Reinforcement Learning, special intensive course at the <strong>University</strong> <strong>of</strong> Uppsala, Sweden,<br />

April–May 1996<br />

Reinforcement Learning, CMPSCI 791N, graduate seminar at the <strong>University</strong> <strong>of</strong><br />

Massachusetts, with A. Barto, Fall 1995<br />

Cybernetics <strong>of</strong> Adaptation and Learning, COINS 791M, graduate seminar, teaching<br />

assistant including some lecturing, Fall 1981<br />

Summer Schools<br />

Instructor at Machine Learning Summer School, Ile de Rey, France, 2008<br />

Instructor at Cambridge <strong>University</strong> Neural Networks Summer School (three lectures each<br />

summer), 1993–1997<br />

Instructor at Cold Spring Harbor Summer School on Computational Neuroscience:<br />

Learning and Memory, July 1990<br />

3

Tutorials on Reinforcement Learning at Conferences<br />

Conference <strong>of</strong> the Association for the Advancement <strong>of</strong> Artificial Intelligence, 2010<br />

International Conference on Machine Learning, 1999<br />

Genetic Programming Conference, 1998<br />

Second Asia-Pacific Conference on Simulated Evolution and Learning, Australia, 1998<br />

National Conference on Artificial Intelligence, with L. Kaelbling, 1997<br />

National Conference on Computer Science – Mexico, 1997<br />

Conference on Neural Information Processing Systems, 1996<br />

Scholarly Service<br />

Meetings Organized<br />

Co-chair, Multidisciplinary Symposium on Reinforcement Learning, 2009<br />

Program co-chair, National Conference on Artificial Intelligence, 2002<br />

Chair, Workshop on Reinforcement Learning, Int’l. Conference on Machine Learning, 1993<br />

Co-chair, Bellairs Workshop on Reinforcement Learning, 2005-6, 2008-11<br />

Co-chair, Workshop on Reinforcement Learning–Benchmarks and Bake<strong>of</strong>fs, International<br />

Conference on Neural Information Processing Systems, 2004<br />

Co-chair, Workshop on Predictive Representation <strong>of</strong> World Knowledge, International<br />

Conference on Machine Learning, 2004<br />

Co-chair, NSF Workshop on Neural Networks for Robotics and Control, 1988<br />

Editorial<br />

Foundations and Trends in Machine Learning, founding editorial board, 2007–<br />

Journal <strong>of</strong> Machine Learning Research: advisory board 1999–<br />

J. <strong>of</strong> Artificial Intelligence Research: action editor 1993-1996, advisory board 1996–2000<br />

Machine Learning: action editor 1989–94, editorial board 1994–2001<br />

Connection Science: action editor 1989–90, editorial board 1990–1995<br />

Adaptive Behavior: editorial board 1991–2000<br />

Guest Editor, special double issue <strong>of</strong> Machine Learning on Reinforcement Learning, 1992<br />

Conference Program Committees<br />

International Conference on Machine Learning, 1988–01,03,04,10, area chair 2005–6,8<br />

Conf. on Neural Information Processing Systems, 1989–2000, 2003–2010, area chair 1997<br />

Conference on Uncertainty in Artificial Intelligence, 2005, 2009<br />

International Joint Conference on Autonomous Agents and Multi-Agent Systems, 2005<br />

AAAI, 1993–1996, 2007, 2008, senior program committee 1998–2000<br />

International Joint Conference on Artificial Intelligence, 1995, 1997, 2001, 2005<br />

International Conference on the Simulation <strong>of</strong> Adaptive Behavior, 1990, 1992<br />

Cognitive Science Conference, 1986, 1987<br />

Conference on Computational Learning Theory, 1996<br />

Canadian Conference on Artificial Intelligence, 2006<br />

4

Honors<br />

iCORE chair, 2003; renewed 2008<br />

Fellow <strong>of</strong> the American Association for Artificial Intelligence, 2001<br />

Elected member <strong>of</strong> the executive council <strong>of</strong> the American Association for Artificial<br />

Intelligence, 2007–2010<br />

President's Award, International Neural Network Society (INNS), 2003<br />

Warner award, GTE’s highest award for technical achievement contributing to business<br />

success, 1994, for leading a team that developed a method for adaptive channel assignment<br />

that improved call-carrying capacity in a detailed simulation <strong>of</strong> the GTE Airfone network<br />

by over 40%.<br />

Outstanding Leadership in <strong>Alberta</strong> Technology Award from the <strong>Alberta</strong> Science and<br />

Technology Leadership Foundation, awarded in 2006 to the <strong>Alberta</strong> Ingenuity Center for<br />

Machine Learning. (One <strong>of</strong> eight principal investigators.)<br />

Students<br />

Currently Under Supervision at the <strong>University</strong> <strong>of</strong> <strong>Alberta</strong><br />

Adam White, PhD<br />

Brian Tanner, PhD<br />

Marc Gendron-Bellemare, PhD<br />

Hamid Reza Maei, PhD<br />

Anna Koop, PhD<br />

Hengshuai Yao, MSc<br />

Leah Hackman, MSc<br />

Ashique Mahmood, PhD<br />

Neda Mirian HosseinAbadi, MSc<br />

Doctoral Graduates Supervised and Graduated<br />

Doina Precup, “Temporal abstraction in reinforcement learning,” <strong>University</strong> <strong>of</strong><br />

Massachusetts, 2000. Currently an associate pr<strong>of</strong>essor <strong>of</strong> Computer Science at McGill<br />

<strong>University</strong>.<br />

David Silver, “Reinforcement Learning and Simulation-Based Search in Computer Go,”<br />

<strong>University</strong> <strong>of</strong> <strong>Alberta</strong>, 2009. Currently a postdoctoral fellow at MIT.<br />

5

Masters Graduates Supervised and Graduated<br />

Michael Delp, MSc<br />

Ashique Mahmood, MSc<br />

Masoud Shahamiri, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong>, 2008<br />

Anna Koop, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong> 2007<br />

Eddie Rafols, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong> 2006<br />

Adam White, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong> 2006<br />

Alborz Geramifard, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong> 2006<br />

Cosmin Paduraru, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong> 2006<br />

Brian Tanner, <strong>University</strong> <strong>of</strong> <strong>Alberta</strong> 2005<br />

Amy McGovern, <strong>University</strong> <strong>of</strong> Massachusetts 1998<br />

Doina Precup, <strong>University</strong> <strong>of</strong> Massachusetts 1996<br />

Doctoral Examination Committees<br />

Hado van Hasselt, “Insights in Reinforcement Learning,” SIKS, Dutch Research School for<br />

Information and Knowledge Systems, 2011.<br />

Tao Wang, “New Representations and Approximations for Sequential Decision<br />

Making under Uncertainty,” <strong>University</strong> <strong>of</strong> <strong>Alberta</strong>, 2007<br />

Mohammad Al-Ansari, Northeastern <strong>University</strong>, 2001<br />

Manfred Huber, “A hybrid architecture for adaptive robot control,” <strong>University</strong> <strong>of</strong><br />

Massachusetts, 2000<br />

Gavin Rummery, “Problem solving with reinforcement learning,” Cambridge <strong>University</strong>,<br />

1995<br />

Chen Tham, “Online function approximation for scaling up reinforcement learning,”<br />

Cambridge <strong>University</strong>, 1994<br />

Jing Peng, “Efficient dynamic programming based learning for control,” Northeastern<br />

<strong>University</strong>, 1994<br />

Satinder Singh, “Learning to solve Markovian decision processes,” <strong>University</strong> <strong>of</strong><br />

Massachusetts, 1993<br />

Long-Ji Lin, “Reinforcement learning for robots using neural networks,” Carnegie-Mellon<br />

<strong>University</strong>, 1992<br />

Steve Whitehead, “Reinforcement learning for the adaptive control <strong>of</strong> perception and<br />

action,” <strong>University</strong> <strong>of</strong> Rochester, 1992<br />

Leslie Kaelbling, “Learning in embedded systems,” Stanford <strong>University</strong>, 1990<br />

6

Selected Invited Presentations<br />

“Learning about Sensorimotor data,” Posner (invited plenary) talk at the Twenty-Fifth<br />

Annual Conference on Neural Information Processing Systems, Granada, Spain, 2011, and<br />

keynote at the 21st Annual Conference <strong>of</strong> the Japanese Neural Network Society, Okinawa,<br />

Japan.<br />

“Beyond Reward: The Problem <strong>of</strong> Knowledge and Data,” Keynote at the 21st International<br />

Conference on Inductive Logic Programming, Windsor Great Park, United Kingdom, 2011<br />

“Toward Learning Human-level Predictive Knowledge,” Keynote at the Third Conference<br />

on Artificial General Intelligence, Lugano, Switzerland, 2010<br />

“Mind and Time: A View <strong>of</strong> Constructivist Reinforcement Learning,” invited plenary talk at<br />

the European Workshop on Reinforcement Learning, Lille, France, 2008<br />

“Stimulus Representation in Temporal-difference Models <strong>of</strong> the Dopamine System,”<br />

Computation and Neural Systems seminar series on the California Institute <strong>of</strong> Technology,<br />

2007<br />

“Reinforcement Learning’s Computational Theory <strong>of</strong> Mind,” plenary invited talk at a special<br />

Workshop on Dopamine and its Role in Learning, Motivation, and Psychiatric Disorders,<br />

McGill <strong>University</strong>, 2005<br />

“Experience-Oriented Artificial Intelligence,” Distinguished Lecture Series <strong>of</strong> the<br />

Mathematics and Computer Science Department <strong>of</strong> the <strong>University</strong> <strong>of</strong> Lethbridge, 2005<br />

“Experience-Oriented Artificial Intelligence,” Distinguished Lecture Series <strong>of</strong> the Cognitive<br />

Science Department <strong>of</strong> the <strong>University</strong> <strong>of</strong> California, San Diego, 2005<br />

“Grounding Knowledge in Subjective Experience” plenary provocateur talk, Second<br />

Cognitive Systems Conference, 2005<br />

“Reinforcement Learning: How Far Can It Go” joint plenary at the (co-located)<br />

International Conference on Machine Learning, Conference on Computational Learning<br />

Theory, and Conference on Uncertainty in Artificial Intelligence, 1998<br />

“Reinforcement Learning: Past, Present, and Future,” plenary at the Second Asia-Pacific<br />

Conference on Simulated Evolution and Learning, 1998, Australia<br />

“Between MDPs and Semi-MDPs: Learning, Planning and Representing Knowledge at<br />

Multiple Temporal Scales,” 1998, <strong>University</strong> Simon Bolivar, Caracas, Venezuela<br />

7

“Computational Reinforcement Learning,” plenary at the Annual Meeting <strong>of</strong> the Society for<br />

the Quantitative Analysis <strong>of</strong> Behavior, 1998<br />

“On the Significance <strong>of</strong> Markov Decision Processes,” plenary at the European Conference<br />

on Computational Learning Theory, 1997, Germany<br />

“Reinforcement Learning: Lessons for AI,” International Joint Conference on Artificial<br />

Intelligence, 1997, Japan<br />

“Markov Decision Processes: Lessons from Reinforcement Learning,” plenary at the<br />

International Conference on Artificial Neural Networks, 1997, Switzerland<br />

“Learning from Interaction,” keynote address, National Conference on Computer Science –<br />

Mexico, 1997, Mexico<br />

“The Value <strong>of</strong> Reinforcement Learning in Neurobiology,” Gottingen Neurobiology<br />

Conference, 1995, Germany<br />

“Temporal-Difference Learning,” National Conference on Artificial Intelligence, 1993<br />

“Temporal-Difference Learning,” plenary at the IEEE International Conference on Neural<br />

Networks, 1993<br />

“Reinforcement Learning Architectures,” International Symposium on Neural Information<br />

Processing, 1992, Kyushu, Japan<br />

Publications<br />

Books<br />

1. <strong>Sutton</strong>, R. S., Barto, A. G., Reinforcement Learning: An Introduction. MIT Press, 1998.<br />

Also translated into Japanese.<br />

2. Miller, W. T., <strong>Sutton</strong>, R. S., Werbos, P. J. (Eds.), Neural Networks for Control. MIT<br />

Press, 1991.<br />

3. <strong>Sutton</strong>, R. S. (Ed.), Reinforcement Learning. Reprinting <strong>of</strong> a special issue <strong>of</strong> Machine<br />

Learning Journal. Kluwer Academic Press, 1992<br />

Journal Articles<br />

4. Kehoe, E. J., Ludvig, E. A., <strong>Sutton</strong>, R. S., “Timing in trace conditioning <strong>of</strong> the<br />

nictitating membrane response <strong>of</strong> the rabbit (Oryctolagus cuniculus): Scalar, nonscalar,<br />

and adaptive features,” Learning and Memory 17:600-604, 2010.<br />

8

5. Kehoe, E. J., Ludvig, E. A., <strong>Sutton</strong>, R. S., “Magnitude and timing <strong>of</strong> conditioned<br />

responses in delay and trace classical conditioning <strong>of</strong> the nictitating membrane response<br />

<strong>of</strong> the rabbit (Oryctolagus cuniculus),” Behavioral Neuroscience 123(5):1095–1101,<br />

2009.<br />

6. Kehoe, E. J., Olsen, K. N., Ludvig, E. A., <strong>Sutton</strong>, R. S., “Scalar timing varies with<br />

response magnitude in classical conditioning <strong>of</strong> the rabbit nictitating membrane<br />

response (Oryctolagus cuniculus),” Behavioral Neuroscience 123, 212–217, 2009.<br />

7. Bhatnagar, S., <strong>Sutton</strong>, R. S., Ghavamzadeh, M., Lee, M., “Natural actor–critic<br />

algorithms,” Automatica 45(11):2471–2482, 2009.<br />

8. Ludvig, E. A., <strong>Sutton</strong>, R. S., Kehoe, E. J., “Stimulus representation and the timing <strong>of</strong><br />

reward-prediction errors in models <strong>of</strong> the dopamine system,” Neural Computation<br />

20:3034–3054, 2008.<br />

9. Kehoe, E. J., Ludvig, E. A., Dudeney, J. E., Neufeld, J., <strong>Sutton</strong>, R. S., “Magnitude and<br />

timing <strong>of</strong> nictitating membrane movements during classical conditioning <strong>of</strong> the rabbit<br />

(Oryctolagus cuniculus),” Behavioral Neuroscience 122(2):471–476, 2008.<br />

10. Stone, P., <strong>Sutton</strong>, R. S., Kuhlmann, G., “Reinforcement Learning for RoboCup-Soccer<br />

Keepaway.” Adaptive Behavior 13(3):165–188, 2005.<br />

11. <strong>Sutton</strong>, R. S., Precup, D., Singh, S., “Between MDPs and semi-MDPs: a framework for<br />

temporal abstraction in reinforcement learning,” Artificial Intelligence, 112, 1999. pp.<br />

181–211.<br />

12. Santamaria, J. C., <strong>Sutton</strong>, R. S., Ram, A., “Experiments with reinforcement learning in<br />

problems with continuous state and action spaces,” Adaptive Behavior, 6, No. 2, 1998,<br />

pp. 163–218.<br />

13. Singh, S., <strong>Sutton</strong>, R. S., “Reinforcement learning with replacing eligibility traces,”<br />

Machine Learning, 22, 1996, pp. 123–158.<br />

14. <strong>Sutton</strong>, R. S., “Learning to predict by the methods <strong>of</strong> temporal differences,” Machine<br />

Learning, 3, 1988, No. 1, pp. 9–44.<br />

15. Moore, J., Desmond, J, Berthier, N., Blazis, D., <strong>Sutton</strong>, R. S., Barto, A. G., “Simulation<br />

<strong>of</strong> the classically conditioned nictitating membrane response by a neuron-like adaptive<br />

element: response topography, neuronal firing, and interstimulus intervals,”<br />

Behavioural Brain Research, 21, 1986, pp. 143–154.<br />

16. Barto, A. G., <strong>Sutton</strong>, R. S., Anderson, C., “Neuron-like adaptive elements that can solve<br />

difficult learning control problems,” IEEE Transactions on Systems, Man, and<br />

Cybernetics, SMC-13, No. 5, 1983, pp. 834–846.<br />

17. Barto, A. G., <strong>Sutton</strong>, R. S., “Simulation <strong>of</strong> anticipatory responses in classical<br />

conditioning by a neuron-like adaptive element,” Behavioral Brain Research, 4, 1982,<br />

pp. 221–235.<br />

9

18. Barto, A. G., Anderson, C., <strong>Sutton</strong>, R. S., “Synthesis <strong>of</strong> nonlinear control surfaces by a<br />

layered associative network,” Biological Cybernetics, 43, 1982, pp. 175–185.<br />

19. <strong>Sutton</strong>, R. S., Barto, A. G., “Toward a modern theory <strong>of</strong> adaptive networks: expectation<br />

and prediction,” Psychological Review, 88, No. 2, 1981, pp. 135–140. Translated into<br />

Spanish by G. Ruiz to appear in the journal Estudios de Psicologia.<br />

20. <strong>Sutton</strong>, R. S., Barto, A. G., “An adaptive network that constructs and uses an internal<br />

model <strong>of</strong> its world,” Cognition and Brain Theory, 4, 1981, pp. 217–246.<br />

21. Barto, A. G., <strong>Sutton</strong>, R. S., Brouwer, P., “Associative search network: a reinforcement<br />

learning associative memory,” Biological Cybernetics, 40, 1981, pp. 201–211.<br />

22. Barto, A., <strong>Sutton</strong>, R. S., “Landmark learning: an illustration <strong>of</strong> associative search,”<br />

Biological Cybernetics, 42, 1981, pp. 1–8.<br />

Book Chapters<br />

23. Stone, P., <strong>Sutton</strong>, R. S., “Keepaway soccer: A machine learning testbed.” In Lecture Notes<br />

in Computer Science 2377, pp. 207–237, Springer, 2002.<br />

24. Stone, P., <strong>Sutton</strong>, R. S., Singh, S., “Reinforcement learning for 3 vs. 2 keepaway.” In<br />

RoboCup-2000: Robot Soccer World Cup IV, P. Stone, T. Balch, G. Kraetszchmar, Eds.<br />

Springer Verlag, 2001.<br />

25. <strong>Sutton</strong>, R. S. “Reinforcement learning.” In The MIT Encyclopedia <strong>of</strong> the Cognitive<br />

Sciences, R. Wilson, F. Keil, Eds., pp. 715–717, MIT Press, 1999.<br />

26. <strong>Sutton</strong>, R. S., “On the significance <strong>of</strong> Markov decision processes.” In Artificial Neural<br />

Networks – ICANN'97, W. Gerstner, A. Germond, M. Hasler, J.-D. Nicoud, Eds., pp.<br />

273–282. Springer, 1997.<br />

27. Barto, A. G., <strong>Sutton</strong>, R. S., “Reinforcement learning in artificial intelligence.” In Neural<br />

Network Models <strong>of</strong> Cognition, Donahoe, J. W., Packard Dorsel, V., Eds., pp. 358–386,<br />

Elsevier, 1997.<br />

28. <strong>Sutton</strong>, R. S., “First results with Dyna: an integrated architecture for learning, planning,<br />

and reacting.” In Neural Networks for Control, Miller, T., <strong>Sutton</strong>, R. S., Werbos, P., Eds.,<br />

pp. 179–189, MIT Press, 1990.<br />

29. <strong>Sutton</strong>, R. S., Barto, A. G., “Time-derivative models <strong>of</strong> pavlovian reinforcement.” In<br />

Learning and Computational Neuroscience, M. Gabriel, J.W. Moore, Eds., pages 497–<br />

537, MIT Press, 1990.<br />

10

30. Barto, A. G., <strong>Sutton</strong>, R. S., Watkins, C., “Learning and sequential decision making.” In<br />

Learning and Computational Neuroscience, M. Gabriel, J.W. Moore, Eds., pages 539–<br />

602, MIT Press, 1990.<br />

31. Selfridge, O., <strong>Sutton</strong>, R. S., Anderson, C., “Selected bibliography on connectionism,” In:<br />

Evolution, Learning, and Cognition, Y.C. Lee, Ed., pp. 391–403, World Scientific<br />

Publishing, 1988.<br />

32. Barto, A., <strong>Sutton</strong>, R. S., “Neural problem solving.” In: Synaptic Modification, Neuron<br />

Selectivity, and Nervous System Organization, W.B. Levy, J.A. Anderson, Eds., pp. 123–<br />

152, Lawrence Erlbaum, 1985.<br />

Refereed Articles in Conference Proceedings<br />

33. Pilarski, P. M., Dawson, M. R., Degris, T.,Fahimi, F., Carey, J. P., <strong>Sutton</strong>, R. S., “Human<br />

training for online myoelectric prosthesis control using actor-critic reinforcement<br />

learning.” In Proceedings <strong>of</strong> the 2011 IEEE International Conference on Rehabilitation<br />

Robotics, Zurich, Switzerland, 7 pages, 2011.<br />

34. <strong>Sutton</strong>, R. S., Modayil, J., Delp, M., Degris, T., Pilarski, P. M., White, A., Precup, D.,<br />

“Horde: A scalable real-time architecture for learning knowledge from unsupervised<br />

sensorimotor interaction.” In Proceedings <strong>of</strong> the Tenth International Conference on<br />

Autonomous Agents and Multiagent Systems, pp. 761–768, Taipei, Taiwan, 2011.<br />

35. Maei, H. R., Szepesvari, <strong>Cs</strong>., Bhatnagar, S., <strong>Sutton</strong>, R. S., “Toward <strong>of</strong>f-policy learning<br />

control with function approximation.” In Proceedings <strong>of</strong> the 27th International<br />

Conference on Machine Learning, Haifa, Israel, 8 pages, 2010. Omnipress.<br />

36. Maei, H. R., <strong>Sutton</strong>, R. S., “GQ(λ): A general gradient algorithm for temporal-difference<br />

prediction learning with eligibility traces.” In Proceedings <strong>of</strong> the Third Conference on<br />

Artificial General Intelligence, Lugano, Switzerland, 2010.<br />

37. Maei, H. R., Szepesvari, <strong>Cs</strong>., Bhatnagar, S., Precup, D., Silver, D., <strong>Sutton</strong>, R. S.,<br />

“Convergent temporal-difference learning with arbitrary smooth function<br />

approximation.” Advances in Neural Information Processing Systems 22, 9 pages, 2009.<br />

MIT Press.<br />

38. <strong>Sutton</strong>, R. S., Maei, H. R., Precup, D., Bhatnagar, S., Silver, D., Szepesvari, <strong>Cs</strong>.,<br />

Wiewiora, E., “Fast gradient-descent methods for temporal-difference learning with<br />

linear function approximation.” Proceedings <strong>of</strong> the 26th International Conference on<br />

Machine Learning, 2009.<br />

39. <strong>Sutton</strong>, R. S., Szepesvari, <strong>Cs</strong>., Maei, H. R., “A convergent O(n) algorithm for <strong>of</strong>f-policy<br />

temporal-difference learning with linear function approximation,” Advances in Neural<br />

Information Processing Systems 21, 8 pages, 2009. MIT Press.<br />

11

40. Ludvig, E., <strong>Sutton</strong>, R. S., Verbeek, E., Kehoe, E. J., “A computational model <strong>of</strong><br />

hippocampal function in trace conditioning,” Advances in Neural Information Processing<br />

Systems 21, 8 pages, 2009. MIT Press.<br />

41. Cutumisu, M., Szafron, D., Bowling, M., <strong>Sutton</strong> R. S., “Agent learning using actiondependent<br />

learning rates in computer role-playing games,” Proceedings <strong>of</strong> the 4th<br />

Artificial Intelligence and Interactive Digital Entertainment Conference, pp. 22-29, 2008.<br />

42. <strong>Sutton</strong>, R. S., Szepesvari, <strong>Cs</strong>., Geramifard, A., Bowling, M., “Dyna-style planning with<br />

linear function approximation and prioritized sweeping,” Proceedings <strong>of</strong> the 24th<br />

Conference on Uncertainty in Artificial Intelligence, pp. 528–536, 2008.<br />

43. Silver, D., <strong>Sutton</strong>, R. S., Müller, M., “Sample-based learning and search with permanent<br />

and transient memories,” Proceedings <strong>of</strong> the 25th International Conference on Machine<br />

Learning, 2008. (27% Acceptance)<br />

44. Bhatnagar, S., <strong>Sutton</strong>, R. S., Ghavamzadeh, M., Lee, M., “Incremental natural actor-critic<br />

algorithms,” Advances in Neural Information Processing Systems 20, 2008.<br />

45. <strong>Sutton</strong>, R. S., Koop, A., Silver, D., “On the role <strong>of</strong> tracking in stationary environments,”<br />

Proceedings <strong>of</strong> the 24th International Conference on Machine Learning, 2007.<br />

46. Silver, D., <strong>Sutton</strong>, R. S., Müller, M., “Reinforcement learning <strong>of</strong> local shape in the game<br />

<strong>of</strong> Go,” Proceedings <strong>of</strong> the 20th International Joint Conference on Artificial Intelligence,<br />

2007.<br />

47. Geramifard, A., Bowling, M., Zinkevich, M., <strong>Sutton</strong>, R. S., “iLSTD: Eligibility traces and<br />

convergence analysis,” Advances in Neural Information Processing Systems 19, 2007.<br />

48. Geramifard, A., Bowling, M., <strong>Sutton</strong>, R. S., “Incremental least-squares temporal<br />

difference learning,” Proceedings <strong>of</strong> the Twenty-First National Conference on Artificial<br />

Intelligence, pages 356-361, 2006.<br />

49. Precup, D., <strong>Sutton</strong>, R. S., Paduraru, C., Koop, A., Singh, S., “Off-policy learning with<br />

options and recognizers,” Advances in Neural Information Processing Systems 18, 2006.<br />

50. <strong>Sutton</strong>, R. S., Rafols, E. J., Koop, A., “Temporal abstraction in temporal-difference<br />

networks,” Advances in Neural Information Processing Systems 18, 2006.<br />

51. Tanner, B., <strong>Sutton</strong>, R. S., “TD(λ) networks: Temporal-difference networks with eligibility<br />

traces,” Proceedings <strong>of</strong> the 22nd International Conference on Machine Learning<br />

(ICML), Bonn, Germany, 2005. 8 large typeset pages. 27% acceptance rate.<br />

52. Rafols, E. J., Ring, M. B., <strong>Sutton</strong>, R. S., Tanner, B., “Using predictive representations to<br />

improve generalization in reinforcement learning,” Proceedings <strong>of</strong> the 19th International<br />

Joint Conference on Artificial Intelligence, Edinburgh, Scotland, 2005. 6 large typeset<br />

pages. 18% acceptance rate.<br />

12

53. Tanner, B., <strong>Sutton</strong>, R. S., “Temporal-difference networks with history,” Proceedings <strong>of</strong><br />

the Nineteenth International Joint Conference on Artificial Intelligence (IJCAI),<br />

Edinburgh, Scotland, 2005. 6 large typeset pages. 18% acceptance rate.<br />

54. <strong>Sutton</strong>, R. S., Tanner, B., “Temporal-difference networks,” Advances in Neural<br />

Information Processing Systems 17. MIT Press, 2005.<br />

55. Littman, M. L., <strong>Sutton</strong>, R. S., Singh, S., “Predictive representations <strong>of</strong> state,” Advances in<br />

Neural Information Processing Systems 14. MIT Press, 2002.<br />

56. Stone, P., <strong>Sutton</strong>, R. S., “Scaling reinforcement learning toward RoboCup soccer,”<br />

Proceedings <strong>of</strong> the 18th International Conference on Machine Learning, pp. 537–544.<br />

Morgan Kaufmann, 2001.<br />

57. Precup, D., <strong>Sutton</strong>, R. S., Dasgupta, S., “Off-policy temporal-difference learning with<br />

function approximation,” Proceedings <strong>of</strong> the 18th International Conference on Machine<br />

Learning, pp. 417–424. Morgan Kaufmann, 2001.<br />

58. <strong>Sutton</strong>, R. S., McAllester, D., Singh, S., Mansour, Y., “Policy gradient methods for<br />

reinforcement learning with function approximation,” Advances in Neural Information<br />

Processing Systems 12 (Proceedings <strong>of</strong> the 1999 conference), pp. 1057–1063. MIT Press,<br />

2000.<br />

59. Precup, D., <strong>Sutton</strong>, R. S., Singh, S., “Eligibility traces for <strong>of</strong>f-policy policy evaluation,”<br />

Proceedings <strong>of</strong> the 17th International Conference on Machine Learning, pp. 759–766.<br />

Morgan Kaufmann, 2000.<br />

60. <strong>Sutton</strong>, R. S., Singh, S., Precup, D., Ravindran, B., “Improved switching among<br />

temporally abstract actions,” Advances in Neural Information Processing Systems 11<br />

(Proceedings <strong>of</strong> the 1998 conference), pp. 1066–1072. MIT Press, 1999.<br />

61. Moll, R., Barto, A. G., Perkins, T. J., <strong>Sutton</strong>, R. S., “Learning instance-independent value<br />

functions to enhance local search,” Advances in Neural Information Processing Systems<br />

11 (Proceedings <strong>of</strong> the 1998 conference), pp. 1017–1023. MIT Press, 1999.<br />

62. <strong>Sutton</strong>, R. S., Precup, D., Singh, S., “Intra-option learning about temporally abstract<br />

actions,” Proceedings <strong>of</strong> the 15th International Conference on Machine Learning, pp.<br />

556–564. Morgan Kaufmann, 1998.<br />

63. Precup, D., <strong>Sutton</strong>, R. S., Singh, S., “Theoretical results on reinforcement learning with<br />

temporally abstract options,” Machine Learning: ECML-98, Proceedings <strong>of</strong> the 10th<br />

European Conference on Machine Learning, pp. 382–393. Springer Verlag, 1998.<br />

64. Precup, D., <strong>Sutton</strong>, R. S., “Multi-time models for temporally abstract planning,”<br />

Advances in Neural Information Processing Systems 10 (Proceedings <strong>of</strong> the 1997<br />

conference), pp. 1050–1056. MIT Press, 1998.<br />

13

65. Precup, D., <strong>Sutton</strong>, R. S., “Exponentiated gradient methods for reinforcement learning,”<br />

Proceedings <strong>of</strong> the 14th International Conference on Machine Learning, pp. 272-277.<br />

Morgan Kaufmann, 1997.<br />

66. <strong>Sutton</strong>, R. S., “Generalization in reinforcement learning: successful examples using<br />

sparse coarse coding,” Advances in Neural Information Processing Systems 8<br />

(Proceedings <strong>of</strong> the 1995 conference), pp. 1038–1044. MIT Press, 1996.<br />

67. <strong>Sutton</strong>, R. S. “TD models: modeling the world at a mixture <strong>of</strong> time scales,” Proceedings<br />

<strong>of</strong> the Twelfth International Conference on Machine Learning, pp. 531–539. Morgan<br />

Kaufmann, 1995.<br />

68. <strong>Sutton</strong>, R. S., Whitehead, S. “Online learning with random representations,” Proceedings<br />

<strong>of</strong> the Tenth International Conference on Machine Learning, pp. 314–321. Morgan<br />

Kaufmann, 1993.<br />

69. Gluck, M., Glauthier, P., <strong>Sutton</strong>, R. S. “Adaptation <strong>of</strong> cue-specific learning rates in<br />

network models <strong>of</strong> human category learning,” Proceedings <strong>of</strong> the Fourteenth Annual<br />

Conference <strong>of</strong> the Cognitive Science Society, pp. 540–545. Erlbaum, 1992.<br />

70. <strong>Sutton</strong>, R. S. “Adapting bias by gradient descent: an incremental version <strong>of</strong> delta-bardelta,”<br />

Proceedings <strong>of</strong> the Tenth National Conference on Artificial Intelligence, pp. 171–<br />

176. MIT/AAAI Press, 1992.<br />

71. Sanger, T., <strong>Sutton</strong>, R. S., Matheus, C., “Iterative construction <strong>of</strong> sparse polynomial<br />

approximations,” Advances in Neural Information Processing Systems 4, pp. 1064–1071.<br />

Morgan Kaufmann, 1992.<br />

72. <strong>Sutton</strong>, R. S., “Planning by incremental dynamic programming,” Proceedings <strong>of</strong> the<br />

Eighth International Workshop on Machine Learning, pp. 353–357. Morgan Kaufmann,<br />

1991.<br />

73. <strong>Sutton</strong>, R. S., Matheus, C., “Learning polynomial functions by feature construction,”<br />

Proceedings <strong>of</strong> the Eighth International Workshop on Machine Learning, pp. 208–212.<br />

Morgan Kaufmann, 1991.<br />

74. <strong>Sutton</strong>, R. S., Barto, A. G., Williams, R., “Reinforcement learning is direct adaptive<br />

optimal control,” Proceedings <strong>of</strong> the American Control Conference, pp. 2143–2146,<br />

1991. Also published in IEEE Control Systems Magazine 12, No. 2, 19-22.<br />

75. <strong>Sutton</strong>, R. S., “Integrated modeling and control based on reinforcement learning and<br />

dynamic programming,” Advances in Neural Information Processing Systems 3, pp.<br />

471–478. MIT Press, 1991.<br />

76. <strong>Sutton</strong>, R. S., “Reinforcement learning architectures for animats,” Proceedings <strong>of</strong> the<br />

First International Conference on Simulation <strong>of</strong> Adaptive Behavior: From Animals to<br />

Animats, pp. 288–296, 1991.<br />

14

77. <strong>Sutton</strong>, R. S., “Integrated architectures for learning, planning, and reacting based on<br />

approximating dynamic programming,” Proceedings <strong>of</strong> the Seventh International<br />

Conference on Machine Learning, pp. 216–224, 1990. Reprinted in Artificial Neural<br />

Networks: Concepts and Theory, edited by P. Mehra and B. Wah, IEEE Computer Society<br />

Press, 1992.<br />

78. Barto, A. G., <strong>Sutton</strong>, R. S., Watkins, C., “Sequential decision problems and neural<br />

networks,” Advances in Neural Information Processing Systems 2, pp. 686–693. MIT<br />

Press, 1990.<br />

79. <strong>Sutton</strong>, R. S., Barto, A. G., “A temporal-difference model <strong>of</strong> classical conditioning,”<br />

Proceedings <strong>of</strong> the Ninth Annual Conference <strong>of</strong> the Cognitive Science Society, pp. 355–<br />

378, 1987.<br />

80. <strong>Sutton</strong>, R. S., “Two problems with backpropagation and other steepest-descent learning<br />

procedures for networks,” Proceedings <strong>of</strong> the Eighth Annual Conference <strong>of</strong> the Cognitive<br />

Science Society, pp. 823–831, 1986. Reprinted in Artificial Neural Networks: Concepts<br />

and Theory, edited by P. Mehra and B. Wah, IEEE Computer Society Press, 1992.<br />

81. Selfridge, O., <strong>Sutton</strong>, R. S., Barto, A. G., “Training and tracking in robotics,”<br />

Proceedings <strong>of</strong> the Ninth International Joint Conference on Artificial Intelligence, pp.<br />

670–672, 1985.<br />

82. <strong>Sutton</strong>, R. S., Pinette, B., “The learning <strong>of</strong> world models by connectionist networks,”<br />

Proceedings <strong>of</strong> the Seventh Annual Conference <strong>of</strong> the Cognitive Science Society, pp. 54–<br />

64, 1985.<br />

83. Moore, J., Desmond, J., Berthier, N., Blazis, D., <strong>Sutton</strong>, R. S., Barto, A. G.,<br />

“Connectionist learning in real time: <strong>Sutton</strong>-Barto adaptive element and classical<br />

conditioning <strong>of</strong> the nictitating membrane response,” Proceedings <strong>of</strong> the Seventh Annual<br />

Conference <strong>of</strong> the Cognitive Science Society, pp. 318–322, 1985.<br />

Other Articles in Proceedings<br />

84. Modayil, J., Pilarski, P. M., White, A., Degris, T., <strong>Sutton</strong>, R. S., “Off-policy knowledge<br />

maintenance for robots.” Robotics Science and Systems Workshop (Towards Closing the<br />

Loop: Active Learning for Robotics). Extended abstract and poster, 2010.<br />

85. <strong>Sutton</strong>, R. S., “The grand challenge <strong>of</strong> predictive empirical abstract knowledge.” Working<br />

Notes <strong>of</strong> the IJCAI-09 Workshop on Grand Challenges for Reasoning from Experiences,<br />

2009.<br />

86. <strong>Sutton</strong>, R. S., “Open theoretical questions in reinforcement learning,” Proceedings <strong>of</strong> the<br />

Fourth European Conference on Computational Learning Theory, pp. 11–17. Springer-<br />

Verlag, 1999.<br />

15

87. McGovern, A., Precup, D., Ravindran, B., Singh, S., <strong>Sutton</strong>, R. S., “Hierarchical Optimal<br />

Control <strong>of</strong> MDPs,” Proceedings <strong>of</strong> the Tenth Yale Workshop on Adaptive and Learning<br />

Systems, pp. 186–191. Yale <strong>University</strong>, New Haven, CT, 1998.<br />

88. Precup, D., <strong>Sutton</strong>, R. S., “Multi-time models for reinforcement learning,” Proceedings <strong>of</strong><br />

the ICML'97 Workshop on Modeling in Reinforcement Learning, 1997.<br />

89. McGovern, A., <strong>Sutton</strong>, R. S., Fagg, A. H., “Roles <strong>of</strong> macro-actions in accelerating<br />

reinforcement learning,” Proceedings <strong>of</strong> the 1997 Grace Hopper Celebration <strong>of</strong> Women<br />

in Computing, pp. 13–17, 1997.<br />

90. Precup, D., <strong>Sutton</strong>, R. S., Singh, S. P., “Planning with closed-loop macro actions,”<br />

Working notes <strong>of</strong> the 1997 AAAI Fall Symposium on Model-directed Autonomous<br />

Systems, pp. 70–76, 1997.<br />

91. Kuvayev, L., <strong>Sutton</strong>, R. S., “Model-based reinforcement learning with an approximate,<br />

learned model,” Proceedings <strong>of</strong> the Ninth Yale Workshop on Adaptive and Learning<br />

Systems, pp. 101–105. Yale <strong>University</strong>, New Haven, CT, 1996.<br />

92. Mehra, R. K., Ravichandran, B., <strong>Sutton</strong>, R. S., “Adaptive intelligent scheduling for ATM<br />

networks,” Proceedings <strong>of</strong> the Ninth Yale Workshop on Adaptive and Learning Systems,<br />

pp. 106–111. Yale <strong>University</strong>, New Haven, CT, 1996.<br />

93. <strong>Sutton</strong>, R. S., Singh, S. “On step size and bias in temporal-difference learning,”<br />

Proceedings <strong>of</strong> the Eighth Yale Workshop on Adaptive and Learning Systems, pp. 91–96.<br />

Yale <strong>University</strong>, New Haven, CT, 1994.<br />

94. <strong>Sutton</strong>, R. S., “Gain adaptation beats least squares,” Proceedings <strong>of</strong> the Seventh Yale<br />

Workshop on Adaptive and Learning Systems, pp. 161–166. Yale <strong>University</strong>, New<br />

Haven, CT, 1992.<br />

95. <strong>Sutton</strong>, R. S., “Reinforcement learning architectures,” Proceedings ISKIT'92<br />

International Symposium on Neural Information Processing, pp. 211–216. Fukuoka,<br />

Japan, 1992.<br />

96. <strong>Sutton</strong>, R. S., “Dyna, an integrated architecture for learning, planning and reacting,”<br />

Working Notes <strong>of</strong> the 1991 AAAI Spring Symposium on Integrated Intelligent<br />

Architectures and SIGART Bulletin 2, pp. 160-163, 1991.<br />

97. Whitehead, S., <strong>Sutton</strong>, R. S., Ballard, D., “Advances in reinforcement learning and their<br />

implications for intelligent control,” Proceedings <strong>of</strong> the Fifth IEEE International<br />

Symposium on Intelligent Control, pp. 1289–1297, 1990.<br />

98. Anderson, C., Franklin, J., <strong>Sutton</strong>, R. S., “Learning a nonlinear model <strong>of</strong> a manufacturing<br />

process using multilayer connectionist networks,” Proceedings <strong>of</strong> the Fifth IEEE<br />

International Symposium on Intelligent Control, pp. 404–409, 1990.<br />

16

99. <strong>Sutton</strong>, R. S., “Artificial intelligence by dynamic programming,” Proceedings <strong>of</strong> the Sixth<br />

Yale Workshop on Adaptive and Learning Systems, pp. 89–95. Yale <strong>University</strong>, New<br />

Haven, CT, 1990.<br />

100.Franklin, J., <strong>Sutton</strong>, R. S., Anderson, C., Selfridge, O., Schwartz, D., “Connectionist<br />

learning control at GTE laboratories,” Intelligent Control and Adaptive Systems, G.<br />

Rodriguez, Ed., Proc. SPIE 1196, pp. 242–253, 1990.<br />

101.<strong>Sutton</strong>, R. S., “Artificial intelligence as a control problem: comments on the relationship<br />

between machine learning and intelligent control,” Proceedings <strong>of</strong> the IEEE International<br />

Symposium on Intelligent Control 1988, pp. 500–507, 1989.<br />

102.Franklin, J., <strong>Sutton</strong>, R. S., Anderson, C. “Application <strong>of</strong> connectionist learning methods<br />

to manufacturing process monitoring,” Proceedings <strong>of</strong> the IEEE International<br />

Symposium on Intelligent Control 1988, pp. 709–712, 1989.<br />

103.<strong>Sutton</strong>, R. S., “Convergence theory for a new kind <strong>of</strong> prediction learning,” Proceedings<br />

<strong>of</strong> the 1988 Workshop on Computational Learning Theory, pp. 421–422 1988.<br />

104.<strong>Sutton</strong>, R. S., “Learning distributed, searchable, internal models,” Proceedings <strong>of</strong> the<br />

Distributed Artificial Intelligence Workshop, pp. 287–289, 1985.<br />

105.Barto, A. G., <strong>Sutton</strong>, R. S., Anderson, C., “Spatial learning simulation systems,”<br />

Proceedings <strong>of</strong> the 10th IMACS World Congress on Systems Simulation and Scientific<br />

Computation, pp. 204–206, 1982.<br />

Other Publications<br />

106.“Beyond Reward: The Problem <strong>of</strong> Knowledge and Data.” Extended abstract in the<br />

Proceedings <strong>of</strong> the 21st International Conference on Inductive Logic Programming,<br />

Muggleton, S., Watanabe, H., Tamaddoni-Nezhad, A., and Chen, J. Eds. Lecture Notes in<br />

Computer Science, Springer, 2011<br />

107.“Interview with <strong>Richard</strong> S. <strong>Sutton</strong>,” by Verena Heidrich-Meisner. Kuenstliche<br />

Intelligenz. (2009). pp. 41-43.<br />

108.<strong>Sutton</strong>, R. S., “Reinforcement learning: Past, present, and future.” Extended abstract in<br />

Simulated Evolution and Learning, McKay, B., Yao, X., Newton, C. S., Kim, J.-H.,<br />

Furuhashi, T., Eds. Published as Lecture Notes in Computer Science 1585, pp. 195–197,<br />

Springer, 1999.<br />

109.McGovern, A., <strong>Sutton</strong>, R. S., “Macro-actions in reinforcement learning: an empirical<br />

analysis.” Technical Report 98-70, Department <strong>of</strong> Computer Science, <strong>University</strong> <strong>of</strong><br />

Massachusetts, Amherst, MA 01003, 1998.<br />

17

110.Precup, D., <strong>Sutton</strong>, R. S., “Empirical comparison <strong>of</strong> gradient descent and exponentiated<br />

gradient descent in supervised and reinforcement learning.” Technical Report UM-<br />

CS-1996-070, Department <strong>of</strong> Computer Science, <strong>University</strong> <strong>of</strong> Massachusetts, Amherst,<br />

MA 01003, 1996.<br />

111.<strong>Sutton</strong>, R. S., “On the virtues <strong>of</strong> linear learning and trajectory distributions.” Abstract in<br />

Proceedings <strong>of</strong> the Workshop on Value Function Approximation, Conference on Neural<br />

Information Processing Systems, 1995.<br />

112.<strong>Sutton</strong>, R. S., “Machines that learn and mimic the brain.” In ACCESS, GTE's Journal <strong>of</strong><br />

Science and Technology, 1992. Reprinted in Stethoscope Quarterly, Spring 1993.<br />

113.<strong>Sutton</strong>, R. S., “The challenge <strong>of</strong> reinforcement learning.” Introduction to a special issue<br />

on reinforcement learning, Machine Learning 8, No 3/4, pp. 225–227, 1992.<br />

114.<strong>Sutton</strong>, R. S., “Implementation details <strong>of</strong> the TD(lambda) procedure for the case <strong>of</strong><br />

vector predictions and backpropagation.” GTE Laboratories Technical Report<br />

TR87-509.1, GTE Laboratories, 40 Sylvan Road, Waltham, MA 02254, 1989.<br />

115.<strong>Sutton</strong>, R. S., “NADALINE: a normalized adaptive linear element that learns<br />

efficiently.” GTE Laboratories Technical Report TR88-509.4, GTE Laboratories, 40<br />

Sylvan Road, Waltham, MA 02254, 1988.<br />

116.<strong>Sutton</strong>, R. S., “Temporal credit assignment in reinforcement learning,” Ph.D.<br />

dissertation, <strong>University</strong> <strong>of</strong> Massachusetts, Amherst, MA. Published as COINS Technical<br />

Report 84-2, 1984.<br />

117.Barto, A. G., <strong>Sutton</strong>, R. S., “Goal seeking components for adaptive intelligence: an initial<br />

assessment.” Air Force Wright Aeronautical Laboratories Technical Report, AFWAL-<br />

TR-81-1070, Wright-Patterson Air Force Base, Ohio, 1981.<br />

118.<strong>Sutton</strong>, R. S., “Single channel theory: a neuronal theory <strong>of</strong> learning,” Brain Theory<br />

Newsletter 3, No. 3/4, pp. 72–75, 1978. (earliest publication)<br />

Patent<br />

119.<strong>Sutton</strong>, R. S., “Apparatus for Machine Learning.” United States Patent No. 5,946,675,<br />

August 31, 1999. Assigned to GTE Laboratories Incorporated, Waltham, Mass.<br />

18