Considerations on Sample Size and Power Calculations in ...

Considerations on Sample Size and Power Calculations in ...

Considerations on Sample Size and Power Calculations in ...

- No tags were found...

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

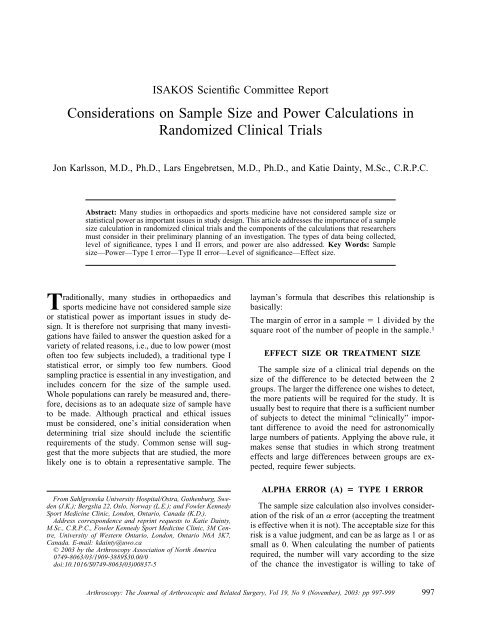

ISAKOS Scientific Committee Report

Considerations on Sample Size and Power Calculations in Randomized Clinical Trials

Jon Karlsson, M.D., Ph.D., Lars Engebretsen, M.D., Ph.D., and Katie Dainty, M.Sc., C.R.P.C.

Abstract: Many studies in orthopaedics and sports medicine have not considered sample size or statistical power as important issues in study design. This article addresses the importance of a sample size calculation in randomized clinical trials and the components of the calculations that researchers must consider in their preliminary planning of an investigation. The types of data being collected, level of significance, types I and II errors, and power are also addressed.
Key Words: Sample size, Power, Type I error, Type II error, Level of significance, Effect size.

From Sahlgrenska University Hospital/Ostra, Gothenburg, Sweden (J.K.); Bergslia 22, Oslo, Norway (L.E.); and Fowler Kennedy Sport Medicine Clinic, London, Ontario, Canada (K.D.).

Address correspondence and reprint requests to Katie Dainty, M.Sc., C.R.P.C., Fowler Kennedy Sport Medicine Clinic, 3M Centre, University of Western Ontario, London, Ontario N6A 3K7, Canada. E-mail: kdainty@uwo.ca

© 2003 by the Arthroscopy Association of North America
0749-8063/03/1909-3889$30.00/0
doi:10.1016/S0749-8063(03)00837-5

Traditionally, many studies in orthopaedics and sports medicine have not considered sample size or statistical power as important issues in study design. It is therefore not surprising that many investigations have failed to answer the question asked for a variety of related reasons, i.e., due to low power (most often too few subjects included), a traditional type I statistical error, or simply too few numbers. Good sampling practice is essential in any investigation, and includes concern for the size of the sample used. Whole populations can rarely be measured and, therefore, decisions as to an adequate size of sample have to be made. Although practical and ethical issues must be considered, one’s initial consideration when determining trial size should include the scientific requirements of the study. Common sense will suggest that the more subjects that are studied, the more likely one is to obtain a representative sample. The layman’s formula that describes this relationship is basically:

The margin of error in a sample = 1 divided by the square root of the number of people in the sample.¹

EFFECT SIZE OR TREATMENT SIZE

The sample size of a clinical trial depends on the size of the difference to be detected between the 2 groups. The smaller the difference one wishes to detect, the more patients will be required for the study. It is usually best to size the study to detect the minimal “clinically” important difference, rather than any smaller one, to avoid the need for astronomically large numbers of patients. Applying the above rule, it makes sense that studies in which strong treatment effects and large differences between groups are expected require fewer subjects.

ALPHA ERROR (α) TYPE I ERROR

The sample size calculation also involves consideration of the risk of an α error (accepting that the treatment is effective when it is not).
The acceptable size for this risk is a value judgment, and can be as large as 1 or as small as 0. When calculating the number of patients required, the number will vary according to the size of the chance the investigator is willing to take of falsely concluding that a given intervention is valuable. The larger the chance accepted, the fewer the patients needed. As easy as this sounds, it does not do much for the credibility of a trial.

Alpha (α) is usually set at 0.05 (1 in 20) or 0.01 (1 in 100).

Arthroscopy: The Journal of Arthroscopic and Related Surgery, Vol 19, No 9 (November), 2003: pp 997-999

BETA ERROR (β) TYPE II ERROR

Type II or β error is similar to α error, but it is the chance of missing true differences in a particular study, or in other words, accepting a null hypothesis even though it is false. β errors are conventionally much larger than α errors, reflecting the higher value placed on being sure that an effect is really present when one reports that it is.³

LEVEL OF SIGNIFICANCE

The chosen level of significance sets the likelihood of detecting a treatment effect when none exists (leading to a so-called false-positive result), and defines the threshold P value. Results with a P value above the threshold lead to the conclusion that an observed difference may be due to chance alone, while those with a P value below the threshold lead to rejecting chance and concluding that the intervention has a real effect. The level of significance is most commonly set at 5% (i.e., P = .05) or 1% (P = .01).
This means that the investigator is prepared to accept a 5% (or 1%) chance of erroneously reporting a significant effect.

POWER (1-β)

The power of a study is defined as its ability to detect a true difference in outcome between the standard or control arm and the intervention arm. It is the power to detect a statistical difference between 2 different treatments, when the difference in fact exists. This can also be described as the probability that the study shows statistical significance (at a level decided on beforehand) provided there is an effect. This is correlated with the likelihood of finding a clinically relevant effect for any given drug or treatment. This may be termed “clinical effect,” which is not always the same as statistical effect. Before deciding on the null hypothesis, a minimal clinically important difference should be discussed. One must consider this carefully, since “clinically significant difference” can be a matter of great debate.

Power is affected by many factors, namely the significance level, the effect size, and the sample size. The lower the significance level, the lower the power. If the researcher wishes to reduce the risk of concluding that an ineffective medication (or any other treatment) is effective, then they must understand that they, in turn, run the risk of missing a treatment which is, in fact, effective.
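
The interplay of these three factors can be illustrated with a short sketch. The Python below is not taken from the article: it assumes a two-sided comparison of two equal-sized groups with a known common standard deviation and relies on the normal approximation. The function name `approx_power` and the numbers in the demonstration (a true difference of 5 units, a standard deviation of 10) are hypothetical choices made for illustration only.

```python
from math import sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, SD 1)


def approx_power(n_per_group, delta, sigma, alpha=0.05):
    """Approximate power of a two-sided, two-group comparison of means.

    Assumptions (not from the article): equal group sizes, a known common
    standard deviation `sigma`, and the normal (z) approximation.
    """
    z_alpha = STD_NORMAL.inv_cdf(1 - alpha / 2)   # two-sided critical value
    std_error = sigma * sqrt(2 / n_per_group)     # SE of the difference in means
    # Chance of exceeding the critical value in the direction of the true
    # difference; the tiny chance of rejecting in the wrong direction is ignored.
    return STD_NORMAL.cdf(abs(delta) / std_error - z_alpha)


# Hypothetical scenario: true difference of 5 units, standard deviation of 10.
for alpha in (0.05, 0.01):
    for n in (25, 50, 100):
        power = approx_power(n, delta=5, sigma=10, alpha=alpha)
        print(f"alpha={alpha:<4}  n/group={n:<3}  power={power:.2f}")
```

Under these assumptions, tightening the significance level from 0.05 to 0.01 lowers the computed power at every sample size, while enlarging the sample or the true difference raises it.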
The larger the effect a treatment has, the greater the probability of discovering it within the trial. A generally accepted power is 80% or 0.8. In many recent studies, the power limit is set to 0.9 or even 0.95. This is more often the case in pharmacologic studies, i.e., testing new drugs, than in the investigation of surgical techniques. Often, a higher power is requested in studies of dramatic new treatments, such as those for diabetes or cancer. A power of 0.8 means that, if a true difference of the specified size exists, the study will detect it 8 times out of 10; it also means that the study will miss it 2 times out of 10. The clinically relevant difference and the risk of committing a type II error should be taken into consideration when defining the power limit desired.

METHODOLOGY

Sample size calculations should be performed during the design phase of the study. There are several computerized methods that can be easily accessed through the Internet if statistical support is not at hand. However, the formula must be appropriate for the design of the trial and the subsequent analysis. Each sample size calculation is unique, and there is no global solution applicable to all trials.

Sample size equation for the means of 2 independent groups:

$$n/\text{group} = 2\left\{\frac{(Z_\alpha + Z_\beta)\,\sigma}{\Delta}\right\}^2$$

$Z_\alpha$ = z-value for $\alpha$ found in a Z table (e.g., $Z_\alpha$ for a 2-sided $\alpha$ of 0.05 is 1.96).
$Z_\beta$ = z-value for $\beta$ found in a Z table.
$\sigma$ = standard deviation in mean response.
$\Delta$ = minimal difference desired in outcome.

It is strongly recommended that one enlist the aid of a biostatistician at the beginning to help determine the correct sample size calculations. However, it is always a good idea to understand the calculation and why the statistician did what he or she did.

Things to consider when determining sample size:

1. The investigation or analytical procedure that one wishes to follow as part of the initial experimental design stage of the investigation.
2. The statistical procedure that one may wish to use for analysis (e.g., 1-tailed or 2-tailed t test, linear regression).
3. The percentage change and/or level of significance at which one would wish to detect the change.
4. The appropriate sample size formula for the study design in question.

One can see why it is extremely useful to have the help of a statistician from the outset. Finally, one should explicitly choose between 2-sided or 1-sided statistical testing. Unfortunately, the importance of this decision is often neglected. In the 2-sided option, one is able to detect the predefined relevant difference, if present, in both directions. That is, if the intervention is better than the reference by a given amount, this will probably be found, but a similar advantage of the reference over the principal intervention can also be detected. The 1-sided option would only allow a 1-sided evaluation (e.g., whether or not the intervention is better than the reference treatment by a given amount), without testing whether the reference is superior to the principal intervention.⁴⁻⁶

CONCLUSIONS

It can be concluded that the larger the sample size, the more precision one will have when stating the findings.
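
To make the dependence on sample size concrete, the two-group equation given under Methodology can be sketched in a few lines of Python. This is an illustrative reading of that equation, not the authors' own code: the function name `n_per_group`, the rounding up to a whole patient, the `two_sided` switch, and the example values (a standard deviation of 10, differences of 10, 5, and 2.5 units) are all assumptions introduced here.

```python
from math import ceil
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution, replaces a printed Z table


def n_per_group(delta, sigma, alpha=0.05, power=0.80, two_sided=True):
    """Patients per group: 2 * ((Z_alpha + Z_beta) * sigma / delta) ** 2.

    Rounding up to a whole patient is a choice made for this sketch.
    """
    tail = alpha / 2 if two_sided else alpha      # split alpha for a 2-sided test
    z_alpha = STD_NORMAL.inv_cdf(1 - tail)        # about 1.96 for 2-sided 0.05
    z_beta = STD_NORMAL.inv_cdf(power)            # power = 1 - beta
    return ceil(2 * ((z_alpha + z_beta) * sigma / delta) ** 2)


# Hypothetical outcome with a standard deviation of 10 units.
for delta in (10, 5, 2.5):
    needed = n_per_group(delta, sigma=10)
    print(f"difference={delta:<4} -> {needed} patients per group")
```

With these inputs, halving the difference to be detected from 5 to 2.5 units raises the requirement from 63 to 252 patients per group, roughly a fourfold increase, which is why very small effects demand very large trials.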
With increased precision, there is an increased possibility of establishing an effect (if it truly exists). In other words, it is possible to find even very small effects (e.g., differences between groups) if the sample size is large enough. However, it can be questioned whether it is correct, from an ethical perspective, to perform a very large investigation (“the larger the sample, the higher the power”) to identify an effect that may be small and not clinically relevant. This may, in fact, be both incorrect and dangerous, and one must always remember that any new treatment may be potentially harmful. Sample size calculation, including power analysis, should always be included in the methodologic design of any clinical trial. Especially when no statistical difference is found, the possibility of either type I or type II error should be discussed. In fact, a study showing no statistical difference is often more the result of low power than of a true lack of difference between the study groups.

REFERENCES

1. Friedman LM, Furberg CD, Demets DL. Sample size. In: Fundamentals of clinical epidemiology. New York: Mosby, 1996;94-125.
2. Pocock SJ. Clinical trials, a practical approach. Chichester: Wiley, 1983.
3. Bland JM, Altman DG. One and two sided tests of significance. BMJ 1994;309:248.
4. Dunnett CW, Gent M. An alternative to the use of two-sided tests in clinical trials. Stat Med 1996;15:1729-1738.
5. Koch GG. One-sided and two-sided tests and P values. J Biopharm Stat 1991;1:161-170.
6. Peace KE. The alternative hypothesis: One-sided or two-sided? J Clin Epidemiol 1989;42:473-476.

RECOMMENDED READING

1. Greenfield ML, Kuhn JE, Wojtys EM. A statistics primer. Power analysis and sample size determination. Am J Sports Med 1997;25:138-140.
2. Lachin JM. Introduction to sample size determination and power analysis for clinical use. Control Clin Trials 1981;2:93-113.