- Page 1 and 2: ACL 2013 51st Annual Meeting of the

- Page 3: Introduction In recent years, there

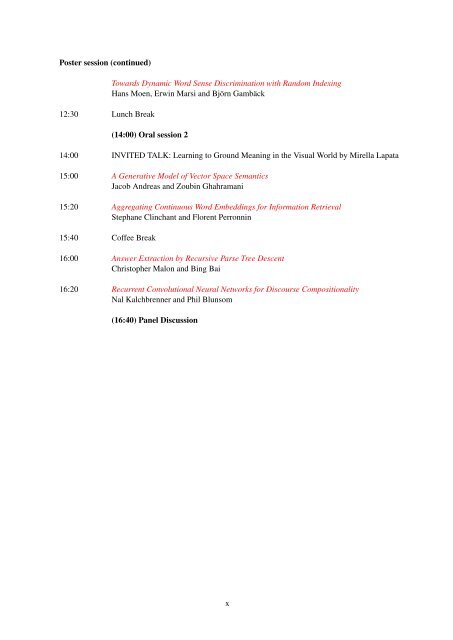

- Page 7: Table of Contents Vector Space Sema

- Page 12 and 13: Input: Log. Form: “red ball”

- Page 14 and 15: the NP/N λx.x red N/N λx.A red x

- Page 16 and 17: dicted and target distributions, an

- Page 18 and 19: typed objects lie in the same vecto

- Page 20 and 21: Peter D. Turney. 2006. Similarity o

- Page 22 and 23: (1999), who suggested that the real

- Page 24 and 25: mation. In addition, using a new se

- Page 26 and 27: Figure 6: Positive rate, negative r

- Page 28 and 29: References Rens Bod, Remko Scha, an

- Page 30 and 31: A Structured Distributional Semanti

- Page 32 and 33: Figure 1: Sample sentences & triple

- Page 34 and 35: el as its three axes. We explore th

- Page 36 and 37: as well as our framing of word comp

- Page 38 and 39: References Marco Baroni and Roberto

- Page 40 and 41: Letter N-Gram-based Input Encoding

- Page 42 and 43: Hidden my house my house Visibl

- Page 44 and 45: … m my y h se us Hidden Visible m

- Page 46 and 47: line of the systems presented in Ta

- Page 48 and 49: References Yoshua Bengio, Réjean D

- Page 50 and 51: Transducing Sentences to Syntactic

- Page 52 and 53: t s “We booked the flight” →

- Page 54 and 55: D 3(s) = ❀ PRP + ❀ V + DT ❀ +

- Page 56 and 57: Output Model λ = 0 λ = 0.2 λ = 0

- Page 58 and 59: References James A. Anderson. 1973.

- Page 60 and 61: General estimation and evaluation o

- Page 62 and 63: Guevara (2010) and Zanzotto et al.

- Page 64 and 65: ⃗p = A u ⃗v where A u is a matr

- Page 66 and 67: stituents. For this reason, we must

- Page 68 and 69: References Hervé Abdi and Lynne Wi

- Page 70 and 71: GOOD VERTICAL safe out raise level

- Page 72 and 73: HLBL HLBL SENNA 4lang original scal

- Page 74 and 75: Determining Compositionality of Wor

- Page 76 and 77: ability of a word in the investigat

- Page 78 and 79: terminer) wheel” and “reinvent

- Page 80 and 81: ing LSA are depicted. Discussion As

- Page 82 and 83: WSM Measure wAvg(of ρ) ρAN-VO-SV

- Page 84 and 85: “Not not bad” is not “bad”:

- Page 86 and 87: activation functions in recursive n

- Page 88 and 89: logic experiment in Socher et al. (

- Page 90 and 91: tinction without the need to move t

- Page 92 and 93: T. K. Landauer and S. T. Dumais. 19

- Page 94 and 95: This differs from the classical Ran

- Page 96 and 97: Hungarian Algorithm First cosine si

- Page 98 and 99: Features: MSRpar MSRvid SMTeuroparl

- Page 100 and 101: Joseph Reisinger and Raymond J. Moo

- Page 102 and 103: phrase meaning in vector space. •

- Page 104 and 105: By Bayes’ rule, p(x|a, n; Θ) ∝

- Page 106 and 107: 4.2 Learning from distributional re

- Page 108 and 109: W = (w 1 , w 2 , · · · , w n ) a

- Page 110 and 111: Aggregating Continuous Word Embeddi

- Page 112 and 113: trieval, Sivic and Zisserman propos

- Page 114 and 115: Another advantage is that the propo

- Page 116 and 117: e 50 100 200 300 400 500 CLEF 4.0 6

- Page 118 and 119: Y. Bengio, J. Louradour, R. Collobe

- Page 120 and 121: Answer Extraction by Recursive Pars

- Page 122 and 123: Algorithm 1: Auto-encoders co-train

- Page 124 and 125: NP NP ) , ADJP , NP ( NP - NP Engli

- Page 126 and 127: System Short Answer Short Answer Sh

- Page 128 and 129: References Y. Bengio and R. Ducharm

- Page 130 and 131: problem of paragraphs, of tence, mo

- Page 132 and 133: m1 k 4 only on the length of s and

- Page 134 and 135: Dialogue Act Label Example Train (%

- Page 136 and 137: [Calhoun et al.2010] Sasha Calhoun,