Towards Wiki-based Dense City Modeling - Institute for Computer ...

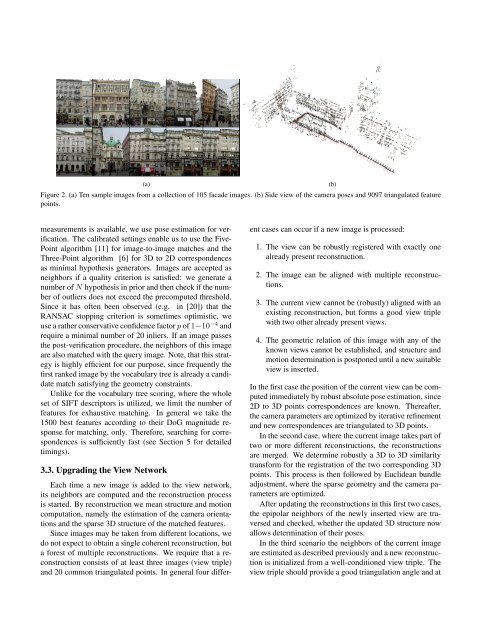

Figure 2. (a) Ten sample images from a collection of 105 facade images. (b) Side view of the camera poses and 9097 triangulated feature points.

measurements is available, we use pose estimation for verification. The calibrated settings enable us to use the Five-Point algorithm [11] for image-to-image matches and the Three-Point algorithm [6] for 3D-to-2D correspondences as minimal hypothesis generators. Images are accepted as neighbors if a quality criterion is satisfied: we generate a fixed number N of hypotheses in advance and then check that the number of outliers does not exceed the precomputed threshold. Since it has often been observed (e.g. in [20]) that the RANSAC stopping criterion is sometimes optimistic, we use a rather conservative confidence factor p of $1 - 10^{-4}$ and require a minimum of 20 inliers. If an image passes the post-verification procedure, the neighbors of this image are also matched with the query image. Note that this strategy is highly efficient for our purpose, since the image ranked first by the vocabulary tree is frequently already a candidate match satisfying the geometric constraints.
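The relation between the hypothesis count N, the confidence p, and the tolerated outlier ratio can be illustrated with the standard RANSAC sampling bound. The sketch below is only an illustration under that assumption; the text does not state how the threshold is precomputed, and the function names (`ransac_iterations`, `max_outlier_ratio`, `accept_match`) are hypothetical.

```python
import math


def ransac_iterations(inlier_ratio: float, sample_size: int, confidence: float) -> int:
    """Samples needed so that, with probability `confidence`, at least one
    minimal sample is outlier-free (standard RANSAC bound)."""
    good_sample_prob = inlier_ratio ** sample_size
    if good_sample_prob <= 0.0:
        raise ValueError("inlier_ratio must be positive")
    if good_sample_prob >= 1.0:
        return 1
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - good_sample_prob))


def max_outlier_ratio(num_hypotheses: int, sample_size: int, confidence: float) -> float:
    """Largest outlier ratio for which `num_hypotheses` samples still reach
    the requested confidence (the bound above, solved for the outlier ratio)."""
    good_sample_prob = 1.0 - (1.0 - confidence) ** (1.0 / num_hypotheses)
    return 1.0 - good_sample_prob ** (1.0 / sample_size)


def accept_match(num_inliers: int, num_matches: int, num_hypotheses: int,
                 sample_size: int, confidence: float = 1.0 - 1e-4,
                 min_inliers: int = 20) -> bool:
    """Accept an image pair if it has enough inliers and its observed outlier
    ratio stays below the precomputed threshold."""
    if num_inliers < min_inliers:
        return False
    outlier_ratio = 1.0 - num_inliers / num_matches
    return outlier_ratio <= max_outlier_ratio(num_hypotheses, sample_size, confidence)


# sample_size=5 corresponds to the Five-Point algorithm, 3 to the Three-Point algorithm.
n_five_point = ransac_iterations(inlier_ratio=0.5, sample_size=5, confidence=1.0 - 1e-4)
```

Because the threshold depends only on N, the sample size, and p, it can be computed once and reused for every candidate pair.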

Unlike for the vocabulary tree scoring, where the whole set of SIFT descriptors is utilized, we limit the number of features for exhaustive matching. In general, we take only the 1500 best features, ranked by their DoG magnitude response, for matching. Therefore, searching for correspondences is sufficiently fast (see Section 5 for detailed timings).
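A minimal sketch of this feature selection, assuming each detected feature carries a scalar DoG response and that "best" means largest absolute response. The function name `select_strongest_features` and the array layout are assumptions made for illustration.

```python
import numpy as np


def select_strongest_features(responses, descriptors, max_features=1500):
    """Return the indices and descriptors of the `max_features` features
    with the largest absolute DoG response, strongest first."""
    responses = np.asarray(responses, dtype=float)
    descriptors = np.asarray(descriptors)
    # Sorting the negated magnitudes ascending yields a descending ranking.
    order = np.argsort(-np.abs(responses), kind="stable")[:max_features]
    return order, descriptors[order]
```

If an image has fewer than `max_features` features, all of them are returned.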

3.3. Upgrading the View Network<br />

Each time a new image is added to the view network, its neighbors are computed and the reconstruction process is started. By reconstruction we mean structure and motion computation, namely the estimation of the camera orientations and the sparse 3D structure of the matched features.

Since images may be taken from different locations, we do not expect to obtain a single coherent reconstruction, but a forest of multiple reconstructions. We require that a reconstruction consists of at least three images (view triple) and 20 common triangulated points. In general four different cases can occur if a new image is processed:

1. The view can be robustly registered with exactly one already present reconstruction.
2. The image can be aligned with multiple reconstructions.
3. The current view cannot be (robustly) aligned with an existing reconstruction, but forms a good view triple with two other already present views.
4. The geometric relation of this image with any of the known views cannot be established, and structure and motion determination is postponed until a new suitable view is inserted.
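The four cases above can be summarized as a small dispatch routine. This is only a sketch: the names `InsertionCase`, `classify_new_view`, and `is_valid_reconstruction` are hypothetical, and the inputs (how many reconstructions the view registers with, whether a good view triple exists) are assumed to come from the pose estimation and matching steps.

```python
from enum import Enum


class InsertionCase(Enum):
    REGISTER = 1    # case 1: registered with exactly one reconstruction
    MERGE = 2       # case 2: aligned with several reconstructions
    NEW_TRIPLE = 3  # case 3: starts a new reconstruction from a view triple
    POSTPONE = 4    # case 4: no geometric relation established yet


def classify_new_view(num_registered_reconstructions: int,
                      has_good_view_triple: bool) -> InsertionCase:
    """Map the outcome of registration attempts to one of the four cases."""
    if num_registered_reconstructions == 1:
        return InsertionCase.REGISTER
    if num_registered_reconstructions > 1:
        return InsertionCase.MERGE
    if has_good_view_triple:
        return InsertionCase.NEW_TRIPLE
    return InsertionCase.POSTPONE


def is_valid_reconstruction(num_images: int, num_common_points: int) -> bool:
    """Minimum size stated in the text: three images and 20 common points."""
    return num_images >= 3 and num_common_points >= 20
```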

In the first case, the position of the current view can be computed immediately by robust absolute pose estimation, since 2D-to-3D point correspondences are known. Thereafter, the camera parameters are optimized by iterative refinement and new correspondences are triangulated to 3D points.
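The text does not specify which triangulation method is used. As an illustration, the following sketch applies the common linear (DLT) two-view triangulation, assuming 3x4 projection matrices and image points in the matching coordinate frame (for calibrated cameras, normalized image coordinates). The function name `triangulate_dlt` is hypothetical.

```python
import numpy as np


def triangulate_dlt(P1, P2, x1, x2):
    """Linear two-view triangulation.

    P1, P2: 3x4 projection matrices; x1, x2: 2D observations (u, v).
    Returns the 3D point that minimizes the algebraic error.
    """
    P1 = np.asarray(P1, dtype=float)
    P2 = np.asarray(P2, dtype=float)
    # Each observation contributes two linear constraints on the homogeneous point.
    A = np.stack([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector of the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]
```

In practice such a linear estimate would typically serve as the starting point for the iterative refinement mentioned above.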

In the second case, where the current image is part of two or more different reconstructions, the reconstructions are merged. We robustly determine a 3D-to-3D similarity transform to register the corresponding 3D points of the two reconstructions. This process is then followed by Euclidean bundle adjustment, where the sparse geometry and the camera parameters are optimized.
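The estimator for the similarity transform is not named in the text. One common choice for the least-squares core is the closed-form solution of Umeyama, sketched below under that assumption; a robust variant would wrap it in a RANSAC loop over minimal sets of three point pairs, which is omitted here. The function name `estimate_similarity` is hypothetical.

```python
import numpy as np


def estimate_similarity(src, dst):
    """Least-squares scale s, rotation R, translation t with
    dst ≈ s * R @ src + t, for corresponding Nx3 point arrays."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    src_c, dst_c = src - mu_src, dst - mu_dst

    # Cross-covariance between the centered point sets.
    cov = dst_c.T @ src_c / len(src)
    U, S, Vt = np.linalg.svd(cov)

    # Guard against a reflection so that R is a proper rotation.
    D = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        D[2, 2] = -1.0

    R = U @ D @ Vt
    var_src = (src_c ** 2).sum() / len(src)
    s = np.trace(np.diag(S) @ D) / var_src
    t = mu_dst - s * R @ mu_src
    return s, R, t
```

The scale factor is what distinguishes this from a rigid alignment: independently built reconstructions generally differ in their arbitrary global scale.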

After updating the reconstructions in these first two cases, the epipolar neighbors of the newly inserted view are traversed and checked to see whether the updated 3D structure now allows their poses to be determined.

In the third scenario the neighbors of the current image are estimated as described previously and a new reconstruction is initialized from a well-conditioned view triple. The view triple should provide a good triangulation angle and at