Case Study: ESPN STAR Sports - TSL

Case Study: ESPN STAR Sports - TSL

Case Study: ESPN STAR Sports - TSL

Create successful ePaper yourself

Turn your PDF publications into a flip-book with our unique Google optimized e-Paper software.

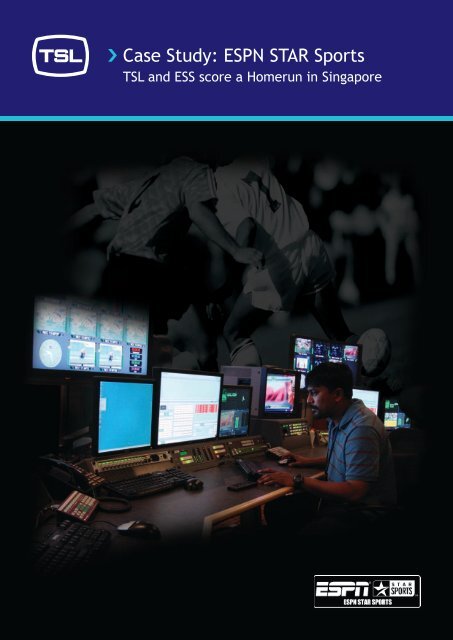

<strong>Case</strong> <strong>Study</strong>: <strong>ESPN</strong> <strong>STAR</strong> <strong>Sports</strong><br />

TSL and ESS score a Homerun in Singapore

ESPN STAR Sports

Homerun

ESPN STAR Sports (ESS) is a 50:50 joint venture between two of the world’s leading cable and satellite broadcasters, Walt Disney (ESPN, Inc.) and News Corporation Limited (STAR). The combined resources of these media giants have enabled it to deliver a diverse array of international and regional sports to viewers via encrypted pay and free-to-air services.

ESPN STAR Sports reaches more than 310 million viewers in Asia via 17 networks covering 24 countries, each localised to deliver differentiated, world-class premier sports programming to Asian viewers.

As ESS’ portfolio of channels transmitted across Asia from its Singapore headquarters kept expanding, it quickly became clear that the existing tape-based system was increasingly inefficient at supporting the localised programming needs of each market.

The concept of a digital production ‘factory’ was born out of the need to streamline the operation from acquisition to transmission. Plans to expand the business by exploiting other, less traditional distribution channels were another driver for this transformation: to build on the existing business, ESS needed to be able to stage content for channels such as Internet streaming and mobile.

ESS had already engaged TSL to convert its transmission facility into a semi-tapeless, automation-controlled operation. In 2005, Tom McVeigh, Senior Vice-President, Operations & Technology, contacted TSL again for technical consultation on the proposed production system, later dubbed Project Homerun.

TSL, in conjunction with Tom’s team of Andy Rylance (Senior Director, Engineering) and Sabil Salim (Senior Director, Operations), prepared the technical requirements to be issued to the market. By early 2006, key partners had been identified who could demonstrate their ability to deliver the required functionality.

Omneon had unveiled MediaGrid, a scalable, active content storage system, that same year. OmniBus offered the OPUS content management suite, which had demonstrated its ability to manage content and media workflows. Apple Final Cut Pro was the logical choice for post production, since Omneon supported in-place editing on the MediaGrid and shot markers could be imported into the Final Cut Pro timeline from the OmniBus metadata schema.

Project Stages

Contracts were signed and Project Homerun began in late August 2006. At this time a ‘Super-user Group’ was formed, with each member representing a key aspect of the operation. This Group was closely involved in the detailed design and testing of the system throughout the project. The project timeline was split into six stages, each with clearly defined and measurable outcomes.

Stage 1: System design

The main deliverables were the system schematics and the specification of the OmniBus content management system, known as the Process Description Document (PDD). Preparing the PDD involved three weeks of detailed workflow discussions with the User Group in Singapore.

Stage 2: System pre-fabrication & equipment procurement

The main deliverable was the pre-build and testing of most of the system infrastructure at TSL’s UK headquarters. This included substantial commissioning of the OmniBus content management system.

For logistical and practical reasons, some sub-systems would only be available on site in Singapore and therefore could not be tested directly during the pre-build. These included the ENPS newsroom computer system, the main video router, the Front Porch Digital/SUN/StorageTek library, the Sony Flexi-Cart and the house IT network itself. Wherever possible, these sub-systems were simulated in advance at TSL’s premises.

Stage 3: Factory acceptance

ESS was then invited to inspect and test the system against a set of pre-defined test scripts, the Factory Acceptance Test (FAT). Upon sign-off, the system was packed and air-freighted to Singapore.

Stage 4: Site installation & commissioning

The next stage saw the infrastructure installed at ESS in Singapore, including the roll-out of OmniBus desktop workstations across the newsroom and production areas. This was the first opportunity to connect, configure and test the interfaces to the sub-systems that had not been present during the FAT. Sign-off of the Site Acceptance Test (SAT) confirmed that the system was operational in its ‘manual’ state.

Stage 5: Workflow development

From the beginning, the plan was to configure the complex content management workflows only once the underlying OmniBus architecture had been proven fully operational in ‘manual’ mode. Stage 5 overlaid workflows such as language track stacking, screening and segment replace. Once the workflows had been configured, the User Group ran through a pre-defined set of test scripts, the Basic Workflow Acceptance Test (BWAT), to prove the functionality of the system.

Stage 6: Training & workflow refinement

In the final stage of the project, more production, news and promotions staff (the ‘super-users’) were trained on the system; they in turn would later train all the remaining staff. Provision was also made for minor refinements to OmniBus workflows and OPUS layouts based on feedback from this wider user community. The project concluded with the Final Site Acceptance Test (SAT), in which all functionality, including the refinements, was tested.

Project Skyhawk

A separate project managed by ESS, dubbed Skyhawk, introduced a new business management system from Pilat, the Pilat IBMS. Because the IBMS sends and receives information throughout the workflow processes, the Homerun content management system was designed with the necessary interfaces built in.

For example, when programmes are planned and scheduled in the IBMS, the IBMS can send a screening task that is assigned to a Homerun user to screen a clip; once the clip has been screened, an update is sent back to the IBMS. To mitigate the risk of slippage arising from the dependency on the Skyhawk project, all IBMS interfaces could be simulated manually, so that every feature of Homerun could be tested independently.
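The screening round trip, and the idea of testing against a simulated IBMS, can be sketched in a few lines of Python. This is not Pilat’s or OmniBus’ API: `ScreeningTask`, `IbmsLink`, `SimulatedIbms` and `complete_screening` are hypothetical names, and the message format is an assumption. The point is only that Homerun-side logic depends on an interface, so a manual stand-in can replace the real system during testing.

```python
from dataclasses import dataclass
from typing import Optional, Protocol

@dataclass
class ScreeningTask:
    task_id: str                     # hypothetical ID issued by the IBMS
    clip_id: str
    assignee: Optional[str] = None
    screened: bool = False

class IbmsLink(Protocol):
    """Whatever carries status updates back to the business system."""
    def send_update(self, task_id: str, status: str) -> None: ...

class SimulatedIbms:
    """Manual stand-in used while the real IBMS interface is unavailable."""
    def __init__(self) -> None:
        self.received: list = []     # (task_id, status) pairs sent back

    def issue_task(self, task_id: str, clip_id: str) -> ScreeningTask:
        return ScreeningTask(task_id, clip_id)

    def send_update(self, task_id: str, status: str) -> None:
        self.received.append((task_id, status))

def complete_screening(task: ScreeningTask, link: IbmsLink) -> None:
    """Mark the clip as screened and report back over `link`."""
    if task.assignee is None:
        raise ValueError("task must be assigned before screening")
    task.screened = True
    link.send_update(task.task_id, "screened")

ibms = SimulatedIbms()
task = ibms.issue_task("T-001", "CLIP-42")
task.assignee = "operator1"          # the task is assigned to a Homerun user
complete_screening(task, ibms)
print(ibms.received)                 # [('T-001', 'screened')]
```

Swapping `SimulatedIbms` for a real connector would leave `complete_screening` unchanged, which is what allows the Homerun side to be tested independently.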

System pre-fabrication and commissioning at TSL UK headquarters

For further information about TSL please visit: www.tsl.co.uk


System technology<br />

The heart of the new asset management system is a central media storage system based on the Omneon MediaGrid. A Front Porch Digital archive mounts directly to the MediaGrid, enabling content to be archived to and restored from an expandable StorageTek tape library. The standard file format across the system is QuickTime-wrapped 30Mbit/s IMX with eight channels of 16-bit audio, and the MediaGrid’s 50 servers provide 3000 hours of storage for this content. Each MediaGrid server contributes 100MBytes/s of bandwidth, so the aggregate bandwidth of the MediaGrid is 5000MBytes/s (40Gbit/s). The Front Porch Digital system takes advantage of this bandwidth by accessing the MediaGrid at up to 18x real time on each of its five connected streams.
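The bandwidth figures above can be checked with simple arithmetic. The Python sketch below reproduces the aggregate figure and estimates what the archive streams consume; the 48 kHz audio sample rate is an assumption (the case study gives only the channel count and bit depth), and QuickTime wrapper overhead is ignored.

```python
# Figures quoted in the case study.
SERVERS = 50                 # MediaGrid servers
MBYTE_PER_SERVER = 100       # MBytes/s contributed by each server
VIDEO_MBIT = 30              # IMX30 video essence, Mbit/s
AUDIO_CHANNELS = 8
AUDIO_BITS = 16
ARCHIVE_STREAMS = 5          # Front Porch Digital streams
ARCHIVE_SPEED = 18           # up to 18x real time per stream

# Assumption: 48 kHz audio; the sample rate is not stated in the case study.
AUDIO_RATE_HZ = 48_000

aggregate_mbyte = SERVERS * MBYTE_PER_SERVER       # MBytes/s
aggregate_gbit = aggregate_mbyte * 8 / 1000        # Gbit/s

# Approximate essence bit rate of one clip (container overhead ignored).
audio_mbit = AUDIO_CHANNELS * AUDIO_BITS * AUDIO_RATE_HZ / 1_000_000
clip_mbit = VIDEO_MBIT + audio_mbit
gbyte_per_hour = clip_mbit / 8 * 3600 / 1000       # decimal GB per hour

# Worst-case archive load if all streams run at full speed at once.
archive_mbyte = ARCHIVE_STREAMS * ARCHIVE_SPEED * clip_mbit / 8

print(f"aggregate: {aggregate_mbyte} MB/s = {aggregate_gbit:.0f} Gbit/s")
print(f"clip: {clip_mbit:.1f} Mbit/s, about {gbyte_per_hour:.1f} GB per hour")
print(f"archive: {archive_mbyte:.0f} MB/s, "
      f"{archive_mbyte / aggregate_mbyte:.0%} of aggregate")
```

Under these assumptions, five archive streams running flat out at 18x use roughly 8% of the MediaGrid’s aggregate bandwidth, leaving the rest for ingest transfers, editing and playback.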

Ingest and playback

Content is ingested into and played out of the system via Omneon Spectrum servers. Four servers, each with 650 hours of storage, provide 25 simultaneous ingest ports and 24 playback ports for studio, QC, voiceover and fast-turnaround use. A further four 650-hour Omneon servers, arranged as main and mirror pairs, provide playback for each of the 26 transmission channels.

Currently, 24 Apple Mac Pro workstations are connected to the MediaGrid and mount it directly. Apple Final Cut Studio is installed on these workstations, and Final Cut Pro is used to edit in place on the Omneon MediaGrid. Other applications within the Final Cut Studio suite, such as Motion and Color, provide additional post-production capability.

Active transfers<br />

A key design principle of the system is that it must support live, fast-turnaround sports production. Active transfers via Omneon ContentBridges move QuickTime-wrapped content in chunks between the Spectrums and the MediaGrid, and all content ingested into the Spectrums is moved on to the MediaGrid in this way under automatic OmniBus control. This technology allows Apple Final Cut Pro to import content on to the timeline within two minutes of its ingest on the Spectrum server, so ‘highlights’ packages can be cut during a live sports event. The same technology allows a clip to be played back from one Spectrum whilst it is still being recorded on another. For playback delayed by only a matter of seconds, ‘fast turnaround’ ports have been assigned on each Spectrum.
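The case study does not describe how ContentBridge works internally. The Python sketch below only illustrates the general idea of an active, growing-file transfer: the destination copy is built chunk by chunk while the source is still being recorded, so it becomes usable long before recording ends. `GrowingClip` and `transfer_pass` are hypothetical names, and in-memory byte strings stand in for media files.

```python
from dataclasses import dataclass, field

@dataclass
class GrowingClip:
    """Hypothetical stand-in for a clip that is still being recorded."""
    chunks: list = field(default_factory=list)   # chunks written so far
    complete: bool = False                        # set when recording stops

def transfer_pass(source: GrowingClip, dest: bytearray, copied: int) -> int:
    """Copy every chunk that has appeared since the previous pass.

    `copied` is the number of chunks already transferred; the updated count
    is returned so the caller can resume on the next pass.
    """
    while copied < len(source.chunks):
        dest.extend(source.chunks[copied])
        copied += 1
    return copied

clip = GrowingClip()
mirror = bytearray()
copied = 0
for chunk in (b"AAAA", b"BBBB", b"CCCC"):
    clip.chunks.append(chunk)                  # ingest writes another chunk
    copied = transfer_pass(clip, mirror, copied)
    print(f"{len(mirror)} bytes usable at destination")   # 4, then 8, then 12
clip.complete = True
copied = transfer_pass(clip, mirror, copied)   # final pass after recording stops
print("finished:", clip.complete and copied == len(clip.chunks))
```

Because each pass resumes from where the last one stopped, an editor or playout port reading the destination only ever lags the recording by roughly one chunk plus the polling interval.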

Every piece of content is available almost immediately to users, whether they access the high-resolution or the browse version. The limitations of the tape-based system that Homerun replaces are overcome, since multiple users can access the same content without waiting for tape recordings to complete or for tape dubs to be made. Even leaving aside the new system’s many other benefits, this efficiency gain is of major significance.

Content management<br />

Content management is handled by the OmniBus OPUS G3 client/server architecture. Server components, which service requests from the client workstations, are distributed across multiple HP DL380 and DL360 servers; the two critical system databases are deployed on clustered systems.

All content transfers and most control systems use TCP/IP, and virtual LANs (VLANs) have been created to isolate the various content and control networks. Inter-VLAN routing takes place on a pair of core HP ProCurve 5406zl switches, configured as a resilient main and backup pair using VRRP (Virtual Router Redundancy Protocol). All networked devices are attached to HP 3400cl or HP 3500yl edge switches. Where possible, each edge switch is a member of only one VLAN, which keeps its configuration straightforward and means a failed unit can be replaced with minimal reconfiguration. Edge switches are also deployed in resilient pairs, with each networked device connected to both switches of a pair where possible. This dual connection increases system resilience and simplifies maintenance when switches are upgraded or replaced.

All OmniBus workstations run the same G3 software components, and a library of custom-designed user layouts is deployed as appropriate to workstations across the facility. Most user layouts contain an embedded browse video display that lets users see the content they are manipulating or controlling.
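The main/backup core switch pair relies on VRRP’s master election. The Python sketch below models only the election rule (highest priority wins; on a tie, the higher primary IP address wins); real VRRP is driven by periodic advertisements, timers and preemption settings, which are omitted. The switch names, addresses and priorities are hypothetical and are not taken from the ESS configuration.

```python
import ipaddress
from dataclasses import dataclass

@dataclass
class Router:
    name: str
    primary_ip: str
    priority: int          # 1-254 for non-owners; 255 is reserved for the address owner
    alive: bool = True

def elect_master(routers: list) -> Router:
    """VRRP election rule: highest priority wins; a tie goes to the
    router with the numerically higher primary IP address."""
    candidates = [r for r in routers if r.alive]
    if not candidates:
        raise RuntimeError("no router available for the virtual address")
    return max(candidates,
               key=lambda r: (r.priority, ipaddress.ip_address(r.primary_ip)))

core = [Router("core-a", "10.0.0.2", 200),
        Router("core-b", "10.0.0.3", 100)]
print(elect_master(core).name)   # core-a
core[0].alive = False            # main core switch fails
print(elect_master(core).name)   # core-b takes over the virtual gateway
```

Devices keep using the same virtual gateway address throughout, which is why a core switch failure is transparent to the edge switches and the equipment behind them.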

Tagging audio channels<br />

Ingest workstations allow operators either to schedule recordings or to crash record content into the system. At this point, audio channels are tagged to identify the language tracks present on the incoming feed. Each tag inserts both a physical flag within the file, used by the Omneon Spectrum for automatic track routing on playback, and a metadata entry in the OmniBus system, used to display a presence indicator on the browse video user layout.
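A minimal Python sketch of what a channel-to-language tag record makes possible: one structure drives both the presence indicator and track routing. The dictionary layout, the stereo-pair-per-language convention and the function names are assumptions for illustration; the case study does not describe the actual flag or metadata format.

```python
# Hypothetical tag record for one clip: audio channel number -> language.
MAX_CHANNELS = 8   # eight audio channels per clip, as in the house IMX format

def tag_channels(tags: dict) -> dict:
    """Validate a channel-to-language map for an 8-channel clip."""
    for ch in tags:
        if not 1 <= ch <= MAX_CHANNELS:
            raise ValueError(f"channel {ch} outside 1..{MAX_CHANNELS}")
    return dict(tags)

def languages_present(tags: dict) -> set:
    """What a presence indicator on the browse layout would show."""
    return set(tags.values())

def route_language(tags: dict, language: str) -> list:
    """Channels to route to an output for the requested language."""
    return sorted(ch for ch, lang in tags.items() if lang == language)

# Assumption: each language occupies a stereo pair.
clip_tags = tag_channels({1: "English", 2: "English", 3: "Mandarin", 4: "Mandarin"})
print(sorted(languages_present(clip_tags)))    # ['English', 'Mandarin']
print(route_language(clip_tags, "Mandarin"))   # [3, 4]
```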

Browse encoding<br />

Browse encoding runs automatically in parallel with the ingest process. IPV Soyuz dual-channel encoders encode SDI video with up to eight channels of embedded audio to 2Mbit/s WM9 browse proxy video for playback on the OmniBus OPUS workstations. Two dual-channel IPV XCode units transcode published IMX30 content from Headline and Final Cut Pro to WM9. XCode also responds to ‘scavenging’ requests from the OmniBus content management system in the event of a browse encoding failure. The browse proxy media is stored on expandable 14TB EMC CLARiiON networked storage, which will hold browse copies of the 20,000 hours of content on the MediaGrid and StorageTek tape library. Both encoded and transcoded content is available for browsing on the OmniBus workstations within ten seconds of the start of the encode or transcode process.
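How OmniBus and XCode exchange scavenging requests is not described. The Python sketch below shows only the underlying reconciliation idea, assuming a hypothetical `Clip` record: find clips whose high-resolution media exists but whose browse proxy does not, and queue a transcode for each.

```python
from dataclasses import dataclass

@dataclass
class Clip:
    clip_id: str
    has_hires: bool      # IMX30 essence exists on the MediaGrid
    has_proxy: bool      # WM9 browse proxy exists on browse storage

def scavenge(clips: list) -> list:
    """Clip IDs that need a proxy transcode.

    A clip qualifies when high-resolution media exists but no browse
    proxy does, e.g. because the real-time encode failed during ingest.
    """
    return [c.clip_id for c in clips if c.has_hires and not c.has_proxy]

library = [
    Clip("CLIP-1", has_hires=True, has_proxy=True),
    Clip("CLIP-2", has_hires=True, has_proxy=False),   # browse encode failed
    Clip("CLIP-3", has_hires=False, has_proxy=False),  # no essence yet
]
for clip_id in scavenge(library):
    print(f"request transcode to WM9 proxy: {clip_id}")   # CLIP-2 only
```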

Typical user layout with language track presence indicator to the left of browse video

Ingest operators monitor recordings on multi-split displays fed from a pair of Miranda Kaleido multi-image display systems. The Kaleido units are configured to trigger audible and visual alarms for a variety of faults, such as missing English sound (audio track 1), black or picture freeze. If a fault occurs, the ingest operator sets a flag in the OmniBus system to generate a more detailed QC review task, in which the content is marked as either ‘fixable’ or ‘not fixable’ in post production. In both cases the Pilat IBMS is updated, so that the programming department can track the status of incoming content and request re-feeds or replacement tapes as required.
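The QC path above can be sketched as a small Python workflow. The names (`QcTask`, `flag_fault`, `review`), the update message format and the suggested next steps are hypothetical; the only behaviour taken from the case study is that an operator flag creates a review task and that the IBMS is updated whichever outcome is chosen.

```python
from dataclasses import dataclass
from enum import Enum

class QcOutcome(Enum):
    FIXABLE = "fixable"
    NOT_FIXABLE = "not fixable"

@dataclass
class QcTask:
    clip_id: str
    fault: str          # e.g. "audio track 1 missing", "black", "freeze"

def flag_fault(clip_id: str, fault: str) -> QcTask:
    """Ingest operator flags a fault; a detailed QC review task is created."""
    return QcTask(clip_id, fault)

def review(task: QcTask, outcome: QcOutcome, ibms_updates: list) -> str:
    """Complete the review; the business system is updated in both cases."""
    ibms_updates.append(f"{task.clip_id}: {task.fault}: {outcome.value}")
    if outcome is QcOutcome.FIXABLE:
        return "send to post production"
    return "programming may request a re-feed or replacement tape"

updates: list = []
task = flag_fault("CLIP-7", "audio track 1 missing")
print(review(task, QcOutcome.NOT_FIXABLE, updates))
print(updates)   # ['CLIP-7: audio track 1 missing: not fixable']
```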

Logging layouts<br />

Another key requirement of the content management system was the ability to log all material, since a typical sports match or event can have hundreds of shot markers, such as goals and penalties in a football match. Logging layouts were designed for various sports: Football, Rugby, Tennis, Basketball, Cricket, Motor racing, 9 Ball Pool, Golf, Baseball, Triathlon and X-Games, plus a Generic layout (containing schemas for fault logging, segment logging and so on).

These markers are stored as metadata alongside the clip at the click of a button, and logging can take place either live or against pre-recorded clips. Production staff previously logged on paper against VT timecode. Using the OPUS PinPoint search engine, they can now search for, view and retrieve relevant shots by keyword from their workstations, for compilation into news stories, promos and other programmes. Shot markers can also be imported into Final Cut Pro, even from a live incoming feed, so that highlights can be turned round very quickly.
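Shot markers are essentially labelled timecode points attached to a clip. The Python sketch below shows such a record and a simple keyword search returning hits in timeline order. It is not the PinPoint engine; `ShotMarker` and `search` are hypothetical names, and 25 fps non-drop-frame timecode is an assumption.

```python
from dataclasses import dataclass

FPS = 25  # assumption: 25 fps non-drop-frame timecode

def tc_to_frames(tc: str) -> int:
    """Convert 'HH:MM:SS:FF' to a frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * FPS + ff

@dataclass
class ShotMarker:
    clip_id: str
    timecode: str
    label: str          # e.g. "Goal", "Penalty"

def search(markers: list, keyword: str) -> list:
    """Case-insensitive keyword match, in timeline order within each clip."""
    hits = [m for m in markers if keyword.lower() in m.label.lower()]
    return sorted(hits, key=lambda m: (m.clip_id, tc_to_frames(m.timecode)))

log = [
    ShotMarker("MATCH-01", "00:47:12:10", "Penalty"),
    ShotMarker("MATCH-01", "01:28:40:05", "Goal"),
    ShotMarker("MATCH-01", "00:12:03:00", "Goal"),
]
for m in search(log, "goal"):
    print(m.timecode, m.label)
# 00:12:03:00 Goal
# 01:28:40:05 Goal
```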

OmniBus Headline desktop editor

With the OmniBus Headline desktop editor, production staff and journalists can now cut news stories at their workstations. Craft editors have therefore been redeployed from duty news-editing roles to concentrate on more substantial editing projects in Final Cut Pro. If necessary, EDLs from both Headline and OPUS workstations can still be sent to Final Cut Pro for finishing, so that the craft editor starts from an already assembled edit and adds more complex enhancements on top.

Some OmniBus OPUS layouts use OmniBus Desktop Control (ODC), which embeds a layout in an ENPS screen. This allows journalists and production users to drag and drop clips into a rundown, and the same rundown can be imported into the OmniBus News Control application in the production studios for semi-automatic clip playback. ODC users can also create new clips and send line or tape ingest requests to the main Media Ingest area. Where archived material such as historical footage is required for post production, clips can be fully or partially restored from the Front Porch Digital/StorageTek library at appropriate OmniBus OPUS workstations.
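The case study does not say which EDL format Headline and OPUS exchange with Final Cut Pro. The Python sketch below shows only the core of an assembled edit: source in/out points laid end to end on a record timeline. The line layout loosely resembles an edit decision list but is not a standard format; `Event`, `assemble` and the 10:00:00:00 record start are hypothetical, and 25 fps non-drop-frame timecode is assumed.

```python
from dataclasses import dataclass

FPS = 25  # assumption: 25 fps non-drop-frame timecode

def to_frames(tc: str) -> int:
    hh, mm, ss, ff = (int(p) for p in tc.split(":"))
    return ((hh * 60 + mm) * 60 + ss) * FPS + ff

def to_tc(frames: int) -> str:
    ss, ff = divmod(frames, FPS)
    mm, ss = divmod(ss, 60)
    hh, mm = divmod(mm, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"

@dataclass
class Event:
    clip_id: str
    src_in: str
    src_out: str   # exclusive out point, as is usual in edit lists

def assemble(events: list, record_start: str = "10:00:00:00") -> list:
    """Lay events end to end, computing record in/out for each."""
    lines, rec = [], to_frames(record_start)
    for n, ev in enumerate(events, start=1):
        length = to_frames(ev.src_out) - to_frames(ev.src_in)
        if length <= 0:
            raise ValueError(f"event {n}: out point must follow in point")
        lines.append(f"{n:03d} {ev.clip_id} {ev.src_in} {ev.src_out} "
                     f"{to_tc(rec)} {to_tc(rec + length)}")
        rec += length
    return lines

for line in assemble([Event("CLIP-1", "00:00:10:00", "00:00:15:00"),
                      Event("CLIP-2", "00:01:00:00", "00:01:02:12")]):
    print(line)
# 001 CLIP-1 00:00:10:00 00:00:15:00 10:00:00:00 10:00:05:00
# 002 CLIP-2 00:01:00:00 00:01:02:12 10:00:05:00 10:00:07:12
```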

Language tracks<br />

Voicing additional language tracks against both live and pre-recorded content is an important requirement for audiences across ESS’ Asian markets. Once a language track has been recorded on to the MediaGrid via the Final Cut Pro voiceover tool, it can be added to the clip using the OmniBus OPUS track stacking tool. The new audio tracks are tagged so that their presence is indicated to users accessing the browse clip. Final Cut Pro is also used to tidy up any ‘fluffs’ made during a live voiceover recording.
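Track stacking amounts to allocating free audio channels in the clip to a new language and tagging them. The Python sketch below allocates the lowest free channels of an eight-channel clip; the allocation policy, the stereo-pair assumption and `stack_language` itself are hypothetical, since the case study does not describe how the OPUS tool chooses channels.

```python
MAX_CHANNELS = 8  # eight audio channels per clip, as in the house IMX format

def stack_language(tags: dict, language: str, channels_needed: int = 2) -> list:
    """Allocate the lowest free audio channels to a new language track.

    `tags` maps channel number (1-8) to language and is updated in place,
    so the new track's presence can be indicated to browse users.
    Two channels (a stereo pair) per language is an assumption.
    """
    free = [ch for ch in range(1, MAX_CHANNELS + 1) if ch not in tags]
    if len(free) < channels_needed:
        raise ValueError(f"not enough free channels for {language}")
    allocated = free[:channels_needed]
    for ch in allocated:
        tags[ch] = language
    return allocated

tags = {1: "English", 2: "English"}
print(stack_language(tags, "Mandarin"))   # [3, 4]
print(stack_language(tags, "Hindi"))      # [5, 6]
```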

Clip screening layout with ‘single-click’ shot marker buttons<br />

Playback of material<br />

There are several options for playing back material that has been published and transferred to the MediaGrid; all involve an active transfer to an Omneon Spectrum, so the clip can be played out whilst it is still transferring.

• QC ports are used for quality control monitoring in the Media Ingest area. Clips requiring QC are automatically identified to the user in a dedicated OmniBus OPUS user layout.
• Voiceover ports are dedicated to each of the three voiceover booths. Playback is under manual control from a dedicated OmniBus OPUS user layout, and an OmniBus workflow ‘task’ automatically tells the user which clip to load.
• Studio ports are used for playback into each of the three production studios, either under manual control via a dedicated OmniBus OPUS user layout or from the OmniBus News Control application, which imports a rundown from the ENPS newsroom computer system.
• Transmission ports are used to play content to air. OmniBus cache managers automatically cache content on to the transmission servers based on a schedule imported from the Pilat IBMS. Transmission schedules are also sent from the Pilat IBMS to Harris D-Series automation, which in turn controls the playback ports directly.
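A cache manager’s core job is to work out which scheduled clips are not yet on the transmission server. The Python sketch below orders the schedule by air time, looks a fixed number of items ahead and skips clips already cached or repeated. `ScheduleItem`, `cache_plan` and the look-ahead policy are hypothetical, not OmniBus behaviour, and same-day "HH:MM" times are assumed (no midnight rollover).

```python
from dataclasses import dataclass

@dataclass
class ScheduleItem:
    air_time: str      # "HH:MM", same day (assumption: no midnight rollover)
    clip_id: str

def cache_plan(schedule: list, on_server: set, lookahead: int) -> list:
    """Clips to copy to the transmission server, soonest air time first.

    Only the next `lookahead` schedule items are considered; clips already
    cached, or repeated later in the window, are copied only once.
    """
    plan: list = []
    for item in sorted(schedule, key=lambda s: s.air_time)[:lookahead]:
        if item.clip_id not in on_server and item.clip_id not in plan:
            plan.append(item.clip_id)
    return plan

schedule = [
    ScheduleItem("18:00", "PROMO-3"),
    ScheduleItem("17:30", "MATCH-01"),
    ScheduleItem("18:05", "MAG-12"),
    ScheduleItem("19:00", "PROMO-3"),
]
print(cache_plan(schedule, on_server={"MAG-12"}, lookahead=3))
# ['MATCH-01', 'PROMO-3']
```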

Content management rules<br />

Content management rules are defined in the OmniBus system to manage space and minimise the number of transfers from server to MediaGrid and from MediaGrid to archive. These rules perform copy and delete functions automatically, for example:

• Certain clip types are copied to the archive after a predefined period and then deleted from the MediaGrid, whereas other clip types are deleted directly without archiving.
• All clips are automatically copied from the ingest servers to the MediaGrid so that they are available for editing in Final Cut Pro.
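The first rule above can be expressed as a table keyed by clip type. The Python sketch below is a hypothetical illustration: the clip types, retention periods and `housekeeping` function are invented, since the case study does not list the actual rules.

```python
from dataclasses import dataclass

@dataclass
class Rule:
    retain_days: int       # how long the clip stays on the MediaGrid
    archive: bool          # copy to tape before deleting?

# Hypothetical rule table; the real clip types and periods are not given.
RULES = {
    "match": Rule(retain_days=14, archive=True),
    "promo": Rule(retain_days=30, archive=False),
}

def housekeeping(clip_type: str, age_days: int) -> list:
    """Actions the rules engine would take for one clip on the MediaGrid."""
    rule = RULES.get(clip_type)
    if rule is None or age_days < rule.retain_days:
        return []                        # keep the clip for now
    actions = ["copy to archive"] if rule.archive else []
    return actions + ["delete from MediaGrid"]

print(housekeeping("match", 20))   # ['copy to archive', 'delete from MediaGrid']
print(housekeeping("promo", 31))   # ['delete from MediaGrid']
print(housekeeping("match", 3))    # []
```

Keeping the policy in data rather than code means a new clip type only needs a new table entry, which mirrors how the rules are described as configuration in the OmniBus system.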


Conclusion

Homerun has been an extremely challenging project. Its requirements are unlike those of any other broadcaster in the world: many systems support live sports programming, some specialise in multiple live channels, and a number of broadcasters are multilingual.

However, ESS is unique in combining all of these, and it is fully exploiting the new system to maximise efficiency, with the aim of taking the company to the next level. As a highly successful service, it continues to grow.

Designing Homerun required TSL, as a systems integrator, to work closely with ESS and the key suppliers on both technical and operational issues. Everyone invested time in breaking new ground in their understanding of both the customer’s requirements and the technology needed to support them.

It is often only by taking an independent, collaborative and all-round view that the challenges of complex modern broadcast integration can be met.


TSL is the UK’s leading independent broadcast systems integrator, with an international client base. The company has a strong professional products division that includes Audio Monitoring, Mains Distribution, UMD and Tally Systems. TSL also develops custom products in-house to meet customer requirements that cannot be satisfied by standard products.


TSL Sales: +44 (0)1628 676 221 E-mail: sales@tsl.co.uk Web: www.tsl.co.uk

TSL, Vanwall Business Park, Vanwall Road, Maidenhead, Berkshire SL6 4UB, United Kingdom

Tel: +44 (0)1628 676 200 Fax: +44 (0)1628 676 299 E-mail: sales@tsl.co.uk

© 2008 TSL Systems. All rights reserved.