Regression – Recommendations Improved<br />

This works correctly, but is very slow. A better implementation keeps additional<br />

bookkeeping so you can avoid having to loop over the whole dataset to get the count<br />

(c_newset). In particular, we keep track of which shopping baskets have which<br />

frequent itemsets. This accelerates the loop but makes the code harder to follow.<br />

Therefore, we will not show it here. As usual, you can find both implementations on<br />

the book's companion website. The code there is also wrapped into a function that<br />

can be applied to other datasets.<br />

The Apriori algorithm returns frequent itemsets, that is, small baskets that occur in<br />

the dataset at least as often as a given threshold (minsupport in the code).<br />
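To make the role of the threshold concrete, here is a minimal sketch that counts itemsets by brute force on toy data. It is not the Apriori algorithm and not the book's implementation: the `baskets` data, the `max_size` cap, and the function name are hypothetical, and `minsupport` is assumed to be an absolute count of baskets.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy data: each basket is a set of item ids.
baskets = [
    {0, 1, 2},
    {0, 1},
    {0, 2},
    {1, 2},
    {0, 1, 3},
]

# Assumption: minsupport is an absolute count, so an itemset is
# "frequent" if it appears in at least this many baskets.
minsupport = 3


def frequent_itemsets_bruteforce(baskets, minsupport, max_size=2):
    """Count every itemset up to max_size and keep the frequent ones.

    This enumerates all subsets of each basket, which only scales to
    tiny examples; Apriori avoids this by growing itemsets step by step.
    """
    counts = Counter()
    for basket in baskets:
        for size in range(1, max_size + 1):
            for itemset in combinations(sorted(basket), size):
                counts[frozenset(itemset)] += 1
    return {s: c for s, c in counts.items() if c >= minsupport}


freq = frequent_itemsets_bruteforce(baskets, minsupport)
for itemset, count in sorted(freq.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), count)
```

On this toy data, the single items 0, 1, and 2 and the pair {0, 1} meet the threshold; every other itemset is discarded.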

Association rule mining<br />

Frequent itemsets are not very useful by themselves. The next step is to build<br />

association rules. Because of this final goal, the whole field of basket analysis is<br />

sometimes called association rule mining.<br />

An association rule is a statement of the "if X then Y" form; for example, if a<br />

customer bought War and Peace, they will buy Anna Karenina. Note that the rule is not<br />

deterministic (not all customers who buy X will buy Y), but it is rather cumbersome<br />

to always spell it out. What we mean is that a customer who bought X is more likely to<br />

buy Y than the baseline; thus, we say if X then Y, but we mean it in a probabilistic sense.<br />

Interestingly, the antecedent and conclusion may contain multiple objects: customers<br />

who bought X, Y, and Z also bought A, B, and C. Multiple antecedents may allow<br />

you to make more specific predictions than are possible from a single item.<br />

You can get from a frequent set to a rule by just trying all possible combinations of X<br />

implies Y. It is easy to generate many of these rules. However, you only want to have<br />

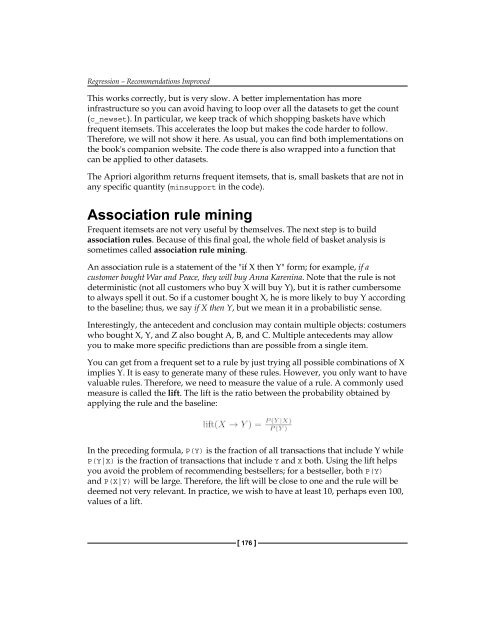

valuable rules. Therefore, we need to measure the value of a rule. A commonly used<br />

measure is called the lift. The lift is the ratio between the probability obtained by<br />

applying the rule and the baseline:<br />

In the preceding formula, P(Y) is the fraction of all transactions that include Y while<br />

P(Y|X) is the fraction of the transactions including X that also include Y. Using the lift<br />

helps you avoid the problem of recommending bestsellers; for a bestseller, both P(Y)<br />

and P(Y|X) will be large. Therefore, the lift will be close to one and the rule will be<br />

deemed not very relevant. In practice, we wish to have lift values of at least 10, perhaps<br />

even 100.
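The two steps described above, splitting a frequent itemset into every possible "if X then Y" rule and scoring each rule by its lift, P(Y|X) / P(Y), can be sketched as follows. This is an illustrative sketch rather than the book's code: the function names and the toy `baskets` (with made-up item names) are hypothetical, and probabilities are estimated as plain fractions of baskets.

```python
from itertools import combinations

# Hypothetical toy data: one bestseller that many customers buy,
# plus two novels that tend to be bought together.
baskets = [
    {"war_and_peace", "anna_karenina"},
    {"war_and_peace", "anna_karenina"},
    {"war_and_peace", "bestseller"},
    {"bestseller"},
    {"bestseller"},
    {"bestseller"},
    {"bestseller"},
    {"other"},
]


def support_fraction(itemset, baskets):
    """Fraction of baskets that contain every item in itemset."""
    itemset = frozenset(itemset)
    return sum(itemset <= basket for basket in baskets) / len(baskets)


def rules_with_lift(itemset, baskets):
    """Yield (antecedent, consequent, lift) for every split of itemset."""
    itemset = frozenset(itemset)
    p_xy = support_fraction(itemset, baskets)
    if p_xy == 0:
        return  # itemset never occurs, so no rule can be scored
    for k in range(1, len(itemset)):
        for antecedent in combinations(sorted(itemset), k):
            x = frozenset(antecedent)
            y = itemset - x
            p_x = support_fraction(x, baskets)
            p_y = support_fraction(y, baskets)
            # P(Y|X) = P(X and Y) / P(X); lift = P(Y|X) / P(Y)
            yield x, y, (p_xy / p_x) / p_y


for x, y, lift in rules_with_lift({"war_and_peace", "anna_karenina"}, baskets):
    print(sorted(x), "->", sorted(y), round(lift, 2))

for x, y, lift in rules_with_lift({"war_and_peace", "bestseller"}, baskets):
    print(sorted(x), "->", sorted(y), round(lift, 2))
```

In this toy data the rule linking the two novels has a lift above one, while the rule pointing at the bestseller has a lift below one, because the bestseller is bought often regardless of what else is in the basket.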
