ment; convection enables relatively rapid cycling between hot and cold levels. Thermal vacuum testing does the same thing, but in a vacuum chamber; cycles are slower, but the method provides the most realistic simulation of flight conditions. In thermal balance testing, also conducted in vacuum, dedicated test phases that simulate flight conditions are used to obtain steady-state temperature data, which are then compared with model predictions. This verifies the thermal control subsystem and provides data for correlating the thermal analytic models. Burn-in tests are typically part of thermal cycle tests: additional test time is allotted, and the item is operated while the temperature is cycled or held at an elevated level.

For electronic units, the test temperature range and the number of test cycles have the greatest impact on test effectiveness. Other important parameters include dwell time at the temperature extremes, whether the unit is operating, and the rate of change between hot and cold plateaus. For mechanical assemblies, these same parameters are important, along with simulation of thermal spatial gradients and transient thermal conditions.

Thermal test specifications are based primarily on test objectives. At the unit level, the emphasis is on part screening, which is best achieved through thermal cycle and burn-in testing. Test temperature ranges are more severe than those encountered in flight, which allows problems to be isolated quickly. Also, individual components are easier to fix than finished assemblies. At the payload, subsystem, and space vehicle levels, the emphasis shifts toward performance verification.
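The parameters listed above (temperature range, number of cycles, dwell time at the extremes, and rate of change between plateaus) together fix the shape and duration of a simple thermal cycle test. The Python below is a minimal sketch, assuming a piecewise-linear profile that starts and ends at ambient; the `ThermalCycleSpec` class, the `cycle_profile` function, and all numeric values are hypothetical illustrations, not taken from any actual test specification. Whether the unit is operating, and the spatial gradients relevant to mechanical assemblies, are not modeled.

```python
from dataclasses import dataclass


@dataclass
class ThermalCycleSpec:
    """Hypothetical thermal cycle test parameters (illustrative only)."""
    hot_c: float           # hot plateau temperature, deg C
    cold_c: float          # cold plateau temperature, deg C
    ambient_c: float       # starting and ending temperature, deg C
    ramp_c_per_min: float  # rate of change between plateaus, deg C per minute
    dwell_min: float       # dwell time at each extreme, minutes
    cycles: int            # number of hot/cold cycles


def cycle_profile(spec: ThermalCycleSpec) -> list[tuple[float, float]]:
    """Return (time_min, temp_c) breakpoints of a piecewise-linear profile.

    Each cycle ramps to the hot plateau, dwells, ramps to the cold plateau,
    and dwells; the profile starts and ends at ambient.
    """
    if spec.ramp_c_per_min <= 0:
        raise ValueError("ramp rate must be positive")
    t, temp = 0.0, spec.ambient_c
    points = [(t, temp)]

    def ramp_to(target: float) -> None:
        # Ramp duration follows from the temperature change and ramp rate.
        nonlocal t, temp
        t += abs(target - temp) / spec.ramp_c_per_min
        temp = target
        points.append((t, temp))

    def dwell() -> None:
        # Hold the current plateau for the specified dwell time.
        nonlocal t
        t += spec.dwell_min
        points.append((t, temp))

    for _ in range(spec.cycles):
        ramp_to(spec.hot_c)
        dwell()
        ramp_to(spec.cold_c)
        dwell()
    ramp_to(spec.ambient_c)
    return points


# Hypothetical example values, not from any real test plan.
example_spec = ThermalCycleSpec(hot_c=60, cold_c=-30, ambient_c=20,
                                ramp_c_per_min=2, dwell_min=60, cycles=2)
profile = cycle_profile(example_spec)
```

With these hypothetical values (60 °C hot, -30 °C cold, 20 °C ambient, 2 °C per minute ramps, 60-minute dwells, 2 cycles), the profile has 10 breakpoints and lasts 420 minutes. Adding cycles or slowing the ramp lengthens the test, which is one reason cycle count and ramp rate are traded against schedule.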
At higher levels of assembly, testing in flight-like conditions can demonstrate end-to-end performance, verify subsystems and their interfaces, and show that flightworthiness requirements are met. On the other hand, at these higher levels of assembly it is difficult (if not impossible) to achieve wide test temperature ranges, so part screening is less effective.

At the unit, subsystem, and vehicle levels, Aerospace thermal engineers work with the contractor to develop test plans that prove the design, workmanship, and flightworthiness of the test article. Temperature ranges are selected to adequately screen parts or accurately simulate mission conditions, and the proper number of hot and cold test plateaus is specified to adequately cycle the hardware under test. During the test, Aerospace provides expertise to protect the space hardware in the test environment, resolve test issues and concerns, and investigate test article discrepancies. The reason, of course, is that identifying and correcting problems in thermal testing significantly increases confidence in mission success.

Conclusion

Since the first satellite launch in 1957, more than 600 space vehicles have been launched through severe and sometimes unknown environments. Even with extensive experience and a wealth of historical data to consult, mission planners face a difficult task in ensuring that critical hardware reaches space safely. Every new component, new process, and new technology introduces uncertainties that can only be resolved through rigorous and methodical testing. As an independent observer of the testing process, Aerospace helps instill confidence that environmental requirements have been adequately defined and that the corresponding tests have been properly planned and executed to generate useful and reliable results.

Figure: Shock response spectra versus frequency (hertz, 100 to 10,000), showing the maximum predicted shock levels for fairing separation, stage separation, and spacecraft separation. Typical test level used to simulate the shock environment: a smooth envelope plus 6 dB margin. Qualification margins at the unit level are typically 6 decibels.

Left: A spacecraft is placed in the acoustic chamber, ready for testing. Air horns at the corners of the chamber generate a prescribed sound pressure into the confined space and onto the spacecraft. Microphones located around the spacecraft are used to monitor and control the pressure levels. Middle: The sudden separation of the payload fairing is used to expose spacecraft components to the shock environment expected in flight. Right: A space instrument is placed on an electrodynamically controlled slip table for vibration testing. The control accelerometers are mounted at the base of the test fixture at a location that represents the interface to the launch vehicle adapter. Accelerometers mounted on the test specimen measure the dynamic responses.

Crosslink Fall 2005 • 15
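The shock response spectra figure on this page describes the typical shock test level as a smooth envelope of the maximum predicted levels (fairing, stage, and spacecraft separation) plus a 6 dB margin. Because shock response spectrum levels are amplitude quantities (peak acceleration), a decibel margin converts with the 20·log10 convention, so 6 dB multiplies the level by about 2. The sketch below assumes all predicted curves are sampled at the same frequency points and takes a pointwise maximum; the function names and sample values are hypothetical, and the smoothing step used in practice is not modeled.

```python
def db_to_amplitude_ratio(db: float) -> float:
    """Convert a decibel margin to an amplitude ratio (20*log10 convention)."""
    return 10 ** (db / 20)


def shock_test_level(predictions: dict[str, list[float]],
                     margin_db: float = 6.0) -> list[float]:
    """Envelope predicted SRS curves and apply a dB margin.

    All curves must be sampled at the same frequency points. The envelope is
    a pointwise maximum; the smoothing applied in practice is not modeled.
    """
    if not predictions:
        raise ValueError("need at least one predicted curve")
    curves = list(predictions.values())
    n = len(curves[0])
    if any(len(c) != n for c in curves):
        raise ValueError("all curves must share the same frequency points")
    factor = db_to_amplitude_ratio(margin_db)
    # Maximum predicted level at each frequency, raised by the margin.
    return [max(c[i] for c in curves) * factor for i in range(n)]


# Hypothetical predicted maxima (arbitrary acceleration units) at three
# shared frequency points; not data from the figure.
predicted = {
    "fairing separation": [10.0, 300.0, 1000.0],
    "stage separation": [20.0, 200.0, 2000.0],
    "spacecraft separation": [5.0, 500.0, 1500.0],
}
level = shock_test_level(predicted)
```

For these hypothetical curves the envelope is [20, 500, 2000], and the 6 dB test level rounds to [39.9, 997.6, 3990.5], roughly double the worst predicted value at each frequency.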

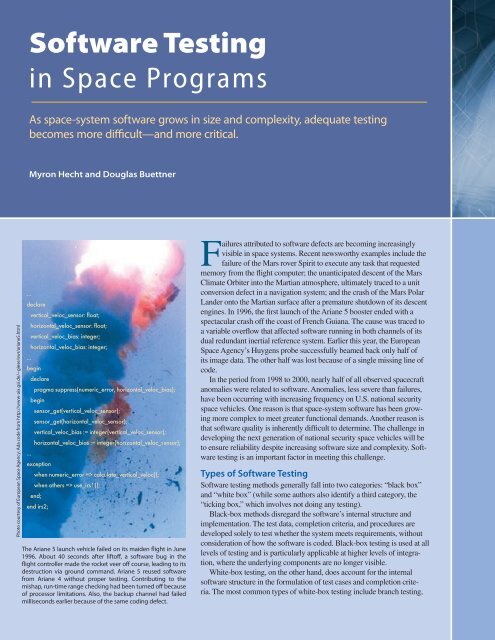

Software Testing in Space Programs

As space-system software grows in size and complexity, adequate testing becomes more difficult, and more critical.

Myron Hecht and Douglas Buettner

Photo courtesy of European Space Agency; Ada code from http://www-aix.gsi.de/~giese/swr/ariane5.html

```ada
...
declare
   vertical_veloc_sensor: float;
   horizontal_veloc_sensor: float;
   vertical_veloc_bias: integer;
   horizontal_veloc_bias: integer;
   ...
begin
   declare
      pragma suppress(numeric_error, horizontal_veloc_bias);
   begin
      sensor_get(vertical_veloc_sensor);
      sensor_get(horizontal_veloc_sensor);
      vertical_veloc_bias := integer(vertical_veloc_sensor);
      horizontal_veloc_bias := integer(horizontal_veloc_sensor);
      ...
   exception
      when numeric_error => calculate_vertical_veloc();
      when others => use_irs1();
   end;
end irs2;
```

The Ariane 5 launch vehicle failed on its maiden flight in June 1996. About 40 seconds after liftoff, a software bug in the flight controller made the rocket veer off course, leading to its destruction via ground command. Ariane 5 reused software from Ariane 4 without proper testing. Contributing to the mishap, run-time range checking had been turned off because of processor limitations. In addition, the backup channel had failed milliseconds earlier because of the same coding defect.

Failures attributed to software defects are becoming increasingly visible in space systems. Recent newsworthy examples include the failure of the Mars rover Spirit to execute any task that requested memory from the flight computer; the unanticipated descent of the Mars Climate Orbiter into the Martian atmosphere, ultimately traced to a unit conversion defect in a navigation system; and the crash of the Mars Polar Lander onto the Martian surface after a premature shutdown of its descent engines. In 1996, the first launch of the Ariane 5 booster ended with a spectacular crash off the coast of French Guiana.
The cause was traced to a variable overflow that affected software running in both channels of its dual-redundant inertial reference system. Earlier this year, the European Space Agency’s Huygens probe beamed back only half of its image data; the other half was lost because of a single missing line of code.

In the period from 1998 to 2000, nearly half of all observed spacecraft anomalies were related to software. Anomalies, which are less severe than failures, have been occurring with increasing frequency on U.S. national security space vehicles. One reason is that space-system software has been growing more complex to meet greater functional demands. Another is that software quality is inherently difficult to determine. The challenge in developing the next generation of national security space vehicles will be to ensure reliability despite increasing software size and complexity. Software testing is an important factor in meeting this challenge.

Types of Software Testing

Software testing methods generally fall into two categories: “black box” and “white box.” (Some authors also identify a third category, the “ticking box,” which involves not doing any testing.)

Black-box methods disregard the software’s internal structure and implementation. The test data, completion criteria, and procedures are developed solely to test whether the system meets requirements, without consideration of how the software is coded. Black-box testing is used at all levels of testing and is particularly applicable at higher levels of integration, where the underlying components are no longer visible.

White-box testing, on the other hand, does account for the internal software structure when formulating test cases and completion criteria. The most common types of white-box testing include branch testing,