Constraint-driven generators offer some key advantages over the more common parameterized generators. First and foremost, they give the verification engineer full control over the generation process. By using simple constraints, engineers can generate tests that are completely random, completely deterministic, or anywhere in between. These constraints can be either spec constraints, which define the legal parameters that the generator must always hold to, or test constraints, which target the generator to a specific test from the functional test plan. Instead of randomizing only what the user specifically instructs the system to randomize, constraint-driven generators take the infinity-minus approach: they randomize everything except what the engineer specifically constrains the generator not to randomize. This allows the generator to approach any given test scenario from multiple paths and find bugs that were not identified in the functional test plan.

This capability to capture the “same” test scenario from different paths is critical. Most post-silicon functional bugs are not due to an inadequacy in the functional test plan, but to unanticipated usage by the end user or ambiguities in the interface specifications. It’s impossible to think of all the possible bugs when writing the functional test plan, so it is critical that all tests be run from multiple, different paths.

Beyond offering support for the common pre-run generation methodology, constraint-driven generators can also support the more advanced "on-the-fly" generation methodology. Using the on-the-fly approach, the generator interacts with the device under test (DUT) and reacts to the state of the device in real time.
In this manner, even hard-to-reach corner cases can be captured because the generation constraints are state dependent.

On-the-fly generation also eliminates the need for big memories to hold the entire test. Instead of generating the test all at one time, the inputs are generated as required. This reduces the memory and swapping required by the simulator, significantly increasing simulation performance.

Constraint-driven generators are also generic, or design independent. They receive a description of the data elements to be generated (instructions, packets, or polygons) and generate them accordingly. If the designer introduces changes to the architecture, the generator easily adapts, making the tests easy to reuse.

One of the main challenges facing a verification engineer is to test corner cases in the functionality of the device. These corner cases are usually reached after a long sequence of inputs, and they’re often the result of several independent streams of input reaching a special combination at the same time. The constraint-driven generator’s ability to generate massive numbers of tests on-the-fly allows the generator to monitor the state of the device constantly and generate the required inputs at exactly the right time to reach the desired corner case. The result is a high throughput of effective tests, which achieve high functional coverage. Thus, when used in combination with functional coverage analysis, constraint-driven generation provides easy confirmation that a corner case was reached, and under what conditions.

A powerful feature often missing in homegrown pre-run generators is the interface to the simulator. In the generic constraint-driven generators, this simulator interface does not have to be specified for every project, but is included as part of the generic generator.
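To make the distinction between spec constraints and test constraints in this section concrete, the following is a minimal Python sketch, not Specman Elite syntax. The packet fields, the legality rule for control packets, and the names `FIELDS`, `SPEC_CONSTRAINTS`, `legal_packets`, and `generate` are all assumptions made for illustration. It enumerates a small value space and filters it, which is far simpler than a real constraint solver, but it shows the infinity-minus idea: every field is random unless a constraint narrows it.

```python
import itertools
import random

# Hypothetical packet description. Every field ranges over its full domain
# unless a constraint narrows it (the "infinity-minus" idea).
FIELDS = {
    "kind": ["data", "control"],
    "length": list(range(1, 17)),   # 1..16
    "address": list(range(16)),     # 0..15
}

# Spec constraints: legality rules every generated packet must satisfy.
# Assumed rule for illustration: control packets have length at most 8.
SPEC_CONSTRAINTS = [
    lambda p: p["kind"] != "control" or p["length"] <= 8,
]


def legal_packets(test_constraints=()):
    """Enumerate all packets that satisfy the spec and test constraints."""
    constraints = list(SPEC_CONSTRAINTS) + list(test_constraints)
    names = list(FIELDS)
    candidates = (dict(zip(names, values))
                  for values in itertools.product(*FIELDS.values()))
    return [p for p in candidates if all(check(p) for check in constraints)]


def generate(rng, test_constraints=()):
    """Pick one legal packet uniformly at random."""
    candidates = legal_packets(test_constraints)
    if not candidates:
        raise ValueError("constraints cannot be satisfied together")
    return rng.choice(candidates)


rng = random.Random(2024)  # fixed seed so a run can be reproduced

# No test constraints: completely random, yet always legal.
any_packet = generate(rng)

# Partly constrained: targets one scenario from a test plan while the
# unconstrained field ('address') still varies between runs.
control_max = generate(rng, [
    lambda p: p["kind"] == "control",
    lambda p: p["length"] == 8,
])

# Fully constrained: a deterministic, directed test.
directed = generate(rng, [
    lambda p: p == {"kind": "data", "length": 16, "address": 0},
])
```

Because the spec constraints are always applied, a test writer only adds the few test constraints that define the scenario. Everything left unconstrained keeps varying, which is what lets the same scenario be reached from different paths.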
Manually created generators require creation of a custom interface that is specific not only to the simulator, but also to a particular version of the simulator, which is a maintenance nightmare.

All of these capabilities supplied by on-the-fly constraint-driven generators result in a major reduction in the verification cycle and significant increases in the verification quality.

ON-THE-FLY TEMPORAL CHECKS

Today’s highly integrated designs contain many components that are often developed by different engineers, resulting in different interpretations of the protocols between components. This can cause the component interfaces to become the weakest link in the design. On-the-fly temporal checks verify the temporal behavior and protocols associated with the design or system. They constantly monitor the design, sensing triggers (or events) that signal the beginning of a sequence. They then follow the sequence, verifying that it conforms to the temporal rules specified by the verification engineer.

There are several types of rules. In some cases, the protocol can be specified explicitly, defining exactly on which cycle each part of the protocol should be performed. In other cases, the exact cycle of a response can’t be defined; it is known only that it must happen eventually, or that it will occur within a certain interval of time. All of these checks (explicit, eventual, and interval) must be easy to specify and must run on-the-fly to allow for protocol verification and efficient debugging of interface problems.
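The three rule types can be illustrated with a small cycle-by-cycle checker. This is a hedged Python sketch rather than the temporal constructs of any particular tool. The class `ResponseChecker`, the helper `run`, the event names `req` and `ack`, and the sample `TRACE` are hypothetical. The checker keeps only the triggers still waiting for a response and forgets each one as soon as it is resolved.

```python
class ResponseChecker:
    """Checks that each trigger is answered min_delay..max_delay cycles later.

    min_delay == max_delay -> explicit rule (exact cycle)
    min_delay <  max_delay -> interval rule
    max_delay is None      -> eventual rule (any later cycle)
    """

    def __init__(self, trigger, response, min_delay=1, max_delay=None):
        self.trigger = trigger
        self.response = response
        self.min_delay = min_delay
        self.max_delay = max_delay
        self.pending = []  # start cycles of sequences still awaiting a response
        self.cycle = 0

    def step(self, events):
        """Consume the events of one cycle; return failure messages, if any."""
        failures = []
        still_pending = []
        responded = self.response in events
        for start in self.pending:
            age = self.cycle - start
            if responded and age >= self.min_delay:
                continue  # rule satisfied: drop the bookkeeping immediately
            if self.max_delay is not None and age >= self.max_delay:
                failures.append(
                    f"cycle {self.cycle}: {self.trigger!r} from cycle {start} "
                    f"got no {self.response!r} within {self.max_delay} cycles")
                continue
            still_pending.append(start)
        if self.trigger in events:
            still_pending.append(self.cycle)  # a new sequence begins
        self.pending = still_pending
        self.cycle += 1
        return failures

    def finish(self):
        """At end of test, any trigger still pending was never answered."""
        return [f"{self.trigger!r} from cycle {start} was never answered"
                for start in self.pending]


def run(checker, trace):
    """Feed a whole trace through a checker and collect every failure."""
    failures = []
    for events in trace:
        failures.extend(checker.step(events))
    return failures + checker.finish()


# Hypothetical trace: one set of events per cycle (cycles 0..7).
TRACE = [{"req"}, set(), {"ack"}, {"req"}, set(), set(), set(), {"ack"}]

explicit = run(ResponseChecker("req", "ack", 2, 2), TRACE)     # ack exactly 2 cycles later
interval = run(ResponseChecker("req", "ack", 1, 3), TRACE)     # ack within 1 to 3 cycles
eventual = run(ResponseChecker("req", "ack", 1, None), TRACE)  # ack at some later cycle
```

On this trace the second `req` is answered four cycles later, so the explicit and interval rules each report one failure while the eventual rule passes. In a real environment, `step` would be called from the simulation loop, so a failure could be reported in the cycle where the deadline expires.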

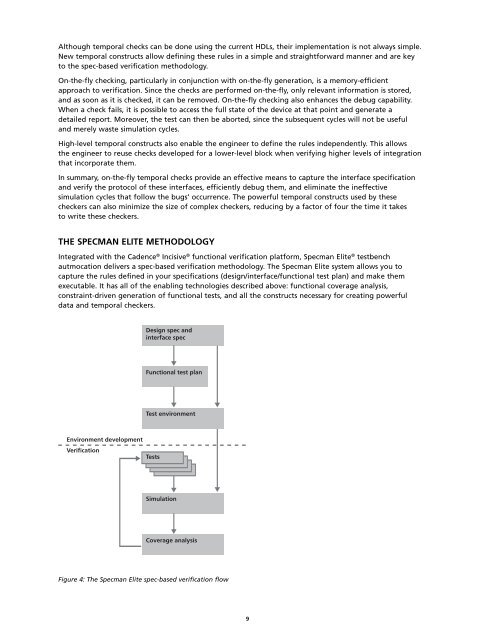

Although temporal checks can be done using the current HDLs, their implementation is not always simple. New temporal constructs allow defining these rules in a simple and straightforward manner and are key to the spec-based verification methodology.

On-the-fly checking, particularly in conjunction with on-the-fly generation, is a memory-efficient approach to verification. Since the checks are performed on-the-fly, only relevant information is stored, and as soon as it is checked, it can be removed. On-the-fly checking also enhances the debug capability. When a check fails, it is possible to access the full state of the device at that point and generate a detailed report. Moreover, the test can then be aborted, since the subsequent cycles will not be useful and merely waste simulation cycles.

High-level temporal constructs also enable the engineer to define the rules independently. This allows the engineer to reuse checks developed for a lower-level block when verifying higher levels of integration that incorporate them.

In summary, on-the-fly temporal checks provide an effective means to capture the interface specification and verify the protocol of these interfaces, efficiently debug them, and eliminate the ineffective simulation cycles that follow the bugs’ occurrence. The powerful temporal constructs used by these checkers can also minimize the size of complex checkers, reducing by a factor of four the time it takes to write these checkers.

THE SPECMAN ELITE METHODOLOGY

Integrated with the Cadence® Incisive® functional verification platform, Specman Elite® testbench automation delivers a spec-based verification methodology.
The Specman Elite system allows you to capture the rules defined in your specifications (design/interface/functional test plan) and make them executable. It has all of the enabling technologies described above: functional coverage analysis, constraint-driven generation of functional tests, and all the constructs necessary for creating powerful data and temporal checkers.

Figure 4: The Specman Elite spec-based verification flow (diagram labels: design spec and interface spec, functional test plan, test environment, environment development, verification, tests, simulation, coverage analysis)