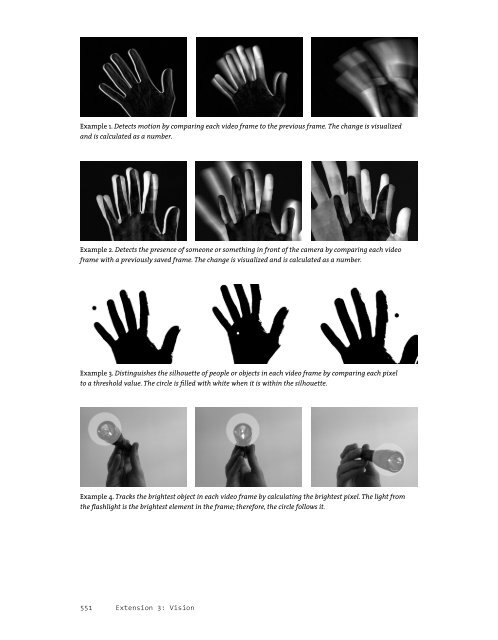

Example 1. Detects motion by comparing each video frame to the previous frame. The change is visualized and is calculated as a number.

Example 2. Detects the presence of someone or something in front of the camera by comparing each video frame with a previously saved frame. The change is visualized and is calculated as a number.

Example 3. Distinguishes the silhouette of people or objects in each video frame by comparing each pixel to a threshold value. The circle is filled with white when it is within the silhouette.

Example 4. Tracks the brightest object in each video frame by calculating the brightest pixel. The light from the flashlight is the brightest element in the frame; therefore, the circle follows it.

551 Extension 3: Vision
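The comparison in Example 1 can be sketched in a few lines. The block below is a minimal illustration in plain Java, not the book's own listing: it assumes each frame is an array of packed 0xRRGGBB integers, and the names `FrameDifference` and `movementSum` are hypothetical. It adds up the absolute per-channel difference between the current and previous frame, which gives the single number mentioned in the caption.

```java
class FrameDifference {
    // Sums the absolute red, green, and blue differences between two frames.
    // Assumption: both frames are packed 0xRRGGBB pixels with equal length.
    static long movementSum(int[] current, int[] previous) {
        if (current.length != previous.length) {
            throw new IllegalArgumentException("frames must have the same size");
        }
        long sum = 0;
        for (int i = 0; i < current.length; i++) {
            int c = current[i];
            int p = previous[i];
            int diffR = Math.abs(((c >> 16) & 0xFF) - ((p >> 16) & 0xFF));
            int diffG = Math.abs(((c >> 8) & 0xFF) - ((p >> 8) & 0xFF));
            int diffB = Math.abs((c & 0xFF) - (p & 0xFF));
            sum += diffR + diffG + diffB;
        }
        return sum;
    }

    public static void main(String[] args) {
        // Two tiny four-pixel "frames"; only the second pixel changes.
        int[] previous = {0x000000, 0x000000, 0x808080, 0xFFFFFF};
        int[] current  = {0x000000, 0x0A0000, 0x808080, 0xFFFFFF};
        System.out.println(movementSum(current, previous)); // prints 10
    }
}
```

A sum of zero means the two frames are identical, so a subject who stays perfectly still contributes nothing to the total.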

Example 4: Brightness tracking (p. 560)

A rudimentary scheme for object tracking, ideal for tracking the location of a single illuminated point (such as a flashlight), finds the location of the single brightest pixel in every fresh frame of video. In this algorithm, the brightness of each pixel in the incoming video frame is compared with the brightest value yet encountered in that frame; if a pixel is brighter than the brightest value yet encountered, then the location and brightness of that pixel are stored. After all of the pixels have been examined, then the brightest location in the video frame is known. This technique relies on an operational assumption that there is only one such object of interest. With trivial modifications, it can equivalently locate and track the darkest pixel in the scene, or track multiple and differently colored objects.

Of course, many more software techniques exist, at every level of sophistication, for detecting, recognizing, and interacting with people and other objects of interest. Each of the tracking algorithms described above, for example, can be found in elaborated versions that amend its various limitations. Other easy-to-implement algorithms can compute specific features of a tracked object, such as its area, center of mass, angular orientation, compactness, edge pixels, and contour features such as corners and cavities. On the other hand, some of the most difficult to implement algorithms, representing the cutting edge of computer vision research today, are able (within limits) to recognize unique people, track the orientation of a person’s gaze, or correctly identify facial expressions.
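The brightest-pixel scan described under Example 4 can be sketched as follows. This is a hedged illustration in plain Java rather than the book's listing on p. 560: the frame is assumed to be a row-major array of packed 0xRRGGBB integers, brightness is approximated as the largest of the three color channels, and the names `BrightestPixel`, `brightness`, and `findBrightest` are hypothetical.

```java
class BrightestPixel {
    // Assumption: brightness is taken as the largest of the R, G, B channels.
    static int brightness(int rgb) {
        int r = (rgb >> 16) & 0xFF;
        int g = (rgb >> 8) & 0xFF;
        int b = rgb & 0xFF;
        return Math.max(r, Math.max(g, b));
    }

    // Scans every pixel once and returns {x, y, brightness} of the brightest.
    // When several pixels tie, the first one encountered is kept.
    static int[] findBrightest(int[] pixels, int width, int height) {
        if (pixels.length != width * height) {
            throw new IllegalArgumentException("pixel count must equal width * height");
        }
        int bestX = 0;
        int bestY = 0;
        int bestValue = -1; // lower than any real brightness
        int index = 0;
        for (int y = 0; y < height; y++) {
            for (int x = 0; x < width; x++) {
                int value = brightness(pixels[index]);
                // Store the location only if this pixel beats the best so far.
                if (value > bestValue) {
                    bestValue = value;
                    bestX = x;
                    bestY = y;
                }
                index++;
            }
        }
        return new int[] {bestX, bestY, bestValue};
    }

    public static void main(String[] args) {
        // A 3 x 2 frame with one bright "flashlight" pixel at column 2, row 1.
        int[] frame = {
            0x101010, 0x202020, 0x101010,
            0x101010, 0x303030, 0xFFFFEE,
        };
        int[] hit = findBrightest(frame, 3, 2);
        // prints: brightest at (2, 1), value 255
        System.out.println("brightest at (" + hit[0] + ", " + hit[1] + "), value " + hit[2]);
    }
}
```

Tracking the darkest pixel instead would only require starting `bestValue` above the maximum brightness and reversing the comparison.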
Pseudocode, source code, or ready-to-use implementations of all of these techniques can be found on the Internet in excellent resources like Daniel Huber’s Computer Vision Homepage, Robert Fisher’s HIPR (Hypermedia Image Processing Reference), or in the software toolkits discussed on pages 554-555.

Computer vision in the physical world

Unlike the human eye and brain, no computer vision algorithm is completely general, which is to say, able to perform its intended function given any possible video input. Instead, each software tracking or detection algorithm is critically dependent on certain unique assumptions about the real-world video scene it is expected to analyze. If any of these expectations are not met, then the algorithm can produce poor or ambiguous results or even fail altogether. For this reason, it is essential to design physical conditions in tandem with the development of computer vision code, and to select the software techniques that are most compatible with the available physical conditions.

Background subtraction and brightness thresholding, for example, can fail if the people in the scene are too close in color or brightness to their surroundings. For these algorithms to work well, it is greatly beneficial to prepare physical circumstances that naturally emphasize the contrast between people and their environments. This can be achieved with lighting situations that silhouette the people, or through the use of specially colored costumes. The frame-differencing technique, likewise, fails to detect people if they are stationary. It will therefore have very different degrees of success
