July 2006 Volume 9 Number 3 - CiteSeerX


Precision: the proportion of retrieved mappings that are correct, i.e. the number of correct mappings found divided by the total number of retrieved mappings.

$$\text{precision} = \frac{\text{number of correct mappings found}}{\text{number of retrieved mappings}} \qquad \text{(Eq. 1)}$$

Recall: the proportion of existing mappings that are found, i.e. the number of correct mappings found divided by the total number of existing mappings.

$$\text{recall} = \frac{\text{number of correct mappings found}}{\text{number of existing mappings}} \qquad \text{(Eq. 2)}$$
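As a minimal sketch, assuming each mapping is represented as a (source concept, target concept) pair and that the human experts' mappings form the reference set, Eq. 1 and Eq. 2 reduce to set intersections. The function names, the concept labels and the zero-division convention are hypothetical, chosen only for illustration.

```python
# Minimal sketch: a mapping is a (source_concept, target_concept) pair.
# All concept labels below are hypothetical examples.


def precision(found: set, reference: set) -> float:
    """Eq. 1: correct mappings found / number of retrieved mappings."""
    if not found:
        return 0.0  # assumed convention when nothing is retrieved
    return len(found & reference) / len(found)


def recall(found: set, reference: set) -> float:
    """Eq. 2: correct mappings found / number of existing mappings."""
    if not reference:
        return 0.0  # assumed convention when no reference mappings exist
    return len(found & reference) / len(reference)


# Hypothetical expert (reference) mappings and matcher output.
reference = {("Car", "Automobile"), ("Person", "Human"), ("Author", "Writer")}
found = {("Car", "Automobile"), ("Person", "Human"),
         ("Book", "Journal"), ("Paper", "Author")}

print(f"precision = {precision(found, reference):.4f}")  # 2 of 4 retrieved are correct
print(f"recall    = {recall(found, reference):.4f}")     # 2 of 3 existing are found
```

Both measures share the same numerator; they differ only in whether the count of correct mappings is divided by what the matcher returned or by what the experts expected.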

F-measure: combines precision and recall into a single effectiveness measure (their harmonic mean).

$$\text{F-measure} = \frac{2 \times \text{precision} \times \text{recall}}{\text{precision} + \text{recall}} \qquad \text{(Eq. 3)}$$
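Eq. 3 can be checked directly against a row of Table 4. The sketch below is a straightforward transcription of the formula; returning 0.0 when both inputs are zero is an assumed convention, not something stated in the text.

```python
def f_measure(precision: float, recall: float) -> float:
    """Eq. 3: harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention for the degenerate case
    return 2 * precision * recall / (precision + recall)


# Check against Table 4 (Pair 1, String Matching, t=0.9):
# precision 1.0000 and recall 0.5556 are reported with F-measure 0.7143.
print(round(f_measure(1.0, 0.5556), 4))  # prints 0.7143
```

Because it is a harmonic mean, high precision cannot compensate for low recall: Pair 4 with the string matching measure at t=0.6 has precision 1.0000 but recall 0.1767, and its reported F-measure is only 0.3004.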

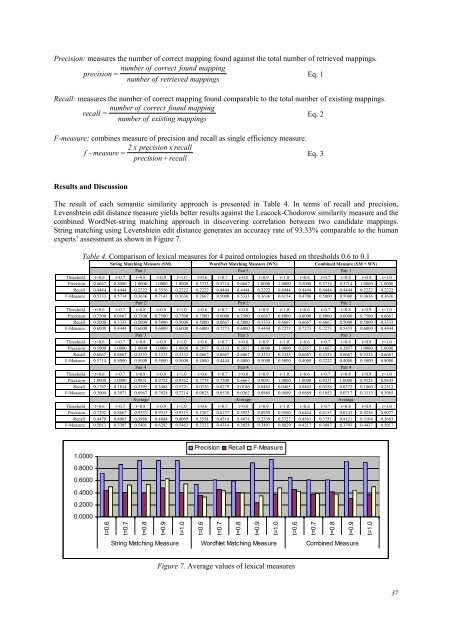

Results and Discussion

The results of each semantic similarity approach are presented in Table 4. Taking precision and recall together, the Levenshtein edit distance measure (SM) yields better results than both the Leacock-Chodorow similarity measure (WN) and the combined WordNet-string matching approach (SM + WN) in discovering correlations between two candidate mappings: its average F-measure is the highest of the three at every threshold. String matching using Levenshtein edit distance achieves an accuracy rate of 93.33% measured against the human experts’ assessment (its average precision at t = 0.8), as shown in Figure 7.
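The string matching measure is based on Levenshtein edit distance, but this section does not state how the distance is turned into a similarity in [0, 1] that can be compared with the thresholds t in Table 4. The sketch below assumes a common normalisation, 1 - distance / length of the longer label, applied after lowercasing both labels; the function names and example labels are hypothetical.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and substitutions."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]  # distance from a[:i] to ""
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def string_similarity(a: str, b: str) -> float:
    """Assumed normalisation: 1 - distance / length of the longer (lowercased) label."""
    a, b = a.lower(), b.lower()
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0  # two empty labels are treated as identical
    return 1.0 - levenshtein(a, b) / longest


def match(source_labels, target_labels, t):
    """Hypothetical matcher: keep label pairs whose similarity is at least t."""
    return {(s, g) for s in source_labels for g in target_labels
            if string_similarity(s, g) >= t}


print(levenshtein("kitten", "sitting"))                       # 3 edits
print(match(["Author", "Book"], ["Authors", "Publisher"], 0.8))
print(match(["Author", "Book"], ["Authors", "Publisher"], 0.9))
```

With this normalisation "Author" and "Authors" score 6/7 (about 0.857), so the pair is accepted at t=0.8 but rejected at t=0.9, and t=1.0 accepts only labels that are identical after lowercasing. Raising the threshold therefore trades recall for precision, which is the pattern Table 4 explores.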

Table 4. Comparison of lexical measures for 4 paired ontologies at thresholds t = 0.6 to 1.0

String Matching Measure (SM)

| Pair | Measure | t=0.6 | t=0.7 | t=0.8 | t=0.9 | t=1.0 |
|---|---|---|---|---|---|---|
| Pair 1 | Precision | 0.6667 | 0.8000 | 1.0000 | 1.0000 | 1.0000 |
| | Recall | 0.4444 | 0.4444 | 0.2222 | 0.5556 | 0.2222 |
| | F-Measure | 0.5333 | 0.5714 | 0.3636 | 0.7143 | 0.3636 |
| Pair 2 | Precision | 0.7500 | 0.6667 | 0.7500 | 0.7500 | 0.7500 |
| | Recall | 0.5000 | 0.3333 | 0.5000 | 0.5000 | 0.5000 |
| | F-Measure | 0.6000 | 0.4444 | 0.6000 | 0.6000 | 0.6000 |
| Pair 3 | Precision | 0.5000 | 1.0000 | 1.0000 | 1.0000 | 1.0000 |
| | Recall | 0.6667 | 0.6667 | 0.3333 | 0.3333 | 0.3333 |
| | F-Measure | 0.5714 | 0.8000 | 0.5000 | 0.5000 | 0.5000 |
| Pair 4 | Precision | 1.0000 | 1.0000 | 0.9831 | 0.9752 | 0.9762 |
| | Recall | 0.1767 | 0.1814 | 0.5395 | 0.5488 | 0.5721 |
| | F-Measure | 0.3004 | 0.3071 | 0.6967 | 0.7024 | 0.7214 |
| Average | Precision | 0.7292 | 0.8667 | 0.9333 | 0.9313 | 0.9315 |
| | Recall | 0.4470 | 0.4065 | 0.3988 | 0.4844 | 0.4069 |
| | F-Measure | 0.5013 | 0.5307 | 0.5401 | 0.6292 | 0.5463 |

WordNet Matching Measure (WN)

| Pair | Measure | t=0.6 | t=0.7 | t=0.8 | t=0.9 | t=1.0 |
|---|---|---|---|---|---|---|
| Pair 1 | Precision | 0.3333 | 0.5714 | 0.6667 | 1.0000 | 1.0000 |
| | Recall | 0.2222 | 0.4444 | 0.4444 | 0.2222 | 0.4444 |
| | F-Measure | 0.2667 | 0.5000 | 0.5333 | 0.3636 | 0.6154 |
| Pair 2 | Precision | 0.7500 | 0.8000 | 0.7500 | 0.6667 | 0.8000 |
| | Recall | 0.5000 | 0.6667 | 0.5000 | 0.3333 | 0.6667 |
| | F-Measure | 0.6000 | 0.7273 | 0.6000 | 0.4444 | 0.7273 |
| Pair 3 | Precision | 0.2857 | 0.3333 | 0.2857 | 1.0000 | 1.0000 |
| | Recall | 0.6667 | 0.6667 | 0.6667 | 0.3333 | 0.3333 |
| | F-Measure | 0.4000 | 0.4444 | 0.4000 | 0.5000 | 0.5000 |
| Pair 4 | Precision | 0.7778 | 0.7500 | 0.6667 | 0.9091 | 1.0000 |
| | Recall | 0.0326 | 0.0279 | 0.0186 | 0.0465 | 0.0465 |
| | F-Measure | 0.0625 | 0.0538 | 0.0362 | 0.0885 | 0.0889 |
| Average | Precision | 0.5367 | 0.6137 | 0.5923 | 0.8939 | 0.9500 |
| | Recall | 0.3554 | 0.4514 | 0.4074 | 0.2339 | 0.3727 |
| | F-Measure | 0.3323 | 0.4314 | 0.3924 | 0.3491 | 0.4829 |

Combined Measure (SM + WN)

| Pair | Measure | t=0.6 | t=0.7 | t=0.8 | t=0.9 | t=1.0 |
|---|---|---|---|---|---|---|
| Pair 1 | Precision | 0.5000 | 0.5714 | 0.5714 | 1.0000 | 1.0000 |
| | Recall | 0.4444 | 0.4444 | 0.4444 | 0.2222 | 0.2222 |
| | F-Measure | 0.4706 | 0.5000 | 0.5000 | 0.3636 | 0.3636 |
| Pair 2 | Precision | 0.8000 | 0.8000 | 0.6000 | 0.7500 | 0.6667 |
| | Recall | 0.6667 | 0.6667 | 0.5000 | 0.5000 | 0.3333 |
| | F-Measure | 0.7273 | 0.7273 | 0.5455 | 0.6000 | 0.4444 |
| Pair 3 | Precision | 0.2857 | 0.1667 | 0.2857 | 1.0000 | 1.0000 |
| | Recall | 0.6667 | 0.3333 | 0.6667 | 0.3333 | 0.6667 |
| | F-Measure | 0.4000 | 0.2222 | 0.4000 | 0.5000 | 0.8000 |
| Pair 4 | Precision | 1.0000 | 0.9231 | 1.0000 | 0.9524 | 0.9643 |
| | Recall | 0.0465 | 0.0558 | 0.0372 | 0.1860 | 0.2512 |
| | F-Measure | 0.0889 | 0.1053 | 0.0717 | 0.3113 | 0.3985 |
| Average | Precision | 0.6464 | 0.6153 | 0.6143 | 0.9256 | 0.9077 |
| | Recall | 0.4561 | 0.3751 | 0.4121 | 0.3104 | 0.3683 |
| | F-Measure | 0.4217 | 0.3887 | 0.3793 | 0.4437 | 0.5017 |
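The Average rows of Table 4 are consistent with an unweighted mean of the four per-pair values at each threshold; for example, string matching precision at t=0.8 is (1.0000 + 0.7500 + 1.0000 + 0.9831) / 4 ≈ 0.9333, the 93.33% figure cited in the discussion. This macro-averaging is inferred from the numbers rather than stated in the text. The sketch below reproduces the SM averages from the per-pair values and picks the threshold with the highest average F-measure; the variable names are hypothetical.

```python
# Per-pair values for the String Matching measure (SM), copied from Table 4.
# Columns are the thresholds t = 0.6, 0.7, 0.8, 0.9, 1.0; rows are Pairs 1-4.
THRESHOLDS = [0.6, 0.7, 0.8, 0.9, 1.0]

SM_PRECISION = [
    [0.6667, 0.8000, 1.0000, 1.0000, 1.0000],
    [0.7500, 0.6667, 0.7500, 0.7500, 0.7500],
    [0.5000, 1.0000, 1.0000, 1.0000, 1.0000],
    [1.0000, 1.0000, 0.9831, 0.9752, 0.9762],
]
SM_F_MEASURE = [
    [0.5333, 0.5714, 0.3636, 0.7143, 0.3636],
    [0.6000, 0.4444, 0.6000, 0.6000, 0.6000],
    [0.5714, 0.8000, 0.5000, 0.5000, 0.5000],
    [0.3004, 0.3071, 0.6967, 0.7024, 0.7214],
]


def column_means(rows):
    """Unweighted mean over the ontology pairs, one value per threshold."""
    return [sum(column) / len(column) for column in zip(*rows)]


avg_precision = column_means(SM_PRECISION)
avg_f = column_means(SM_F_MEASURE)

# Threshold with the highest average F-measure.
best_t, best_f = max(zip(THRESHOLDS, avg_f), key=lambda pair: pair[1])

print("average precision:", [round(v, 4) for v in avg_precision])
print("average F-measure:", [round(v, 4) for v in avg_f])
print(f"best threshold by F-measure: t={best_t} (F={best_f:.4f})")
```

Note that the average F-measure here is the mean of the per-pair F-measures, not Eq. 3 applied to the average precision and recall; the two generally differ, and the table values match the former.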

Figure 7. Average values of lexical measures (panels: String Matching Measure, WordNet Matching Measure, Combined Measure; average precision, recall and F-measure plotted against threshold t = 0.6 to 1.0)
