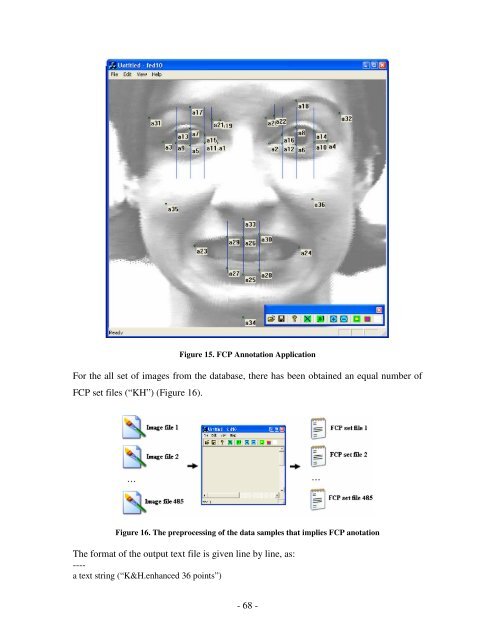

Figure 15. FCP Annotation Application

For the full set of images in the database, an equal number of FCP set files ("KH") was obtained (Figure 16).

Figure 16. The preprocessing of the data samples, involving FCP annotation

The format of the output text file is given line by line, as follows:

- a text string ("K&H.enhanced 36 points")

- [image width] [image height]

An example of an output file is given below:

K&H.enhanced 36 points
640 490
348 227 407 226 p1:349,226 p2:407,227 p3:289,225 p4:471,224 p5:319,229 p6:437,228 p7:319,210 p8:437,209 p9:303,226 p10:457,225 p11:335,226 p12:421,227 p13:303,213 p14:457,213 p15:335,217 p16:421,217 p17:319,186 p18:437,179 p19:352,201 p20:403,198 p21:343,200 p22:412,196 p23:324,341 p24:440,343 p25:378,373 p26:378,332 p27:360,366 p28:396,368 p29:360,331 p30:396,328 p31:273,198 p32:485,193 p33:378,311 p34:378,421 p35:292,294 p36:455,289

Parameter Discretization

Using the FCP Management Application, all images from the original Cohn-Kanade AU-Coded Facial Expression Database were processed manually. This produced a set of text files specifying the locations of the Facial Characteristic Points. The Parameter Discretization Application was then used to analyze all the previously created "KH" files and gather their data into a single output text file. A key task of this application was discretizing the value of each parameter for all input samples.
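The format description specifies only the first two lines of a "KH" file. The reader below is therefore a minimal sketch built on several assumptions:

- Line 1 holds the header string.
- The next two integers are the image width and height.
- Any further integers before the first `pN:x,y` token are kept uninterpreted. The section does not say what the four values `348 227 407 226` in the example denote.
- Each `pN:x,y` token gives the coordinates of point N.

The names `KHRecord` and `parse_kh` are hypothetical and are not taken from the original application.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Matches tokens such as "p12:421,227" -> (point index, x, y)
POINT_RE = re.compile(r"p(\d+):(-?\d+),(-?\d+)$")


@dataclass
class KHRecord:  # hypothetical container for one "KH" file
    header: str                          # e.g. "K&H.enhanced 36 points"
    width: int                           # image width
    height: int                          # image height
    extra: List[int]                     # values before p1 (meaning not specified here)
    points: Dict[int, Tuple[int, int]]   # point index -> (x, y)


def parse_kh(text: str) -> KHRecord:
    lines = text.strip().splitlines()
    header = lines[0].strip()
    # The rest is split on whitespace, so this works whether the points
    # are stored one per line or all on a single line (layout assumed unknown).
    tokens = " ".join(lines[1:]).split()
    width, height = int(tokens[0]), int(tokens[1])
    extra: List[int] = []
    points: Dict[int, Tuple[int, int]] = {}
    for tok in tokens[2:]:
        m = POINT_RE.match(tok)
        if m:
            points[int(m.group(1))] = (int(m.group(2)), int(m.group(3)))
        elif not points:
            extra.append(int(tok))
        else:
            raise ValueError(f"unexpected token after the points: {tok!r}")
    # Cross-check the count announced in the header, e.g. "36 points".
    declared = re.search(r"(\d+)\s+points", header)
    if declared and int(declared.group(1)) != len(points):
        raise ValueError("number of points does not match the header")
    return KHRecord(header, width, height, extra, points)
```

Take the example file's contents, with the header string on the first line as the format specifies. This sketch would return `width == 640`, `height == 490`, and 36 entries in `points`, with `points[1] == (349, 226)`.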
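This section does not say how each parameter was discretized. As a purely illustrative assumption, the sketch below uses equal-width binning:

- For every parameter, the range observed over all input samples is split into `n_bins` intervals.
- Each value is replaced by the index of its interval.
- The discretized samples are then formatted as one whitespace-separated table, mirroring the single output text file.

The function names, the parameter names, and the binning scheme are all hypothetical. They may differ from what the Parameter Discretization Application actually does.

```python
from typing import Dict, List


def discretize_column(values: List[float], n_bins: int) -> List[int]:
    """Equal-width binning: map each value to a bin index in [0, n_bins)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0] * len(values)  # constant parameter: everything in bin 0
    width = (hi - lo) / n_bins
    # min() clamps the maximum value into the last bin instead of bin n_bins
    return [min(int((v - lo) / width), n_bins - 1) for v in values]


def discretize_samples(samples: List[Dict[str, float]], n_bins: int) -> List[Dict[str, int]]:
    """Discretize every parameter using its range over *all* samples."""
    names = list(samples[0].keys())  # assumes all samples share the same parameters
    columns = {n: discretize_column([s[n] for s in samples], n_bins) for n in names}
    return [{n: columns[n][i] for n in names} for i in range(len(samples))]


def format_table(rows: List[Dict[str, int]], names: List[str]) -> str:
    """One header line of parameter names, then one line per sample."""
    lines = [" ".join(names)]
    lines += [" ".join(str(r[n]) for n in names) for r in rows]
    return "\n".join(lines)
```

Equal-width bins are simple, but a single outlier stretches the range and pushes most samples into a few bins. Equal-frequency (quantile) binning is a common alternative when that matters.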
