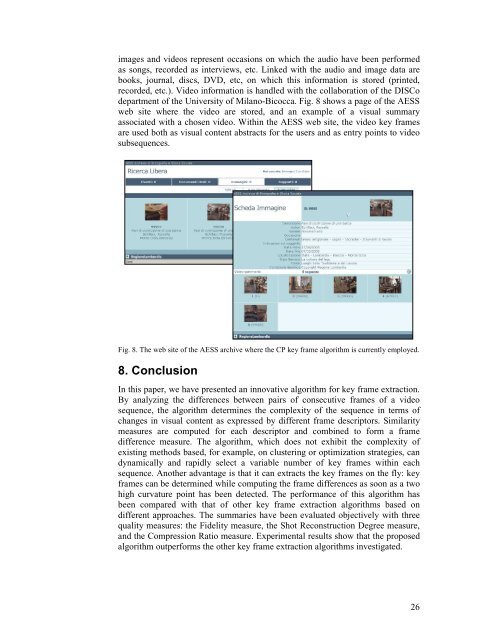

images and videos represent occasions on which the audio has been performed as songs, recorded as interviews, etc. Linked with the audio and image data are the books, journals, discs, DVDs, etc., on which this information is stored (printed, recorded, etc.). Video information is handled with the collaboration of the DISCo department of the University of Milano-Bicocca. Fig. 8 shows a page of the AESS web site where the videos are stored, and an example of a visual summary associated with a chosen video. Within the AESS web site, the video key frames are used both as visual content abstracts for the users and as entry points to video subsequences.

Fig. 8. The web site of the AESS archive where the CP key frame algorithm is currently employed.

8. Conclusion

In this paper, we have presented an innovative algorithm for key frame extraction. By analyzing the differences between pairs of consecutive frames of a video sequence, the algorithm determines the complexity of the sequence in terms of changes in visual content as expressed by different frame descriptors.
Similarity measures are computed for each descriptor and combined to form a frame difference measure. The algorithm, which does not exhibit the complexity of existing methods based, for example, on clustering or optimization strategies, can dynamically and rapidly select a variable number of key frames within each sequence. Another advantage is that it can extract the key frames on the fly: key frames can be determined while computing the frame differences as soon as two high curvature points have been detected. The performance of this algorithm has been compared with that of other key frame extraction algorithms based on different approaches. The summaries have been evaluated objectively with three quality measures: the Fidelity measure, the Shot Reconstruction Degree measure, and the Compression Ratio measure. Experimental results show that the proposed algorithm outperforms the other key frame extraction algorithms investigated.
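
The on-the-fly behaviour summarized above can be illustrated with a minimal Python sketch. It is not the implementation evaluated in this paper. The L1 distance per descriptor, the plain average used to combine the descriptors, the `threshold` parameter, and the rule that places a key frame midway between two consecutive high curvature points are all assumptions made for illustration, and the function names are hypothetical.

```python
from typing import Iterable, Iterator, Sequence

# A frame is a sequence of descriptors; a descriptor is a sequence of floats.
Frame = Sequence[Sequence[float]]


def frame_difference(prev: Frame, curr: Frame) -> float:
    """Combine per-descriptor differences into one frame difference.

    Assumption: L1 distance per descriptor, combined by a plain average.
    """
    per_descriptor = [
        sum(abs(a - b) for a, b in zip(p, c)) for p, c in zip(prev, curr)
    ]
    return sum(per_descriptor) / len(per_descriptor)


def key_frames_on_the_fly(frames: Iterable[Frame],
                          threshold: float = 0.5) -> Iterator[int]:
    """Yield key frame indices while frames are still streaming in.

    Assumption: a "high curvature point" is a frame where the slope of
    the cumulative difference curve changes by more than `threshold`.
    A key frame is emitted as the midpoint of each pair of consecutive
    high curvature points, as soon as the second point is seen.
    """
    prev_frame = None
    prev_diff = None
    last_point = None
    for index, frame in enumerate(frames):
        if prev_frame is not None:
            diff = frame_difference(prev_frame, frame)
            # The slope of the cumulative curve at this step is `diff`,
            # so a jump in `diff` is a sharp bend in that curve.
            if prev_diff is not None and abs(diff - prev_diff) > threshold:
                if last_point is not None:
                    yield (last_point + index) // 2
                last_point = index
            prev_diff = diff
        prev_frame = frame
```

Because the function is a generator, a caller can consume key frames as they are produced, for example with `for k in key_frames_on_the_fly(stream): ...`, without first buffering the whole sequence.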

Acknowledgements

The video indexing and analysis presented here were supported by the Italian MURST FIRB Project MAIS (Multi-channel Adaptive Information Systems) [40], and by the Regione Lombardia (Italy) within the INTERNUM project aimed at facilitating access to the cultural video documentaries of the AESS (Archivio di Etnografia e Storia Sociale).

References

[1]. Aigrain P., Zhang H., and Petkovic D. Content-Based Representation and Retrieval of Visual Media: A State of the Art review. Multimedia Tools and Applications, 1996;3:179-202.
[2]. Dimitrova N., Zhang H., Shahraray B., Sezan M., Huang T. and Zakhor A. Applications of video-content analysis and retrieval. IEEE MultiMedia, 2002;9(3):44-55.
[3]. Antani S., Kasturi R. and Jain R. A survey on the use of pattern recognition methods for abstraction, indexing and retrieval of images and video. Pattern Recognition, 2002;35:945-965.
[4]. Schettini R., Brambilla C., Cusano C., Ciocca G. Automatic classification of digital photographs based on decision forests. Int. Journal of Pattern Recognition and Artificial Intelligence, 2004;18(5):819-846.
[5]. Fredembach C., Schröder M., and Süsstrunk S. Eigenregions for Image Classification. IEEE Transactions on Pattern Analysis and Machine Intelligence (PAMI), 2004;26(12):1645-1649.
[6]. Hauptmann A. G., Jin R., and Ng T. D. Video Retrieval using Speech and Image Information. Proc. Electronic Imaging Conf. (EI’03), Storage Retrieval for Multimedia Databases, Santa Clara, CA, 2003;5021:148-159.
[7]. Tonomura Y., Akutsu A., Otsugi K., and Sadakata T. VideoMAP and VideoSpaceIcon: Tools for automatizing video content. Proc. ACM INTERCHI ’93 Conference, 1993:131-141.
[8]. Ueda H., Miyatake T., and Yoshizawa S. IMPACT: An interactive natural-motion-picture dedicated multimedia authoring system. Proc. ACM CHI ’91 Conference, 1991:343-350.
[9]. Rui Y., Huang T. S. and Mehrotra S. Exploring Video Structure Beyond the Shots. Proc. IEEE Int. Conf. on Multimedia Computing and Systems (ICMCS), Texas USA, 1998:237-240.
[10]. Pentland A., Picard R., Davenport G. and Haase K. Video and Image Semantics: Advanced Tools for Telecommunications. IEEE MultiMedia, 1994;1(2):73-75.
[11]. Zhonghua Sun, Fu Ping. Combination of Color and Object Outline Based Method in Video Segmentation. Proc. SPIE Storage and Retrieval Methods and Applications for Multimedia, 2004;5307:61-69.
[12]. Arman F., Hsu A. and Chiu M.Y. Image processing on Compressed Data for Large Video Databases. Proc. ACM Multimedia '93, Anaheim, CA, 1993:267-272.
[13]. Zhuang Y., Rui Y., Huang T.S., Mehrotra S. Key Frame Extraction Using Unsupervised Clustering. Proc. of ICIP’98, Chicago, USA, 1998;1:866-870.
[14]. Girgensohn A., Boreczky J. Time-Constrained Keyframe Selection Technique. Multimedia Tools and Applications, 2000;11:347-358.
[15]. Gong Y. and Liu X. Generating optimal video summaries. Proc. IEEE Int. Conference on Multimedia and Expo, 2000;3:1559-1562.
[16]. Li Zhao, Wei Qi, Stan Z. Li, S.Q. Yang, H.J. Zhang. Key-frame Extraction and Shot Retrieval Using Nearest Feature Line (NFL). Proc. ACM Int. Workshops on Multimedia Information Retrieval, 2000:217-220.
[17]. Hanjalic A., Lagendijk R. L., Biemond J. A new Method for Key Frame Based Video Content Representation. In: Image Databases and Multimedia Search, World Scientific, Singapore, 1998.
[18]. Hoon S. H., Yoon K., and Kweon I. A new Technique for Shot Detection and Key Frames Selection in Histogram Space. Proc. 12th Workshop on Image Processing and Image Understanding, 2000:475-479.
[19]. Narasimha R., Savakis A., Rao R. M. and De Queiroz R. A Neural Network Approach to Key Frame Extraction. Proc. of SPIE-IS&T Electronic Imaging Storage and Retrieval Methods and Applications for Multimedia, 2004;5307:439-447.
[20]. Calic J. and Izquierdo E. Efficient Key-Frame Extraction and Video Analysis. Proc. IEEE ITCC2002, Multimedia Web Retrieval Section, 2002:28-33.