

TECHNOLOGY: RDMA<br />

"The secret to boosting throughput is reduced latency and better storage bandwidth.<br />

These translate directly into improved productivity and capability, primarily through<br />

clever IO and networking techniques that rely on direct and remote memory access.<br />

Faster model training and job completion mean AI-powered applications can be<br />

deployed more quickly, and get things done faster, speeding time to value."<br />

access across all three preceding networking<br />

technologies. Each offers a different price-performance<br />

trade-off, where more cost<br />

translates into greater speed and lower<br />

latency. Organisations can choose the<br />

underlying connection type that best fits their<br />

budgets and needs, understanding that each<br />

option represents a specific combination of<br />

price and performance upon which they can<br />

rely. As various AI- or ML-based (and other<br />

data- and compute-intensive) applications<br />

run on such a server, they can exploit the<br />

tiered architecture of GPU storage.<br />

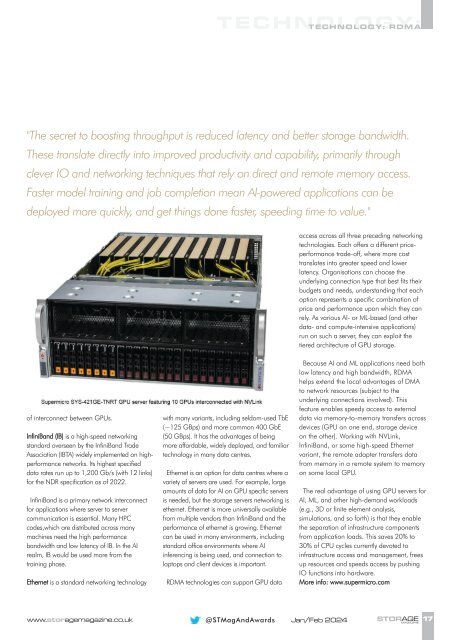

of interconnect between GPUs.<br />

InfiniBand (IB) is a high-speed networking<br />

standard overseen by the InfiniBand Trade<br />

Association (IBTA) and widely implemented on high-performance<br />

networks. Its highest specified<br />

data rates run up to 1,200 Gb/s (with 12 links)<br />

for the NDR specification as of 2022.<br />

InfiniBand is a primary network interconnect<br />

for applications where server-to-server<br />

communication is essential. Many HPC<br />

codes, which are distributed across many<br />

machines, need the high<br />

bandwidth and low latency of IB. In the AI<br />

realm, IB would be used more for the<br />

training phase.<br />

Ethernet is a standard networking technology<br />

with many variants, including seldom-used TbE<br />

(~125 GB/s) and more common 400 GbE<br />

(50 GB/s). It has the advantages of being<br />

more affordable, widely deployed, and familiar<br />

in many data centres.<br />
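The link rates quoted above mix gigabits per second (Gb/s) with gigabytes per second (GB/s). The short Python sketch below makes the conversion explicit. It assumes raw line rates only and ignores encoding and protocol overhead, so real-world throughput will be lower; the function and dictionary names are illustrative, not taken from any vendor tool.

```python
# Convert the link rates quoted in the article from gigabits per second (Gb/s)
# to gigabytes per second (GB/s). Assumption: raw line rate only, with no
# allowance for encoding or protocol overhead.

BITS_PER_BYTE = 8


def gbps_to_gigabytes_per_s(gigabits_per_s: float) -> float:
    """Return the rate in GB/s for a rate given in Gb/s."""
    return gigabits_per_s / BITS_PER_BYTE


# Rates as quoted in the article (label -> Gb/s).
LINK_RATES_GBPS = {
    "InfiniBand NDR, 12 links": 1200,
    "Terabit Ethernet (TbE)": 1000,
    "400 GbE": 400,
}


def rate_table() -> dict[str, float]:
    """Map each link label to its raw rate in GB/s."""
    return {
        name: gbps_to_gigabytes_per_s(rate)
        for name, rate in LINK_RATES_GBPS.items()
    }


if __name__ == "__main__":
    for name, gigabytes_per_s in rate_table().items():
        print(f"{name}: {gigabytes_per_s:g} GB/s")
```

On these figures, the 12-link NDR rate of 1,200 Gb/s works out to 100 Gb/s per link.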

Ethernet is an option for data centres where a<br />

variety of servers are used. For example,<br />

GPU-specific servers need large amounts of data for AI,<br />

but the storage servers' networking is<br />

Ethernet. Ethernet is more universally available<br />

from multiple vendors than InfiniBand, and the<br />

performance of Ethernet is growing. Ethernet<br />

can be used in many environments, including<br />

standard office environments where AI<br />

inferencing is being used, and connection to<br />

laptops and client devices is important.<br />

RDMA technologies can support GPU data<br />

Because AI and ML applications need both<br />

low latency and high bandwidth, RDMA<br />

helps extend the local advantages of DMA<br />

to network resources (subject to the<br />

underlying connections involved). This<br />

feature enables speedy access to external<br />

data via memory-to-memory transfers across<br />

devices (GPU on one end, storage device<br />

on the other). Working with NVLink,<br />

InfiniBand, or some high-speed Ethernet<br />

variant, the remote adapter transfers data<br />

from memory in a remote system to memory<br />

on some local GPU.<br />
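To see why both low latency and high bandwidth matter, a first-order model helps: transfer time is roughly a fixed per-transfer latency plus the payload size divided by bandwidth. The Python sketch below is a simplification under stated assumptions, not a measurement. The 2-microsecond latency is a hypothetical figure chosen for illustration, and the 50 GB/s bandwidth is the 400 GbE line rate quoted earlier.

```python
# First-order transfer-time model: time = latency + size / bandwidth.
# This is a sketch; it ignores queuing, protocol overhead, and congestion.

ASSUMED_LATENCY_S = 2e-6        # hypothetical per-transfer latency (2 microseconds)
LINE_RATE_BYTES_PER_S = 50e9    # 50 GB/s, the 400 GbE figure quoted in the article


def transfer_time_s(size_bytes: float, latency_s: float,
                    bandwidth_bytes_per_s: float) -> float:
    """Estimate the time to move size_bytes over a link."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return latency_s + size_bytes / bandwidth_bytes_per_s


def latency_share(size_bytes: float, latency_s: float,
                  bandwidth_bytes_per_s: float) -> float:
    """Fraction of the total transfer time spent on fixed latency."""
    total = transfer_time_s(size_bytes, latency_s, bandwidth_bytes_per_s)
    return latency_s / total


if __name__ == "__main__":
    # Compare a small, a medium, and a large transfer.
    for size in (4 * 1024, 1024**2, 1024**3):
        share = latency_share(size, ASSUMED_LATENCY_S, LINE_RATE_BYTES_PER_S)
        print(f"{size:>12} bytes: {share:.1%} of transfer time is latency")
```

Under these assumed numbers, small transfers are dominated by latency and large ones by bandwidth, which is why a workload that mixes both benefits from improving each.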

The real advantage of using GPU servers for<br />

AI, ML, and other high-demand workloads<br />

(e.g., 3D or finite element analysis,<br />

simulations, and so forth) is that they enable<br />

the separation of infrastructure components<br />

from application loads. This saves 20% to<br />

30% of CPU cycles currently devoted to<br />

infrastructure access and management, frees<br />

up resources and speeds access by pushing<br />

IO functions into hardware.<br />
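As a rough illustration of what the 20% to 30% figure could mean in practice, the Python sketch below converts that range into core-equivalents. The 64-core server is a hypothetical example, not a configuration named in the article, and the calculation assumes the saving applies evenly across all cores.

```python
# Translate the article's 20% to 30% CPU-cycle saving into core-equivalents.
# Assumption: the saving applies uniformly across every core in the server.


def cores_freed(total_cores: int, low: float = 0.20,
                high: float = 0.30) -> tuple[float, float]:
    """Return the (low, high) range of core-equivalents freed."""
    if total_cores < 0:
        raise ValueError("total_cores must be non-negative")
    return total_cores * low, total_cores * high


if __name__ == "__main__":
    HYPOTHETICAL_CORES = 64  # example server size, chosen for illustration
    low_cores, high_cores = cores_freed(HYPOTHETICAL_CORES)
    print(f"{HYPOTHETICAL_CORES} cores: roughly {low_cores:.1f} to "
          f"{high_cores:.1f} core-equivalents freed")
```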

More info: www.supermicro.com<br />

www.storagemagazine.co.uk<br />

@STMagAndAwards Jan/Feb 2024<br />

STORAGE<br />

MAGAZINE<br />
