3.6 Egocentric and exocentric teleoperation interface using real-time, 3D video projection

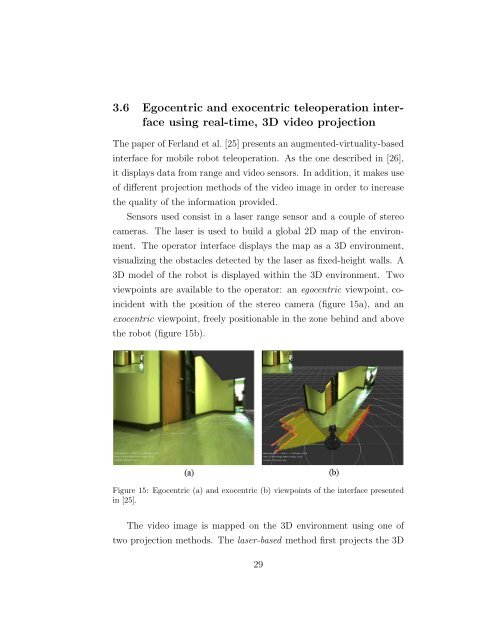

Ferland et al. [25] present an augmented-virtuality interface for mobile robot teleoperation. Like the interface described in [26], it displays data from range and video sensors. In addition, it offers different methods for projecting the video image, in order to improve the quality of the information provided.

The sensors consist of a laser range sensor and a stereo camera pair. The laser is used to build a global 2D map of the environment. The operator interface displays the map as a 3D environment, rendering the obstacles detected by the laser as fixed-height walls. A 3D model of the robot is displayed within this environment. Two viewpoints are available to the operator: an egocentric viewpoint, coincident with the position of the stereo camera (figure 15a), and an exocentric viewpoint, which can be positioned freely in the zone behind and above the robot (figure 15b).
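As a rough illustration of how a 2D map can be shown as fixed-height walls, the sketch below extrudes each occupied cell of a small occupancy grid into a 3D box. It is not the implementation from [25]: the grid representation, the function name `extrude_occupied_cells`, the cell size and the wall height are all assumptions made for the example.

```python
# Minimal sketch: turn a 2D occupancy grid into fixed-height 3D boxes.
# The grid layout, cell size and wall height are assumed values,
# not taken from [25].

WALL_HEIGHT = 1.0  # metres (assumption: the source only says "fixed-height")


def extrude_occupied_cells(grid, cell_size, height=WALL_HEIGHT):
    """Return one axis-aligned box per occupied cell.

    Each box is a pair (min_corner, max_corner) of (x, y, z) tuples.
    Rows of the grid run along y, columns along x.
    """
    boxes = []
    for row, cells in enumerate(grid):
        for col, occupied in enumerate(cells):
            if occupied:
                x0 = col * cell_size
                y0 = row * cell_size
                min_corner = (x0, y0, 0.0)
                max_corner = (x0 + cell_size, y0 + cell_size, height)
                boxes.append((min_corner, max_corner))
    return boxes


# Example: an L-shaped obstacle on a 2 x 3 grid with 0.5 m cells.
grid = [
    [1, 1, 1],
    [0, 0, 1],
]
boxes = extrude_occupied_cells(grid, cell_size=0.5)
```

Each resulting box could then be handed to whatever 3D renderer the interface uses. A real map would more likely be meshed into continuous wall segments than drawn cell by cell.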

Figure 15: Egocentric (a) and exocentric (b) viewpoints of the interface presented in [25].
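The exocentric viewpoint can be pictured as a virtual camera placed at an adjustable offset behind and above the robot and aimed at it. The sketch below computes such a camera pose from the robot's planar pose. The function name `exocentric_camera`, the parameters `back` and `up`, and the convention of looking at the robot's base are illustrative assumptions, not details given in [25].

```python
import math


def exocentric_camera(robot_x, robot_y, robot_yaw, back=2.0, up=1.5):
    """Return (position, yaw, pitch) of a camera behind and above the robot.

    robot_yaw is the robot heading in radians. back and up are the
    operator-adjustable offsets in metres (hypothetical parameters).
    The camera keeps the robot's heading and pitches down so that its
    optical axis passes through the robot's base.
    """
    # Step backwards along the robot's heading direction.
    cam_x = robot_x - back * math.cos(robot_yaw)
    cam_y = robot_y - back * math.sin(robot_yaw)
    cam_z = up
    # Negative pitch means looking downwards.
    pitch = -math.atan2(up, back)
    return (cam_x, cam_y, cam_z), robot_yaw, pitch


# Example: robot at (1, 2) facing along +x, camera 2 m behind and 2 m above.
position, yaw, pitch = exocentric_camera(1.0, 2.0, 0.0, back=2.0, up=2.0)
```

In this convention the egocentric viewpoint is simply the case where the virtual camera coincides with the pose of the physical stereo camera.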

The video image is mapped onto the 3D environment using one of two projection methods. The laser-based method first projects the 3D
