Shared Gaussian Process Latent Variables Models - Oxford Brookes ...

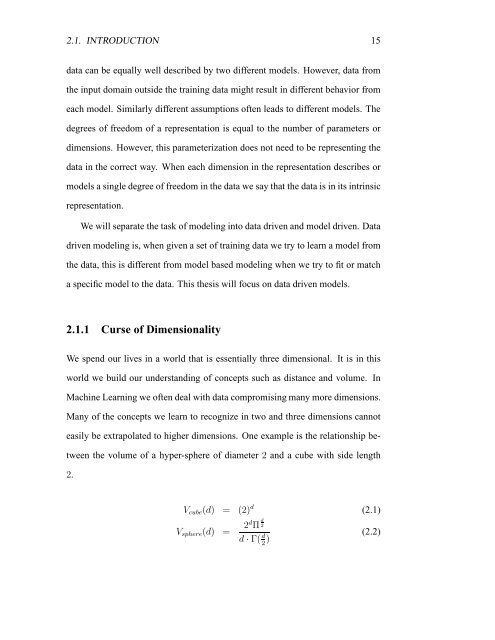

2.1. INTRODUCTION<br />

data can be equally well described by two different models. However, data from<br />

the input domain outside the training data might result in different behavior from<br />

each model. Similarly, different assumptions often lead to different models. The<br />

number of degrees of freedom of a representation is equal to its number of parameters<br />

or dimensions. However, this parameterization need not represent the data in the<br />

correct way. When each dimension in the representation describes or models a single<br />

degree of freedom in the data, we say that the data is in its intrinsic<br />

representation.<br />
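To make the idea of an intrinsic representation concrete, here is a minimal Python sketch. It uses an example that is not from the thesis: points on a unit circle, which are stored with two coordinates but have a single degree of freedom, the angle. The names `circle_point` and `intrinsic_coordinate` are hypothetical and chosen only for illustration.

```python
import math

def circle_point(theta):
    """Two-parameter (x, y) representation of a point on the unit circle."""
    return (math.cos(theta), math.sin(theta))

def intrinsic_coordinate(x, y):
    """One-parameter representation: the angle in (-pi, pi]."""
    return math.atan2(y, x)

# Angles from -3.0 to 3.0 in steps of 0.5, all inside (-pi, pi].
thetas = [-3.0 + 0.5 * k for k in range(13)]

# Each data point is stored with two numbers ...
points = [circle_point(t) for t in thetas]

# ... but a single number per point is enough to recover it.
recovered = [intrinsic_coordinate(x, y) for x, y in points]
max_error = max(abs(t - r) for t, r in zip(thetas, recovered))
print(f"largest reconstruction error: {max_error:.2e}")
```

In this toy case the (x, y) representation has two dimensions, while the angle describes the data's single degree of freedom and so plays the role of the intrinsic representation.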

We will separate the task of modeling into data-driven and model-driven approaches.<br />

In data-driven modeling, we are given a set of training data and try to learn a model<br />

from it. This differs from model-driven modeling, where we try to fit or match<br />

a specific model to the data. This thesis will focus on data-driven models.<br />

2.1.1 Curse of Dimensionality<br />

We spend our lives in a world that is essentially three-dimensional. It is in this<br />

world that we build our understanding of concepts such as distance and volume. In<br />

Machine Learning we often deal with data comprising many more dimensions.<br />

Many of the concepts we learn to recognize in two and three dimensions cannot<br />

easily be extrapolated to higher dimensions. One example is the relationship<br />

between the volume of a hyper-sphere of diameter 2 and a cube with side length 2.<br />

$$V_{\text{cube}}(d) = 2^{d} \tag{2.1}$$<br />

$$V_{\text{sphere}}(d) = \frac{2\,\pi^{d/2}}{d \cdot \Gamma\left(\frac{d}{2}\right)} \tag{2.2}$$
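
As a rough numerical illustration of Equations 2.1 and 2.2, the following Python sketch evaluates both volumes and their ratio for a few dimensions. It assumes the hyper-sphere has radius 1 (diameter 2), for which the volume is 2π^(d/2) / (d · Γ(d/2)). The function names are illustrative and do not come from the thesis.

```python
import math

def cube_volume(d: int) -> float:
    """Volume of a d-dimensional cube with side length 2 (Eq. 2.1)."""
    return 2.0 ** d

def sphere_volume(d: int) -> float:
    """Volume of a d-dimensional hyper-sphere of radius 1 (Eq. 2.2).

    Assumption: diameter 2 means radius 1, so no radius factor appears.
    """
    return 2.0 * math.pi ** (d / 2) / (d * math.gamma(d / 2))

def volume_ratio(d: int) -> float:
    """Fraction of the cube's volume occupied by the inscribed sphere."""
    return sphere_volume(d) / cube_volume(d)

if __name__ == "__main__":
    for d in (1, 2, 3, 5, 10, 20):
        print(f"d={d:2d}  sphere={sphere_volume(d):.4e}  "
              f"cube={cube_volume(d):.4e}  ratio={volume_ratio(d):.4e}")
```

Under these assumptions the ratio equals 1 for d = 1 and π/4 for d = 2, and it shrinks quickly as d grows, so in high dimensions almost all of the cube's volume lies outside the inscribed sphere.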