Shared Gaussian Process Latent Variables Models - Oxford Brookes ...

Shared Gaussian Process Latent Variables Models - Oxford Brookes ...

Shared Gaussian Process Latent Variables Models - Oxford Brookes ...

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

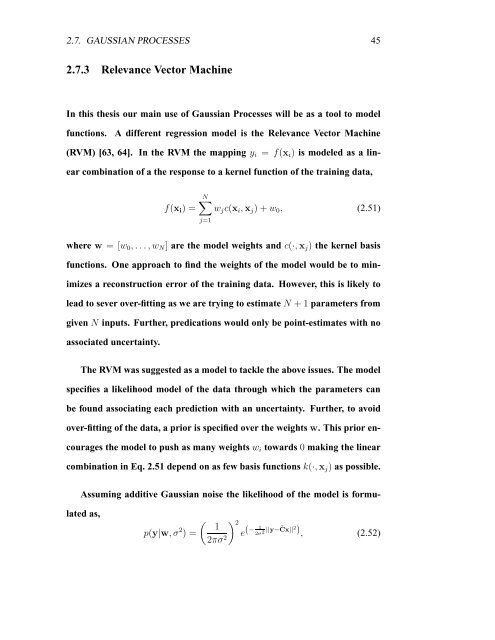

2.7. GAUSSIAN PROCESSES 45

2.7.3 Relevance Vector Machine

In this thesis our main use of Gaussian Processes will be as a tool to model functions. A different regression model is the Relevance Vector Machine (RVM) [63, 64]. In the RVM the mapping $y_i = f(x_i)$ is modeled as a linear combination of the responses of a kernel function evaluated at the training data,

$$f(x_i) = \sum_{j=1}^{N} w_j \, c(x_i, x_j) + w_0, \tag{2.51}$$

where $\mathbf{w} = [w_0, \ldots, w_N]$ are the model weights and $c(\cdot, x_j)$ the kernel basis functions. One approach to finding the weights of the model would be to minimize a reconstruction error on the training data. However, this is likely to lead to severe over-fitting, as we are trying to estimate $N + 1$ parameters from only $N$ inputs. Further, predictions would only be point estimates with no associated uncertainty.
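The over-fitting argument above can be illustrated with a minimal sketch. It assumes a squared-exponential kernel, a length-scale of 0.1, and noisy toy data drawn from a sine curve. None of these come from the text, and the helper names `rbf_kernel` and `design_matrix` are hypothetical. With $N + 1$ weights and only $N$ targets, least squares reproduces the noisy training targets essentially exactly and returns a single weight vector with no measure of uncertainty.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.1):
    """Squared-exponential kernel c(a_i, b_j) for 1-D inputs (an assumed choice)."""
    sq_dist = (a[:, None] - b[None, :]) ** 2
    return np.exp(-0.5 * sq_dist / length_scale**2)

def design_matrix(x, x_train, length_scale=0.1):
    """Rows [1, c(x_i, x_1), ..., c(x_i, x_N)], so f = Phi @ w with w = [w0, ..., wN]."""
    K = rbf_kernel(x, x_train, length_scale)
    return np.hstack([np.ones((x.shape[0], 1)), K])

# Toy data (assumption): a noisy sine curve sampled at N points.
rng = np.random.default_rng(0)
N = 8
x_train = np.linspace(0.0, 1.0, N)
y_train = np.sin(2 * np.pi * x_train) + 0.3 * rng.standard_normal(N)

# N equations, N + 1 unknowns: the system is under-determined.
Phi = design_matrix(x_train, x_train)            # shape (N, N + 1)
w, *_ = np.linalg.lstsq(Phi, y_train, rcond=None)

# The fit passes through every noisy target, i.e. it models the noise as well.
train_rmse = np.sqrt(np.mean((Phi @ w - y_train) ** 2))
print(Phi.shape, w.shape, train_rmse)
```

The training error is at the level of floating-point round-off, which is the symptom of over-fitting described above: the fit tells us nothing about how well the model generalises.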

The RVM was suggested as a model to tackle the above issues. The model specifies a likelihood for the data, through which the parameters can be found and each prediction associated with an uncertainty. Further, to avoid over-fitting the data, a prior is specified over the weights $\mathbf{w}$. This prior encourages the model to push as many weights $w_i$ as possible towards 0, making the linear combination in Eq. 2.51 depend on as few basis functions $c(\cdot, x_j)$ as possible.
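As a rough illustration of how such a prior switches basis functions off, the sketch below assumes the form commonly used for the RVM: an independent zero-mean Gaussian prior on each weight with its own precision, here called `alpha[i]`, together with Gaussian noise of variance `noise_var`. The passage above does not state this form, so it is an assumption, as are the kernel, the toy data and the function name `posterior_mean`. The precisions are fixed by hand purely for illustration, whereas in the RVM they would be estimated from the data.

```python
import numpy as np

def posterior_mean(Phi, y, alpha, noise_var):
    """Posterior mean of w under w_i ~ N(0, 1/alpha_i) and Gaussian noise (assumed model)."""
    precision = np.diag(alpha) + Phi.T @ Phi / noise_var
    return np.linalg.solve(precision, Phi.T @ y / noise_var)

# Toy data and kernel (assumptions, not taken from the text).
rng = np.random.default_rng(1)
N = 8
x = np.linspace(0.0, 1.0, N)
y = np.sin(2 * np.pi * x) + 0.1 * rng.standard_normal(N)
K = np.exp(-0.5 * (x[:, None] - x[None, :]) ** 2 / 0.2**2)
Phi = np.hstack([np.ones((N, 1)), K])            # columns: bias, c(., x_1), ..., c(., x_N)

# Hand-picked precisions: a weak prior on most weights, a very strong one on four.
pruned = [2, 4, 6, 8]
alpha = np.full(N + 1, 1e-2)
alpha[pruned] = 1e6

mu = posterior_mean(Phi, y, alpha, noise_var=0.01)
print(np.round(mu, 4))
```

Weights with a very large prior precision are pinned close to zero, so the corresponding basis functions effectively drop out of the sum in Eq. 2.51.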

Assuming additive Gaussian noise, the likelihood of the model is formulated as,

$$p(\mathbf{y} \mid \mathbf{w}, \sigma^2) = \left(\frac{1}{2\pi\sigma^2}\right)^{\frac{N}{2}} e^{\left(-\frac{1}{2\sigma^2} \|\mathbf{y} - \tilde{C}\mathbf{w}\|^2\right)}, \tag{2.52}$$