Signal Analysis Research (SAR) Group - RNet - Ryerson University

Signal Analysis Research (SAR) Group - RNet - Ryerson University

Signal Analysis Research (SAR) Group - RNet - Ryerson University

Create successful ePaper yourself

Turn your PDF publications into a flip-book with our unique Google optimized e-Paper software.

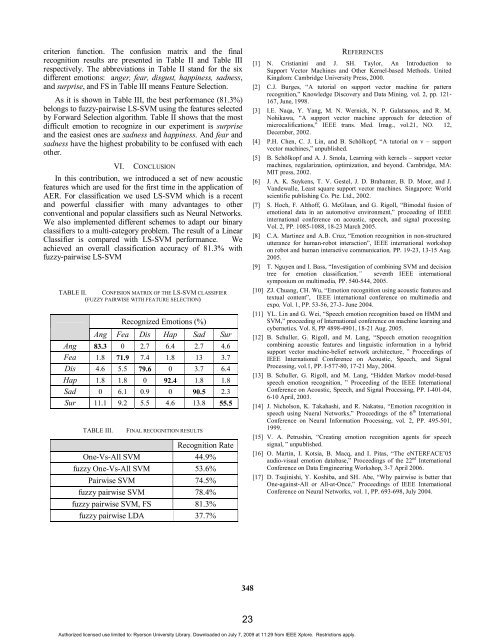

criterion function. The confusion matrix and the final<br />

recognition results are presented in Table II and Table III<br />

respectively. The abbreviations in Table II stand for the six<br />

different emotions: anger, fear, disgust, happiness, sadness,<br />

and surprise, and FS in Table III means Feature Selection.<br />

As it is shown in Table III, the best performance (81.3%)<br />

belongs to fuzzy-pairwise LS-SVM using the features selected<br />

by Forward Selection algorithm. Table II shows that the most<br />

difficult emotion to recognize in our experiment is surprise<br />

and the easiest ones are sadness and happiness. And fear and<br />

sadness have the highest probability to be confused with each<br />

other.<br />

VI. CONCLUSION<br />

In this contribution, we introduced a set of new acoustic<br />

features which are used for the first time in the application of<br />

AER. For classification we used LS-SVM which is a recent<br />

and powerful classifier with many advantages to other<br />

conventional and popular classifiers such as Neural Networks.<br />

We also implemented different schemes to adapt our binary<br />

classifiers to a multi-category problem. The result of a Linear<br />

Classifier is compared with LS-SVM performance. We<br />

achieved an overall classification accuracy of 81.3% with<br />

fuzzy-pairwise LS-SVM<br />

TABLE II. CONFISION MATRIX OF THE LS-SVM CLASSIFIER<br />

(FUZZY PAIRWISE WITH FEATURE SELECTION)<br />

Recognized Emotions (%)<br />

Ang Fea Dis Hap Sad Sur<br />

Ang 83.3 0 2.7 6.4 2.7 4.6<br />

Fea 1.8 71.9 7.4 1.8 13 3.7<br />

Dis 4.6 5.5 79.6 0 3.7 6.4<br />

Hap 1.8 1.8 0 92.4 1.8 1.8<br />

Sad 0 6.1 0.9 0 90.5 2.3<br />

Sur 11.1 9.2 5.5 4.6 13.8 55.5<br />

TABLE III. FINAL RECOGNITION RESULTS<br />

Recognition Rate<br />

One-Vs-All SVM 44.9%<br />

fuzzy One-Vs-All SVM 53.6%<br />

Pairwise SVM 74.5%<br />

fuzzy pairwise SVM 78.4%<br />

fuzzy pairwise SVM, FS 81.3%<br />

fuzzy pairwise LDA 37.7%<br />

348<br />

REFERENCES<br />

[1] N. Cristianini and J. SH. Taylor, An Introduction to<br />

Support Vector Machines and Other Kernel-based Methods. United<br />

Kingdom: Cambridge <strong>University</strong> Press, 2000.<br />

[2] C.J. Burges, “A tutorial on support vector machine for pattern<br />

recognition,” Knowledge Discovery and Data Mining, vol. 2, pp. 121-<br />

167, June, 1998.<br />

[3] I.E. Naqa, Y. Yang, M. N. Wernick, N. P. Galatsanos, and R. M.<br />

Nohikawa, “A support vector machine approach for detection of<br />

microcalifications,” IEEE trans. Med. Imag., vol.21, NO. 12,<br />

December, 2002.<br />

[4] P.H. Chen, C. J. Lin, and B. Schölkopf, “A tutorial on ν – support<br />

vector machines,” unpublished.<br />

[5] B. Schölkopf and A. J. Smola, Learning with kernels – support vector<br />

machines, regularization, optimization, and beyond. Cambridge, MA:<br />

MIT press, 2002.<br />

[6] J. A. K. Suykens, T. V. Gestel, J. D. Brabanter, B. D. Moor, and J.<br />

Vandewalle, Least square support vector machines. Singapore: World<br />

scientific publishing Co. Pte. Ltd., 2002.<br />

[7] S. Hoch, F. Althoff, G. McGlaun, and G. Rigoll, “Bimodal fusion of<br />

emotional data in an automotive environment,” proceeding of IEEE<br />

international conference on acoustic, speech, and signal processing.<br />

Vol. 2, PP. 1085-1088, 18-23 March 2005.<br />

[8] C.A. Martinez and A.B. Cruz, “Emotion recognition in non-structured<br />

utterance for human-robot interaction”, IEEE international workshop<br />

on robot and human interactive communication, PP. 19-23, 13-15 Aug.<br />

2005.<br />

[9] T. Nguyen and I. Bass, “Investigation of combining SVM and decision<br />

tree for emotion classification,” seventh IEEE international<br />

symposium on multimedia, PP. 540-544, 2005.<br />

[10] ZJ. Chuang, CH. Wu, “Emotion recognition using acoustic features and<br />

textual content”, IEEE international conference on multimedia and<br />

expo, Vol. 1, PP. 53-56, 27-3- June 2004.<br />

[11] YL. Lin and G. Wei, “Speech emotion recognition based on HMM and<br />

SVM,” proceeding of International conference on machine learning and<br />

cybernetics, Vol. 8, PP 4898-4901, 18-21 Aug. 2005.<br />

[12] B. Schuller, G. Rigoll, and M. Lang, “Speech emotion recognition<br />

combining acoustic features and linguistic information in a hybrid<br />

support vector machine-belief network architecture, ” Proceedings of<br />

IEEE International Conference on Acoustic, Speech, and <strong>Signal</strong><br />

Processing, vol.1, PP. I-577-80, 17-21 May, 2004.<br />

[13] B. Schuller, G. Rigoll, and M. Lang, “Hidden Markov model-based<br />

speech emotion recognition, ” Proceeding of the IEEE International<br />

Conference on Acoustic, Speech, and <strong>Signal</strong> Processing, PP. I-401-04,<br />

6-10 April, 2003.<br />

[14] J. Nicholson, K. Takahashi, and R. Nakatsu, “Emotion recognition in<br />

speech using Nueral Networks,” Proceedings of the 6 th International<br />

Conference on Neural Information Processing, vol. 2, PP. 495-501,<br />

1999.<br />

[15] V. A. Petrushin, “Creating emotion recognition agents for speech<br />

signal, ” unpublished.<br />

[16] O. Martin, I. Kotsia, B. Macq, and I. Pitas, “The eNTERFACE’05<br />

audio-visual emotion database,” Proceedings of the 22 nd International<br />

Conference on Data Emgineering Workshop, 3-7 April 2006.<br />

[17] D. Tsujinishi, Y. Koshiba, and SH. Abe, “Why pairwise is better that<br />

One-against-All or All-at-Once,” Proceedings of IEEE International<br />

Conference on Neural Networks, vol. 1, PP. 693-698, July 2004.<br />

Authorized licensed use limited to: <strong>Ryerson</strong> <strong>University</strong> Library. Downloaded on July 7, 2009 at 11:29 from IEEE Xplore. Restrictions apply.<br />

23