Non-parametric estimation of a time varying GARCH model

Non-parametric estimation of a time varying GARCH model

Non-parametric estimation of a time varying GARCH model

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

<strong>Non</strong>-<strong>parametric</strong> <strong>estimation</strong> <strong>of</strong> a <strong>time</strong><br />

<strong>varying</strong> <strong>GARCH</strong> <strong>model</strong><br />

by Neelabh Rohan and T. V. Ramanathan<br />

Technical Report 3/2011<br />

Department <strong>of</strong> Statistics and Centre for Advanced Studies<br />

University <strong>of</strong> Pune, 411 007, INDIA<br />

May, 2012 (Revised)<br />

1

<strong>Non</strong>-<strong>parametric</strong> <strong>estimation</strong> <strong>of</strong> a <strong>time</strong><br />

<strong>varying</strong> <strong>GARCH</strong> <strong>model</strong><br />

Neelabh Rohan 1 and T. V. Ramanathan 2<br />

Department <strong>of</strong> Statistics and Centre for Advanced Studies<br />

University <strong>of</strong> Pune, 411 007, INDIA<br />

Abstract<br />

In this paper, a non-stationary <strong>time</strong>-<strong>varying</strong> <strong>GARCH</strong> (tv<strong>GARCH</strong>) <strong>model</strong> has been<br />

introduced by allowing the parameters <strong>of</strong> a stationary <strong>GARCH</strong> <strong>model</strong> to vary as functions<br />

<strong>of</strong> <strong>time</strong>. It is shown that the tv<strong>GARCH</strong> process is locally stationary in the sense that it<br />

can be locally approximated by stationary <strong>GARCH</strong> processes at fixed <strong>time</strong> points. We<br />

develop a two step local polynomial procedure for the <strong>estimation</strong> <strong>of</strong> the parameter functions<br />

<strong>of</strong> the proposed <strong>model</strong>. Several asymptotic properties <strong>of</strong> the estimators have been<br />

established including the asymptotic optimality. It has been found that the tv<strong>GARCH</strong><br />

<strong>model</strong> performs better than many <strong>of</strong> the standard <strong>GARCH</strong> <strong>model</strong>s for various real data<br />

sets.<br />

Mathematical Subject classification: 62M10, 62G05<br />

Keywords: Local polynomial <strong>estimation</strong>, <strong>time</strong>-<strong>varying</strong> <strong>GARCH</strong>, volatility <strong>model</strong>ling.<br />

1 Corresponding author Email: neelabh.stats@yahoo.co.in<br />

2 Email: ram@stats.unipune.ac.in<br />

2

1 Introduction<br />

The first decade <strong>of</strong> the 21 st century left the global economies grappling with the conse-<br />

quences <strong>of</strong> the financial crisis followed by an uninvited rash <strong>of</strong> currency wars. Many <strong>of</strong><br />

the emerging economies started receiving large capital inflows that have the potential to<br />

destabilizing the economy. Perhaps, the most deleterious consequence <strong>of</strong> capital inflows<br />

has been the strengthening <strong>of</strong> domestic currency, which can lead to a loss in export com-<br />

petitiveness. This, in turn led to currency wars-the phenomenon <strong>of</strong> several emerging and<br />

developed countries intervening in currency market simultaneously in order to ensure that<br />

their currency will not be the only one that appreciates. Such a phenomenon may induce<br />

instability and hence non-stationarity in the bilateral exchange rate volatility process,<br />

implying the failure <strong>of</strong> standard stationary volatility <strong>model</strong>s. In this paper, we address<br />

this problem by considering a <strong>GARCH</strong> <strong>model</strong> with <strong>time</strong> <strong>varying</strong> parameters.<br />

<strong>Non</strong>-stationary volatility <strong>model</strong>s have got considerable attention recently, see for ex-<br />

ample Mercurio and Spokoiny (2004), Mikosch and Starica (2004), Starica and Granger<br />

(2005), Dahlhaus and Subba Rao (2006), Amado and Terasvirta (2008), Fryzlewicz, Sap-<br />

atinas and Subba Rao (2008) and Chen and Hong (2009) and among others. Dahlhaus<br />

and Subba Rao (2006) proposed a <strong>time</strong>-<strong>varying</strong> ARCH (tvARCH) <strong>model</strong> for the volatil-<br />

ity process by allowing the parameters <strong>of</strong> a stationary ARCH <strong>model</strong> to change slowly<br />

through <strong>time</strong>. Fryzlewicz et al. (2008) developed a least-squares <strong>estimation</strong> procedure<br />

for such a tvARCH <strong>model</strong>. We generalize the tvARCH <strong>model</strong> introduced by Dahlhaus<br />

and Subba Rao (2006) to <strong>time</strong> <strong>varying</strong> <strong>GARCH</strong> (tv<strong>GARCH</strong>) by allowing the parameters<br />

<strong>of</strong> a stationary <strong>GARCH</strong> <strong>model</strong> to vary as functions <strong>of</strong> <strong>time</strong>.<br />

Dahlhaus and Subba Rao (2006) showed that the tvARCH <strong>model</strong> can be approxi-<br />

mated by stationary ARCH processes locally. We extend their results to the tv<strong>GARCH</strong><br />

<strong>model</strong> and show that a non-stationary tv<strong>GARCH</strong> process can be locally approximated by<br />

stationary processes at specific <strong>time</strong> points. Therefore, the tv<strong>GARCH</strong> <strong>model</strong> is asymp-<br />

totically locally stationary at every point <strong>of</strong> observation, but it is globally non-stationary<br />

because <strong>of</strong> <strong>time</strong>-<strong>varying</strong> parameters. Such an approximation further helps us in deriving<br />

the asymptotic distribution <strong>of</strong> the estimators.<br />

An alternative approach to incorporate non-stationarity in the volatility process is the<br />

<strong>varying</strong> coefficient <strong>GARCH</strong> <strong>model</strong> (see Číˇzek and Spkoiny (2009) and references therein).<br />

The <strong>estimation</strong> <strong>of</strong> a <strong>varying</strong> coefficient <strong>GARCH</strong> <strong>model</strong> requires the search for local <strong>time</strong><br />

3

intervals <strong>of</strong> homogeneity over the entire period, such that the parameters <strong>of</strong> the process<br />

remain nearly a constant over each interval. The <strong>estimation</strong> is carried out using the<br />

quasi-maximum likelihood (QML) approach. However, the QML procedure is not very<br />

reliable when the sample size is small, since the quasi-likelihood tends to be shallow about<br />

the minimum for small sample sizes, see Shephard (1996), Bose and Mukherjee (2003)<br />

and Fryzlewicz et al. (2008). In addition, the QML estimator does not admit a closed<br />

form solution. The <strong>model</strong> and <strong>estimation</strong> procedure <strong>of</strong> Amado and Terasvirta (2008) also<br />

suffers from similar drawbacks.<br />

We develop a two-step local polynomial <strong>estimation</strong> procedure for the <strong>estimation</strong> <strong>of</strong> the<br />

proposed tv<strong>GARCH</strong> <strong>model</strong>. One can refer to Wand and Jones (1995), Fan and Gijbels<br />

(1996) and Fan and Zhang (1999) among others for the application <strong>of</strong> local polynomial<br />

techniques in various regression <strong>model</strong>s. The proposed two-step <strong>estimation</strong> procedure<br />

requires the <strong>estimation</strong> <strong>of</strong> a tvARCH <strong>model</strong> initially in the first step. In the second step,<br />

we obtain the estimator <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> using the initial estimator. Expressions<br />

for the asymptotic bias and variance <strong>of</strong> the estimators in both the steps are derived and<br />

asymptotic normality is established. It is found that the asymptotic MSE <strong>of</strong> estimators<br />

<strong>of</strong> the parameter functions <strong>of</strong> tv<strong>GARCH</strong> <strong>model</strong> remain invariable for a wide range <strong>of</strong> the<br />

initial step bandwidths, thus making it computation friendly. Moreover, our estimator<br />

achieves the optimal rate <strong>of</strong> convergence under a higher order differentiability assumption<br />

<strong>of</strong> the parameter functions.<br />

Even though this paper deals with tv<strong>GARCH</strong> (1,1) process only, the results presented<br />

here can be extended to a general tv<strong>GARCH</strong> (p,q) with appropriate modifications. In<br />

the empirical analysis <strong>of</strong> financial data, lower order <strong>GARCH</strong> (1,1) <strong>model</strong> has <strong>of</strong>ten been<br />

found appropriate to account for the conditional heteroscedasticity. It usually describes<br />

the dynamics <strong>of</strong> conditional variance <strong>of</strong> many economic <strong>time</strong> series quite well, see for<br />

example Palm (1996). Therefore, in this paper we concentrate on tv<strong>GARCH</strong> (1,1) <strong>model</strong>.<br />

We illustrate the performance <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> using various bilateral ex-<br />

change rate and stock indices data in the past decade. The tv<strong>GARCH</strong> <strong>model</strong> is shown<br />

to outperform several stationary <strong>GARCH</strong> as well as tvARCH <strong>model</strong>s in terms <strong>of</strong> both<br />

in-sample and out <strong>of</strong> sample prediction. The <strong>model</strong> is also found to be performing better<br />

than a long memory <strong>model</strong> in predicting the volatility.<br />

The rest <strong>of</strong> the paper is organized as follows. A tv<strong>GARCH</strong> <strong>model</strong> and its properties<br />

4

have been discussed in Section 2. Section 3 develops a two step local polynomial estima-<br />

tion procedure for the <strong>model</strong>. We establish the asymptotic properties <strong>of</strong> the estimators<br />

in Section 4. Several applications <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> are given in Section 5. All the<br />

pro<strong>of</strong>s are deferred to the Appendix.<br />

2 A <strong>time</strong> <strong>varying</strong> <strong>GARCH</strong> <strong>model</strong><br />

Let {ǫt} be a process such that E(ǫt|Ft−1) = 0 and E(ǫ 2 t |Ft−1) = σ 2 t , where Ft−1 =<br />

σ(ǫt−1,ǫt−2,...). Suppose {vt} is a sequence, independent <strong>of</strong> {ǫt}, <strong>of</strong> real valued indepen-<br />

dent and identically distributed random variables, having mean 0 and variance 1. Then<br />

a <strong>GARCH</strong> <strong>model</strong> with <strong>time</strong> <strong>varying</strong> parameters is defined as<br />

ǫt = σtvt,<br />

σ 2 t = ω(t) + α(t)ǫ 2 t−1 + β(t)σ 2 t−1<br />

where ω(·), α(·) and β(·) are certain non-negative functions <strong>of</strong> <strong>time</strong>.<br />

In order to obtain a meaningful asymptotic theory, we rescale the domain <strong>of</strong> the<br />

parameter functions <strong>of</strong> (1) to unit interval. That is, we study the following process,<br />

σ2 t = ω � �<br />

t<br />

n<br />

+ α � �<br />

t<br />

n<br />

ǫt = σtvt,<br />

ǫ 2 t−1 + β � t<br />

n<br />

�<br />

σ 2 t−1, t = 1, 2,...,n.<br />

The sequence <strong>of</strong> stochastic processes {ǫt, t = 1, 2,...,n} is said to follow a tv<strong>GARCH</strong><br />

process if it satisfies (2). Here ω(u),α(u),β(u) ≥ 0 ∀ u ∈ (0, 1] ensure the non-negativity<br />

<strong>of</strong> σ 2 t . We define ω(u),α(u),β(u) = 0 for u < 0. Such a rescaling is a common technique in<br />

non-<strong>parametric</strong> regression and it does not affect the <strong>estimation</strong> procedure, see Dahlhaus<br />

and Subba Rao (2006).<br />

Now we show that the tv<strong>GARCH</strong> process can be locally approximated by stationary<br />

<strong>GARCH</strong> processes at specific <strong>time</strong> points. This allows us to refer the tv<strong>GARCH</strong> as a lo-<br />

cally stationary process. Towards this, first we state the following technical assumptions:<br />

Assumption 1. (i) There exists δ > 0 such that<br />

0 < α(u) + β(u) ≤ 1 − δ, ∀ 0 < u ≤ 1 and supω(u)<br />

< ∞.<br />

u<br />

(ii) There exist finite constants M1,M2 and M3 such that ∀ u1,u2 ∈ (0, 1],<br />

|ω(u1) − ω(u2)| ≤ M1|u1 − u2|<br />

|α(u1) − α(u2)| ≤ M2|u1 − u2|<br />

|β(u1) − β(u2)| ≤ M3|u1 − u2|.<br />

5<br />

(1)<br />

(2)

The Assumption 1 (i) here is similar in spirit to the stationarity condition for <strong>GARCH</strong><br />

(1,1) <strong>model</strong> discussed by Nelson (1991). This condition is required for the existence<br />

<strong>of</strong> a well defined unique solution to the tv<strong>GARCH</strong> process. It is also sufficient for the<br />

tv<strong>GARCH</strong> to be a short memory process. The Lipschitz continuity condition for the<br />

parameters in Assumption 1 (ii) is required for the local stationarity <strong>of</strong> the tv<strong>GARCH</strong><br />

process. Similar condition is also assumed by Dahlhaus and Subba Rao (2006) for pa-<br />

rameters <strong>of</strong> the tvARCH process. Notice that we do not make any assumption on the<br />

density function <strong>of</strong> ǫt. Therefore, the methodology introduced in the paper will be useful<br />

for analyzing data with heavy tailed distributions which is a common phenomenon in<br />

financial <strong>time</strong> series.<br />

Before proceeding further, we show in Proposition 2.1 that the tv<strong>GARCH</strong> process<br />

possesses a well defined unique solution. In the Proposition 2.2, we derive the covariance<br />

structure <strong>of</strong> the tv<strong>GARCH</strong> process and show that tv<strong>GARCH</strong> is a short memory process.<br />

Proposition 2.1. Let the Assumption 1 (i) hold. Then the variance process (2) has<br />

a well defined unique solution given by<br />

¯σ 2 t = ω � �<br />

t + n<br />

∞� i� �<br />

α<br />

i=1 j=1<br />

� �<br />

t−j+1<br />

v n<br />

2 t−j + β � ��<br />

t−j+1<br />

ω n<br />

� �<br />

t−i , n<br />

such that |σ 2 t − ¯σ 2 t | → 0 a.s., if σ 2 0 (starting point) is finite with probability one. Also,<br />

inf<br />

u ω(u)/(1 − inf<br />

u β(u)) ≤ ¯σ 2 t < ∞ ∀ t a.s.<br />

Proposition 2.2. Suppose that the Assumption 1 (i) is satisfied for the tv<strong>GARCH</strong><br />

process. Further assume that E|vt| 4 < ∞. Then for a fixed k ≥ 0 and 0 < δ < 1,<br />

Cov(ǫ 2 t,ǫ 2 t+k) = O �<br />

(1 − δ) k�<br />

.<br />

Now we define a stationary <strong>GARCH</strong> (1,1) process, which locally approximates the original<br />

process (2) in the neighborhood <strong>of</strong> a fixed point (see Proposition 2.3). Let �ǫt(u0), u0 ∈<br />

(0, 1] be a process with E(�ǫt(u0)| � Ft−1) = 0 and E(�ǫ 2 t(u0)| � Ft−1) = �σ 2 t (u0) where � Ft−1 =<br />

σ(�ǫt−1, �ǫt−2,...). Then {�ǫt(u0)} is said to follow a stationary <strong>GARCH</strong> process associated<br />

with (2) at <strong>time</strong> point u0 if it satisfies,<br />

�ǫt(u0) = �σt(u0)vt,<br />

�σ 2 t (u0) = ω(u0) + α(u0)�ǫ 2 t−1(u0) + β(u0)�σ 2 t−1(u0).<br />

6<br />

(3)

Under Assumption 1(i), (3) is a stationary ergodic process. It is also sufficient for �ǫt(u0)<br />

to be weakly stationary. A unique stationary ergodic solution to (3) is<br />

¯σ 2 t (u0) = ω (u0) + ∞� i� �<br />

α (u0)v<br />

i=1 j=1<br />

2 t−j + β (u0) �<br />

ω (u0). (4)<br />

Here |¯σ 2 t (u0) − �σ 2 t (u0)| → 0 a.s. (see Nelson (1991)). Now in the following proposition,<br />

we show that if the <strong>time</strong> point (t/n) is close to u0, then (3) can be locally considered as<br />

an approximation to (2).<br />

Proposition 2.3. Suppose that the Assumptions 1 (i) and (ii) are satisfied, then the<br />

process {ǫ 2 t } can be approximated locally by a stationary ergodic process {�ǫ 2 t(u0)}. That<br />

is, there exists a well defined stationary ergodic process Vt independent <strong>of</strong> u0 and a con-<br />

stant Q < ∞ such that<br />

or equivalently<br />

|ǫ 2 t − �ǫ 2 t(u0)| ≤ Q �� � � t<br />

n<br />

ǫ 2 t = �ǫ 2 t + OP<br />

�� �� t<br />

n<br />

�<br />

�<br />

− u0<br />

We can also write (2) by recursive substitution,<br />

where<br />

α0( t<br />

n ) = ω � �<br />

t<br />

n<br />

k = 1, 2,...t − 1.<br />

σ 2 t = α0( t<br />

n<br />

+ t−1 �<br />

k=1<br />

t−1 �<br />

) +<br />

k=1<br />

ω � � k� t−k<br />

n<br />

i=1<br />

�<br />

�<br />

− u0<br />

� + 1<br />

αk( t<br />

n )ǫ2 t−k + σ 2 0<br />

n<br />

� + 1<br />

�<br />

Vt a.s.<br />

n<br />

t�<br />

i=1<br />

�<br />

.<br />

β � �<br />

t−i+1 ,αk( n<br />

t<br />

n ) = α � t−k+1<br />

n<br />

β � �<br />

t−i+1 , (5)<br />

n<br />

� k−1 �<br />

i=1<br />

β � �<br />

t−i+1 , n<br />

Here we take 0�<br />

β<br />

i=1<br />

� �<br />

t−i+1 = 1. Notice that the functions αk(·) here are geometrically<br />

n<br />

decaying as k → ∞ under Assumption 1(i). Also, if σ2 0 is finite with probability one, then<br />

σ2 t�<br />

0 β<br />

i=1<br />

� �<br />

t−i+1 P→ P<br />

0 as t → ∞, n → ∞. Here, → denotes convergence in probability.<br />

n<br />

3 Local polynomial <strong>estimation</strong><br />

The local polynomial <strong>estimation</strong> <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> (2) can be carried out in two<br />

steps. In Step 1, we obtain a preliminary estimate <strong>of</strong> σ 2 t using a <strong>time</strong> <strong>varying</strong> ARCH<br />

(p) <strong>model</strong>, exploiting the representation (5) <strong>of</strong> tv<strong>GARCH</strong>. In the second step, we finally<br />

7

each the estimators <strong>of</strong> the parameter functions <strong>of</strong> tv<strong>GARCH</strong>. It has been shown that<br />

with appropriately chosen bandwidth, the rate <strong>of</strong> convergence <strong>of</strong> the MSE <strong>of</strong> final esti-<br />

mates become independent <strong>of</strong> the initial step estimates.<br />

Step 1. First, we obtain a preliminary estimate <strong>of</strong> σ 2 t using the following tvARCH<br />

(p) <strong>model</strong>;<br />

which can also be written as<br />

σ2 t = α0( t t ) + α1( n n )ǫ2t−1 ... + αp( t<br />

n )ǫ2t−p ǫ2 t = α0( t t ) + α1( n n )ǫ2t−1 ... + αp( t<br />

n )ǫ2t−p + σ2 t (v2 t − 1).<br />

Here, p is such that p = pn → ∞ as n → ∞. Among several choices <strong>of</strong> such a p, one<br />

specific choice is log n. The asymptotic results derived in Section 4 for the tv<strong>GARCH</strong><br />

<strong>model</strong> hold for pn → ∞. However, we drop the suffix n for notational simplicity. We<br />

use local polynomial technique to estimate the functions αi(u), i = 0, 1,...p, treating<br />

σ 2 t (v 2 t −1) as error. Now onwards, we will denote (t/n) = ut. We assume that the function<br />

αi(·) possesses a bounded continuous derivative up to order d + 1, (d ≥ 1) (see Section<br />

4). Using Taylor’s series expansion, the function αi(u) can locally be approximated in<br />

the neighborhood <strong>of</strong> a point u0 by,<br />

αi(ut) ≈ αi0 + αi1(ut − u0) + ... + αid(ut − u0) d , i = 0, 1,...,p<br />

where αij, j = 0, 1,...d are constants. Therefore, given a Kernel function K(·), we get<br />

the estimator by minimizing,<br />

L = n�<br />

�<br />

ǫ<br />

i=p+1<br />

2 i − d�<br />

(α0k +<br />

k=0<br />

p �<br />

αjkǫ<br />

j=1<br />

2 i−j)(ui − u0) k<br />

�2 where Kh1(·) = (1/h1)K(·/h1) and h1 denotes the bandwidth. Define<br />

Ut = [1, (ut − u0),...,(ut − u0) d ]1×(d+1) t = 1, 2,...,n ,<br />

⎡<br />

⎢<br />

X1 = ⎢<br />

⎣<br />

Up+1 ǫ 2 pUp+1 ... ǫ 2 1Up+1<br />

Up+2 ǫ 2 p+1Up+2 ... ǫ 2 2Up+2<br />

.<br />

.<br />

...<br />

Un ǫ 2 n−1Un ... ǫ 2 n−pUn<br />

.<br />

⎤<br />

Kh1(ui − u0) (6)<br />

W1 = diag(Kh1(up+1 − u0),...,Kh1(un − u0)) and Y1 = [ǫ 2 p+1,...ǫ 2 n] ⊤ .<br />

8<br />

⎥<br />

⎦ ,

The estimator <strong>of</strong> αi(u0) as a solution to least-squares problem (6) can be expressed as,<br />

ˆαi(u0) = e ⊤ i(d+1)+1,(p+1)(d+1) (X⊤ 1 W1X1) −1 X ⊤ 1 W1Y1, i = 0, 1,...,p. (7)<br />

Here and throughout the paper, we use the notation ek,m for a column vector <strong>of</strong> length<br />

m with 1 at k th position and 0 elsewhere. Therefore, an initial estimate <strong>of</strong> σ 2 t is obtained<br />

by,<br />

ˆσ 2 t = ˆα0(ut) + p �<br />

ˆαk(ut)ǫ<br />

k=1<br />

2 t−k,<br />

where ˆα0(ut) and ˆαk(ut) represent the estimators <strong>of</strong> α0(ut) and αk(ut) respectively. They<br />

are calculated using (7) at ut. We set ǫ 2 t = 0, ∀ t ≤ 0 for the practical implementation.<br />

This method can also be used for the <strong>estimation</strong> <strong>of</strong> a tvARCH (p) <strong>model</strong> <strong>of</strong> Dahlhaus<br />

and Subba Rao (2006).<br />

Step 2. In this step, we use the conditional variance initially estimated in Step 1 to<br />

get the estimates <strong>of</strong> the parameter functions <strong>of</strong> tv<strong>GARCH</strong> process. The parameter func-<br />

tions ω(·),α(·) and β(·) are assumed to be continuously differentiable up to order d + 1.<br />

Using Taylor’s series expansion, we can write,<br />

ω(ut) ≈ ω02 + ω12(ut − u0) + ... + ωd2(ut − u0) d<br />

α(ut) ≈ a02 + a12(ut − u0) + ... + ad2(ut − u0) d<br />

β(ut) ≈ b02 + b12(ut − u0) + ... + bd2(ut − u0) d<br />

where ωi2,ai2 and bi2, i = 0, 1,...,d are constants. We can write (2) as<br />

ǫ2 t = ω( t t ) + α( n n )ǫ2 t−1 + β( t<br />

n )ˆσ2 t−1 − β( t<br />

n )(ˆσ2 t−1 − σ2 t−1) + σ2 t (v2 t − 1). (8)<br />

Corollary 2 (in Section 4) shows that for a particular choice <strong>of</strong> the Step 1 bandwidth<br />

h1 = o(h2), E(ˆσ 2 t−1 − σ 2 t−1) is asymptotically negligible. Here h2 denotes the bandwidth<br />

in the Step 2. The estimates are obtained by minimizing<br />

Define<br />

L = n�<br />

�<br />

i=2<br />

ǫ2 i − d�<br />

(ωk2 + ak2ǫ<br />

k=0<br />

2 i−1 + bk2ˆσ 2 i−1)(ui − u0) k<br />

�2 ⎡<br />

⎢<br />

X2 = ⎢<br />

⎣ .<br />

U2 ǫ 2 1U2 ˆσ 2 1U2<br />

U3 ǫ 2 2U3 ˆσ 2 2U3<br />

.<br />

Un ǫ 2 n−1Un ˆσ 2 n−1Un<br />

.<br />

⎤<br />

⎥<br />

⎦ ,<br />

Kh2(ui − u0).<br />

W2 = diag(Kh2(u2 − u0),...,Kh2(un − u0)), and Y2 = [ǫ 2 2,...,ǫ 2 n] ⊤ .<br />

9

Then, the exact expressions for the estimators are given by<br />

ˆω(u0) = e ⊤ 1,3(d+1) (X⊤ 2 W2X2) −1 X ⊤ 2 W2Y2,<br />

ˆα(u0) = e ⊤ d+2,3(d+1) (X⊤ 2 W2X2) −1 X ⊤ 2 W2Y2 and<br />

ˆβ(u0) = e ⊤ 2d+3,3(d+1) (X⊤ 2 W2X2) −1 X ⊤ 2 W2Y2.<br />

The final estimates <strong>of</strong> σ 2 t in tv<strong>GARCH</strong> <strong>model</strong> can be obtained using these estimators.<br />

These estimators achieve the optimal rate <strong>of</strong> convergence when an optimal bandwidth is<br />

used (see Section 4).<br />

3.1 Bandwidth selection<br />

As will be discussed in the next section, the two step estimator is not very sensitive to the<br />

choice <strong>of</strong> initial bandwidth h1 as long as it is small enough, so that the bias in the first<br />

step is asymptotically negligible. Therefore, one can simply apply the standard univariate<br />

bandwidth selection procedures to select the smoothing parameter for Step 2. The initial<br />

smoothing parameter can be chosen according to the second step bandwidth. For the<br />

practical implementation, we select the optimal bandwidth (h2) using the cross validation<br />

method based on the best linear predictor <strong>of</strong> ǫ2 t given the past (see Hart (1994)), which<br />

is, ω � �<br />

t + α n<br />

� �<br />

t ǫ n<br />

2 t−1 + β � �<br />

t σ n<br />

2 t−1. That is, such a bandwidth (h2) is chosen for which,<br />

CV (h2) = 1<br />

n−1<br />

n�<br />

t=2<br />

�<br />

ǫ2 t − ˆω −t (ut) − ˆα −t (ut)ǫ2 t−1 − ˆ β−t (ut)σ2 �2 t−1<br />

is minimum, where ˆω −t (ut), ˆα −t (ut) and ˆ β−t (ut) denote the local polynomial estimators<br />

<strong>of</strong> ω � �<br />

t ,α n<br />

� �<br />

t and β n<br />

� �<br />

t obtained by leaving the t n<br />

th observation. A pilot bandwidth is<br />

chosen initially to get the initial estimate <strong>of</strong> σ 2 t−1 using the full data. Using the similar<br />

arguments as in Hart (1994), asymptotically it can be shown that such a bandwidth is<br />

a minimizer <strong>of</strong> the mean squared prediction error <strong>of</strong> ǫ 2 t. The pilot bandwidth should be<br />

small enough to be <strong>of</strong> o(h2) and at the same <strong>time</strong>, should satisfy nh1 → ∞. In case, if<br />

h2 comes out be such that the pilot bandwidth is not <strong>of</strong> o(h2), the above cross validation<br />

procedure can be repeated by choosing even smaller initial bandwidth.<br />

However, it is not feasible to compute (9) practically, as it requires the repeated<br />

refitting <strong>of</strong> the <strong>model</strong> after deletion <strong>of</strong> the data points each <strong>time</strong>. The bandwidth selection<br />

procedure is computationally too cumbersome, specially when n is large. Therefore we<br />

provide a simplified version <strong>of</strong> (9) to reduce the computational complexity and make the<br />

bandwidth selection easy and doable. This has been described in the Appendix B.<br />

10<br />

(9)

4 Asymptotic results<br />

Towards proving the asymptotic results corresponding to estimators in Steps 1 and 2, we<br />

first state the following standard technical assumptions and then introduce some nota-<br />

tions:<br />

Assumption 2. (i) The functions ω(·),α(·) and β(·) (and hence αj(·)) have the bounded<br />

and continuous derivatives up to order d+1 (d ≥ 1), in a neighborhood <strong>of</strong> u0, u0 ∈ (0, 1].<br />

(ii) K(u) is a symmetric density function <strong>of</strong> bounded variation with a compact support.<br />

(iii) The bandwidths h1 and h2 are such that h1 → 0,h2 → 0 and nh1 → ∞,nh2 → ∞<br />

as n → ∞.<br />

(iv) E|vt| 4 < ∞.<br />

Notations.<br />

µi = � u i K(u)du, νi = � u i K 2 (u)du,<br />

S = S(u0) = E �<br />

[1, �ǫ 2 t−1(u0),..., �ǫ 2 t−p(u0)] ⊤ [1, �ǫ 2 t−1(u0),..., �ǫ 2 t−p(u0)] �<br />

,<br />

Cj = Cj(u0) = E(�ǫ 2 t(u0) �ǫ 2 t−j(u0)),<br />

Ω = Ω(u0) = E �<br />

�σ 4 t (u0)[1, �ǫ 2 t−1(u0),..., �ǫ 2 t−p(u0)] ⊤ [1, �ǫ 2 t−1(u0),..., �ǫ 2 t−p(u0)] �<br />

,<br />

wj = E(�ǫ j<br />

t(u0)), αtvARCH(u0) = [α0(u0),α1(u0),...,αp(u0)] ⊤ ,<br />

Di = [µd+1,hiµd+2,...,h d iµ2d+1] ⊤ , i = 1, 2,<br />

em = a column vector <strong>of</strong> length m with 1 everywhere,<br />

⎡<br />

⎢<br />

Ai = ⎢<br />

⎣<br />

⎡<br />

⎢<br />

Bi = ⎢<br />

⎣ .<br />

1 hiµ1 ... hd iµd<br />

hiµ1 h2 iµ2 ... h d+1<br />

...<br />

. . .<br />

i µd+1<br />

h d iµd h d+1<br />

i µd+1 ... h 2d<br />

i µ2d<br />

ν0 hiν1 ... hd iνd<br />

hiν1 h2 iν2 ... h d+1<br />

i νd+1<br />

.<br />

...<br />

h d iνd h d+1<br />

i νd+1 ... h 2d<br />

i ν2d<br />

.<br />

⎤<br />

⎥<br />

⎦ ,<br />

⎤<br />

⎥ , i = 1, 2.<br />

⎦<br />

In the following theorem, we obtain the exact expressions for the biases <strong>of</strong> the estimators<br />

<strong>of</strong> tvARCH (p) <strong>of</strong> Step 1.<br />

Theorem 4.1 Let the Assumptions 1 and 2 be satisfied. Then the asymptotic bias <strong>of</strong><br />

ˆαj(u0), j = 0, 1,...,p is given by,<br />

Bias(ˆαj(u0)) = hd+1<br />

�<br />

1<br />

(d+1)!<br />

α (d+1)<br />

j<br />

(u0) �<br />

e ⊤ 1,d+1A −1<br />

1 D1 + oP(h d+1<br />

1 ).<br />

11

Further, if E|vt| 8 < ∞, then the asymptotic variance <strong>of</strong> the estimator is<br />

V ar(ˆα0(u0),..., ˆαp(u0))<br />

= 1<br />

nh1 e⊤ 1,d+1A −1<br />

1 B1A −1<br />

1 e1,d+1V ar(v 2 t )S −1 ΩS −1 (1 + oP(1)),<br />

Interestingly, the bias expression for ˆαj(u0) depends on the (d+1) th derivative <strong>of</strong> αj(u0)<br />

only due to the structure <strong>of</strong> the <strong>model</strong>. The procedure introduced in Step 1 can be<br />

used for the <strong>estimation</strong> <strong>of</strong> a <strong>time</strong> <strong>varying</strong> ARCH (p) <strong>model</strong>. Now it is clear that the<br />

MSE <strong>of</strong> the estimator ˆαj(u0) is OP(h 2d+2<br />

1<br />

+ (nh1) −1 ). Also, when the optimal bandwidth<br />

h1 = O(n −1/(2d+3) ) is used, then the local polynomial estimator achieves the optimal rate<br />

<strong>of</strong> convergence OP(n −(2d+2)/(2d+3) ) for estimating αj(u0). Notice that for d = 3, the opti-<br />

mal convergence rate is OP(n −8/9 ). Now in the following corollary, we show the asymptotic<br />

normality <strong>of</strong> the estimator as a simple application <strong>of</strong> the martingale central limit theorem.<br />

Corollary 4.1. Under the same assumptions as that <strong>of</strong> Theorem 4.1,<br />

√<br />

nh1 (ˆαtvARCH(u0) − αtvARCH(u0) − b(u0)) D �<br />

→<br />

Np+1 0,e ⊤ 1,d+1A −1<br />

1 B1A −1<br />

1 e1,d+1V ar(v2 t )S−1ΩS −1�<br />

where b(u0) = Bias(ˆαtvARCH(u0)) and D → denotes the convergence in distribution.<br />

Corollary 4.2. Let ˆσ 2 t = ˆαtvARCH(ut) ⊤ [1,ǫ2 t−1,...,ǫ 2 t−p] ⊤ (p+1)×1 . Then under the Assump-<br />

tions 1 and 2,<br />

where 0 < ρ < 1 and pn → ∞ as n → ∞.<br />

Bias(ˆσ 2 t ) = E(ˆσ 2 t − σ 2 t ) = OP(h d+1<br />

1 ) + O(ρ pn )<br />

Corollary 4.2 can be proved using Proposition 2.2, equation (5) and Theorem 4.1. It<br />

shows that the choice <strong>of</strong> pn will contribute towards the bias <strong>of</strong> the conditional variance<br />

in the initial step by a term which decays geometrically. Therefore, this term will have<br />

negligible effect on final estimators as pn → ∞. In Theorem 4.2, we derive the asymp-<br />

totic bias and the variance <strong>of</strong> the estimators <strong>of</strong> tv<strong>GARCH</strong> parameter functions obtained<br />

in Step 2. Towards this, first we introduce few more notations.<br />

12

Notations.<br />

bj = bj(u0) = Bias(ˆαj(u0)), δj = δj(u0) = αj(u0) + bj(u0), j = 0, 1,...,p,<br />

λ1 = δ0 + p �<br />

δjw2, λ2 = δ0w2 + p �<br />

δjCj,<br />

j=1<br />

λ3 = δ2 p�<br />

0 + 2δ0w2<br />

j=1<br />

j=1<br />

δj + p �<br />

δ2 jw4 + 2<br />

j=1<br />

λ1b = b0 + p �<br />

bjw2, λ2b = b0w2 + p �<br />

bjCj,<br />

j=1<br />

p� p�<br />

λ3b = δ0b0 + (b0 δj + δ0<br />

j=1 j=1<br />

j=1<br />

p�<br />

δiδjCj−i,<br />

i,j=1(i

It is interesting to note that the bias expressions are free <strong>of</strong> the derivatives <strong>of</strong> other pa-<br />

rameter functions. Also, if h1 = o(h2), then δj = αj(u0) + oP(h d+1<br />

2 ) and the variance<br />

<strong>of</strong> the estimator does not depend on the first step bandwidth. This means that when<br />

the optimal bandwidth is used, then the <strong>estimation</strong> remains unaffected for a large choice<br />

<strong>of</strong> initial step bandwidth. This makes the <strong>estimation</strong> procedure relatively easy to imple-<br />

ment. The MSE <strong>of</strong> the final estimator is OP(h 2d+2<br />

2 +(nh2) −1 ), which is independent <strong>of</strong> the<br />

initial step bandwidth. Notice that this MSE achieves the optimal rate <strong>of</strong> convergence at<br />

an order <strong>of</strong> n −(2d+2)/(2d+3) for an optimal bandwidth h2 <strong>of</strong> order n −1/(2d+3) and h1 = o(h2).<br />

Now in the following corollary, we prove the asymptotic normality <strong>of</strong> the estimator using<br />

martingale central limit theorem.<br />

Corollary 4.3. Under the same assumptions as that <strong>of</strong> Theorem 4.2,<br />

√ �<br />

nh2<br />

ˆβtv<strong>GARCH</strong>(u0) − βtv<strong>GARCH</strong>(u0) − btv<strong>GARCH</strong>(u0) �<br />

�<br />

D<br />

→ N3 0,e ⊤ 1,d+1A −1<br />

2 B2A −1<br />

2 e1,d+1V ar(v2 t )S −1<br />

2 Ω2S −1<br />

�<br />

2<br />

where βtv<strong>GARCH</strong>(u0) = [ω(u0),α(u0),β(u0)] ⊤ and btv<strong>GARCH</strong>(u0) =<br />

[Bias(ˆω(u0)),Bias(ˆα(u0)), Bias( ˆ β(u0))] ⊤ .<br />

Remark 4.1. Above results have led us to the following two important issues, which<br />

need further investigation.<br />

1. The asymptotic distributions <strong>of</strong> the estimators <strong>of</strong> the parameter functions depend<br />

on the parameters <strong>of</strong> the stationary approximation to tv<strong>GARCH</strong> defined in (3),<br />

which is unobservable. Therefore, to derive a confidence band (or point-wise con-<br />

fidence intervals), one can use the bootstrap methods. Fryzlewicz, Sapatinas and<br />

Subba Rao (2008) used residual bootstrap methods <strong>of</strong> Franke and Kreiss (1992)<br />

to construct point-wise confidence intervals for the least-squares estimator <strong>of</strong> the<br />

tvARCH <strong>model</strong>. To avoid instability <strong>of</strong> the generated process, they modified their<br />

estimator so that the sum <strong>of</strong> all the estimated coefficients remain less than one.<br />

However, their method does not guarantee the estimators to be non-negative. This<br />

results in some <strong>of</strong> the bootstrapped residual squares to be negative. In order to<br />

tackle this problem, one needs to carefully formulate a bootstrap procedure and<br />

establish its working. Another approach would be to modify the <strong>estimation</strong> proce-<br />

dure itself to satisfy these constraints, see for example Bose and Mukherjee (2009).<br />

14

This problem is under investigation.<br />

2. Our method assumes that all the three tv<strong>GARCH</strong> parameter functions have the<br />

same degree <strong>of</strong> smoothness and hence they can be approximated equally well in the<br />

same interval. But if the functions possess different degrees <strong>of</strong> smoothness, then the<br />

proposed method may not give the optimal estimators (see Fan and Zhang (1999)).<br />

Therefore, one has to construct an estimator that is adaptive to different degrees<br />

<strong>of</strong> smoothness in different parameter functions.<br />

5 Modelling and forecasting volatility using tv<strong>GARCH</strong><br />

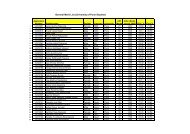

We analyze the currency exchange rates between five major developing economies in the<br />

forefront <strong>of</strong> global economic recovery viz. Brazil (BRL), Russia (RUB), India (INR),<br />

China (CNY) and South Africa (RND) (so called ‘BRICS’) and the developed economies<br />

viz. United States (USD) and Europe (EURO). The last decade saw the ‘BRICS’ mak-<br />

ing their mark on the global economic landscape. In recent <strong>time</strong>s, these economies are<br />

severely affected due to the global financial crisis and currency wars. This was our mo-<br />

tivational factor in analyzing these exchange rates data using tv<strong>GARCH</strong>. Applications<br />

<strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> has also been discussed in four stock indices, S & P 500, Dow<br />

Jones, Bombay stock exchange (BSE, India) and National stock exchange (NSE, India).<br />

All the data sets consist <strong>of</strong> daily percent log returns ranging from the beginning <strong>of</strong> 2000<br />

(dates <strong>varying</strong>) to December 31, 2010 except NSE data, which start from January 2002.<br />

The data are available from the websites <strong>of</strong> US Federal Reserve, European Central Bank<br />

and www.finance.yahoo.com. Figures 1 and 2 depict the plot <strong>of</strong> the return data and au-<br />

tocorrelation functions <strong>of</strong> squared returns. In Table 1, we provide the summary statistics<br />

<strong>of</strong> <strong>of</strong> the data.<br />

To compare the in-sample prediction performance <strong>of</strong> tv<strong>GARCH</strong> with several other<br />

well known existing <strong>model</strong>s, we compute the aggregated mean squared error (AMSE)<br />

(see Fryzlewicz, Sapatinas and Subba Rao (2008)):<br />

AMSE = n�<br />

(ǫ<br />

t=1<br />

2 t − ˆσ 2 t ) 2 ,<br />

where ˆσ 2 t and ǫ 2 t are the predicted volatility and squared return at <strong>time</strong> t and n denotes<br />

the sample size. These are reported in Table 2. The lowest AMSEs are presented in<br />

bold letters. Here, <strong>GARCH</strong> (1,1), E<strong>GARCH</strong> (1,1) and GJR (1,1) (see Engle and Ng<br />

15

(1993) and references therein) <strong>model</strong>s are estimated using SAS, while MATLAB is used<br />

for the <strong>estimation</strong> <strong>of</strong> FI<strong>GARCH</strong> (1, d0, 1) <strong>model</strong>, where d0 is the fractional differencing<br />

parameter to be estimated from the data (Baillie (1996)). The definitions <strong>of</strong> these <strong>model</strong>s<br />

are provided in Appendix C. R codes have been written for the <strong>estimation</strong> <strong>of</strong> tv<strong>GARCH</strong><br />

(with d = 3, 1 and p = log n) and tvARCH <strong>model</strong>s using Epanechnikov kernel. All the<br />

codes can be made available on from authors. The choices <strong>of</strong> d = 3, 1 facilitate the optimal<br />

rate <strong>of</strong> convergence <strong>of</strong> the order <strong>of</strong> n −8/9 and n −4/5 respectively and p = log n requires<br />

lesser number <strong>of</strong> parameters to be estimated in Step 1 as compared to other choices <strong>of</strong><br />

p such as √ n. The bandwidth is selected using the cross-validation method as described<br />

in Section 3.1. Estimation <strong>of</strong> the tvARCH <strong>model</strong> has been carried out using Step 1<br />

methodology <strong>of</strong> Section 3 with bandwidth chosen using cross validation, minimizing the<br />

mean-squared prediction error for tvARCH (Hart (1994)). E<strong>GARCH</strong> <strong>model</strong> could not be<br />

estimated for the CNY/USD data due to convergence problems.<br />

Superiority <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> is evident from the Table 2. The non-stationary<br />

<strong>model</strong>s have clearly outperformed stationary as well as long memory <strong>model</strong>s. The AMSEs<br />

<strong>of</strong> tv<strong>GARCH</strong> with d = 3 are smaller than that with d = 1 in most <strong>of</strong> the cases. However,<br />

the difference between the two is not very high. An illustrative comparison <strong>of</strong> tv<strong>GARCH</strong><br />

(d = 3) <strong>model</strong> is also shown in Figure 3 for BRL/EURO data. The faint plot depicts<br />

the squared returns and the dark plot is the predicted volatility with the corresponding<br />

<strong>model</strong>. Clearly, the tv<strong>GARCH</strong> <strong>model</strong> has captured the ups and downs in the volatility<br />

more accurately.<br />

In Figure 4, we plot the the estimators ˆω(u), ˆα(u), ˆ β(u) and ˆα(u) + ˆ β(u) against<br />

u ∈ (0, 1] for the BSE data. Notice that similar to the least squares estimators <strong>of</strong><br />

Fryzlewicz, Sapatinas and Subba Rao (2008), the local polynomial estimators are not<br />

guaranteed to be non-negative. Although, the estimators satisfy ˆα(u) + ˆ β(u) < 1 for this<br />

data, this may not be the case in general depending on the behaviour <strong>of</strong> the data.<br />

To compare the performance <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> further, in Table 3, we report<br />

the AMSE for the in-sample monthly volatility (<strong>of</strong> 22 trading days) forecasts for the<br />

same data sets, based on the monthly returns. The monthly returns are calculated<br />

as rmt = log(Pt/Pt−1), t = 1, 2,...,T, where Pt denotes the closing price on the last<br />

day <strong>of</strong> t th month and T is the total number <strong>of</strong> complete months in the data. All the<br />

datasets are <strong>of</strong> size around 125 except NSE dataset which has the size 95. This analysis<br />

16

provides insight into the nature <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> for small data sets. Our numerical<br />

evidences indicated that the asymptotic properties derived in Section 4 regarding the<br />

bandwidth selection also hold for these moderate sized monthly datasets. We did not<br />

multiply the returns with 100 to avoid large values. This, together with small data size<br />

has resulted in very small AMSEs. However, for comparative purposes, this does not<br />

make any difference. Clearly, the tv<strong>GARCH</strong> is performing better than other <strong>model</strong>s even<br />

for small sample sizes.<br />

One interesting conclusion that can be drawn from the above analyses is that the<br />

global crisis and specially the currency wars have vehemently turned the exchange rates<br />

volatility towards non-stationarity and short memory. This is quite possible as the fre-<br />

quent manipulation <strong>of</strong> the currencies may lead the currency rates to lose its widespread<br />

notion <strong>of</strong> the long memory behaviour.<br />

The ‘out <strong>of</strong> sample forecasting’ performance <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> has been judged<br />

using 50 daily forecasts computed by a rolling-window scheme. The out <strong>of</strong> sample fore-<br />

casts <strong>of</strong> the tv<strong>GARCH</strong> <strong>model</strong> are computed as follows. Use the n1 = n − 50 observations<br />

for the in-sample <strong>estimation</strong>. Then, forecast into the future using the ‘last’ estimated<br />

coefficient values, that is, the estimate <strong>of</strong> coefficient functions at t = n1. Forecasts into<br />

the future are computed in the same way as in a stationary <strong>GARCH</strong> <strong>model</strong> using these<br />

last coefficient estimates. Similar method has also been used by Fryzlewicz et al. (2008)<br />

for the future forecasts using the tvARCH <strong>model</strong>. Let σ 2 t+1|t , t = n1,n1 + 1,...,n − 1<br />

denote the one-step ahead out <strong>of</strong> sample forecasts using the previous n1 observations.<br />

We compare σ 2 t+1|t with ǫ2 t+1, t = n − 50,n − 49,...,n − 1 to get the AMSEs, which are<br />

reported in Table 4.<br />

The out <strong>of</strong> sample forecasts using tv<strong>GARCH</strong> <strong>model</strong> are better than those <strong>of</strong> the other<br />

<strong>model</strong>s. The tv<strong>GARCH</strong> attains the lowest AMSE for 7 data sets, while tvARCH (2) is<br />

better in 1 case. The FI<strong>GARCH</strong> and E<strong>GARCH</strong> <strong>model</strong>s have shown good forecasts for<br />

two data sets each, while <strong>GARCH</strong> and GJR <strong>model</strong>s are performing abysmally.<br />

It is noticeable that the tv<strong>GARCH</strong> <strong>model</strong> with d = 1 performs better than the tv-<br />

<strong>GARCH</strong> with d = 3 in the out <strong>of</strong> sample forecasting. However, there is not much <strong>of</strong> a<br />

difference between AMSEs <strong>of</strong> tv<strong>GARCH</strong> with d = 3 and d = 1. The better performance<br />

<strong>of</strong> tv<strong>GARCH</strong> (d = 3) than tv<strong>GARCH</strong> (d = 1) in the in-sample forecasting can be ex-<br />

plained to some extent by the fact that bigger d yields a higher convergence rate <strong>of</strong> MSE.<br />

17

However, this need not be the case in out <strong>of</strong> sample forecasting. Since the difference<br />

between the tv<strong>GARCH</strong> <strong>model</strong>s with d = 3 and d = 1 is not very high, it seems better<br />

and more practical to use small d = 1. One more advantage <strong>of</strong> d = 1 is that it reduces<br />

the number <strong>of</strong> parameters to be estimated.<br />

Acknowledgments<br />

The first author would like to acknowledge the Council <strong>of</strong> Scientific and Industrial Re-<br />

search (CSIR), India, for the award <strong>of</strong> a junior research fellowship. The second author’s<br />

research is supported by a research grant from CSIR under the head 25(0175)/09/ EMR-<br />

II.<br />

Appendix A: Pro<strong>of</strong>s<br />

In this Appendix, we provide the pro<strong>of</strong>s <strong>of</strong> the results discussed in Sections 2 and 4<br />

along with some auxiliary lemmas.<br />

Pro<strong>of</strong> <strong>of</strong> Proposition 2.1. By recursive substitution in (2), we obtain<br />

σ2 t = ω � �<br />

t<br />

n<br />

+ t−1 �<br />

i�<br />

�<br />

α � t−j+1<br />

n<br />

�<br />

v2 t−j + β � ��<br />

t−j+1<br />

n<br />

i=1 j=1<br />

+ t� �<br />

α<br />

i=1<br />

� �<br />

i v n<br />

2 i−1 + β � ��<br />

i σ n<br />

2 0<br />

ω � �<br />

t−i<br />

n<br />

Suppose u1 = argmax(α(u) + β(u)) then using strong law <strong>of</strong> large numbers as t → ∞,<br />

t� �<br />

α<br />

i=1<br />

� �<br />

i v n<br />

2 i−1 + β � ��<br />

i σ n<br />

2 0 ≤ t� �<br />

α (u1) v<br />

i=1<br />

2 i−1 + β (u1) �<br />

σ2 0 → σ2 0exp(tγ ∗ ) → 0<br />

as γ ∗ = E[log (α(u1)v 2 t + β(u1))] < 0 using Assumption 1(i). The pro<strong>of</strong> <strong>of</strong> uniqueness <strong>of</strong><br />

the solution is similar to the pro<strong>of</strong> <strong>of</strong> Proposition 1 <strong>of</strong> Dahlhaus and Subba Rao (2006).<br />

The lower limit for ¯σ 2 t is easy to obtain using the series.<br />

Pro<strong>of</strong> <strong>of</strong> Proposition 2.2. Notice that<br />

Cov(ǫ 2 t,ǫ 2 t+h) = Cov(σ 2 t v 2 t ,σ 2 t+hv 2 t+h).<br />

Now the result can be proved using the expansion for σ 2 t as in (10) above and by using<br />

Assumption 1(i). We omit the details.<br />

18<br />

(10)

Pro<strong>of</strong> <strong>of</strong> Proposition 2.3. We can write<br />

|ǫ 2 t − �ǫ 2 t(u0)| ≤ � � �ǫ 2 t − �ǫ 2 t<br />

� �� �<br />

t ��<br />

+<br />

���ǫ 2<br />

n t<br />

� �<br />

t − �ǫ n<br />

2 t(u0) � �<br />

� .<br />

Now using Proposition 2.1 and equation (4),<br />

�<br />

�<br />

�ǫ2 t − �ǫ 2 � �� �<br />

t ��<br />

t =<br />

��σ 2<br />

n t − �σ 2 � ��<br />

t �� 2<br />

t v n t = � � 2 �¯σ t − ¯σ 2 � ��<br />

t �� 2<br />

t v n t a.s., but<br />

�<br />

�<br />

�¯σ 2 t − ¯σ 2 � �� �<br />

t ��<br />

t ≤ α n<br />

� �<br />

t v n<br />

2 t−1 + β � ��<br />

t<br />

n<br />

� ∞�<br />

��<br />

�<br />

� α<br />

i=1<br />

� �<br />

t v n<br />

2 t−2 + β � �<br />

t<br />

n<br />

+ M<br />

�<br />

1 + v n<br />

2 t−2) � i� �<br />

α<br />

j=3<br />

� �<br />

t−j+1<br />

v n<br />

2 t−j + β � ��<br />

t−j+1<br />

ω n<br />

� �<br />

t−i<br />

n<br />

− i� �<br />

α<br />

j=2<br />

� �<br />

t v n<br />

2 t−j + β � ��<br />

t ω n<br />

� �<br />

��<br />

t ��<br />

, n<br />

using Assumption 1(ii) (Lipschitz continuity <strong>of</strong> the parameters). Here we take M =<br />

max(M1,M2,M3) and i−k � �<br />

α<br />

j=i<br />

� �<br />

t v n<br />

2 t−j + β � ��<br />

t = 1, ∀ k > 0. Proceeding in a similar way,<br />

n<br />

that is, replacing α � �<br />

t−j+1<br />

and β n<br />

� �<br />

t−j+1<br />

for each j with α n<br />

� �<br />

t and β n<br />

� �<br />

t successively<br />

n<br />

using Lipschitz continuity, after some algebra, we reach to<br />

�<br />

�<br />

�ǫ2 t − �ǫ 2 � ��<br />

t �� Mv<br />

t ≤ n<br />

2 �<br />

∞� i−1 � �<br />

t α n<br />

i=1 j=1<br />

� �<br />

t v n<br />

2 t−j + β � �� ��<br />

t α n<br />

� �<br />

t−i+1 i + ω n<br />

� �<br />

t (i − 1) n<br />

�<br />

v2 t−i<br />

+ �<br />

β � �<br />

t−i+1 i + ω n<br />

� �<br />

t (i − 1) n<br />

��<br />

+ ∞� i� k−2 � �<br />

α(<br />

i=3 k=3 l=1<br />

t<br />

n )v2 t−l + β( t<br />

n )�<br />

× (1 + v2 t−k+1)ω � � i� �<br />

t−i (k − 2) α( n<br />

t−j+1<br />

)v n 2 t−j + β( t−j+1<br />

) n ��<br />

Now suppose Q ∗ = max (sup<br />

u<br />

u1 = argmax(α(u) + β(u)). Then<br />

�<br />

�<br />

�ǫ 2 t − �ǫ 2 t<br />

ω(u), sup<br />

u<br />

j=k<br />

α(u), sup β(u)) < ∞ and<br />

u<br />

� ��<br />

t �� Q<br />

≤ n nVt, where Q = MQ∗ and<br />

Vt = v2 ∞� i−1 �<br />

t (α(u1)v<br />

i=1 j=1<br />

2 t−j + β(u1))(1 + v2 t−i)(2i − 1)<br />

+v2 ∞� i� k−2 �<br />

t (α(u1)v<br />

i=3 k=3 l=1<br />

2 t−l + β(u1))(1 + v2 t−k+1)(k − 2) i�<br />

j=k<br />

(α(u1)v 2 t−j + β(u1))<br />

It can be shown that Vt is a stationary ergodic process (Stout (1996), Theorem 3.5.8)<br />

with,<br />

E|Vt| ≤ ∞�<br />

2(1 − δ)<br />

i=1<br />

i−1 (2i − 1) + ∞� i�<br />

2(k − 2)(1 − δ)<br />

i=3 k=3<br />

i−1 < ∞,<br />

using Assumption 1 (i). In a similar way, we can show that<br />

�<br />

�<br />

��ǫ 2 t( t<br />

n ) − �ǫ2 t (u0) � � � ≤ Q � �� t<br />

n<br />

19<br />

�<br />

�<br />

− u0<br />

� Vt.

Hence the proposition follows.<br />

In the following lemmas, we prove the results for a general bandwidth h, so that the<br />

results are applicable for both h1 and h2.<br />

Lemma A.1. Let {Zt} be a sequence <strong>of</strong> ergodic random variables with E|Zt| < ∞.<br />

Suppose that Assumption 2(ii) is satisfied. Then<br />

n� 1 (i) nh<br />

k=p+1<br />

(uk − u0) iK � �<br />

uk−u0 P<br />

Zk → h h<br />

i µiE(Zt),<br />

n� 1 (ii) nh (uk − u0) iK2 � �<br />

uk−u0 P<br />

Zk → h h<br />

iνiE(Zt), i = 1, 2,...,2d.<br />

k=p+1<br />

where h is a bandwidth such that h → 0 and nh → ∞ as n → ∞.<br />

Pro<strong>of</strong>. The lemma can be proved using similar techniques as in Dahlhaus and Subba<br />

Rao (2006, Lemmas A.1 and A.2). We omit the details.<br />

Lemma A.2. Let the Assumptions 1 and 2 be satisfied. Then<br />

(i)<br />

n�<br />

k=p+1<br />

1<br />

nh (uk − u0) iK � �<br />

uk−u0 ǫ h<br />

2l<br />

k−j1ǫ2m k−j2<br />

∀ l,m ∈ {0, 1, 2} and j1,j2 ∈ {1, 2,...,p}, j1 �= j2<br />

n� 1 (ii) nh (uk − u0) iK2 � �<br />

uk−u0 σ h<br />

4 kǫ2l k−j1ǫ2m k−j2<br />

k=p+1<br />

∀ l,m ∈ {0, 1} and j1,j2 ∈ {1, 2,...,p},<br />

where (ii) is true for l,m > 0 only if E|vt| 8 < ∞.<br />

P<br />

→ hi µiE(�ǫ 2l 2m<br />

k−j1 (u0)�ǫ k−j2 (u0)),<br />

P<br />

→ hiνiE(�σ 4 k(u0)�ǫ 2l 2m<br />

k−j1 (u0)�ǫ k−j2 (u0)),<br />

Pro<strong>of</strong>. (i) We will prove it for l = m = 2. Other cases can be similarly shown. Using<br />

Lemma A.1 it is clear that<br />

n�<br />

k=p+1<br />

1<br />

nh (uk − u0) iK � �<br />

uk−u0 �ǫ h<br />

2l 2m<br />

k−j1 (u0)�ǫ k−j2 (u0)<br />

20<br />

P<br />

→ hi µiE(�ǫ 2l 2m<br />

k−j1 (u0)�ǫ k−j2 (u0)).<br />

(11)

Now consider<br />

n� 1<br />

nh<br />

k=p+1<br />

(uk − u0) iK � � �<br />

uk−u0 ���ǫ 4<br />

h k−j1 (u0)�ǫ 4 k−j2 (u0) − ǫ4 k−j1ǫ4 �<br />

�<br />

k−j2<br />

�<br />

≤ n� 1<br />

nh<br />

k=p+1<br />

(uk − u0) iK � � �<br />

uk−u0 �ǫ h<br />

4 k−j2 (u0)(�ǫ 2 k−j1 (u0) + ǫ2 k−j1 )<br />

�<br />

�<br />

× ��ǫ 2 k−j1 (u0) − ǫ2 �<br />

�<br />

k−j1<br />

� +ǫ4 k−j1 (�ǫ2 k−j2 (u0) + ǫ2 k−j2 )<br />

�<br />

�<br />

��ǫ 2 k−j2 (u0) − ǫ2 ��<br />

�<br />

k−j2<br />

�<br />

≤ Qhi+1R = OP(hi+1 ), where<br />

R = n� 1<br />

nh<br />

k=p+1<br />

(uk−u0 ) h iK � � �<br />

uk−u0 �ǫ h<br />

4 k−j2 (u0)(�ǫ 2 k−j1 (u0) + ǫ2 �<br />

k−j1 ) | uk−j −u0 1<br />

h<br />

+ 1<br />

�<br />

Vk−j1 +ǫ nh<br />

4 k−j1 (�ǫ2 k−j2 (u0) + ǫ2 �<br />

k−j2 ) | uk−j −u0 2 | + h<br />

1<br />

� �<br />

Vk−j2 nh<br />

(using Proposition 2.3). Now using Proposition 2.3 for ǫ2 k−j1 and ǫ2k−j2 in the expression<br />

<strong>of</strong> R and Lemma A.1, it can be shown that E|R| < ∞. Hence using (11), the lemma<br />

holds as n → ∞.<br />

(ii) Using the form (5) <strong>of</strong> tv<strong>GARCH</strong> <strong>model</strong>, we can write<br />

σ2 t = α0( t t ) + α1( n n )ǫ2t−1 ... + αpn( t<br />

n )ǫ2t−pn + OP(ρpn )<br />

where 0 < ρ < 1 and pn → ∞ as n → ∞. The parameter functions αj(u), j = 0, 1,...,pn<br />

are bounded and continuous under the Assumption 2 (i). The result can be proved using<br />

this form <strong>of</strong> σ 2 t in a similar way as in (i) above. We omit the details.<br />

Lemma A.3. Under Assumptions 1 and 2,<br />

where ⊗ denotes the Kronecker product.<br />

1<br />

n X⊤ P<br />

1 W1X1 → S ⊗ A1<br />

Pro<strong>of</strong>. Pro<strong>of</strong> follows using the expansion <strong>of</strong> X ⊤ 1 W1X1 and Lemma A.2 (i).<br />

Lemma A.4. Suppose the Assumptions 1 and 2 are satisfied. In addition assume that<br />

E|vt| 8 < ∞. Then<br />

�<br />

n�<br />

V ar (uk − u0)<br />

k=p+1<br />

iKh(uk − u0)(v2 k − 1)σ2 k[1,ǫ2 k−1,...,ǫ 2 k−p] ⊤<br />

�<br />

= nh2i−1ν2iV ar(v2 t )Ω(1 + oP(1)), i = 1, 2,...,d.<br />

21<br />

|

Pro<strong>of</strong>. Let Ft−1 = σ(ǫ2 t−1,ǫ2 t−2,...). Then<br />

�<br />

n�<br />

V ar (uk − u0)<br />

k=p+1<br />

iKh(uk − u0)(v2 k − 1)σ2 k[1,ǫ2 k−1,...,ǫ 2 k−p] ⊤<br />

�<br />

�<br />

n�<br />

= E (uk − u0)<br />

k=p+1<br />

2iK2 h(uk − u0)V ar �<br />

(v2 k − 1)σ2 k[1,ǫ2 k−1,...,ǫ 2 k−p] ⊤ �<br />

|Fk−1<br />

�<br />

= �<br />

n�<br />

E (uk − u0)<br />

k=p+1<br />

2iK2 h(uk − u0)V ar(v2 k) �<br />

σ4 k[1,ǫ2 k−1,...,ǫ 2 k−p] ⊤ [1,ǫ2 k−1,...,ǫ 2 k−p] ��<br />

= nh2i−1ν2iV ar(v2 t )Ω(1 + oP(1)), (using Lemma A.2(ii))<br />

Pro<strong>of</strong> <strong>of</strong> Theorem 4.1. Let us denote β1 = [α00,α01,...,α0d,...,αp0,...,αpd] ⊤ . Using<br />

Taylor’s series expansion, we can write,<br />

�<br />

Y1 = X1 α0(u0),α (1)<br />

0 (u0),... α(d)<br />

+<br />

⎡<br />

1 ⎢<br />

(d + 1)!<br />

⎣<br />

+<br />

α (d+1)<br />

0 (ζ0(p+1))(up+1 − u0) d+1<br />

.<br />

α (d+1)<br />

0 (ζ0(n))(un − u0) d+1<br />

⎡<br />

p� 1 ⎢<br />

(d + 1)!<br />

⎣ .<br />

j=1<br />

0 (u0)<br />

,α1(u0),...,αp(u0),... d! α(d)<br />

�⊤ p (u0)<br />

d!<br />

⎤<br />

⎥<br />

⎦<br />

α (d+1)<br />

j (ζj(p+1))(up+1 − u0) d+1ǫ2 p+1−j<br />

α (d+1)<br />

j (ζj(n))(un − u0) d+1ǫ2 n−j<br />

⎤<br />

⎥<br />

⎦ +σ2 ∗ (v 2 − en−p)<br />

where σ 2 = [σ 2 p+1,σ 2 p+2,...,σ 2 n] ⊤ , v 2 = [v 2 p+1,v 2 p+2,...,v 2 n] ⊤ , ∗ denotes the component<br />

wise product 3 <strong>of</strong> vectors and ζjk, j = 0, 1,...,p, k = p + 1,...,n are between uk and u0.<br />

Multiplying both sides by (X ⊤ 1 W1X1) −1 X ⊤ 1 W1,<br />

⎡<br />

⎢<br />

× ⎢<br />

⎣ .<br />

ˆβ1(u0) = β1(u0) +<br />

⎡<br />

⎢<br />

× ⎢<br />

⎣ .<br />

α (d+1)<br />

0 (ζ0(p+1))(up+1 − u0) d+1<br />

α (d+1)<br />

0 (ζ0(n))(un − u0) d+1<br />

α (d+1)<br />

j (ζj(p+1))(up+1 − u0) d+1ǫ2 p+1−j<br />

α (d+1)<br />

j (ζj(n))(un − u0) d+1ǫ2 n−j<br />

1<br />

(d + 1)! (X⊤ 1 W1X1) −1 X ⊤ 1 W1<br />

⎤<br />

⎤<br />

⎥<br />

⎦ +<br />

1<br />

(d + 1)!<br />

Now it is not difficult to show using Lemma A.2 (i) that<br />

⎡<br />

⎤<br />

X ⊤ ⎢<br />

1 W1<br />

⎢<br />

⎣<br />

α (d+1)<br />

0 (ζ0(p+1))(up+1 − u0) d+1<br />

.<br />

α (d+1)<br />

0 (ζ0(n))(un − u0) d+1<br />

p�<br />

(X<br />

j=1<br />

⊤ 1 W1X1) −1 X ⊤ 1 W1<br />

⎥<br />

⎦ + (X⊤ 1 W1X1) −1 X ⊤ 1 W1(σ 2 ∗ (v 2 − en−p)). (12)<br />

3 Let x = [x1,x2,...,xp] ⊤ and y = [y1,y2,...,yp] ⊤ , then x ∗ y = [x1y1,x2y2,...,xpyp] ⊤ .<br />

22<br />

⎥<br />

⎦

⎡<br />

X ⊤ ⎢<br />

1 W1<br />

⎢<br />

⎣<br />

and using Lemma A.3,<br />

= nh d+1<br />

1 α (d+1)<br />

0 (u0)[1,e ⊤ p w2] ⊤ (1 + oP(1)) ⊗ D1,<br />

α (d+1)<br />

j (ζj(p+1))(up+1 − u0) d+1ǫ2 p+1−j<br />

.<br />

α (d+1)<br />

j (ζj(n))(un − u0) d+1ǫ2 n−j<br />

= nh d+1<br />

1 α (d+1)<br />

j<br />

Hence, the asymptotic bias is given as,<br />

⎤<br />

⎥<br />

⎦<br />

(u0)[w2,Cj−1,...,Cj−p] ⊤ (1 + oP(1)) ⊗ D1,<br />

(X ⊤ 1 W1X1) −1 = (1/n)S −1 (1 + oP(1)) ⊗ A −1<br />

1 .<br />

E( ˆ β1(u0) − β1(u0))<br />

= hd+1<br />

�<br />

1 α (d+1)!<br />

(d+1)<br />

0 (u0)(S−1 ⊗ A −1<br />

1 )[(1,w2e ⊤ p ] ⊤ ⊗ D1)<br />

+ p �<br />

α<br />

j=1<br />

(d+1)<br />

j (u0)(S−1 ⊗ A −1<br />

1 )([w2,Cj−1,...,Cj−p] ⊤ ⊗ D1) �<br />

+ oP(h d+1<br />

1 ).<br />

Notice that C0 = w4. Now<br />

E( ˆ β1(u0) − β1(u0))<br />

= hd+1<br />

1<br />

(d+1)! (S−1 ⊗ A −1<br />

1 ) ��<br />

+ p �<br />

j=1<br />

α (d+1)<br />

0<br />

(u0)[1,w2e ⊤ p ] ⊤<br />

α (d+1)<br />

j (u0)[w2,Cj−1,...,Cj−p] ⊤� ⊗ D1<br />

= hd+1<br />

1<br />

(d+1)! (S−1 ⊗ A −1<br />

1 ) �<br />

+ oP(h d+1<br />

1 )<br />

= hd+1<br />

�<br />

1<br />

(d+1)!<br />

[α (d+1)<br />

0<br />

S[α (d+1)<br />

0<br />

(u0),α (d+1)<br />

1<br />

(u0),α (d+1)<br />

1<br />

�<br />

+ oP(h d+1<br />

1 )<br />

(u0),...,α (d+1)<br />

p (u0)] ⊤ ⊗ D1<br />

(u0),...,α (d+1)<br />

p (u0)] ⊤ ⊗ A −1<br />

�<br />

1 D1 + oP(h d+1<br />

1 )<br />

Notice that Bias (ˆαj(u0))= e ⊤ j(d+1)+1,(p+1)(d+1) Bias (ˆ β1(u0)). Hence the bias expression is<br />

obtained.<br />

Now the asymptotic variance is<br />

V ar( ˆ β1(u0))<br />

= (1/n)(S −1 (1 + oP(1)) ⊗ A −1<br />

1 )V ar(X ⊤ 1 W1(σ 2 ∗ (v 2 − en−p)))<br />

× (1/n)(S −1 (1 + oP(1)) ⊗ A −1<br />

1 ).<br />

= (1/n)(S −1 (1 + oP(1)) ⊗ A −1<br />

1 )((n/h1)V ar(v 2 t )Ω(1 + oP(1)) ⊗ B1)<br />

× (1/n)(S −1 (1 + oP(1)) ⊗ A −1<br />

1 ).<br />

using Lemma A.4. The desired expression can be obtained after some simplification using<br />

the properties <strong>of</strong> Kronecker product.<br />

23<br />

�

Lemma A.5. Suppose that the Assumptions 1 and 2 are satisfied. Then<br />

(i) 1<br />

n�<br />

(ut − u0) nh2<br />

t=2<br />

iK( ut−u0)ˆσ<br />

h2<br />

2 P<br />

t−1 → hi 2µiλ1<br />

(ii) 1<br />

n�<br />

(ut − u0) nh2<br />

t=2<br />

iK( ut−u0)ˆσ<br />

h2<br />

2 t−1ǫ2 P<br />

t−1 → hi 2µiλ2<br />

(iii) 1<br />

n�<br />

(ut − u0) nh2<br />

t=2<br />

iK( ut−u0)ˆσ<br />

h2<br />

4 P<br />

t−1 → hi 2µiλ3<br />

Pro<strong>of</strong>. (i) It is evident from (12) (in the pro<strong>of</strong> <strong>of</strong> Theorem 4.1) that for j = 0, 1,...,p<br />

Therefore<br />

ˆαj(u0) = δj(u0) + e ⊤ j(d+1)+1,(p+1)(d+1) (X⊤ 1 W1X1) −1 X ⊤ 1 W1(σ 2 ∗ (v 2 − en−p)).<br />

ˆσ 2 t−1 = δ0(ut−1) + p �<br />

δj(ut−1)ǫ2 t−j−1 + R∗ 1, (13)<br />

where, R ∗ 1 = (e ⊤ 1,(p+1)(d+1) + p �<br />

j=1<br />

e<br />

j=1<br />

⊤ j(d+1)+1,(p+1)(d+1) ǫ2t−j) × (X⊤ 1 W1X1) −1X ⊤ 1 W1(σ2 ∗ (v2 − en−p))<br />

Clearly, E(R ∗ 1) = 0. Here δj(·)’s are continuous functions. Substituting this expression<br />

for ˆσ 2 t−1 (13) in (i), and by using Lemma A.2, the result can be proved. Here,<br />

n� 1 (ut − u0) nh2<br />

t=2<br />

iK( ut−u0)ˆσ<br />

h2<br />

2 t−1<br />

= 1<br />

n�<br />

(ut − u0) nh2<br />

t=2<br />

iK( ut−u0)(δ0(ut−1)<br />

+ h2<br />

p �<br />

δj(ut−1)ǫ<br />

j=1<br />

2 t−j)<br />

+ 1<br />

n�<br />

(ut − u0) nh2<br />

t=2<br />

iK( ut−u0)R<br />

h2<br />

∗ 1.<br />

Now the first term <strong>of</strong> the above expression converges in probability to hi 2µiE(δ0(ut−1) +<br />

p�<br />

δj(ut−1)�ǫ 2 t−j(u0)) = hi 2µiλ1. Now using the similar methodology as in Lemma A.2, it<br />

j=1<br />

can be shown that<br />

n�<br />

(ut − u0) iK( ut−u0)ǫ2l<br />

t−jσ2 t (v2 t − 1) P → hi 2µiE(�ǫ 2l<br />

t−j(u0)�σ 2 t (u0)(v2 t − 1))<br />

1<br />

nh2<br />

t=2<br />

h2<br />

= 0, l ∈ {0, 1}, j = 1, 2,...,p.<br />

This implies that X ⊤ 1 W1σ 2 (v 2 − en−p) P → 0. Therefore, using Lemma A.3, R ∗ 1<br />

the pro<strong>of</strong> follows. Other parts <strong>of</strong> the lemma can be proved similarly.<br />

Lemma A.6. Suppose that the Assumptions 1 and 2 are satisfied.<br />

Pro<strong>of</strong>. Notice that<br />

X ⊤ 2 W2X2 = n�<br />

t=2<br />

1<br />

n X⊤ 2 W2X2<br />

P<br />

→ S2 ⊗ A2<br />

Kh2(ut − u0) �<br />

[1,ǫ2 t−1, ˆσ 2 t−1] ⊤ [1,ǫ2 t−1, ˆσ 2 t−1] ⊗ U ⊤ �<br />

t Ut .<br />

24<br />

P<br />

→ 0. Hence

Hence the result can be easily proved using Lemma A.5.<br />

Lemma A.7. Under the similar assumptions as in Lemma A.4,<br />

V ar<br />

�<br />

n�<br />

(uk − u0)<br />

k=p+1<br />

iKh2(uk − u0)(v2 k − 1)σ2 k[1,ǫ2 k−1, ˆσ 2 �<br />

k−1]<br />

= nh 2i−1<br />

2 ν2iV ar(v2 t )Ω2(1 + oP(1)), i = 1, 2,...,d.<br />

Pro<strong>of</strong>. This can be proved in a similar way as Lemma A.4 using (13). We omit the<br />

details.<br />

Pro<strong>of</strong> <strong>of</strong> Theorem 4.2. Denote<br />

β2 = (ω02,ω12,...,ωd2,a02,...,ad0, b02,...,bd2). Using Taylor’s series expansion in (8),<br />

ˆβ2(u0) = β2(u0) +<br />

1<br />

(d + 1)! (X⊤ 2 W2X2) −1 X ⊤ ⎢<br />

2 W2<br />

⎢<br />

⎣<br />

⎡<br />

ω (d+1) (ξ02)(u2 − u0) d+1<br />

.<br />

ω (d+1) (ξ0n)(un − u0) d+1<br />

+ 1<br />

(d + 1)! (X⊤ 2 W2X2) −1 X ⊤ ⎡<br />

α<br />

⎢<br />

2 W2<br />

⎢<br />

⎣<br />

(d+1) (ξ12)(u2 − u0) d+1ǫ2 1<br />

.<br />

α (d+1) (ξ1n)(un − u0) d+1ǫ2 ⎤<br />

⎥<br />

⎦<br />

n−1<br />

+ 1<br />

(d + 1)! (X⊤ 2 W2X2) −1 X ⊤ ⎡<br />

β<br />

⎢<br />

2 W2<br />

⎢<br />

⎣<br />

(d+1) (ξ22))(u2 − u0) d+1ˆσ 2 1<br />

.<br />

β (d+1) (ξ2n)(un − u0) d+1ˆσ 2 ⎤<br />

⎥<br />

⎦<br />

n−1<br />

−(X ⊤ 2 W2X2) −1 X ⊤ ⎡<br />

⎢ β(u2)(b0(u1) +<br />

⎢<br />

2 W2<br />

⎢<br />

⎣<br />

p �<br />

bj(u1)ǫ<br />

j=1<br />

2 1−j)<br />

.<br />

β(un)(b0(un−1) + p �<br />

bj(un−1)ǫ<br />

j=1<br />

2 ⎤<br />

⎥<br />

⎦<br />

n−1−j)<br />

+(X ⊤ 2 W2X2) −1 X ⊤ 2 W2(σ 2 ∗ (v 2 2 − en−1)),<br />

where ξ0t,ξ1t and ξ2t are between ut and u0. Here v 2 2 = [v 2 2,...,v 2 n] ⊤ and σ 2 2 = [σ 2 2,...,σ 2 n] ⊤ .<br />

We ignore the term O(ρ pn ) (see Corollary 4.2) as it is negligible asymptotically. Now using<br />

Lemmas 6.2 and 6.5, it can be shown that<br />

⎡<br />

X ⊤ ⎢<br />

2 W2<br />

⎢<br />

⎣<br />

ω (d+1) (ξ02)(u2 − u0) d+1<br />

.<br />

ω (d+1) (ξ0n)(un − u0) d+1<br />

⎤<br />

⎥<br />

⎦<br />

= nh d+1<br />

2 ω (d+1) (u0)[1,w2,λ1] ⊤ (1 + oP(1)) ⊗ D2,<br />

25<br />

⎤<br />

⎥<br />

⎦

and<br />

X ⊤ ⎡<br />

α<br />

⎢<br />

2 W2<br />

⎢<br />

⎣<br />

(d+1) (ξ12))(u2 − u0) d+1ǫ2 1<br />

.<br />

α (d+1) (ξ1n)(un − u0) d+1ǫ2 ⎤<br />

⎥<br />

⎦<br />

n−1<br />

= nh d+1<br />

2 α (d+1) (u0)[w2,w4,λ2] ⊤ (1 + oP(1)) ⊗ D2,<br />

X ⊤ ⎡<br />

β<br />

⎢<br />

2 W2<br />

⎢<br />

⎣<br />

(d+1) (ξ22))(u2 − u0) d+1ˆσ 2 1<br />

.<br />

β (d+1) (ξ2n)(un − u0) d+1ˆσ 2 ⎤<br />

⎥<br />

⎦<br />

n−1<br />

= nh d+1<br />

2 β (d+1) (u0)[λ1,λ2,λ3] ⊤ (1 + oP(1)) ⊗ D2<br />

X ⊤ ⎡<br />

⎢ β(u2)(b0(u1) +<br />

⎢<br />

2 W2<br />

⎢<br />

⎣<br />

p �<br />

bj(u1)ǫ<br />

j=1<br />

2 1−j)<br />

.<br />

β(un)(b0(un−1) + p �<br />

bj(un−1)ǫ<br />

j=1<br />

2 ⎤<br />

⎥<br />

⎦<br />

n−1−j)<br />

Using Lemma A.6,<br />

Therefore,<br />

Bias( ˆ β2(u0))<br />

= β(u0)[λ1b,λ2b,λ3b)(1 + oP(1)] ⊤ ⊗ D ∗ .<br />

(X ⊤ 2 W2X2) −1 = (1/n)S −1<br />

2 (1 + oP(1)) ⊗ A −1<br />

2 .<br />

= hd+1<br />

2<br />

(d+1)! (S−1 2 (1 + oP(1)) ⊗ A −1<br />

2 ) ��<br />

ω (d+1) (u0)[1,w2,λ1] ⊤<br />

+ α (d+1) (u0)[w2,w4,λ2] ⊤ + β (d+1) (u0)[λ1,λ2,λ3] ⊤�<br />

− β(u0)S −1<br />

2 [λ1b,λ2b,λ3b] ⊤ ⊗ A −1<br />

2 D ∗ + oP(h d+1<br />

2 )<br />

= hd+1<br />

2<br />

(d+1)! (S−1 2 ⊗ A −1<br />

2 ) �<br />

(1 + oP(1)) ⊗ A −1<br />

2 D �<br />

(S2[ω (d+1) (u0),α (d+1) (u0),β (d+1) (u0)] ⊤ ) ⊗ D2<br />

− β(u0)S −1<br />

2 [λ1b,λ2b,λ3b] ⊤ ⊗ A −1<br />

2 D ∗ + oP(h d+1<br />

2 )<br />

= hd+1<br />

2<br />

(d+1)! [ω(d+1) (u0),α (d+1) (u0),β (d+1) (u0)] ⊤ ⊗ A −1<br />

2 D2<br />

− β(u0)S −1<br />

2 [λ1b,λ2b,λ3b] ⊤ ⊗ A −1<br />

2 D∗ + oP(h d+1<br />

2 ).<br />

The bias expressions can be obtained after some simplification by using<br />

Bias(ˆω(u0)) = e ⊤ 1,3(d+1) Bias(ˆ β2(u0)), Bias(ˆα(u0)) = e ⊤ d+1,3(d+1) Bias(ˆ β2(u0))<br />

and Bias( ˆ β(u0)) = e ⊤ 2d+3,3(d+1) Bias(ˆ β2(u0)).<br />

Now using Lemma A.7<br />

V ar( ˆ β2(u0)) = (1/n)S −1<br />

2 (1 + oP(1)) ⊗ A −1<br />

2 V ar(X ⊤ 2 W2(σ 2 ∗ (v 2 − en−p)))<br />

× (1/n)S −1<br />

2 (1 + oP(1)) ⊗ A −1<br />

2<br />

= 1<br />

nh2 V ar(v2 t )(S −1<br />

2 ⊗ A −1<br />

2 )(Ω2 ⊗ B2)(S −1<br />

2 ⊗ A −1<br />

2 )(1 + oP(1)).<br />

26<br />

�

The variance expression given in Theorem 4.2 can be arrived at after some simplification.<br />

Appendix B<br />

To make the cross validation bandwidth selection computationally feasible, we derive<br />

a relation between the (ˆω, ˆα, ˆ β) and (ˆω −t , ˆα −t , ˆ β −t ) in Proposition B.1. The idea is simi-<br />

lar to the generalized cross validation, which simplifies the intensive computation involved<br />

in the original cross validation (see Wabha (1977), Li and Palta (2009)).<br />

Proposition B.1. Let ˆ β2(u0) be the local polynomial estimator <strong>of</strong> β2(u0) where β2 =<br />

(ω02,ω12,...,ωd2,a02,...,ad0, b02,...,bd2). Suppose that ˆ β −t<br />

2 (u0) denotes the leave one<br />

out (obtained by eliminating the tth observation) estimators <strong>of</strong> β2(u0). Then,<br />

ˆβ −i<br />

2 (u0) = � β2(u0) ˆ − (X⊤ 2 W2X2) −1X ⊤ 2 W2I ∗ �<br />

i Y2<br />

�<br />

+Zi<br />

ˆβ2(u0) − (X⊤ 2 W2X2) −1X ⊤ 2 W2I ∗ � (14)<br />

i Y2<br />

where Zi = (X⊤ 2 W2X2) −1X ⊤ �<br />

2 W2 In−1 + I∗ i X2(X ⊤ 2 W2X2) −1X ⊤ �−1 2 W2 I∗ i X2 and I∗ i de-<br />

notes a matrix <strong>of</strong> order (n − 1) × (n − 1) with (i,i) th element as one and rest <strong>of</strong> them<br />

as zero. Now ˆω −i (u0) = e1,3(d+1)β −i<br />

2 (u0), ˆα −i (u0) = ed+1,3(d+1)β −i<br />

2 (u0) and ˆ β −i (u0) =<br />

e2d+3,3(d+1)β −i<br />

2 (u0).<br />

Notice that to compute (9), we need to fit the <strong>model</strong> just once based on the original<br />

sample (to obtain ˆ β2(u0)). The estimators, (ˆω −i (u0), ˆα −i (u0), ˆ β −i (u0)) can then be easily<br />

computed using the relation (14). This computation is easy and straightforward as we<br />

do not require to delete the data points from the original sample and refit the <strong>model</strong>.<br />

All we need is to change I ∗ i for each i, which can be done easily using a simple program.<br />

Thus the relation (14) facilitates the bandwidth selection and saves enormous amount <strong>of</strong><br />

computing <strong>time</strong>.<br />

Pro<strong>of</strong> <strong>of</strong> Proposition B.1. Let Ip denote the identity matrix <strong>of</strong> order p. Define<br />

the matrices<br />

Ji =<br />

⎡<br />

⎢<br />

⎣<br />

J1 =<br />

I(i−1)<br />

0(i−1)×(n−i−1)<br />

01×(i−1) 01×(i−1)×(n−i−1)<br />

0(n−i−1)×(i−1) I(n−i−1)×(n−i−1)<br />

�<br />

01×(n−2)<br />

In−2<br />

�<br />

(n−1)×(n−2)<br />

⎤<br />

⎥<br />

⎦<br />

, Jn =<br />

27<br />

(n−1)×(n−2)<br />

�<br />

In−2<br />

01×(n−2)<br />

, i = 2,...,n − 1,<br />

�<br />

(n−1)×(n−2)<br />

.

Let W −i<br />

2 denote the matrix W2 with i th row and i th column deleted. Similarly, suppose<br />

X −i<br />

2 and Y −i<br />

2 denote the X2 and Y2 with i th row omitted. It is obvious that<br />

X −i<br />

2 = J ⊤ i X2, W −i<br />

2 = J ⊤ i W2Ji and Y −i<br />

2 = J ⊤ i Y2.<br />

Now, notice that J ⊤ i Ji = In−2 and JiJ ⊤ i = In−1 −I ∗ i . Using these relations and after some<br />

algebra, it can be shown that,<br />

and<br />

Therefore, using the Woodbury formula, 4<br />

X −i⊤<br />

2 W −i<br />

2 X −i<br />

2 = X ⊤ 2 W2X2 − X ⊤ 2 W2I ∗ i X2<br />

X −i⊤<br />

2 W −i<br />

2 Y −i<br />

2 = X ⊤ 2 W2Y2 − X ⊤ 2 W2I ∗ i Y2.<br />

(X −i⊤<br />

2 W −i<br />

2 X −i<br />

2 ) −1 = (X ⊤ 2 W2X2) −1 + Zi(X ⊤ 2 W2X2) −1 ,<br />

where Zi is as defined in Proposition B.1. After some algebraic simplification, this leads<br />

to<br />

Appendix C<br />

β −i<br />

2 (u0) = (X −i⊤<br />

2 W −i<br />

2 X −i<br />

2 ) −1X −i⊤<br />

2 W −i<br />

2 Y −i<br />

2<br />

= � β2(u0) ˆ − (X⊤ 2 W2X2) −1X ⊤ 2 W2I ∗ i Y2<br />

�<br />

+ Zi<br />

ˆβ2(u0) − (X⊤ 2 W2X2) −1X ⊤ 2 W2I ∗ �<br />

i Y2 .<br />

In this appendix, we provide the definitions <strong>of</strong> the <strong>GARCH</strong> <strong>model</strong>s used in Section 5.<br />

The return process {ǫt} with E(ǫt|Ft−1) = 0 and E(ǫ 2 t |Ft−1) = σ 2 t , is said to follow<br />

(i) a <strong>GARCH</strong> process, if<br />

where ω,α,β > 0,<br />

σ 2 t = ω + αǫ 2 t−1 + βσ 2 t−1,<br />

(ii) an E<strong>GARCH</strong> process if<br />

log σ 2 ⎡�<br />

�<br />

�<br />

t = ω + α ⎣�<br />

ǫt−1<br />

�<br />

�<br />

� �<br />

�σt−1<br />

� −<br />

� ⎤<br />

2<br />

⎦ + γ<br />

π<br />

ǫt−1<br />

+ β log σ<br />

σt−1<br />

2 t−1,<br />

4 Let Ap×p, Bp×q and Cq×p denotes the matrices, then according to the Woodbury formula,<br />

where Ip denotes the identity matrix.<br />

(A + BC) −1 = A −1 − � A −1 B(Ip + CA −1 B) −1 CA −1�<br />

28<br />

�

(iii) a GJR process if<br />

where ω,α,β,γ > 0,<br />

(iv) a FI<strong>GARCH</strong> (1,d0,1) process if<br />

where<br />

and ω,φ,β > 0, 0 < d0 < 1.<br />

References<br />

σ 2 t = ω + αǫ 2 t−1 + βσ 2 t−1 + γI[ǫt

Journal <strong>of</strong> Finance 48, 1749-1778.<br />

Fan, J. and Gijbels, I. (1996). Local Polynomial Modeling and Its Applications. Chapman<br />

and Hall, London.<br />

Fan, J. and Zhang, W. (1999). Statistical <strong>estimation</strong> in <strong>varying</strong> coefficient <strong>model</strong>s. Ann.<br />

Statist. 27, 1491-1518.<br />

Franke, J. and Kreiss, J.P. (1992). Bootstrapping stationary autoregressive moving av-<br />

erage <strong>model</strong>s. J. Time Series Anal. 13, 297-317.<br />

Fryzlewicz, P., Sapatinas, T. and Subba Rao, S. (2008). Normalized least-squares esti-<br />

mation in <strong>time</strong>-<strong>varying</strong> ARCH <strong>model</strong>s. Ann. Statist. 36, 742-786.<br />

Hart, J. D. (1994). Automated kernel smoothing <strong>of</strong> dependent data by using <strong>time</strong> series<br />

cross- validation. J. R. Stat. Soc. Ser. B Stat. Methodol. 56, 529-542.<br />

Li, J. and Palta, M. (2009). Bandwidth selection through cross-validation for semi-<br />

<strong>parametric</strong> <strong>varying</strong>-coefficient partially linear <strong>model</strong>s. J. Stat. Comput. Simul. 79,<br />

1277-1286.<br />

Mercurio, D. and Spokoiny, V. (2004). Statistical inference for <strong>time</strong>-inhomogeneous<br />

volatility <strong>model</strong>s. Ann. Statist. 32, 577-602.<br />

Mikosch, T. and Starica, C. (2004). <strong>Non</strong>stationarities in financial <strong>time</strong> series, the long-<br />

range dependence and the I<strong>GARCH</strong> effects. Rev. Econ. Statist. 86, 378-390.<br />