Chapter 14 - Bootstrap Methods and Permutation Tests - WH Freeman



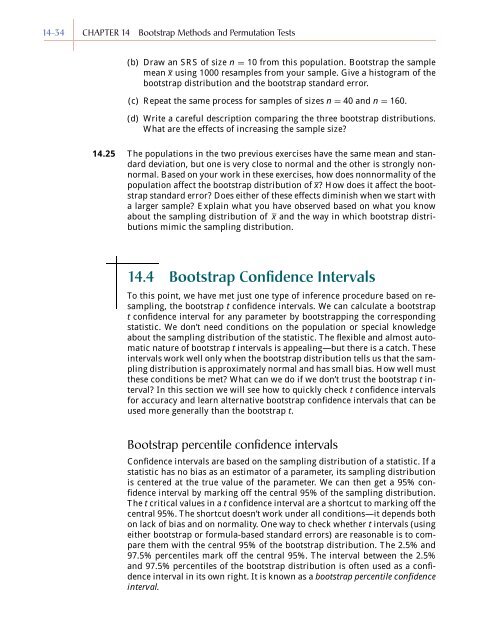


(b) Draw an SRS of size n = 10 from this population. Bootstrap the sample mean x̄ using 1000 resamples from your sample. Give a histogram of the bootstrap distribution and the bootstrap standard error.

(c) Repeat the same process for samples of sizes n = 40 and n = 160.

(d) Write a careful description comparing the three bootstrap distributions. What are the effects of increasing the sample size?

14.25 The populations in the two previous exercises have the same mean and standard deviation, but one is very close to normal and the other is strongly nonnormal. Based on your work in these exercises, how does nonnormality of the population affect the bootstrap distribution of x̄? How does it affect the bootstrap standard error? Does either of these effects diminish when we start with a larger sample? Explain what you have observed based on what you know about the sampling distribution of x̄ and the way in which bootstrap distributions mimic the sampling distribution.

14.4 Bootstrap Confidence Intervals

To this point, we have met just one type of inference procedure based on resampling, the bootstrap t confidence interval. We can calculate a bootstrap t confidence interval for any parameter by bootstrapping the corresponding statistic. We don’t need conditions on the population or special knowledge about the sampling distribution of the statistic. The flexible and almost automatic nature of bootstrap t intervals is appealing, but there is a catch. These intervals work well only when the bootstrap distribution tells us that the sampling distribution is approximately normal and has small bias. How well must these conditions be met? What can we do if we don’t trust the bootstrap t interval? In this section we will see how to quickly check t confidence intervals for accuracy and learn alternative bootstrap confidence intervals that can be used more generally than the bootstrap t.
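As a rough illustration of the procedure just described, the following Python sketch computes a bootstrap t interval along with the bootstrap estimate of bias. It assumes the interval takes the form statistic ± t* × SE_boot, where SE_boot is the standard deviation of the bootstrap distribution and t* is a t critical value with n − 1 degrees of freedom. The function name, the simulated data, and the value t* = 2.010 (for 49 degrees of freedom) are illustrative assumptions, not part of the text.

```python
import random
import statistics


def bootstrap_t_interval(sample, stat, t_star, n_resamples=1000, seed=0):
    """Sketch: return (low, high, se_boot, bias) for stat +/- t_star * SE_boot.

    t_star is supplied by the caller (for example from a t table with
    len(sample) - 1 degrees of freedom); this is an assumed convention.
    """
    rng = random.Random(seed)
    n = len(sample)
    # Each resample is drawn with replacement and has the original sample size.
    boot_stats = [stat(rng.choices(sample, k=n)) for _ in range(n_resamples)]
    # Bootstrap standard error: standard deviation of the resampled statistics.
    se_boot = statistics.stdev(boot_stats)
    observed = stat(sample)
    # Bootstrap bias estimate: mean of the bootstrap distribution minus the statistic.
    bias = statistics.mean(boot_stats) - observed
    return observed - t_star * se_boot, observed + t_star * se_boot, se_boot, bias


# Hypothetical data: 50 observations from a right-skewed population.
data_rng = random.Random(1)
data = [data_rng.expovariate(1.0) for _ in range(50)]

low, high, se_boot, bias = bootstrap_t_interval(data, statistics.mean, t_star=2.010)
print(f"bootstrap t interval: ({low:.3f}, {high:.3f})")
print(f"bootstrap SE: {se_boot:.3f}, estimated bias: {bias:.3f}")
```

The interval is symmetric about the observed statistic by construction, which is why it can mislead when the bootstrap distribution is skewed or shows noticeable bias.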

Bootstrap percentile confidence intervals

Confidence intervals are based on the sampling distribution of a statistic. If a statistic has no bias as an estimator of a parameter, its sampling distribution is centered at the true value of the parameter. We can then get a 95% confidence interval by marking off the central 95% of the sampling distribution. The t critical values in a t confidence interval are a shortcut to marking off the central 95%. The shortcut doesn’t work under all conditions: it depends both on lack of bias and on normality. One way to check whether t intervals (using either bootstrap or formula-based standard errors) are reasonable is to compare them with the central 95% of the bootstrap distribution. The 2.5% and 97.5% percentiles mark off the central 95%. The interval between the 2.5% and 97.5% percentiles of the bootstrap distribution is often used as a confidence interval in its own right. It is known as a bootstrap percentile confidence interval.
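The percentile interval can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the function name, the simulated data, and the choice of 1000 resamples are hypothetical, and the percentiles are computed with the standard library's `statistics.quantiles`, whose interpolation rule may differ slightly from the one used by other software.

```python
import random
import statistics


def bootstrap_percentile_interval(sample, stat, n_resamples=1000, seed=0):
    """Sketch: return the 2.5% and 97.5% percentiles of the bootstrap distribution."""
    rng = random.Random(seed)
    n = len(sample)
    # Resample with replacement, keeping the original sample size.
    boot_stats = [stat(rng.choices(sample, k=n)) for _ in range(n_resamples)]
    # With n=40, quantiles() returns 39 cut points at 2.5%, 5%, ..., 97.5%.
    cuts = statistics.quantiles(boot_stats, n=40, method="inclusive")
    return cuts[0], cuts[-1]


# Hypothetical data: 50 observations from a right-skewed population.
data_rng = random.Random(1)
data = [data_rng.expovariate(1.0) for _ in range(50)]

observed = statistics.mean(data)
p_low, p_high = bootstrap_percentile_interval(data, statistics.mean)
print(f"95% bootstrap percentile interval: ({p_low:.3f}, {p_high:.3f})")
# Unequal distances from the statistic to the two endpoints suggest skewness,
# a sign that a symmetric t interval may not be trustworthy.
print(f"distance below: {observed - p_low:.3f}, above: {p_high - observed:.3f}")
```

Comparing these endpoints with those of a t interval for the same data is the quick check described above: if the two intervals roughly agree, the t shortcut is probably reasonable.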