Chapter 14 - Bootstrap Methods and Permutation Tests - WH Freeman



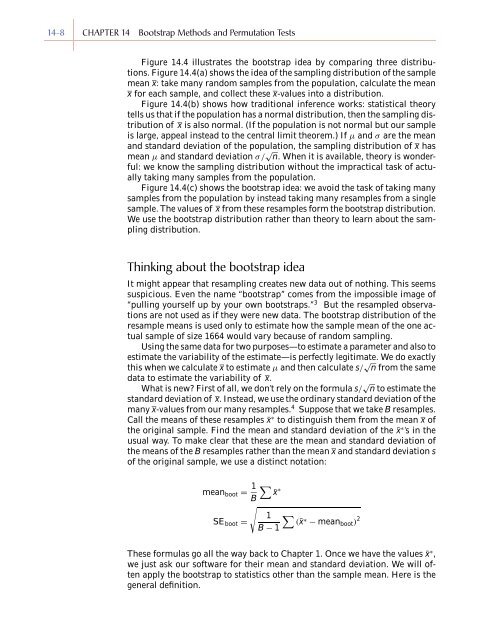

Figure 14.4 illustrates the bootstrap idea by comparing three distributions.

Figure 14.4(a) shows the idea of the sampling distribution of the sample mean x̄: take many random samples from the population, calculate the mean x̄ for each sample, and collect these x̄-values into a distribution.

Figure 14.4(b) shows how traditional inference works: statistical theory tells us that if the population has a normal distribution, then the sampling distribution of x̄ is also normal. (If the population is not normal but our sample is large, appeal instead to the central limit theorem.) If µ and σ are the mean and standard deviation of the population, the sampling distribution of x̄ has mean µ and standard deviation σ/√n. When it is available, theory is wonderful: we know the sampling distribution without the impractical task of actually taking many samples from the population.
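The claim that the sampling distribution of x̄ has mean µ and standard deviation σ/√n can be checked by simulation, which carries out the "impractical task" on a computer. The following is a minimal Python sketch and not part of the original text; the population parameters, the sample size, and the function name `simulate_sample_means` are hypothetical choices made only for illustration.

```python
import random
import statistics


def simulate_sample_means(mu, sigma, n, num_samples, seed=0):
    """Draw num_samples random samples of size n from a Normal(mu, sigma)
    population and return the list of their sample means."""
    rng = random.Random(seed)
    means = []
    for _ in range(num_samples):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        means.append(statistics.fmean(sample))
    return means


# Hypothetical population and sample size, chosen only for illustration.
mu, sigma, n = 10.0, 4.0, 25
means = simulate_sample_means(mu, sigma, n, num_samples=5000)

print("mean of the sample means:", statistics.fmean(means))  # close to mu
print("SD of the sample means:  ", statistics.stdev(means))  # close to sigma / sqrt(n)
print("theory, sigma / sqrt(n): ", sigma / n ** 0.5)
```

With these settings the simulated standard deviation should land close to the theoretical value, though not exactly on it, because only a finite number of samples is drawn.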

Figure 14.4(c) shows the bootstrap idea: we avoid the task of taking many samples from the population by instead taking many resamples from a single sample. The values of x̄ from these resamples form the bootstrap distribution. We use the bootstrap distribution rather than theory to learn about the sampling distribution.
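As a rough illustration of how a bootstrap distribution might be built in software, the sketch below resamples a single sample many times and records the mean of each resample. It assumes the usual bootstrap convention of drawing each resample with replacement and at the same size as the original sample; the data values and the function name `bootstrap_means` are hypothetical.

```python
import random
import statistics


def bootstrap_means(sample, num_resamples, seed=0):
    """Return the means of num_resamples resamples, each drawn with
    replacement from `sample` and of the same size as `sample`."""
    rng = random.Random(seed)
    n = len(sample)
    return [
        statistics.fmean(rng.choices(sample, k=n))
        for _ in range(num_resamples)
    ]


# Hypothetical data standing in for the one observed sample.
sample = [3.1, 0.5, 7.9, 2.2, 12.4, 1.8, 4.6, 0.9, 5.3, 2.7]
boot = bootstrap_means(sample, num_resamples=1000)

print("original sample mean:   ", statistics.fmean(sample))
print("number of resample means:", len(boot))
print("smallest, largest:       ", min(boot), max(boot))
```

The list `boot` plays the role of the bootstrap distribution: a histogram of its values would show how the resample means spread around the mean of the original sample.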

Thinking about the bootstrap idea<br />

It might appear that resampling creates new data out of nothing. This seems suspicious. Even the name “bootstrap” comes from the impossible image of “pulling yourself up by your own bootstraps.”³ But the resampled observations are not used as if they were new data. The bootstrap distribution of the resample means is used only to estimate how the sample mean of the one actual sample of size 1664 would vary because of random sampling.

Using the same data for two purposes (to estimate a parameter and also to estimate the variability of the estimate) is perfectly legitimate. We do exactly this when we calculate x̄ to estimate µ and then calculate s/√n from the same data to estimate the variability of x̄.

What is new? First of all, we don’t rely on the formula s/√n to estimate the standard deviation of x̄. Instead, we use the ordinary standard deviation of the many x̄-values from our many resamples.⁴ Suppose that we take B resamples. Call the means of these resamples x̄* to distinguish them from the mean x̄ of the original sample. Find the mean and standard deviation of the x̄*’s in the usual way. To make clear that these are the mean and standard deviation of the means of the B resamples rather than the mean x̄ and standard deviation s of the original sample, we use a distinct notation:

$$
\text{mean}_{\text{boot}} = \frac{1}{B} \sum \bar{x}^{*}
$$

$$
\text{SE}_{\text{boot}} = \sqrt{\frac{1}{B-1} \sum \left(\bar{x}^{*} - \text{mean}_{\text{boot}}\right)^{2}}
$$
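To make the two definitions concrete, the following sketch computes both quantities directly from a list of resample means and compares them with the mean and sample standard deviation returned by Python's `statistics` module. The function name `boot_summary` and the six resample means are hypothetical, invented only for illustration; a real bootstrap would use far more resamples.

```python
import math
import statistics


def boot_summary(resample_means):
    """Compute the bootstrap mean and bootstrap standard error
    from a list of B resample means, using the B - 1 divisor."""
    B = len(resample_means)
    mean_boot = sum(resample_means) / B
    squared_deviations = sum((m - mean_boot) ** 2 for m in resample_means)
    se_boot = math.sqrt(squared_deviations / (B - 1))
    return mean_boot, se_boot


# Hypothetical resample means, for illustration only.
xbar_star = [4.2, 3.9, 4.8, 4.1, 3.5, 4.6]
mean_boot, se_boot = boot_summary(xbar_star)

print("mean_boot:", mean_boot)
print("SE_boot:  ", se_boot)

# The library's mean and sample standard deviation give the same values.
print("library mean: ", statistics.fmean(xbar_star))
print("library stdev:", statistics.stdev(xbar_star))
```

The agreement with `statistics.stdev` reflects the B − 1 divisor in the formula: the bootstrap standard error is simply the ordinary sample standard deviation of the resample means.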

These formulas go all the way back to Chapter 1. Once we have the values x̄*, we just ask our software for their mean and standard deviation. We will often apply the bootstrap to statistics other than the sample mean. Here is the general definition.