ADMIN Magazine Sample PDF


Tools

OCFS2

you can set for the mount operation.<br />

If OCFS2 detects an error in the data<br />

structure, it will default to readonly.<br />

In certain situations, a reboot<br />

can clear this up. The errors=panic<br />

mount option handles this. Another<br />

interesting option is commit=seconds.<br />

The default value is 5, which means<br />

that OCFS2 writes the data out to disk<br />

every five seconds. If a crash occurs,<br />

a consistent filesystem can be guaranteed<br />

– thanks to journaling – and only<br />

the work from the last five seconds<br />

will be lost. The mount option that<br />

specifies the way data are handled<br />

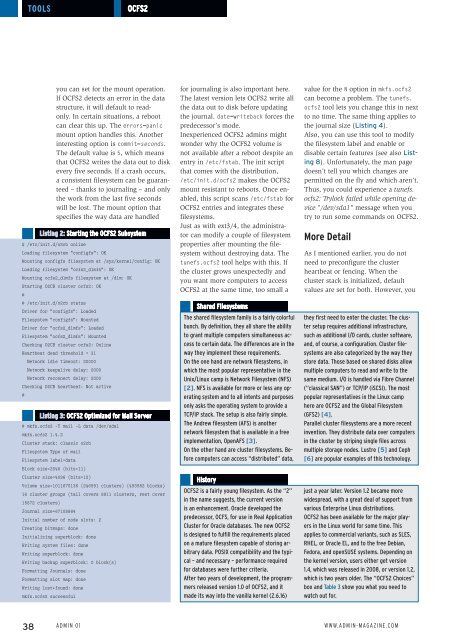

Listing 2: Starting the OCFS2 Subsystem<br />

# /etc/init.d/o2cb online<br />

Loading filesystem "configfs": OK<br />

Mounting configfs filesystem at /sys/kernel/config: OK<br />

Loading filesystem "ocfs2_dlmfs": OK<br />

Mounting ocfs2_dlmfs filesystem at /dlm: OK<br />

Starting O2CB cluster ocfs2: OK<br />

#<br />

# /etc/init.d/o2cb status<br />

Driver for "configfs": Loaded<br />

Filesystem "configfs": Mounted<br />

Driver for "ocfs2_dlmfs": Loaded<br />

Filesystem "ocfs2_dlmfs": Mounted<br />

Checking O2CB cluster ocfs2: Online<br />

Heartbeat dead threshold = 31<br />

Network idle timeout: 30000<br />

Network keepalive delay: 2000<br />

Network reconnect delay: 2000<br />

Checking O2CB heartbeat: Not active<br />

#<br />

Listing 3: OCFS2 Optimized for Mail Server<br />

# mkfs.ocfs2 ‐T mail ‐L data /dev/sda1<br />

mkfs.ocfs2 1.4.2<br />

Cluster stack: classic o2cb<br />

Filesystem Type of mail<br />

Filesystem label=data<br />

Block size=2048 (bits=11)<br />

Cluster size=4096 (bits=12)<br />

Volume size=1011675136 (246991 clusters) (493982 blocks)<br />

16 cluster groups (tail covers 8911 clusters, rest cover<br />

15872 clusters)<br />

Journal size=67108864<br />

Initial number of node slots: 2<br />

Creating bitmaps: done<br />

Initializing superblock: done<br />

Writing system files: done<br />

Writing superblock: done<br />

Writing backup superblock: 0 block(s)<br />

Formatting Journals: done<br />

Formatting slot map: done<br />

Writing lost+found: done<br />

mkfs.ocfs2 successful<br />

for journaling is also important here.<br />

The latest version lets OCFS2 write all<br />

the data out to disk before updating<br />

the journal. date=writeback forces the<br />

predecessor’s mode.<br />
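The options above can be combined in a single mount call. The following is a minimal sketch, assuming a hypothetical device /dev/sda1, an existing mount point /data, and an O2CB cluster stack that is already online (as in Listing 2). It makes the node panic on filesystem errors, stretches the commit interval to 10 seconds, and selects writeback data mode; the values are for illustration only, not recommendations.

```shell
# Hypothetical device and mount point; run as root on a cluster node
# whose O2CB stack is already online.
mount -t ocfs2 -o errors=panic,commit=10,data=writeback /dev/sda1 /data

# The same options as an /etc/fstab entry. _netdev marks the filesystem
# as network-dependent, so it is only mounted once the network is up.
# /dev/sda1  /data  ocfs2  _netdev,errors=panic,commit=10,data=writeback  0 0
```

Leaving out data= keeps the default ordered mode, which gives the stronger consistency guarantee of the two.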

Inexperienced OCFS2 admins might wonder why the OCFS2 volume is not available after a reboot despite an entry in /etc/fstab. The init script that comes with the distribution, /etc/init.d/ocfs2, makes OCFS2 mounts survive reboots. Once enabled, this script scans /etc/fstab for OCFS2 entries and mounts these filesystems.
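The scanning step can be illustrated with a few lines of shell. This is a simplified sketch, not the code of /etc/init.d/ocfs2 itself: the hypothetical function list_ocfs2_mounts reads an fstab-format file and prints the mount point of every uncommented entry whose filesystem type is ocfs2.

```shell
# list_ocfs2_mounts [FILE]: print the mount point (field 2) of every
# non-comment entry whose filesystem type (field 3) is "ocfs2" in an
# fstab-format file; FILE defaults to /etc/fstab.
# Hypothetical helper for illustration, not part of the OCFS2 tools.
list_ocfs2_mounts() {
    awk '$1 !~ /^#/ && $3 == "ocfs2" { print $2 }' "${1:-/etc/fstab}"
}

# An init script could then mount each entry in turn, for example:
# for mp in $(list_ocfs2_mounts); do mount "$mp"; done
```

A real init script also has to make sure the O2CB cluster stack is online before mounting anything, which this sketch ignores.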

Just as with ext3/4, the administrator can modify a couple of filesystem properties after the fact without destroying data. The tunefs.ocfs2 tool helps with this. If the cluster grows unexpectedly and you want more computers to access OCFS2 at the same time, too small a value for the -N option (number of node slots) in mkfs.ocfs2 can become a problem. The tunefs.ocfs2 tool lets you change this in next to no time. The same thing applies to the journal size (Listing 4).

Also, you can use this tool to modify the filesystem label and enable or disable certain features (see also Listing 8). Unfortunately, the man page doesn't tell you which changes are permitted on the fly and which aren't. Thus, when you try to run some commands on OCFS2, you could see a tunefs.ocfs2: Trylock failed while opening device "/dev/sda1" message.

More Detail

As I mentioned earlier, you do not need to preconfigure the cluster heartbeat or fencing. When the cluster stack is initialized, default values are set for both. However, you

Shared Filesystems

The shared filesystem family is a fairly colorful bunch. By definition, they all share the ability to grant multiple computers simultaneous access to certain data. The differences lie in the way they implement this requirement.

On the one hand are network filesystems, whose most popular representative in the Unix/Linux camp is the Network File System (NFS) [2]. NFS is available for more or less any operating system and, to all intents and purposes, only asks the operating system to provide a TCP/IP stack. The setup is also fairly simple. The Andrew File System (AFS) is another network filesystem that is available in a free implementation, OpenAFS [3].

On the other hand are cluster filesystems. Before computers can access "distributed" data, they first need to join the cluster. The cluster setup requires additional infrastructure, such as additional I/O cards, cluster software, and, of course, a configuration. Cluster filesystems are also categorized by the way they store data. Those based on shared disks allow multiple computers to read and write to the same medium. I/O is handled via Fibre Channel ("classical SAN") or TCP/IP (iSCSI). The most popular representatives in the Linux camp here are OCFS2 and the Global File System (GFS2) [4].

Parallel cluster filesystems are a more recent invention. They distribute data over computers in the cluster by striping single files across multiple storage nodes. Lustre [5] and Ceph [6] are popular examples of this technology.

History

OCFS2 is a fairly young filesystem. As the "2" in the name suggests, the current version is an enhancement. Oracle developed the predecessor, OCFS, for use with Real Application Clusters for Oracle databases. The new OCFS2 is designed to fulfill the requirements placed on a mature filesystem capable of storing arbitrary data. POSIX compatibility and the typical, and necessary, performance required for databases were further criteria.

After two years of development, the programmers released version 1.0 of OCFS2, and it made its way into the vanilla kernel (2.6.16) just a year later. Version 1.2 became more widespread, with a great deal of support from various Enterprise Linux distributions.

OCFS2 has been available for the major players in the Linux world for some time. This applies to commercial variants, such as SLES, RHEL, or Oracle EL, and to the free Debian, Fedora, and openSUSE systems. Depending on the kernel version, users either get version 1.4, which was released in 2008, or version 1.2, which is two years older. The "OCFS2 Choices" box and Table 3 show you what you need to watch out for.

38 Admin 01 www.admin-magazine.com