ADMIN Magazine Sample PDF


OpenVZ<br />

Virtualization<br />

Live Migration, Checkpointing and Restoring<br />

OpenVZ containers can be shifted from one physical host to another during operation (live<br />

migration). Ideally, the user will not even notice this process. However, the host environment<br />

must be configured to support live migration from a technical point of view. In other words, both<br />

virtual environments must reside on the same subnet, and data transmission rate must be high<br />

enough. Additionally, the target virtual environment (VE) must have sufficient hard disk space. If<br />

these conditions are fulfilled, the following command starts the migration:<br />

vzmigrate --online target_IP VEID<br />

Here, target_IP is the network address of the target host to which you want to migrate the VE with the ID<br />

VEID. Of course, the vzmigrate tool supports a plethora of different options (e.g., for migrating<br />

over secure connections). The exact syntax and other application examples are discussed in<br />

[12]. Additionally, OpenVZ can create what it refers to as checkpoints (snapshots) of VEs: A<br />

checkpoint freezes the current state of the VE and saves it in a file. The checkpoint can be created<br />

from within the host context with the vzctl chkpnt VEID command. The checkpoint file<br />

can be used later to restore the VE on another OpenVZ host with vzctl restore VEID.<br />
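The commands from this box can be collected into a small dry-run script. The following is a minimal sketch, not an official tool: the function names, the address 192.168.1.20, the VEID 101, and the dump path are placeholders chosen for illustration. It assumes the `--dumpfile` option of `vzctl chkpnt` and `vzctl restore` as described in the vzctl man page. The functions only print the commands, so you can review them before running them as root in the host context.

```shell
#!/bin/sh
# Dry-run helpers: each function only prints the command it would run.
# VEID, target address, and dump path are illustrative placeholders.

# Live migration of a running container to another host.
migrate_cmd() {
    target=$1
    veid=$2
    echo "vzmigrate --online $target $veid"
}

# Freeze a container and save its state to a dump file.
checkpoint_cmd() {
    veid=$1
    dump=$2
    echo "vzctl chkpnt $veid --dumpfile $dump"
}

# Restore a container from a previously saved dump file.
restore_cmd() {
    veid=$1
    dump=$2
    echo "vzctl restore $veid --dumpfile $dump"
}

migrate_cmd 192.168.1.20 101
checkpoint_cmd 101 /tmp/ve101.dump
restore_cmd 101 /tmp/ve101.dump
```

If the restore is to happen on a different host, the dump file and the container's files must be available there as well; the sketch leaves that copy step out.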

The container abstraction layer makes<br />

sure that the guest system sees its<br />

own process namespace with separate<br />

process IDs. On top of this, the kernel<br />

extension that provides the interface<br />

is required to create, delete, shut<br />

down and assign resources to containers.<br />

Because the container data<br />

are accessible on the host file system,<br />

resource containers are easy to manage<br />

from within the host context.<br />
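To make the management operations named above more concrete, here is a hedged dry-run sketch of a container's life cycle as driven from the host context with vzctl. The VEID 101, the template name ubuntu-8.04-x86, and the CPU share value 1000 are hypothetical placeholders, and the function name is invented for this example. The script prints the commands instead of executing them.

```shell
#!/bin/sh
# Dry-run sketch: prints the vzctl calls for a container's life cycle.
# VEID, template name, and cpuunits value are placeholders.
ve_lifecycle() {
    veid=$1
    template=$2
    echo "vzctl create $veid --ostemplate $template"   # create the container
    echo "vzctl set $veid --cpuunits 1000 --save"      # assign a CPU share
    echo "vzctl start $veid"                           # boot the guest
    echo "vzctl exec $veid ps ax"                      # processes as seen inside the VE
    echo "vzctl stop $veid"                            # shut down
    echo "vzctl destroy $veid"                         # delete
}

ve_lifecycle 101 ubuntu-8.04-x86
```

The `vzctl exec` line illustrates the separate process namespace: the process list it returns is the one the guest sees, not that of the host.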

Efficiency<br />

Resource containers are orders of magnitude<br />

more efficient than hypervisor systems<br />

because each container uses<br />

only as many CPU cycles and as<br />

much memory as its active applications<br />

need. The resources the abstraction<br />

layer itself needs are negligible.<br />

The Linux installation on the guest<br />

only consumes hard disk space. However,<br />

you can’t load any drivers or<br />

kernels from within a container. The<br />

predecessors of container virtualization<br />

in the Unix world are technologies<br />

that have been used for decades,<br />

such as chroot (Linux), jails (BSD), or<br />

Zones (Solaris). With the exception<br />

of (container) virtualization in<br />

User-Mode Linux [4], only a single host<br />

kernel runs with resource containers.<br />

OpenVZ<br />

OpenVZ is the free variant of a commercial<br />

product called Parallels<br />

Virtuozzo. The kernel component is<br />

available under the GPL; the source<br />

code for the matching tools under the<br />

QPL. OpenVZ runs on any CPU type,<br />

including CPUs without VT extensions.<br />

It supports snapshots of active<br />

containers as well as the live migration<br />

of containers to a different host<br />

(see the box “Live Migration, Checkpointing<br />

and Restoring”). Incidentally,<br />

the host is referred to as the hardware<br />

node in OpenVZ-speak.<br />

To be able to use OpenVZ, you will<br />

need a kernel with OpenVZ patches.<br />

One problem is that the current stable<br />

release of OpenVZ is still based on<br />

kernel 2.6.18, and what is known as<br />

the super stable version is based on<br />

2.6.9. It looks like the OpenVZ developers<br />

can’t keep pace with official<br />

kernel development. Various distributions<br />

have had an OpenVZ kernel,<br />

such as the last LTS release (v8.04) of<br />

Ubuntu, on which this article is based<br />

(Figure 2).<br />
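Before installing the VZ tools, it can help to confirm which kernel the host is actually running. The sketch below is a heuristic only: it assumes that an OpenVZ-patched kernel exposes the directory /proc/vz, and the function name and its optional root-directory argument are inventions for this example (the argument exists so the check can be tried against a test tree).

```shell
#!/bin/sh
# Heuristic check for an OpenVZ-patched kernel.
# Assumption: such kernels expose /proc/vz. The optional first argument
# replaces / as the root directory so the check can be tried on a test tree.
is_openvz_kernel() {
    root=${1:-}
    [ -d "$root/proc/vz" ]
}

echo "Running kernel: $(uname -r)"
if is_openvz_kernel; then
    echo "OpenVZ kernel interface found"
else
    echo "No OpenVZ kernel interface found"
fi
```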

Ubuntu 9.04 and 9.10 no longer feature<br />

OpenVZ, apart from the VZ tools;<br />

this also applies to Ubuntu 10.04. If<br />

you really need a current kernel on<br />

your host system, your only option is<br />

to download the beta release, which<br />

uses kernel 2.6.32. The option of using<br />

OpenVZ and KVM on the same<br />

host system opens up interesting possibilities<br />

for a free super virtualization<br />

solution with which administrators<br />

can experiment.<br />

If you are planning to deploy OpenVZ<br />

in a production environment, I suggest<br />

you keep to the following recommendations:<br />

You must disable<br />

SELinux because OpenVZ will not<br />

work correctly otherwise. Additionally,<br />

the host system should only be<br />

a minimal system. You will probably<br />

want to dedicate a separate partition<br />

to OpenVZ and mount it below<br />

/ovz, for example. Besides this, you<br />

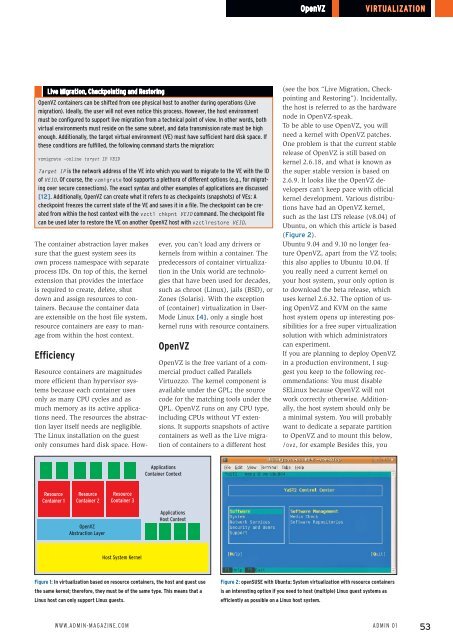

[Figure 1 diagram labels: Applications (Container Context) in Resource Containers 1, 2, and 3; OpenVZ Abstraction Layer; Applications (Host Context); Host System Kernel]<br />

Figure 1: In virtualization based on resource containers, the host and guest use<br />

the same kernel; therefore, they must be of the same type. This means that a<br />

Linux host can only support Linux guests.<br />

Figure 2: openSUSE with Ubuntu: System virtualization with resource containers<br />

is an interesting option if you need to host (multiple) Linux guest systems as<br />

efficiently as possible on a Linux host system.<br />

www.admin-magazine.com<br />

Admin 01<br />
