How far does screening women for domestic (partner) - NIHR Health ...

How far does screening women for domestic (partner) - NIHR Health ...

How far does screening women for domestic (partner) - NIHR Health ...

Create successful ePaper yourself

Turn your PDF publications into a flip-book with our unique Google optimized e-Paper software.

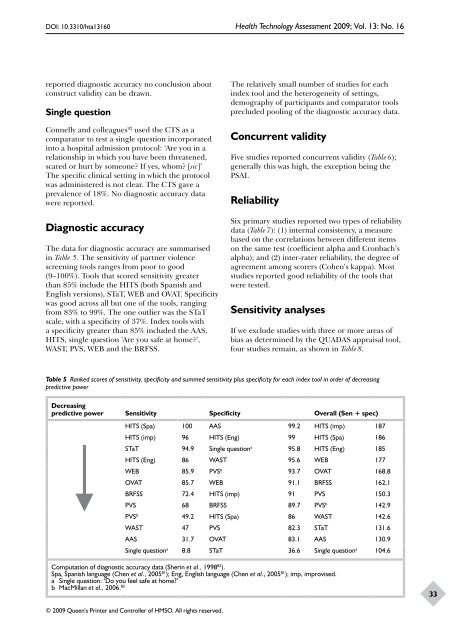

DOI: 10.3310/hta13160 <strong>Health</strong> Technology Assessment 2009; Vol. 13: No. 16<br />

reported diagnostic accuracy no conclusion about<br />

construct validity can be drawn.<br />

Single question<br />

Connelly and colleagues 92 used the CTS as a<br />

comparator to test a single question incorporated<br />

into a hospital admission protocol: ‘Are you in a<br />

relationship in which you have been threatened,<br />

scared or hurt by someone? If yes, whom? [sic]’<br />

The specific clinical setting in which the protocol<br />

was administered is not clear. The CTS gave a<br />

prevalence of 18%. No diagnostic accuracy data<br />

were reported.<br />

Diagnostic accuracy<br />

The data <strong>for</strong> diagnostic accuracy are summarised<br />

in Table 5. The sensitivity of <strong>partner</strong> violence<br />

<strong>screening</strong> tools ranges from poor to good<br />

(9–100%). Tools that scored sensitivity greater<br />

than 85% include the HITS (both Spanish and<br />

English versions), STaT, WEB and OVAT. Specificity<br />

was good across all but one of the tools, ranging<br />

from 83% to 99%. The one outlier was the STaT<br />

scale, with a specificity of 37%. Index tools with<br />

a specificity greater than 85% included the AAS,<br />

HITS, single question ‘Are you safe at home?’,<br />

WAST, PVS, WEB and the BRFSS.<br />

© 2009 Queen’s Printer and Controller of HMSO. All rights reserved.<br />

The relatively small number of studies <strong>for</strong> each<br />

index tool and the heterogeneity of settings,<br />

demography of participants and comparator tools<br />

precluded pooling of the diagnostic accuracy data.<br />

Concurrent validity<br />

Five studies reported concurrent validity (Table 6);<br />

generally this was high, the exception being the<br />

PSAI.<br />

Reliability<br />

Six primary studies reported two types of reliability<br />

data (Table 7): (1) internal consistency, a measure<br />

based on the correlations between different items<br />

on the same test (coefficient alpha and Cronbach’s<br />

alpha); and (2) inter-rater reliability, the degree of<br />

agreement among scorers (Cohen’s kappa). Most<br />

studies reported good reliability of the tools that<br />

were tested.<br />

Sensitivity analyses<br />

If we exclude studies with three or more areas of<br />

bias as determined by the QUADAS appraisal tool,<br />

four studies remain, as shown in Table 8.<br />

Table 5 Ranked scores of sensitivity, specificity and summed sensitivity plus specificity <strong>for</strong> each index tool in order of decreasing<br />

predictive power<br />

Decreasing<br />

predictive power Sensitivity Specificity Overall (Sen + spec)<br />

↓ HITS<br />

(Spa)<br />

HITS (imp)<br />

STaT<br />

100<br />

96<br />

94.9<br />

AAS<br />

HITS (Eng)<br />

Single question<br />

99.2<br />

99<br />

HITS (imp)<br />

HITS (Spa)<br />

187<br />

186<br />

a HITS (Eng)<br />

WEB<br />

86<br />

85.9<br />

WAST<br />

PVS<br />

95.8<br />

95.6<br />

HITS (Eng)<br />

WEB<br />

185<br />

177<br />

b OVAT<br />

BRFSS<br />

PVS<br />

85.7<br />

72.4<br />

68<br />

WEB<br />

HITS (imp)<br />

BRFSS<br />

93.7<br />

91.1<br />

91<br />

89.7<br />

OVAT<br />

BRFSS<br />

PVS<br />

PVS<br />

168.8<br />

162.1<br />

150.3<br />

b 142.9<br />

PVSb 49.2 HITS (Spa) 86 WAST 142.6<br />

WAST 47 PVS 82.3 STaT 131.6<br />

AAS 31.7 OVAT 83.1 AAS 130.9<br />

Single questiona 8.8 STaT 36.6 Single questiona 104.6<br />

Computation of diagnostic accuracy data (Sherin et al., 1998 82 ).<br />

Spa, Spanish language (Chen et al., 2005 81 ); Eng, English language (Chen et al., 2005 81 ); imp, improvised.<br />

a Single question: ‘Do you feel safe at home?’<br />

b MacMillan et al., 2006. 85<br />

33